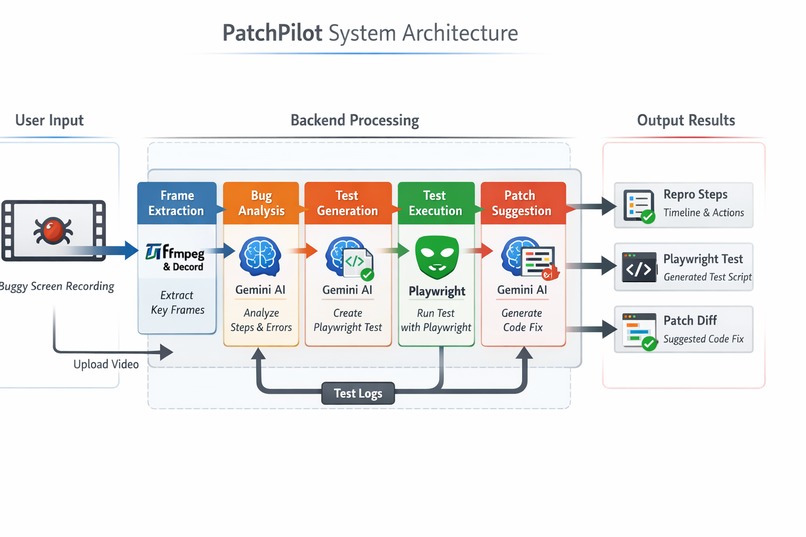

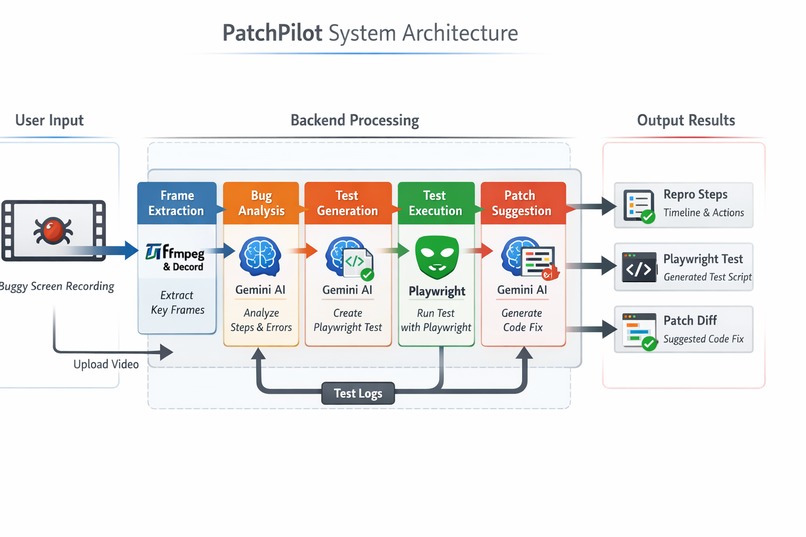

PatchPilot System Architecture

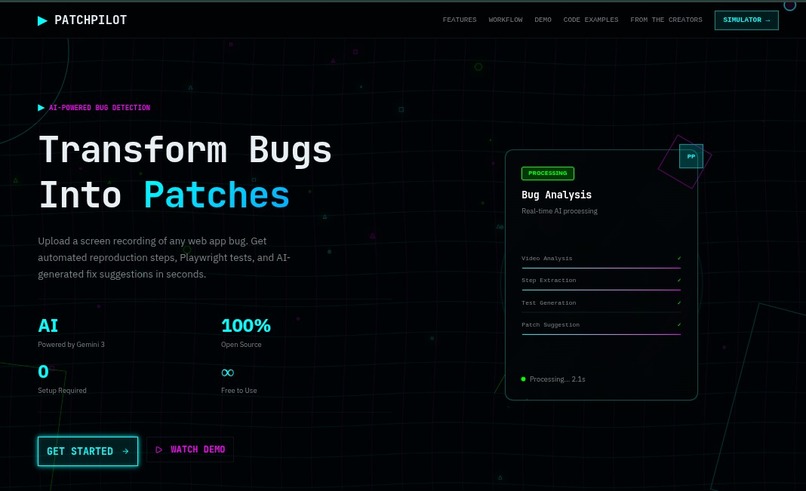

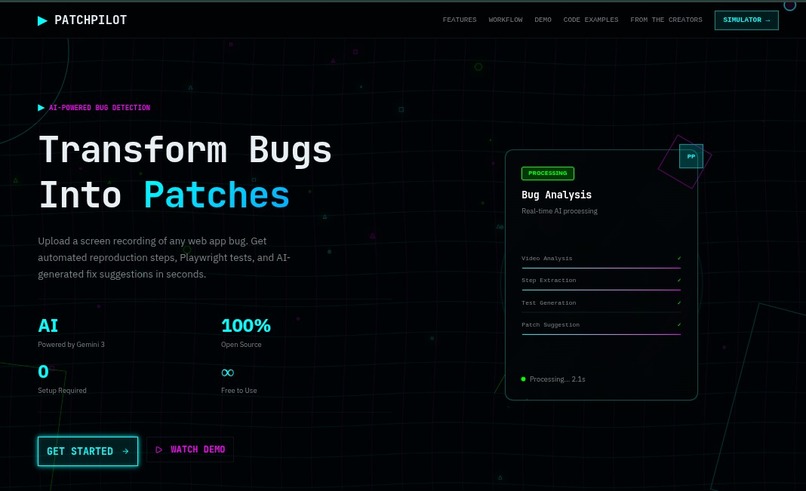

Homepage

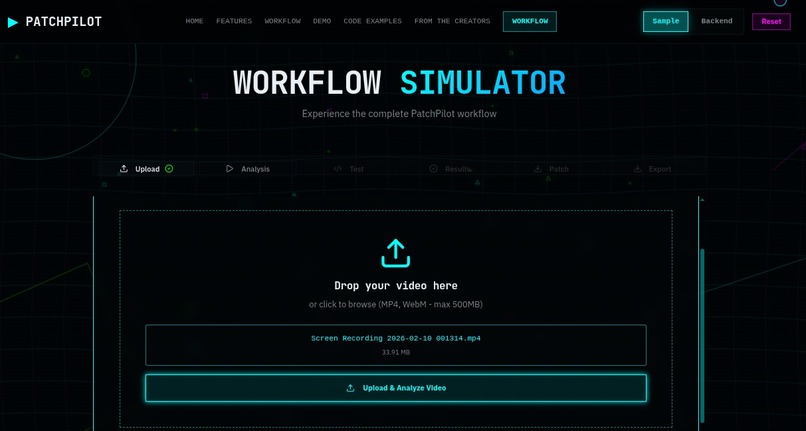

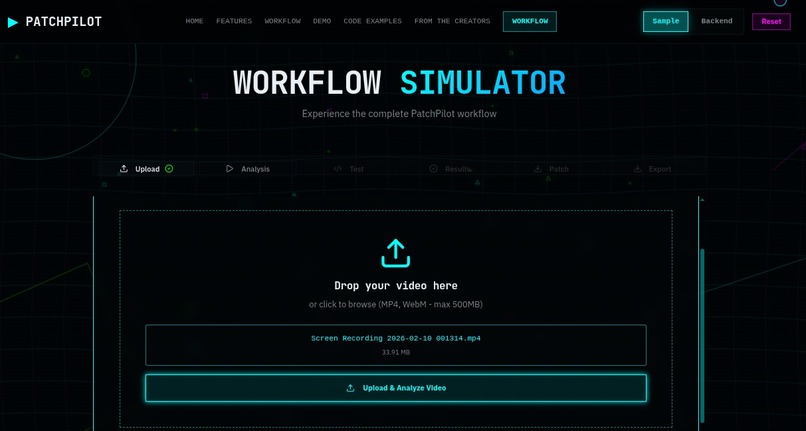

Upload Media Step [Workflow page]

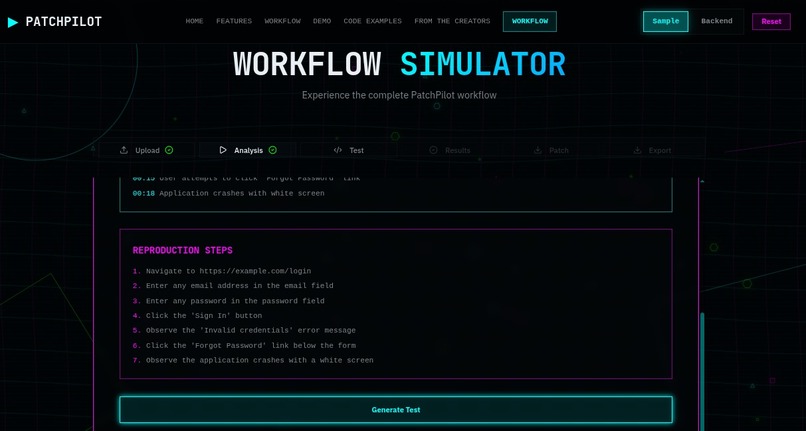

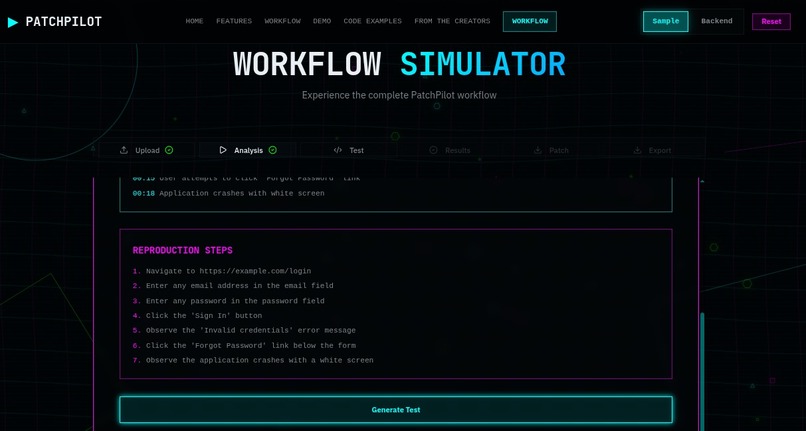

Video -> Gemini Code Analysis Step [Workflow page]

Auto-Test Generation Step [Workflow page]

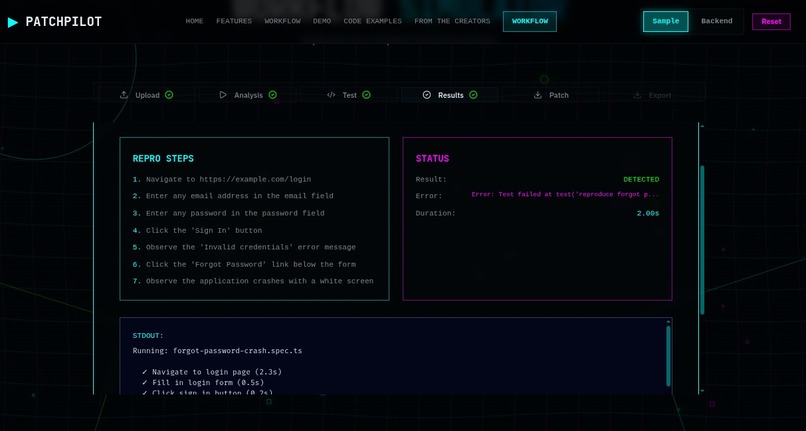

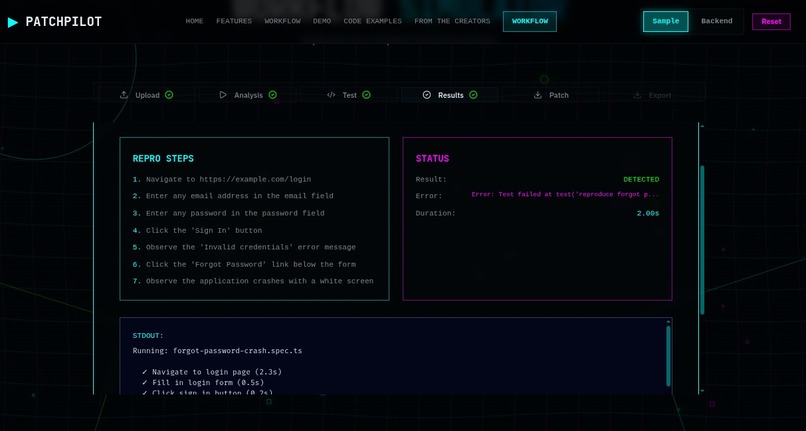

Test-Run Results & Bug Detection Step [Workflow page]

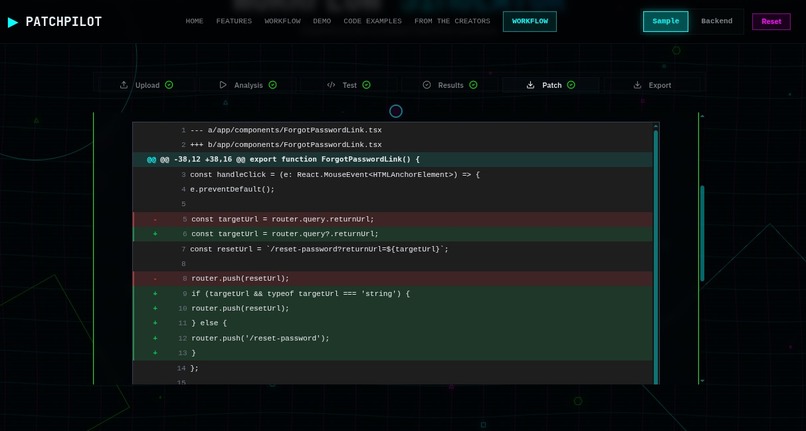

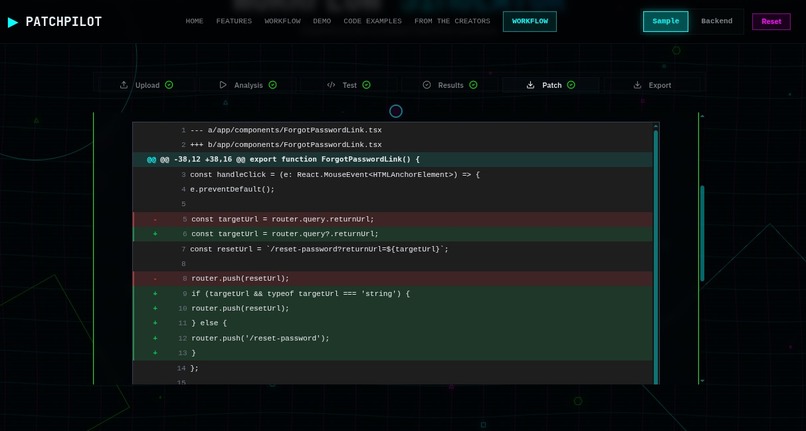

Gemini Inferred Patch Suggestions Step [Workflow page]

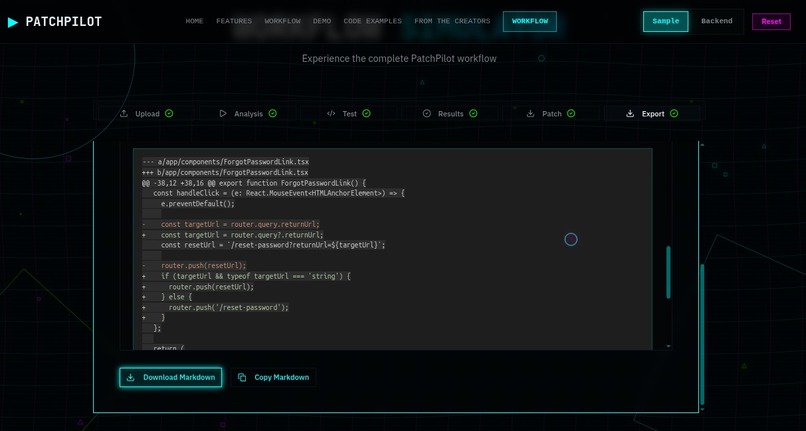

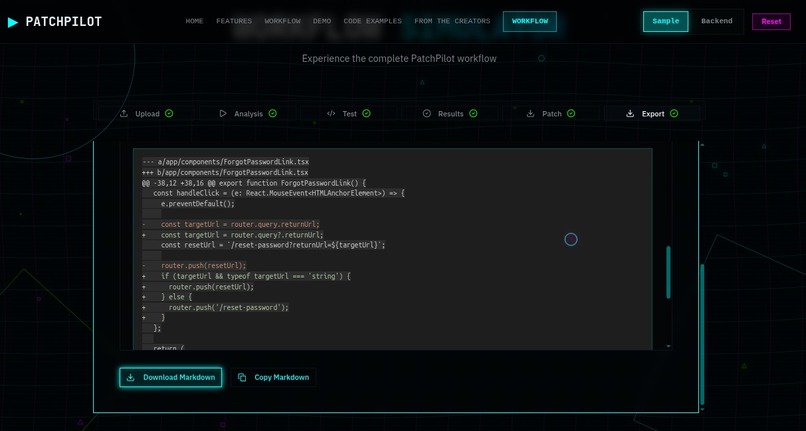

Export Report as README Step [Workflow page]

Inspiration

Every developer has faced the nightmare of a notorious bug report: a 10-second screen recording with a caption that simply says, "It's broken!" Reproducing these bugs manually is a massive drain on developer productivity. So we asked ourselves: what if the bug report could fix itself? We wanted to build a bridge between a user's visual recording and a developer's codebase, turning seeing into solving.

What it does

PatchPilot is an autonomous QA engineer that transforms video recordings into code patches:

- Vision: It analyzes a video upload, using intelligent scene-change detection to extract the most relevant UI transitions.

- Cognition: Using Gemini 3 Flash (Preview), it "watches" these frames to infer user intent and generate a structured bug report.

- Verification: It automatically writes and executes a Playwright test to reproduce the bug in a real browser.

- Remediation: If the test fails, PatchPilot analyzes the logs and suggests a patch in unified diff format to fix the underlying code, completing the loop from bug to patch in seconds.
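To make the output format of the remediation step concrete, here is a minimal sketch of producing a unified diff with Python's standard `difflib` module. The file name `cart.py` and the buggy snippet are hypothetical, and in PatchPilot the patch is suggested by Gemini rather than computed from two known versions, so treat this only as an illustration of the format.

```python
import difflib


def make_unified_diff(original: str, patched: str, path: str) -> str:
    """Return a unified diff that turns `original` into `patched`."""
    diff_lines = difflib.unified_diff(
        original.splitlines(keepends=True),
        patched.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(diff_lines)


# Hypothetical buggy function and its fix, for illustration only.
before = "def total(items):\n    return sum(items) - 1\n"
after = "def total(items):\n    return sum(items)\n"

patch = make_unified_diff(before, after, "cart.py")
print(patch)
```

The `a/` and `b/` prefixes follow the convention used by `git diff`, which keeps the result compatible with common patch tooling.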

How we built it

We chose a tech stack tailored for speed and technical precision:

- Backend: FastAPI provides a high-performance asynchronous API layer.

- Vision Engine: We used Decord with GPU acceleration and OpenCV to implement custom scene-detection algorithms, ensuring the AI only sees the most important UI changes.

- Brain: Gemini 3 handles the heavy lifting, from visual reasoning to code generation, utilizing JSON Mode for strict schema adherence.

- Execution: A custom Playwright Runner executes tests in a sandboxed environment, managing concurrency via unique UUID scoping to ensure thread-safe operations.

- Data Integrity: Pydantic schemas act as the "contract" between our AI and our execution engine.
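The scene-detection idea behind the Vision Engine can be sketched as follows. This is not the project's implementation: the real pipeline uses Decord and OpenCV, while this version uses plain nested lists of grayscale values so that it stays self-contained. The names `changed_fraction` and `select_keyframes`, the per-pixel delta of 25, and the choice to compare each frame against the last kept frame are all assumptions; the 1% default mirrors the pixel-change threshold mentioned under Challenges.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[int]]  # grayscale pixels in the range 0-255


def changed_fraction(prev: Frame, curr: Frame, pixel_delta: int = 25) -> float:
    """Fraction of pixels whose brightness differs by more than `pixel_delta`."""
    total = 0
    changed = 0
    for prev_row, curr_row in zip(prev, curr):
        for p, c in zip(prev_row, curr_row):
            total += 1
            if abs(p - c) > pixel_delta:
                changed += 1
    return changed / total if total else 0.0


def select_keyframes(frames: Sequence[Frame], threshold: float = 0.01) -> List[int]:
    """Keep frame 0, then every frame that differs enough from the last kept one."""
    if not frames:
        return []
    kept = [0]
    for index in range(1, len(frames)):
        if changed_fraction(frames[kept[-1]], frames[index]) >= threshold:
            kept.append(index)
    return kept


# Synthetic 20x20 frames: a blank screen, a one-pixel flicker, and a 5x5 "modal".
size = 20
blank = [[0] * size for _ in range(size)]
noisy = [row[:] for row in blank]
noisy[0][0] = 255  # 1 of 400 pixels changes (0.25%), below the threshold
modal = [row[:] for row in blank]
for y in range(5):
    for x in range(5):
        modal[y][x] = 255  # 25 of 400 pixels change (6.25%)

frames = [blank, blank, noisy, modal, modal]
keyframes = select_keyframes(frames)
print(keyframes)  # [0, 3]
```

Comparing against the last kept frame, rather than the immediately preceding one, means a slow drift made of many small changes still triggers a keyframe eventually.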

Challenges we ran into

Building an end-to-end AI agent is rarely a straight line. We faced four major technical hurdles:

- Decoupling from an Unstable Backend: In the early stages, our backend API was evolving rapidly. To prevent frontend development from grinding to a halt, we implemented an Adapter Pattern (using SampleAdapter vs HttpAdapter). This allowed us to mock backend responses and decouple the UI logic from the API implementation, even when stable documentation was non-existent.

- The "Schema Friction" (422 Errors): We spent significant time resolving schema mismatches. These ranged from missing mandatory fields such as analysis.title to data format conversions (turning MM:SS strings from the UI into integer seconds for the AI), and we had to bridge the gap between frontend flexibility and strict Pydantic validation. We eventually unified our naming conventions to resolve the constant camelCase vs snake_case conflicts.

- State Management in Multi-Step Workflows: Managing a complex, six-stage pipeline (Upload → Analyze → Test → Run → Patch → Export) was a state-management puzzle. We built a centralized workflow hook to handle timing tracking, retry logic for flaky AI responses, and graceful error recovery so that a single failure didn't break the entire user experience.

- Visual Optimization & AI Hallucinations: Extracting the right signal from video noise was challenging. We had to tune our scene-detection sensitivity before settling on a 1% pixel-change threshold. We also had to combat "AI hallucinations" where Gemini would occasionally invent UI elements or return malformed JSON. We addressed this by implementing strict type-safety boundaries and normalization functions so that our TypeScript types mirrored our Pydantic schemas.
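The format conversions described under "Schema Friction" can be sketched as a small normalization layer. The project's own layer sits on the TypeScript side, so this is a Python illustration of the same idea, not the actual code. The payload shape (a `timeline` list with `timestamp` entries) and the helper names are hypothetical; only the field names `title`, `reproSteps`, and `timeline` come from the write-up.

```python
import re
from typing import Any


def mmss_to_seconds(value: str) -> int:
    """Convert an 'MM:SS' string such as '01:05' into integer seconds."""
    parts = value.strip().split(":")
    if len(parts) != 2 or not all(part.isdigit() for part in parts):
        raise ValueError(f"expected MM:SS, got {value!r}")
    minutes, seconds = int(parts[0]), int(parts[1])
    if seconds >= 60:
        raise ValueError(f"seconds out of range in {value!r}")
    return minutes * 60 + seconds


def camel_to_snake(name: str) -> str:
    """Convert 'reproSteps' to 'repro_steps' (simple names only, no acronyms)."""
    return re.sub(r"(?<!^)(?=[A-Z])", "_", name).lower()


def snake_keys(value: Any) -> Any:
    """Recursively rename dictionary keys from camelCase to snake_case."""
    if isinstance(value, dict):
        return {camel_to_snake(key): snake_keys(item) for key, item in value.items()}
    if isinstance(value, list):
        return [snake_keys(item) for item in value]
    return value


def normalize_analysis(ui_payload: dict) -> dict:
    """Turn a UI-shaped payload into the shape a strict backend schema expects."""
    payload = snake_keys(ui_payload)
    if not payload.get("title"):
        # Fail early on the client instead of receiving a 422 from the API.
        raise ValueError("analysis.title is required")
    for entry in payload.get("timeline", []):
        entry["seconds"] = mmss_to_seconds(entry.pop("timestamp"))
    return payload


# Hypothetical UI payload, for illustration only.
ui_payload = {
    "title": "Login button unresponsive",
    "reproSteps": ["Open /login", "Click Submit"],
    "timeline": [{"timestamp": "01:05", "event": "click"}],
}
normalized = normalize_analysis(ui_payload)
print(normalized)
```

Keeping every conversion in one function gives a single place to update when the backend schema shifts.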

Accomplishments that we're proud of

- We built a resilient architecture that let the frontend move forward while the backend was still changing. The adapter pattern decouples the frontend from backend changes and provides a sample mode for development and demos. We added a type-safe normalization layer that handles format conversions between frontend and backend, so the app keeps working even when schemas shift.

- We transformed the UI from a simple scrolling page into a 3-pane workspace that feels like a developer tool. We implemented a dual theme system (light/dark) with proper contrast, which fixed earlier visibility issues. Motion and micro-interactions are included without hurting performance. We eliminated scroll growth and improved navigation so the workflow stays focused.

- We also built developer-focused features like an Inspector panel for API debugging, clear error messages with retry flows, and a workflow state machine with timing tracking. A token-based design system keeps the UI consistent and maintainable.

- On backend integration, we resolved the schema mismatches that caused 422 errors, handled data format conversions for timeline and reproSteps, and maintained type safety across frontend/backend boundaries, so the app works reliably with the backend API.
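The adapter pattern mentioned above can be sketched like this. The project's SampleAdapter and HttpAdapter live in the TypeScript frontend, so this Python version only illustrates the structure. The `analyze` method, the `/analyze` endpoint, the response fields, and the `make_adapter` helper are all hypothetical.

```python
import json
import urllib.request
from typing import Protocol


class AnalysisAdapter(Protocol):
    """The only interface the UI logic depends on."""

    def analyze(self, video_id: str) -> dict: ...


class SampleAdapter:
    """Returns canned data so development and demos work without a backend."""

    def analyze(self, video_id: str) -> dict:
        return {
            "video_id": video_id,
            "title": "Sample: login button unresponsive",
            "source": "sample",
        }


class HttpAdapter:
    """Calls a real backend; the endpoint path here is an assumption."""

    def __init__(self, base_url: str) -> None:
        self.base_url = base_url.rstrip("/")

    def analyze(self, video_id: str) -> dict:
        request = urllib.request.Request(
            f"{self.base_url}/analyze",  # hypothetical endpoint
            data=json.dumps({"video_id": video_id}).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request, timeout=30) as response:
            return json.load(response)


def make_adapter(use_sample: bool, base_url: str = "http://localhost:8000") -> AnalysisAdapter:
    """Pick the adapter once; callers never need to know which one they got."""
    return SampleAdapter() if use_sample else HttpAdapter(base_url)


def show_title(adapter: AnalysisAdapter, video_id: str) -> str:
    """Example of UI-side logic that works with either adapter."""
    return adapter.analyze(video_id)["title"]


title = show_title(make_adapter(use_sample=True), "demo-video")
print(title)
```

Because `show_title` depends only on the `AnalysisAdapter` interface, switching from sample data to the live API is a one-line change at the point where the adapter is created.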

What we learned

- Engineering for Instability: Building with an early-stage, evolving backend taught us the critical value of the Adapter Pattern. By implementing a SampleAdapter alongside our HttpAdapter, we decoupled the frontend from API changes, ensuring we could progress and demo even when documentation was shifting. We learned that normalization layers are non-negotiable for handling format differences as the backend evolves.

- Precision of AI Vision: We discovered that spotting a bug is a balancing act. Optimizing our scene-detection thresholds, using the 1% pixel change baseline, taught us how to filter out visual noise while capturing critical UI state transitions.

- Prompt Engineering & Hallucination Defense: Crafting the brain of PatchPilot taught us that LLMs require strict boundaries. We mitigated AI hallucinations by moving from generic instructions to highly structured, system-level prompts with clear rules and constraints. We learned that providing step-by-step reasoning instructions and forcing strict JSON output formats was the most reliable way we found to turn a creative model into a dependable code-generation engine.

- State Management in Complex Loops: High-stakes, multi-step workflows (from Video Upload to Patch Export) cannot be managed with scattered state. We painstakingly learned to design centralized workflow hooks that make timing tracking, error handling, and explicit retry/reset logic predictable and user-friendly.

- API Contract Management: Schema mismatches are the silent killers of AI apps. We learned the hard way that missing a single field like analysis.title results in 422 errors. This taught us to verify schemas directly from the Pydantic source code rather than assuming documentation was current, and to be militant about unifying camelCase and snake_case across boundaries.

- The Power of Polish: Finally, we learned that UI details (motion, contrast, and spacing) aren't just "extra." In an AI tool, a workspace-oriented layout reduces cognitive load, helping the user understand what the agent is "thinking." Attention to these details is what separates a functional prototype from a polished product.
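Two of the lessons above, strict output formats and explicit retry logic, combine naturally. The sketch below validates a model's raw JSON reply against a small set of required fields and retries when the reply is malformed or incomplete. It uses only the standard library instead of Pydantic to stay self-contained, and the field list, function names, and fake model replies are all hypothetical.

```python
import json
from typing import Callable

# Hypothetical minimal schema: field name -> required Python type.
REQUIRED_FIELDS = {"title": str, "repro_steps": list}


def parse_report(raw: str) -> dict:
    """Parse and validate a raw model reply; raise ValueError if it is unusable."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"missing or invalid field: {field}")
    return data


def generate_with_retry(call_model: Callable[[], str], max_attempts: int = 3) -> dict:
    """Call the model until it returns a valid report or attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return parse_report(call_model())
        except ValueError as error:
            last_error = error  # remember why this attempt failed, then retry
    raise RuntimeError(f"no valid report after {max_attempts} attempts") from last_error


# Fake model replies: truncated JSON, a missing field, then a valid report.
replies = iter([
    '{"title": "Bug"',
    '{"title": "Bug"}',
    '{"title": "Bug", "repro_steps": ["Click Submit"]}',
])
report = generate_with_retry(lambda: next(replies))
print(report)
```

Raising a single error type from validation keeps the retry loop simple, and chaining the last failure onto the final `RuntimeError` preserves the reason for debugging.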

What's next for PatchPilot

While we have a functional core application, our roadmap aims to transform PatchPilot from a hackathon project into a robust, production-ready developer platform.

Security & Enterprise Privacy

- Local Processing Options: To handle private codebases, we plan to implement a local worker mode where the AI analysis stays within the user’s firewall, ensuring no proprietary source code is exposed to public APIs.

- Access Control: Robust authentication and role-based access to ensure only authorized team members can generate or apply patches.

Backend & Performance Stabilization

- Standardized Contracts: Transitioning to full OpenAPI/Swagger documentation to finalize our API contracts.

- Real-time Updates: Moving from polling to WebSockets to provide live progress updates as the AI works through the video frames.

- Intelligent Caching: Implementing caching layers to reduce redundant AI processing for similar bug patterns.

The Developer Experience

- VS Code Extension: Bringing the power of PatchPilot directly into the IDE. Imagine a "Patch Available" notification appearing right in your sidebar.

- Visual Diff Viewer: A frontend side-by-side preview that lets developers inspect, edit, and approve patches before they are applied.

- CI/CD Integration: A CLI tool that acts as a gateway to GitHub Actions, automatically suggesting fixes for any failing tests in a Pull Request.

AI & Vision Evolution

- Multi-Modal Context: Training the model to look at network traces and console logs simultaneously with the video for deeper root-cause analysis.

- Community Plugins: Opening a plugin ecosystem so the community can build drivers for different testing frameworks.

Built With

- cors

- eslint

- fastapi

- framer-motion

- gemini

- genai

- https

- next.js

- nginx

- pnpm

- python

- radixui

- react

- restapi

- ssl

- tailwindcss

- typescript

- vps
