Website Landing Page

Demonstration: Image Used for Checking Portion

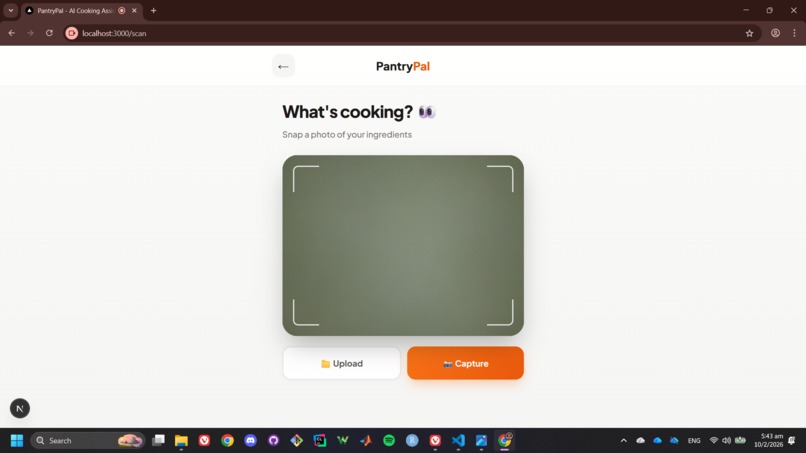

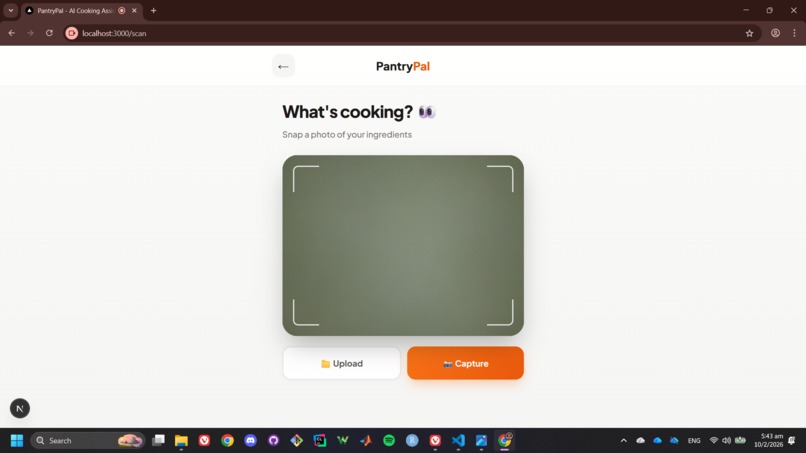

Uploading/Camera Capture Page for Ingredients

Demonstration: Image Used for Uploading Ingredients Portion

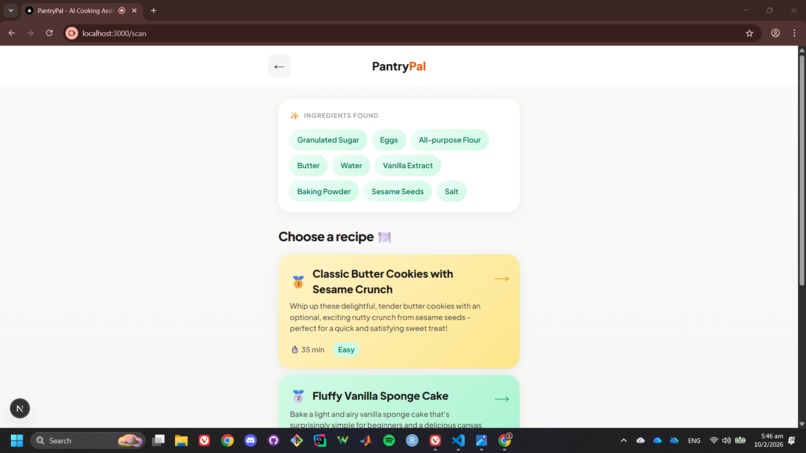

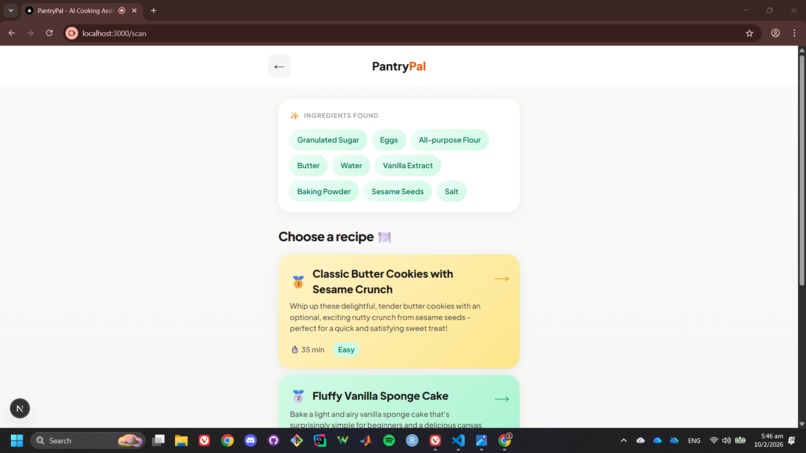

Top of Image Shows Ingredients Detected by AI

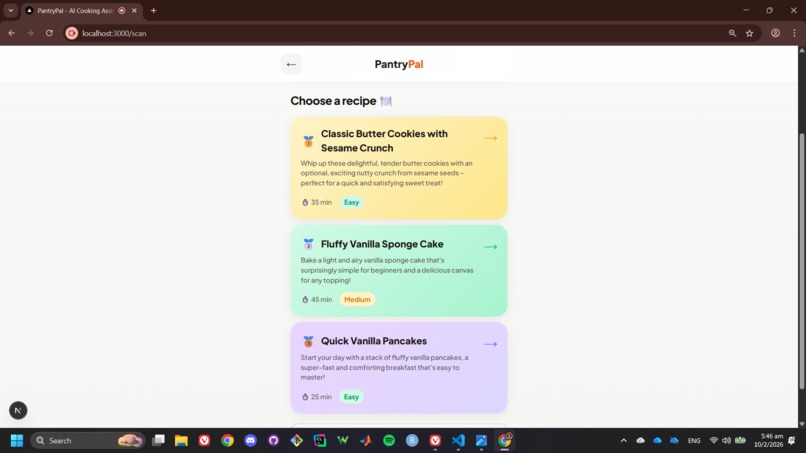

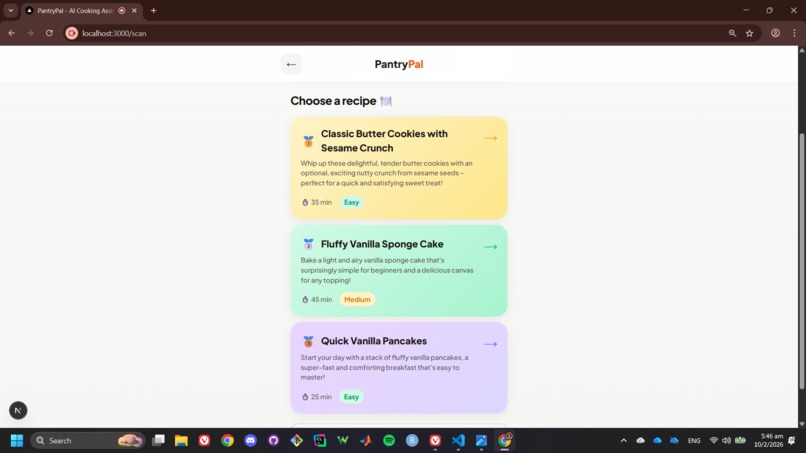

Given 3 Personalised Cooking Recipes (Choose one!)

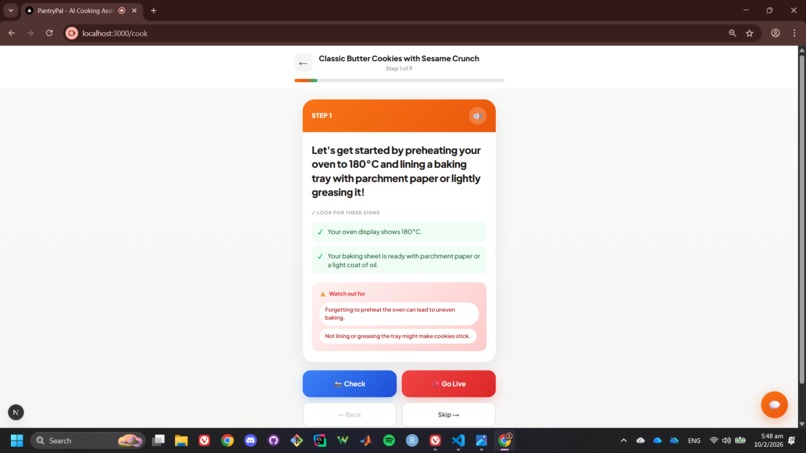

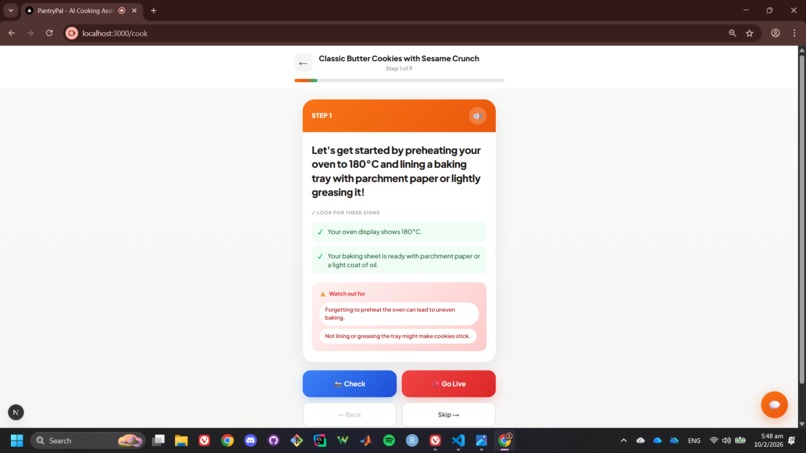

Step-by-Step Guidance with Visual Criteria

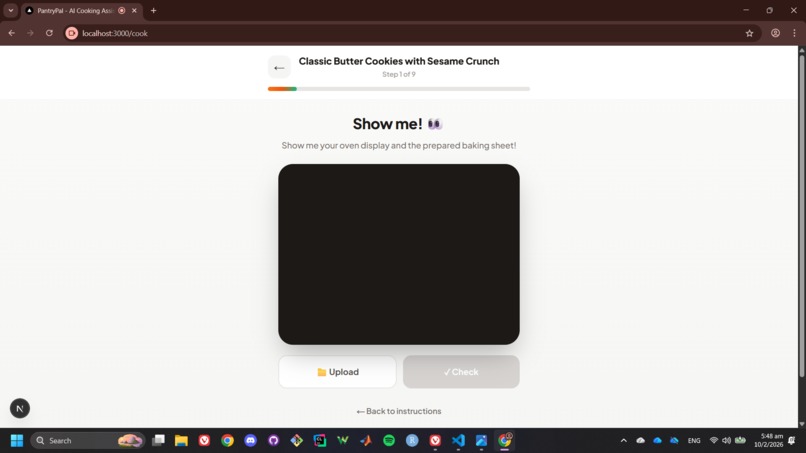

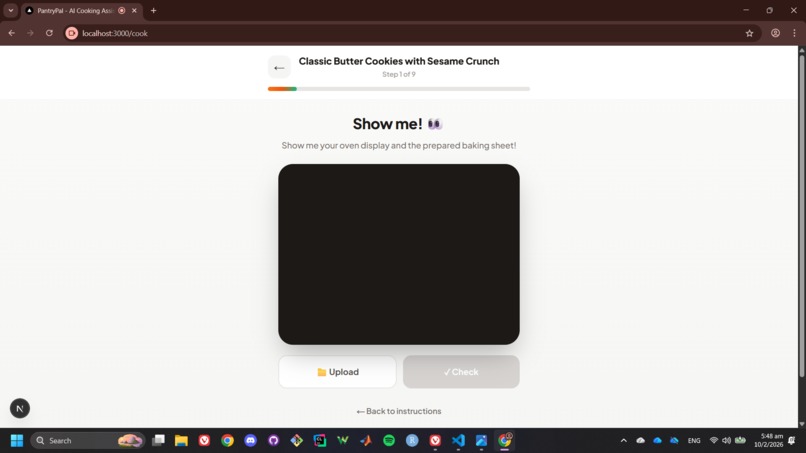

Uploading/Camera Capture Page for AI Checks on Current Step

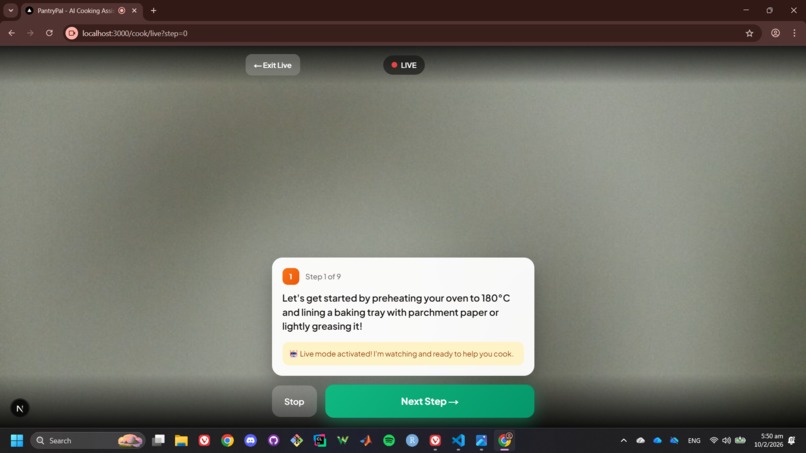

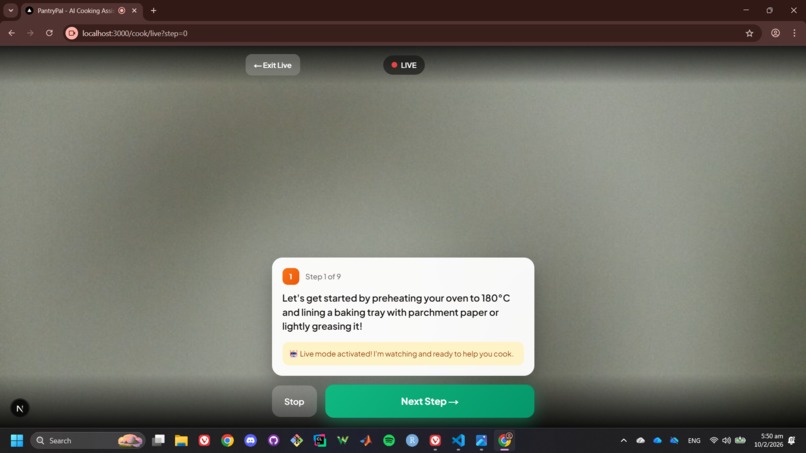

Live Mode (Real Time Camera)

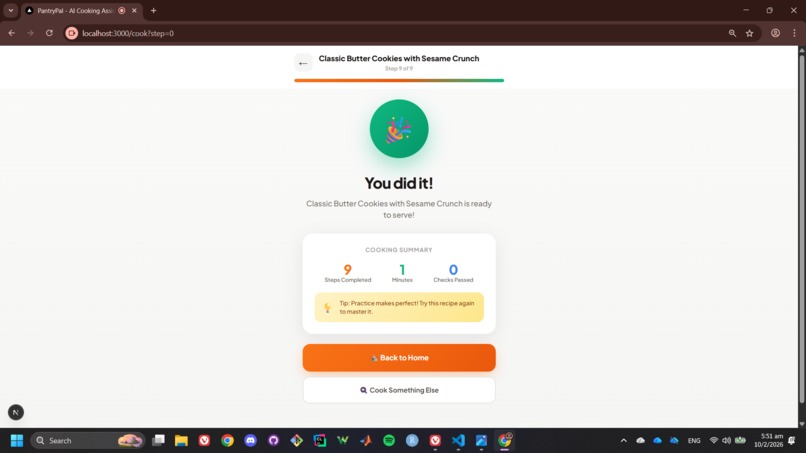

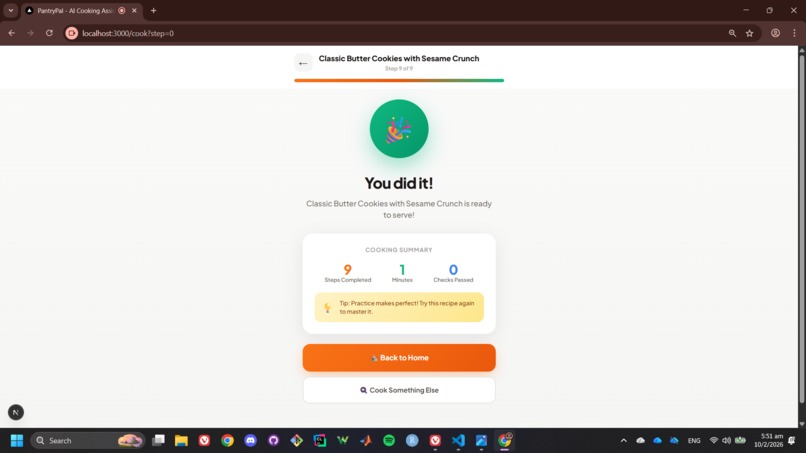

Success/Finished Landing Page

Inspiration

Cooking is intimidating for beginners and busy people.

- Recipe instructions are often vague ("cook until golden brown": what does that actually look like?)

- There is no feedback until you taste the finished dish, which is often too late

- People waste food because they don't know what to make with what they have

- It is hard to follow written instructions while cooking (hands are messy!)

What it does

PantryPal is an AI-powered cooking assistant that transforms the way people cook at home. Using Google's Gemini 3 multimodal AI, PantryPal can see your ingredients through your camera, suggest personalized recipes, and guide you through every cooking step with real-time visual verification.

Unlike traditional recipe apps that just show instructions, PantryPal actively watches your progress and tells you whether you're doing it right, like having a professional chef looking over your shoulder.

How we built it

- Frontend: Next.js 16, React 19, TypeScript

- Styling: Tailwind CSS 4, Framer Motion

- AI: Google Gemini 3 (multimodal)

- Validation: Zod schemas for type-safe JSON

- Voice: Web Speech API (TTS + STT)

- Effects: canvas-confetti

Challenges we ran into

Our main challenge was deciding which add-on features would make the best use of Gemini's capabilities. We considered the following:

- Adaptive cooking adjustments: Dynamically adjusting recipe steps based on what Gemini observes (e.g., modifying heat levels or timing when food browns faster or slower than expected).

- Personalized skill-level guidance: Using Gemini to tailor instruction detail based on whether the user is a beginner or experienced cook, detected through interaction patterns.

- Dietary and allergy-aware reasoning: Leveraging Gemini’s reasoning ability to automatically adapt recipes based on dietary constraints inferred or provided by the user.

- Post-cook visual evaluation: Asking users to show the final dish for an overall quality assessment, plating feedback, or improvement tips.
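The adaptive cooking adjustment idea above can be illustrated with a small timing calculation. This is only a sketch under stated assumptions: the `BrowningObservation` shape, the `observedProgress` score (a 0 to 1 "doneness" estimate assumed to come from the model), and the constant-rate extrapolation are all hypothetical and not taken from PantryPal's code.

```typescript
// Hypothetical shape of one observation; none of these names come from PantryPal.
interface BrowningObservation {
  elapsedSeconds: number;   // time spent on the step so far
  plannedSeconds: number;   // duration the recipe originally planned for the step
  observedProgress: number; // 0..1, how "done" the food looks (assumed model output)
}

// Estimate the remaining time by assuming progress continues at its observed average rate.
function estimateRemainingSeconds(obs: BrowningObservation): number {
  const { elapsedSeconds, plannedSeconds, observedProgress } = obs;
  if (observedProgress >= 1) return 0;
  // Too early to measure a rate: fall back to the recipe's own plan.
  if (elapsedSeconds <= 0 || observedProgress <= 0) {
    return Math.max(plannedSeconds - elapsedSeconds, 0);
  }
  const ratePerSecond = observedProgress / elapsedSeconds;
  return Math.round((1 - observedProgress) / ratePerSecond);
}
```

For example, if a step planned for 180 seconds already looks half done after 60 seconds, the estimate is another 60 seconds rather than the 120 seconds the recipe would suggest. If it looks only a quarter done after 120 seconds, the estimate stretches to 360 seconds.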

Accomplishments that we're proud of

- Built a True Multimodal Cooking Assistant: We did not just bolt AI onto a recipe app. We built an assistant that sees, listens, speaks, and reasons in context. PantryPal understands images, voice, and chat together to guide users through cooking in real time.

- Intelligent Ingredient Recognition with Multimodal AI: We successfully integrated Gemini 3’s multimodal vision to detect real ingredients from photos, including confidence scoring and smart pantry assumptions. This removes manual input and makes cooking more natural and frictionless, which we believe is a major step beyond traditional recipe apps.

- Real-Time Visual PASS/FAIL/UNSURE Verification: We implemented step-level visual validation, allowing the AI to confirm whether a cooking step is done correctly. This goes beyond static recipes and turns cooking into an interactive, feedback-driven experience. Here, AI acts as an active cooking coach, not a passive instruction sheet.

- Real-Time Live Mode with Continuous Coaching: One of our biggest achievements is Live Mode, which streams video to the Gemini Live API for continuous, proactive guidance. PantryPal can watch cooking in real time, give voice feedback, automatically detect step completion, and monitor for hazards. This is a major technical and UX milestone that demonstrates real-time AI teaching.

- Proactive AI Safety Monitoring: We’re especially proud of building safety into the core experience. PantryPal actively monitors for smoke and fire, unsafe knife handling, oil splattering, cross-contamination, overheating cookware, and burns and cuts. With clear CAUTION and STOP alerts, PantryPal prioritizes user safety, something few recipe apps do.

- Context-Aware AI Chat During Cooking: Our AI chat assistant understands what step you’re on and what just happened, allowing for relevant, real-time Q&A. Users can ask natural questions such as “How do I know when it’s done?” or “What if I don’t have this ingredient?” This reinforces PantryPal as a teacher, not just a static guide.

- Hands-Free Voice Experience: We built full text-to-speech and speech-to-text support so users can cook without touching their phone. Step instructions, safety alerts, and AI responses can all be spoken aloud, enabling a truly hands-free cooking workflow.

- Structured AI Outputs You Can Trust: We designed structured JSON responses (ingredients, confidence scores, step feedback) and validated them with Zod, making the system reliable and debuggable rather than a fragile demo.

- Delightful UX: Confetti celebrations when you pass a step, smooth animations throughout, a warm, playful color palette, and a mobile-first responsive design.
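To make the PASS/FAIL/UNSURE verification and the CAUTION/STOP alerts described above more concrete, here is a minimal sketch of how a step check might be turned into the next app action. The type names, the `NONE` safety level, the action names, and the rule that a STOP alert overrides any verdict are assumptions made for illustration, not PantryPal's actual logic.

```typescript
// Hypothetical types for one step check; these names are illustrative, not PantryPal's.
type CheckVerdict = "PASS" | "FAIL" | "UNSURE";
type SafetyLevel = "NONE" | "CAUTION" | "STOP";
type NextAction = "halt" | "advance" | "retry_step" | "request_clearer_photo";

interface StepCheck {
  verdict: CheckVerdict;
  safety: SafetyLevel;
}

interface Decision {
  action: NextAction;
  warnUser: boolean; // true when a safety alert should be shown or spoken
}

// One action per verdict; UNSURE asks for better evidence instead of guessing.
const ACTION_FOR_VERDICT: Record<CheckVerdict, NextAction> = {
  PASS: "advance",
  FAIL: "retry_step",
  UNSURE: "request_clearer_photo",
};

function decideNextAction(check: StepCheck): Decision {
  // Assumption: a STOP alert takes priority over any verdict, even PASS.
  if (check.safety === "STOP") return { action: "halt", warnUser: true };
  return {
    action: ACTION_FOR_VERDICT[check.verdict],
    warnUser: check.safety === "CAUTION",
  };
}
```

Checking safety before the verdict means a step that looks correct but shows a hazard never advances silently. A CAUTION alert still lets the user continue, with a warning attached.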

What we learned

- We learned the importance of human-centered focus. At its core, PantryPal helps people waste less food, feel confident cooking, and enjoy the process instead of stressing.

- We learned that while multimodal AI can understand images, text, and voice impressively well, its outputs are not always predictable. Designing structured prompts, strict schemas, and validation layers (like Zod) is essential to make AI responses reliable in real applications.

- AI intelligence alone isn’t enough. We learned the importance of validating AI-generated data at runtime to prevent crashes, handle edge cases gracefully, and maintain a good user experience even when the AI is uncertain or wrong.

- AI performs significantly better when it understands where the user is in the flow. By tracking the current recipe step and session state, the AI could give far more relevant and accurate advice than generic chat responses.

- Designing for uncertainty improves trust. Instead of forcing the AI to always give a “yes” or “no,” we learned to embrace uncertainty. Adding an “UNSURE” state made the system more honest and increased user trust, rather than pretending the AI is always correct.
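The lessons above about runtime validation and the UNSURE state can be combined in one small sketch. To keep it dependency-free, a hand-written type guard stands in for the Zod schema the project actually uses. The `StepFeedback` fields, the fallback message, and the function names are hypothetical.

```typescript
// Hypothetical response shape; PantryPal's real schema is not shown in the source.
type FeedbackVerdict = "PASS" | "FAIL" | "UNSURE";

interface StepFeedback {
  verdict: FeedbackVerdict;
  confidence: number; // expected to lie in [0, 1]
  feedback: string;
}

// Returned whenever the model output cannot be trusted.
const FALLBACK_FEEDBACK: StepFeedback = {
  verdict: "UNSURE",
  confidence: 0,
  feedback: "I could not verify this step. Please try another photo.",
};

// Hand-written guard standing in for a Zod schema (swapped in to avoid dependencies).
function isStepFeedback(value: unknown): value is StepFeedback {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  const verdict = v["verdict"];
  const confidence = v["confidence"];
  return (
    (verdict === "PASS" || verdict === "FAIL" || verdict === "UNSURE") &&
    typeof confidence === "number" &&
    confidence >= 0 &&
    confidence <= 1 &&
    typeof v["feedback"] === "string"
  );
}

// Parse raw model text; malformed or off-schema output degrades to UNSURE instead of crashing.
function parseStepFeedback(raw: string): StepFeedback {
  try {
    const parsed: unknown = JSON.parse(raw);
    return isStepFeedback(parsed) ? parsed : FALLBACK_FEEDBACK;
  } catch {
    return FALLBACK_FEEDBACK;
  }
}
```

With this pattern, invalid JSON, an unknown verdict, or an out-of-range confidence all lead to the same honest UNSURE result, so the UI only ever handles one well-typed shape.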

What's next for PantryPal

We have outlined a realistic roadmap toward:

- Pantry tracking

- Personalization

- Mobile and smart home integration

Built With

- canvas-confetti

- framer-motion

- google-gemini-3

- next.js

- react-19

- tailwind-css-4

- typescript

- web-speech-api

- zod-schema
