A sample of the kind of video that will be posted on r/owiehack with an auto-generated caption

The hardware we used to control the chair: a transistor that cuts power to the electromagnets holding the chair up, causing it to fall

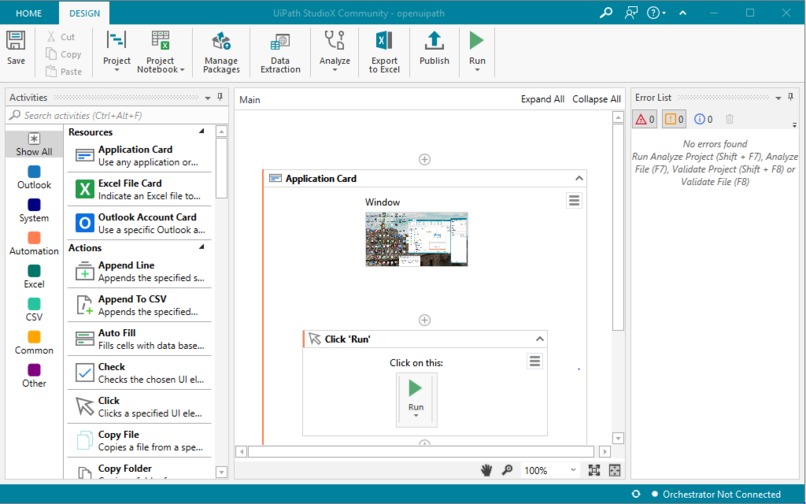

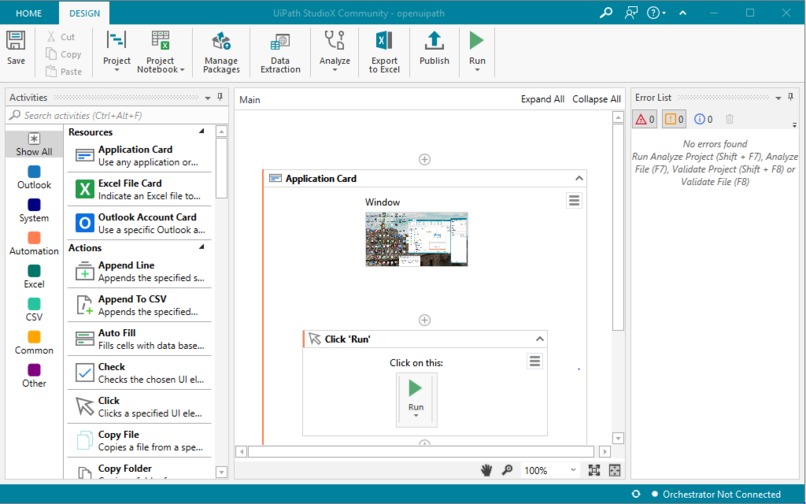

Our first UiPath automation that exists solely to start our second one

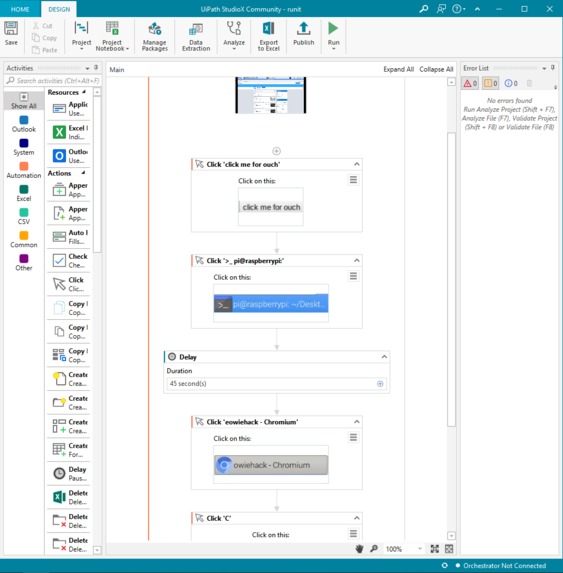

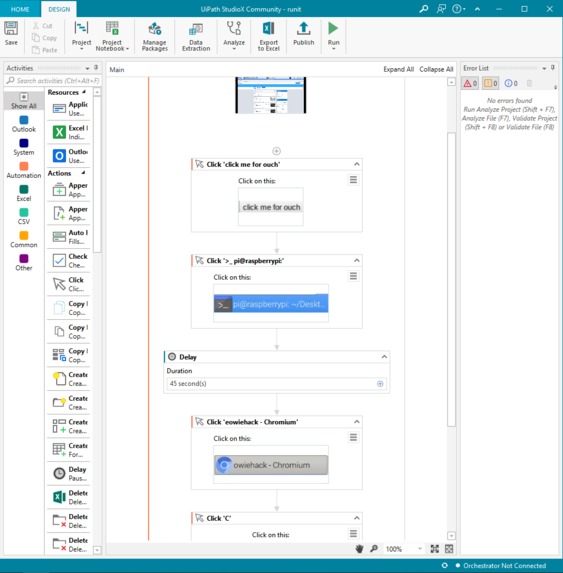

Our second UiPath automation that runs the bulk of the demo

Inspiration

When we were looking at MLH's hackathon offerings for this weekend, we noticed the "Well, it's not rocket science" category. We also had a breakaway chair that we had built recently and that still didn't have any code written for it, so we knew what we had to do. Introducing owie, the brand new framework for GPIO-powered devices like our chair that makes breaking your butt a lot more fun (at least for everyone else).

What it does

In essence, owie is simple: hit a button on a server and watch your friend get a one-way ticket to the ground. However, in the spirit of this hackathon, we thought we should take things a step further, so we did! Here's a rundown of what our project does, including every API, tool, and service it uses.

-We built a UiPath automation that runs our demo for us, shown off in the video at the top of this page.

-But we didn't stop there: our UiPath setup doesn't just run the demo; it starts a SECOND UiPath automation to do the rest. You heard me correctly: a UiPath automation that exists solely to start another UiPath automation. If that's not innovation, I don't know what is. In addition, since all our embedded code runs on a Raspberry Pi and UiPath isn't available on that platform, we used RealVNC to remote into the Pi and had our automations interact with the remoted-in GUI.

-The second UiPath automation clicks a button in an HTML form served by the Flask webserver running on our Pi.

-This triggers the RANDOM NUMBER GENERATION PHASE, which determines how long your dear friend will have to wait before the chair falls (it's completely random, which just makes it so exciting, don't you think?). We use the Wikipedia API to grab a random article, convert each letter of its title to a number with a simple A1B2C3 cipher (A=1, B=2, C=3, and so on), sum those numbers, and take the sum modulo 9 to get our random time value.

-After this is the CAPTION GENERATOR PHASE, which is a special tool that will help us later. Our radar.io implementation grabs the user's coordinates using their geocoding API and stores them. Our GCP implementation then takes its turn, using the fswebcam Linux utility to take a picture of the user sitting in the chair with the same webcam that records the clip of them falling later in the pipeline. We then use the GCP Vision API to gather labels for the image and randomly select a meaningful one for use in the caption. The caption is then stitched together to take the form "yung bruh really think they can have GOOGLE LABEL at RADAR.IO COORDINATES" to further humiliate the poor soul who thought it would be a good idea to sit in this chair while owie was online.

-Then, as the program sleeps for the time chosen in the RANDOM NUMBER GENERATION PHASE, a new thread (that's right, this is MULTITHREADED too, take that haters) starts recording a video using ffmpeg that should ideally capture the person falling to their unexpected doom. Because the recording runs in its own thread, the main thread can simply wait out the delay while the video is being recorded.

-Now for the IT'S ALL ONLINE? ALWAYS HAS BEEN phase. We take the recorded clip from the webcam, upload it to the internet using the Imgur API, and get a shortened link for it. We then post that link, using the Reddit API (through the Python package PRAW), to a subreddit created JUST for this project, r/owiehack. A lot of our tests are uploaded there, so you can see how we made progress towards our ultimate goal.

-Oh, and of course the chair breaks during all of this, inflicting maximal shame on the sitter's gluteus maximus.
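The RANDOM NUMBER GENERATION and CAPTION GENERATOR phases above can be sketched roughly like this. This is a minimal sketch, not our actual code: the function names `title_to_delay` and `build_caption` are made up for illustration, the article title, label list, and coordinates are passed in instead of being fetched from Wikipedia, GCP Vision, and radar.io, and the "meaningful label" filter is left out (it just picks any label at random).

```python
import random
import string


def title_to_delay(title: str, modulus: int = 9) -> int:
    """A1B2C3 cipher: map A=1 ... Z=26, sum the letters, reduce modulo 9.

    Anything that is not an ASCII letter (spaces, digits, punctuation)
    is skipped. That skipping rule is an assumption of this sketch.
    """
    total = sum(
        string.ascii_lowercase.index(ch) + 1
        for ch in title.lower()
        if ch in string.ascii_lowercase
    )
    return total % modulus


def build_caption(labels, coordinates, rng=random):
    """Stitch a caption from one randomly chosen label and the coordinates.

    `labels` stands in for the GCP Vision labels and `coordinates` for the
    (latitude, longitude) pair from radar.io; both are hypothetical inputs.
    """
    label = rng.choice(labels)
    lat, lon = coordinates
    return f"yung bruh really think they can have {label} at {lat}, {lon}"


if __name__ == "__main__":
    print(title_to_delay("Raspberry Pi"))
    print(build_caption(["chair", "furniture"], (40.0, -75.0)))
```

Note that taking the sum modulo 9 gives a value from 0 to 8, so with this scheme the chair can occasionally drop with no wait at all.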

How we built it

Using our breakaway chair (whose construction is NOT a part of this project, just the code written for it is) as a piece of hardware that we could interact with, we used the following to pull the project together:

-python3

-HTML/CSS

-Flask

-UiPath (x2)

-radar.io

-GCP Vision API

-ffmpeg

-fswebcam

-Reddit API

-Imgur API

-Wikipedia API

-Raspberry Pi 3

-RealVNC

-multithreading
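As for the multithreading entry: the idea is that ffmpeg records in a background thread while the main thread sleeps and then drops the chair. Here is a rough sketch of that pattern, under a few assumptions: the names `record_clip` and `drop_with_recording` are hypothetical, the webcam is assumed to be `/dev/video0` read through v4l2, the `padding` seconds of extra recording are our guess at a sensible value, and `release_chair` is a stand-in callback for whatever GPIO call actually cuts power to the electromagnets.

```python
import subprocess
import threading
import time


def record_clip(path: str, seconds: int) -> None:
    """Record `seconds` of webcam video with ffmpeg.

    The device path and input format are assumptions for a typical
    USB webcam on a Raspberry Pi.
    """
    subprocess.run(
        ["ffmpeg", "-y", "-f", "v4l2", "-i", "/dev/video0",
         "-t", str(seconds), path],
        check=True,
    )


def drop_with_recording(delay, release_chair, recorder=record_clip,
                        path="fall.mp4", padding=3):
    """Start recording in a thread, wait `delay` seconds, then drop the chair.

    The recording runs for `delay + padding` seconds so the clip still
    covers the moment of the fall and a little of the aftermath.
    """
    worker = threading.Thread(target=recorder, args=(path, delay + padding))
    worker.start()         # recording begins in the background
    time.sleep(delay)      # main thread waits out the "random" delay
    release_chair()        # hypothetical callback that de-powers the magnets
    worker.join()          # wait for the clip to finish before uploading
    return path
```

Passing the recorder in as a parameter makes it easy to swap in a fake one when testing on a machine without a webcam.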

Challenges we ran into

-We were going to add additional features, like having a speaker read out the caption through GCP Text-to-Speech, but the Pi couldn't run a new enough version of Python, and I only had Python set up properly on my laptop running WSL, which can't do I/O interaction since my laptop is just pretending to be a Linux device.

-It also took us a whole bunch of tries to get the recording, since there are so many moving parts to the demo.

Accomplishments that we're proud of

-Using ALL the sponsor APIs

-Connecting so many things together to do something very simple

-Using UiPath to trigger a UiPath automation :)

What we learned

-Making things messy and unnecessarily complicated is kind of fun

What's next for owie

ADD MORE UNNECESSARY FEATURES!!!!!!
