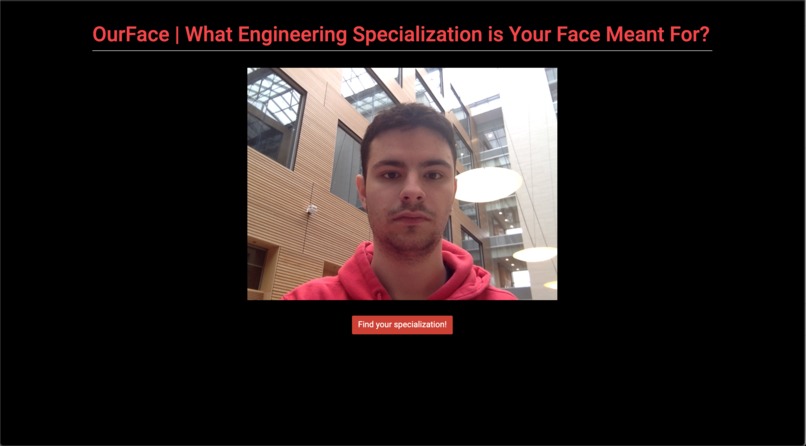

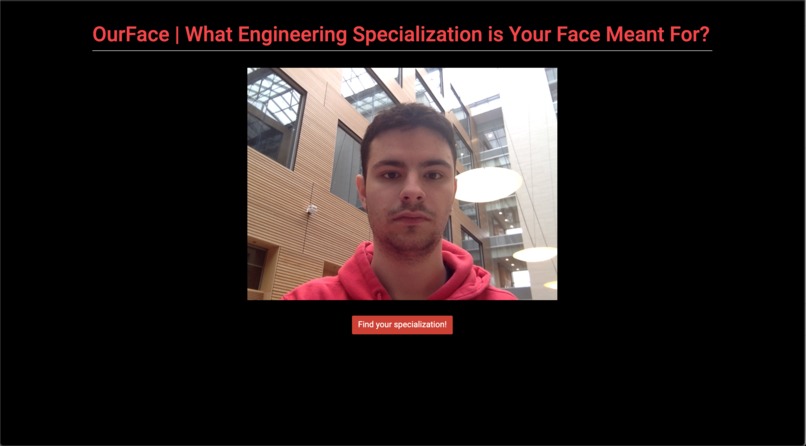

Eddy waiting for his results to see if he really belongs to ECE

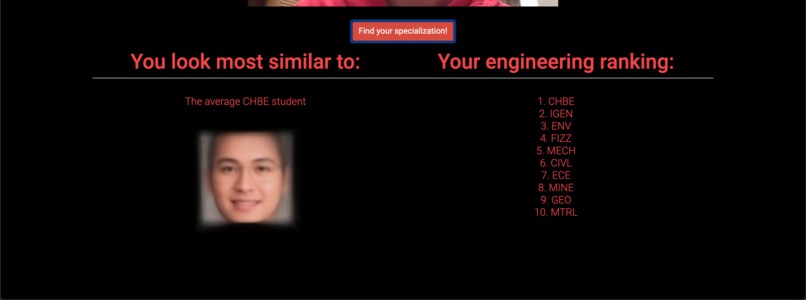

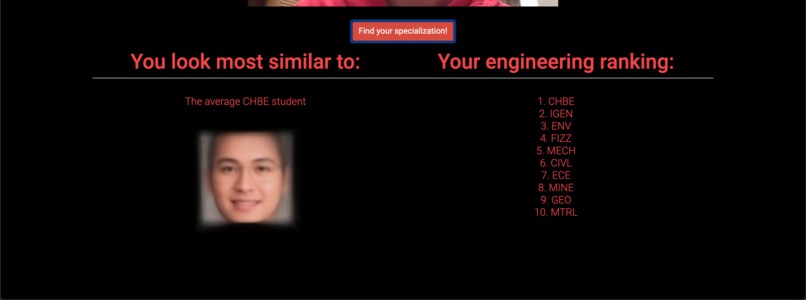

Looks like Eddy chose the wrong faculty...

The average CHBE student

The average CIVL student

The average ECE student

The average ENV student

The average FIZZ student

The average GEO student

The average IGEN student

The average MECH student

The average MINE student

The average MTRL student

Inspiration

The project began with a question: does each engineering faculty have a distinctive average face? Using OpenCV and the library at https://github.com/stekhn/average-faces-opencv, we built a dataset of the faces of all UBC Engineering graduates in previous yearbooks. We then ran it through the library to create an average face for each specialization.

Once we had the average faces, we thought it would be interesting to match scanned faces against the average face of each faculty and assign a score based on distinctive facial features (eyes, jawline, etc.). The scores could then be used to predict which engineering faculty a user most likely belongs to, based on their face.

What it does

This is a Django web application that lets users take a picture of their face with a webcam. The picture is sent to the Python back end with Ajax, where it is decoded. Google's Cloud Vision API then locates the face so the picture can be cropped to just the user's face. The cropped picture is compared against the average face of each engineering faculty and given a score for each one based on facial feature similarity. A higher score means the person is more likely to be in that faculty. The scores are returned and displayed to the user.
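Below is a rough sketch of the cropping step. It assumes the `google-cloud-vision` Python client, where `vision.ImageAnnotatorClient().face_detection(image=vision.Image(content=...))` returns `face_annotations`. Each annotation has a `bounding_poly` whose `vertices` carry `x` and `y` pixel coordinates. The helper `face_crop_box` and its `margin` padding are hypothetical and not taken from our code. It turns those vertices into a padded crop box clamped to the image, in the `(left, top, right, bottom)` order that Pillow's `Image.crop` expects.

```python
def face_crop_box(vertices, image_width, image_height, margin=0.1):
    """Convert a face bounding polygon into a padded (left, top, right, bottom) box.

    vertices: iterable of (x, y) pixel coordinates, e.g. built from
              [(v.x, v.y) for v in face.bounding_poly.vertices]
    margin:   hypothetical padding, as a fraction of the face width/height
    """
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    left, right = min(xs), max(xs)
    top, bottom = min(ys), max(ys)

    # Pad the box a little so the crop keeps some context around the face.
    pad_x = round((right - left) * margin)
    pad_y = round((bottom - top) * margin)

    # Clamp to the image so the crop never goes out of bounds.
    return (
        max(0, left - pad_x),
        max(0, top - pad_y),
        min(image_width, right + pad_x),
        min(image_height, bottom + pad_y),
    )


box = face_crop_box([(100, 80), (220, 80), (220, 230), (100, 230)], 640, 480)
print(box)  # (88, 65, 232, 245)
```

With Pillow, `Image.open(...).crop(box)` would then produce the cropped face that goes on to the comparison step.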

How I built it

This was built as a Django web application that uses the Google Cloud Vision libraries to detect faces in an image and do some image manipulation. The OpenCV scripts for creating the average faces were based on the open-source GitHub library https://github.com/stekhn/average-faces-opencv, which compares faces using 64 distinctive facial feature points. Thanky To modified the script and tried to optimize it by using only 2 points (a person's eyes).

To compare faces against the average students, we used the FLANN-based feature matcher (FLANN: Fast Library for Approximate Nearest Neighbors) from OpenCV's community contribution (contrib) version. Given the feature descriptors of an input image, it finds the closest-matching descriptors in another image. We used it to score each input from our web app against each of our previously generated average faces. The app then returned the faculty whose average face had the most matching features. More about this algorithm can be read here: https://docs.opencv.org/master/dc/dc3/tutorial_py_matcher.html.

WebRTC was used to capture the webcam image in the web application's template view, based on this sample: https://webrtc.github.io/samples/src/content/getusermedia/canvas/

Challenges I ran into

Passing the picture from the canvas to the Python back end was a challenge, but Ajax helped with that. Aligning faces to create the average face from just 2 data points in Thanky's optimized script was also difficult. The pictures had to be rotated, and the rotation matrix required for that sometimes distorted the faces.
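In the browser, `canvas.toDataURL("image/png")` returns the picture as a base64 data URL (`data:image/png;base64,...`), a convenient string to send in an Ajax POST. Below is a minimal sketch of decoding it on the Python side using only the standard library. `decode_data_url` is a hypothetical helper. The POST field it would be read from (for example `request.POST["image"]` in a Django view) is also an assumption.

```python
import base64


def decode_data_url(data_url):
    """Split a 'data:<mime>;base64,<payload>' string into (mime, raw bytes)."""
    header, sep, payload = data_url.partition(",")
    if not sep or not header.startswith("data:") or not header.endswith(";base64"):
        raise ValueError("expected a base64-encoded data URL")
    mime = header[len("data:"):-len(";base64")]
    # validate=True rejects characters outside the base64 alphabet by raising
    # binascii.Error, which is a subclass of ValueError.
    return mime, base64.b64decode(payload, validate=True)


print(decode_data_url("data:image/png;base64,aGVsbG8="))  # ('image/png', b'hello')
```

The returned bytes are the encoded PNG file. They could be passed directly as `content` to a Cloud Vision `Image`, or decoded into pixels with OpenCV or Pillow.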

Disproportionately sized datasets skewed the scores. For example, ECE has a much larger number of faces than the other faculties, so for a while every user was matched with ECE (ECE had the highest score). We fixed this by removing some of the ECE data and regenerating the ECE average face, but this is not an optimal solution. Ideally, our scoring system would account for dataset size. The ECE data was also scanned earlier at a higher resolution, which could have affected the pixel-distribution statistics of that dataset. We didn't have enough time to rescan these pages and re-run all of our scripts.
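One possible way for the scoring to compensate is to normalize each faculty's raw match count by the number of feature descriptors in that faculty's average face. That way, an average face that simply yields more keypoints (for example, because it came from higher-resolution scans) does not win by default. This is a hypothetical adjustment, not something we implemented or evaluated. Whether it would remove the ECE bias depends on where that bias actually came from. The numbers below are made up for illustration.

```python
def normalized_scores(good_matches, descriptor_counts):
    """Hypothetical tweak: good matches per descriptor in each average face.

    good_matches:      {faculty: number of good matches with the user's face}
    descriptor_counts: {faculty: number of descriptors in that average face}
    """
    return {
        faculty: count / descriptor_counts[faculty] if descriptor_counts[faculty] else 0.0
        for faculty, count in good_matches.items()
    }


def pick_faculty(scores):
    """Return the faculty with the highest score."""
    return max(scores, key=scores.get)


# Made-up numbers: raw counts favour ECE, normalized scores favour MECH.
raw = {"ECE": 120, "MECH": 60}
counts = {"ECE": 400, "MECH": 150}
print(pick_faculty(raw))                             # ECE
print(pick_faculty(normalized_scores(raw, counts)))  # MECH
```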

Accomplishments that I'm proud of

Found out what average faces for each faculty looked like, and created a fun application to see where our algorithm places you.

What I learned

The average faces mostly look quite similar. So maybe looks aren't the best way to pick which field of engineering you should study :)

What's next for Our Faces

Scan yearbooks for all faculties, not just engineering. Make the front end prettier. Make the project easier to deploy.
