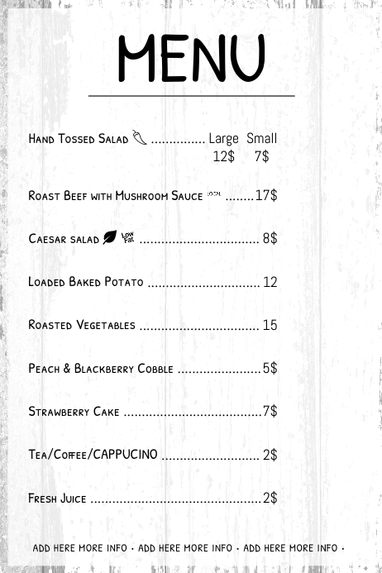

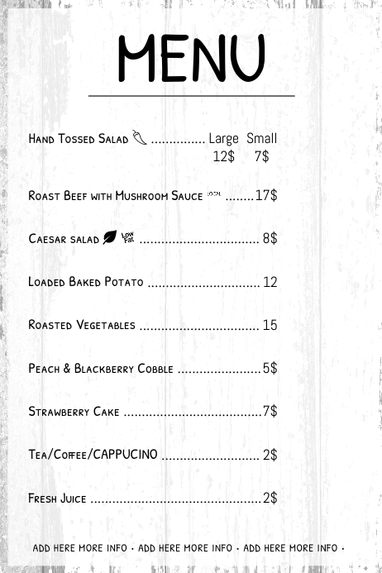

Sample menu for the menu scanner function

-

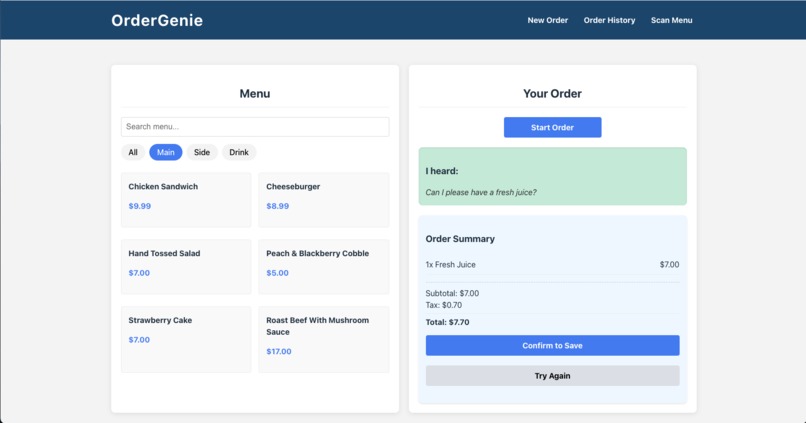

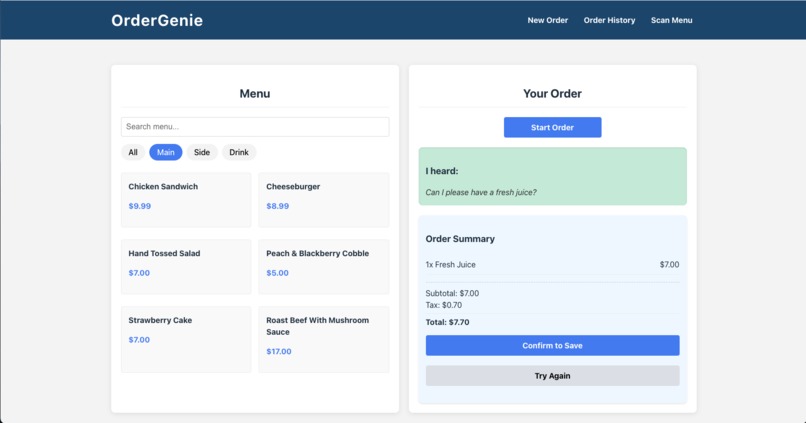

Create New Order page in desktop view

-

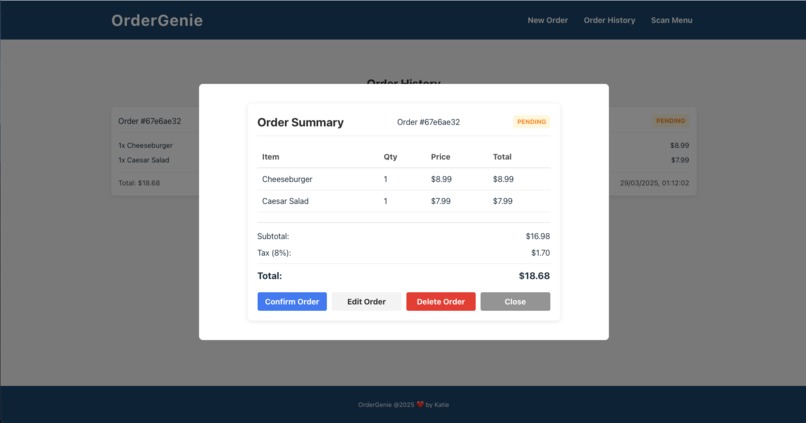

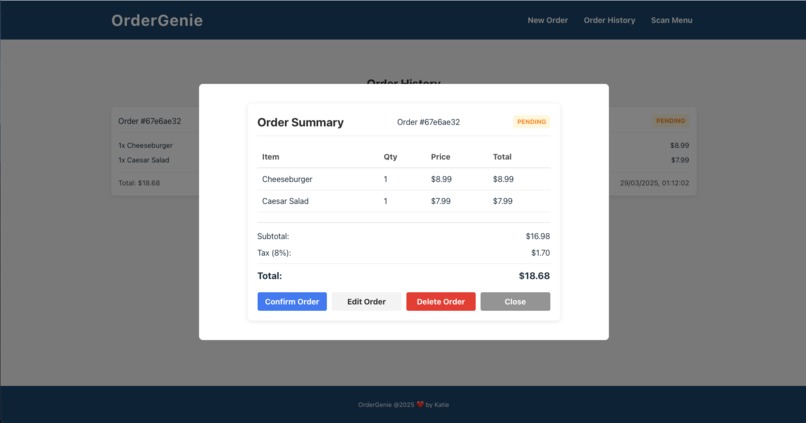

Order History page: view invoices and order actions

-

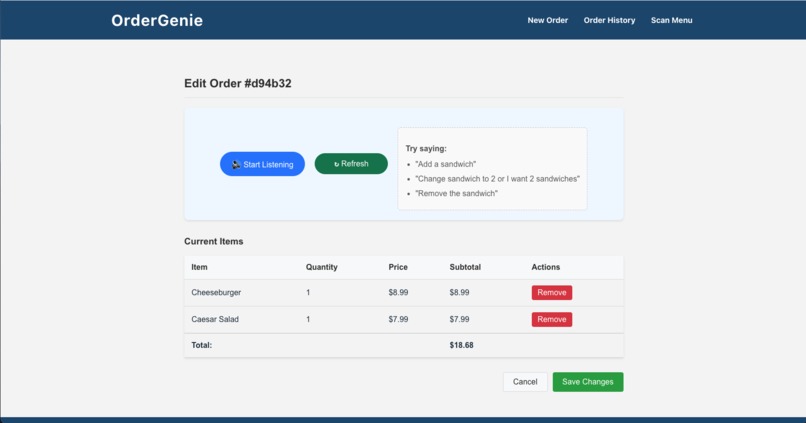

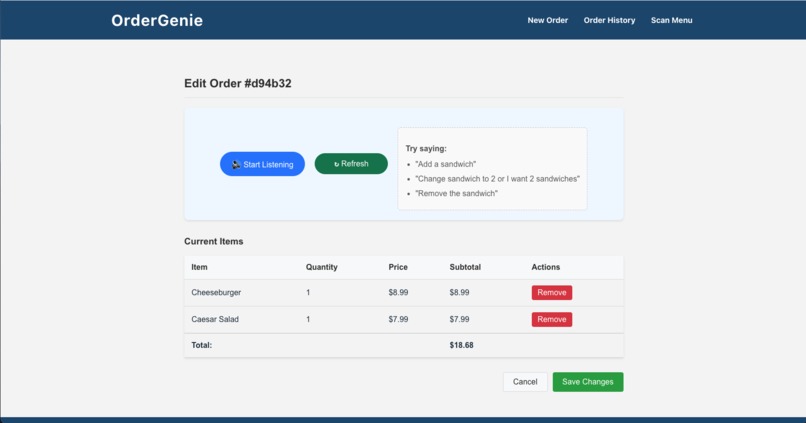

Edit Order page: edit an order with a voice command

-

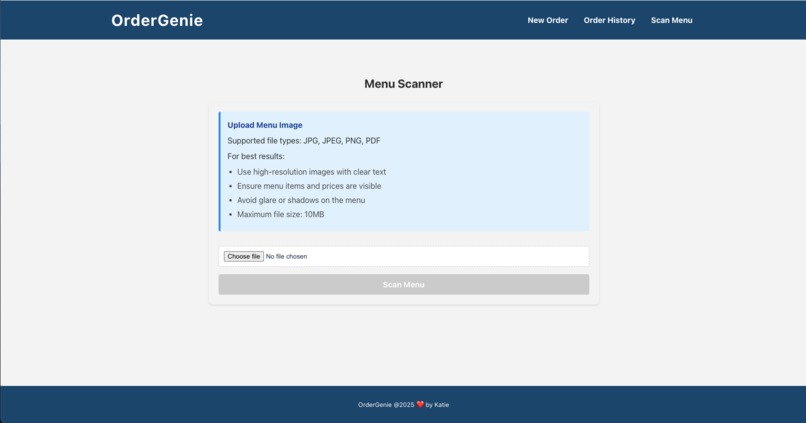

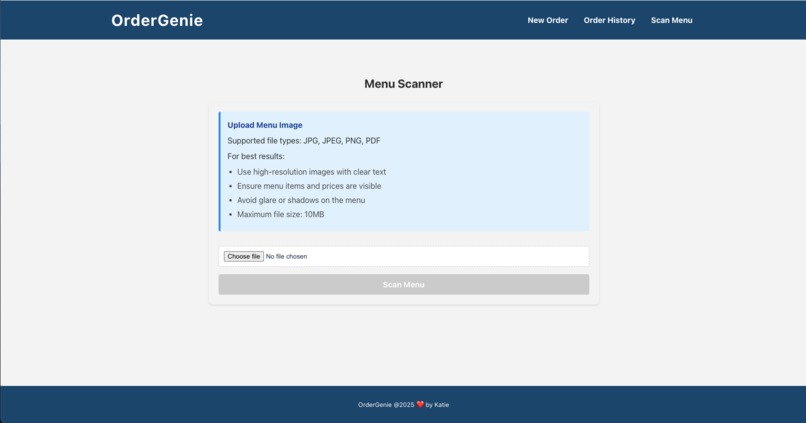

Menu Scanner Page

-

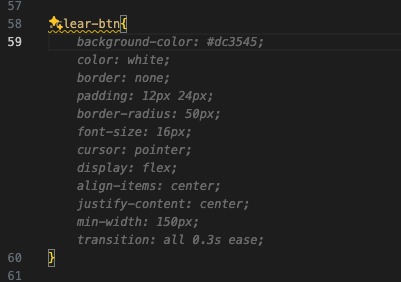

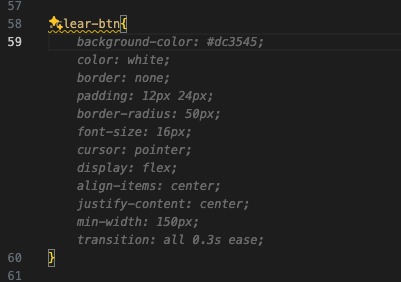

GitHub Copilot CSS code suggestion

-

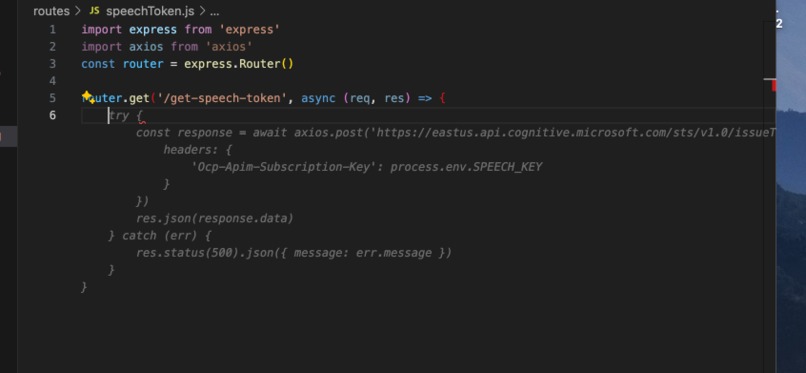

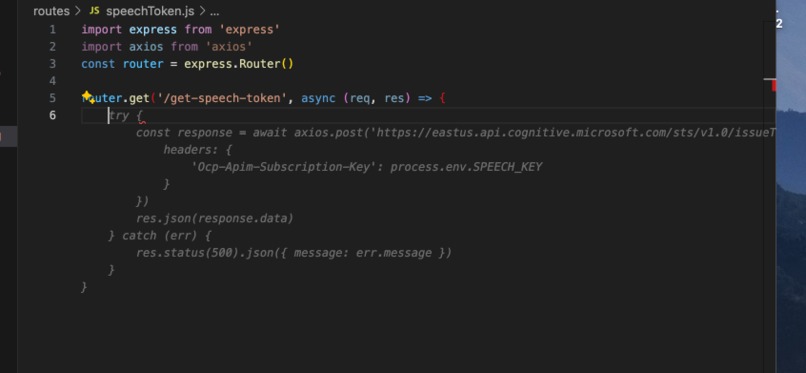

GitHub Copilot: Azure AI API link suggestion

-

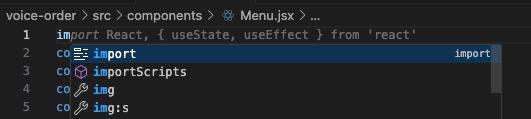

GitHub Copilot: checks the code and suggests importing libraries

-

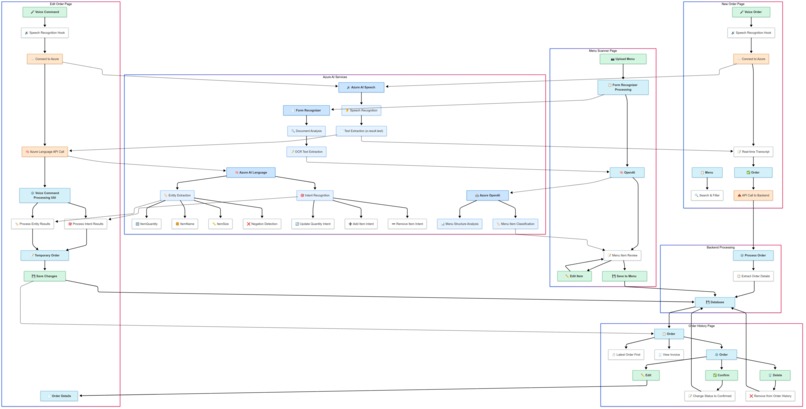

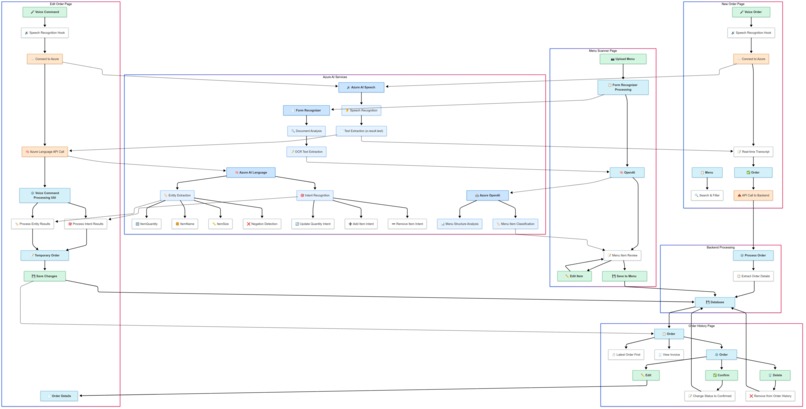

Mermaid flowchart of OrderGenie

Inspiration

As a frequent diner, I noticed restaurant staff juggling handwritten orders, which often led to errors and delays. The vision for OrderGenie emerged from a simple question: what if servers could just speak orders into a device that not only captures what they say but also understands their intent? OrderGenie is that application, built for servers in fast-paced restaurants.

What it does

OrderGenie transforms restaurant ordering through voice recognition and natural language understanding:

- Takes customer orders through voice commands with real-time transcription

- Processes natural language to understand order modifications ("make that two," "I don't want burger anymore")

- Scans physical menus (images/PDFs) to automatically extract menu items

- Provides an intuitive order management system that staff can navigate and edit using voice

- Maintains order history with simple voice-powered modifications
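To make the order-modification idea concrete, here is a minimal sketch of how a recognized intent could be applied to an in-memory order. The intent names (`AddItem`, `SetQuantity`, `RemoveItem`), the `lastItem` field, and the object shapes are assumptions for illustration; the write-up does not specify how OrderGenie models them.

```javascript
// Hypothetical intent shape: { type, item?, quantity? }.
// Hypothetical order shape: { items: [{ name, quantity }], lastItem }.
function applyIntent(order, intent) {
  // Copy the items so the caller's order object is not mutated.
  const items = order.items.map((i) => ({ ...i }));

  // "Make that two" names no item, so fall back to the most recent one.
  const target = intent.item ?? order.lastItem;
  if (!target) return { items, lastItem: order.lastItem };

  const index = items.findIndex((i) => i.name === target);

  switch (intent.type) {
    case "AddItem":
      if (index >= 0) items[index].quantity += intent.quantity ?? 1;
      else items.push({ name: target, quantity: intent.quantity ?? 1 });
      break;
    case "SetQuantity":
      if (index >= 0) items[index].quantity = intent.quantity;
      break;
    case "RemoveItem":
      if (index >= 0) items.splice(index, 1);
      break;
    default:
      // Unknown intents leave the order unchanged.
      break;
  }

  // Only remember the target if it is still on the order.
  const stillThere = items.some((i) => i.name === target);
  return { items, lastItem: stillThere ? target : null };
}
```

With this shape, "one burger" followed by "make that two" becomes an `AddItem` intent and then a `SetQuantity` intent with no item, which resolves to the burger through `lastItem`.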

How I built it

OrderGenie leverages multiple Azure AI services working in harmony:

- Azure Speech Service for accurate real-time transcription

- Azure Language Understanding for intent recognition and entity extraction

- Azure Form Recognizer to process menu images

- Azure OpenAI Service for advanced menu interpretation

- React frontend with MongoDB backend for a responsive experience

- GitHub Copilot as my AI pair programmer, accelerating development
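As an illustration of the menu-scanning step, the sketch below turns lines of recognized text into structured menu items. It assumes the OCR output is already available as an array of strings and that each item line ends with a price; the function name `parseMenuLines` and the line format are hypothetical, and real menus would likely need more rules or a language-model pass.

```javascript
// Assumes each item line looks like "Item name ...... 8.50" or "Item name $7".
function parseMenuLines(lines) {
  // Capture the name lazily, skip filler dots/spaces, then capture a trailing price.
  const pricePattern = /^(.+?)[\s.]*\$?(\d+(?:\.\d{1,2})?)\s*$/;
  const items = [];
  for (const line of lines) {
    const match = line.trim().match(pricePattern);
    if (!match) continue; // skip headings and lines without a trailing price
    items.push({ name: match[1].trim(), price: Number(match[2]) });
  }
  return items;
}
```

Lines such as section headings ("STARTERS") carry no trailing price, so they are dropped rather than stored as items.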

Challenges I ran into

- Training the language model to understand diverse ordering phrases and negations

- Managing Azure service rate limits within the free student tier

- Implementing real-time voice recognition that works consistently

- Creating a robust menu scanning system that handles different formats

- Developing an intuitive user experience for voice-based interactions
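One common way to cope with rate limits is to retry with exponential backoff. The sketch below is not taken from the project: `callService` is a hypothetical function returning a fetch-style response, and the delay values are arbitrary. It assumes the service signals throttling with HTTP 429 and may send a `Retry-After` header in seconds.

```javascript
// Delay before retry number `attempt` (0-based): honor Retry-After when present,
// otherwise back off exponentially up to a cap.
function backoffDelayMs(attempt, retryAfterSeconds, baseMs = 500, maxMs = 8000) {
  if (Number.isFinite(retryAfterSeconds) && retryAfterSeconds > 0) {
    return retryAfterSeconds * 1000;
  }
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

// `callService` is a hypothetical async function returning a fetch-style Response.
async function withRetry(callService, maxAttempts = 4) {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const response = await callService();
    if (response.status !== 429) return response; // not rate limited
    if (attempt === maxAttempts - 1) break; // no retries left
    // A missing header yields Number(null) === 0, which falls back to backoff.
    const retryAfter = Number(response.headers.get("retry-after"));
    const delay = backoffDelayMs(attempt, retryAfter);
    await new Promise((resolve) => setTimeout(resolve, delay));
  }
  throw new Error("Rate limit still in effect after retries");
}
```

Keeping the delay calculation in its own pure function makes it easy to tune the base and cap for a free-tier quota without touching the retry loop.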

Accomplishments that I am proud of

- Successfully trained a custom language model that understands complex ordering commands

- Created a seamless integration between multiple Azure AI services

- Implemented a responsive interface that works across devices

- Built a functional menu scanning system that extracts structured data from images

- Delivered a complete, working system within the hackathon timeframe

What I learned

- The importance of AI services in modern web application development: understanding how AI works and designing database models and API services that can integrate with it

- The power of combining multiple AI services for a cohesive solution

- Techniques for training language models with limited examples

- Strategies for handling API rate limits and service constraints

- Approaches for making voice interfaces intuitive and user-friendly

- The impressive capabilities of Azure's AI ecosystem when leveraged correctly

What's next for OrderGenie - Voice-Powered Restaurant Ordering

- Multi-language support using Azure Translator

- Payment processing integration (with QR code)

- Customer-facing mobile app for self-ordering

- Kitchen display system integration

- Analytics dashboard for restaurant managers

- Expanded menu scanning capabilities with image recognition

Built With

- axios

- azure-ai-language

- azure-ai-speech

- azure-form-recognizer

- azure-openai

- css

- express.js

- github-copilot

- gpt-3.5-turbo

- javascript

- mongodb

- mongoose

- node.js

- nodemon

- react-router

- react.js

- vite

- vs-code
