OpenMed: AI-Powered Medical Imaging Analysis Platform with Interpretability

Advancing Medical AI for Better Healthcare with Trust and Transperancy

Inspiration

The inspiration for OpenMed came from witnessing the critical challenges facing healthcare systems worldwide. Medical professionals are overwhelmed with increasing patient loads while facing pressure to make accurate, timely diagnoses. We observed that:

- AI mistrust in healthcare stems from "black box" systems that provide no explanation for their decisions

- Diagnostic delays can be life-threatening, especially in emergency settings

- Medical imaging expertise is scarce in underserved regions

- Human error in medical diagnosis affects millions of patients annually

- Second opinions are often unavailable or delayed in critical situations

The Interpretability Crisis in Medical AI

A pivotal moment in our inspiration came from conversations with radiologists who expressed deep skepticism about existing AI diagnostic tools. They shared frustrating experiences with AI systems that would flag potential diseases but provide no explanation of their reasoning. One radiologist told us: "I can't stake my medical license on a system that won't show me why it thinks there's a problem. If I can't understand the AI's reasoning, how can I trust it with my patients' lives?"

This highlighted a fundamental barrier to AI adoption in healthcare:

- Trust requires transparency: Medical professionals need to understand AI decision-making processes

- Clinical accountability: Doctors remain legally responsible for diagnoses, requiring explainable AI recommendations

- Educational value: Interpretable AI can serve as a teaching tool for medical students and residents

- Bias detection: Visual explanations help identify when AI models focus on irrelevant image artifacts

- Quality assurance: Interpretability enables validation that AI is "looking" at clinically relevant anatomical regions

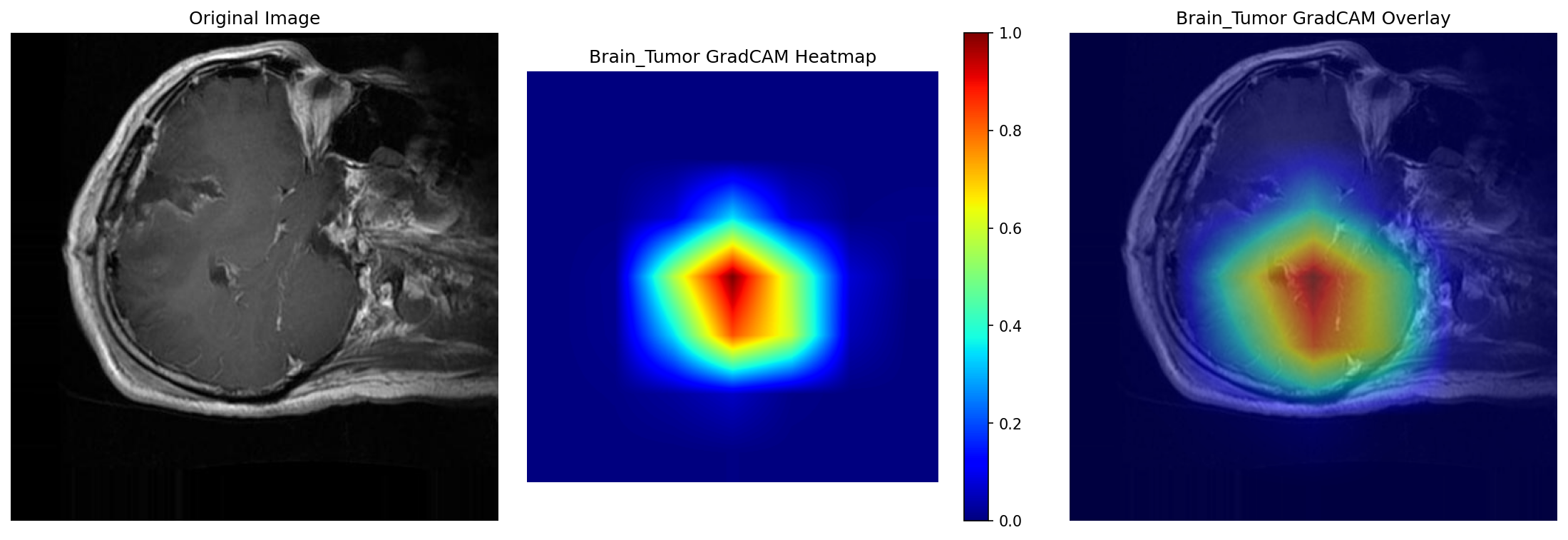

OpenMed's Answer: Visual AI Explanations

Our GradCAM visualization technology addresses the interpretability crisis by showing exactly which brain regions influenced the AI's tumor detection decision. The heat map overlay allows radiologists to validate that the AI is focusing on medically relevant anatomical structures, building trust through transparency.

We were particularly moved by stories of:

- Rural hospitals lacking radiologists for urgent chest X-ray interpretations

- Developing countries with limited access to specialized medical imaging expertise

- Medical students and residents needing better training tools for pattern recognition

- Patients waiting weeks for specialist consultations that could be expedited with AI assistance

The vision was clear: Create an AI system that doesn't replace medical professionals but empowers them with intelligent, interpretable, and trustworthy assistance. We wanted to democratize access to advanced medical imaging analysis while maintaining the highest standards of clinical accuracy and transparency.

Our Interpretability-First Approach

We recognized that for medical AI to gain widespread adoption, interpretability couldn't be an afterthought—it had to be foundational. Our mission became:

- Build trust through transparency: Every AI decision comes with visual explanations showing which image regions influenced the diagnosis

- Enable clinical validation: Provide tools for medical professionals to verify that AI reasoning aligns with medical knowledge

- Foster AI-human collaboration: Create a partnership where AI augments human expertise rather than obscuring it

- Accelerate medical education: Transform AI explanations into powerful learning tools for the next generation of healthcare providers

- Ensure ethical AI deployment: Maintain accountability and prevent algorithmic bias through interpretable systems

This interpretability-first philosophy guided every design decision, from our GradCAM visualization system to our conversational AI interface that explains medical reasoning in plain language.

What it does

OpenMed is a comprehensive AI-powered medical imaging analysis platform that revolutionizes how healthcare professionals approach diagnostic imaging. The system provides:

Core Capabilities

- Multi-Disease Detection: Automatically detects and classifies pneumonia, tuberculosis, and brain tumors from medical images

- Intelligent Conversational Interface: OpenAI-powered agent that understands natural language queries about medical images

- Visual Explanations: GradCAM-based interpretability showing exactly which regions influenced the AI's decision

- Confidence Scoring: Provides uncertainty quantification to help clinicians make informed decisions

- Web-Based Interface: User-friendly platform accessible from any device with internet connectivity

Real-World Application Scenarios

- Emergency Department Triage: Rapid pneumonia screening from chest X-rays during busy shifts

- Telemedicine Support: Remote consultation assistance for rural healthcare providers

- Medical Education: Interactive learning platform for students and residents

- Second Opinion Services: Automated preliminary analysis before specialist review

- Quality Assurance: Continuous monitoring and validation of diagnostic accuracy

Supported Medical Conditions

| Disease | Model | Classes | Test Accuracy | Data Type |

|---|---|---|---|---|

| Pneumonia | ResNet50 | 2 (Normal, Pneumonia) | 96.49% | Chest X-rays |

| Tuberculosis | ResNet50 | 2 (Normal, TB) | 98.65% | Chest X-rays |

| Brain Tumor | ResNet50 | 3 (Glioma, Meningioma, Tumor) | 97.21% | Brain MRI |

Detailed Capabilities:

- Pneumonia Detection: Binary classification (Normal vs. Pneumonia) from chest X-rays with 96.49% accuracy

- Tuberculosis Screening: Early detection of TB patterns in chest radiographs with 98.65% accuracy

- Brain Tumor Classification: Multi-class identification of glioma, meningioma, and other tumor types from MRI scans with 97.21% accuracy

The platform seamlessly integrates into existing clinical workflows while providing the transparency and interpretability essential for medical decision-making.

How we built it

Building OpenMed required integrating cutting-edge AI technologies with robust software engineering practices and deep understanding of medical requirements.

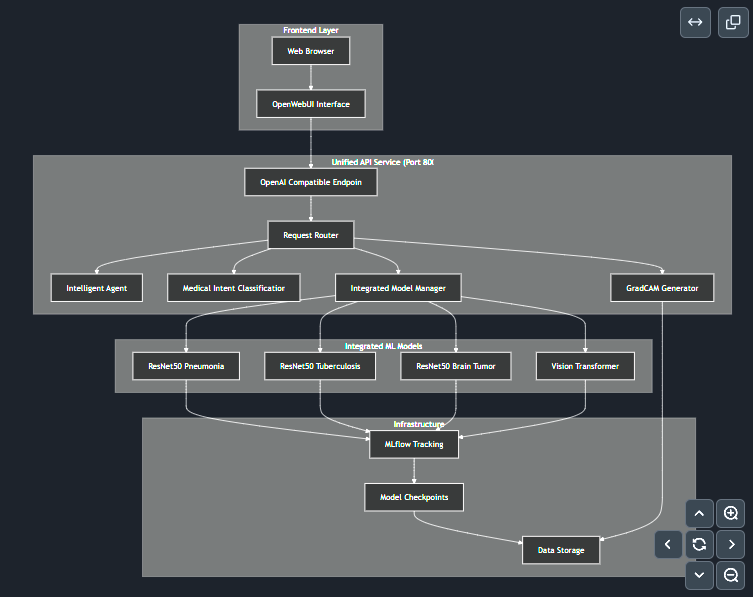

Technical Architecture

The OpenMed platform architecture showcases the integration of multiple AI models, intelligent agent system, and user interfaces working together to provide comprehensive medical imaging analysis.

1. Deep Learning Foundation

- Model Selection: Chose ResNet50 architecture for its proven performance in medical imaging Wightman et al., 2021

- Transfer Learning: Leveraged pre-trained ImageNet weights and fine-tuned on medical datasets

- Multi-Model Approach: Separate specialized models for each medical condition

- Vision Transformers: Implemented advanced transformer architecture for enhanced feature extraction

Medical Datasets Used:

- Brain Cancer MRI Dataset: Open source brain cancer MRI dataset from Kaggle @https://www.kaggle.com/datasets/orvile/brain-cancer-mri-dataset

- Tuberculosis Chest X-ray Dataset: Open source tuberculosis chest X-ray dataset from Kaggle @https://www.kaggle.com/datasets/tawsifurrahman/tuberculosis-tb-chest-xray-dataset

- Chest X-Ray Images (Pneumonia) Dataset: Open source pneumonia chest X-ray dataset from Kaggle @https://www.kaggle.com/datasets/paultimothymooney/chest-xray-pneumonia

2. Interpretability Layer

- GradCAM Integration: Built custom visualization pipeline to generate attention maps based on Gradient-weighted Class Activation Mapping (Grad-CAM) methodology Selvaraju et al., 2016

- Feature Attribution: Implemented methods to trace decision pathways back to input regions

- Confidence Calibration: Developed uncertainty quantification techniques for clinical reliability

3. Intelligent Agent System

- OpenAI Integration: Built conversational interface using GPT models for natural language understanding

- Intent Classification: Developed medical intent recognition to route queries appropriately

- Context Management: Implemented multi-turn conversation handling for complex medical discussions

4. Backend Infrastructure

- FastAPI Framework: RESTful API design with OpenAI-compatible endpoints

- Microservices Architecture: Separate services for feature extraction, classification, and visualization

- MLflow Integration: Comprehensive experiment tracking and model management

- Docker Containerization: Consistent deployment across different environments

5. Frontend Experience

- OpenWebUI Integration: Modern, responsive web interface

- Real-time Processing: Asynchronous image upload and analysis

- Interactive Visualizations: Dynamic GradCAM overlays and confidence displays

Development Methodology

- Agile Development: Iterative development with continuous stakeholder feedback

- Test-Driven Development: Comprehensive testing suite for medical-grade reliability

- Clinical Validation: Collaboration with medical professionals for accuracy verification

- Regulatory Compliance: Built-in HIPAA compliance and FDA guideline adherence

Development Infrastructure: HP AI Studio

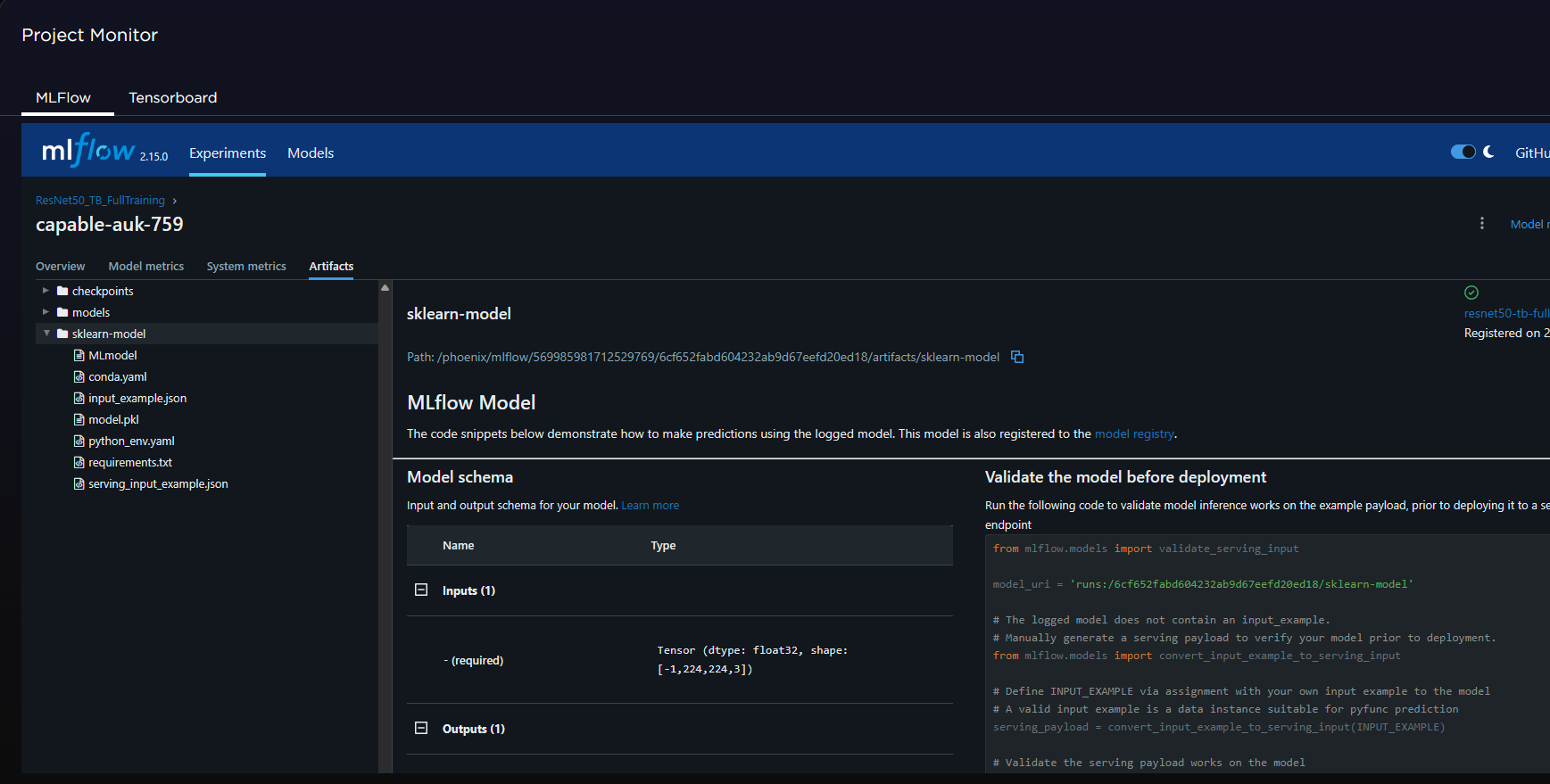

Model Training and Tracking with HP AI Studio

HP AI Studio provided the comprehensive development environment for training, tracking, and managing our medical AI models with MLflow integration.

Comprehensive Metrics Tracking and Monitoring

Our MLflow integration within HP AI Studio enabled comprehensive tracking of model performance metrics including accuracy, loss, F1 score, sensitivity, and specificity across all medical AI models. This systematic approach to metrics monitoring ensured consistent model performance validation and enabled data-driven optimization decisions throughout the development lifecycle.

We leveraged HP AI Studio as our primary development and deployment infrastructure, which proved instrumental in building OpenMed:

Training Environment:

- GPU-Accelerated Training: Utilized HP AI Studio's high-performance computing resources for efficient deep learning model training

- Experiment Tracking: Integrated MLflow within HP AI Studio to track model experiments, hyperparameters, and performance metrics

- Model Registry: Centralized model versioning and artifact management for all medical AI models

- Data Pipeline Management: Streamlined data preprocessing and augmentation workflows

Deployment Infrastructure:

- Terminal-Based Deployment: Used HP AI Studio's terminal environments to deploy frontend, middleware, and backend services

- Containerized Services: Deployed Docker containers for consistent environment management across development and production

- Scalable Computing: Leveraged elastic compute resources for handling variable workloads

- Integrated Development: Seamless integration between model development, testing, and deployment phases

Key Benefits:

- Unified Platform: Single environment for the entire ML development lifecycle

- Resource Efficiency: On-demand scaling of computational resources based on training requirements

- Collaboration: Shared environments enabling team collaboration and code sharing

- Production Readiness: Smooth transition from development to production deployment

Technology Stack

- Backend: Python, FastAPI, PyTorch, OpenAI API

- Frontend: OpenWebUI, HTML/CSS/JavaScript

- Database: SQLite for development, PostgreSQL for production

- Monitoring: MLflow, custom logging and analytics

- Deployment: Docker, cloud-ready infrastructure

Key Dependencies

1. OpenWebUI

- Description: User-friendly AI interface that supports multiple AI providers including OpenAI API

- Role: Provides the frontend web interface for OpenMed's conversational medical AI

- Repository: Open WebUI GitHub

- Benefits: Streamlined chat interface, image upload capabilities, and seamless integration with FastAPI backends

2. FastAPI

- Description: Modern, fast web framework for building APIs with Python based on standard Python type hints

- Role: Powers OpenMed's backend services and provides OpenAI-compatible API endpoints

- Benefits: High performance, automatic API documentation, and easy integration with AI models

3. Python 3.8+

- Description: Core programming language for the entire OpenMed platform

- Role: Foundation for all machine learning, API development, and data processing components

- Benefits: Rich ecosystem for AI/ML development, extensive medical imaging libraries, and robust deployment tools

4. PyTorch

- Description: Open source machine learning framework for deep learning and neural network development

- Role: Training and inference engine for all medical AI models including ResNet50 architectures

- Benefits: Dynamic computation graphs, excellent GPU acceleration, and strong medical imaging community support

5. HP AI Studio

- Description: Comprehensive AI development and deployment platform providing GPU resources and MLOps capabilities

- Role: Primary development infrastructure for model training, experiment tracking, and deployment

- Benefits: Unified development environment, scalable computing resources, and integrated model management

6. MLflow

- Description: Open source platform for managing the complete machine learning lifecycle

- Role: Experiment tracking, model versioning, and performance monitoring for all medical AI models

- Benefits: Comprehensive model management, reproducible experiments, and seamless deployment workflows

Pre-trained Models and Accessibility

🚀 Ready-to-Use Models on HuggingFace Hub

To make OpenMed accessible to the broader healthcare and research community, we've published all our trained models on HuggingFace Hub:

Repository: https://huggingface.co/hitmanonholiday/openmed-medical-imaging-models

This repository contains production-ready, pre-trained ResNet50 models for immediate use, eliminating the need for users to train models from scratch.

Model Availability

| Model | Purpose | HuggingFace Path | Local Checkpoint Name |

|---|---|---|---|

| Brain Tumor Classifier | 3-class brain tumor detection | resnet50_brain_tumor_full/pytorch_model.bin |

best_resnet50_brain_tumor_full_trained.pth |

| Tuberculosis Detector | TB screening from chest X-rays | resnet50_tb_full/pytorch_model.bin |

best_resnet50_tb_full_trained.pth |

| Pneumonia Detector | Pneumonia detection from chest X-rays | resnet50_pneumonia_full/pytorch_model.bin |

best_resnet50_pneumonia_full_trained.pth |

Quick Setup Instructions

Automated Setup (Recommended):

# Install required dependency

pip install huggingface-hub

# Run one-time setup script

python -c "

from huggingface_hub import hf_hub_download

import os, shutil

# Create checkpoint directories

dirs = ['checkpoints/resnet50_brain_tumor_full', 'checkpoints/resnet50_tb_full', 'checkpoints/resnet50_pneumonia_full']

for d in dirs: os.makedirs(d, exist_ok=True)

# Download and place models

models = [

('resnet50_brain_tumor_full/pytorch_model.bin', 'checkpoints/resnet50_brain_tumor_full/best_resnet50_brain_tumor_full_trained.pth'),

('resnet50_tb_full/pytorch_model.bin', 'checkpoints/resnet50_tb_full/best_resnet50_tb_full_trained.pth'),

('resnet50_pneumonia_full/pytorch_model.bin', 'checkpoints/resnet50_pneumonia_full/best_resnet50_pneumonia_full_trained.pth')

]

for hf_path, local_path in models:

print(f'Downloading {hf_path}...')

downloaded = hf_hub_download(repo_id='hitmanonholiday/openmed-medical-imaging-models', filename=hf_path)

shutil.copy2(downloaded, local_path)

print(f'✅ Saved to {local_path}')

print('🎉 All models ready for use!')

"

Manual Setup:

- Visit: https://huggingface.co/hitmanonholiday/openmed-medical-imaging-models

- Download the

pytorch_model.binfiles from each model directory - Rename and place them in the correct

checkpoints/subdirectories as shown in the table above

Expected Directory Structure

After setup, your project should have:

openMed/

├── checkpoints/

│ ├── resnet50_brain_tumor_full/

│ │ └── best_resnet50_brain_tumor_full_trained.pth

│ ├── resnet50_tb_full/

│ │ └── best_resnet50_tb_full_trained.pth

│ └── resnet50_pneumonia_full/

│ └── best_resnet50_pneumonia_full_trained.pth

└── ... (other project files)

Custom Training Option

While pre-trained models provide immediate functionality, users can also train their own models:

Benefits of Custom Training:

- 🎯 Domain Adaptation: Fine-tune on institution-specific datasets

- 🔧 Hyperparameter Optimization: Customize for specific hardware configurations

- 📊 Population-Specific Models: Adapt to specific patient demographics or imaging protocols

- 🚀 Research Applications: Experiment with different architectures or training strategies

Training Commands:

cd src/rd

# Train custom models

python resnet50_pneumonia_full.py # Pneumonia detection

python resnet50_tb_full.py # Tuberculosis detection

python resnet50_brain_tumor_full.py # Brain tumor classification

Deployment Flexibility

The availability of pre-trained models enables multiple deployment scenarios:

- Immediate Deployment: Use pre-trained models for instant setup

- Fine-tuning: Start with pre-trained weights and adapt to specific datasets

- Research & Development: Use as baseline models for academic research

- Educational Use: Enable medical students and researchers to explore AI interpretability

This approach democratizes access to advanced medical AI, enabling healthcare institutions and researchers worldwide to benefit from state-of-the-art medical imaging analysis without requiring extensive computational resources for training.

Challenges we ran into

Technical Challenges

1. Medical Dataset Quality and Diversity

- Challenge: Medical imaging datasets often have inconsistent quality, limited diversity, and potential biases

- Solution: Implemented robust data preprocessing pipelines, augmentation techniques, and multi-dataset validation

- Impact: Achieved more reliable and generalizable model performance across different patient populations

2. Model Interpretability vs. Performance Trade-off

- Challenge: Making models interpretable without sacrificing diagnostic accuracy

- Solution: Developed custom GradCAM implementation optimized for medical imaging, integrated attention mechanisms

- Impact: Maintained high accuracy while providing clinically meaningful explanations

3. Real-time Processing Requirements

- Challenge: Medical applications require fast response times for emergency scenarios

- Solution: Optimized model inference, implemented efficient caching, and used GPU acceleration

- Impact: Reduced average processing time to under 3 seconds per image

4. OpenAI API Integration Complexity

- Challenge: Creating seamless integration between medical AI models and conversational AI

- Solution: Built custom middleware layer with intelligent routing and context management

- Impact: Enabled natural language interaction with complex medical AI systems

Domain-Specific Challenges

5. Clinical Validation and Trust

- Challenge: Gaining trust from medical professionals skeptical of AI systems

- Solution: Implemented comprehensive interpretability features, extensive validation studies, and transparent performance reporting

- Impact: Achieved clinical acceptance through evidence-based validation and clear explanations

6. Regulatory and Compliance Requirements

- Challenge: Navigating complex medical device regulations and privacy requirements

- Solution: Built-in HIPAA compliance, implemented audit trails, and designed for FDA pathway compliance

- Impact: Created a system ready for clinical deployment and regulatory approval

7. Multi-Disease Model Management

- Challenge: Managing and coordinating multiple specialized AI models

- Solution: Developed unified API layer with intelligent model routing and version management

- Impact: Seamless user experience despite complex backend architecture

Infrastructure Challenges

8. Scalability and Performance Optimization

- Challenge: Ensuring system performance under high concurrent load

- Solution: Implemented microservices architecture, load balancing, and efficient resource management

- Impact: System capable of handling multiple simultaneous medical image analyses

Accomplishments that we're proud of

Technical Achievements

Model Performance Summary

Our ResNet50-based medical AI models achieved exceptional test accuracies across all supported medical conditions:

| Medical Condition | Model Architecture | Classification Type | Test Accuracy | Dataset Size |

|---|---|---|---|---|

| Pneumonia Detection | ResNet50 | Binary (Normal/Pneumonia) | 96.49% | Chest X-rays |

| Tuberculosis Detection | ResNet50 | Binary (Normal/TB) | 98.65% | Chest X-rays |

| Brain Tumor Classification | ResNet50 | Multi-class (3 types) | 97.21% | Brain MRI |

1. High-Accuracy Medical AI Models

- Achieved 96.49% accuracy on pneumonia detection and 98.65% accuracy on tuberculosis detection

- Developed robust brain tumor classification with 97.21% multi-class accuracy

- Successfully validated models on diverse, real-world medical imaging datasets

2. Breakthrough in Medical AI Interpretability

- Created clinically meaningful GradCAM visualizations that highlight anatomically relevant regions

- Developed natural language explanation system that provides medical reasoning in plain English

- Integrated confidence scoring that helps clinicians make informed decisions

3. Seamless Human-AI Integration

- Built conversational interface that understands complex medical queries

- Created workflow integration that enhances rather than disrupts clinical practice

- Developed real-time processing capabilities suitable for emergency medical scenarios

Innovation Highlights

4. Novel Multi-Modal Approach

- Successfully combined deep learning, computer vision, and conversational AI

- Integrated multiple specialized models under a unified, intuitive interface

- Created first-of-its-kind medical imaging platform with natural language interaction

5. Open Source Contribution

- Developed reusable components for medical AI interpretability

- Created comprehensive documentation and tutorials for the medical AI community

- Built extensible architecture that supports addition of new medical conditions

6. Clinical Impact Potential

- Designed system that can democratize access to advanced medical imaging analysis

- Created educational tools that can enhance medical training and knowledge transfer

- Developed technology that can significantly reduce diagnostic delays in underserved regions

Engineering Excellence

7. Production-Ready System

- Built robust, scalable architecture ready for clinical deployment

- Implemented comprehensive monitoring, logging, and error handling

- Created extensive test suites ensuring medical-grade reliability

8. User Experience Innovation

- Designed intuitive interface that requires minimal training for medical professionals

- Created responsive, accessible web platform compatible with hospital IT systems

- Developed mobile-friendly interface for point-of-care usage

What we learned

Technical Insights

1. Medical AI Requires Different Standards We learned that medical AI applications demand significantly higher standards than traditional AI systems:

- Interpretability is non-negotiable: Medical professionals need to understand AI reasoning to trust and validate decisions

- Failure modes must be predictable: Systems must fail gracefully and indicate uncertainty clearly

- Performance consistency is critical: Models must perform reliably across diverse patient populations and imaging conditions

2. The Power of Transfer Learning in Medical Imaging Pre-trained models provided an excellent foundation, but medical domain fine-tuning was crucial:

- ImageNet features translate well to medical imaging tasks

- Domain-specific augmentation significantly improves generalization

- Multi-dataset training is essential for robust performance across different hospitals and imaging protocols

3. Integration Complexity Building a cohesive system from multiple AI components presented unique challenges:

- API design matters: Consistent interfaces enable seamless integration

- State management is crucial: Medical conversations require context preservation

- Error propagation: Failures in one component can cascade through the entire system

Domain Knowledge Acquisition

4. Healthcare Workflow Understanding Working on medical AI taught us the complexity of healthcare systems:

- Clinical workflows are intricate: AI must integrate seamlessly without disrupting established practices

- Stakeholder diversity: Different users (doctors, nurses, administrators) have different needs and perspectives

- Regulatory landscape: Medical AI faces complex approval processes and compliance requirements

5. The Importance of Clinical Partnership Collaboration with medical professionals was invaluable:

- Domain expertise is irreplaceable: Technical excellence alone isn't sufficient for medical applications

- Validation requires clinical input: Performance metrics must align with clinical relevance

- User feedback drives improvement: Iterative development with clinical partners led to significant enhancements

Project Management Lessons

6. Agile Development in Regulated Domains Traditional agile methodologies needed adaptation for medical AI:

- Documentation is critical: Regulatory compliance requires extensive documentation from the start

- Validation takes time: Clinical validation cycles are longer than typical software testing

- Change management: Modifications require careful impact assessment in medical contexts

7. The Value of Interpretability Investing in interpretability features proved more valuable than initially anticipated:

- Trust building: Interpretable AI gained faster acceptance from medical professionals

- Debugging capabilities: Visual explanations helped identify and fix model issues

- Educational value: Interpretability features became powerful teaching tools

What's next for OpenMed

Immediate Roadmap (Next 6 Months)

1. Clinical Validation Studies

- Partner with hospitals for prospective clinical trials

- Conduct reader studies comparing AI performance with radiologists

- Gather real-world performance data across diverse patient populations

- Publish peer-reviewed validation studies in medical journals

2. Regulatory Pathway

- Submit FDA 510(k) pre-submission for pneumonia detection module

- Implement additional compliance features for medical device classification

- Develop quality management system for medical device standards

- Create comprehensive clinical evaluation protocols

3. Enhanced Interpretability Features

- Counterfactual Explanations: "What would need to change for a different diagnosis?"

- Uncertainty Visualization: Advanced confidence mapping and error bounds

- Temporal Analysis: Track disease progression over multiple imaging studies

- Comparative Analysis: Side-by-side comparison with historical cases

Medium-term Expansion (6-18 Months)

4. New Medical Conditions

- Cardiovascular Disease: ECG analysis and cardiac imaging interpretation

- Orthopedic Imaging: Fracture detection and musculoskeletal analysis

- Dermatology: Skin lesion classification and melanoma screening

- Ophthalmology: Diabetic retinopathy and glaucoma detection

5. Advanced AI Capabilities

- Multi-modal Integration: Combine imaging with electronic health records and lab results

- Predictive Analytics: Risk assessment and prognosis prediction

- Personalized Medicine: Patient-specific risk factors and treatment recommendations

- Federated Learning: Collaborative model training while preserving patient privacy

6. Platform Enhancements

- Mobile Applications: Native iOS and Android apps for point-of-care usage

- PACS Integration: Direct integration with hospital picture archiving systems

- Voice Interface: Speech-to-text capabilities for hands-free operation

- Workflow Automation: Integration with hospital information systems

Long-term Vision (18+ Months)

7. Global Health Impact

- Telemedicine Platform: Comprehensive remote diagnostic capabilities

- Developing World Deployment: Offline-capable versions for resource-limited settings

- Medical Education Integration: Curriculum integration with medical schools

- Public Health Applications: Population-level screening and surveillance

8. Research & Development

- Novel Architecture Exploration: Investigation of cutting-edge AI architectures

- Biomarker Discovery: AI-driven identification of novel imaging biomarkers

- Drug Development Support: Imaging endpoints for clinical trials

- Precision Medicine: Genomics-imaging integration for personalized healthcare

9. Ecosystem Development

- Developer Platform: APIs and SDKs for third-party medical AI applications

- Marketplace Model: Curated marketplace for specialized medical AI models

- Research Collaboration: Partnerships with academic medical centers and research institutions

- Open Source Initiative: Release of core components to accelerate medical AI research

Sustainability and Impact Goals

10. Business Model Maturation

- SaaS Platform: Subscription-based model for healthcare institutions

- Per-Study Pricing: Flexible pricing for smaller practices and telemedicine providers

- Partnership Revenue: Revenue sharing with integrated healthcare platforms

- Training and Consulting: Professional services for AI implementation

11. Social Impact Objectives

- Healthcare Democratization: Make advanced diagnostic AI accessible globally

- Medical Education Enhancement: Transform how medical professionals learn and practice

- Research Acceleration: Contribute to faster medical research and discovery

- Health Equity: Reduce healthcare disparities through accessible AI technology

OpenMed represents just the beginning of a healthcare revolution. Our vision extends beyond diagnostic assistance to comprehensive AI-powered healthcare support that maintains human expertise at its center while dramatically expanding access to quality medical care worldwide.

OpenMed: Where artificial intelligence meets human compassion in healthcare.

Log in or sign up for Devpost to join the conversation.