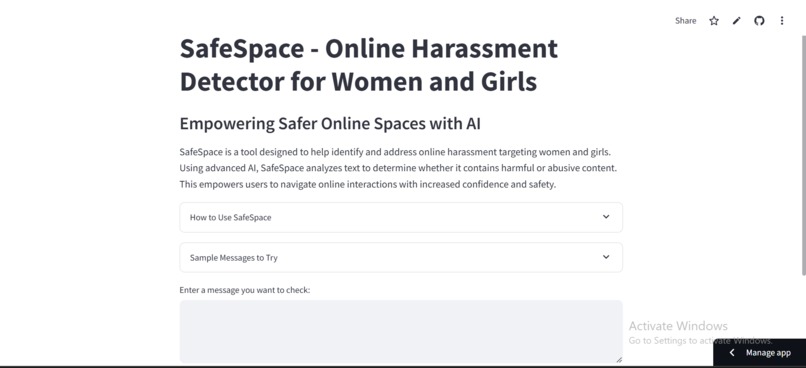

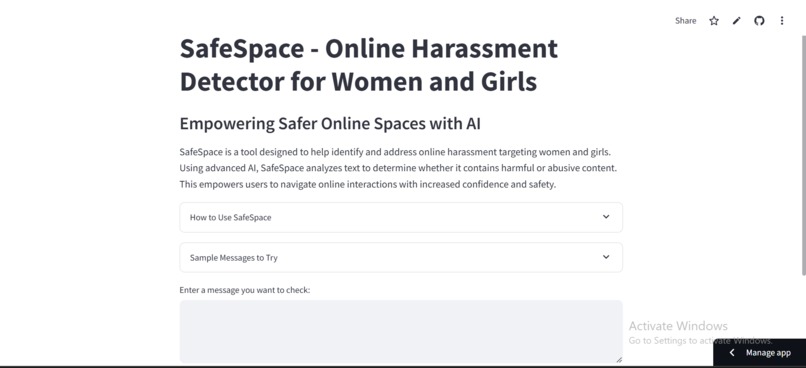

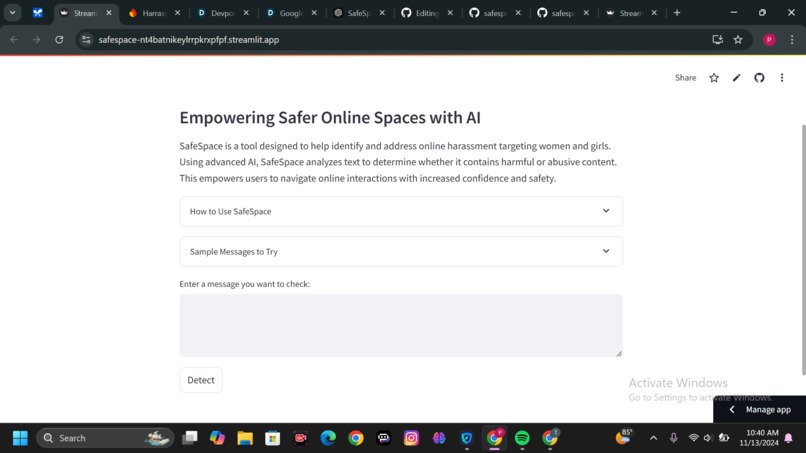

Overview of the welcome page of the Streamlit application.

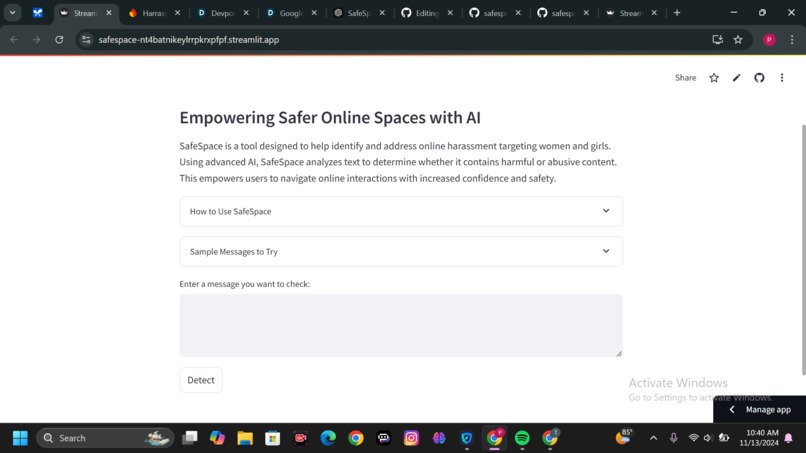

Continuation of the welcome page.

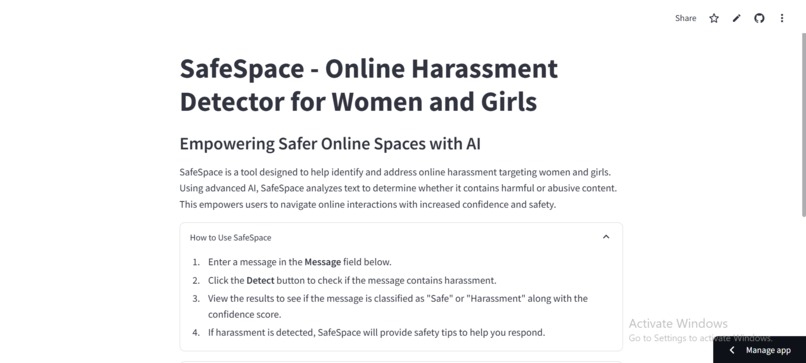

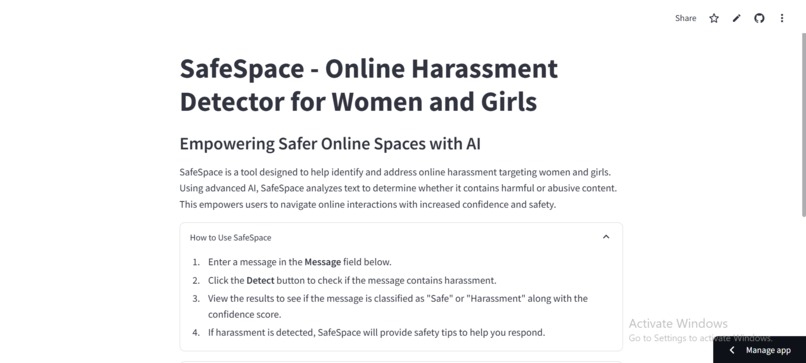

Result of opening the “How to use SafeSpace” expander.

Result of opening the “Sample messages” expander.

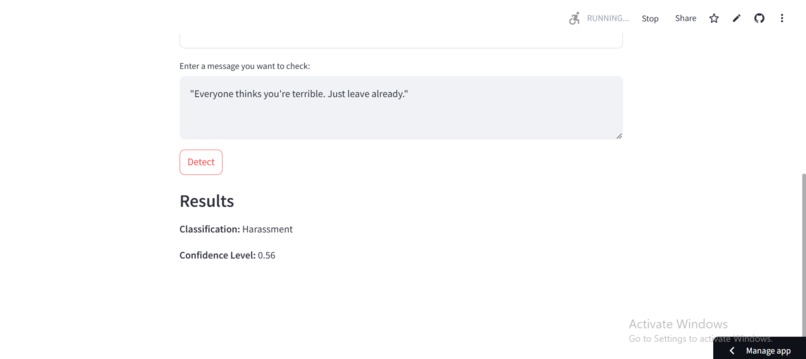

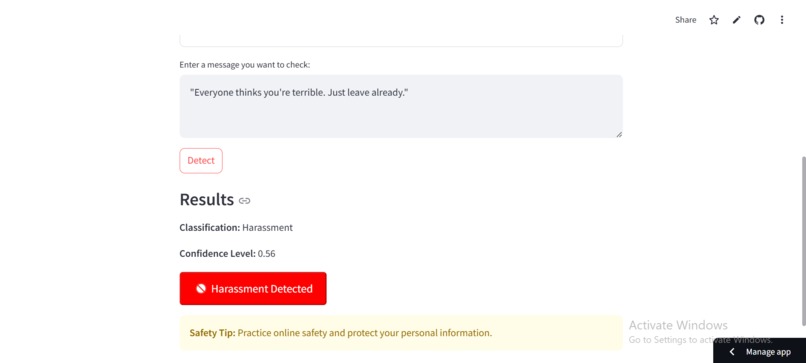

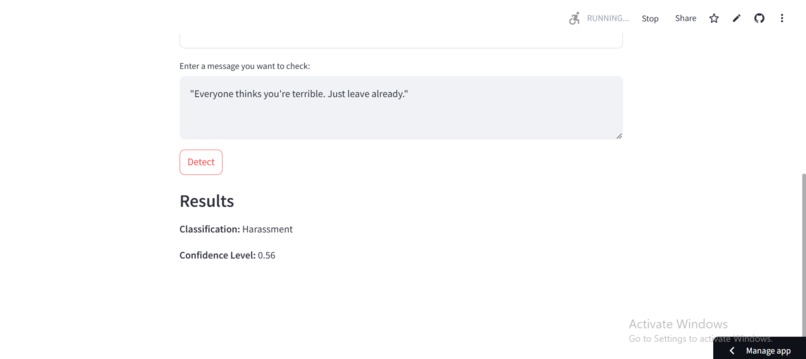

Detection results for a sample harassment message.

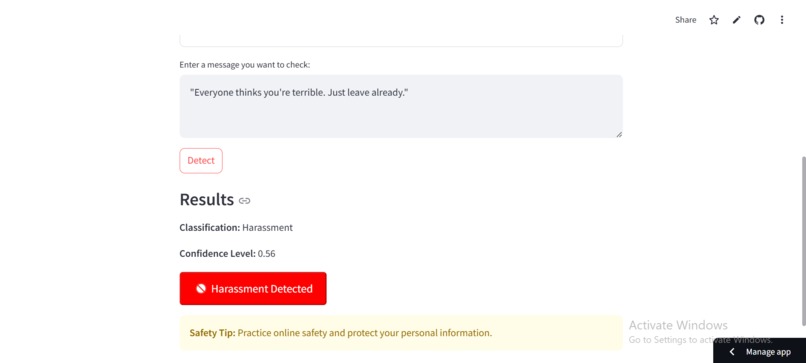

Color-coded indicator for a harassment result, together with a safety tip.

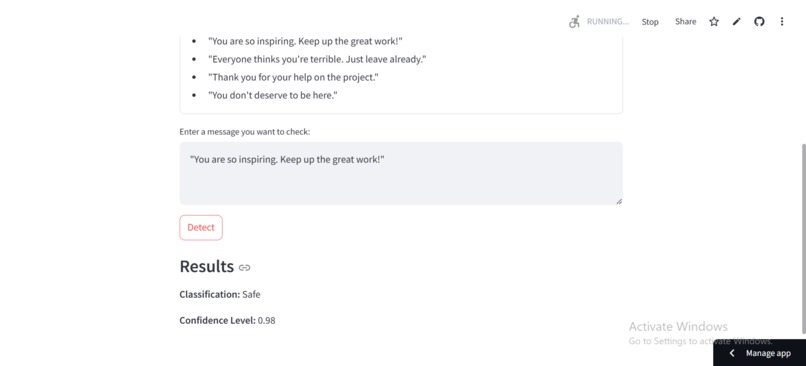

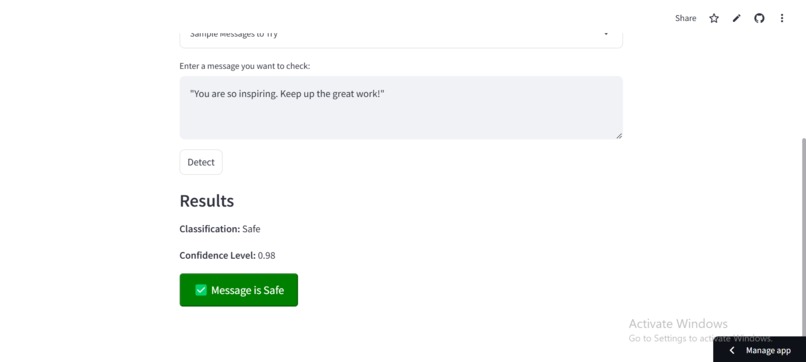

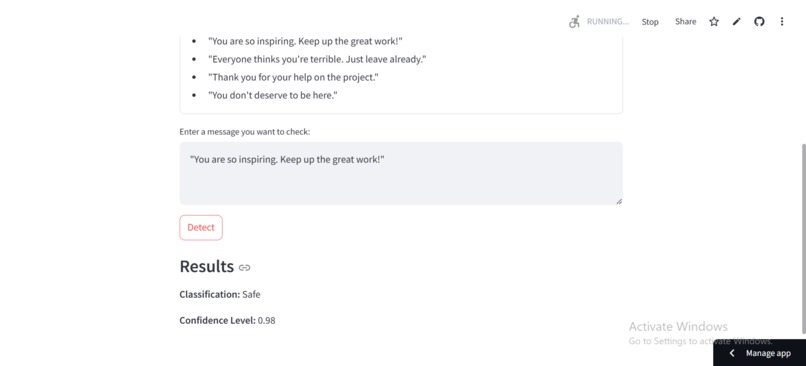

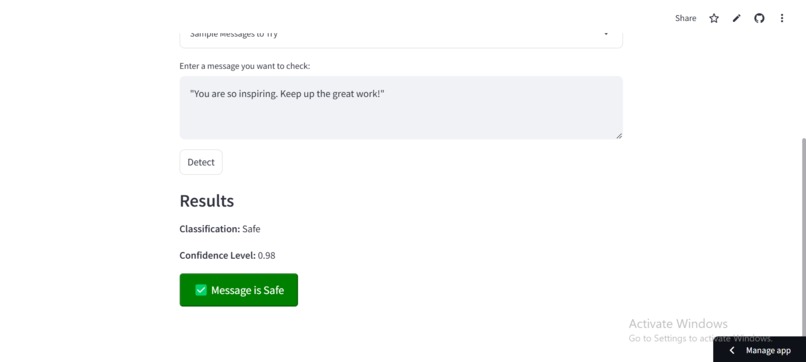

Example of entering a safe message.

Final result for the safe message, with a color-coded indicator showing that it is safe.

Inspiration

The project was inspired by the urgent need to create safer online spaces for women and girls, who often face disproportionate harassment on digital platforms. Recognizing that such abuse can hinder their participation and expression online, the project aligns with SDG 5 by promoting gender equity and addressing this pervasive issue. The solution aims to empower users and provide them with actionable steps to mitigate online harassment, enhancing online inclusivity and safety.

What it does

The Online Harassment Detector, SafeSpace, uses natural language processing (NLP) to classify text as either “Safe” or “Harassment.” For each message it analyzes, it gives users immediate feedback, a confidence score, and supportive guidance if harassment is detected. The system also saves these reports securely in a Firebase database, so that harassment patterns can be tracked and analyzed while user privacy is protected.
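The classification step can be sketched roughly as follows. This is a minimal illustration, not SafeSpace's actual code. It assumes the Hugging Face `pipeline("text-classification", ...)` API with the cardiffnlp/twitter-roberta-base-offensive model, and that the model names its positive class either `offensive` or `LABEL_1` (the exact label names depend on the model's config). The 0.5 threshold, the `classify` and `load_classifier` helpers, and the tip text are hypothetical placeholders.

```python
from typing import Any, Callable, Dict, List, Optional

# Assumed names of the "offensive" class for cardiffnlp/twitter-roberta-base-offensive.
# Depending on the model config, the pipeline may report it as "offensive" or "LABEL_1".
OFFENSIVE_LABELS = {"offensive", "label_1"}

# Hypothetical supportive guidance shown when harassment is detected.
SAFETY_TIP = (
    "You are not alone. Consider blocking the sender, saving screenshots "
    "as evidence, and reporting the account to the platform."
)

# Any callable that behaves like a Hugging Face text-classification pipeline.
Classifier = Callable[[str], List[Dict[str, Any]]]


def load_classifier() -> Classifier:
    """Build the Hugging Face pipeline; imported lazily because it is heavy."""
    from transformers import pipeline

    return pipeline(
        "text-classification",
        model="cardiffnlp/twitter-roberta-base-offensive",
    )


def classify(
    text: str,
    classifier: Optional[Classifier] = None,
    threshold: float = 0.5,  # hypothetical decision threshold
) -> Dict[str, Any]:
    """Return a "Safe"/"Harassment" verdict, a confidence score and an optional tip."""
    if classifier is None:
        classifier = load_classifier()
    # For a single string the pipeline returns its top label, e.g. [{"label": ..., "score": ...}].
    top = classifier(text)[0]
    score = float(top["score"])
    # Binary model: P(offensive) is either the top score or its complement.
    if str(top["label"]).lower() in OFFENSIVE_LABELS:
        p_offensive = score
    else:
        p_offensive = 1.0 - score
    is_harassment = p_offensive >= threshold
    return {
        "label": "Harassment" if is_harassment else "Safe",
        "confidence": p_offensive if is_harassment else 1.0 - p_offensive,
        "tip": SAFETY_TIP if is_harassment else None,
    }
```

Passing the classifier in as a parameter keeps the verdict logic testable without downloading the model. In a Streamlit app, the loaded pipeline would usually be cached (for example with `st.cache_resource`) rather than rebuilt for every message, and the verdict could be shown with `st.error` or `st.success`.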

How I built it

We built SafeSpace using the Hugging Face Transformers library, leveraging the cardiffnlp/twitter-roberta-base-offensive model for text classification. The solution integrates Streamlit for an interactive web-based interface, Firebase for secure report storage, and custom functions to manage user authentication and data handling. The app is designed to be user-friendly, giving actionable tips to users facing online abuse, and storing data securely to help identify trends and improve the model.
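The Firebase report-storage step could look something like the sketch below. It is an illustration under stated assumptions, not the project's code: it assumes the `firebase_admin` Python SDK with Cloud Firestore (rather than the Realtime Database), a service-account key file, and a collection named `reports`. The field names and the SHA-256 hashing of the user id are hypothetical choices. An unsalted hash pseudonymizes the id but does not fully anonymize it.

```python
import hashlib
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def get_firestore_client(key_path: str):
    """Initialise the default firebase_admin app once and return a Firestore client."""
    import firebase_admin
    from firebase_admin import credentials, firestore

    try:
        firebase_admin.get_app()  # raises ValueError if no default app exists yet
    except ValueError:
        firebase_admin.initialize_app(credentials.Certificate(key_path))
    return firestore.client()


def build_report(
    text: str, label: str, confidence: float, user_id: Optional[str] = None
) -> Dict[str, Any]:
    """Assemble a report; the user id is stored only as a SHA-256 digest."""
    return {
        "text": text,
        "label": label,
        "confidence": round(confidence, 4),
        "user_hash": hashlib.sha256(user_id.encode("utf-8")).hexdigest() if user_id else None,
        "created_at": datetime.now(timezone.utc),
    }


def save_report(db: Any, report: Dict[str, Any], collection: str = "reports") -> str:
    """Add the report to a Firestore collection and return the new document id."""
    # CollectionReference.add returns (update_time, document_reference).
    _update_time, doc_ref = db.collection(collection).add(report)
    return doc_ref.id
```

A call such as `save_report(get_firestore_client("serviceAccountKey.json"), build_report(text, "Harassment", 0.93, user_id))` would store one report. The key-file name is a placeholder. Taking `db` as an argument lets the storage logic be exercised with a stand-in object instead of a live Firebase project.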

Challenges I ran into

- Multilingual Support: Ensuring the model can adapt to various African languages, as harassment terminology and expressions vary significantly across cultures and languages.
- Firebase Integration: Configuring Firebase for secure data storage while maintaining user privacy was complex, requiring careful setup and handling to meet security standards.
- UI/UX Design: Designing an interface that was both informative and supportive for users encountering distressing content was a nuanced task.

Accomplishments that I am proud of

I am proud to have:

- Developed a functioning prototype that classifies text reliably, offering a practical tool for detecting and addressing online harassment.
- Integrated Firebase to save reports securely, laying the groundwork for future analysis and model improvements.
- Created a resource that not only detects harassment but also gives users actionable tips, offering a response that goes beyond classification.
- Designed a tool that aligns with SDG 5, making strides in promoting gender equity through technology.

What I learned

- Technical Skills: Enhanced our understanding of NLP and of cloud-based storage (Firebase) for handling sensitive data.
- Design Thinking: Developed a user-centered approach, prioritizing a supportive user experience, especially in sensitive contexts.
- Cultural Sensitivity in AI: Recognized the importance of contextualizing AI models for specific regions, such as Africa, where language and social norms vary greatly.

What's next for Online Harassment Detector for Women and Girls

- Multilingual Support: Expanding the model to support African languages, making it more accessible and culturally responsive.
- Enhanced Model: Training the model with additional harassment data to improve accuracy and reduce false positives, especially in diverse contexts.
- Collaboration with NGOs and Governments: Partnering with organizations working on digital safety and gender equality to broaden the tool's reach and impact.
- Feedback Mechanism: Introducing a feedback loop where users can anonymously report if they disagree with classifications, allowing continuous improvement of the model.
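The proposed anonymous feedback loop could start as simply as the sketch below. It is hypothetical: the `Feedback` record, its fields, and the helper names are illustrative assumptions, not part of SafeSpace. Each record stores only the message, the label the app showed, and the label the user believes is correct. No user identifier is kept, and the summary counts reported false positives and false negatives.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Feedback:
    """One anonymous disagreement report; no user identifier is stored."""
    text: str
    predicted: str   # label shown to the user: "Safe" or "Harassment"
    user_label: str  # label the user believes is correct


def summarize(feedback: Iterable[Feedback]) -> Dict[str, float]:
    """Count user-reported false positives/negatives and the overall disagreement rate."""
    items = list(feedback)
    pairs = Counter((f.predicted, f.user_label) for f in items)
    false_pos = pairs[("Harassment", "Safe")]
    false_neg = pairs[("Safe", "Harassment")]
    return {
        "reported_false_positives": false_pos,
        "reported_false_negatives": false_neg,
        "disagreement_rate": (false_pos + false_neg) / len(items) if items else 0.0,
    }


def relabeled_examples(feedback: Iterable[Feedback]) -> List[Tuple[str, str]]:
    """Collect (text, user_label) pairs where the user disagreed, for later review."""
    return [(f.text, f.user_label) for f in feedback if f.user_label != f.predicted]
```

User disagreement is not ground truth. Relabeled examples would need human review before being added to the training data, or the feedback channel could itself be abused to weaken the classifier.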
