Omnnia — Turn Your Love Story Into an Animated Film

Inspiration

It started with a simple question everyone kept asking: "So, how did you two meet?" Every time, I'd light up. I never got tired of the details, the lucky timing, the moments that almost didn't happen. Then one night I thought about my parents. About their parents. About all the love stories that existed before us that we'll never fully know: the ones that were never written down, never recorded, just passed along in fragments until even the fragments faded. What if my kids could actually see our story? Not as a photo album, but as something that feels like being there: warm, lighthearted, a little magical. That's where Omnnia was born. Not from a technical insight or a market gap, but from a feeling: wanting to hold onto something beautiful before time takes it from you.

What It Does

Omnnia transforms real love stories into personalized animated films. Couples share their story (how they met, their first date, the awkward moments, the inside jokes), and Omnnia turns it into a Disney-style animated short that feels uniquely theirs.

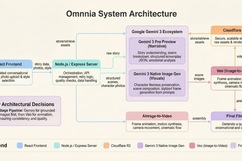

The pipeline: couples provide their story through a guided conversational interface along with photos and details. Gemini 3 Pro Preview processes the raw narrative and crafts an emotionally resonant screenplay, breaking it into structured scenes with visual descriptions, dialogue cues, and mood direction. For each scene, Gemini 3's native image generation creates animated-style images of the couple that capture their real likeness as stylized characters. Each image is then fed into Veo as an image-to-video input, producing animated clips with motion, expression, and cinematic flow.

The result isn't generic AI content. It's their story, with their details, their humor, their turning points, brought to life as an animated film that looks like them and feels like a memory you can watch.

How We Built It

Gemini 3 is both the brain and the eyes of Omnnia. It handles two distinct roles across the pipeline:

Gemini 3 Pro Preview (Text Generation) processes unstructured couple stories and outputs structured JSON screenplays with scene breakdowns, visual descriptions, dialogue cues, and emotional direction. It takes the beautiful chaos of how couples actually tell stories (nonlinear, full of tangents and "oh wait, I forgot to mention" moments) and shapes it into coherent cinematic narratives while preserving what makes each story unique. It tracks character details, emotional arcs, and callbacks across scenes so the final film feels like a complete story, not disconnected vignettes.
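To make the "structured JSON screenplay" idea concrete, here is a minimal sketch of how the backend might check the model's reply before any images are generated. The field names (`scenes`, `visualDescription`, `dialogueCues`, `mood`) and the `parseScreenplay` helper are illustrative assumptions, not Omnnia's actual schema.

```javascript
// Hypothetical screenplay shape; these field names are assumptions for
// illustration, not the project's real schema.
const REQUIRED_SCENE_FIELDS = ["visualDescription", "dialogueCues", "mood"];

// Parse the model's raw text reply and validate it.
// Returns { ok: true, screenplay } or { ok: false, errors }.
function parseScreenplay(rawText) {
  let data;
  try {
    data = JSON.parse(rawText);
  } catch (err) {
    return { ok: false, errors: [`invalid JSON: ${err.message}`] };
  }

  if (!data || !Array.isArray(data.scenes) || data.scenes.length === 0) {
    return { ok: false, errors: ["screenplay must contain a non-empty scenes array"] };
  }

  // Collect every missing field so one failed reply yields one complete report.
  const errors = [];
  data.scenes.forEach((scene, i) => {
    for (const field of REQUIRED_SCENE_FIELDS) {
      if (scene == null || scene[field] == null || scene[field] === "") {
        errors.push(`scene ${i + 1}: missing ${field}`);
      }
    }
  });

  return errors.length ? { ok: false, errors } : { ok: true, screenplay: data };
}
```

Rejecting a malformed screenplay at this point is cheaper than discovering a missing field after image or video generation has already started.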

Gemini 3 Native Image Generation takes the couple's reference photos as multimodal input and generates animated-style character images that preserve their real likeness in each scene. These aren't generic avatars; they're stylized versions grounded in the actual couple's features, their energy, and the way they stand together, rendered in the context of each specific scene. To maintain consistency across 6 to 12 scenes, we anchor every generation with persistent character descriptions (hair color, build, clothing, distinguishing features) extracted from the photos during initial processing.
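The anchoring idea can be sketched as a small prompt builder: the same character block is prepended to every scene prompt, so each generation starts from identical descriptions. The helper names and the character fields (`name`, `hair`, `build`, `clothing`, `distinguishing`) are hypothetical and only mirror the attributes listed above.

```javascript
// Hypothetical character sheet entry, filled in once from the reference photos.
function describeCharacter(c) {
  return `${c.name}: ${c.hair} hair, ${c.build} build, wearing ${c.clothing}; ${c.distinguishing}`;
}

// Prepend the same character block to every scene prompt so each image
// generation is anchored to identical descriptions.
function buildScenePrompt(characters, scene) {
  const anchors = characters.map(describeCharacter).join("\n");
  return [
    "Characters (keep consistent in every scene):",
    anchors,
    "",
    `Scene: ${scene.visualDescription}`,
    `Mood: ${scene.mood}`,
  ].join("\n");
}
```

Because the anchor text is built from one shared character sheet rather than rewritten per scene, a change to a character (for example a different outfit) propagates to every prompt at once.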

Veo 3.1 Image-to-Video API receives each generated scene image and produces animated clips with motion, camera movement, expression, and cinematic flow. This two-stage approach (Gemini 3 for grounded still images first, then Veo for animation) was our key architectural breakthrough. Our first attempt at direct text-to-video produced inconsistent, lifeless results. The image-first pipeline gave Veo a strong visual anchor, and the quality jumped dramatically.
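A rough sketch of the image-first flow follows. `generateImage` and `animateImage` are hypothetical async callbacks standing in for the Gemini 3 and Veo calls; they are injected so the sketch makes no claim about either SDK's real signatures. The `scene.motion` field is likewise an assumption.

```javascript
// Two-stage render for one scene. `generateImage` and `animateImage` are
// hypothetical callbacks wrapping the image and video calls.
async function renderScene(scene, referencePhotos, { generateImage, animateImage }) {
  // Stage 1: a still image grounded in the couple's reference photos.
  const still = await generateImage({ prompt: scene.visualDescription, referencePhotos });

  // Stage 2: the still becomes the visual anchor for the video model.
  // `scene.motion` is an assumed optional field; fall back to the description.
  const clip = await animateImage({ image: still, prompt: scene.motion ?? scene.visualDescription });

  return { sceneId: scene.id, still, clip };
}

// Render scenes one after another, keeping results in story order.
async function renderFilm(scenes, referencePhotos, deps) {
  const results = [];
  for (const scene of scenes) {
    results.push(await renderScene(scene, referencePhotos, deps));
  }
  return results;
}
```

Injecting the two stages as callbacks also makes the flow easy to exercise with stubs, without spending generation quota.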

The frontend is React with a conversational story input flow. The backend is Node.js/Express orchestrating Gemini 3 API calls, Veo generation, and Cloudflare R2 storage.

Challenges

Our biggest challenge was video quality, and the two-stage pipeline was what solved it. Our first approach fed scene descriptions directly into video generation, and the results were inconsistent and lifeless. Generating a grounded still image first with Gemini 3, then animating it with Veo, gave dramatically better results and became our architectural foundation.

Maintaining character likeness across 6 to 12 scenes required anchoring every generation with persistent character descriptions extracted from reference photos. We also built retry logic and quality-checking heuristics to handle API output variability. Finally, we developed a specialized prompt construction layer that translates emotional story beats into precise visual specifications, encoding lighting, camera angle, character proximity, and facial expression derived from narrative context.
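The retry-plus-quality-check pattern might look something like the sketch below. `generate` and `isAcceptable` are hypothetical callbacks supplied by the caller, and the attempt limit and exponential backoff are assumptions; the write-up does not say how Omnnia's retries are actually scheduled.

```javascript
// Retry a generation step until its output passes a quality check.
// `generate` and `isAcceptable` are hypothetical caller-supplied callbacks.
async function generateWithRetry(generate, isAcceptable, { maxAttempts = 3, baseDelayMs = 500 } = {}) {
  let lastError = new Error("no attempts made");
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      const output = await generate(attempt);
      if (await isAcceptable(output)) return output;
      lastError = new Error(`attempt ${attempt}: output failed quality check`);
    } catch (err) {
      lastError = err; // API error: remember it and try again
    }
    if (attempt < maxAttempts) {
      // Assumed exponential backoff: baseDelayMs, then 2x, 4x, ...
      await new Promise((resolve) => setTimeout(resolve, baseDelayMs * 2 ** (attempt - 1)));
    }
  }
  throw lastError;
}
```

Treating a failed quality check the same way as a thrown API error keeps the calling code simple: it either gets an acceptable output or a single error describing the last failure.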

Accomplishments

We built something that makes people emotional. That sounds simple, but it's the hardest thing to engineer. The first time we watched a complete generated film, it didn't feel like a tech demo. It felt like a memory. That moment told us we were building something real. Every couple we've shown it to has said the same thing: "Can you make one for us?" That's more than polite feedback.

What's Next

Next up: voice narration layered over the animation, AI-composed soundtracks that match each story's emotional arc, and living love stories, where couples keep adding chapters over time, because a love story doesn't end at the first chapter. Omnnia makes sure it's not just remembered but felt, chapter after chapter, for as long as the love keeps going.

Built With

- cloudflare

- css

- elevenlabs

- express.js

- ffmpeg

- firebase

- gemini

- gemini3

- google-antigravity

- javascript

- lucide

- node.js

- railway

- react

- react-native

- veo

- vercel
