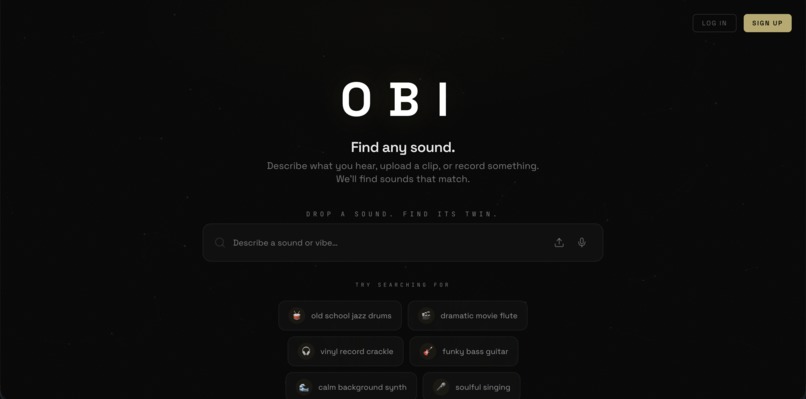

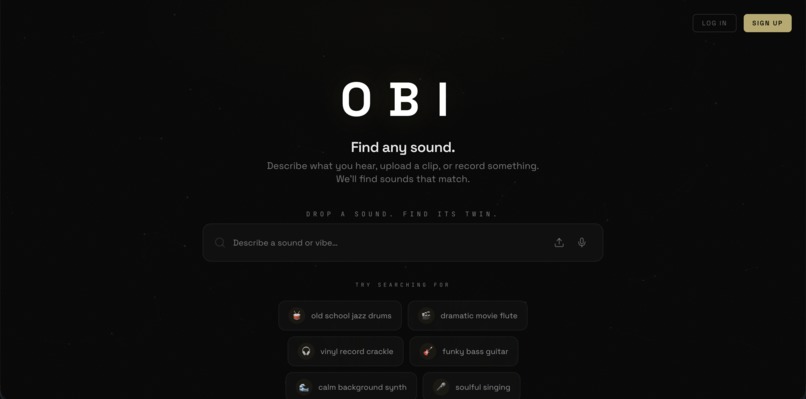

The main search interface where users can type a vibe, upload a clip, or record audio.


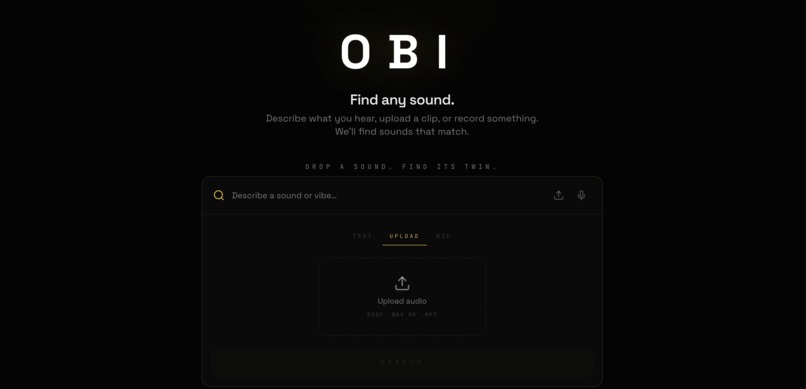

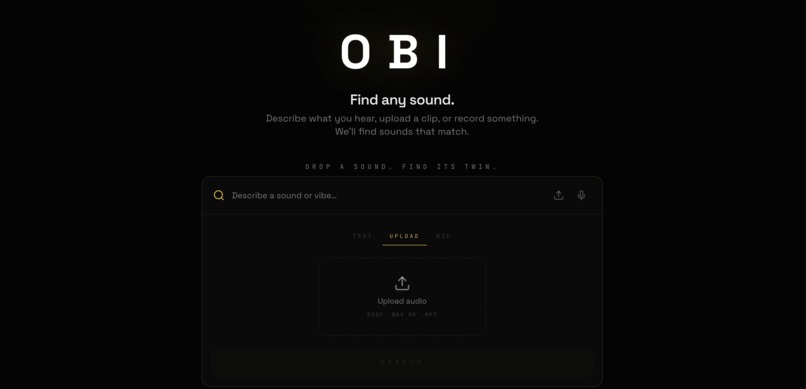

The dedicated file upload interface, allowing users to drag and drop existing .wav or .mp3 samples for similarity matching.


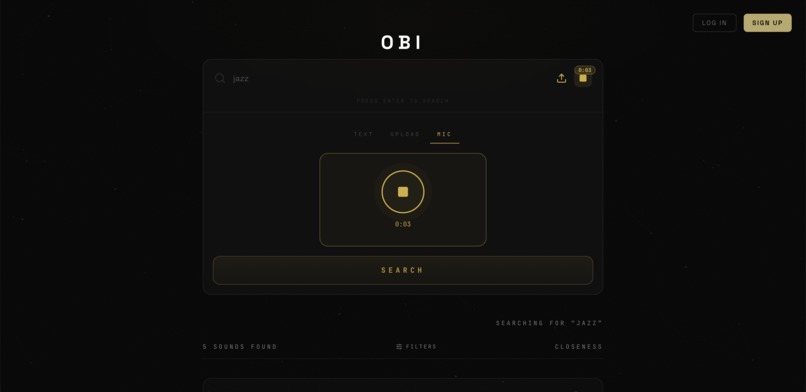

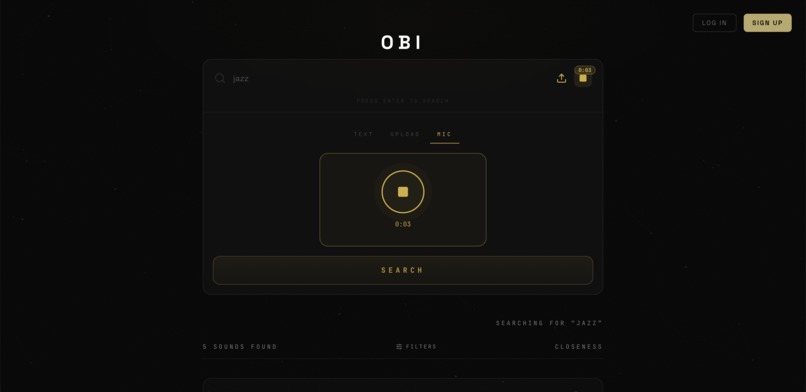

The live microphone interface, allowing producers to record and submit a quick audio snippet directly from the browser.


The loading visualizer that displays while the system processes and compares audio.


The results view for a text query, featuring matching audio files ranked by similarity score and our custom SVG waveform player in action.


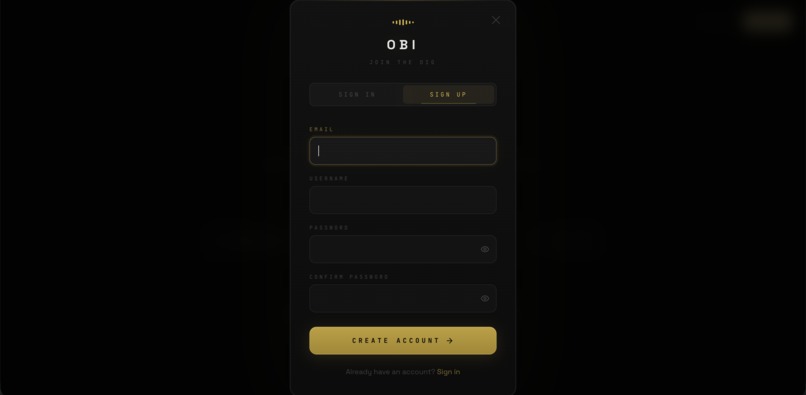

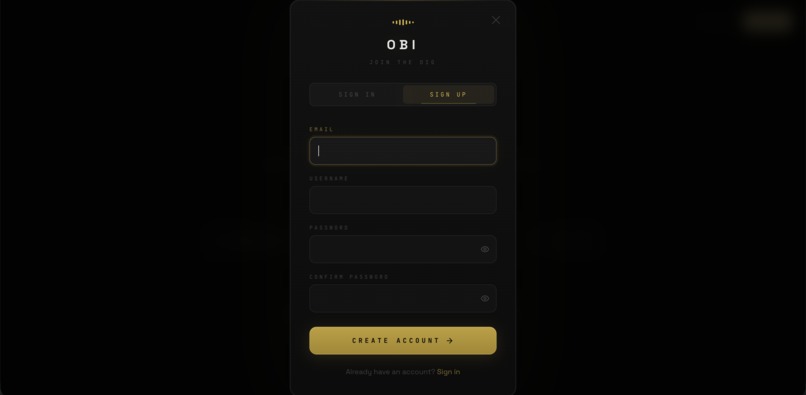

The user registration overlay where producers can sign up to create a new OBI account.


Meet Our Team!

Inspiration

Every producer knows the feeling. You have a sound locked in your head: a specific drum swing, a gritty bass texture, a melodic vibe that you heard somewhere. But you can't find it.

You can't type "dusty warm crackle with a lazy swing" into a search bar and get good results. You're stuck digging through thousands of files, site after site (Splice, Looperman, Freesound), spending hours hunting for something you already know how to describe. The creative flow dies in the search.

We built OBI because that process is broken. The whole experience of making music should be faster, more intuitive, and more human. You describe what you hear, and OBI handles the rest.

What it does

OBI is a multi-modal sonic search engine built for producers, sound designers, and sample hunters.

Three ways to search, one interface:

- Text — type a vibe: "old school jazz drums with a lazy swing"

- Upload — drop a .wav or .mp3 and find sounds like it

- Record — hum something straight from your mic

Under the hood, OBI converts any audio into a 128-dimensional embedding vector using an MFCC-based ML pipeline, then runs cosine similarity search via Qdrant to surface the closest matches. Results are played back through a hand-built waveform player with 48-bar SVG visualization, real-time progress tracking, and click-to-seek.
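The embed-then-rank flow above can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not OBI's actual code: it assumes the 128 dimensions come from 64 MFCC coefficients (for example from `librosa.feature.mfcc(y=y, sr=sr, n_mfcc=64)`) summarized by their mean and standard deviation over time, and it swaps Qdrant for a brute-force cosine ranking over an in-memory matrix. The helper names `pool_mfcc` and `top_k_cosine` are hypothetical.

```python
import numpy as np


def pool_mfcc(mfcc: np.ndarray) -> np.ndarray:
    """Collapse an (n_mfcc, n_frames) MFCC matrix into one fixed-length vector.

    Assumption (hypothetical): 64 coefficients -> 64 means + 64 stds = 128 dims.
    The result is L2-normalized so cosine similarity reduces to a dot product.
    """
    vec = np.concatenate([mfcc.mean(axis=1), mfcc.std(axis=1)])
    norm = np.linalg.norm(vec)
    return vec / norm if norm > 0 else vec


def top_k_cosine(query: np.ndarray, index: np.ndarray, k: int = 5) -> list[tuple[int, float]]:
    """Brute-force stand-in for a Qdrant cosine search.

    index: (n_items, dim) matrix of stored embeddings (rows must be non-zero).
    Returns (row, score) pairs, highest cosine similarity first.
    """
    q = query / np.linalg.norm(query)
    rows = index / np.linalg.norm(index, axis=1, keepdims=True)
    scores = rows @ q  # cosine similarity of every stored vector to the query
    order = np.argsort(-scores)[:k]
    return [(int(i), float(scores[i])) for i in order]


# Usage with synthetic "MFCC" matrices standing in for real clips.
rng = np.random.default_rng(0)
library = np.stack([pool_mfcc(rng.normal(size=(64, 200))) for _ in range(20)])
print(library.shape)  # (20, 128)
print(top_k_cosine(library[7], library, k=3)[0][0])  # 7: a clip is its own best match
```

At real scale, Qdrant performs the same ranking with an approximate HNSW index instead of scanning every row; configuring the collection with cosine distance is what makes its scores comparable to the ones computed here.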

How we built it

We spent significant time planning the full architecture before writing a single line of code. We created the OBI GitHub organization, defined the project roadmap, and agreed on the system design as a team.

The stack:

- Frontend — Next.js 16, React 19, TypeScript (App Router, SSR)

- Styling — Tailwind CSS v4

- Animations — Framer Motion 12, custom Canvas particle system

- Audio — Custom SVG waveform renderer, full playback controls

- Backend — Python, FastAPI

- ML pipeline — librosa, MFCC feature extraction → CLAP embeddings

- Vector search — Qdrant (cosine similarity)

- Storage — Supabase

- Hosting — Vercel (frontend), Railway (backend)
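The custom SVG waveform renderer in the stack above reduces to a little arithmetic: split the decoded samples into 48 equal slices and keep each slice's peak, map the current playback time to a count of "played" bars, and map a click's x-position back to a time. The sketch below is written in Python to keep one language across these examples; OBI's real player is TypeScript/React, and every function name here is hypothetical.

```python
def waveform_bars(samples: list[float], n_bars: int = 48) -> list[float]:
    """Reduce raw samples to n_bars peak heights, normalized to [0, 1].

    Each bar covers an equal slice of the clip; its height is the largest
    absolute sample in that slice, so transients stay visible.
    """
    if not samples:
        return [0.0] * n_bars
    n = len(samples)
    bars = []
    for b in range(n_bars):
        chunk = samples[b * n // n_bars:(b + 1) * n // n_bars]
        bars.append(max((abs(s) for s in chunk), default=0.0))
    peak = max(bars)
    return [h / peak for h in bars] if peak > 0 else bars


def seek_time(click_x: float, width: float, duration_s: float) -> float:
    """Map a click at click_x (pixels from the left edge) to a time in seconds."""
    frac = min(max(click_x / width, 0.0), 1.0)  # clamp clicks outside the SVG
    return frac * duration_s


def bars_played(current_s: float, duration_s: float, n_bars: int = 48) -> int:
    """How many bars to draw in the 'played' color for progress tracking."""
    if duration_s <= 0:
        return 0
    return min(n_bars, int(n_bars * current_s / duration_s))


def bars_to_svg(bars: list[float], width: int = 480, height: int = 64) -> str:
    """Render non-empty bars as vertically centered <rect> elements in an <svg>."""
    slot = width / len(bars)
    rects = []
    for i, h in enumerate(bars):
        bar_h = max(1.0, h * height)  # keep silent bars visible as a 1px line
        y = (height - bar_h) / 2
        rects.append(
            f'<rect x="{i * slot:.1f}" y="{y:.1f}" width="{slot * 0.6:.1f}" height="{bar_h:.1f}"/>'
        )
    return f'<svg width="{width}" height="{height}">' + "".join(rects) + "</svg>"
```

Computing the peaks once per clip keeps rendering cheap: the SVG only ever holds 48 rects, regardless of how long the audio is.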

Challenges we ran into

- Dataset curation: Finding a clean, well-labeled dataset of diverse sounds that the ML pipeline could meaningfully embed was harder than expected. Not all audio is equal: quality, length, and labeling variance all affect search results.

- Visual analysis accuracy: Getting the embedding pipeline to produce meaningfully close results for vibe-based text queries (not just exact sonic matches) required iteration on how we were processing and representing audio features.

- Deployment: Getting the frontend on Vercel to correctly point at the Railway backend instead of localhost caused real friction. Environment variable handling across two separate hosting platforms is not fun.

- Syncing across the stack: Five people, three layers of the system (ML, backend, frontend), and tight time constraints. Connecting everyone's work into a single coherent deployed app required active coordination throughout the build.
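One way to make "not all audio is equal" concrete during dataset curation is an automatic quality gate that rejects clips before they reach the embedding pipeline. This is a hypothetical sketch, not OBI's curation process: the thresholds (`min_s`, `max_s`, `silence_db`, `max_silent_frac`) are illustrative assumptions, and the function expects mono float samples that are already decoded (for example with `librosa.load`). It only covers length and loudness; labeling quality still needs human review.

```python
import numpy as np


def clip_passes_qc(
    y: np.ndarray,
    sr: int,
    min_s: float = 0.25,
    max_s: float = 30.0,
    silence_db: float = -50.0,
    max_silent_frac: float = 0.8,
    frame: int = 2048,
) -> bool:
    """Hypothetical quality gate for a mono clip (float samples in [-1, 1])."""
    duration = len(y) / sr
    if not (min_s <= duration <= max_s):
        return False  # too short to embed meaningfully, or too long to be a "sample"
    n_frames = len(y) // frame
    if n_frames == 0:
        return False
    frames = y[: n_frames * frame].reshape(n_frames, frame)
    rms = np.sqrt(np.mean(frames ** 2, axis=1))        # loudness per frame
    rms_db = 20.0 * np.log10(np.maximum(rms, 1e-10))   # dB relative to full scale (1.0)
    silent_frac = float(np.mean(rms_db < silence_db))
    return silent_frac <= max_silent_frac               # reject mostly-silent clips
```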

Accomplishments that we're proud of

We're proud of how much real progress we made. A live, deployed, functional product with working multi-modal search, where text input returns actual results via Qdrant, is a real MVP.

More than the code though, we planned the full system architecture, made the OBI GitHub organization with a proper roadmap and contributing guide, and treated this like a real product from day one. That level of intention is something we're proud of.

What we learned

- ML pipeline architecture at a real scale — not just "run a model," but understanding the full path from raw audio to stored vector to queried result.

- How audio analysis actually works — the MFCC pipeline, mel spectrograms, feature extraction tradeoffs, why CLAP embeddings represent audio semantically instead of just acoustically.

- Railway deployment — handling FastAPI on Railway, nixpacks config, environment management across a Python backend.

- Cross-team frontend/backend integration — coordinating API contracts across a team on a deadline forces clarity and communication.
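The MFCC pipeline mentioned above boils down to two steps: warp frequency onto the mel scale, then take a DCT of the log mel-band energies. The NumPy sketch below uses the HTK mel formula, m = 2595 * log10(1 + f / 700); note that librosa's own `hz_to_mel` defaults to the Slaney variant (linear below 1 kHz) unless `htk=True` is passed. The MFCC step follows the same power-to-dB, then orthonormal DCT-II shape as `librosa.feature.mfcc`, minus librosa's extra details such as dynamic-range clipping. All function names are hypothetical.

```python
import numpy as np


def hz_to_mel(f):
    """HTK mel scale: compresses high frequencies logarithmically."""
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)


def mel_to_hz(m):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)


def dct2_ortho(x: np.ndarray, n_out: int) -> np.ndarray:
    """Orthonormal DCT-II along axis 0, keeping the first n_out coefficients."""
    n = x.shape[0]
    k = np.arange(n_out)[:, None]
    i = np.arange(n)[None, :]
    basis = np.cos(np.pi * k * (2 * i + 1) / (2 * n))
    scale = np.full((n_out, 1), np.sqrt(2.0 / n))
    scale[0, 0] = np.sqrt(1.0 / n)  # the DC row gets a smaller weight
    return (scale * basis) @ x


def mfcc_from_mel_power(mel_power: np.ndarray, n_mfcc: int = 20, eps: float = 1e-10) -> np.ndarray:
    """(n_mels, n_frames) mel power spectrogram -> (n_mfcc, n_frames) MFCCs."""
    log_mel = 10.0 * np.log10(np.maximum(mel_power, eps))  # power -> dB
    return dct2_ortho(log_mel, n_mfcc)  # decorrelate bands; low coefficients = spectral envelope
```

Keeping only the first coefficients is the key tradeoff: they describe the overall spectral shape (timbre) while discarding fine pitch detail, which is also why MFCCs capture how something sounds acoustically rather than what it means.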

What's next for OBI

- Full CLAP model upgrade: Right now OBI uses MFCC-based features as a bridge to CLAP. The next step is moving to LAION's music-specific CLAP checkpoint (music_audioset_epoch_15_esc_90.14.pt), trained on 630k+ audio-text pairs, which achieves 90%+ zero-shot audio classification accuracy on ESC-50 (the 90.14 in the filename). This is the same embedding model backing Meta's AudioCraft evaluation pipeline.

- Stem separation (search inside a song): Integrate Meta's Demucs (Hybrid Transformer v4) so producers can drop in any full song and OBI extracts individual stems (drums, bass, vocals, other), then searches the index for sounds that match each stem. Imagine uploading a J Dilla track and instantly finding drum samples with the same swing characteristics.

- BPM + key detection as searchable metadata: librosa already supports BPM and onset detection, and Essentia handles key detection, which maps directly onto the Camelot wheel for harmonic matching. Adding these as filterable attributes makes OBI genuinely useful in a real production session: not just a discovery tool, but a workflow tool.

- Personal library indexing: Let producers upload and index their own sample packs. OBI searches across your private collection the same way it searches the public index, so your 20,000 local sounds become as queryable as your memory.

- DAW plugin (VST3/AU): The endgame is putting OBI directly inside Ableton, FL Studio, and Logic via a JUCE-based plugin. No browser, no context switching. Search from inside your session and drag a result straight into your DAW. That's the product producers actually want.
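As a rough sketch of how detected BPM and key could become search filters, the code below maps a key to its Camelot code and applies the usual DJ rule for harmonic compatibility (same code, the relative major/minor, or one step around the wheel), plus a tempo check that tolerates half- and double-time. All names and the 4% tempo tolerance are hypothetical; detection itself would come from librosa (for example `librosa.beat.beat_track`) or Essentia, which this sketch does not call.

```python
NOTE_TO_PC = {
    "C": 0, "C#": 1, "Db": 1, "D": 2, "D#": 3, "Eb": 3, "E": 4, "F": 5,
    "F#": 6, "Gb": 6, "G": 7, "G#": 8, "Ab": 8, "A": 9, "A#": 10, "Bb": 10, "B": 11,
}


def camelot(tonic: str, mode: str) -> str:
    """Return the Camelot code for a key, e.g. ("C", "major") -> "8B"."""
    if mode not in ("major", "minor"):
        raise ValueError(f"unknown mode: {mode}")
    pc = NOTE_TO_PC[tonic]
    if mode == "minor":
        pc = (pc + 3) % 12  # a minor key shares its number with its relative major
    # 7 * pc walks the circle of fifths; the +7 offset puts C major (pc 0) at 8.
    number = (7 * pc + 7) % 12 + 1
    return f"{number}{'B' if mode == 'major' else 'A'}"


def camelot_compatible(a: str, b: str) -> bool:
    """Same code, relative major/minor, or adjacent number with the same letter."""
    na, la = int(a[:-1]), a[-1]
    nb, lb = int(b[:-1]), b[-1]
    if na == nb:
        return True  # identical key, or its relative major/minor
    return la == lb and (na - nb) % 12 in (1, 11)  # one step around the wheel (12 wraps to 1)


def bpm_compatible(query_bpm: float, candidate_bpm: float, tol: float = 0.04) -> bool:
    """True if the candidate is within tol of the query at half, normal, or double time."""
    return any(abs(candidate_bpm * f - query_bpm) <= tol * query_bpm for f in (0.5, 1.0, 2.0))
```

A search filter could then keep only results whose stored Camelot code passes `camelot_compatible` against the query's key and whose tempo passes `bpm_compatible`.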

Built With

- clap

- fastapi

- framer-motion

- librosa

- next.js

- python

- qdrant

- railway

- react

- supabase

- tailwindcss

- typescript

- vercel
