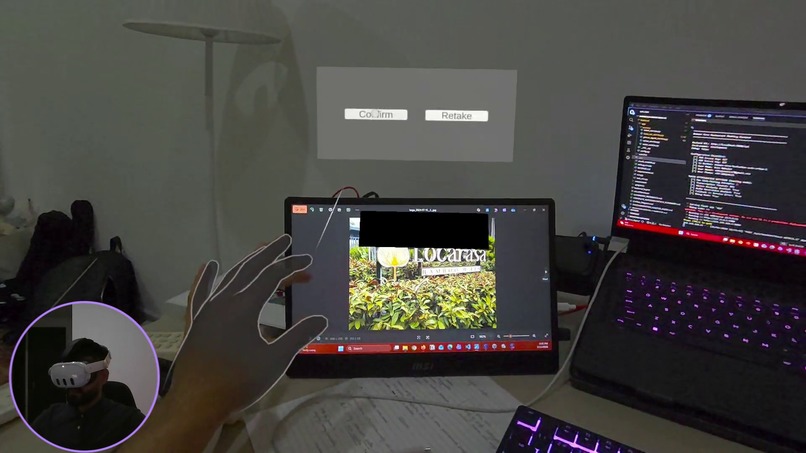

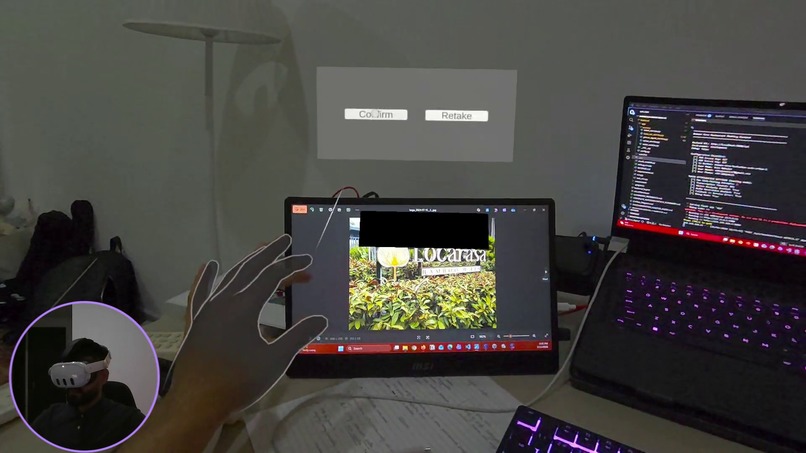

Gesture-based image capture using hand tracking for natural interaction.

-

User confirms the captured environment before the AI begins restaurant identification.

-

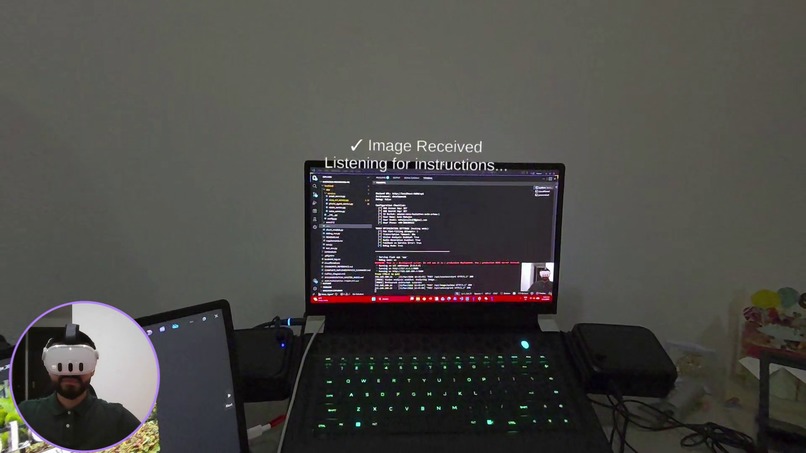

Context captured. The AI agent now listens for booking details.

-

AI agent listening to the user’s voice to understand booking instructions.

-

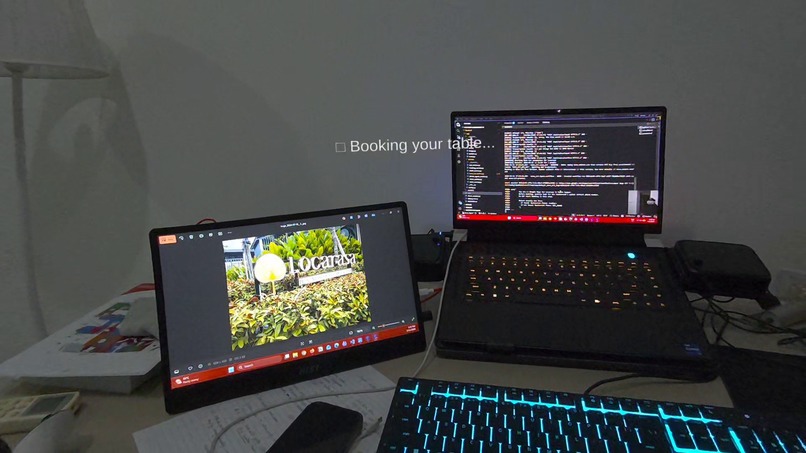

User provides final confirmation before the AI agent executes the reservation.

-

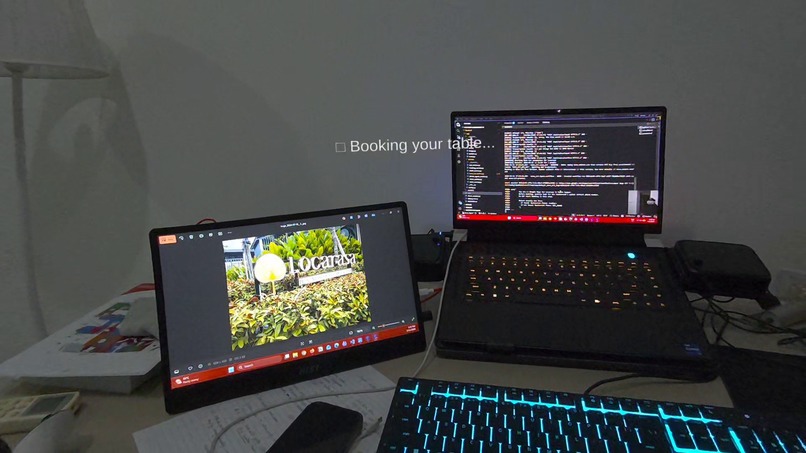

AI agent autonomously executing the booking process using Nova Act.

-

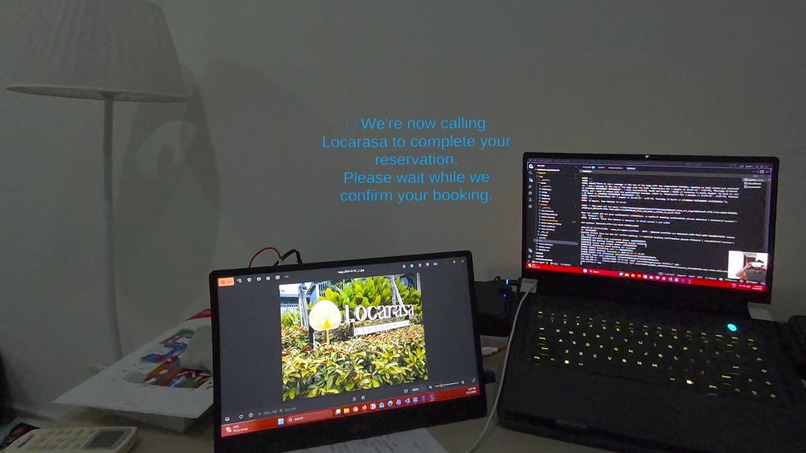

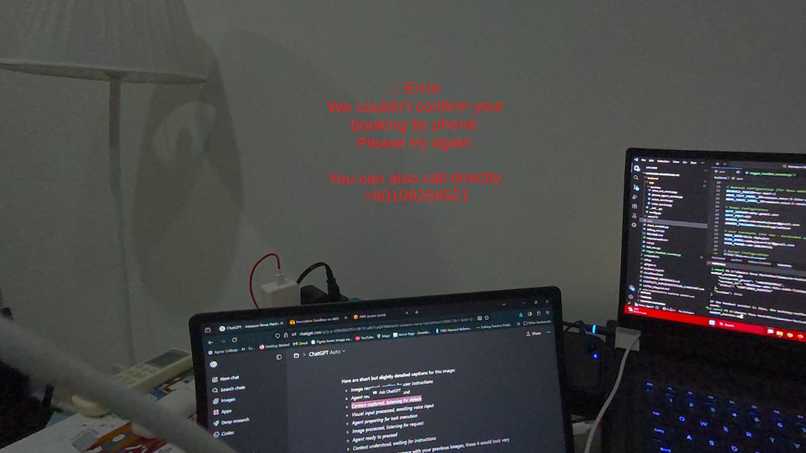

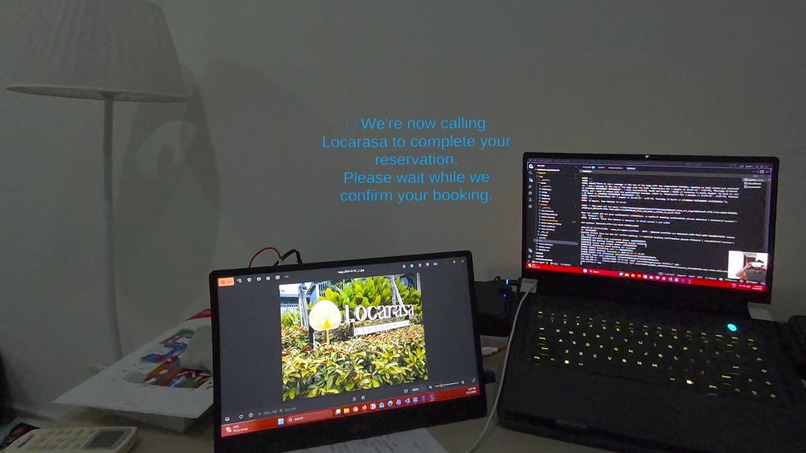

Web booking failed. The AI agent automatically switches to a phone call to complete the reservation.

-

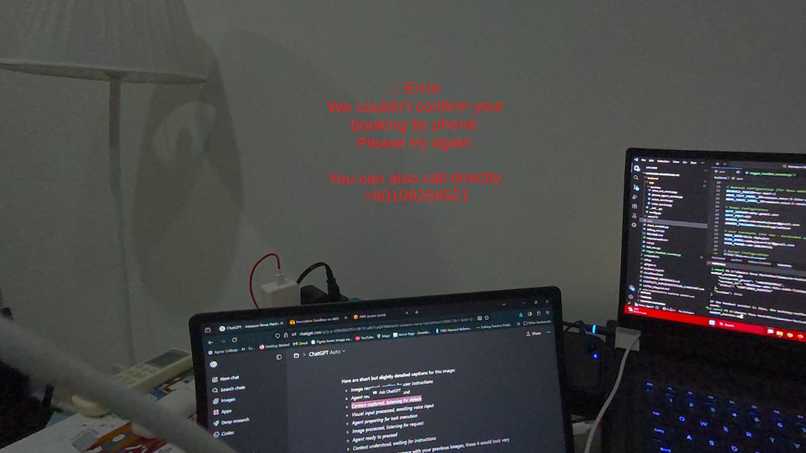

AI agent suggests a manual call option as an alternative booking method if the restaurant does not pick up the call.

-

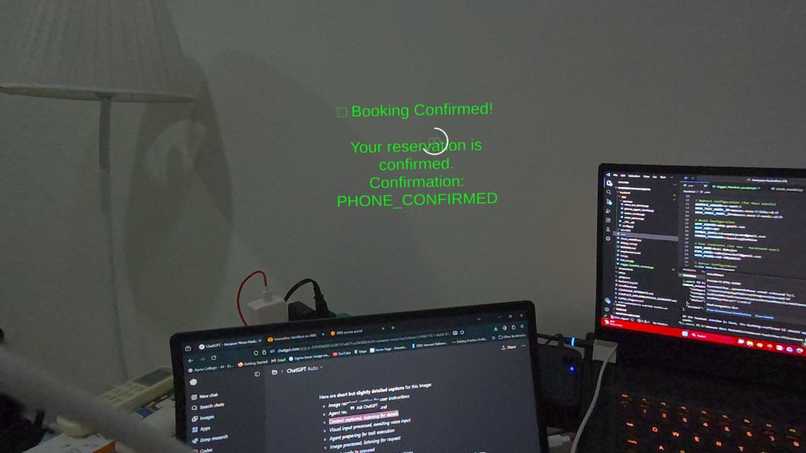

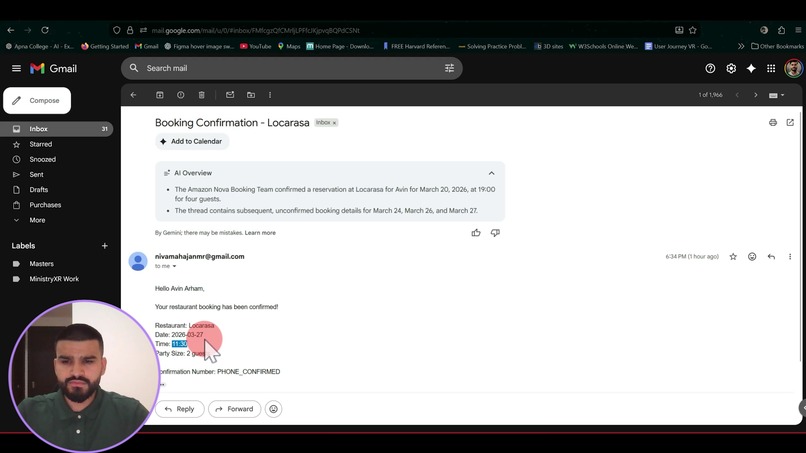

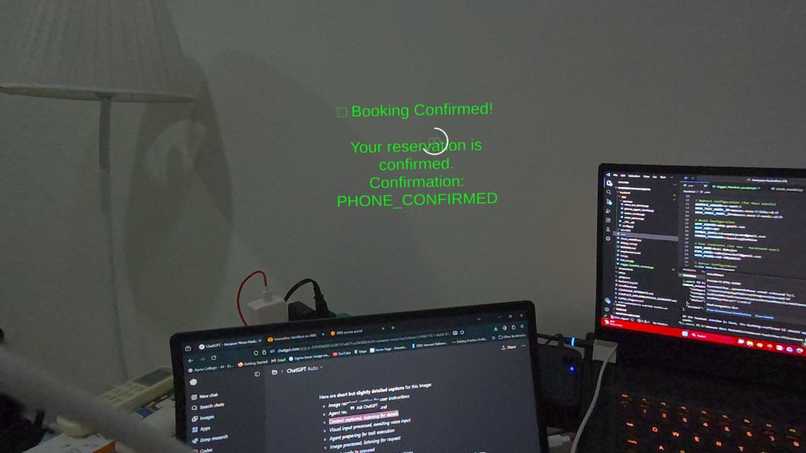

AI agent successfully completes the reservation from capture to confirmation.

-

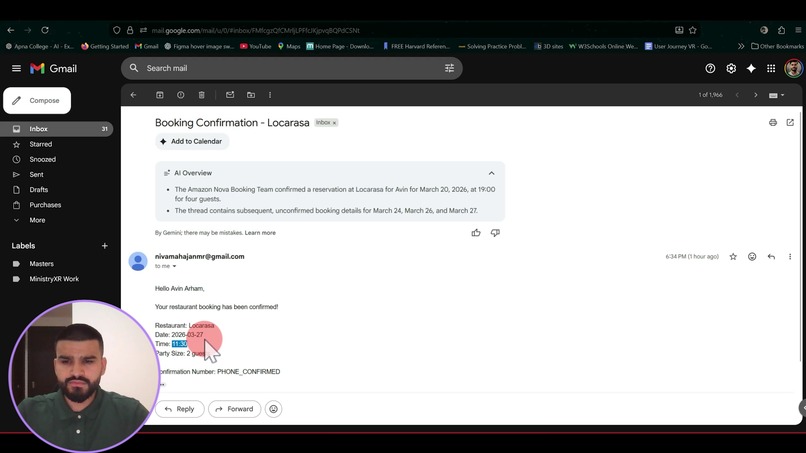

Reservation details sent to the user via email.

Inspiration

As a developer building applications for AI glasses and spatial devices over the past few years, I have worked across multiple platforms including HoloLens, Meta Quest, Quest 2, Quest 3, and Snap Spectacles. Through this journey, I have witnessed the rapid evolution of spatial hardware and AI capabilities.

These devices are called smart glasses, but through my experience developing for them, I realized something important:

They are technologically advanced, but they are not yet truly intelligent assistants.

Today’s AI glasses can:

- Capture photos

- Show maps and directions

- Translate text

- Display notifications

- Run immersive applications

But they still depend heavily on app-based interactions.

Users still have to:

- Open specific apps

- Navigate menus

- Perform manual steps

- Switch between workflows

Despite all the advances in AI, these devices still behave more like phones on your face than intelligent agents.

This led to a simple realization:

The future of AI glasses should not be app-driven.

It should be agent-driven.

What it does

Pragna is an agent-driven AI system for smart glasses that transforms how users interact with the real world by enabling AI to not just assist, but act.

Instead of navigating multiple apps to make a simple reservation, users can simply look at a restaurant and speak naturally. Pragna uses Amazon Nova to understand the environment, interpret user intent, and autonomously execute the booking process.

The system combines multimodal perception and agent execution into a single workflow:

- Using Nova Lite, the agent identifies restaurants from real-world images captured through the headset.

- Nova Micro then processes conversational voice input to extract booking details and confirm user intent.

- Once confirmed, Nova Act attempts to complete the reservation automatically through restaurant booking websites.

What makes Pragna unique is its reliability-first agent design:

- If web automation fails due to availability or site limitations, the system automatically switches to an AI phone agent that calls the restaurant to complete the booking.

- This ensures the task is completed rather than abandoned, demonstrating real agent behavior rather than simple AI interaction.

By combining:

- vision

- conversation

- reasoning

- real-world execution

Pragna demonstrates a future where AI glasses evolve from passive information displays into proactive intelligent companions capable of completing everyday tasks. This approach highlights how Amazon Nova can enable the next generation of agentic computing and frictionless commerce experiences.

How we built it

We built Pragna as an agent-driven architecture that combines a Unity VR client, a Python Flask orchestration backend, and Amazon Nova models to enable perception, reasoning, and real-world task execution.

On the client side, we developed the experience in Unity for Meta Quest, where users interact naturally through:

- spatial UI

- passthrough image capture

- voice input

The Unity application manages the booking workflow as a state machine, handling multimodal input and communicating with the backend through REST APIs.
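A minimal sketch of how such a booking state machine might look. The real client runs in Unity, so it would be written in C#. Python is used here only to illustrate the transitions. The state names and event names are hypothetical, inferred from the screens shown in the gallery.

```python
from enum import Enum, auto


class BookingState(Enum):
    # Hypothetical states, one per step of the workflow described above.
    CAPTURE = auto()
    CONFIRM_CAPTURE = auto()
    LISTEN = auto()
    CONFIRM_BOOKING = auto()
    EXECUTING = auto()
    DONE = auto()
    FAILED = auto()


# (current state, event) -> next state. Event names are illustrative.
TRANSITIONS = {
    (BookingState.CAPTURE, "image_captured"): BookingState.CONFIRM_CAPTURE,
    (BookingState.CONFIRM_CAPTURE, "user_confirmed"): BookingState.LISTEN,
    (BookingState.CONFIRM_CAPTURE, "user_rejected"): BookingState.CAPTURE,
    (BookingState.LISTEN, "details_complete"): BookingState.CONFIRM_BOOKING,
    (BookingState.CONFIRM_BOOKING, "user_confirmed"): BookingState.EXECUTING,
    (BookingState.CONFIRM_BOOKING, "user_rejected"): BookingState.LISTEN,
    (BookingState.EXECUTING, "booking_succeeded"): BookingState.DONE,
    (BookingState.EXECUTING, "booking_failed"): BookingState.FAILED,
}


def next_state(state: BookingState, event: str) -> BookingState:
    """Return the next state, or stay in place if the event is not valid here."""
    return TRANSITIONS.get((state, event), state)
```

Ignoring events that are not valid in the current state is one simple way to keep late responses from a slow backend call from disturbing the flow the user sees.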

On the backend, we designed an AI orchestration layer that coordinates multiple Nova and AWS services.

When a restaurant is captured:

- the image is stored in Amazon S3

- analyzed using Amazon Nova Lite to extract the business identity
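As a rough illustration of the image analysis step, the sketch below builds a request for the Amazon Bedrock Converse API with an image and a text prompt. The prompt wording, the helper name, and the choice to send raw image bytes (rather than reading the stored S3 object) are assumptions, and the model ID may need a regional inference profile prefix depending on the account setup.

```python
# Hypothetical prompt; the wording used in the project is not documented.
IDENTIFY_PROMPT = (
    "Identify the restaurant or business shown in this image. "
    "Reply with the business name only, or UNKNOWN if it cannot be read."
)


def build_vision_request(image_bytes: bytes, image_format: str = "jpeg") -> dict:
    """Build keyword arguments for a Bedrock Converse call with one image."""
    return {
        "modelId": "amazon.nova-lite-v1:0",  # assumption: base Nova Lite model ID
        "messages": [
            {
                "role": "user",
                "content": [
                    {"image": {"format": image_format, "source": {"bytes": image_bytes}}},
                    {"text": IDENTIFY_PROMPT},
                ],
            }
        ],
        # Low temperature, short output: we only want a business name back.
        "inferenceConfig": {"maxTokens": 200, "temperature": 0.0},
    }


# With boto3 installed and AWS credentials configured, the request could be sent as:
#   client = boto3.client("bedrock-runtime")
#   response = client.converse(**build_vision_request(image_bytes))
#   name = response["output"]["message"]["content"][0]["text"]
```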

Voice requests are processed through Amazon Transcribe, and Nova Micro is used to reason about user intent, extract booking parameters, and dynamically ask follow-up questions until the request is complete.
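The follow-up loop can be thought of as slot filling: keep merging what the model extracted from each utterance until no required field is missing. The sketch below assumes three required fields and fixed question text. In the actual system the questions are generated dynamically by the model, so the field names and wording here are purely illustrative.

```python
from typing import Optional

# Hypothetical booking parameters; the real set is not documented.
REQUIRED_FIELDS = ("party_size", "date", "time")

QUESTIONS = {
    "party_size": "How many people is the reservation for?",
    "date": "Which day would you like to book?",
    "time": "What time would you like the table?",
}


def merge_details(known: dict, extracted: dict) -> dict:
    """Merge newly extracted values into known details, skipping empty ones."""
    merged = dict(known)
    for field, value in extracted.items():
        if value is not None:
            merged[field] = value
    return merged


def next_question(details: dict) -> Optional[str]:
    """Return the follow-up question for the first missing field, or None if complete."""
    for field in REQUIRED_FIELDS:
        if details.get(field) is None:
            return QUESTIONS[field]
    return None
```

Each turn, the backend would pass the model's extracted values through `merge_details` and either send `next_question` back to the headset or, when it returns `None`, move on to the confirmation step.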

Once confirmed, Nova Act is used to execute the booking through real restaurant websites. This allows the system to move beyond conversational AI into real task automation.

A key engineering focus was reliability. Instead of stopping when automation fails, we implemented a multi-path execution strategy.

If Nova Act cannot complete the reservation:

- the system automatically switches to a Twilio-powered AI phone agent

- that contacts the restaurant directly

- and completes the booking through conversational interaction

This ensures the agent focuses on task completion rather than best-case demonstrations.
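One way to express this multi-path strategy is an ordered list of booking attempts that are tried until one succeeds. The sketch below is an assumption about the shape of that logic, not the project's actual code. `BookingResult`, `book_with_fallback`, and the strategy callables are hypothetical names, and the real web and phone attempts would wrap Nova Act and Twilio.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass
class BookingResult:
    success: bool
    channel: str
    detail: str = ""


Strategy = Tuple[str, Callable[[dict], BookingResult]]


def book_with_fallback(request: dict, strategies: Iterable[Strategy]) -> BookingResult:
    """Try each booking strategy in order and return the first success.

    If every strategy fails, the result of the last attempt is returned so the
    caller can report why the booking could not be completed.
    """
    last = BookingResult(False, "none", "no strategies configured")
    for name, attempt in strategies:
        try:
            result = attempt(request)
        except Exception as exc:  # treat a crashed attempt as a failed attempt
            result = BookingResult(False, name, f"error: {exc}")
        if result.success:
            return result
        last = result
    return last
```

A caller would pass something like `[("web", try_web_booking), ("phone", try_phone_booking)]`, where both function names are placeholders. Adding another channel later only means appending to the list.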

The system follows a See → Listen → Reason → Act → Complete architecture pattern:

- See → Nova Lite vision understanding

- Listen → Amazon Transcribe voice processing

- Reason → Nova Micro decision logic

- Act → Nova Act web automation

- Complete → Phone fallback + confirmation delivery

This architecture demonstrates how Amazon Nova enables the transition from AI assistants that provide information to AI agents capable of executing real-world workflows.

Challenges we ran into

One of our main challenges was building Pragna as a real AI agent instead of just a chatbot. We needed to connect:

- image understanding

- voice input

- reasoning

- booking execution

into one smooth workflow.

Another challenge was handling different processing speeds. Image analysis, voice transcription, and booking automation do not finish at the same time, so we built a state-based workflow to keep the interaction smooth and avoid confusion for the user.

We also faced challenges with real-world booking reliability. Sometimes Nova Act could not complete the booking due to website limitations. To solve this, we added a fallback system: the agent extracts the contact information of the business, and if web automation fails, the AI automatically calls the restaurant using Twilio to complete the reservation.

These challenges helped us focus on building an AI agent that completes tasks reliably, not just one that gives responses.

Accomplishments that we're proud of

One of the things we are most proud of is successfully orchestrating multiple Amazon Nova models and services to work together as a single AI agent. Seeing:

- vision understanding

- voice interaction

- reasoning

- execution

all work together in a smooth flow was a major achievement for us.

We are also proud that the system performs in realistic situations, not just ideal conditions. During testing, our AI phone agent handled real call challenges such as:

- weak signal quality

- unclear responses

- unavailable booking times

The agent was able to adapt to these situations and negotiate a new available time, showing real-world robustness instead of a scripted demo.

Another accomplishment we value is building this for AI glasses instead of a traditional web or mobile app. We wanted to explore what the next generation of computing could look like, and designing this experience for spatial devices made the project much more exciting and meaningful for us.

We are also proud of how we used the capabilities of the headset, worked within hardware limitations, and still built a complete end-to-end experience from capture to booking confirmation.

Most importantly, we are proud that Pragna shows how AI glasses can move from running apps to running intelligent agents that actually complete tasks.

What we learned

This project was our first time building a complete AI agent system where multiple AI models work together instead of working independently. We learned how to orchestrate:

- vision understanding

- voice processing

- reasoning

- task execution

into one coordinated workflow.

It was also our first time building an AI-driven experience specifically for smart glasses. While we have previously built immersive VR experiences, designing an intelligent agent for spatial devices was a completely new challenge. This helped us understand how AI can move beyond immersive visuals to become an active assistant inside spatial computing.

Another important lesson was that building AI agents is not just about model accuracy, but about workflow design and reliability. We learned that real-world systems must handle:

- failures

- unexpected responses

- changing conditions

which is why designing fallback execution paths became an important part of our architecture.

We also learned how powerful Amazon Nova can be when used not just for responses, but for real-world task execution through agent workflows.

Overall, this project helped us better understand how AI agents could become the next interaction layer for smart devices.

What's next for Pragna - An agent that sees, listens, reasons, and acts.

Our next goal is to continue developing Pragna into a persistent AI assistant that lives inside AI glasses instead of being limited to a single workflow. We want the agent to remain available throughout the user experience, ready to understand context and help complete tasks whenever needed.

We also plan to expand the agent beyond restaurant booking into a broader service platform. This includes use cases such as:

- grocery ordering

- appointment scheduling

- home services

- travel booking

- other real-world automation tasks

Our goal is to build a general agent architecture that can connect users to services naturally through voice and context.

Another important next step is deployment. We plan to further refine the experience and explore publishing the application on platforms like the Meta Quest Store and adapting the architecture for other AI glasses and spatial devices.

In the long term, we see Pragna evolving into a general agent layer for AI glasses that demonstrates how future smart devices can move from running apps to running intelligent agents that proactively help users complete everyday tasks.
