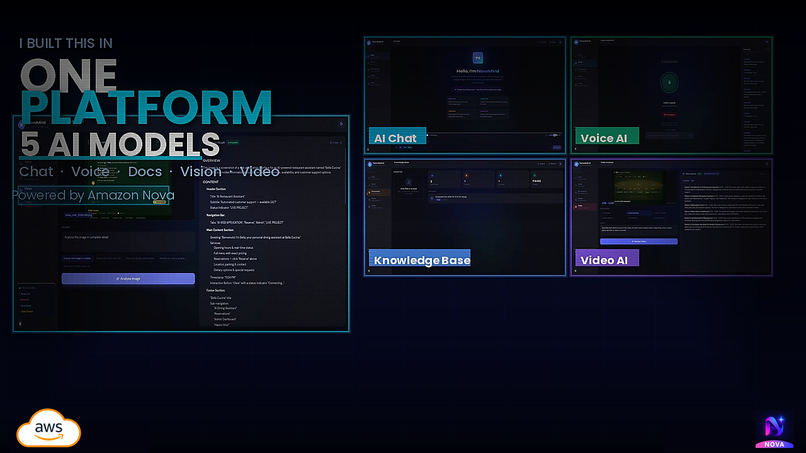

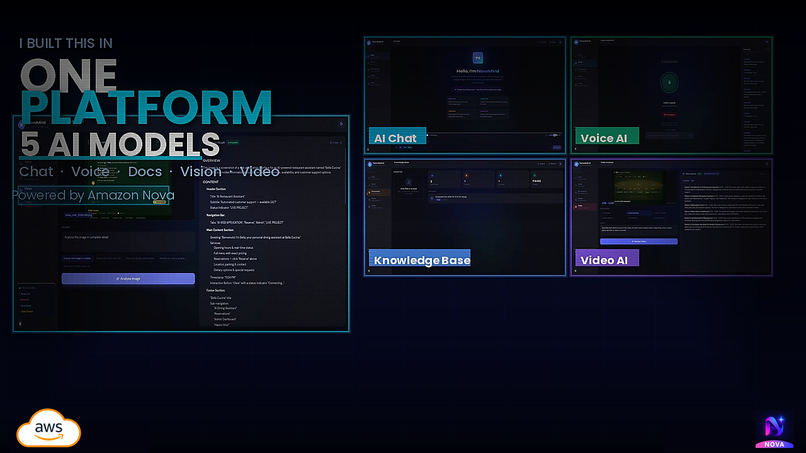

One platform. Five Amazon foundation models. Chat, Voice, Vision, Video, and Documents, powered by AWS Bedrock.

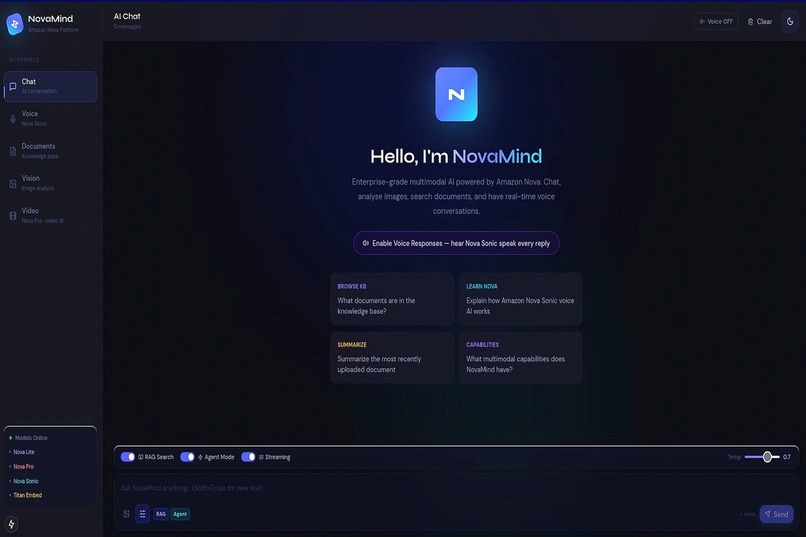

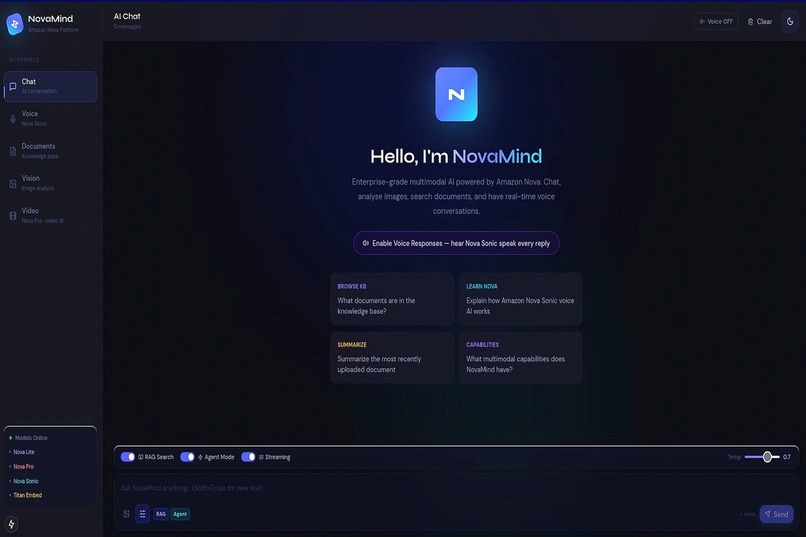

NovaMind AI Chat with RAG search and Agent Mode powered by Amazon Nova Lite and LangChain ReAct on AWS Bedrock.

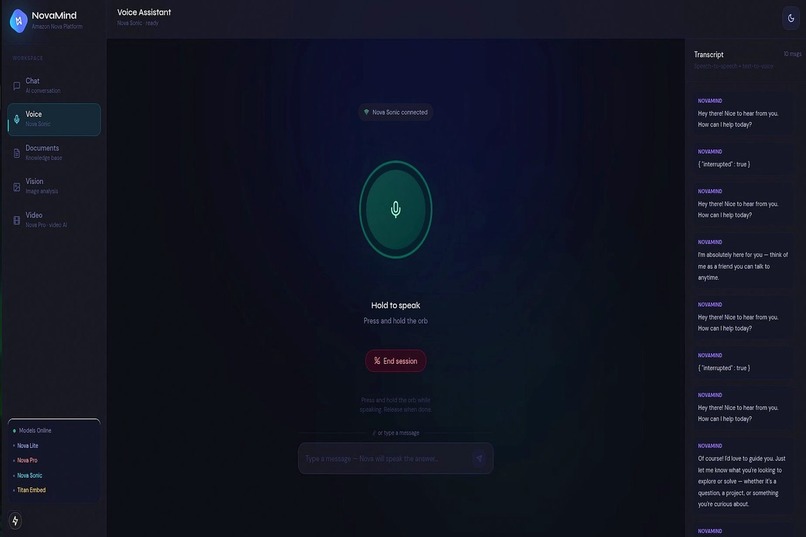

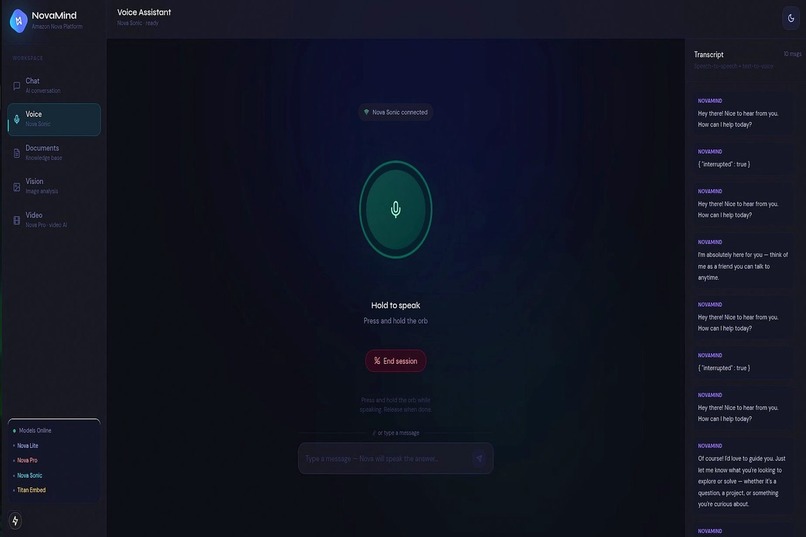

Real-time voice conversation with Amazon Nova Sonic via the Smithy bidirectional streaming SDK. Hold to speak, and Nova responds in real time.

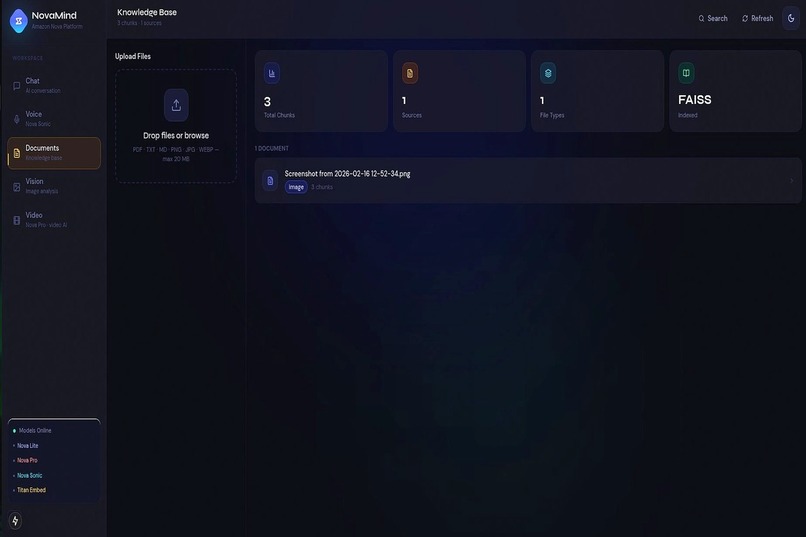

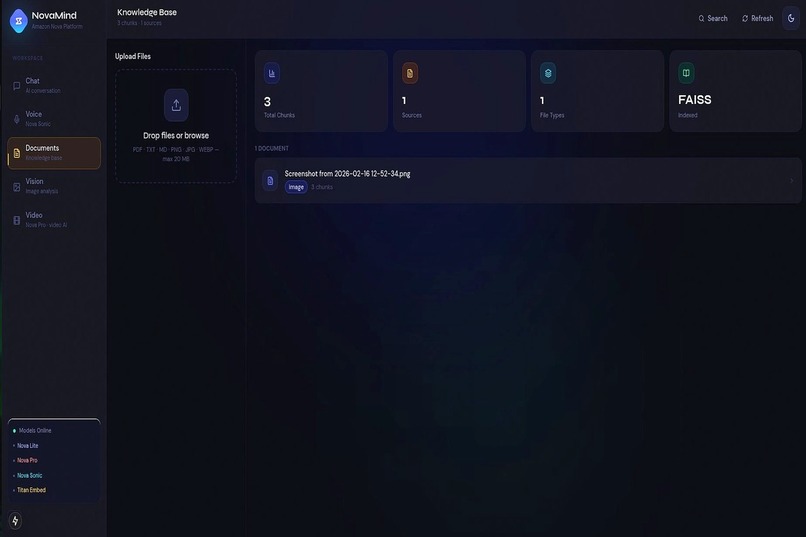

Knowledge base with FAISS vector search and Amazon Titan Embeddings. Upload PDF, TXT, MD, or images — instantly searchable.

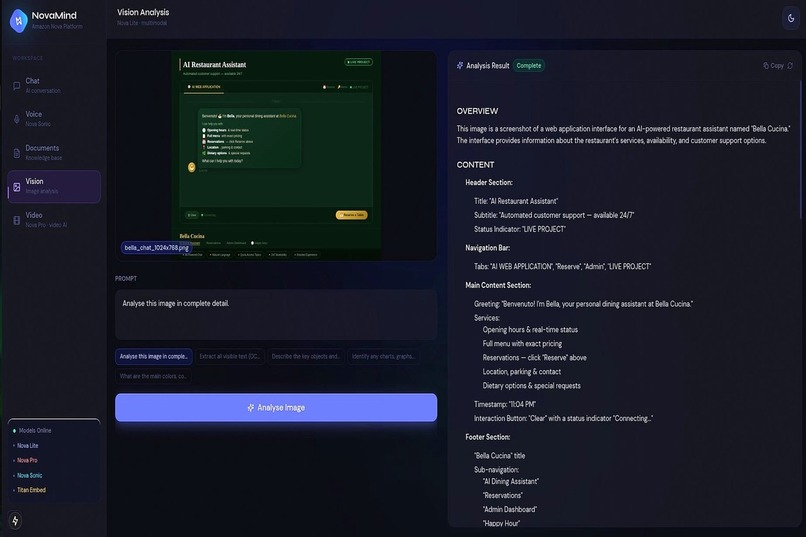

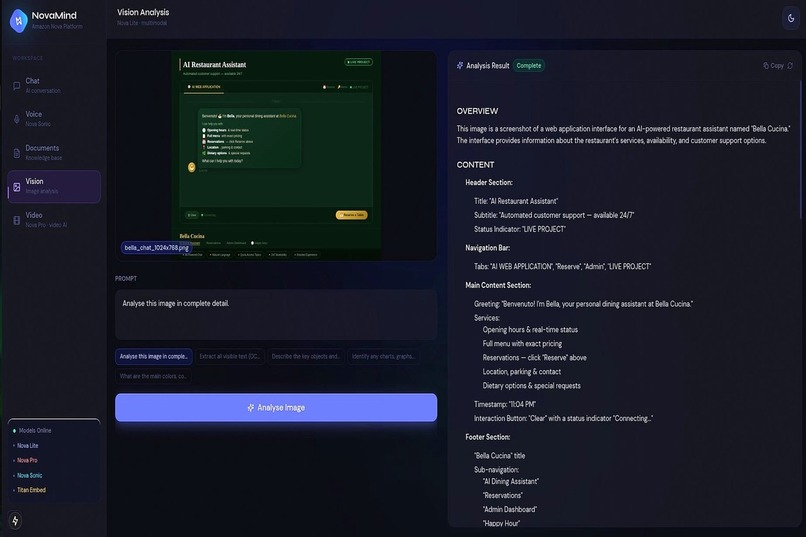

Image analysis powered by Amazon Nova Lite multimodal. Structured output with OCR, object detection, and actionable insights.

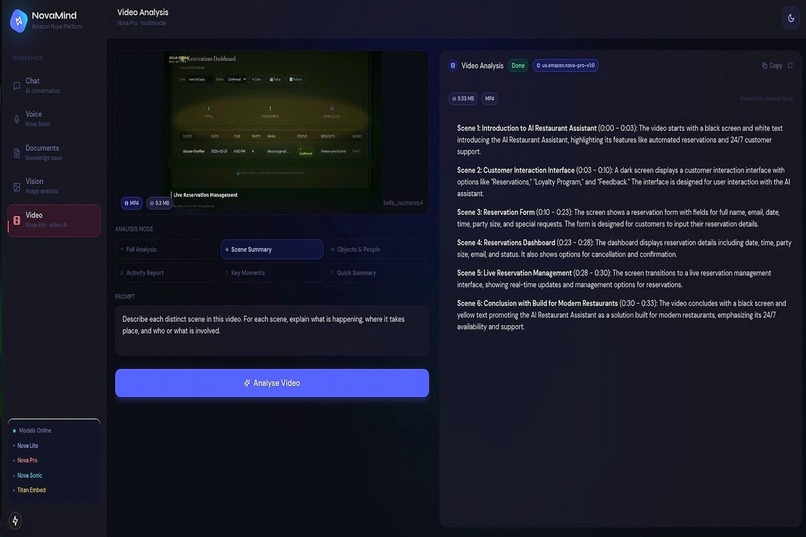

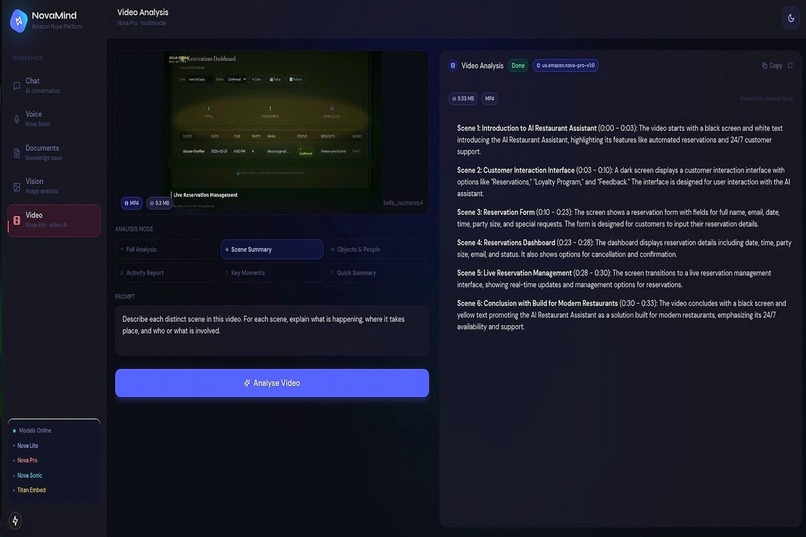

Video understanding powered by Amazon Nova Pro. Automatic frame sampling up to 960 frames. Six preset analysis modes.

Inspiration

Enterprise teams working with AI today face a fragmentation problem. Image analysis tools do not connect to document search. Voice assistants cannot reason over uploaded files. Video understanding requires a separate pipeline. Every capability lives in a different product, forcing constant context-switching and eliminating the compound value that comes from combining modalities.

The question that drove NovaMind was simple: what if a single session could handle everything? Upload a document, ask about it by voice, attach an image to a follow-up message, and analyse a related video without switching tools, losing context, or managing five different APIs.

Amazon Nova made that question answerable.

What It Does

NovaMind is an enterprise-grade multimodal AI platform that unifies five Amazon foundation models (Nova Lite, Nova Pro, Nova Sonic, and two Titan embedding models) into one production-ready application.

Chat with RAG and Agent Mode — Documents are chunked, embedded via Amazon Titan, and indexed in FAISS. Every message optionally retrieves the most relevant chunks before invoking Amazon Nova Lite via the Bedrock Converse API. Agent Mode activates a LangChain ReAct loop with four tools: semantic knowledge base search, topic summarisation, KB statistics, and a safe mathematical expression evaluator.
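Of the four agent tools, the safe mathematical expression evaluator is the easiest to get wrong, because handing model output to eval() would execute arbitrary code. A minimal sketch, assuming the tool only needs arithmetic: parse the expression with Python's ast module and evaluate only whitelisted node types. The name safe_eval and the exponent cap are hypothetical, not taken from the NovaMind source.

```python
import ast
import operator

# Whitelisted operators; names, calls, attributes, etc. are rejected.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.FloorDiv: operator.floordiv,
    ast.Mod: operator.mod,
    ast.Pow: operator.pow,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def safe_eval(expression: str) -> float:
    """Evaluate a pure arithmetic expression without eval() (hypothetical tool body)."""

    def _eval(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
            left, right = _eval(node.left), _eval(node.right)
            if isinstance(node.op, ast.Pow) and abs(right) > 100:
                raise ValueError("exponent too large")  # guard against huge powers
            return _BIN_OPS[type(node.op)](left, right)
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](_eval(node.operand))
        raise ValueError(f"disallowed syntax: {type(node).__name__}")

    return _eval(ast.parse(expression, mode="eval"))
```

For example, safe_eval("2 * (3 + 4) ** 2") returns 98, while safe_eval("__import__('os')") raises ValueError because function calls are not on the whitelist.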

Real-Time Voice — Amazon Nova Sonic is connected via the Smithy bidirectional streaming SDK over a WebSocket pipeline. Audio is captured at 16 kHz through an AudioWorklet, encoded to base64 PCM, and streamed in real time. Nova responds with 24 kHz LPCM audio scheduled for gapless browser playback.
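The capture side reduces to one conversion: the AudioWorklet yields 32-bit float samples in [-1, 1], and each chunk has to become integer PCM before base64 encoding. A minimal sketch in Python, assuming 16-bit signed little-endian mono samples; the function name float_to_pcm16_b64 is hypothetical, and wherever this step actually runs in NovaMind (worklet or backend), the arithmetic is the same.

```python
import base64
import struct
from typing import List


def float_to_pcm16_b64(samples: List[float]) -> str:
    """Convert float samples in [-1.0, 1.0] to base64-encoded 16-bit little-endian PCM."""
    ints = []
    for s in samples:
        s = max(-1.0, min(1.0, s))          # clamp so scaling cannot overflow int16
        ints.append(int(round(s * 32767)))  # scale into the signed 16-bit range
    raw = struct.pack(f"<{len(ints)}h", *ints)  # '<h' = little-endian signed 16-bit
    return base64.b64encode(raw).decode("ascii")
```

At 16 kHz, a 100 ms chunk is 1,600 samples, which becomes 3,200 bytes of PCM before base64 expansion.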

Image Analysis — Images are sent as inline bytes to Amazon Nova Lite via the Bedrock Converse API. A structured system prompt returns section-formatted analysis covering visible text, objects, charts, layout, and actionable insights.
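A request of that shape can be sketched with the message structure that boto3's bedrock-runtime converse() call accepts: the image travels as raw bytes in an image content block next to the text question, and boto3 handles the wire encoding. The system prompt wording, inference settings, and function name below are hypothetical placeholders, since the actual prompt is not published.

```python
from typing import Any, Dict

NOVA_LITE = "us.amazon.nova-lite-v1:0"

# Hypothetical wording; the real structured prompt is not published.
SYSTEM_PROMPT = (
    "Analyse the image. Use sections: Visible Text, Objects, Charts, Layout, Insights."
)


def build_image_request(image_bytes: bytes, image_format: str, question: str) -> Dict[str, Any]:
    """Build keyword arguments for bedrock-runtime converse() with an inline image."""
    if image_format not in {"png", "jpeg", "gif", "webp"}:
        raise ValueError(f"unsupported image format: {image_format}")
    return {
        "modelId": NOVA_LITE,
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [
            {
                "role": "user",
                "content": [
                    {"image": {"format": image_format, "source": {"bytes": image_bytes}}},
                    {"text": question},
                ],
            }
        ],
        "inferenceConfig": {"maxTokens": 1024, "temperature": 0.2},
    }

# With boto3 and AWS credentials:
#   client = boto3.client("bedrock-runtime")
#   response = client.converse(**build_image_request(data, "png", "What does this chart show?"))
#   text = response["output"]["message"]["content"][0]["text"]
```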

Video Understanding — Videos up to 25 MB are sent to Amazon Nova Pro, which automatically samples frames at 1 fps, up to a maximum of 960 frames. Six preset analysis modes are available, and Nova Lite serves as an automatic fallback.
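The Pro-then-Lite fallback can be sketched as a loop over model IDs. To stay testable without AWS credentials, the sketch takes the converse callable as a parameter (in the app this would be a bedrock-runtime client's converse method). The function name, reading "25 MB" as 25 × 1024 × 1024 bytes, and the broad exception handling are assumptions.

```python
from typing import Any, Callable, Dict, Optional, Tuple

NOVA_PRO = "us.amazon.nova-pro-v1:0"
NOVA_LITE = "us.amazon.nova-lite-v1:0"
MAX_INLINE_VIDEO_BYTES = 25 * 1024 * 1024  # assumed interpretation of the 25 MB limit


def analyse_video(converse: Callable[..., Dict[str, Any]], video: bytes,
                  prompt: str, fmt: str = "mp4") -> Tuple[str, str]:
    """Try Nova Pro first, then Nova Lite; return (model_id, answer_text)."""
    if len(video) > MAX_INLINE_VIDEO_BYTES:
        raise ValueError("video exceeds the 25 MB inline limit")
    messages = [{
        "role": "user",
        "content": [
            {"video": {"format": fmt, "source": {"bytes": video}}},
            {"text": prompt},
        ],
    }]
    last_error: Optional[Exception] = None
    for model_id in (NOVA_PRO, NOVA_LITE):
        try:
            response = converse(modelId=model_id, messages=messages)
            return model_id, response["output"]["message"]["content"][0]["text"]
        except Exception as exc:  # production code would catch botocore's ClientError
            last_error = exc
    raise RuntimeError("all video models failed") from last_error
```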

Knowledge Base — Documents flow through a pipeline of extraction, chunking, embedding, and FAISS indexing — immediately searchable with semantic similarity scores.
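The chunking stage can be sketched as a sliding character window with overlap, so a sentence cut at one boundary still appears whole in the neighbouring chunk. Extraction (PDF parsing and so on) is left out, and the chunk size of 800 characters with a 100-character overlap is a hypothetical default, not NovaMind's setting.

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 800, overlap: int = 100) -> List[str]:
    """Split text into overlapping character windows (hypothetical parameters)."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size].strip()
        if chunk:
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

For instance, chunk_text("abcdefghij", chunk_size=4, overlap=1) yields ["abcd", "defg", "ghij"]; each chunk would then be embedded and added to the index.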

How I Built It

The backend is FastAPI with a clean dependency injection layer — every service is a singleton wired once at startup. The frontend is Next.js 15 App Router with TypeScript strict mode, Tailwind CSS custom design tokens, and the motion animation library.
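One common way to get "singleton wired once at startup" in FastAPI is to make each provider function cached, so every injection returns the same instance. A minimal sketch with functools.lru_cache; the service classes are hypothetical stand-ins, not NovaMind's actual services.

```python
from functools import lru_cache
from typing import List


class VectorStore:
    """Hypothetical stand-in for the FAISS-backed knowledge base service."""

    def __init__(self) -> None:
        self.documents: List[str] = []


class ChatService:
    """Hypothetical stand-in for the chat service that depends on the store."""

    def __init__(self, store: VectorStore) -> None:
        self.store = store


@lru_cache(maxsize=None)
def get_vector_store() -> VectorStore:
    return VectorStore()  # built on the first call, cached afterwards


@lru_cache(maxsize=None)
def get_chat_service() -> ChatService:
    return ChatService(get_vector_store())  # shares the single store instance


def warm_up() -> None:
    """Run from the startup hook so every singleton exists before the first request."""
    get_chat_service()
```

A route would then declare a parameter such as service: ChatService = Depends(get_chat_service), and FastAPI would receive the same cached instance on every request.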

Model integration points on AWS Bedrock:

| Model | Integration | Used for |
|---|---|---|
| us.amazon.nova-lite-v1:0 | Bedrock Converse API | Chat, RAG, streaming, image analysis |
| us.amazon.nova-pro-v1:0 | Bedrock Converse API | Video frame understanding |
| amazon.nova-2-sonic-v1:0 | Smithy bidirectional SDK | Real-time voice over WebSocket |
| amazon.titan-embed-image-v1 | Bedrock InvokeModel | Joint image and text embeddings |
| amazon.titan-embed-text-v2:0 | Bedrock InvokeModel | Document chunk embeddings |
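The InvokeModel path for document chunks can be sketched as below. The request fields inputText, dimensions, and normalize and the embedding response key are assumed from the Titan Text Embeddings V2 request format; the client is any object with boto3's invoke_model signature, which keeps the sketch testable with a fake. The function name is hypothetical.

```python
import json
from typing import Any, List

TITAN_TEXT_V2 = "amazon.titan-embed-text-v2:0"


def embed_text(client: Any, text: str, dimensions: int = 1024) -> List[float]:
    """Embed one chunk with Titan Text Embeddings V2 via InvokeModel (assumed body format)."""
    body = json.dumps({"inputText": text, "dimensions": dimensions, "normalize": True})
    response = client.invoke_model(
        modelId=TITAN_TEXT_V2,
        body=body,
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())  # body is a streaming, file-like object
    return payload["embedding"]
```

Requesting normalised output is harmless even if the vector store normalises again, since a unit vector is unchanged by L2 normalisation.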

The voice pipeline required the most care. Nova Sonic is not served through the standard Converse API; it uses a bidirectional streaming protocol (Bedrock's InvokeModelWithBidirectionalStream operation) accessed through the Smithy SDK. The session lifecycle follows a strict event sequence:

sessionStart → promptStart → SYSTEM content block → USER content block (TEXT or AUDIO) → contentEnd → [Nova responds]

The most technically demanding discovery of the build was that turnEnd does not exist in the Nova Sonic API: sending it causes an HTTP 400 that surfaces as the error "No events to transform were found".
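Because the failure mode is a generic HTTP 400, it can pay to validate the outgoing event order locally before anything reaches Bedrock. The checker below is a hypothetical sketch of the sequence described above: it rejects turnEnd, requires the SYSTEM block before the USER block, and reports whether the sequence should trigger a reply. Event names beyond those in the text (contentStart, textInput, audioInput, promptEnd, sessionEnd) and the interactive flag on contentStart are assumptions to verify against the AWS Nova Sonic event reference.

```python
from dataclasses import dataclass
from typing import List, Optional

# Names other than those in the text are assumptions; verify against the AWS docs.
KNOWN_EVENTS = {"sessionStart", "promptStart", "contentStart", "textInput",
                "audioInput", "contentEnd", "promptEnd", "sessionEnd"}


@dataclass
class Event:
    name: str
    role: Optional[str] = None  # "SYSTEM" or "USER", set on contentStart
    interactive: bool = False   # assumed flag on the USER contentStart


def check_sequence(events: List[Event]) -> bool:
    """Raise ValueError on a malformed sequence; return True if it should trigger a reply."""
    if [e.name for e in events[:2]] != ["sessionStart", "promptStart"]:
        raise ValueError("sequence must begin with sessionStart, promptStart")
    open_block: Optional[Event] = None
    saw_system = False
    triggers_reply = False
    for e in events[2:]:
        if e.name == "turnEnd":
            raise ValueError("turnEnd is not a Nova Sonic event; Bedrock answers with HTTP 400")
        if e.name not in KNOWN_EVENTS:
            raise ValueError(f"unknown event {e.name!r}")
        if e.name == "contentStart":
            if open_block is not None:
                raise ValueError("previous content block was never closed")
            if e.role == "USER" and not saw_system:
                raise ValueError("the SYSTEM block must precede the USER block")
            open_block = e
        elif e.name in ("textInput", "audioInput"):
            if open_block is None:
                raise ValueError(f"{e.name} sent outside a content block")
        elif e.name == "contentEnd":
            if open_block is None:
                raise ValueError("contentEnd without a matching contentStart")
            if open_block.role == "SYSTEM":
                saw_system = True
            elif open_block.role == "USER" and open_block.interactive:
                triggers_reply = True  # closing an interactive USER block asks Nova to respond
            open_block = None
    return triggers_reply
```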

The FAISS vector store uses IndexFlatIP with L2-normalised vectors, making inner product equivalent to cosine similarity. Batch ingestion is fully atomic under an asyncio lock, with disk writes offloaded to a thread pool via run_in_executor to keep the event loop responsive.
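Both ideas in that paragraph, cosine similarity via inner product on unit vectors and an all-or-nothing batch write under an asyncio.Lock with disk I/O pushed to run_in_executor, can be shown without FAISS itself. The sketch below is a pure-Python stand-in for IndexFlatIP (with the real library, the corresponding calls are faiss.normalize_L2, faiss.IndexFlatIP, and faiss.write_index); the class name FlatIPStore and the JSON snapshot format are hypothetical.

```python
import asyncio
import json
import math
from pathlib import Path
from typing import List, Tuple


def l2_normalise(vec: List[float]) -> List[float]:
    """Scale a vector to unit length so inner product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


class FlatIPStore:
    """Pure-Python stand-in for a FAISS IndexFlatIP over normalised vectors (hypothetical)."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.texts: List[str] = []
        self.vectors: List[List[float]] = []
        self._lock = asyncio.Lock()

    async def add_batch(self, texts: List[str], vectors: List[List[float]]) -> None:
        if len(texts) != len(vectors):
            raise ValueError("texts and vectors must have the same length")
        normalised = [l2_normalise(v) for v in vectors]
        async with self._lock:  # one batch at a time
            new_texts = self.texts + texts
            new_vectors = self.vectors + normalised
            snapshot = json.dumps({"texts": new_texts, "vectors": new_vectors})
            loop = asyncio.get_running_loop()
            # Blocking disk write runs in the default thread pool, keeping the loop responsive.
            await loop.run_in_executor(None, self.path.write_text, snapshot)
            # Commit in memory only after the write succeeded: all of the batch or none of it.
            self.texts, self.vectors = new_texts, new_vectors

    def search(self, query: List[float], k: int = 3) -> List[Tuple[float, str]]:
        """Return the k best (cosine score, text) pairs, highest first."""
        q = l2_normalise(query)
        scored = [(sum(a * b for a, b in zip(q, v)), t) for v, t in zip(self.vectors, self.texts)]
        return sorted(scored, reverse=True)[:k]
```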

The frontend uses a small inline script in the root layout that applies the correct theme class before the first browser paint, preventing a flash of the wrong theme on load (a variant of FOUC, the flash of unstyled content).

Challenges I Faced

Nova Sonic event protocol — The bidirectional streaming API has strict requirements that are not obvious from the documentation. Sending a turnEnd event causes an HTTP 400 that surfaces as "No events to transform were found", a misleading error. The correct trigger for a Nova response is a contentEnd on a USER content block marked interactive: true. Diagnosing this required building an isolated test script and validating each event in sequence.

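WebSocket CORS on upgrade requests — Starlette's CORSMiddleware checks the Origin header on WebSocket upgrade requests, and a mismatched origin returns 403 before the WebSocket handler is ever reached. The fix, allow_origins=["*"] with allow_credentials=False, resolves this for all localhost development origins without requiring exact port matching. Credentials have to stay disabled because browsers reject a wildcard Access-Control-Allow-Origin on credentialed requests, and since the wildcard admits every origin, not just localhost, it is a development setting rather than a production one.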
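If a wildcard is too permissive, one alternative is an origin check that accepts any localhost port and nothing else. Starlette's CORSMiddleware can take such a pattern through its allow_origin_regex parameter, and a WebSocket endpoint could apply the same check to websocket.headers.get("origin") before calling accept(). The pattern and helper below are a hypothetical sketch, not NovaMind's configuration.

```python
import re
from typing import Optional

# Hypothetical development allow-list: localhost or 127.0.0.1 on any port, http or https.
LOCAL_ORIGIN = re.compile(r"https?://(localhost|127\.0\.0\.1)(:\d{1,5})?")


def is_allowed_origin(origin: Optional[str]) -> bool:
    """Return True only for local development origins, whatever the port."""
    return origin is not None and LOCAL_ORIGIN.fullmatch(origin) is not None
```

Using fullmatch matters here: a prefix match would also accept look-alike hosts such as https://localhost.evil.com.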

24 kHz audio output — Nova Sonic outputs audio at 24 kHz. Playing 24 kHz PCM in a 16 kHz AudioContext produces audio at 66.7% speed with a pitch shift. The output AudioContext must be initialised at exactly sampleRate: 24000 to match Nova's output rate. This is distinct from the input rate (16 kHz), which means two AudioContext instances with different sample rates run simultaneously during a voice session.
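The arithmetic behind that bug, and the back-to-back scheduling that makes playback gapless, can be sketched in Python. In the browser, now corresponds to AudioContext.currentTime and the returned start time would be passed to AudioBufferSourceNode.start(when). The helper names are hypothetical, and 16-bit little-endian PCM output is an assumption.

```python
import base64
import struct
from typing import List

INPUT_RATE = 16_000   # microphone capture rate from the text
OUTPUT_RATE = 24_000  # Nova Sonic output rate from the text


def playback_speed(source_rate: int, context_rate: int) -> float:
    """Speed factor when samples recorded at source_rate are played at context_rate."""
    return context_rate / source_rate


def decode_pcm16_b64(chunk_b64: str) -> List[float]:
    """Decode base64 16-bit little-endian PCM into floats in [-1.0, 1.0)."""
    raw = base64.b64decode(chunk_b64)
    count = len(raw) // 2
    return [s / 32768.0 for s in struct.unpack(f"<{count}h", raw[: count * 2])]


class GaplessScheduler:
    """Queue chunks back to back on a shared clock, as with AudioBufferSourceNode.start(when)."""

    def __init__(self, rate: int = OUTPUT_RATE) -> None:
        self.rate = rate
        self.next_start = 0.0  # time at which the previously scheduled chunk ends

    def schedule(self, n_samples: int, now: float) -> float:
        start = max(now, self.next_start)  # never start in the past, never overlap
        self.next_start = start + n_samples / self.rate
        return start
```

Here playback_speed(OUTPUT_RATE, INPUT_RATE) gives 2/3, the 66.7% slowdown described above; creating the output context at 24 kHz makes the ratio 1. If the queue runs dry, the next chunk simply starts at the current time.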

React 19 and the motion library — framer-motion v11 ships its own type definitions that conflict with the newer motion package under React 19. The resolution required removing framer-motion entirely and migrating all imports to motion/react, then resolving a secondary conflict between react-dropzone's onAnimationStart prop type and motion.div's internal type for the same prop.

Dual memory systems — The original architecture had the LangChain agent saving conversation turns to its own ConversationBufferWindowMemory while the chat API simultaneously saved to a SessionManager. Two unsynchronised stores for the same session diverged after a few turns. The fix was to designate the agent as the single source of truth when use_agent=True, and skip SessionManager updates for agent turns entirely.
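The single-source-of-truth rule can be sketched as a routing function that writes each turn to exactly one store. In the real app the agent's ConversationBufferWindowMemory is updated by LangChain itself, so the fix amounts to skipping the SessionManager write; the sketch makes that routing explicit with hypothetical stand-in classes.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple

Turn = Tuple[str, str]  # (user message, assistant reply)


class AgentWindowMemory:
    """Hypothetical stand-in for the agent's ConversationBufferWindowMemory."""

    def __init__(self, k: int = 5) -> None:
        self.turns: Deque[Turn] = deque(maxlen=k)  # keeps only the last k turns


class SessionManager:
    """Hypothetical stand-in for the chat API's per-session history store."""

    def __init__(self) -> None:
        self.history: Dict[str, List[Turn]] = {}


def record_turn(session_id: str, turn: Turn, use_agent: bool,
                agent_memory: AgentWindowMemory, sessions: SessionManager) -> None:
    """Write each turn to exactly one store so the two can never diverge."""
    if use_agent:
        agent_memory.turns.append(turn)  # agent memory is the single source of truth
    else:
        sessions.history.setdefault(session_id, []).append(turn)
```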

What I Learned

Building with Amazon Nova across five modalities in a single application revealed how well the models complement each other. Nova Lite handles fast, cost-efficient reasoning. Nova Pro brings deep visual understanding to video without requiring a frame extraction pipeline. Nova Sonic enables genuinely natural conversational AI: it is the difference between a chat interface with text-to-speech bolted on and a real voice-first experience.

Working directly with the Smithy SDK for Nova Sonic rather than through a higher-level abstraction gave me precise control over session lifecycle, audio scheduling, and error recovery that would not have been possible otherwise.

The project also reinforced that production-quality AI applications require as much engineering around the models as within them. Connection pooling, retry logic, atomic vector store writes, gapless audio scheduling, and theme persistence across server and client rendering all matter as much as the model calls themselves.

Built With

amazon-nova-lite · amazon-nova-pro · amazon-nova-sonic · amazon-titan-embeddings · aws-bedrock · fastapi · langchain · faiss · next.js · typescript · tailwindcss · motion · websockets · docker · python · react
