
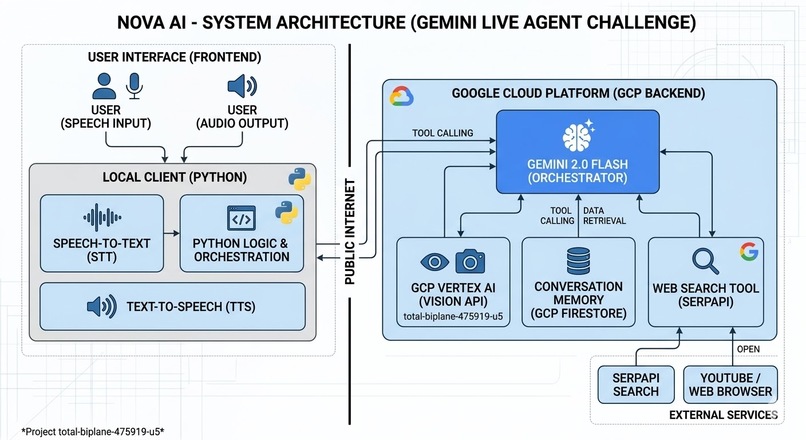

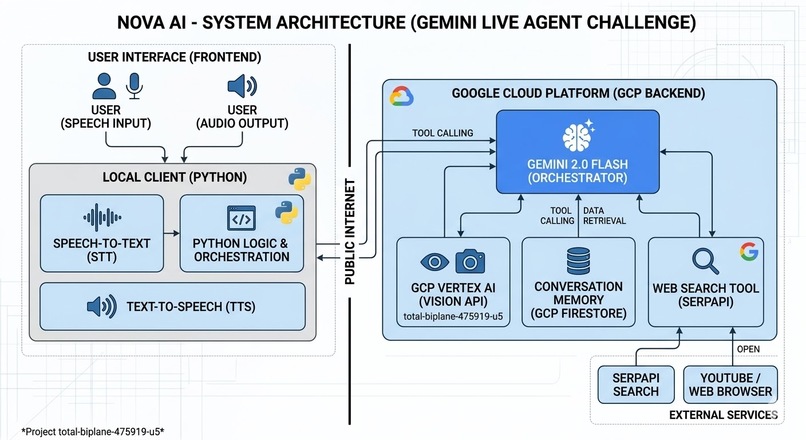

System Architecture: Shows how the Python client connects to Gemini 2.0 Flash on Google Cloud Platform and uses SerpAPI for real-time web search.


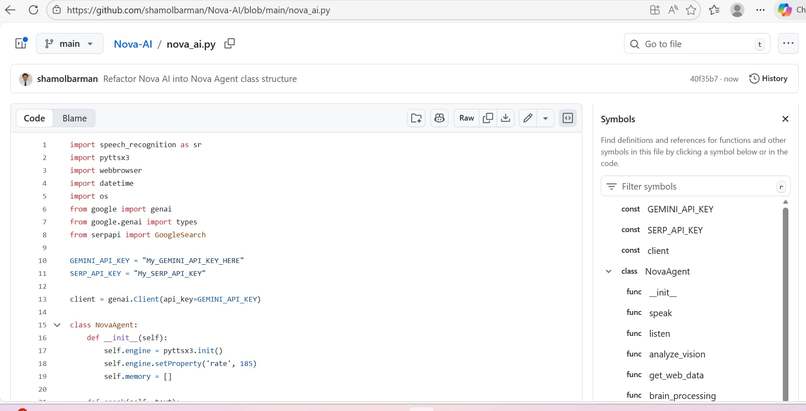

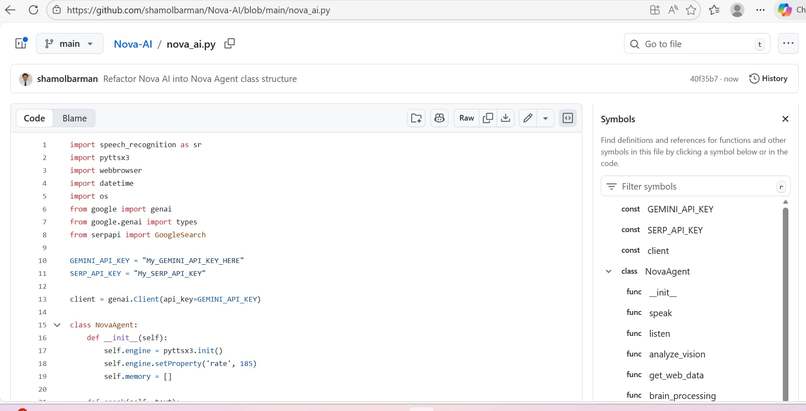

Core Logic: Implementation built on the Google GenAI SDK for Gemini 2.0, with tool-calling integration for multimodal interaction.


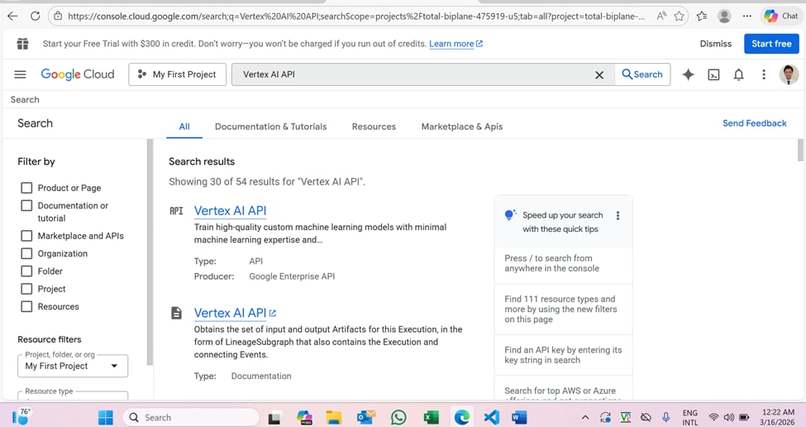

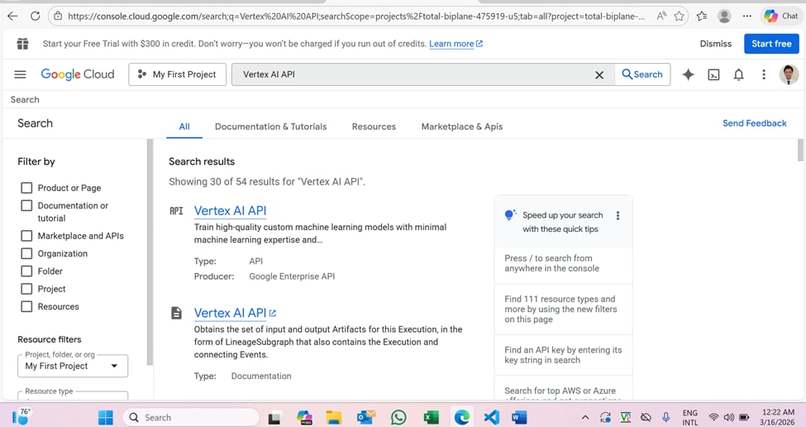

GCP Infrastructure: Backend project setup on Google Cloud (Project ID: total-biplane-475919-u5) using Vertex AI


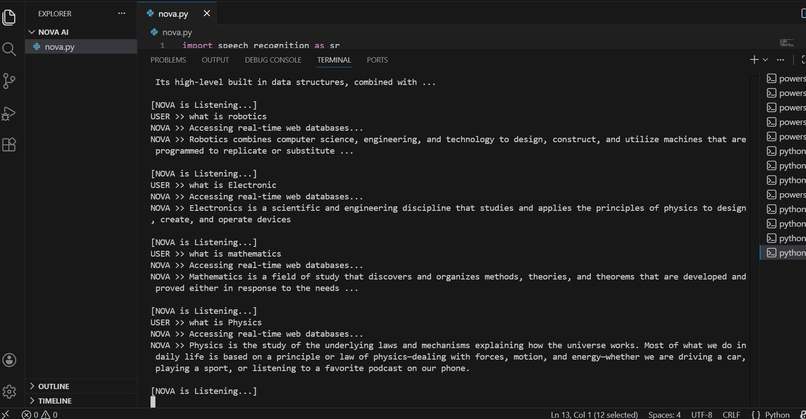

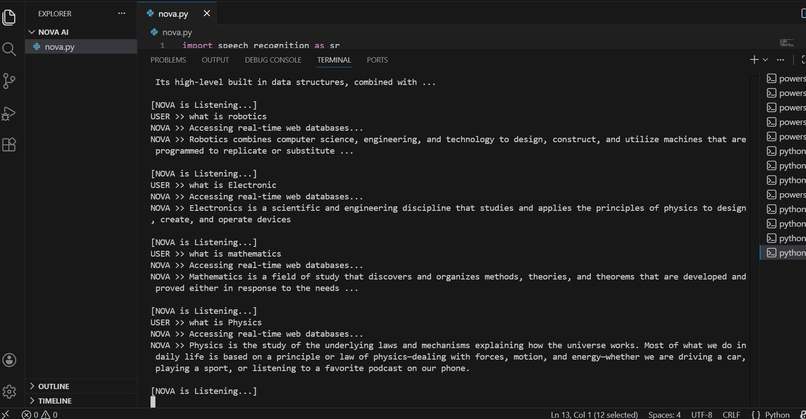

Live Demo: Nova AI responding to user queries with real-time web data and voice output


Inspiration

The inspiration for Nova AI came from the limitations of current AI assistants, which often feel disconnected from the real world. We wanted to build a "Live Agent" that doesn't just talk but actually reasons, sees, and searches the internet in real time to provide contextually accurate, up-to-date answers.

What it does

Nova AI is a next-generation multimodal agent. It can:

Listen & Talk: Fast voice conversations, with SpeechRecognition capturing the user's speech, Gemini 2.0 Flash generating the reply, and pyttsx3 speaking it aloud.

Real-time Grounding: It uses SerpAPI to search Google and answer questions about current events (e.g., today's gold price or news).

Multimodal Vision: It can analyze images and screenshots and discuss what they show.

Automate Tasks: It can open YouTube, Google, and other web services based on voice commands.
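To make the grounding step concrete, here is a minimal sketch of querying SerpAPI's Google Search endpoint with only the Python standard library and condensing the top results into text that can be handed to Gemini as context. It assumes SerpAPI's `search.json` endpoint with the `engine`, `q`, and `api_key` parameters and reads the `organic_results` field of the response; the helper names and the three-result limit are illustrative choices, not Nova AI's actual code.

```python
import json
import urllib.parse
import urllib.request

# Assumed SerpAPI JSON endpoint for Google Search results.
SERPAPI_ENDPOINT = "https://serpapi.com/search.json"


def build_serpapi_url(query: str, api_key: str) -> str:
    """Build a SerpAPI Google Search request URL for the given query."""
    params = {"engine": "google", "q": query, "api_key": api_key}
    return f"{SERPAPI_ENDPOINT}?{urllib.parse.urlencode(params)}"


def summarize_results(payload: dict, limit: int = 3) -> str:
    """Condense the top organic results into a short text block for the model."""
    lines = []
    for result in payload.get("organic_results", [])[:limit]:
        title = result.get("title", "")
        snippet = result.get("snippet", "")
        link = result.get("link", "")
        lines.append(f"- {title}: {snippet} ({link})")
    return "\n".join(lines) if lines else "No results found."


def web_search(query: str, api_key: str, timeout: float = 10.0) -> str:
    """Fetch live results from SerpAPI and summarize them (requires network access)."""
    with urllib.request.urlopen(build_serpapi_url(query, api_key), timeout=timeout) as resp:
        payload = json.load(resp)
    return summarize_results(payload)
```

Keeping only a few titles, snippets, and links keeps the prompt short, which matters for a voice assistant where every extra token adds latency before the reply is spoken.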

How we built it

Core Logic: Built with Python using the latest Google GenAI SDK.

AI Model: Powered by Gemini 2.0 Flash for low-latency, multimodal reasoning.

Backend & Infrastructure: Deployed and managed via Google Cloud Platform (Project ID: total-biplane-475919-u5), utilizing Vertex AI services.

Tools: Integrated SerpAPI for web search, and pyttsx3 and SpeechRecognition for the voice interface.
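The project calls Gemini through the Google GenAI SDK. As a hedged illustration of what a multimodal call amounts to on Vertex AI, the sketch below builds the REST URL and JSON body for a `generateContent` request that pairs a screenshot with a text prompt. The model ID `gemini-2.0-flash`, the example region, and the helper names are assumptions for illustration; actually sending the request also requires an OAuth access token in an `Authorization: Bearer` header, which is omitted here.

```python
import base64


def vertex_generate_url(project: str, location: str, model: str = "gemini-2.0-flash") -> str:
    """Build the Vertex AI generateContent endpoint for a Google publisher model."""
    return (
        f"https://{location}-aiplatform.googleapis.com/v1/projects/{project}"
        f"/locations/{location}/publishers/google/models/{model}:generateContent"
    )


def build_multimodal_request(prompt: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Combine an image (inline, base64-encoded) and a text prompt into one user turn."""
    return {
        "contents": [
            {
                "role": "user",
                "parts": [
                    {
                        "inlineData": {
                            "mimeType": mime_type,
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                    {"text": prompt},
                ],
            }
        ]
    }


# Example (placeholder project ID and region):
# url = vertex_generate_url("your-project-id", "us-central1")
# body = build_multimodal_request("What is in this screenshot?", open("shot.png", "rb").read())
```

With the SDK, the equivalent is roughly a `genai.Client(vertexai=True, project=..., location=...)` followed by `client.models.generate_content(...)`, with the image wrapped in `types.Part.from_bytes(data=..., mime_type=...)`.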

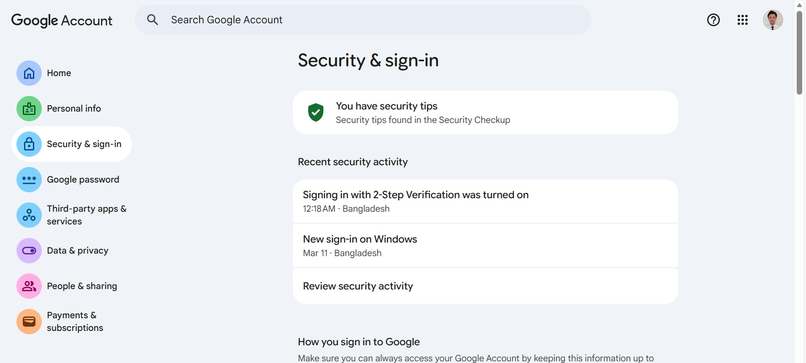

Challenges we ran into

The biggest challenge was orchestration: making sure the agent knows when to search the web and when to rely on its internal knowledge, without adding lag. We also faced hurdles with Google Cloud 2-Step Verification and with setting up IAM permissions for the Vertex AI environment, which taught us a lot about cloud security.
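The orchestration problem can be pictured as a small loop: the model either answers directly or asks for a tool, and the client runs the web search only when asked. The sketch below uses a simplified, hypothetical turn format (`{"text": ...}` or `{"function_call": ...}`) rather than the SDK's real response types, and caps tool round-trips with `max_steps` so a confused model cannot stall the conversation.

```python
from typing import Callable

# Hypothetical, simplified model interface (not the SDK's types):
#   returns {"text": "..."} for a final answer, or
#   {"function_call": {"name": "...", "args": {...}}} to request a tool.
ModelFn = Callable[[list], dict]


def run_agent(prompt: str, model: ModelFn, tools: dict, max_steps: int = 3) -> str:
    """Let the model decide between answering directly and calling a tool."""
    history = [{"role": "user", "text": prompt}]
    for _ in range(max_steps):
        turn = model(history)
        call = turn.get("function_call")
        if call is None:
            # No tool requested: answer from internal knowledge or earlier tool output.
            return turn["text"]
        tool = tools.get(call["name"])
        if tool is None:
            result = f"Unknown tool: {call['name']}"
        else:
            result = tool(**call.get("args", {}))
        # Feed the tool output back so the next model turn can use it.
        history.append({"role": "tool", "name": call["name"], "text": result})
    return "Sorry, I could not finish that request."
```

Because the search only runs when the model requests it, questions the model can answer on its own skip the network round-trip entirely.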

Accomplishments that we're proud of

We are incredibly proud of achieving real-time web grounding. Seeing Nova AI fetch current news and explain it in a human-like voice while running on a Google Cloud backend was a major milestone for us. We also implemented a modular architecture that makes the agent highly scalable.

What we learned

We learned the true power of function calling in Gemini 2.0. Understanding how to connect an LLM to external APIs like SerpAPI changed our perspective on AI agents. We also gained hands-on experience navigating the Google Cloud Console, managing project quotas, and deploying AI services.
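As a hedged example of the function-calling pattern, here is a sketch of one tool that could back the "open YouTube or Google" feature: a declaration in the JSON-schema style that Gemini function calling uses, a Python handler, and a dispatcher that turns the model's `functionCall` (a `name` plus `args`) into a `functionResponse` part to send back. The `open_website` tool, the `SITES` table, and the `dispatch` helper are hypothetical, not taken from Nova AI's code.

```python
import webbrowser
from typing import Callable

# Hypothetical allow-list of sites the agent may open.
SITES = {"youtube": "https://www.youtube.com", "google": "https://www.google.com"}


def open_website(site: str) -> dict:
    """Hypothetical tool: open a known site in the default browser."""
    url = SITES.get(site.lower())
    if url is None:
        return {"ok": False, "error": f"unknown site: {site}"}
    webbrowser.open(url)
    return {"ok": True, "url": url}


# Declaration the model sees, so it knows when and how to call the tool.
OPEN_WEBSITE_DECL = {
    "name": "open_website",
    "description": "Open a well-known website such as YouTube or Google in the user's browser.",
    "parameters": {
        "type": "object",
        "properties": {"site": {"type": "string", "enum": list(SITES)}},
        "required": ["site"],
    },
}


def dispatch(function_call: dict, registry: dict[str, Callable[..., dict]]) -> dict:
    """Run the requested handler and wrap its result as a functionResponse part."""
    name = function_call["name"]
    handler = registry.get(name)
    if handler is None:
        result = {"error": f"no handler for {name}"}
    else:
        result = handler(**function_call.get("args", {}))
    return {"functionResponse": {"name": name, "response": result}}
```

Restricting the tool to an allow-listed `enum` keeps a voice command from opening arbitrary URLs, and returning a structured result lets the model confirm the action to the user in its next reply.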

What's next for Nova AI - Multimodal Live Agent

The journey doesn't end here! We plan to:

Direct Cloud Deployment: Move the entire client-side logic to a Cloud Run container.

Long-term Memory: Implement a Firestore database to help Nova remember user preferences across different sessions.

Advanced UI: Build a sleek React-based dashboard to visualize Nova’s "thought process" and search results.
