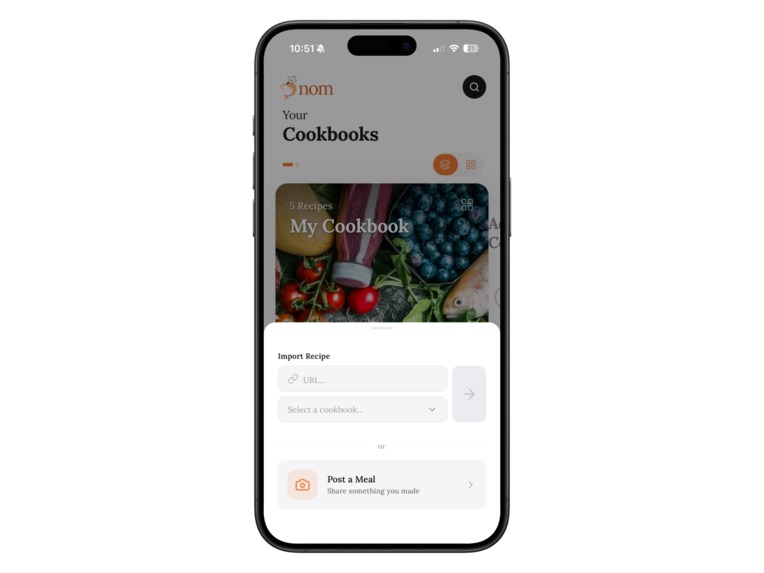

Cookbooks Home – All your recipe collections, one tap away. Organize, browse, and dive into your favorites anytime.

My Cookbook – Saved recipes meet smart suggestions. Something new is always waiting for you right here.

Recipe Detail – Nutrition, ingredients, prep time—all right here. One tap to cook, one tap to meal plan.

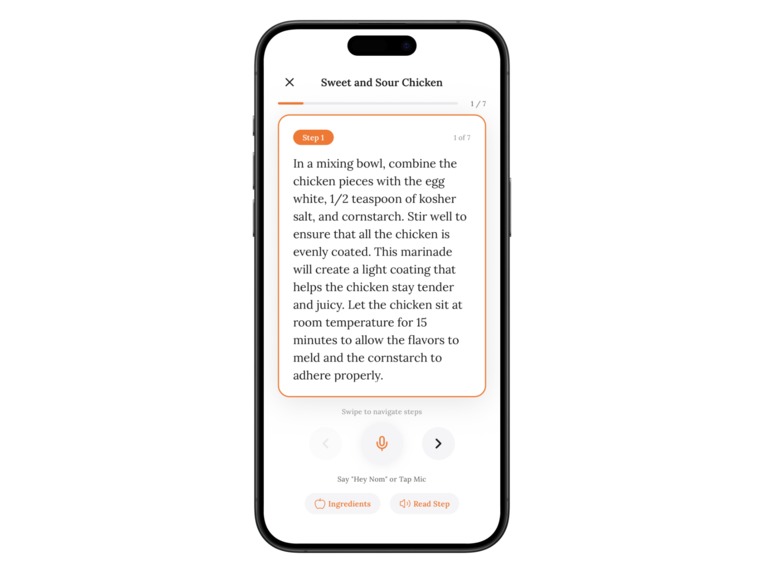

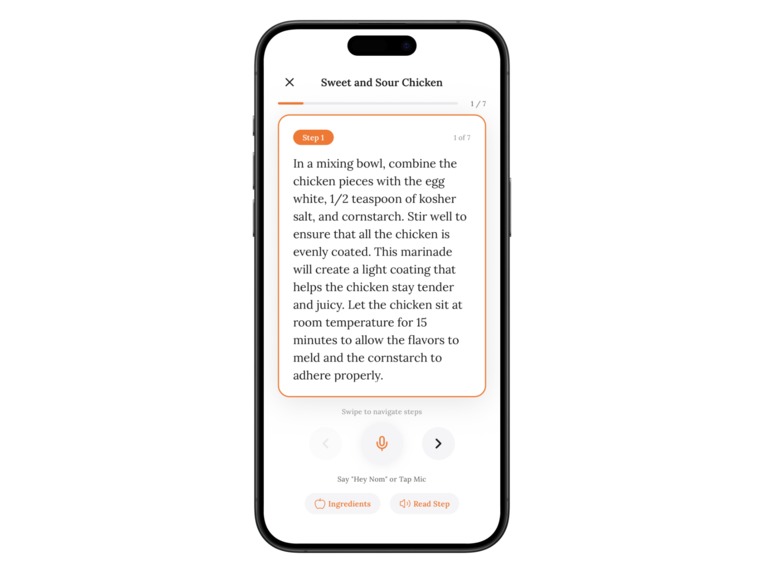

Cooking Mode – Hands-free, voice-powered, step-by-step. Just say "Hey Nom" and let the app guide you through it.

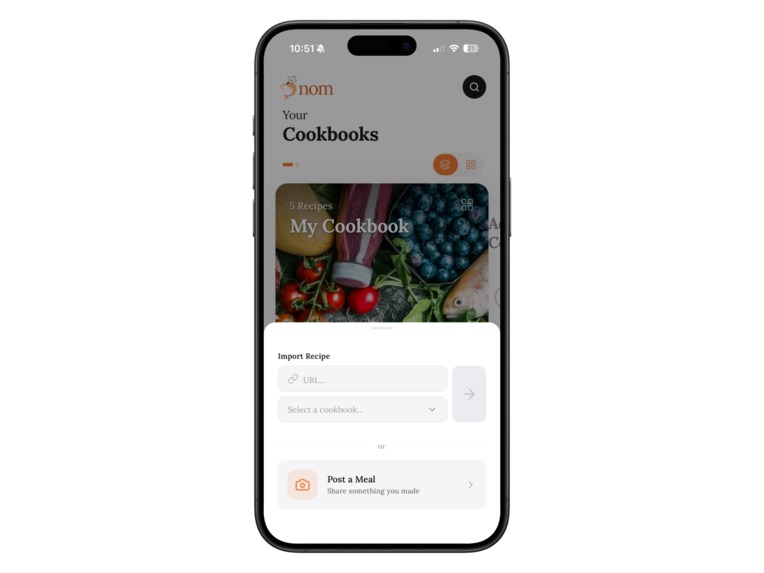

Import Recipe – Found something great online? Paste the URL, done. Or share your own creations with the community.

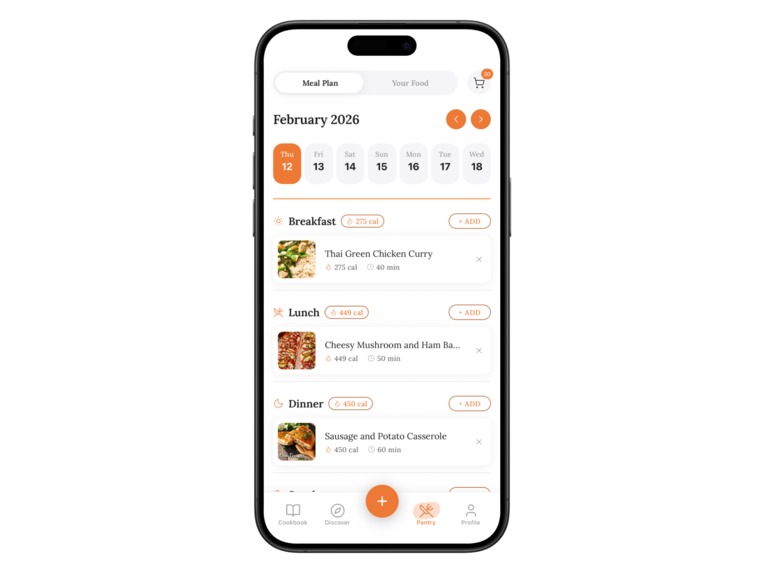

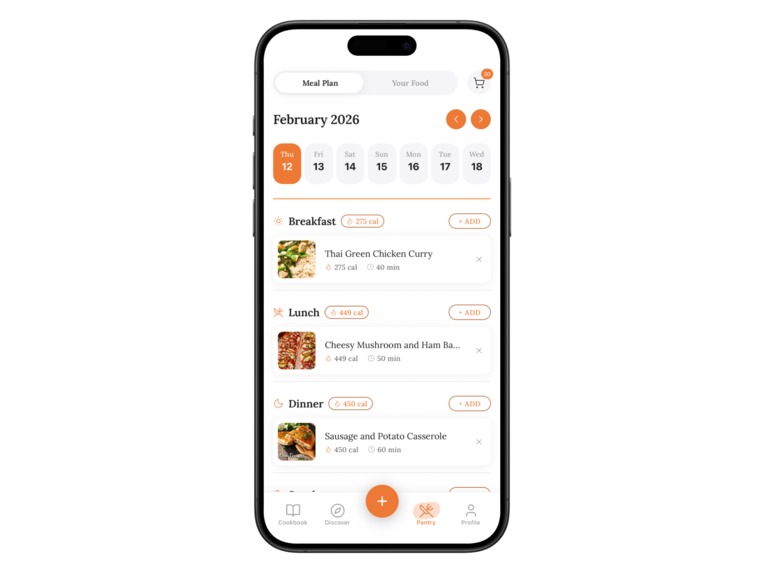

Meal Planner – Breakfast, lunch, dinner—planned. Calorie tracking built in, and zero dinnertime decision fatigue.

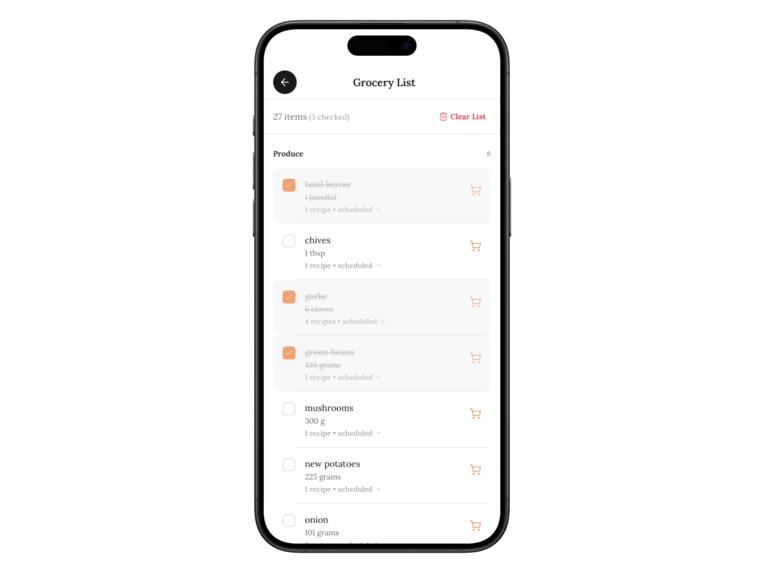

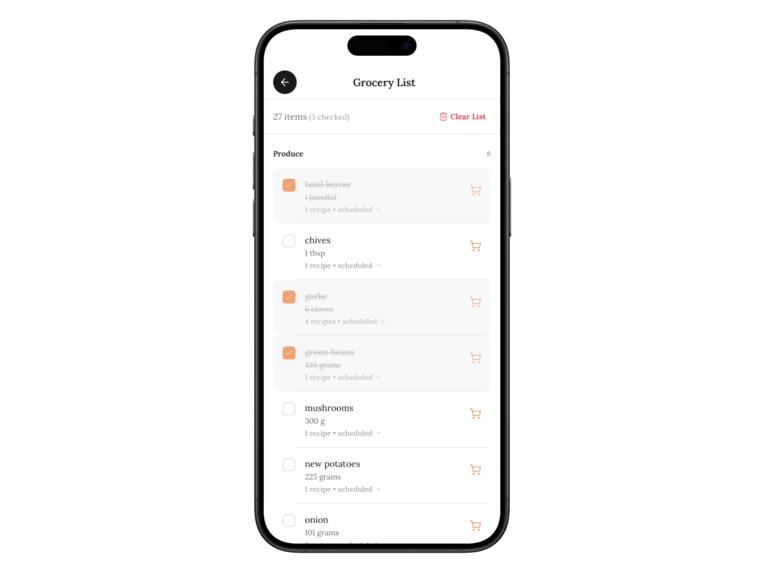

Grocery List – Auto-generated straight from your meal plan. Sorted by category, easy to check off as you shop.

Discover Recipes – Swipe. Save. Repeat. Quick stats at a glance make finding your next favorite dish effortless.

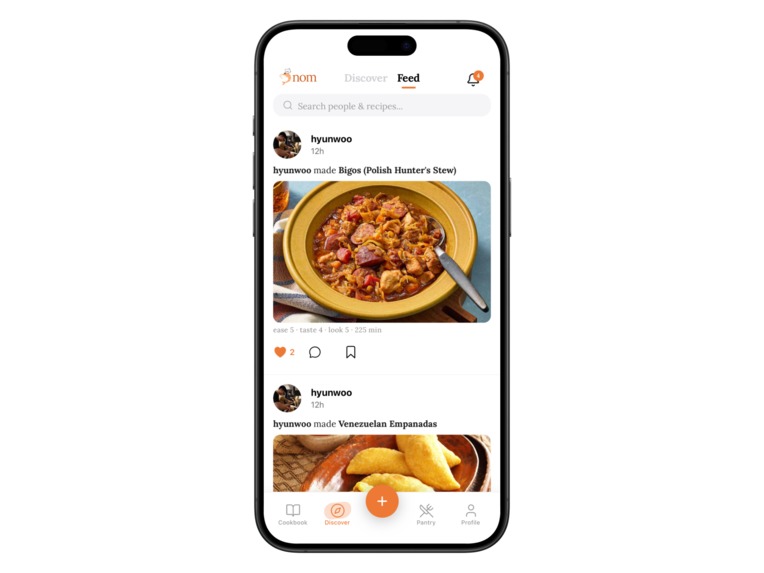

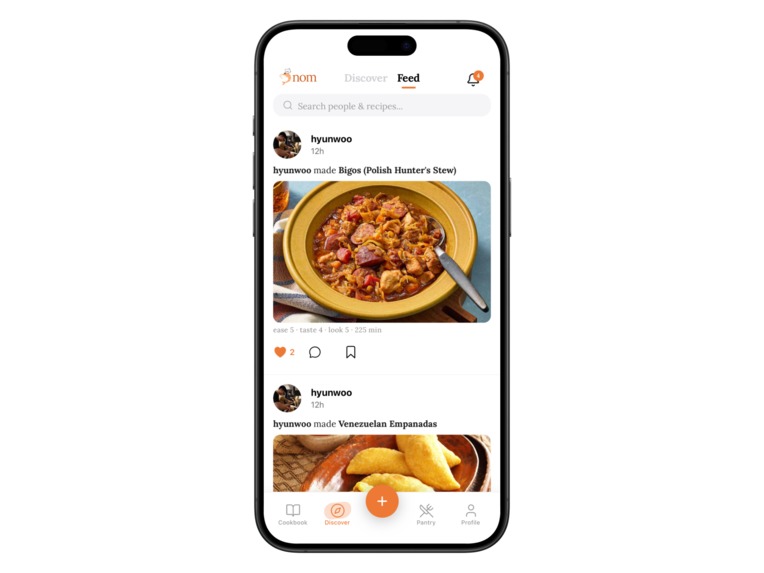

Social Feed – What's the Nom community cooking? Get inspired, drop a like, or bookmark something for later.

Nom: From Feed to Fork

Inspiration

We all have the same guilty habit: saving dozens of cooking videos we'll never actually make. We noticed that the gap between food inspiration and food on the table isn't motivation — it's friction. The cooking workflow is scattered across five or six apps, and nobody has stitched it together. We wanted to build the app that turns a TikTok link into dinner.

How We Built It

The core technical challenge was turning unstructured video content into structured, cookable recipes. Our extraction pipeline downloads video via yt-dlp, pulls key frames with ffmpeg, transcribes audio with OpenAI Whisper, analyzes frames with GPT-4o Vision, and then uses GPT-4o-mini to synthesize everything into a clean recipe with ingredients, steps, and nutritional estimates. This runs on a FastAPI backend.
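The stages above can be sketched roughly as follows. This is a minimal illustration, not our production code: the function names, the file layout inside `workdir`, the `-f mp4` format choice, and the one-frame-every-five-seconds sampling rate are all assumptions made for the example. The three model stages (Whisper, GPT-4o Vision, GPT-4o-mini) are passed in as plain callables so the orchestration can be read and tested without API keys.

```python
import subprocess
from pathlib import Path
from typing import Any, Callable, Sequence


def download_cmd(url: str, video: Path) -> list[str]:
    # yt-dlp: pick an mp4 format and write it to a fixed path (assumed layout).
    return ["yt-dlp", "-f", "mp4", "-o", str(video), url]


def frames_cmd(video: Path, workdir: Path, every_s: int = 5) -> list[str]:
    # ffmpeg: sample one frame every `every_s` seconds as numbered JPEGs.
    pattern = str(workdir / "frame_%03d.jpg")
    return ["ffmpeg", "-i", str(video), "-vf", f"fps=1/{every_s}", pattern]


def audio_cmd(video: Path, audio: Path) -> list[str]:
    # ffmpeg: drop the video stream, downmix to mono 16 kHz audio for transcription.
    return ["ffmpeg", "-i", str(video), "-vn", "-ac", "1", "-ar", "16000", str(audio)]


def run_cmd(cmd: Sequence[str]) -> None:
    # Raise if the external tool exits with a non-zero status.
    subprocess.run(list(cmd), check=True)


def extract_recipe(
    url: str,
    workdir: Path,
    transcribe: Callable[[Path], str],                # e.g. a Whisper wrapper
    describe_frames: Callable[[list[Path]], str],     # e.g. a vision-model wrapper
    synthesize: Callable[[str, str], Any],            # e.g. a text-model wrapper
    run: Callable[[Sequence[str]], None] = run_cmd,
) -> Any:
    video = workdir / "video.mp4"
    audio = workdir / "audio.wav"
    run(download_cmd(url, video))
    run(frames_cmd(video, workdir))
    run(audio_cmd(video, audio))
    frames = sorted(workdir.glob("frame_*.jpg"))
    transcript = transcribe(audio)
    visual_notes = describe_frames(frames)
    # The final stage merges both signals into one structured recipe.
    return synthesize(transcript, visual_notes)
```

Injecting `run` and the model callables keeps each stage swappable, which matters when one platform or model needs special handling.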

The mobile app is React Native with Expo SDK 54 and Expo Router for navigation. Convex handles our serverless database and real-time sync. Clerk manages auth, Zustand handles client state, and the UI is built with Gluestack UI and NativeWind. We also built a native iOS share extension in Swift so users can send links to Nom straight from TikTok or Instagram. Monetization is wired up from day one with RevenueCat.

Challenges

Video-to-recipe parsing is messy. Creators skip measurements, talk fast, and show ingredients without naming them. Getting the AI pipeline to reliably produce structured output — distinguishing "a pinch of salt" from "1 tsp salt" when someone just says "add some salt" — took serious prompt engineering and iteration.
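One way to keep the model from inventing measurements is to let the schema say "unknown" explicitly and validate the output before it reaches the app. The sketch below assumes a hypothetical JSON shape (`ingredients` with `name`, `quantity`, `unit`); it illustrates the idea and is not the exact schema we use.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ingredient:
    name: str
    quantity: Optional[float]  # None when the creator never stated an amount
    unit: Optional[str]
    note: str = ""


def parse_ingredients(raw: str) -> list[Ingredient]:
    """Validate model output; keep missing amounts as None instead of guessing."""
    data = json.loads(raw)
    items: list[Ingredient] = []
    for entry in data["ingredients"]:
        name = str(entry["name"]).strip().lower()
        if not name:
            raise ValueError("ingredient without a name")
        quantity = entry.get("quantity")
        unit = entry.get("unit")
        if quantity is None:
            # "add some salt": record the ingredient, flag the missing amount.
            items.append(Ingredient(name, None, unit, note="amount not stated in video"))
            continue
        quantity = float(quantity)
        if quantity <= 0:
            raise ValueError(f"non-positive quantity for {name!r}")
        items.append(Ingredient(name, quantity, unit))
    return items
```

With this shape, "1 tsp salt" becomes `quantity=1.0, unit="tsp"`, while "add some salt" stays `quantity=None` and the UI can decide how to present it.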

Multi-platform link handling. TikTok, Instagram, and YouTube each have different URL formats, embed behaviors, and download restrictions. TikTok's frequently changing API was especially frustrating, requiring fallback logic and constant testing.
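A simplified sketch of the link-handling idea: classify the URL by hostname, then try download strategies in order until one works. The host table and the function names here are assumptions for illustration, and real share links have more variants than this covers.

```python
from typing import Callable, Optional, Sequence, TypeVar
from urllib.parse import urlparse

T = TypeVar("T")

# Hostnames we treat as belonging to each platform (illustrative, not exhaustive).
PLATFORM_HOSTS = {
    "tiktok": ("tiktok.com",),
    "instagram": ("instagram.com",),
    "youtube": ("youtube.com", "youtu.be"),
}


def detect_platform(url: str) -> Optional[str]:
    """Return the platform name for a URL, or None if the host is unrecognized."""
    host = (urlparse(url).hostname or "").lower()
    for platform, domains in PLATFORM_HOSTS.items():
        for domain in domains:
            # Match the bare domain or any subdomain (www., vm., m., ...).
            if host == domain or host.endswith("." + domain):
                return platform
    return None


def download_with_fallback(url: str, strategies: Sequence[Callable[[str], T]]) -> T:
    """Try each download strategy in order; raise only if all of them fail."""
    errors: list[Exception] = []
    for strategy in strategies:
        try:
            return strategy(url)
        except Exception as exc:  # collect the failure and try the next strategy
            errors.append(exc)
    raise RuntimeError(f"all {len(strategies)} strategies failed for {url}: {errors}")
```

Matching on the parsed hostname rather than a substring avoids false positives from lookalike domains.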

Pantry matching. Matching fuzzy user-entered items ("cherry tomatoes") against recipe ingredients ("diced tomatoes" vs. "tomato paste") without over- or under-matching is a real NLP problem, and we are still refining our approach.
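To make the trade-off concrete, here is a deliberately simple baseline: token-set (Jaccard) overlap after dropping preparation words and naively singularizing. This is an illustrative stand-in, not the matcher we ship; the `PREP_WORDS` list, the singularization rule, and the 0.5 threshold are all assumptions.

```python
import re

# Words that describe preparation rather than the ingredient itself (illustrative).
PREP_WORDS = {"diced", "chopped", "sliced", "minced", "fresh", "canned"}


def _singular(word: str) -> str:
    # Naive English singularization; good enough for a sketch, wrong for many words.
    if word.endswith("oes"):
        return word[:-2]
    if word.endswith("s") and not word.endswith(("ss", "us")):
        return word[:-1]
    return word


def tokens(text: str) -> set[str]:
    words = re.findall(r"[a-z]+", text.lower())
    return {_singular(w) for w in words if w not in PREP_WORDS}


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of the two normalized token sets, in [0, 1]."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def pantry_matches(pantry_item: str, ingredient: str, threshold: float = 0.5) -> bool:
    return similarity(pantry_item, ingredient) >= threshold
```

Under these assumptions, "cherry tomatoes" matches "diced tomatoes" (overlap 0.5) but not "tomato paste" (overlap 1/3). The threshold is exactly where over- and under-matching get traded against each other.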

What We Learned

Multi-modal AI — combining vision, audio, and text — is surprisingly effective for extracting structure from chaotic content when the models are orchestrated well. But the deeper lesson is that the hardest consumer app problems aren't technical; they're workflow problems. The AI is impressive, but the real value is making a fragmented process feel effortless.

Built With

- clerk

- convex

- expo-router

- fastapi

- gluestack

- nativewind

- openai

- openai-vision

- openai-whisper

- python

- react-native

- revenuecat

- tailwind

- themealdb-api

- typescript

- yt-dlp
