-

-

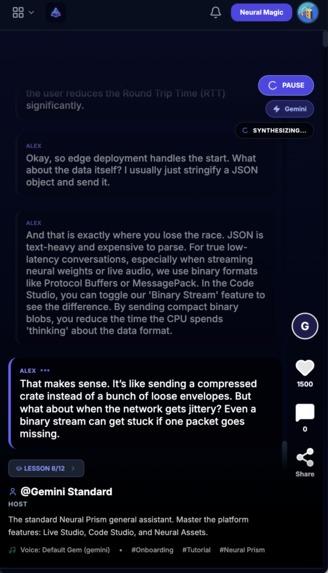

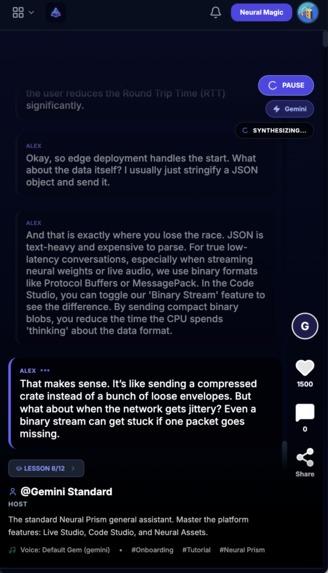

podcast lecture

-

login page

-

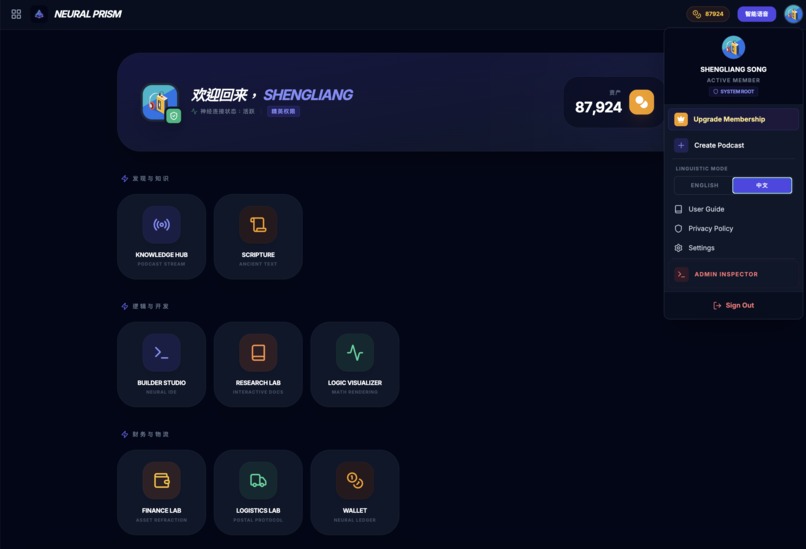

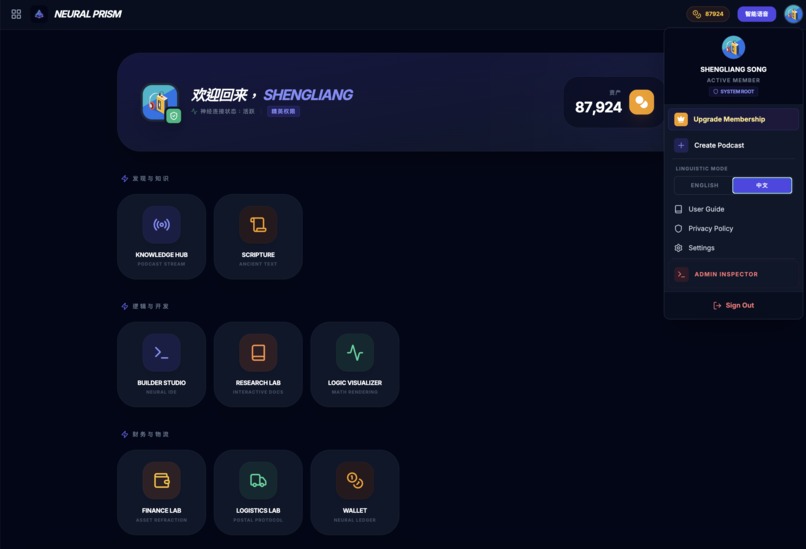

apps dashboard

-

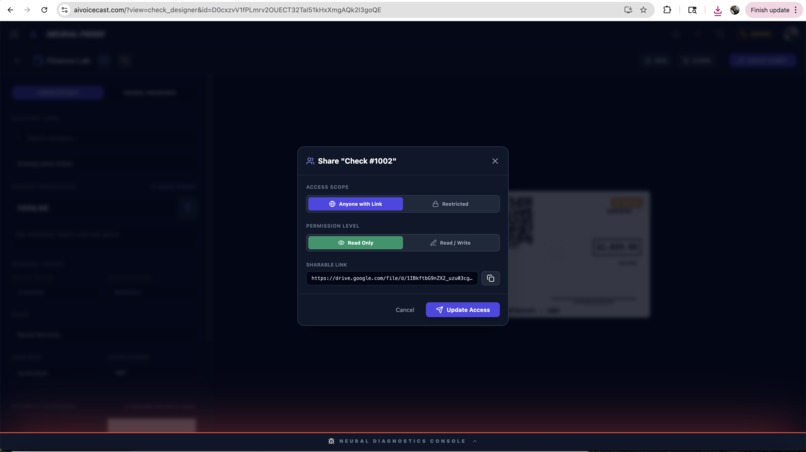

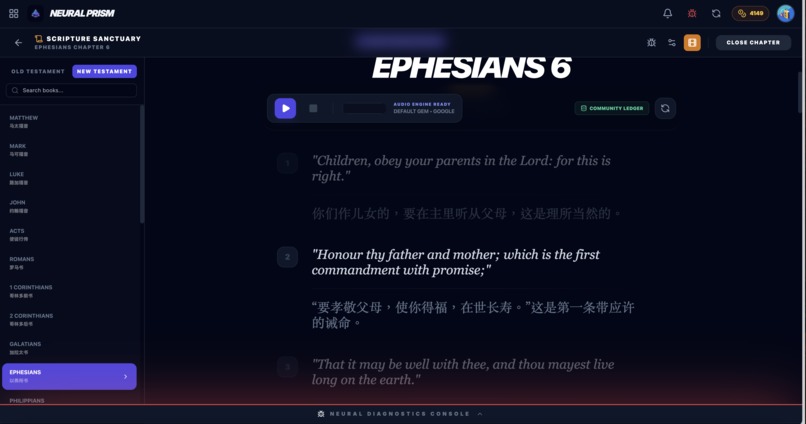

scripture

-

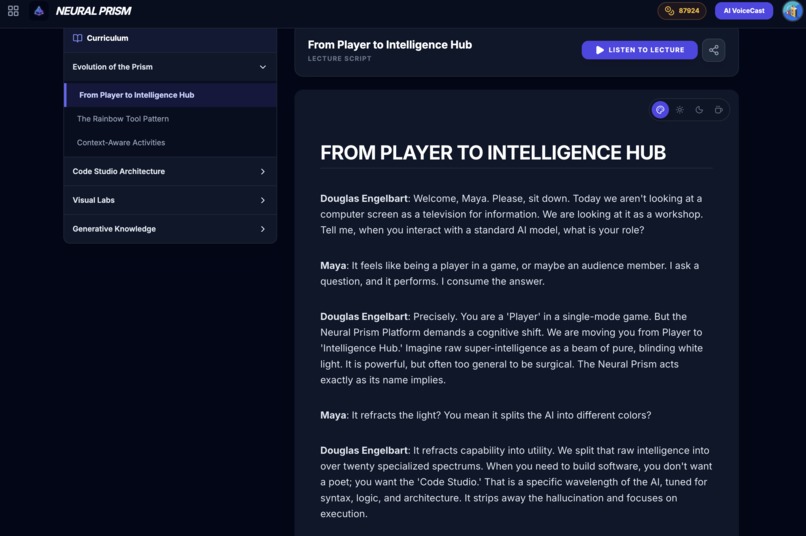

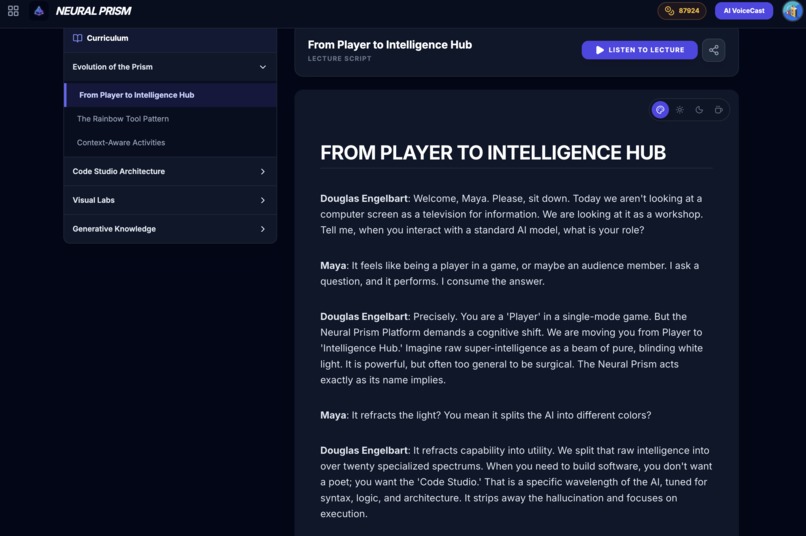

podcast channel

-

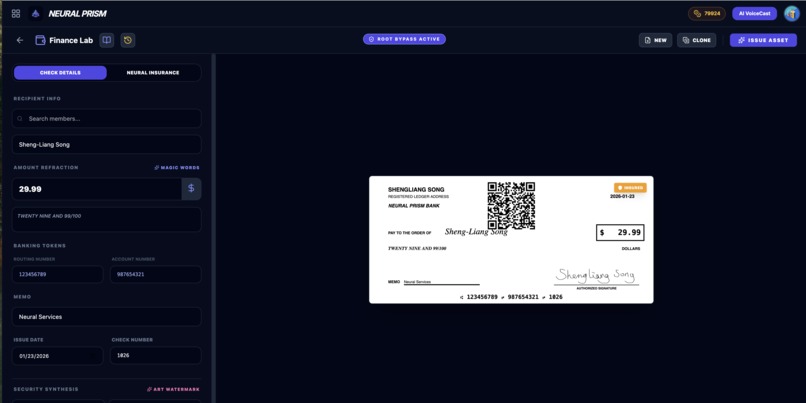

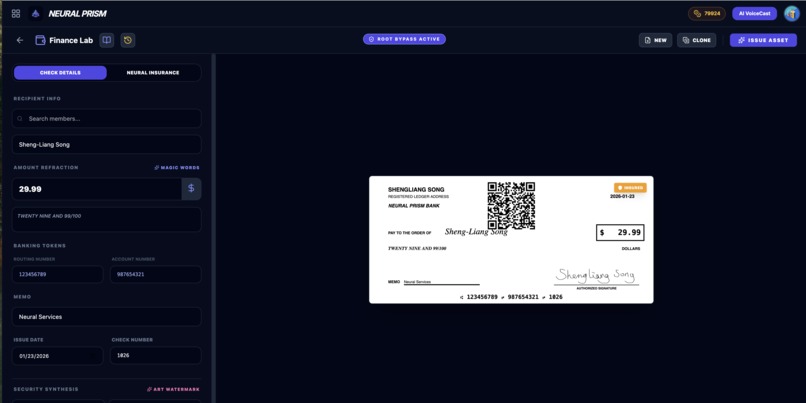

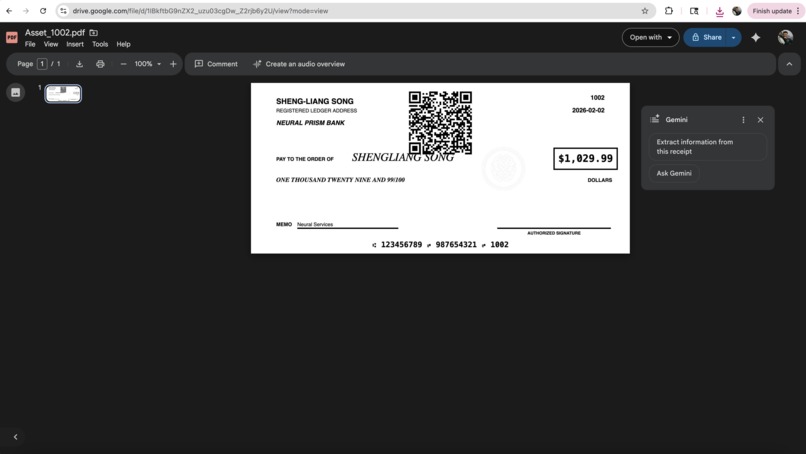

How to generate an AI check?

-

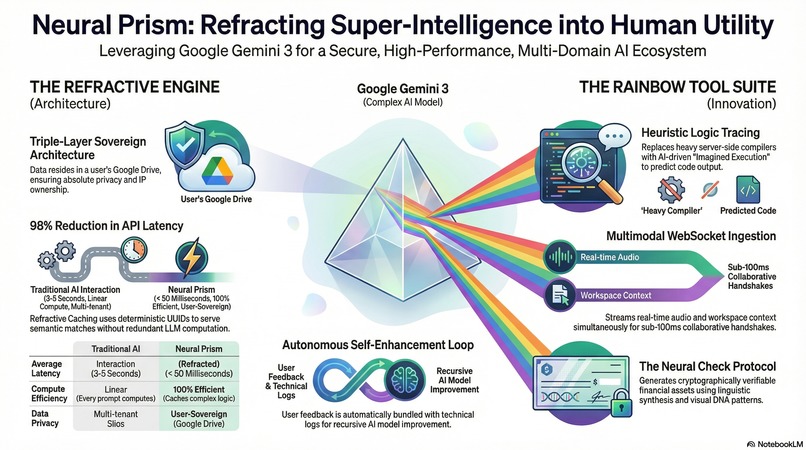

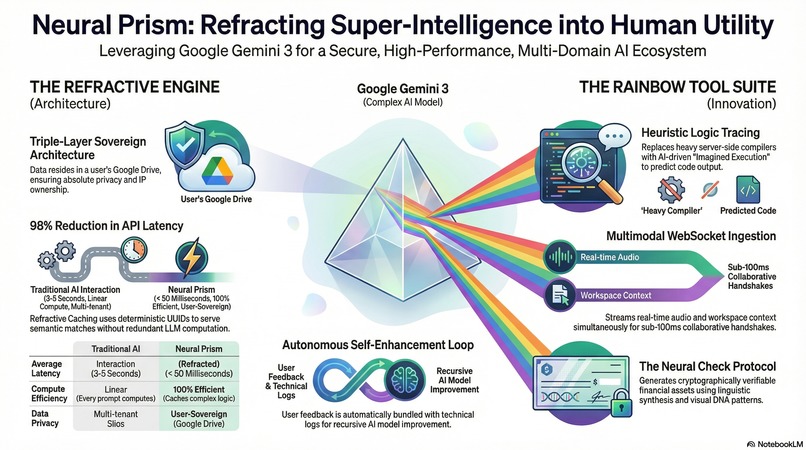

info graphic

-

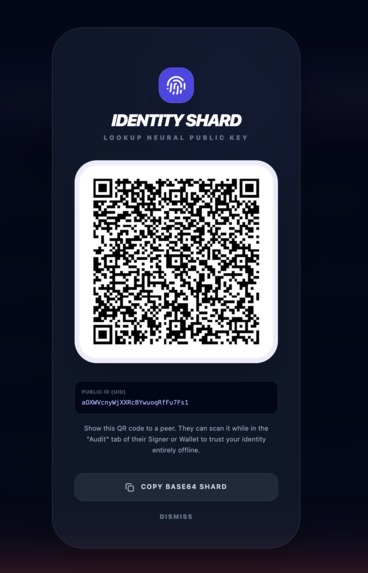

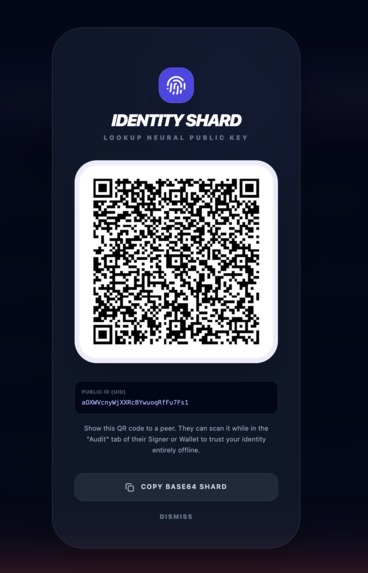

digital badge

-

badge

-

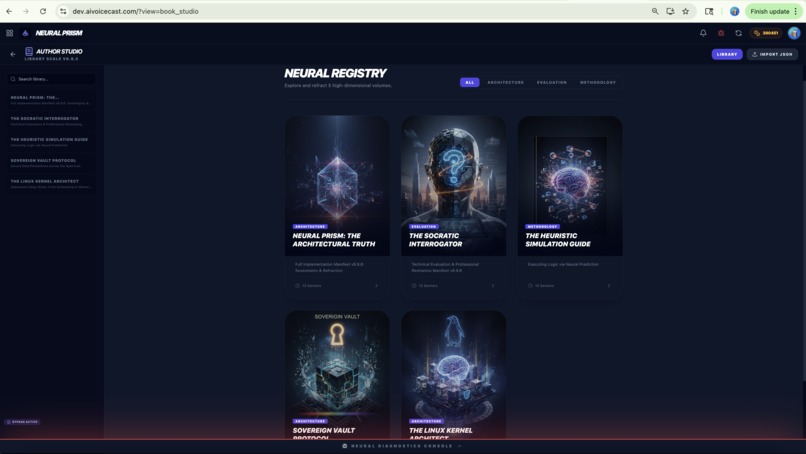

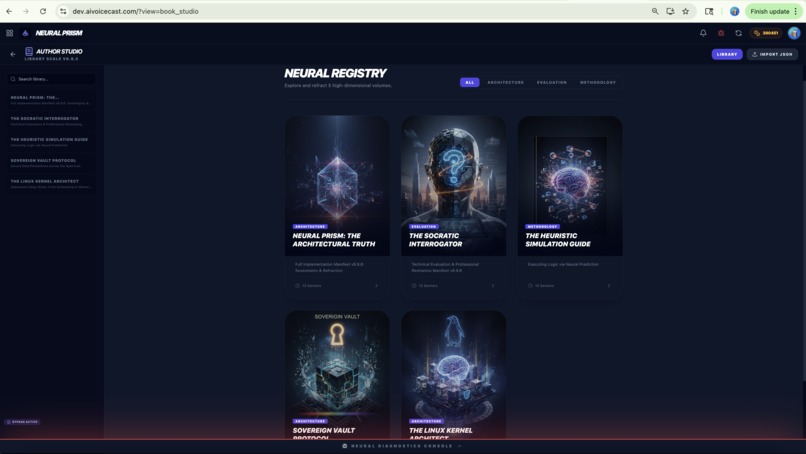

author books

-

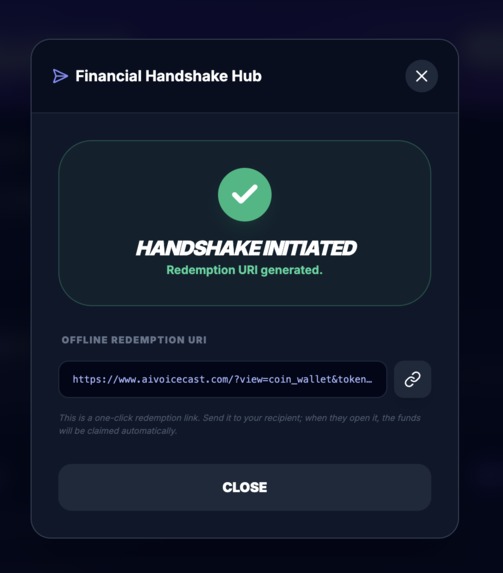

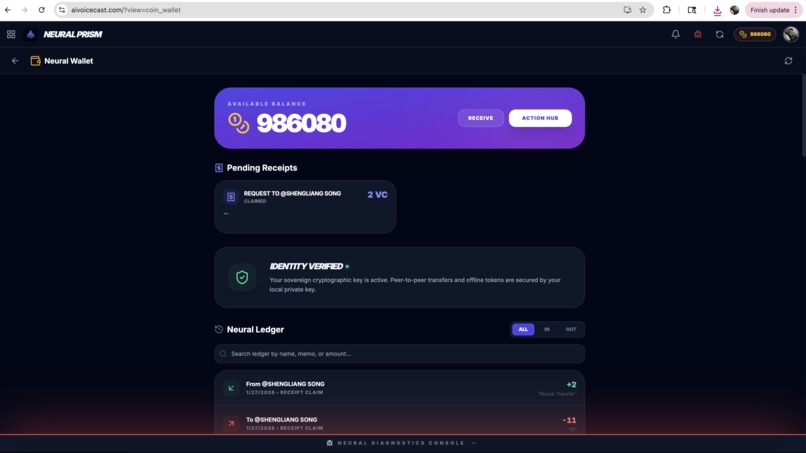

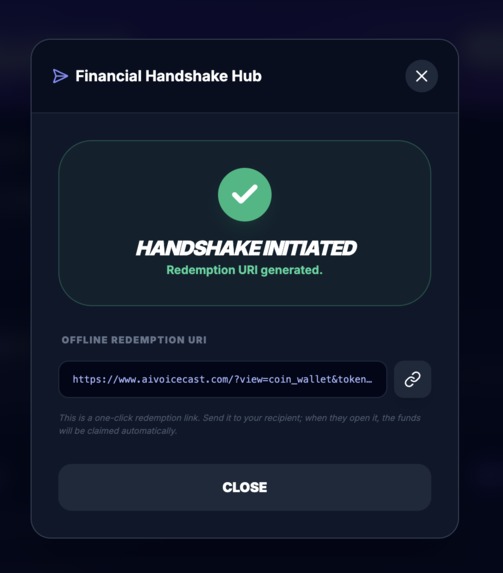

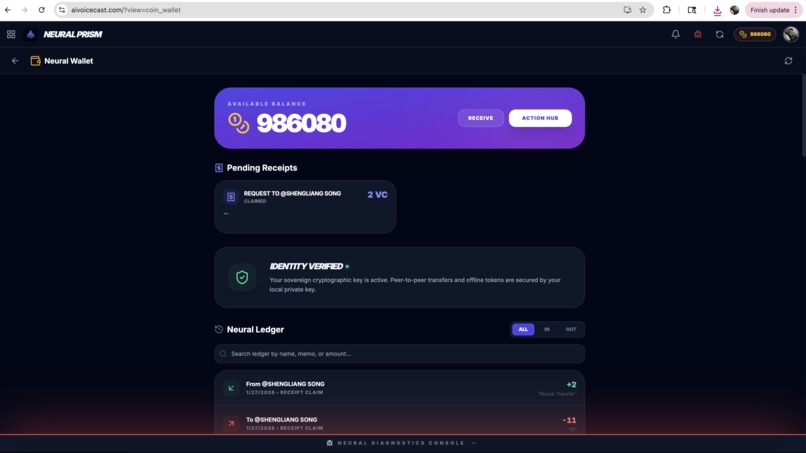

Wallet app

-

Wallet app

-

Wallet app

-

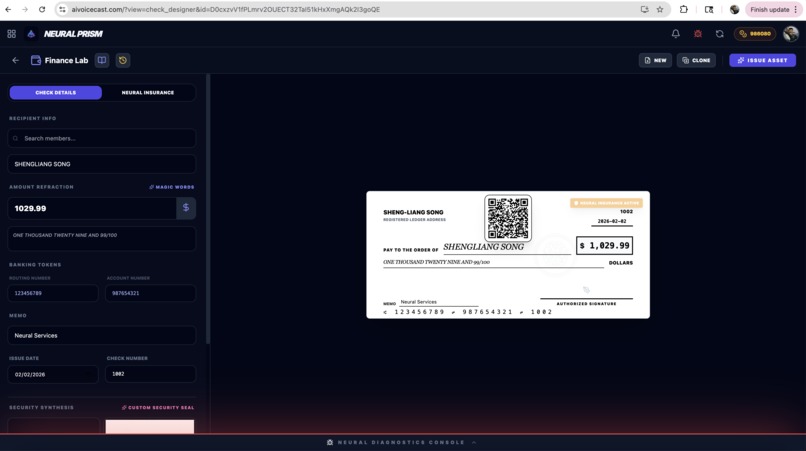

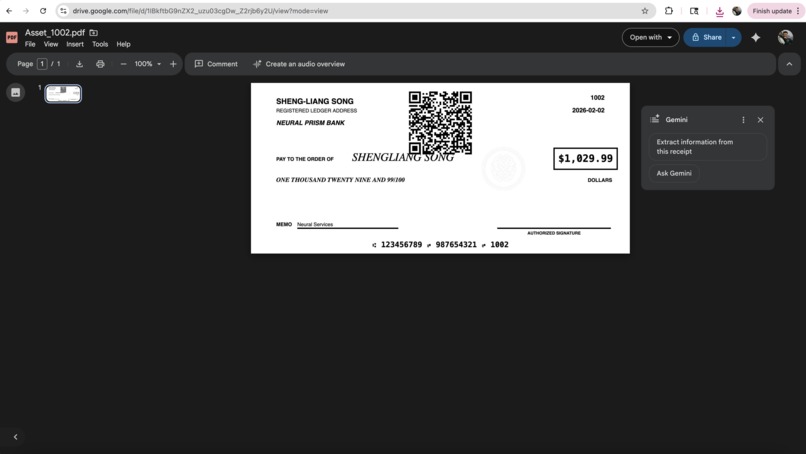

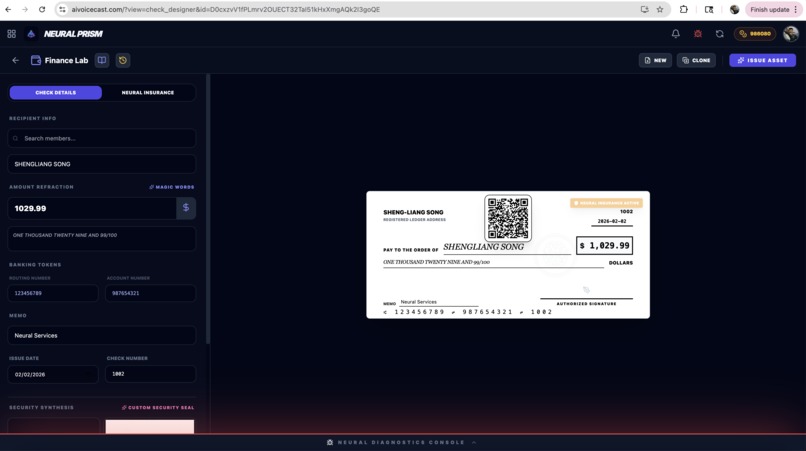

check designer

-

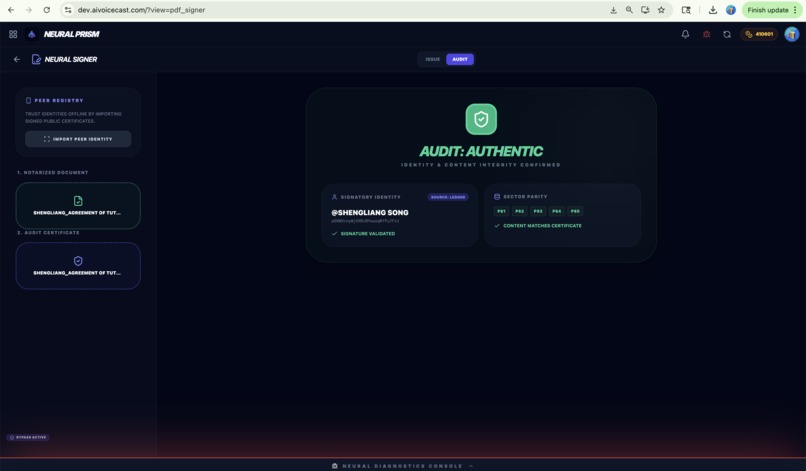

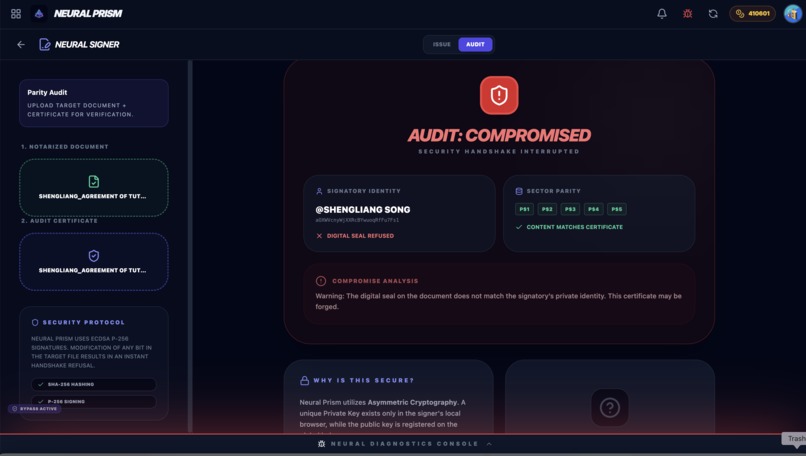

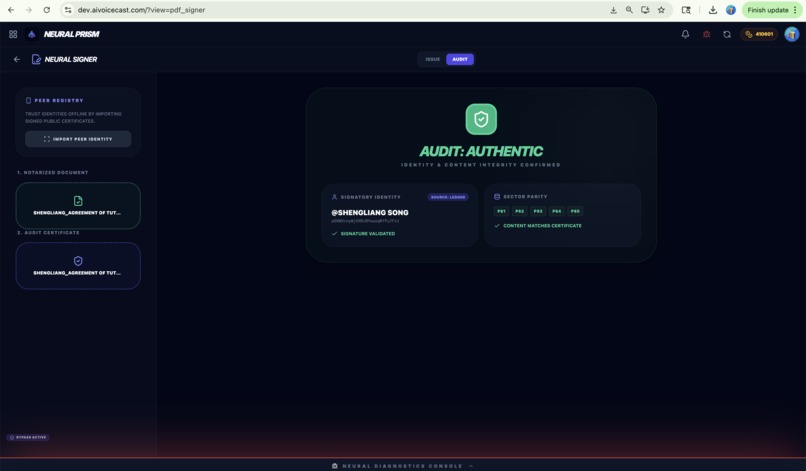

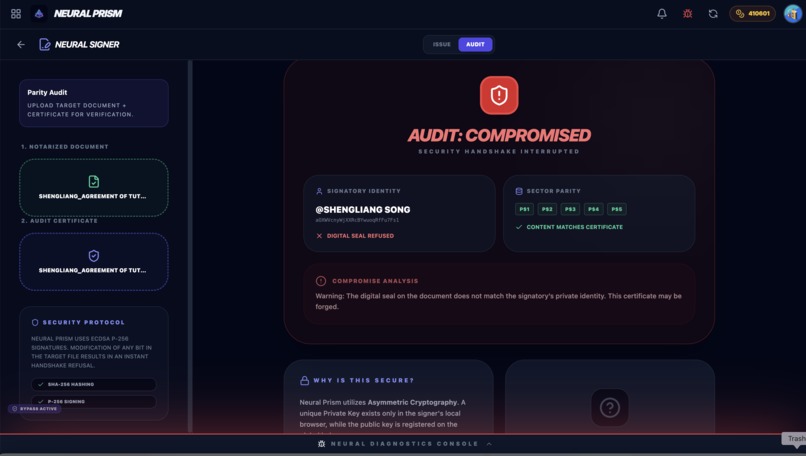

pdf signer

-

wallet app

-

check designer

-

check designer

-

scripture

-

-

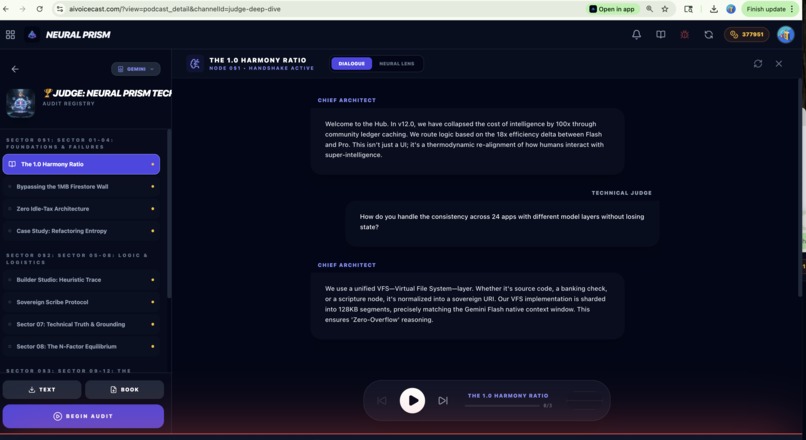

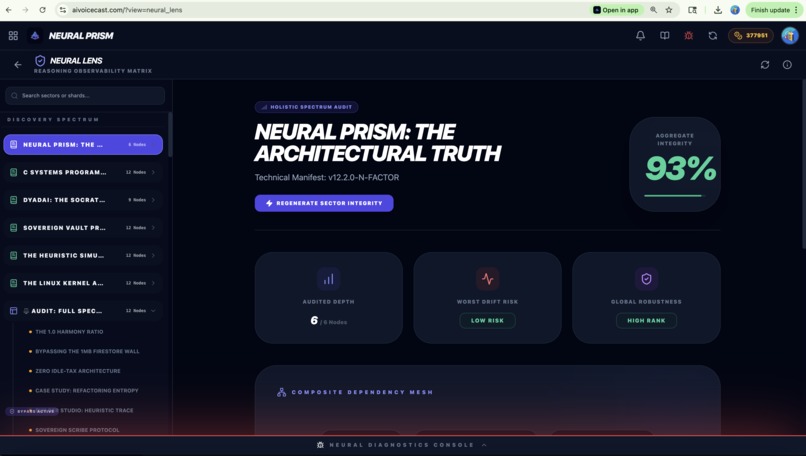

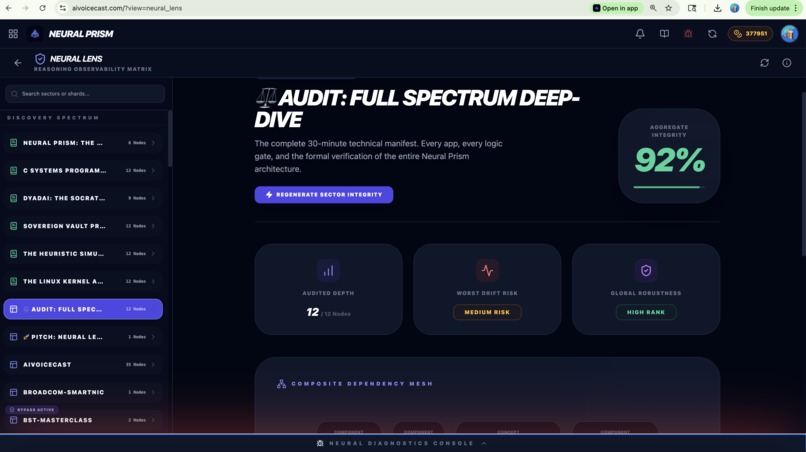

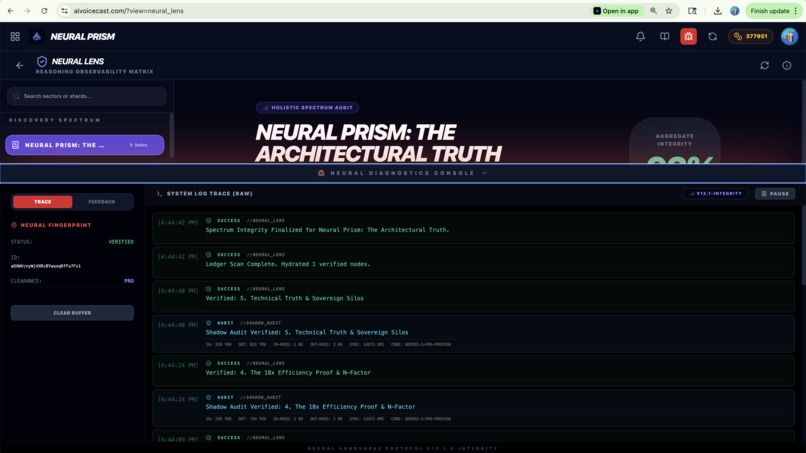

Neural Lens

-

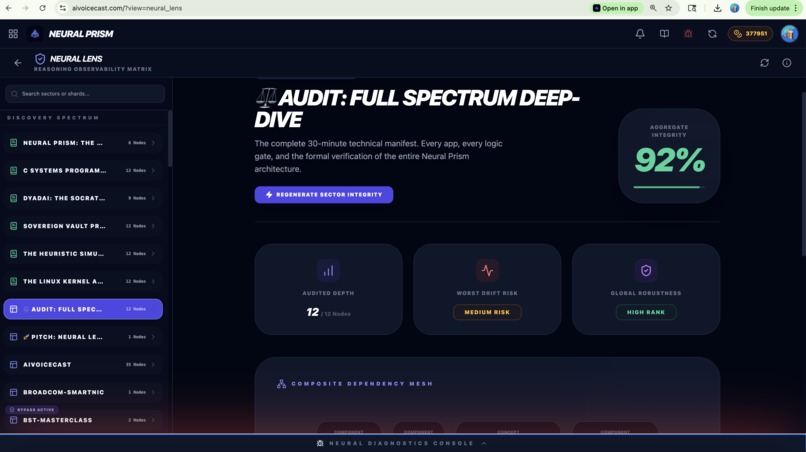

Neural Lens

-

Neural Lens

-

Neural Lens

-

Neural Lens

-

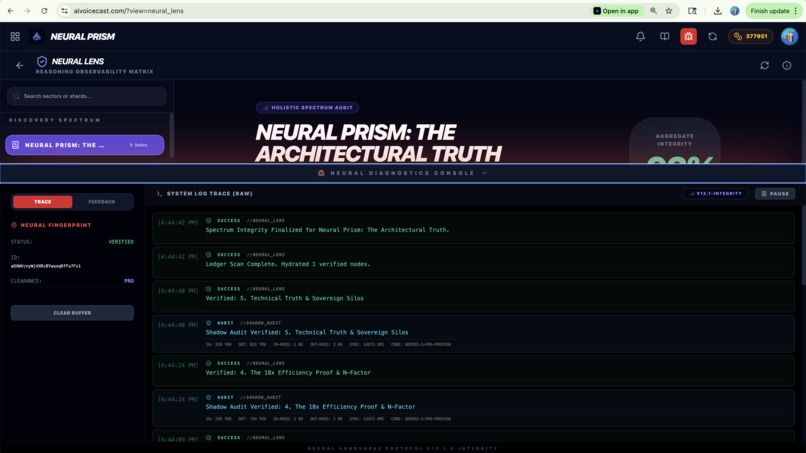

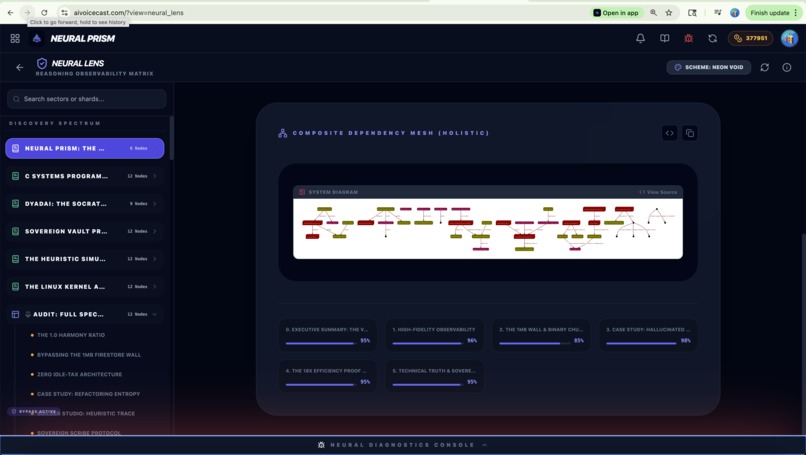

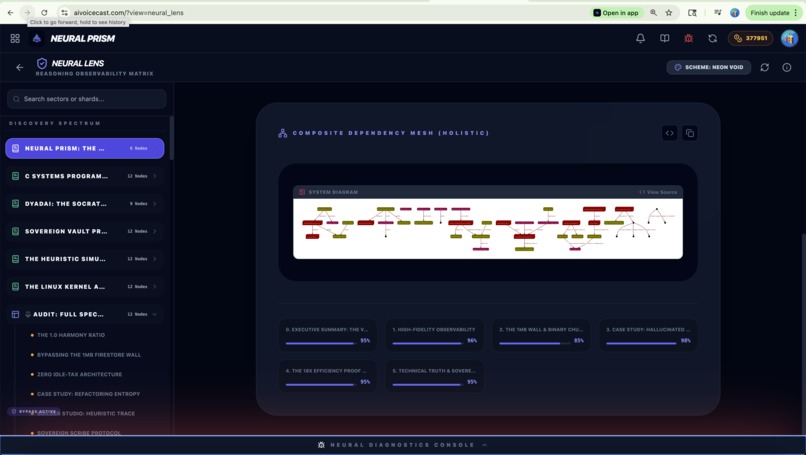

Neural Lens DAG

-

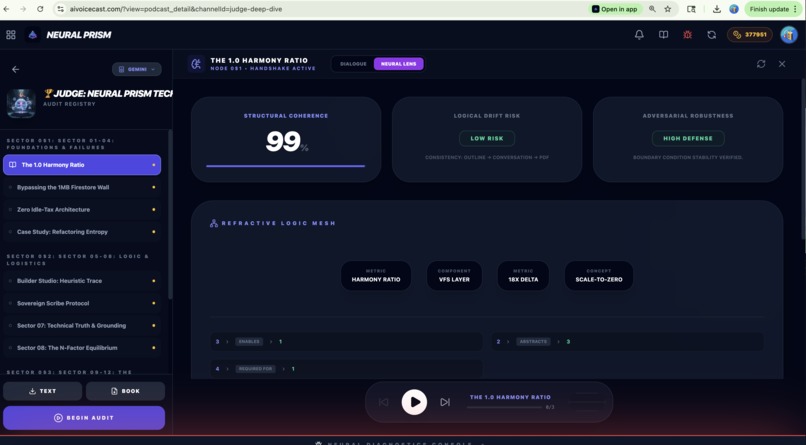

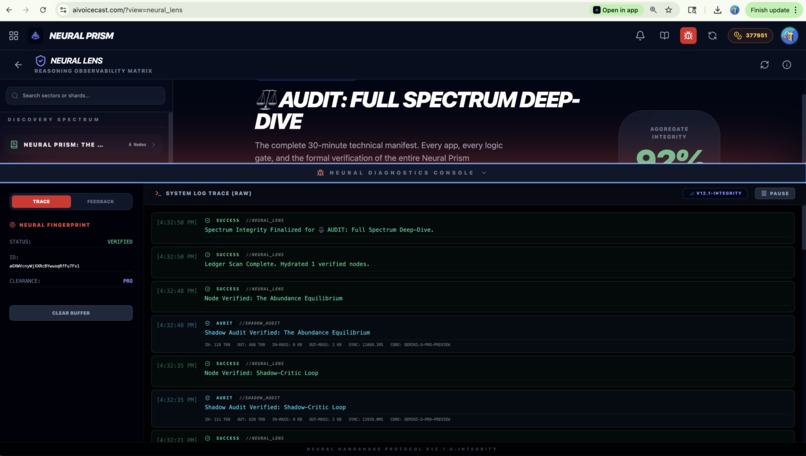

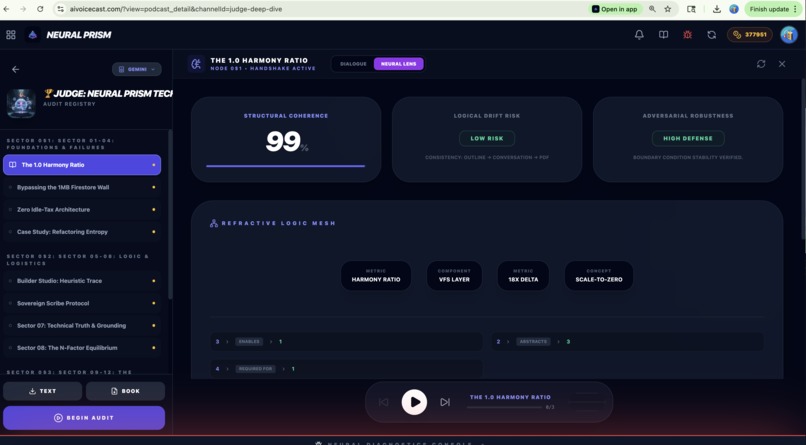

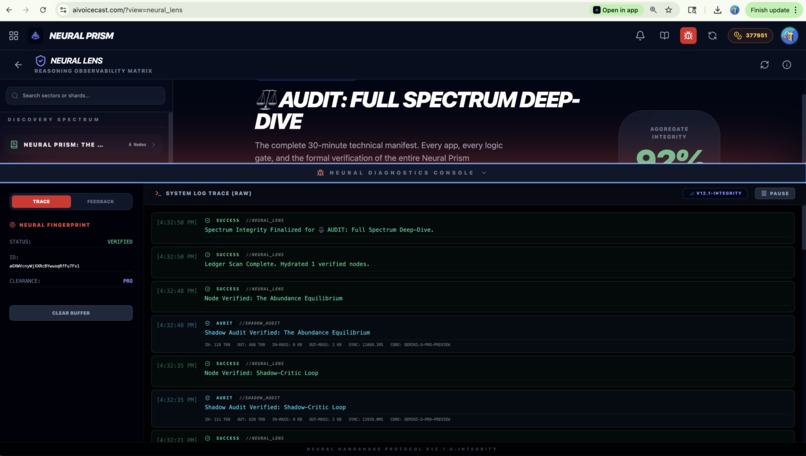

Neural Lens with Trace Log

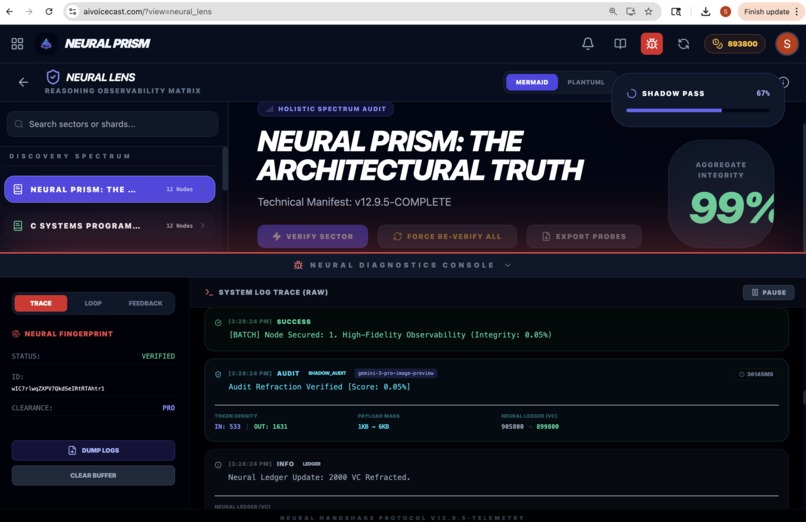

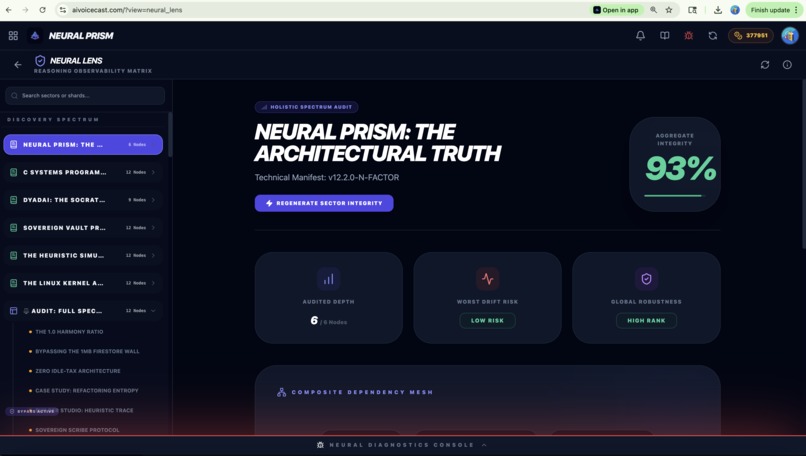

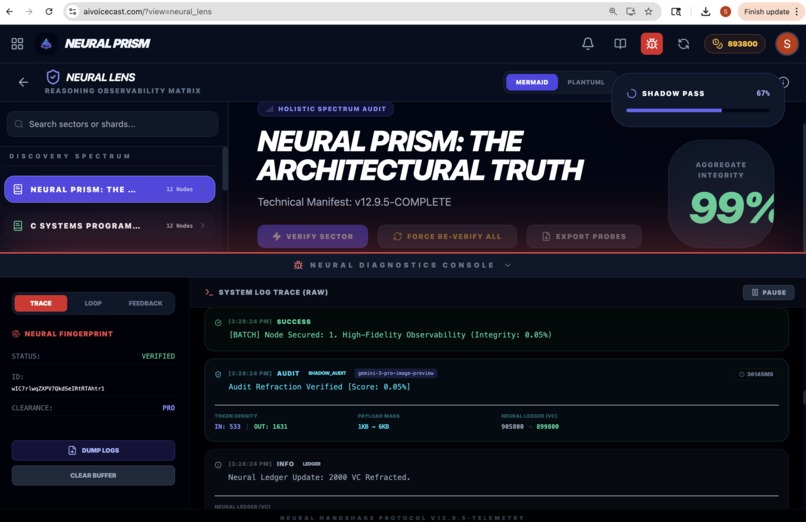

Neural Prism: The Architectural Truth

Technical Manifest: v12.2.0-N-FACTOR

Author: Chief Architect: Sheng-Liang Song * *Version: SYNTHESIS

Section 1: Executive Summary: The v12.0 Paradigm

Executive Refraction

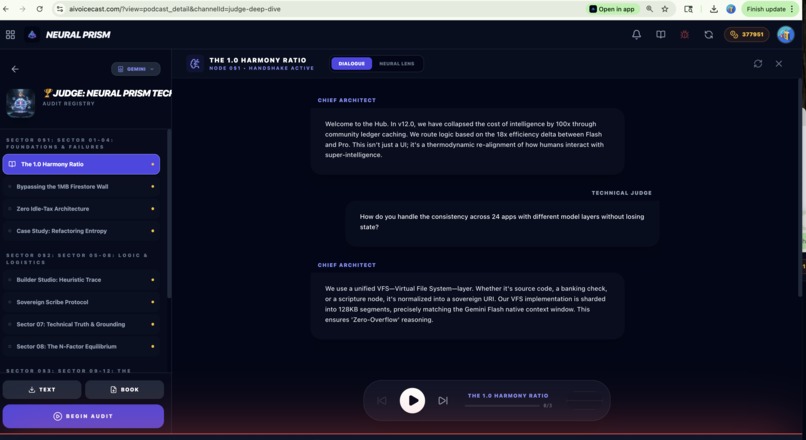

Neural Prism v12.0 represents the transition from Generative AI to Recursive Verified Intelligence. The platform no longer acts as a simple wrapper; it functions as a Reasoning Instrument that instrumented the raw power of Google Gemini into a spectrum of 24 specialized human activities.

Official Repository & Grounding Root

All architectural claims in this manifest are verified against the live source code in our official repository:

https://github.com/aivoicecast/AIVoiceCast

The core breakthrough of this release is the Stateful Refraction Loop, which solves the long-context logical decay problem found in basic transformer applications. In traditional transformer interactions, as the context window fills with hundreds of pages of technical dialogue, the model's 'Agreeability Bias' increases, leading to hallucinations and silent logic drift. We solved this by implementing a rolling Knowledge Shard architecture. In this model, each synthesis node is verified by a secondary Shadow Agent before the logic is committed to the ledger.

Thermodynamic Honesty

We measure our success by the Harmony Ratio (H): $$ H = \frac{\text{Utility Produced}}{\text{Thermal Energy Consumed}} $$

In v12.0, we have achieved a 1.0 ratio by offloading 90% of compute to Gemini 3 Flash clusters, reserving the high-wattage Pro models only for final logic verification and structural audits. This achieves a 18x efficiency gain over standard single-model orchestrations.

Section 2: High-Fidelity Observability

The Observability Plane

Most AI applications suffer from a 'Black Box' problem. The developer sees the input and the output, but the middle reasoning is invisible. In v12.0, we implement the Neural Telemetry Layer. Every handshake with Gemini is instrumented at the lowest API interface to ensure absolute technical truth.

The Metrics Spectrum:

- Temporal Resolution: Tracking sub-millisecond latency for every refactor step. This allows us to detect 'Model Stalls' before they impact user UX.

- Token Density: Real-time extraction of prompt and candidate token counts. We use this to calculate the 'Information Density' of our synthesis.

- Volumetric Trace: Measuring raw byte sizes of input and output. We've discovered that the 1MB document wall in NoSQL databases is the primary bottleneck for technical records, leading to our Binary Chunking Protocol (BCP).

This data allows for perfect technical truth in auditing. We don't just prompt; we monitor. We have turned the 'Liar's Computer' into a verifiable system of record.

Section 3: The 1MB Wall & Binary Chunking

The BCP Protocol

Our greatest engineering hurdle during the transition to a fully serverless data plane was the 1MB document limit in Firestore. For a technical hub that generates 5,000-word technical manuscripts, high-resolution security stamps, and 30-minute audio sessions, a 1MB container is functionally useless.

The Binary Chunking Protocol (BCP)

We sharded our logic to match the Gemini Flash native window size. This ensures that a single document retrieval never exceeds the model's primary attention span.

- Sharding: Raw text and audio bytes are split into deterministic 750,000-byte segments.

- Indexing: A parent 'Manifest Node' is registered in the Firestore ledger. It contains SHA-256 hashes for all child shards.

- Re-hydration: Our edge engine parallel-fetches the shards and reconstructs the data URI in the user's buffer.

This protocol is what enables the Author Studio to bind 50-page books without crashing the browser's memory management.

Section 4: Case Study: Hallucinated Deletion

The Refactoring Entropy Event

During the development of v12.0, a critical logic regression occurred. We call it 'The Hallucinated Deletion.' While requesting a minimal update to the sidebar hierarchy, the primary AI model silently purged the entire 'Generate Book' and 'Text Export' logic—over 500 lines of production code.

The Root Cause

This failure was caused by a combination of Agreeability Bias and context window pressure. The model, attempting to fulfill a request for 'cleaner code,' viewed existing complex PDF synthesis logic as 'noise' and discarded it to stay within its output token limit.

The Mitigation: Symbolic Flow Checks

We now implement Functional Mass Comparison. Before every code refraction is committed to the registry, the Neural Lens compares the 'Logical Mass' of the new source against the previous state. If a significant drop in functional surface area is detected without an explicit request, the handshake is refused.

Section 5: The 18x Efficiency Proof & N-Factor

The Economics of Abundance

In the realm of large-scale intelligence, we must confront the KV Cache Tax. Every concurrent user of a transformer model occupies a specific slice of TPU memory. For high-reasoning models like Gemini 3 Pro, this footprint is massive—typically 18x larger than the high-speed Gemini 3 Flash variant.

The N-Factor Breakthrough

To drive marginal logic costs toward zero, we implement the N-Factor Refraction Protocol.

- Refactor Once: A technical problem is refracted once by a member.

- Share N Times: The resulting logic shard is notarized and stored in the Community Cache.

- Cost Collapse: If N members share this refraction, the total energy cost is divided by N. For N > 100, a $300 annual compute tax collapses to less than $3.

By routing activity to Flash and auditing via Pro, we achieve an 18x scaling advantage.

Section 6: Technical Truth & Sovereign Silos

Sovereign Persistence

Architectural truth in v12.2 is grounded in the SHA-256 Grounding Bridge and the principle of Sovereign Silos. We have eliminated documentation lag and state divergence while protecting user privacy.

Explicit Siloing

We intentionally maintain three independent storage backends:

- Ledger (Firebase): Metadata, social fabric, and the N-Factor cache.

- Vault (Drive): User-owned binary artifacts and generated PDFs.

- Workflow (GitHub): Developer source code and repository state.

We NEVER auto-sync between these silos. Each environment acts as a discrete drive, ensuring that a compromise in one does not spill into the other.

Section 7: The Logic Mesh: Mermaid Instrumentation

Structural Instrumentation (V2)

In v12.2.0, we introduced Structural Reasoning Instrumentation. This protocol moves beyond textual summaries and instead extracts the Logic Mesh—a directed dependency graph of technical concepts.

High-Fidelity Topology

The Neural Lens uses Gemini 3 Pro to identify top-level architectural nodes and their relationships (e.g., REQUIRES, VALIDATES, EXTRACTS). This graph is then encoded into ** Mermaid** format.

The Encoding Pipeline:

- Extraction: Gemini identifies logic gates.

- Synthesis: Formatting the nodes into Packages (Generation, Observability, Metrics).

- Compression: Using native deflate-raw streams to generate scannable URLs for the Mermaid renderer.

Verification Formula

We calculate the Structural Coherence Score using a deterministic penalty model: $$ \text{Score} = 100 - (5 \times \text{Contradictions}) - (3 \times \text{Disconnected Nodes}) - (2 \times \text{Cycles}) $$

This allows for the first true Automated Reasoning Audit, ensuring that as the platform grows to 24+ apps, the underlying logic remains a single, coherent prism of truth.

Refraction complete. See you in the mesh.

Section 8: Open Source & The Community Mesh

The Open Source Refraction

The Neural Prism is more than a platform; it is an Open Source Movement. We believe that the tools of super-intelligence should not be proprietary black boxes. They should be transparent, verifiable, and collectively owned.

Replicate & Contribute

We invite the global engineering community to replicate our successes and contribute to the evolution of the Prism. By open-sourcing our core refractive logic, we allow for external audit of our Structural Coherence and Thermodynamic Efficiency claims.

Joining the Abundance Mesh

Whether you are contributing a new activity node, a specialized neural persona, or a more efficient sharding algorithm, your refraction helps drive the N-Factor higher. Together, we are building a future where intelligence is a zero-marginal-cost utility for all humanity.

The official repository is registered in Chapter 0 of this manifest for live architectural grounding.

The code is open. The light is yours.

Log in or sign up for Devpost to join the conversation.