-

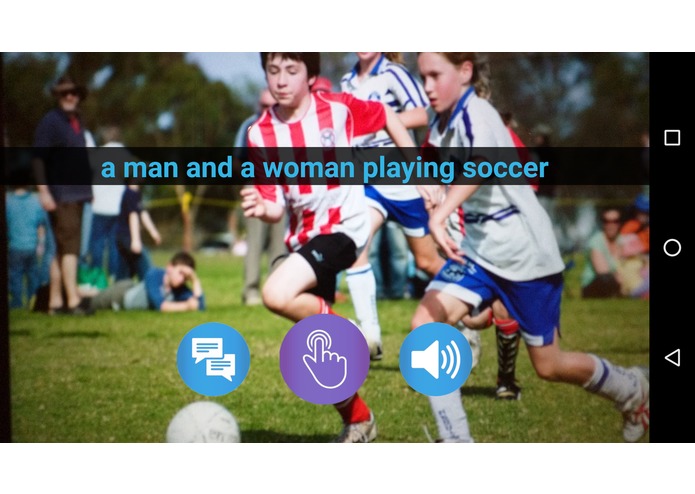

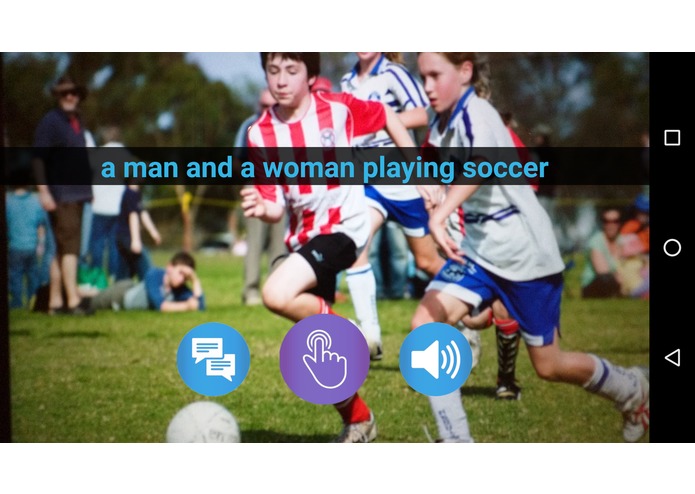

Sample 1 - The text is read out to the visually challenged person

-

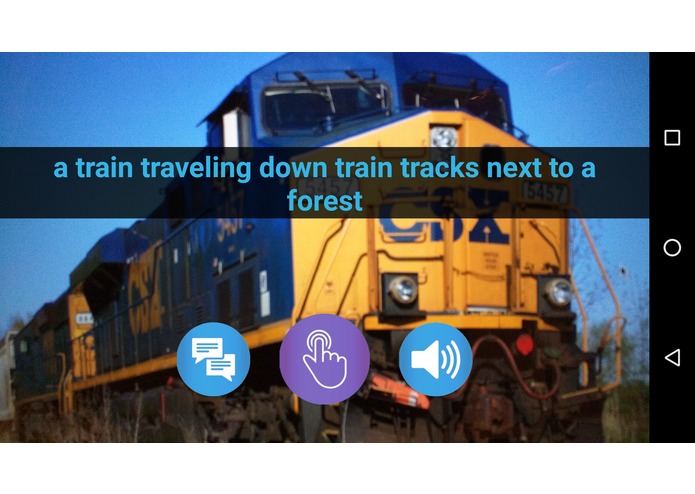

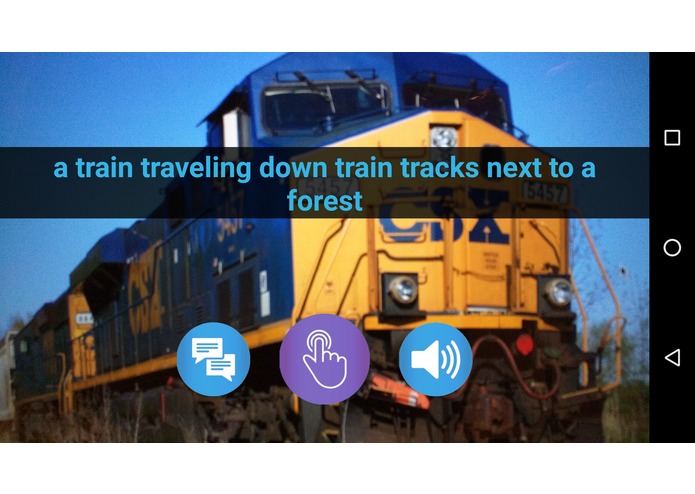

Sample 2 - The text is read out to the visually challenged person

-

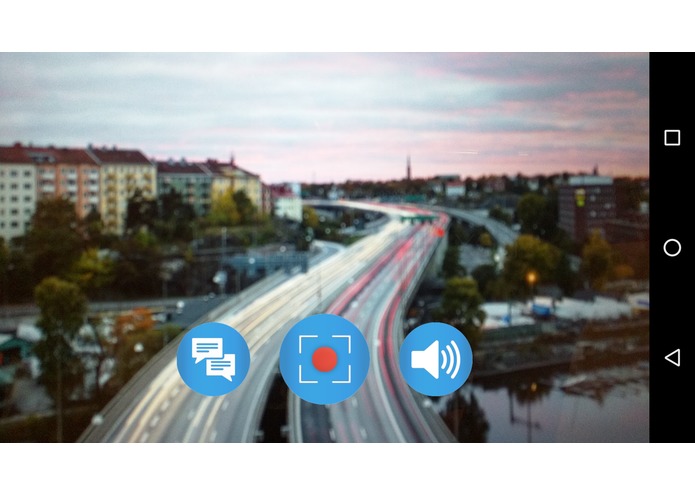

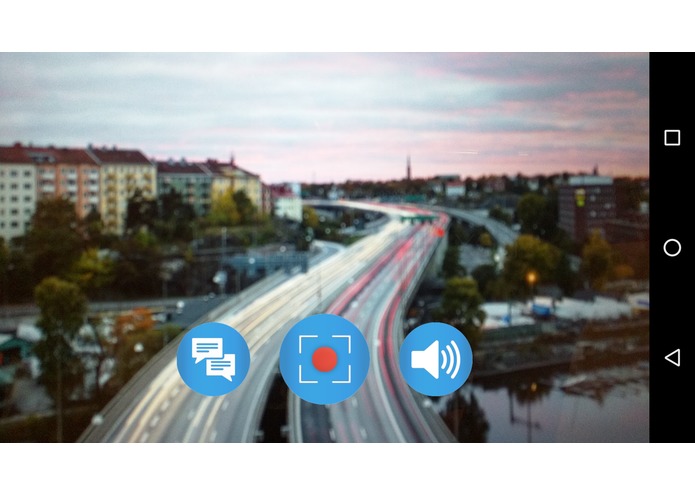

Launcher screen - text captions are enabled, video/real time mode is on and the audio assistant is turned on

-

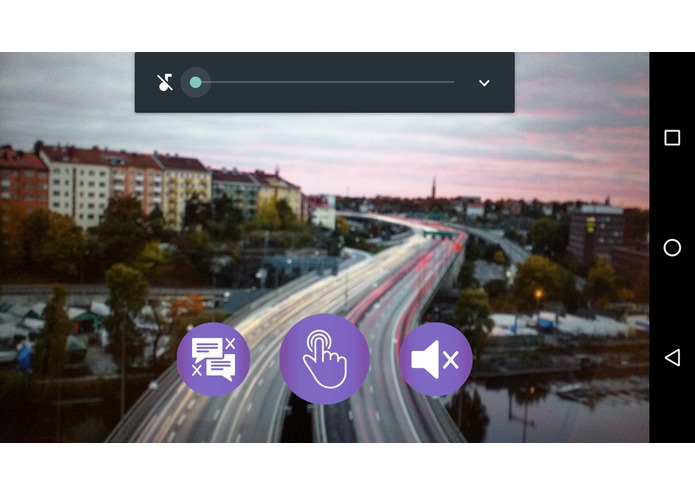

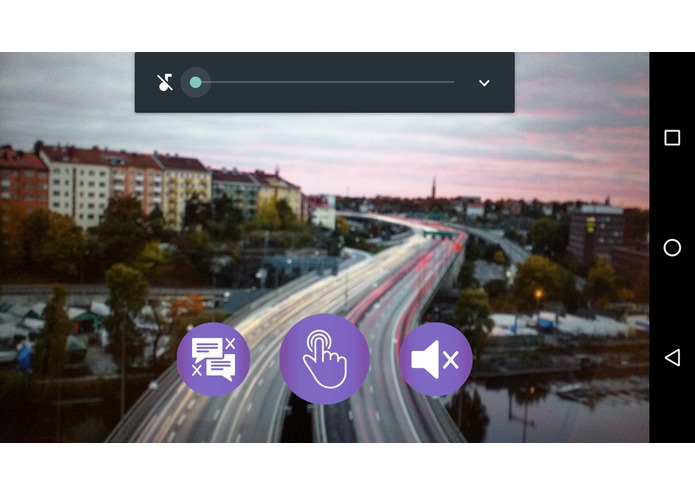

Controls and gestures - Text captions disabled, one-click mode enabled, sound muted (slide up to unmute)

Inspiration

There has been a lot of research in image and video feature extraction and recognition. We decided to leverage some of these advances to build an audio assistant for the visually challenged, giving them a sense of what is happening around them.

What it does

The application, "netra" (Sanskrit: eyes), lets the user operate in two modes. In one-click mode, an audio assistant describes what is currently on the user's screen (the camera view). In real-time mode, the application continuously examines the user's surroundings and notifies the user with audio tags. The user can enable or disable the text captions that appear on the screen. Navigation also supports gestures, which makes the application easier to use for people who are visually challenged.
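As a rough illustration of how the two modes and the caption and mute toggles could interact, here is a minimal sketch in plain Java. Every name in it (`Mode`, `AssistantSettings`, `Announcement`, `Announcer`) is hypothetical and not taken from the netra source, and it assumes that one-click mode yields a full sentence while real-time mode yields a list of tags.

```java
import java.util.List;

/** The two operating modes described above (names are illustrative). */
enum Mode { ONE_CLICK, REAL_TIME }

/** Hypothetical holder for the user-controlled settings. */
final class AssistantSettings {
    Mode mode = Mode.ONE_CLICK;
    boolean captionsEnabled = true;
    boolean muted = false;
}

/** What should be spoken and what should be shown; null means "nothing". */
final class Announcement {
    final String spoken;
    final String caption;

    Announcement(String spoken, String caption) {
        this.spoken = spoken;
        this.caption = caption;
    }
}

final class Announcer {
    /**
     * Picks the sentence in one-click mode or the joined tags in real-time
     * mode, then applies the mute and caption settings.
     */
    static Announcement announce(AssistantSettings s, String sentence, List<String> tags) {
        String text = (s.mode == Mode.ONE_CLICK) ? sentence : String.join(", ", tags);
        String spoken = s.muted ? null : text;
        String caption = s.captionsEnabled ? text : null;
        return new Announcement(spoken, caption);
    }
}
```

In a real application the `spoken` text would be handed to a text-to-speech engine and the `caption` text drawn over the camera preview; both steps are left out here.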

How we built it

We built this as an Android mobile application, using async tasks to run background work. For caption generation (one-click mode) we used the Algomus API, which is based on developments in recurrent neural networks. For tag generation (real-time mode) we used the Clarifai API.
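The background-task pattern mentioned above can be sketched as follows. This is not the project's code: it uses standard `java.util.concurrent` classes instead of Android's async tasks so that it runs on a plain JVM, and the `ImageDescriber` interface is a hypothetical stand-in for a remote captioning or tagging service, whose real request formats are not shown here.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Hypothetical stand-in for a remote captioning or tagging call. */
interface ImageDescriber {
    String describe(byte[] imageBytes) throws Exception;
}

/** Runs descriptions on a worker thread so the UI thread is never blocked. */
final class BackgroundDescriber implements AutoCloseable {
    static final String FALLBACK = "Sorry, the image could not be described.";

    private final ExecutorService worker = Executors.newSingleThreadExecutor();
    private final ImageDescriber describer;

    BackgroundDescriber(ImageDescriber describer) {
        this.describer = describer;
    }

    /** Returns immediately; the future completes once the description is ready. */
    CompletableFuture<String> describeAsync(byte[] imageBytes) {
        return CompletableFuture.supplyAsync(() -> {
            try {
                return describer.describe(imageBytes);
            } catch (Exception e) {
                // Network or service failure: fall back to a spoken error message.
                return FALLBACK;
            }
        }, worker);
    }

    @Override
    public void close() {
        worker.shutdown();
    }
}
```

A caller would attach a callback, for example `describeAsync(frame).thenAccept(text -> speak(text))`, where `speak` is again a hypothetical hook into a text-to-speech engine. A single worker thread keeps requests in order, which seems a reasonable default for real-time mode, though a real application might instead drop stale frames.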

Accomplishments that we're proud of

Securing the 1st place at USC's ACM Mobile Hackathon for this project.

What's next for netra

Release a beta version soon
