Nebula Blue logo


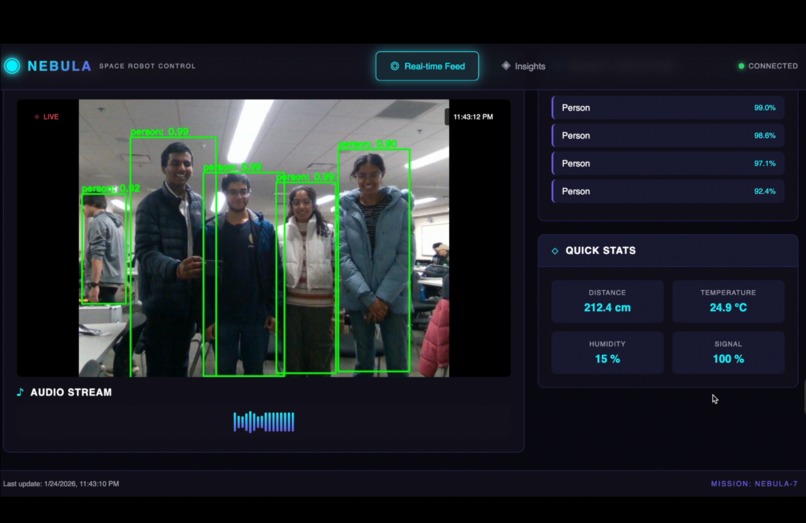

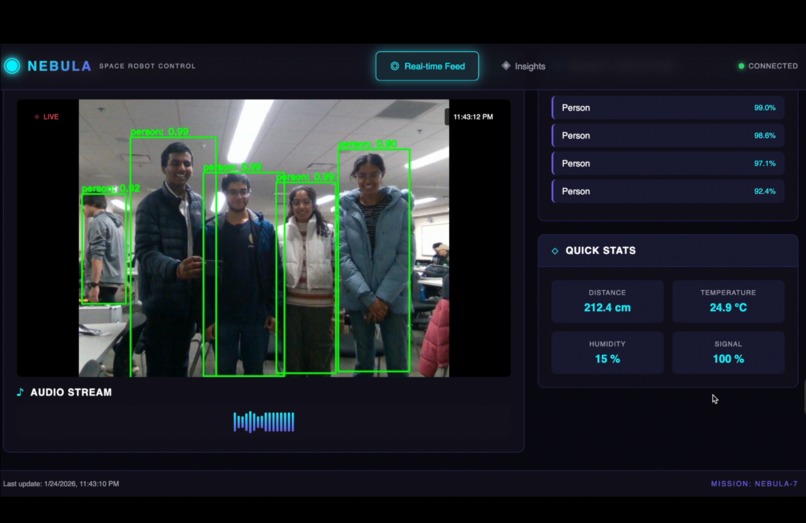

Nebula Blue interface showing the robot’s camera detecting all four of our team members as people in its environment.


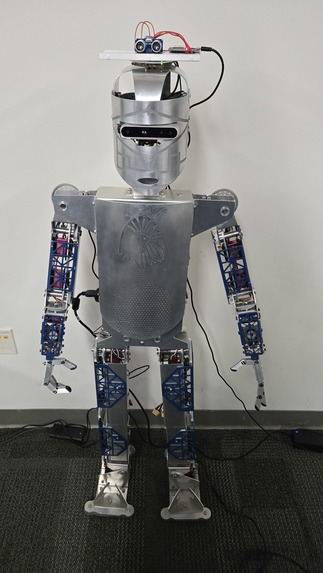

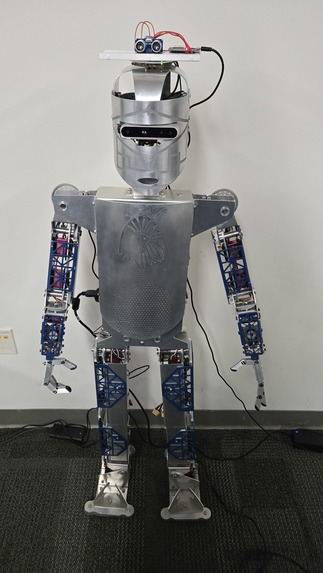

Zeus2Q, the STW robot we used for this project


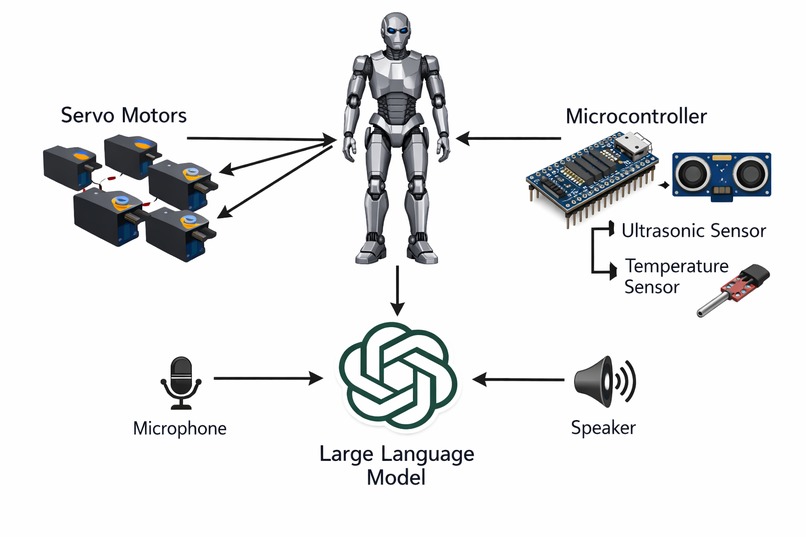

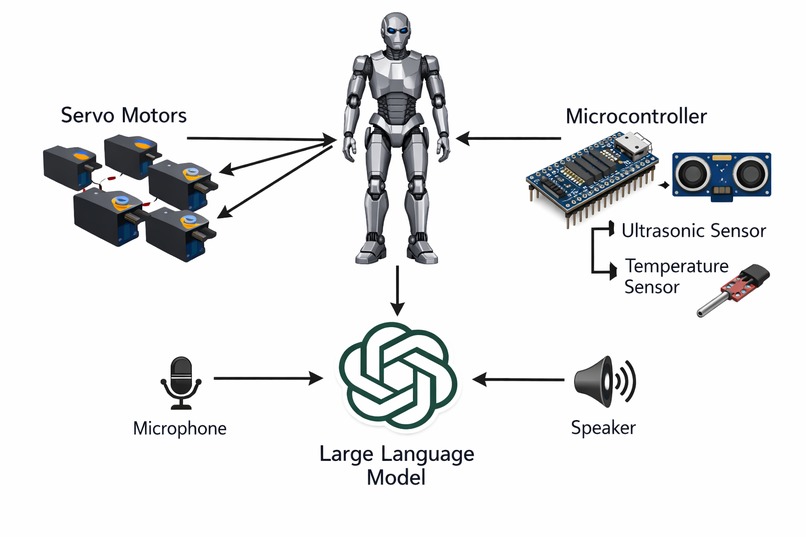

Diagram of the various components of the Nebula Blue robotic system


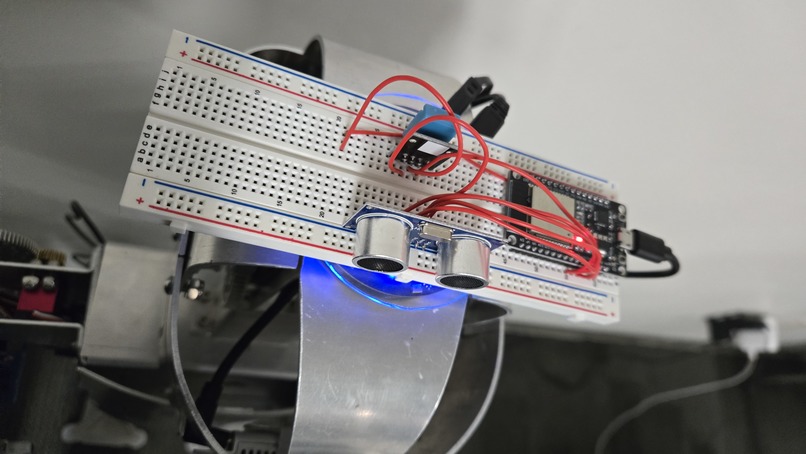

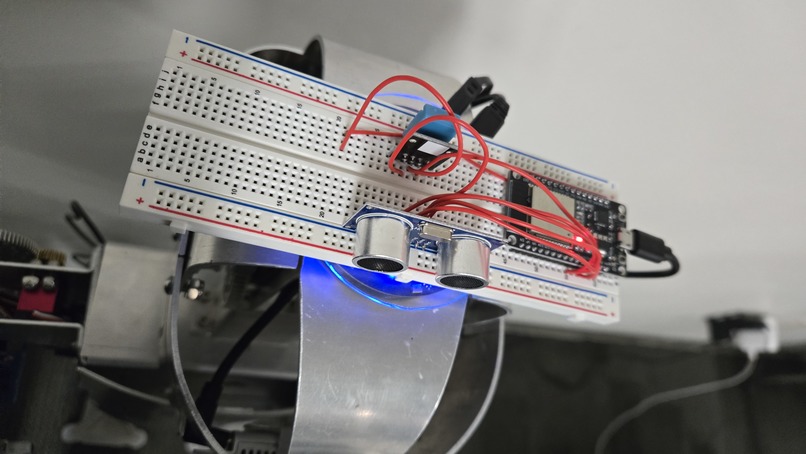

The board that houses Nebula Blue's ultrasonic and temperature sensors, along with the ESP32 microcontroller that interfaces with them

Inspiration

We were inspired by an idea from the CEO and founder of System Technology Works: using humanoid robots to help the elderly with everyday tasks and to keep them safe by acting as caregivers. We wanted to use and modify these robots to help astronauts with similar chores in space. In the same way, Nebula Blue keeps astronauts safe by monitoring the temperature and humidity of its surroundings, as well as nearby obstacles and other objects. Both astronauts and the elderly often experience loneliness, which motivated us to develop a robot that could address that as well.

What it does

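Nebula Blue interacts with an astronaut through verbal communication, human-like gestures, and electronic sensors. It can complete several tasks that make an astronaut's work easier and help prevent problems, such as announcing the objects around it, measuring the distance to the closest object, and checking whether the temperature and humidity are in a safe range.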

How we built it

We built our project in Python, which controls the robot’s movements and implements the logic behind its automations. Incoming audio from a microphone is sent to OpenAI’s Whisper for speech recognition and transcription. The code then checks the transcript for certain keywords and performs the matching task. If the audio input does not include any of those keywords, OpenAI generates another appropriate response instead.

The robot also has an Intel RealSense camera whose feed is run through a YOLOv4 machine learning model to detect objects in its view. This allows it to announce the objects in front of it, as well as the objects around it (by turning its head all the way around). We also added an ultrasonic (sonar) sensor to the robot’s head so it can measure its distance from the closest object. In addition, we used humidity and temperature sensors to check whether conditions are within the safe range for humans. All of the sensor information, along with the camera feed with bounding boxes and confidence values, is displayed in a web interface for easy access by astronauts and/or ground control.
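The keyword routing described above can be sketched roughly as follows. This is an illustrative sketch, not the project's actual code: the keywords, the handler functions, and `fallback_reply` are all hypothetical stand-ins, and the transcript is assumed to arrive as a plain string from the speech-recognition step.

```python
from typing import Callable, Dict


# Hypothetical handlers; the real robot would move, speak, or read sensors here.
def describe_view() -> str:
    return "describing objects in view"


def report_distance() -> str:
    return "reporting distance to closest object"


def report_climate() -> str:
    return "reporting temperature and humidity"


# Hypothetical keyword table; the write-up does not list the actual keywords.
KEYWORD_ACTIONS: Dict[str, Callable[[], str]] = {
    "see": describe_view,
    "distance": report_distance,
    "temperature": report_climate,
    "humidity": report_climate,
}


def fallback_reply(transcript: str) -> str:
    # Placeholder for the conversational fallback (the project uses OpenAI here).
    return f"fallback reply to: {transcript}"


def route(transcript: str) -> str:
    """Run the first matching keyword action, else fall back to a general reply."""
    for raw_word in transcript.lower().split():
        word = raw_word.strip(".,!?")  # drop punctuation attached to the word
        action = KEYWORD_ACTIONS.get(word)
        if action is not None:
            return action()
    return fallback_reply(transcript)
```

For example, `route("What do you see?")` runs the hypothetical `describe_view` handler, while a transcript with no known keyword goes to the fallback.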

Challenges we ran into

Our main challenge was working out the logic for the code, especially for the voice keywords the robot had to follow. Because the robot had to switch constantly between listening to audio input, moving different parts, and speaking, the code ended up being very complicated. In particular, the same task had to be implemented in several different ways depending on the order of tasks.

Accomplishments that we're proud of

We are proud of getting the sensors to work and integrating them properly into our main code base. We are also proud of getting image detection to work properly, using OpenCV to detect objects and draw bounding boxes around them in real time.
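As a rough illustration of the step between detection and drawing, the sketch below turns detections into pixel rectangles and text labels. It assumes the detector returns normalized center-format boxes `(cx, cy, w, h)`, as raw YOLO outputs do; the `Detection` type, the `min_conf` threshold of 0.5, and the function names are hypothetical and not taken from our code.

```python
from typing import List, NamedTuple, Tuple


class Detection(NamedTuple):
    label: str
    confidence: float
    box: Tuple[float, float, float, float]  # (cx, cy, w, h), each normalized to 0..1


def to_pixel_corners(
    box: Tuple[float, float, float, float], frame_w: int, frame_h: int
) -> Tuple[int, int, int, int]:
    """Convert a normalized center-format box to (left, top, right, bottom) pixels."""
    cx, cy, w, h = box
    left = int((cx - w / 2) * frame_w)
    top = int((cy - h / 2) * frame_h)
    right = int((cx + w / 2) * frame_w)
    bottom = int((cy + h / 2) * frame_h)
    # Clamp so the rectangle stays inside the frame.
    return (
        max(0, left),
        max(0, top),
        min(frame_w - 1, right),
        min(frame_h - 1, bottom),
    )


def drawable(
    detections: List[Detection], frame_w: int, frame_h: int, min_conf: float = 0.5
) -> List[Tuple[str, Tuple[int, int, int, int]]]:
    """Keep confident detections and pair each caption with its pixel rectangle."""
    return [
        (f"{d.label} {d.confidence:.2f}", to_pixel_corners(d.box, frame_w, frame_h))
        for d in detections
        if d.confidence >= min_conf
    ]
```

Each resulting rectangle and caption could then be passed to OpenCV's `cv2.rectangle` and `cv2.putText` for every frame.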

What we learned

We learned a lot about combining multiple parts of the robot into a cohesive project. For example, we had several types of input: a microphone, a camera, an ultrasonic sensor, and a DHT11 sensor. We figured out how to combine all of this information in a sensible way so the robot could complete all of its tasks.
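One way to picture this combination is a single snapshot object that gathers the latest readings and is checked against safe ranges. The sketch below is illustrative only: the thresholds are assumed values chosen for the example (the write-up does not state the ones we used), and the `Snapshot` fields and `warnings_for` function are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List

# Assumed limits for illustration only; not the project's actual thresholds.
TEMP_RANGE_C = (18.0, 27.0)
HUMIDITY_RANGE_PCT = (30.0, 70.0)
MIN_CLEARANCE_CM = 30.0


@dataclass
class Snapshot:
    temperature_c: float  # from the temperature/humidity sensor
    humidity_pct: float   # from the temperature/humidity sensor
    distance_cm: float    # from the ultrasonic sensor
    detected_labels: List[str] = field(default_factory=list)  # from the camera


def warnings_for(s: Snapshot) -> List[str]:
    """Return a human-readable warning for every reading outside its safe range."""
    warnings: List[str] = []
    if not TEMP_RANGE_C[0] <= s.temperature_c <= TEMP_RANGE_C[1]:
        warnings.append(f"temperature out of range: {s.temperature_c:.1f} C")
    if not HUMIDITY_RANGE_PCT[0] <= s.humidity_pct <= HUMIDITY_RANGE_PCT[1]:
        warnings.append(f"humidity out of range: {s.humidity_pct:.1f} %")
    if s.distance_cm < MIN_CLEARANCE_CM:
        warnings.append(f"obstacle close: {s.distance_cm:.1f} cm")
    return warnings
```

A snapshot like this could feed both the robot's spoken warnings and the web interface, so every consumer sees the same readings.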

What's next for Nebula: Astronaut's Best Friend

Next, we hope to get the robot to walk properly. We started working on this functionality but quickly learned that the problem was much bigger than we expected. We have begun the necessary steps and hope to continue until the robot can take multiple small steps in a row.
