
Finished mobile telepresence robot.


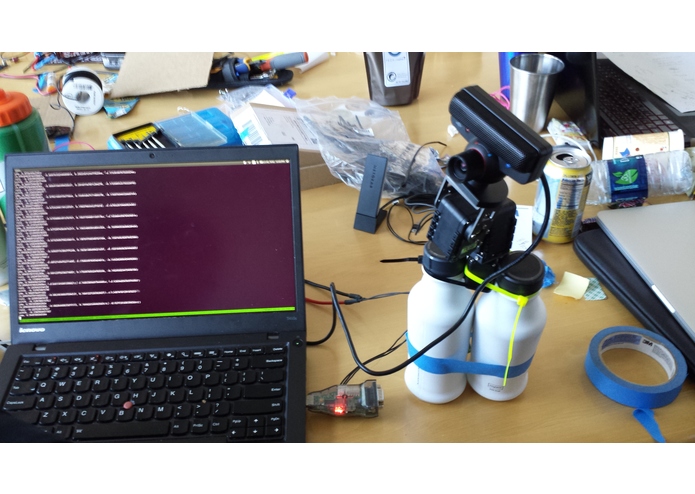

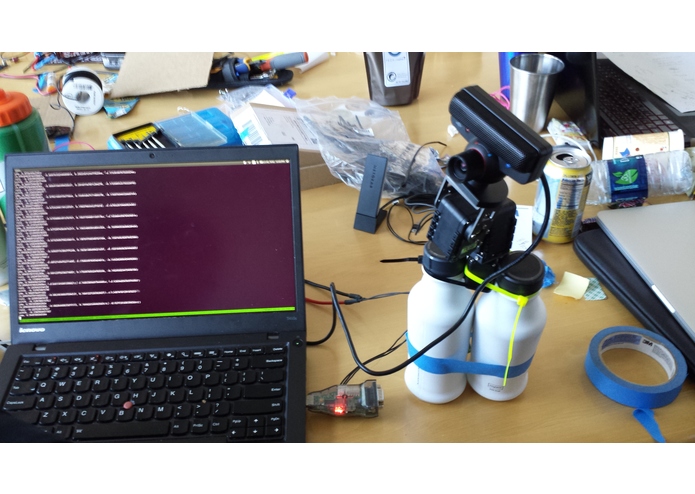

Gimbal testing


Brent controls the camera by moving his head while wearing the Cardboard.


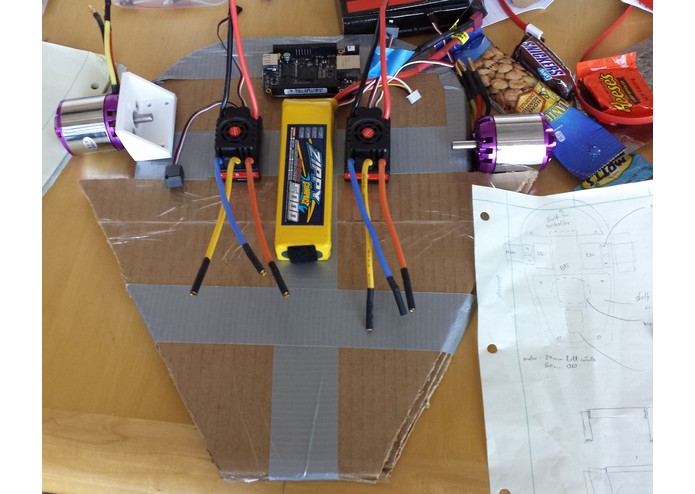

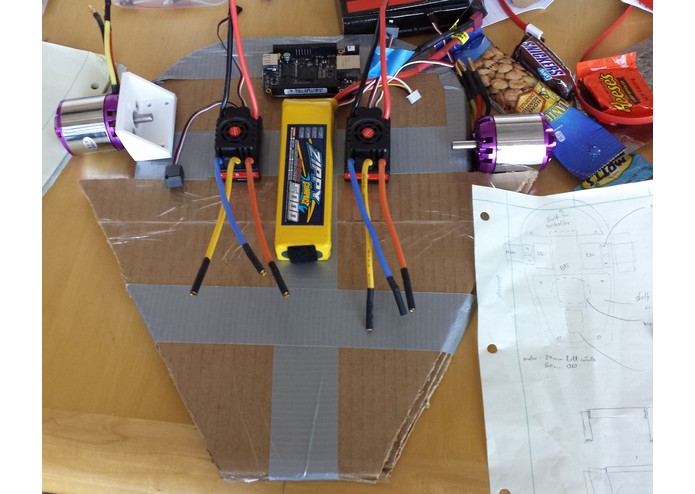

The layered cardboard chassis was carefully planned to provide ample room for our electronic systems.


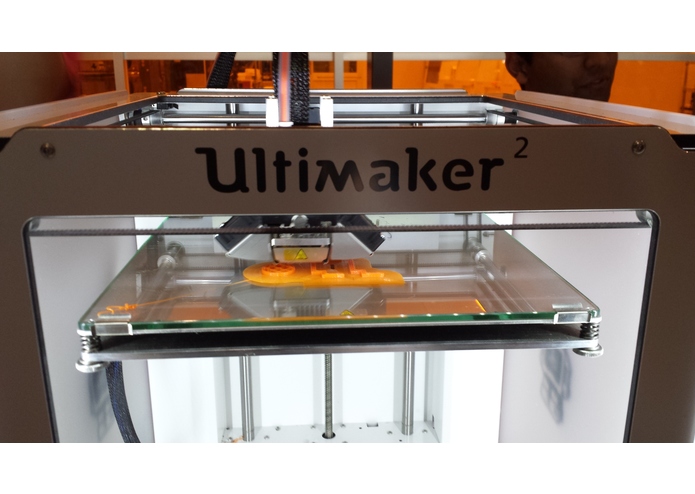

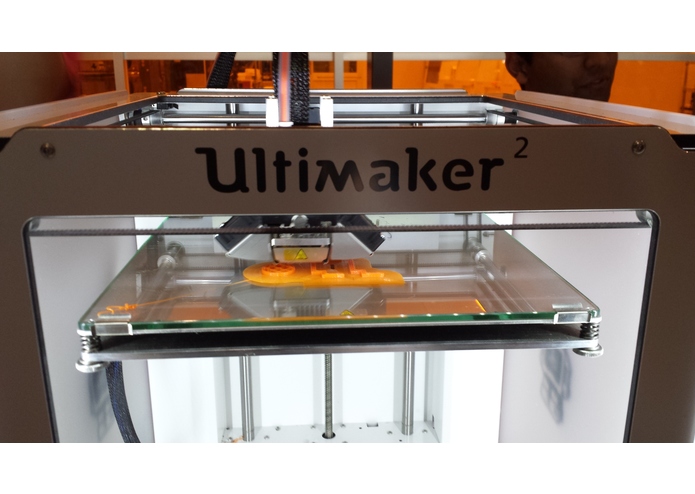

Courtesy of the UIUC MakerLab and other hackers, we were able to print critical motor and servo mounting hardware.

Inspiration

We're a group of high school friends who attend universities around the country. When we found ourselves together again, we decided to build a mobile telepresence robot that lets us interact with faraway environments and maintain interpersonal relationships.

What it does

Users see the world from the perspective of the robot through a Google Cardboard. The onboard gimbal reorients a camera based on user head movement, allowing a full 360-degree view of the surroundings. The user commands the robot to move to new locations using RC-style controls in a phone app.

These capabilities give the robot a multitude of possible use cases. It could be used to save lives by searching for people trapped in a house fire, or to evaluate a military target, using its small profile and 30 mph top speed to evade hostile threats. It could also let remote users explore faraway destinations, bringing people from around the world together.

How we built it

Two mobile applications, compatible with both iOS and Android devices, give users the ability to fully explore and interact with the world around them.

The VR app reads raw IMU data and streams it back to the robot via a WebSocket, while the robot streams video from its camera to the app.
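As a rough illustration of the IMU half of that link, here is a minimal sketch of how one raw reading could be packed into a text frame and unpacked on the robot. The JSON layout, the field names `t`, `gyro`, and `accel`, and both function names are assumptions made for this example; the writeup does not specify the actual message format.

```python
import json
import time


def encode_imu_sample(gyro, accel, timestamp=None):
    """Pack one raw IMU reading into a JSON text frame (hypothetical format)."""
    if timestamp is None:
        timestamp = time.time()
    return json.dumps({
        "t": timestamp,        # seconds, sender clock
        "gyro": list(gyro),    # angular rate per axis
        "accel": list(accel),  # acceleration per axis
    })


def decode_imu_sample(frame):
    """Unpack a frame produced by encode_imu_sample on the receiving side."""
    msg = json.loads(frame)
    return msg["t"], tuple(msg["gyro"]), tuple(msg["accel"])
```

Each frame would be sent as one WebSocket text message. Carrying a timestamp in the frame lets the receiver compute the time step between samples even if messages arrive unevenly.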

The gimbal consists of servos that provide 3 degrees of freedom. IMU data from the phone is passed through a complementary filter. The filtered attitude is passed to the arm controller as a quaternion. The controller calculates the rotation error using a quaternion product, and that error is ultimately converted to individual servo setpoints.
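The rotation-error step can be sketched as follows. This is a minimal example under stated assumptions, not our exact controller: it assumes unit quaternions in `(w, x, y, z)` order, defines the error as target times the conjugate of current (one common convention), and reports the error as ZYX roll, pitch, and yaw angles. The function names are hypothetical, and mapping these angles onto specific servos depends on the gimbal geometry.

```python
import math


def quat_conjugate(q):
    """Conjugate of a quaternion; equals the inverse for unit quaternions."""
    w, x, y, z = q
    return (w, -x, -y, -z)


def quat_multiply(a, b):
    """Hamilton product a * b, with quaternions as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def rotation_error(q_target, q_current):
    """Rotation that takes the current attitude to the target attitude."""
    q_err = quat_multiply(q_target, quat_conjugate(q_current))
    # q and -q describe the same rotation; keep w >= 0 to take the short way.
    if q_err[0] < 0:
        q_err = tuple(-c for c in q_err)
    return q_err


def error_angles_deg(q_target, q_current):
    """Return the error as (roll, pitch, yaw) in degrees, ZYX convention."""
    w, x, y, z = rotation_error(q_target, q_current)
    roll = math.atan2(2 * (w * x + y * z), 1 - 2 * (x * x + y * y))
    # Clamp to guard against values slightly outside [-1, 1] from rounding.
    pitch = math.asin(max(-1.0, min(1.0, 2 * (w * y - z * x))))
    yaw = math.atan2(2 * (w * z + x * y), 1 - 2 * (y * y + z * z))
    return tuple(math.degrees(v) for v in (roll, pitch, yaw))
```

For example, a target attitude rotated 90 degrees about the vertical axis relative to the current one yields an error of roughly zero roll, zero pitch, and 90 degrees of yaw.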

The second application sends desired linear and angular velocities to the robot. On the robot, a BeagleBone Black embedded Linux system receives this information and sets the motor duty cycles accordingly.
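To show how a velocity command can turn into duty cycles, here is a minimal sketch that assumes a differential-drive layout with one motor per side. The drive layout, the `track_width` value, and the function name are assumptions for illustration; `max_wheel_speed` is set to about 13.4 m/s, which is roughly the 30 mph top speed mentioned above.

```python
def velocities_to_duty(linear, angular, track_width=0.3, max_wheel_speed=13.4):
    """Map body velocities (m/s, rad/s) to signed (left, right) duty in [-1, 1].

    Assumes a differential drive; track_width (m) is a placeholder value.
    """
    # Each side's speed is the body speed plus or minus the turning component.
    left = linear - angular * track_width / 2.0
    right = linear + angular * track_width / 2.0

    # If either side would saturate, scale both so the turn ratio is preserved.
    peak = max(abs(left), abs(right))
    if peak > max_wheel_speed:
        scale = max_wheel_speed / peak
        left *= scale
        right *= scale

    return left / max_wheel_speed, right / max_wheel_speed
```

The sign of each value would select motor direction and the magnitude would set the PWM duty cycle. Scaling both sides together, rather than clipping each one separately, keeps the robot turning at the commanded ratio when the request exceeds what the motors can deliver.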

The robot structure is made of tape, glue, cardboard, and 3D-printed mounting brackets. By gluing together layers of cardboard with their grains running in perpendicular directions, we were able to create a rigid and strong structure at low cost. CAD of the robot was completed in SolidWorks, allowing for easy implementation of complicated geometry.

Challenges we ran into

One problem was that the camera would sometimes skip over an angle range instead of smoothly panning as expected. This was because one of the angle parameters in the prebuilt libraries we initially used was limited to a range of 0-90 degrees, creating a discontinuity in the quaternion. We fixed this by working from the raw sensor values instead, fusing them ourselves with a complementary filter to get the reorientation response we wanted. We also wrote a calibration algorithm to take care of the effects of drift.
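For readers unfamiliar with the technique, a single-axis complementary filter can be sketched as below. This is a generic textbook form, not our exact code: the blend factor `alpha=0.98`, the axis conventions in `accel_pitch_roll`, and both function names are assumptions for illustration.

```python
import math


def accel_pitch_roll(ax, ay, az):
    """Estimate pitch and roll (radians) from the measured gravity vector."""
    pitch = math.atan2(-ax, math.hypot(ay, az))
    roll = math.atan2(ay, az)
    return pitch, roll


def complementary_filter(angle, gyro_rate, accel_angle, dt, alpha=0.98):
    """Update one angle estimate from a gyro rate and an accelerometer angle.

    The integrated gyro term tracks fast motion; the accelerometer term
    slowly pulls the estimate back so gyro drift does not accumulate.
    """
    return alpha * (angle + gyro_rate * dt) + (1.0 - alpha) * accel_angle
```

Calling `complementary_filter` once per sample for pitch and once for roll gives a smooth estimate with no artificial angle limits. An accelerometer cannot observe rotation about the gravity axis, so yaw needs a separate reference or a drift correction step.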

Accomplishments that we're proud of

We dreamed big with this hardware-intensive project and pushed our personal limits. Despite being unable to obtain some components which would have made our lives easier, we tackled every problem head on and made the best of what we had.

What we learned

We learned that hardware hacks are hard, but the satisfaction of having a tangible product is pretty great.

What's next for Natural_Telepresence

We're planning to implement autonomous features to make the robot less demanding to drive. We will also consider using higher quality materials and better construction techniques to improve robustness.

Built With

- 3dprinting

- battery

- beaglebone

- cardboard

- google-cardboard

- javascript

- motor

- python

- ros

- rubber

- solidworks
