-

-

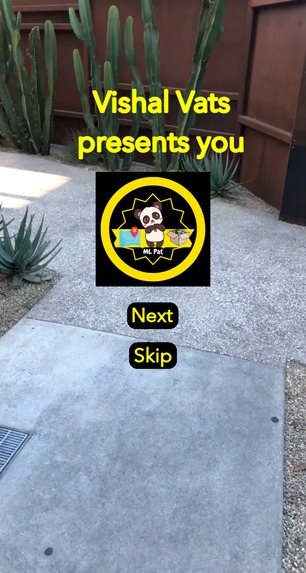

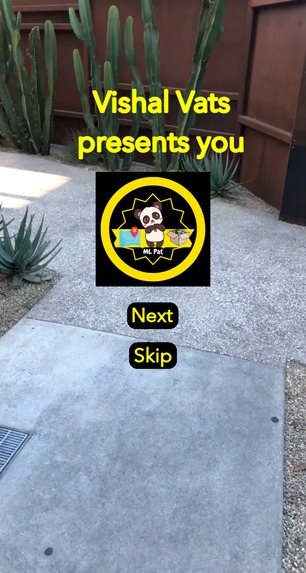

Landing UI for the lens

-

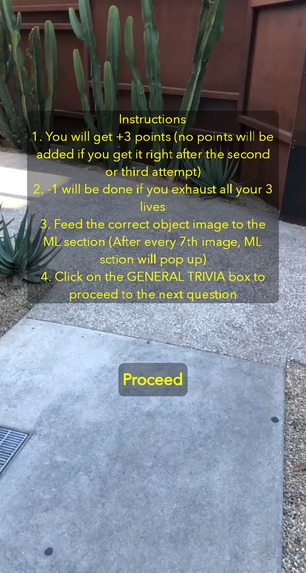

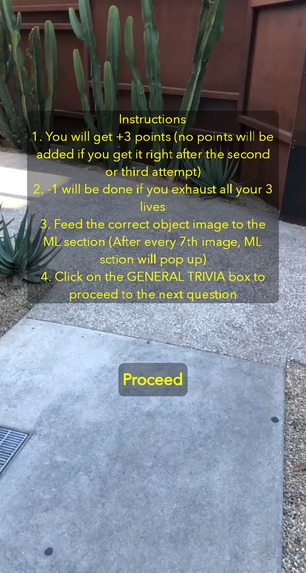

Instructions panel

-

ML model asks for a particular class object

-

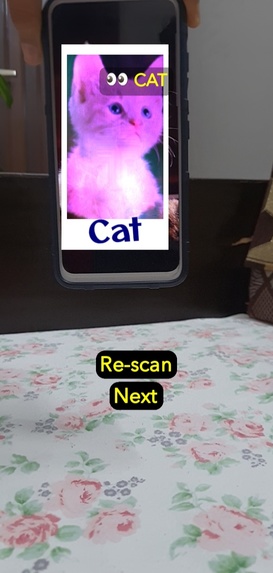

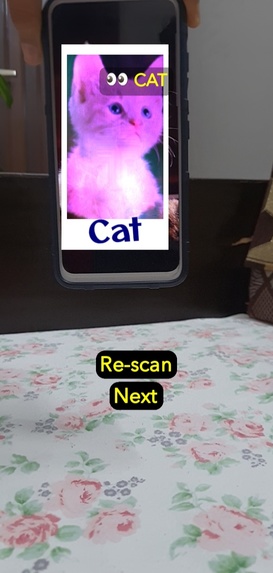

YOLO model recognizing a CAT

-

YOLO model recognizing a CUP

-

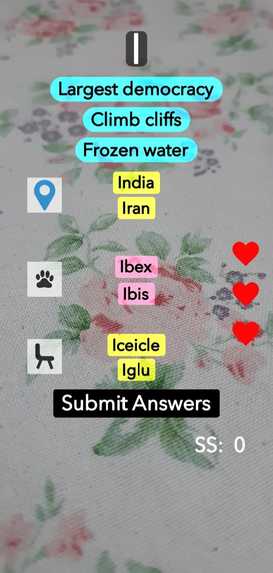

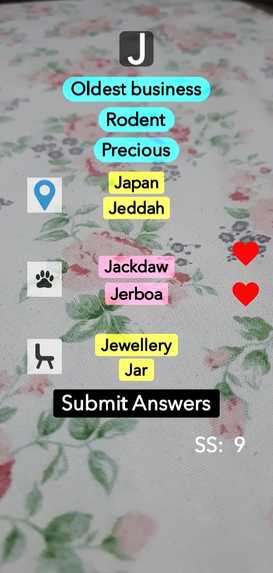

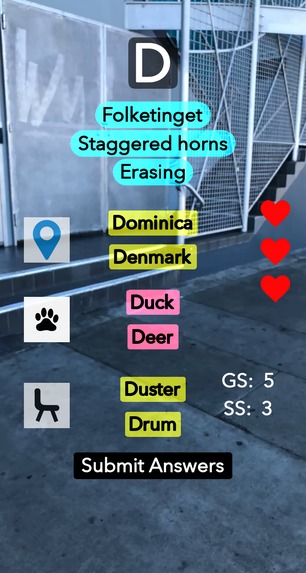

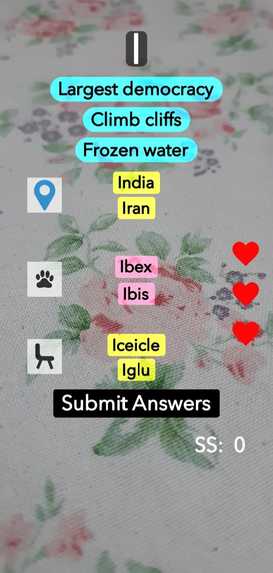

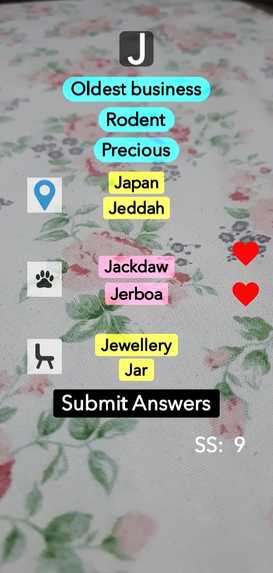

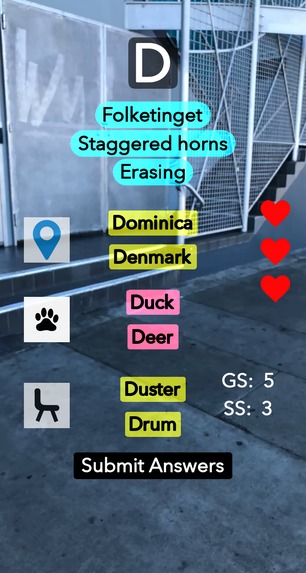

P.A.T section displaying questions

-

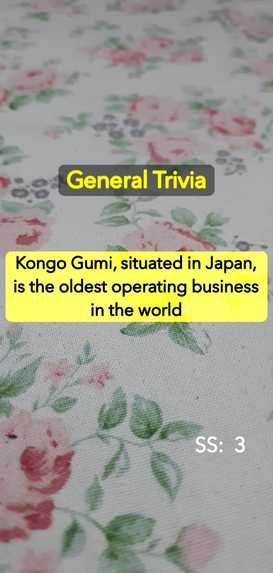

General Trivia question

-

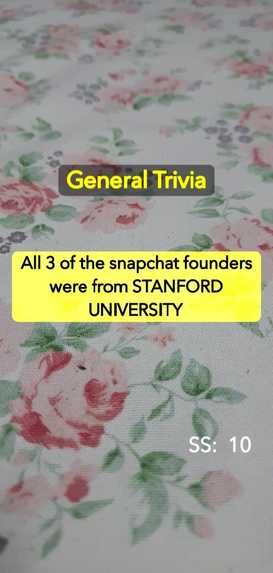

General trivia question containing snapchat facts

-

Score & life being reduced after a wrong attempt

-

Persistent storage cloud variant

-

Persistent cloud storage variant showing global & session scores

-

Try out the lens by using the snapcode

Inspiration

When I first installed and used Snapchat, I was amazed by the sheer number of lenses made and used every day. They ranged from lenses built on materials and textures to lenses using Connected Lens features. But I noticed a missing piece in the puzzle: no lens harnessed the power of machine learning and combined it with a game, so that the user not only breaks away from the endless lens carousel but also picks up some knowledge along the way, all with a beautiful UI and UX. With these thoughts, I started making ML PAT: The OG Lens.

What it does

Let's break down the lens's title. ML is there because the lens uses a multi-class object detection (YOLO) model, and PAT introduces the game being played. As children, many of us played Name, Place, Animal & Thing, and this lens is a digital replica of that game. I dropped the Name category because it is ambiguous: places, animals and things are recognized globally, whereas names vary from region to region. So, to make sure the lens evaluates every user on the same metric, I left the Name field out. I thoroughly enjoyed giving this age-old game a digital revival, and considering the restricted, isolated development environment of Lens Studio, I think I did a great job.

Read the following points to see what it does:

1️⃣ Whenever a user opens the lens, the introductory landing UI pops up with two buttons: Next and Skip. Next takes the user to the instructions page, whereas Skip takes the user straight to the game.

2️⃣ The instructions page tells the user about the game and explains the scoring pattern, which is as follows:

a. +3 is awarded for each correct answer cycle if the user has not lost any life. (What is a correct answer cycle? Don't worry, I discuss it in a later section.)

b. Each life lost reduces that award by one point: if the user answers correctly after losing one life, +2 is awarded, and after losing 2 lives, +1 is awarded.

c. 1 point is deducted from the session score for each wrong answer.

3️⃣ If the Skip button is pressed, the instructions page is bypassed and the user enters the game directly.

4️⃣ On the initial load, the ML section pops up with a prompt such as 👀 Cat. The eyes tell the user that the lens expects them to point at a particular class of object, one of the 7 classes (car, cup, cat, dog, plant, tv, bottle) that the TFLite model can detect.

5️⃣ The ML section has Scan and Re-scan buttons. For the first scan, the Scan button uses the world/face camera to detect the object in the texture you are pointing at. If the target class is not found in that camera input, the user has to use the Re-scan button to take further snaps until the desired object is found.

6️⃣ After successfully providing the object to the ML section, the user clicks the Next button to enter the PAT (place, animal, thing) section. There are plenty of questions for this section, and this is how it works:

a. A random letter is chosen from an array and displayed at the top of the screen. Say the chosen letter is A: below the letter there are hints for the PAT questions, and the user has to choose from the 2 options accordingly.

b. I have ensured that the user can't submit before selecting an answer for all 3 categories. Once the choices are made and the Submit Answer button is clicked, the answers are checked. (There are separate question objects for each of the 3 categories, that is, for P, A and T.)

c. If the selected answers are correct, a clapping sound is played and the session score increases. If a wrong choice was made, a buzzer sound is played and a life and score are deducted. This way the user gets audio feedback for every response.

d. Earlier I mentioned a complete game cycle. By that I meant that after every 7th question, the user has to pass through the ML section again. That is, when first opening the lens, the user is prompted to provide the object required by the ML model, and then after every 7th question the user has to redo the task. (So every 8th iteration starts a new complete game cycle: providing an ML input and then playing the PAT section.) I chose this interval because the ML model isn't 100% accurate and I don't want the user to lose all their progress to a mere AI error. This number of iterations keeps the game fair and square for the players.

7️⃣ The session score is displayed constantly on the right side and is updated with every mistake and correct response.
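The scoring rules and the submit check from the steps above can be sketched in plain JavaScript. This is only an illustration, not the lens's actual script: `START_LIVES`, the function names and the shape of the state object are assumptions, and the write-up does not say what happens once every life is lost, so the sketch simply stops at zero.

```javascript
// Assumption: the player starts with 3 lives (the rules only mention +3, +2 and +1).
const START_LIVES = 3;

// Points for a correct answer: +3 with no lives lost, +2 after one, +1 after two.
function pointsForCorrect(livesLost) {
  return Math.max(START_LIVES - livesLost, 0);
}

// Submitting is only allowed once place, animal and thing each have a choice.
function canSubmit(selections) {
  return ["place", "animal", "thing"].every(function (key) {
    return selections[key] !== undefined && selections[key] !== null;
  });
}

// Returns a new state after one submitted answer; the input is not mutated.
function applyAnswer(state, isCorrect) {
  if (isCorrect) {
    const livesLost = START_LIVES - state.lives;
    return { score: state.score + pointsForCorrect(livesLost), lives: state.lives };
  }
  // A wrong answer costs one point and one life.
  return { score: state.score - 1, lives: Math.max(state.lives - 1, 0) };
}

// Example run: correct, wrong, correct.
let session = { score: 0, lives: START_LIVES };
session = applyAnswer(session, true);   // +3
session = applyAnswer(session, false);  // -1 and one life lost
session = applyAnswer(session, true);   // +2, because one life is gone
console.log(session); // { score: 4, lives: 2 }
```

Inside Lens Studio, `print` would take the place of `console.log`.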

How I built it

I bet everyone will agree that Lens Studio can be a bit overwhelming at first glance, as there are tons of panels, asset resources and much more. So I started from scratch: I began using the components API, read the documentation and resources, and first drew up the skeleton of what the PAT section should look like. Because the game involves a lot of UI manipulation and logical checks, I used the Behavior script so that I could bind the required functions to the components. I bound a total of 20 script components to the Behavior script to achieve the final outcome.

It was great to use plain JS to make the entire game, as it helped me bring a lot of JS topics together, and I relied on careful program logic all the way to overcome the difficulties. I programmed and laid out every part of the PAT game.

After the PAT section was done, I moved on to attaching the ML model to it. For this, I used the template for multi-object detection. The template had dozens of files looking after the ML model, but since I had already made the PAT section, I was confident in my abilities and chose to customize the template to my needs. First of all, I changed the model so that it was no longer auto-running for every instance of processing, because I needed it only at specific points in the game. That was a tough task: I went through each file, picked out the data points of interest, and turned them into global variables so that they could be modified from any script. Whether it was removing the bounding box from the screen or choosing a random class from the labels, I can proudly say that I finally achieved what I was looking for.
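The idea of running detection only at specific points, and of picking a random class from the labels, can be sketched as follows. It is a hedged illustration in plain JavaScript that does not touch the Lens Studio template: the helper names, the `{ label, score }` detection shape, the `minScore` threshold and the reading of "every 7th question" as `questionsAnswered % 7 === 0` are all assumptions.

```javascript
// The 7 classes listed in the write-up.
const LABELS = ["car", "cup", "cat", "dog", "plant", "tv", "bottle"];

// Assumption: a scan is required before question 1, 8, 15, and so on.
const QUESTIONS_PER_CYCLE = 7;

// Pick the class the player must find. `random` (0 <= random < 1) is
// injectable so the choice can be made deterministic in tests.
function pickTargetClass(labels, random) {
  const r = random === undefined ? Math.random() : random;
  return labels[Math.floor(r * labels.length)];
}

// True when the player has to pass the ML section before the next question.
function needsScan(questionsAnswered) {
  return questionsAnswered % QUESTIONS_PER_CYCLE === 0;
}

// `detections` is assumed to be the result of ONE model run triggered by the
// Scan or Re-scan button, as a list of { label, score } objects.
function targetFound(detections, target, minScore) {
  return detections.some(function (d) {
    return d.label === target && d.score >= minScore;
  });
}

// Example: the lens asks for a cat and a single scan returns two detections.
const target = pickTargetClass(LABELS, 0.3);
const scanResult = [
  { label: "cup", score: 0.91 },
  { label: "cat", score: 0.64 }
];
console.log(target, targetFound(scanResult, target, 0.5));
```

Keeping the gate (`needsScan`) separate from the check (`targetFound`) means the model only has to run when a button press asks for it, which is the behavior described above.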

Note: The maximum score a player can achieve, if all the ML inputs and PAT questions are answered correctly, is 135. (Try the lens yourself and see if you can get there in a single go.)

Challenges I ran into

I guess everyone reading this paragraph will be startled. After I finished the PAT section, I thought of adding persistent cloud storage to the game, and after 3 days of toil I got it working fine. But when I tried to integrate the ML model, it threw an error: the ML model and persistent cloud storage didn't work together, as both are resource-intensive tasks and I could not get them to run at the same time. Hey, Vishal, don't brag!! I am not bragging: I actually did it, and I have also made a working video of the persistent cloud storage lens so that everyone can experience its power. Through persistent storage, I made the game remember the PAT questions the user has answered, so 2 scores were shown during play: a Session score and a Global score. The session score is for the current game session, and the global score is the overall score accumulated over all sessions of using the lens. The fact that the game remembers the scores and the questions visited made it a real game-changer.

The persistent version works absolutely fine and adds a new dimension to the lens. The reason I made another lens is that the persistent cloud storage lens had the following shortcomings:

a. Each user has dedicated cloud storage for each lens with a maximum capacity of 3072 bytes, which is why my game stored only 10 strings of data points, and that constrained me to fewer questions.

b. Moreover, I can't use the ML model along with persistent cloud storage.
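To get a feel for the 3072-byte limit, here is a rough sketch that estimates whether a save object would fit. It does not use the Lens Studio storage API: the save format, the function names and the idea that size is measured as UTF-8 bytes of a JSON string are assumptions made for illustration only.

```javascript
// Per-user, per-lens capacity quoted in the write-up.
const MAX_STORE_BYTES = 3072;

// Count UTF-8 bytes by hand so the sketch does not depend on TextEncoder,
// which may not exist in every JavaScript runtime.
function utf8ByteLength(text) {
  let bytes = 0;
  for (const ch of text) { // iterates by code point, not by UTF-16 unit
    const codePoint = ch.codePointAt(0);
    if (codePoint < 0x80) bytes += 1;
    else if (codePoint < 0x800) bytes += 2;
    else if (codePoint < 0x10000) bytes += 3;
    else bytes += 4;
  }
  return bytes;
}

// Hypothetical save format: the global score plus ids of answered questions.
function fitsInStore(saveData) {
  return utf8ByteLength(JSON.stringify(saveData)) <= MAX_STORE_BYTES;
}

const save = { globalScore: 12, answered: ["A-place", "A-animal", "B-thing"] };
console.log(utf8ByteLength(JSON.stringify(save)), fitsInStore(save));
```

Storing short ids rather than full question text is one way to stretch such a budget, though the real accounting done by the storage service may differ from this estimate.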

After making a successful version of the game, I went on to convert the persistent cloud variant into a normal one that can be used with the ML model, and I finally did that.

Accomplishments that I'm proud of

My lens is not just a visual culmination of materials, textures and UI elements; it is a blend of disciplined programming, versatility and an ML model (don't forget persistent cloud storage too). I have provided a game in the form of a lens that will attract both knowledge lovers and those looking for a non-repetitive, out-of-the-box lens. For the sake of sharing the code, I am sharing the Resources section of the lens project, which reflects the hard work and innovation I put into the project.

I believe my lens is the first of its kind and will do the following things:

1️⃣ It will catch many eyeballs, because it is a game in the form of a Snapchat lens, and users will love it.

2️⃣ Generally, other lenses either run an ML algorithm endlessly, doing the same thing for every input, or use scripts to control the behavior of the lens. My lens not only runs the ML algorithm according to the lens's execution state but also makes thorough use of Behavior scripts.

3️⃣ It will change the meaning of games, because it is structured so that the user not only gains score with each passing level but also learns various new things, all while playing a game.

What I learned

- How to use Lens Studio to its full extent and set up a good lens project file structure.

- How to use the different assets and components provided in Lens Studio, along with the almighty Behavior script.

- How to pass variables between scripts using the script and global APIs.

- How to use different lens functionalities such as ML components and persistent cloud storage.

What's next for ML PAT: The OG (original/outstanding game)

I tried integrating the COCO SSD detection model into the lens, but it ran into some issues related to the TensorFlow library. I will try to shape the model so that it can be easily integrated with Lens Studio. Since it supports 80 object classes, it will give the user many more objects to interact with.

Built With

- ar

- javascript

- lensstudio

- machine-learning

- persistentcloudstorage
