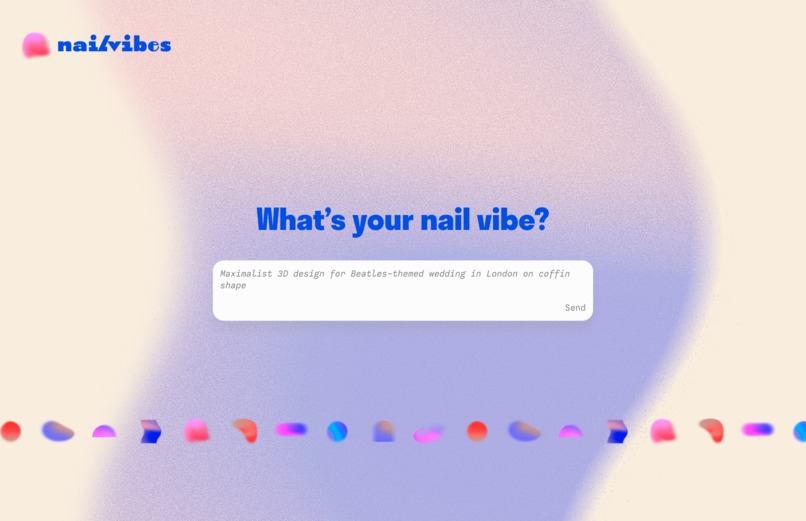

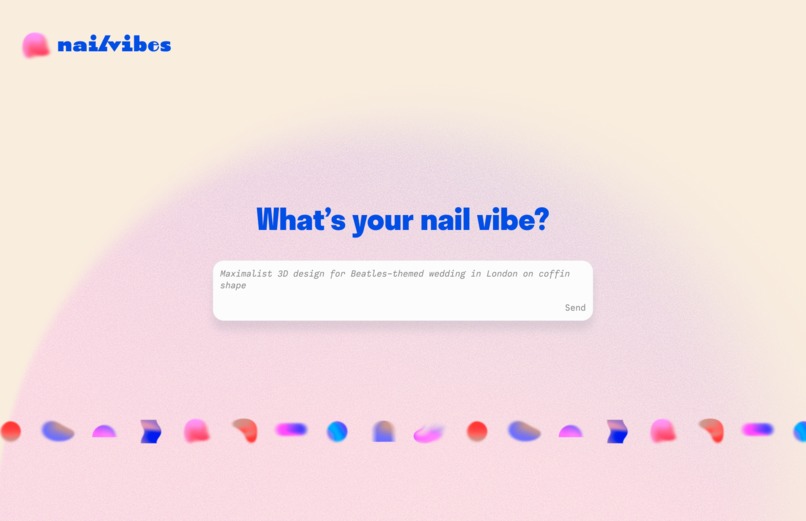

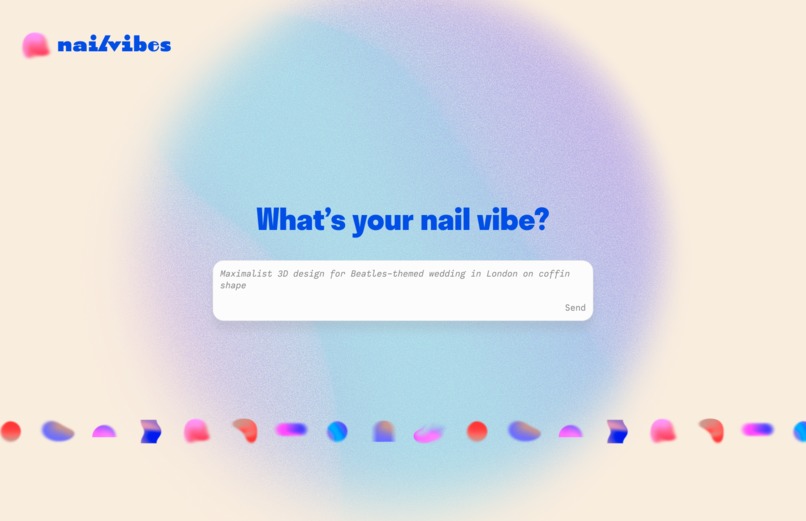

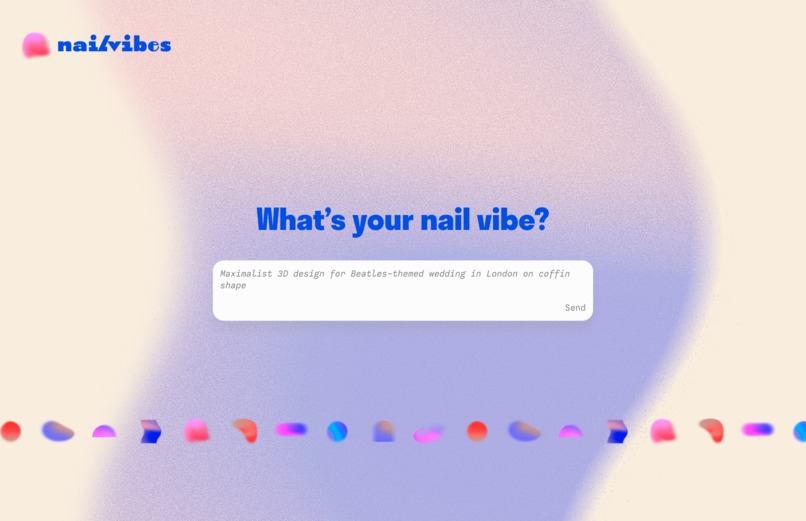

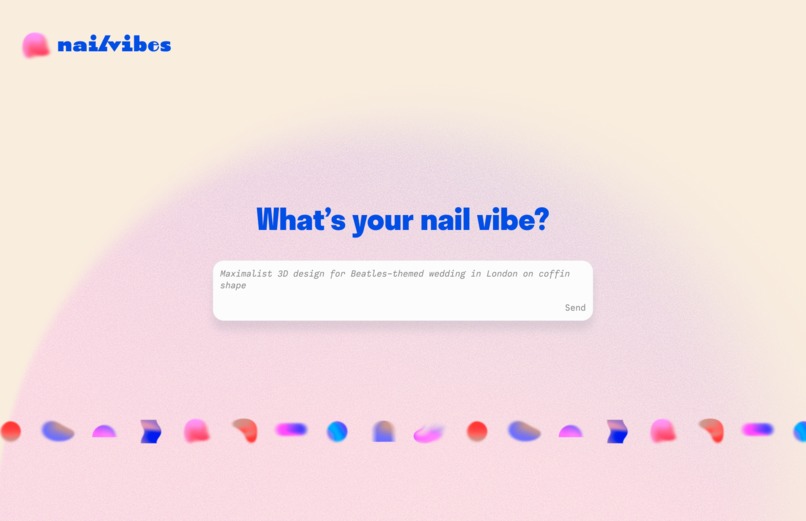

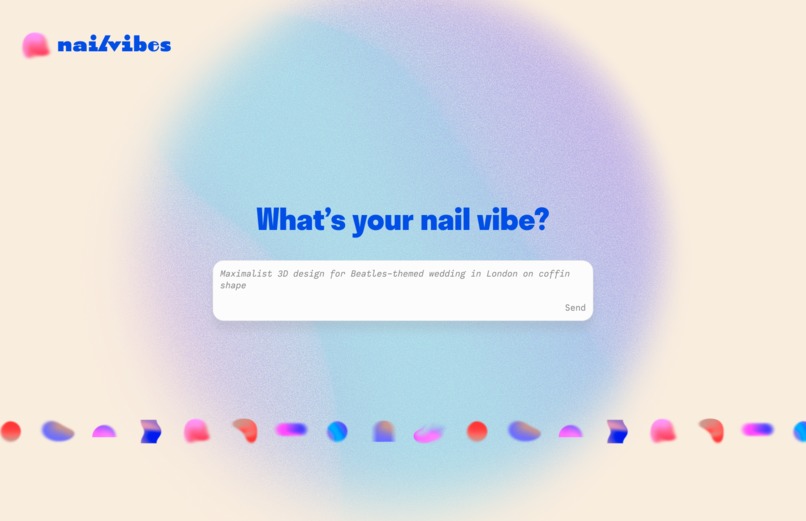

A concept of what the homepage could have looked like (from my Figma)

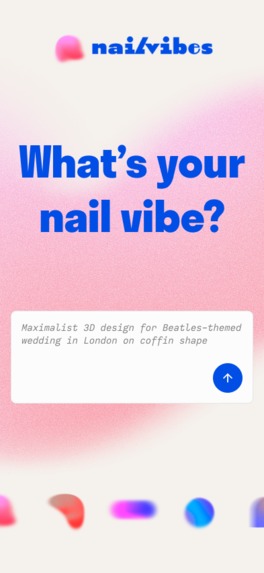

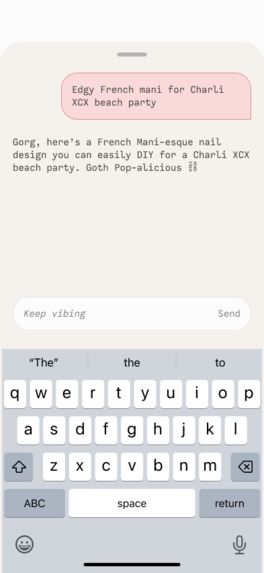

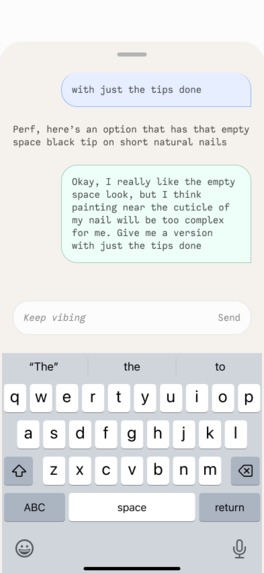

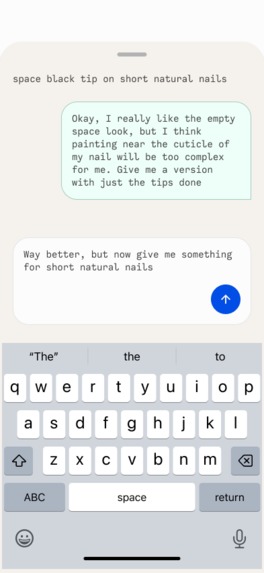

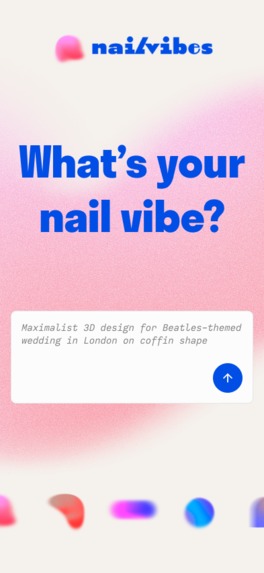

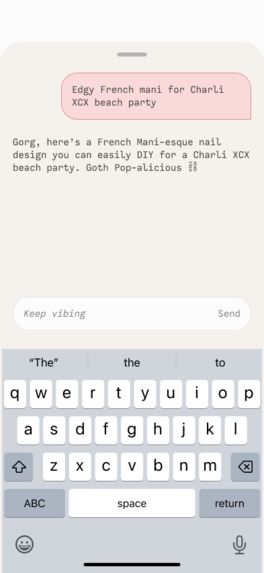

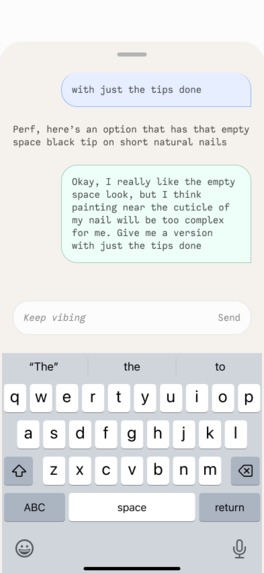

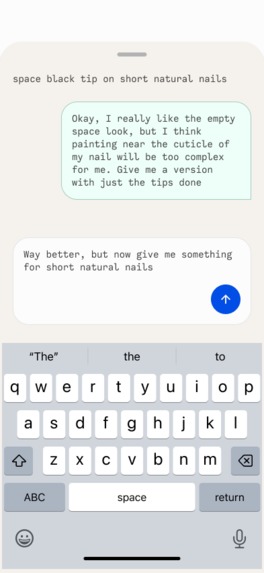

My Figma mockup of mobile

My Figma mockup of the chat functionality on small form factors

The messy inspiration and ideation process

Inspiration

I (Sarah) save cute nail ideas almost daily, but when it comes time for my nail appointments or doing my own nail art at home, I never know exactly what look I should get done.

When I did some keyword research and saw that "nail ideas" and related strings are among the most-searched yet under-served phrases, I realized I wasn't alone.

We thought it would be fun to create a visual, dynamic, emotive experience for folks to explore just-in-time nail designs without knowing exactly what they want. You can simply enter “a vibe” and see where it takes you.

What it does

NailVibes generates custom nail art ideas based on open-ended text prompts like “goth picnic” or “coastal grandma.”

Users start with curated inspo, then refine the look using natural language. Behind the scenes, it blends image retrieval with AI-powered editing to let users go from vibe to visual — no design skills needed.

How we built it

We built NailVibes using Bolt.new for the frontend and Supabase for backend storage, logic, and image retrieval.

Users start by entering a vibe prompt (e.g., “vampy romantic”), which is mapped to aesthetic tags via custom keyword extraction logic. These tags are passed to a PostgreSQL function in Supabase that returns the best-matching nail design from a curated image dataset we uploaded manually.
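As a rough illustration of the keyword extraction step, here is a minimal sketch that maps a free-text vibe to aesthetic tags. The keyword table, the tag names, and the `extractTags` function are hypothetical stand-ins for illustration, not our actual mapping.

```typescript
// Hypothetical keyword-to-tag table; the project's real mapping is not shown here.
const KEYWORD_TAGS: Record<string, string[]> = {
  vampy: ["dark", "red", "glossy"],
  romantic: ["soft", "red", "floral"],
  goth: ["dark", "matte"],
  picnic: ["gingham", "pastel"],
  coastal: ["blue", "neutral"],
};

// Lowercase the prompt, split it into words, and collect the tags of every known keyword.
function extractTags(prompt: string): string[] {
  const words = prompt.toLowerCase().match(/[a-z]+/g) ?? [];
  const tags = new Set<string>();
  for (const word of words) {
    for (const tag of KEYWORD_TAGS[word] ?? []) {
      tags.add(tag);
    }
  }
  // A Set keeps insertion order and drops duplicates such as the repeated "red".
  return Array.from(tags);
}

console.log(extractTags("Vampy romantic"));
// → [ 'dark', 'red', 'glossy', 'soft', 'floral' ]
```

Words that are not in the table are simply ignored, so an unrecognized vibe yields an empty tag list and the app can fall back to a default design.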

Once the system has rendered the base image, the user can refine the design using natural language (e.g., “make it moodier” or “add shimmer”). That request, along with the selected image, is sent to a Supabase Edge Function, which calls the Replicate API using the black-forest-labs/flux-fill-pro model to intelligently edit only the nails.
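The call from the Edge Function to Replicate might look roughly like the sketch below. It only builds the request so it can be inspected without a network call. The `buildFillRequest` helper and the `ReplicateRequest` type are hypothetical, and the input names (`image`, `mask`, `prompt`) are an assumption about the model's schema that should be checked against the model page on Replicate.

```typescript
// Plain-data description of the fetch call, so it is easy to log and test.
interface ReplicateRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Build a prediction request for black-forest-labs/flux-fill-pro.
// Assumption: the mask marks the nail region, so only that area is repainted.
function buildFillRequest(
  apiToken: string,
  imageUrl: string,
  maskUrl: string,
  prompt: string,
): ReplicateRequest {
  return {
    url: "https://api.replicate.com/v1/models/black-forest-labs/flux-fill-pro/predictions",
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiToken}`,
        "Content-Type": "application/json",
        Prefer: "wait", // ask Replicate to hold the connection until the prediction finishes
      },
      body: JSON.stringify({ input: { image: imageUrl, mask: maskUrl, prompt } }),
    },
  };
}

// Inside the Edge Function, the request would be sent with the built-in fetch:
//   const { url, init } = buildFillRequest(token, imageUrl, maskUrl, prompt);
//   const prediction = await (await fetch(url, init)).json();
```

Keeping the API token inside the Edge Function, rather than in the Bolt frontend, means it is never shipped to the browser.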

We get precise edits by sending both the image and the prompt to the model and, in some cases, rewriting the prompt behind the scenes to keep the model focused on the nails.
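The behind-the-scenes prompt crafting can be pictured as a small wrapper like the one below. The wording of the template and the `buildNailPrompt` name are hypothetical; the exact phrasing we send to the model is not reproduced here.

```typescript
// Hypothetical prompt wrapper: surround the user's request with instructions
// that steer the model toward the nails and away from the rest of the image.
function buildNailPrompt(userRequest: string): string {
  // Trim whitespace and drop trailing punctuation so the template reads cleanly.
  const request = userRequest.trim().replace(/[.!\s]+$/, "");
  return `Nail art on the fingernails only: ${request}. Keep the hands, skin, and background unchanged.`;
}

console.log(buildNailPrompt("make it moodier"));
// → Nail art on the fingernails only: make it moodier. Keep the hands, skin, and background unchanged.
```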

Challenges we ran into

First, getting CORS configured correctly across Bolt.new, Supabase Edge Functions, and the Replicate API took a lot of trial and error.
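For anyone hitting the same wall, the pattern that usually resolves CORS in an Edge Function is to answer the browser's preflight request and attach the same headers to every response. The sketch below assumes the standard `Request` and `Response` web types. The helper names are hypothetical, and the wildcard origin is an assumption that suits a demo rather than production.

```typescript
// Headers that let a browser app on another origin call the function.
// Assumption: "*" is fine for a hackathon demo; a production app would list its own origin.
const corsHeaders: Record<string, string> = {
  "Access-Control-Allow-Origin": "*",
  "Access-Control-Allow-Headers": "authorization, x-client-info, apikey, content-type",
  "Access-Control-Allow-Methods": "POST, OPTIONS",
};

// Answer the browser's preflight request; return null for any other method.
function handlePreflight(req: Request): Response | null {
  return req.method === "OPTIONS" ? new Response("ok", { headers: corsHeaders }) : null;
}

// Attach the same headers to every real response, including error responses.
function jsonResponse(body: unknown, status = 200): Response {
  return new Response(JSON.stringify(body), {
    status,
    headers: { ...corsHeaders, "Content-Type": "application/json" },
  });
}
```

In a Deno-based function, the handler would call `handlePreflight(req)` first and return its result when it is not null. Only the browser-to-function hop is subject to CORS; the function's own call to Replicate runs server to server.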

We also had to carefully control how the image model interpreted user prompts. The Black Forest Labs model was powerful, but without proper guidance it would generate full scenes instead of just updating the nail design.

This led us to a hybrid approach: retrieving curated base images from Supabase first, then only using the model for edits. We also had to write custom SQL functions to intelligently match user vibes to stored designs using tags, which required tuning and debugging to make sure results felt accurate and inspiring.
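The matching itself runs in SQL inside Supabase, but the idea can be sketched in TypeScript. The example below assumes one plausible scoring rule, counting shared tags. The `NailDesign` shape and the `bestMatch` function are hypothetical and do not reproduce our actual SQL.

```typescript
// Hypothetical shape of a row in the curated design table.
interface NailDesign {
  id: number;
  imageUrl: string;
  tags: string[];
}

// Count how many of the requested tags a design carries.
function overlap(design: NailDesign, wanted: string[]): number {
  return wanted.filter((tag) => design.tags.includes(tag)).length;
}

// Return the design sharing the most tags with the request, or null if nothing matches.
// Ties keep the earlier design.
function bestMatch(designs: NailDesign[], wanted: string[]): NailDesign | null {
  let best: NailDesign | null = null;
  let bestScore = 0;
  for (const design of designs) {
    const score = overlap(design, wanted);
    if (score > bestScore) {
      best = design;
      bestScore = score;
    }
  }
  return best;
}
```

Much of the tuning mentioned above comes down to choices like these: how ties are broken, and what to show when no design shares a tag with the request.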

And of course, integrating everything into Bolt.new while balancing visual polish with MVP speed meant constantly refining our flow.

Sarah also learned the hard way that it's highly advisable to integrate GitHub into your projects from the very beginning. She got pretty deep into her initial build of the project, but then Bolt started hallucinating and she couldn't walk it back, so she had to start the project all over again.

Accomplishments that we're proud of

Sarah, a non-technical designer, set up a database in Supabase, created the keyword mapping structure, and got the main flow and logic of the app working on her own, all with the help of vibe coding!!! Wow!!! It was totally out of her normal wheelhouse to set up the backend functionality before doing the true frontend rendering, but it was so important to do this.

This was truly a lesson in scoping. Sarah pared down the vision of what we created quite a bit, but we still kept enough scope to demonstrate the concept and have a complete user flow.

We came up with a hybrid solution to get more accurate results from our Replicate model: the first image is retrieved from the database, and the Replicate model then refines that image as a starting point.

Nikolai got to stretch his architecture skills by manually connecting our app to our Replicate model, which he doesn’t often get to do in his usual job as a frontend engineer.

Nikolai got to play with GenAI image generation models for the first time and got to experience prompt engineering.

We both got to learn a new world of debugging with the help of GenAI in Bolt and ChatGPT.

Sarah created a visual language lickety-split for this project! Whew!

Nikolai feels super accomplished that we were able to get an Edge Function to communicate with the Replicate API.

What we learned

ALWAYS use branches on GitHub for version control.

When prompting in Bolt, ask for small, incremental changes one at a time. This is especially important the deeper you get into the project.

Use screenshots often when prompting Bolt to give the AI more context.

Bolt is able to refactor code super easily. What would have taken me (Nikolai) an hour was accomplished in a minute.

I did not know you can select a zone of an image for the AI to edit (says Nikolai).

What's next for NailVibes

Sarah is going to show this proof of concept at the Create & Cultivate Festival in Los Angeles, which she’s attending in a couple of weeks, to gain feedback from target users.

Additionally, we think there could be a monetization strategy for NailVibes if we were to continue to refine the GenAI image editing technology (maybe further train our Replicate model), build in a product recommendation engine to help users bring their nail vibe to life, and even do brand partnerships with nail product brands and/or influencers.

It would be really cool to one day get acquired by Pinterest or a nail products brand.

Categories we're aiming for

While we are open to receiving recognition in all Hackathon categories, we are particularly interested in: all the global prizes and the bonus prizes for Uniquely Useful Tool, Creative Use of AI, Most Beautiful UI, Most Viral Project, and We Didn’t Know We Needed This.

Thank you for your consideration!

Built With

- bolt

- deno

- figma

- node.js

- postman

- replicate

- sql

- supabase

- tailwind

- typescript
