Visualization of human body with symptoms

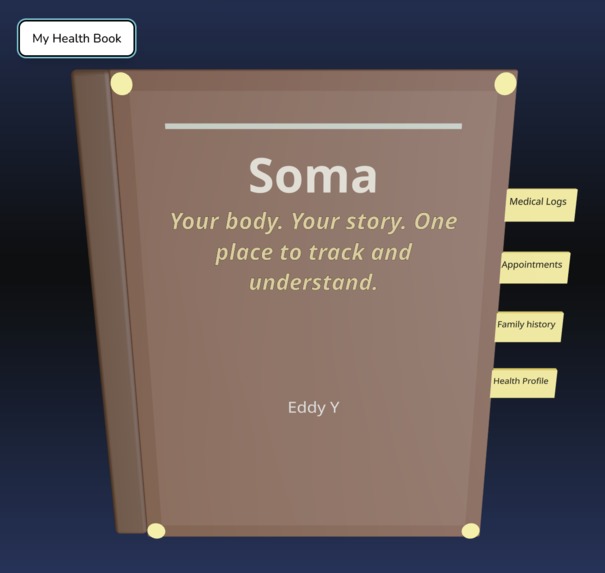

Health journal, tracking all medical records for a patient

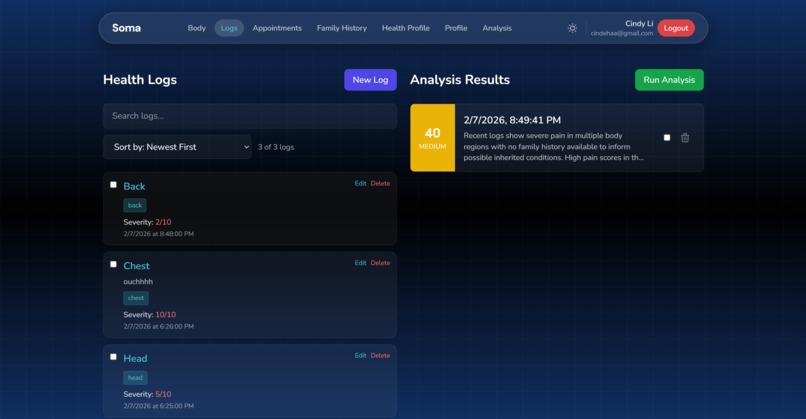

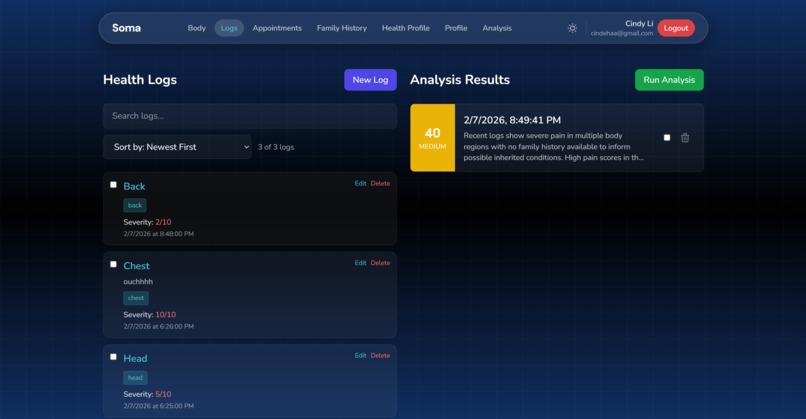

Timeline of logs with filtering, sorting ability on left. Timeline of AI analysis results based on recent logs and user info on right.

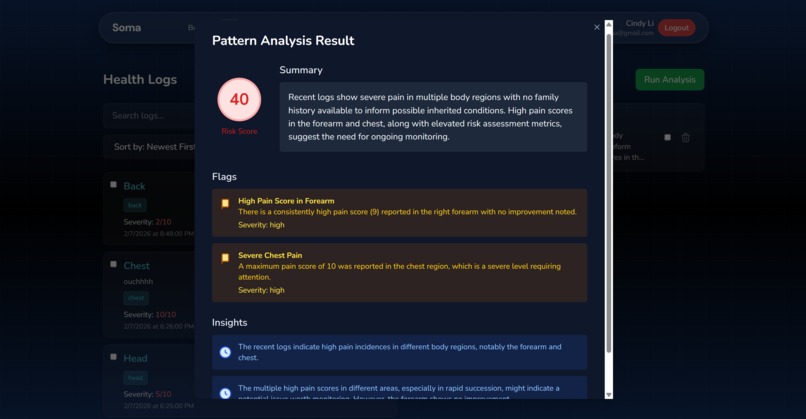

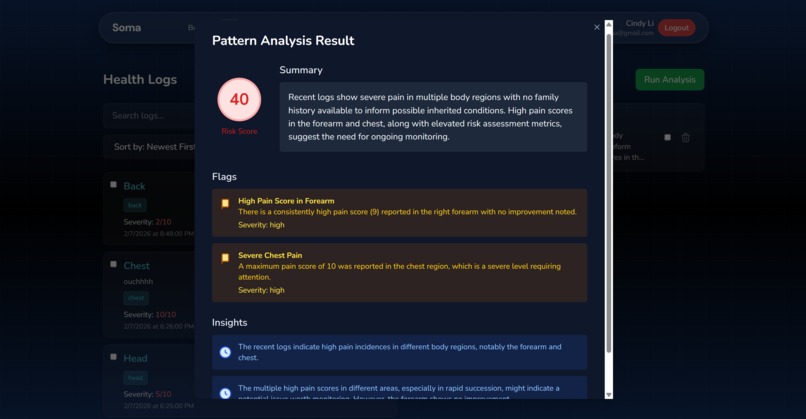

Pop-up of AI analysis results; contains detailed information with risk score

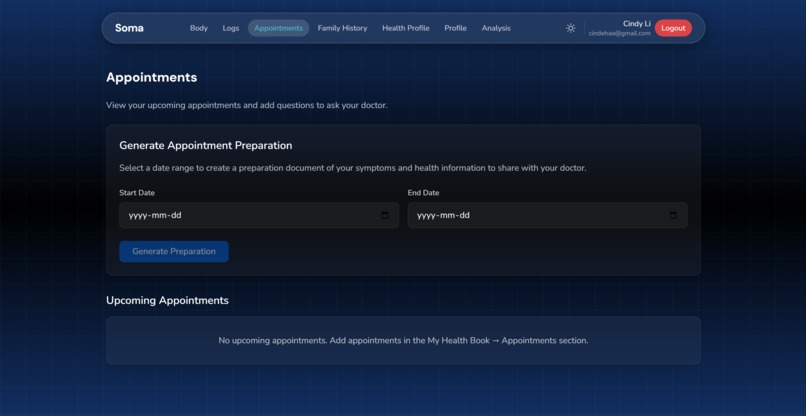

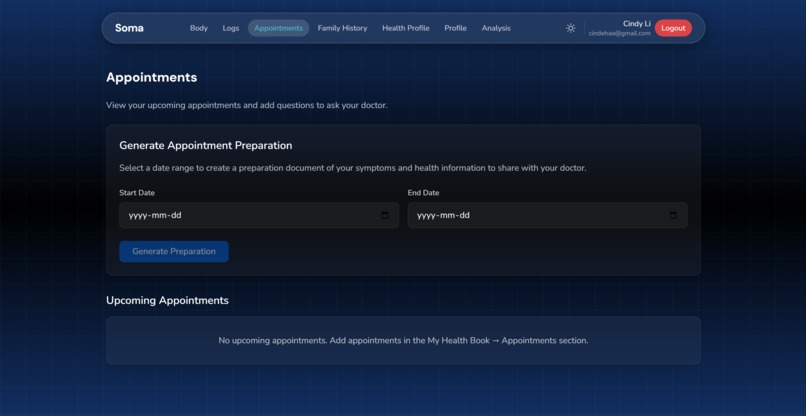

Users can create appointment preparation notes and export to PDF

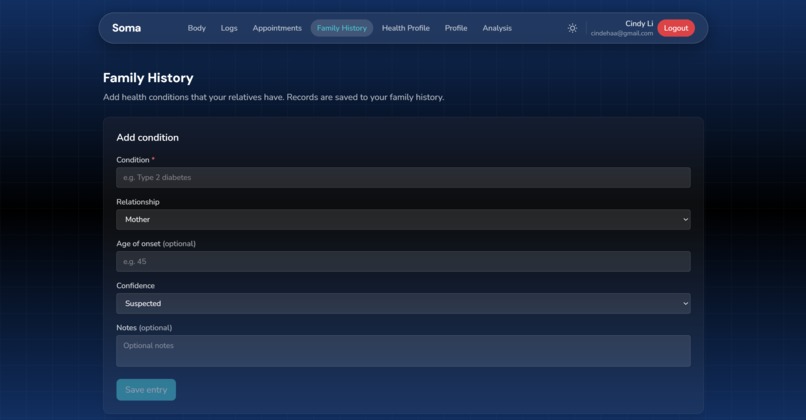

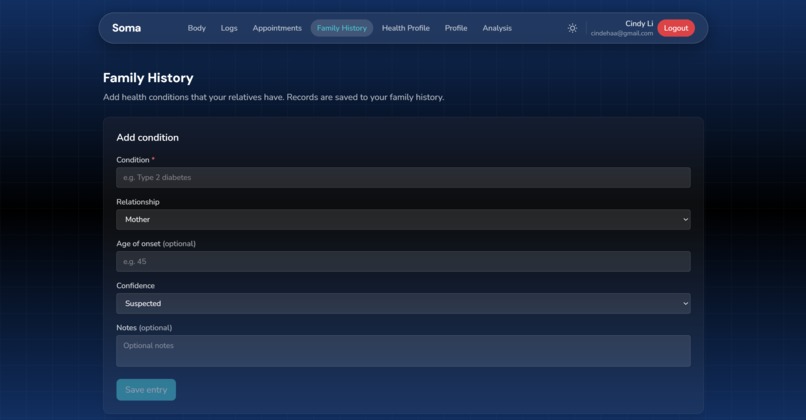

Feature to input family history

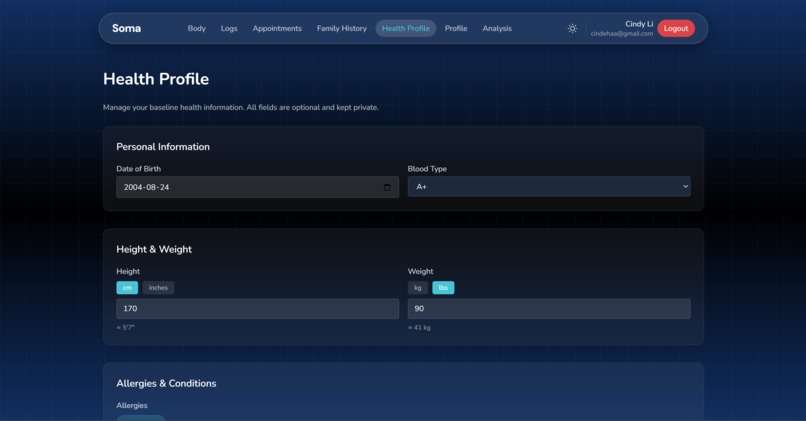

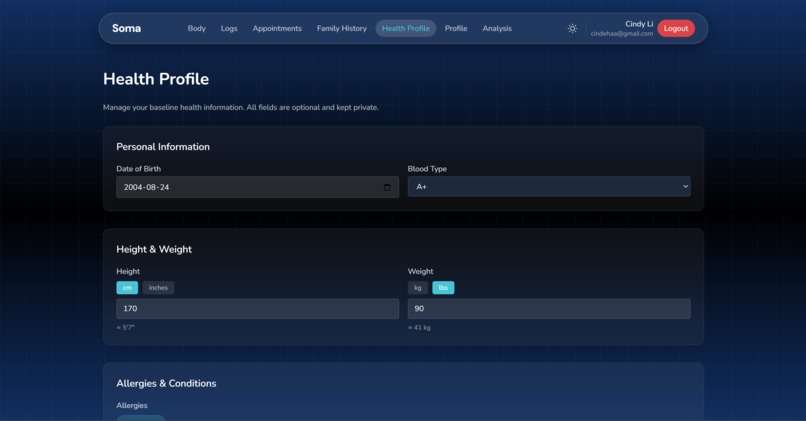

Health profile page to note basic information

Soma

Your body. Your symptoms. One place to track and understand.

Inspiration

Most of us have experienced that moment at the doctor’s office when we’re asked, “When did this start?” or “How often does it happen?” and suddenly have to rely on vague memories and guesses. Our health is something we live with constantly, yet we have no intuitive way to observe it, track it, or understand patterns over time.

We realized that the human body doesn’t come with an interface. There’s no dashboard, no timeline, no system that helps people visualize and truly understand what their body is experiencing. As a result, important signals often go unnoticed until they become serious.

We were inspired to build something that bridges this gap: a system that makes health visible, trackable, and understandable. By combining a visual interface with AI pattern detection, we wanted to create a tool that helps people better understand their own bodies and enables more informed conversations with healthcare professionals.

What it does

Our application is an AI-powered interactive body journal that allows users to visually log symptoms by clicking directly on a 3D model of their body. Instead of writing vague notes or trying to remember details later, users can precisely track where and how they’re feeling discomfort over time.

As users log entries, our AI continuously analyzes their symptom history to detect patterns, trends, and anomalies. It identifies recurring issues, monitors changes in severity, and connects symptoms to relevant factors such as lifestyle and family health history. The system then generates insights, risk indicators, and structured summaries that help users understand their health more clearly.

Over time, this transforms scattered symptom observations into a coherent, visual timeline of the user’s health—empowering users with awareness and helping clinicians see patterns that might otherwise be missed.

How we built it

We built Soma as a full-stack web application using Vite, React 18, and TypeScript to create a fast, scalable, and interactive frontend. At the core of the interface is an immersive experience powered by Three.js and React Three Fiber, featuring a realistic 3D human model alongside a dynamic health journal. Users can click on approximately 20 mapped body regions, which triggers animated page-flipping transitions that open dedicated logging pages within the interactive health book. This system enables precise symptom tracking, heatmap visualization of symptom frequency and severity, and real-time updates as new health data is logged.
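As a rough illustration of the heatmap idea described above, here is a minimal sketch of how per-region symptom frequency and severity could be blended into a single overlay intensity. The `SymptomLog` shape, the 1-10 severity scale, the equal weighting, and the function names are all assumptions made for this example, not Soma's actual implementation.

```typescript
// Hypothetical shape of a logged symptom; field names are assumptions.
interface SymptomLog {
  region: string; // e.g. "left_knee"
  severity: number; // assumed 1-10 scale
}

interface RegionHeat {
  count: number; // how many logs hit this region
  meanSeverity: number; // average severity of those logs
  intensity: number; // 0..1 value used to drive the overlay color
}

// Aggregate logs per region and blend relative frequency with relative severity.
function computeHeat(
  logs: SymptomLog[],
  maxSeverity = 10
): Record<string, RegionHeat> {
  const sums: Record<string, { count: number; total: number }> = {};
  let maxCount = 0;
  for (const log of logs) {
    const entry = sums[log.region] || (sums[log.region] = { count: 0, total: 0 });
    entry.count += 1;
    entry.total += log.severity;
    maxCount = Math.max(maxCount, entry.count);
  }

  const heat: Record<string, RegionHeat> = {};
  for (const region of Object.keys(sums)) {
    const { count, total } = sums[region];
    const meanSeverity = total / count;
    // Equal weighting of frequency and severity is an arbitrary choice for this sketch.
    const intensity = 0.5 * (count / maxCount) + 0.5 * (meanSeverity / maxSeverity);
    heat[region] = { count, meanSeverity, intensity };
  }
  return heat;
}

// Map an intensity in [0, 1] to an HSL color from yellow (low) to red (high).
function heatColor(intensity: number): string {
  const clamped = Math.min(1, Math.max(0, intensity));
  const hue = Math.round(60 * (1 - clamped));
  return `hsl(${hue}, 100%, 50%)`;
}
```

In a React Three Fiber scene, the string returned by `heatColor` could be applied to the material of the mesh for each mapped region, so the overlay refreshes whenever the log list changes.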

To support secure user access and personalization, we integrated Auth0 for authentication and single sign-on, along with a comprehensive health profile system that allows users to document lifestyle metrics such as sleep, activity, and diet, as well as family medical history. These factors are incorporated into Soma’s analysis pipeline to provide more context-aware insights.

We used Supabase as our primary backend and database, enabling us to store structured symptom logs, multimedia attachments, medical records, health profiles, and AI-generated insights. Supabase’s blob storage allows users to upload files such as images and scans, while edge functions written in TypeScript handle more advanced data processing and AI-related workflows. Users can create, edit, delete, and search logs, and view their health history through a chronological timeline and an interactive 3D book interface with organized sections and rich detail views.

For medical imaging analysis, we implemented a FastAPI backend that processes MRI scans using NVIDIA MONAI segmentation models. This allows Soma to automatically identify and highlight abnormalities in medical images, which are then integrated with the user’s logged symptom history to provide a more complete and contextualized view of their health.

The intelligence of Soma is powered by a hybrid AI analysis engine combining statistical methods and large language models. We implemented deterministic anomaly detection using Z-score analysis, severity trend slope calculations, recurrence frequency tracking, and predictive risk scoring. We also integrated Backboard.io to manage LLM-powered analysis workflows, enabling persistent AI memory across user sessions and allowing the assistant to act as a physician-like agent that analyzes symptom patterns, correlates them with family medical history, and generates structured insights.
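The deterministic part of this engine can be illustrated with a short sketch of Z-score outlier detection and a least-squares severity trend slope. The function names, the threshold of 2, and the use of a population standard deviation are assumptions for this example; the project's actual formulas and thresholds are not stated here.

```typescript
// Arithmetic mean; assumes a non-empty array.
function mean(values: number[]): number {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Population standard deviation.
function stdDev(values: number[]): number {
  const m = mean(values);
  return Math.sqrt(mean(values.map((v) => (v - m) ** 2)));
}

// Z-score of each value relative to the whole series.
// Returns all zeros when the series has no variation.
function zScores(values: number[]): number[] {
  const m = mean(values);
  const sd = stdDev(values);
  return values.map((v) => (sd === 0 ? 0 : (v - m) / sd));
}

// Indices of entries whose |z| exceeds the threshold (2 is an assumed default).
function findAnomalies(values: number[], threshold = 2): number[] {
  return zScores(values)
    .map((z, i) => (Math.abs(z) > threshold ? i : -1))
    .filter((i) => i >= 0);
}

// Least-squares slope of severity against time in days.
// A positive slope suggests symptoms are worsening over the window.
function trendSlope(days: number[], severities: number[]): number {
  const mx = mean(days);
  const my = mean(severities);
  let num = 0;
  let den = 0;
  for (let i = 0; i < days.length; i++) {
    num += (days[i] - mx) * (severities[i] - my);
    den += (days[i] - mx) ** 2;
  }
  return den === 0 ? 0 : num / den;
}
```

For a severity series such as `[3, 3, 3, 3, 3, 3, 3, 9]`, `findAnomalies` flags index 7, since the final entry sits more than two standard deviations above the mean. Keeping these checks deterministic means the flags can be reproduced and explained independently of any LLM output.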

This AI system produces anomaly flags, confidence scores, predictive risk indicators, and doctor-ready summaries across selected date ranges. These summaries can be exported as structured PDF reports, helping users and clinicians quickly understand symptom progression and prepare for medical consultations.
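A summary over a selected date range might start from a grouping step like the one sketched below, which filters logs to the range and organizes them by body region before any report is rendered. The `LogEntry` fields, the ISO date strings, and the `summarizeByRegion` name are hypothetical and chosen only for illustration.

```typescript
// Hypothetical log shape; dates are assumed to be ISO strings ("YYYY-MM-DD").
interface LogEntry {
  date: string;
  region: string;
  severity: number;
  note: string;
}

interface RegionSummary {
  region: string;
  entries: LogEntry[]; // sorted oldest first
  peakSeverity: number;
}

// Keep logs inside [from, to] (inclusive) and group them by body region.
function summarizeByRegion(
  logs: LogEntry[],
  from: string,
  to: string
): RegionSummary[] {
  const grouped: Record<string, LogEntry[]> = {};
  for (const log of logs) {
    // ISO dates in the same format compare correctly as plain strings.
    if (log.date < from || log.date > to) continue;
    (grouped[log.region] || (grouped[log.region] = [])).push(log);
  }

  return Object.keys(grouped)
    .sort()
    .map((region) => {
      const entries = grouped[region]
        .slice()
        .sort((a, b) => (a.date < b.date ? -1 : a.date > b.date ? 1 : 0));
      const peakSeverity = Math.max(...entries.map((e) => e.severity));
      return { region, entries, peakSeverity };
    });
}
```

Each `RegionSummary` could then feed one section of the exported document, which matches the idea of a report grouped by body region.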

Finally, we deployed Soma using Vercel to ensure reliable hosting and performance, creating a fully functional end-to-end system that integrates interactive visualization, structured health logging, medical image analysis, and AI-powered health intelligence into a seamless user experience.

Full Feature List

Authentication & Profile

- Auth0 single sign-on integration

- User health profile with lifestyle tracking (sleep, activity, diet)

- Family medical history documentation

Interactive 3D body viewer with clickable regions for symptom logging

- Realistic human anatomical model with detailed anatomy mapping

- Body region detection and mapping system (~20 clickable regions mapped to detailed anatomy)

- Heat map overlay showing symptom frequency and severity by body region

- Real-time data visualization updates based on log history

Backboard.io

- Used for LLM prompts when performing an analysis on a patient.

- We define an assistant that acts like a physician and run it in threads, which lets the model retain memory of previous medical records when a new analysis is run.

Health Logging

- Create, edit, and delete symptom/health logs

- Severity and location tracking

- Multimedia attachments (images/files) with blob storage in Supabase

- Rich log detail views with timeline context

- Advanced search across all logs

Timeline & Visualization

- Chronological timeline view with health events

- 3D interactive health book with page-flipping animations

- Organized information display across multiple book sections

Automated AI pattern analysis with anomaly detection

- Z-score statistical analysis for outlier detection

- Severity trend analysis and slope calculations

- Anomaly severity scoring and confidence ratings

- Family history correlation detection

- AI-generated insights from pattern analysis

- Doctor appointment preparation summaries with AI analysis across date ranges

- PDF export of appointment preparation documents grouped by body region

- Predictive risk indicators

Infrastructure

- Vite + React 18 on TypeScript for fast development

- Three.js + React Three Fiber for 3D graphics

- Vercel for deployment and hosting

- Supabase for database storage and edge functions for AI processing (Deno/TypeScript)

- FastAPI for serving the NVIDIA MONAI segmentation model used in MRI analysis

Challenges we ran into

- Cindy: Working with Edison to integrate Backboard with Supabase and Auth0 was challenging, but we managed to figure it out.

- Michelle: Integrating NVIDIA MONAI required resolving input tensor dimension mismatches and adapting our medical imaging pipeline to ensure compatibility between uploaded MRI scans and the segmentation model.

- Yolanda: Integrating heat map logic into a 3D mesh and ensuring that clicks registered accurately on the 3D model.

Accomplishments that we’re proud of

- Successfully building a fully functional end-to-end application, with all core features, from interactive logging to AI insight generation, working together seamlessly

- Integrating a fully interactive 3D human body model into the web interface, which required extensive research into 3D rendering, model interaction, and frontend-backend coordination

- Integrating NVIDIA MONAI to enable medical image segmentation and abnormality detection, allowing users to visualize and understand potential issues without requiring immediate clinical interpretation

- Designing and implementing a clean, modern, and intuitive UI that makes complex health tracking feel natural and accessible

- Developing a complete AI analysis pipeline that transforms raw symptom logs into meaningful insights, summaries, and risk indicators

- Building a robust backend infrastructure with authentication, database persistence, and real-time data synchronization

What we learned

- Cindy: Learned how to deploy a full-stack application, including setting up authentication, configuring a production-ready database, and ensuring secure and reliable data storage and access.

- Michelle: Learned how to integrate external machine learning and AI models into a production application, and strengthened my understanding of frontend-backend communication, API design, and building systems that connect user interactions with intelligent analysis.

- Yolanda: Learned how to create creative data visualizations using out-of-the-box thinking. I used React Three Fiber to integrate Three.js directly into React, allowing us to treat the 3D model as a component in our UI.

What's next for Soma

Our next step is to make Soma deeply personalized, predictive, and seamlessly integrated into real-world healthcare workflows. Instead of relying on a generic 3D body model, we plan to integrate SHAPY (https://github.com/muelea/shapy), which allows users to generate an accurate, personalized 3D model of their own body from a simple photo. This makes symptom tracking far more precise and intuitive, allowing Soma to become a true digital twin of the user’s physical body.

We also plan to integrate wearable device data from smartwatches and health platforms such as Apple Health and Google Fit. This will allow Soma to continuously monitor physiological signals like heart rate, heart rate variability, sleep patterns, and activity levels. By combining this data with symptom logs, we can train machine learning models to detect early warning signs of potential health issues, such as cardiovascular abnormalities or chronic stress patterns.

To improve accuracy and personalization, we will expand Soma’s health profile to include additional biometric and lifestyle metrics such as height, weight, activity level, sleep quality, and family health history. This allows the AI to generate more context-aware insights and identify inherited or lifestyle-related risk patterns more effectively.

We also plan to implement secure NFC tags or QR codes that allow healthcare providers to quickly access a patient’s health summary during appointments. This creates a frictionless way to share structured symptom timelines, AI-generated insights, and relevant medical history, improving communication and enabling more informed clinical decision-making.

Beyond these features, we plan to integrate multimodal inputs such as voice logs, photos, and medical documents, allowing users to capture their health experiences more naturally. Over time, Soma will function as a continuously learning system that adapts to each user, helping them better understand their body and detect issues earlier.

Ultimately, our goal is to transform Soma from a symptom tracker into a personalized health intelligence companion that helps users visualize, understand, and proactively manage their health over time.

Built With

- fastapi

- javascript

- node.js

- nvidia

- python

- react

- supabase

- typescript

- vercel
