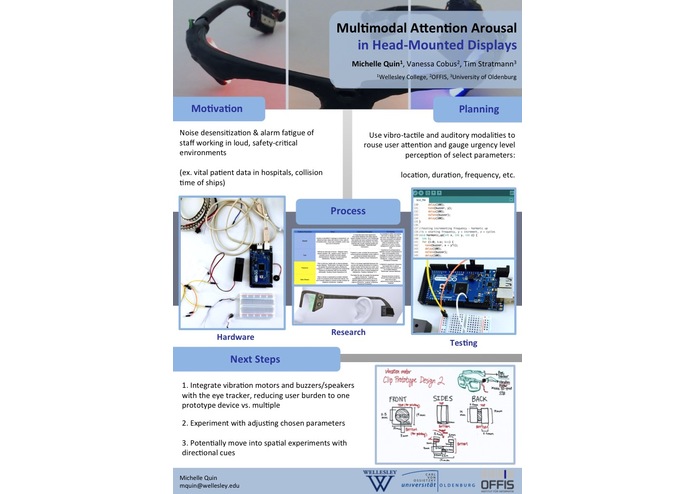

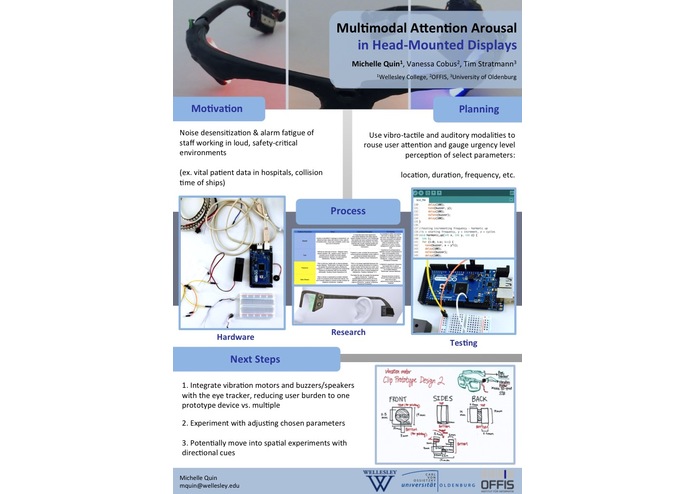

Presentation Poster

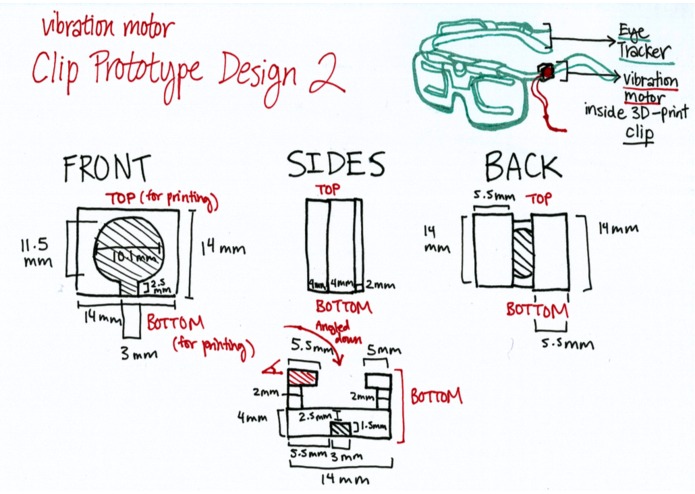

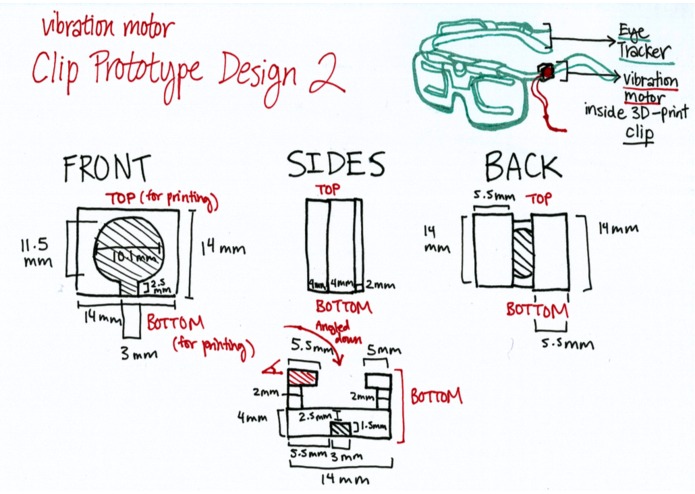

One of the prototype design sketches

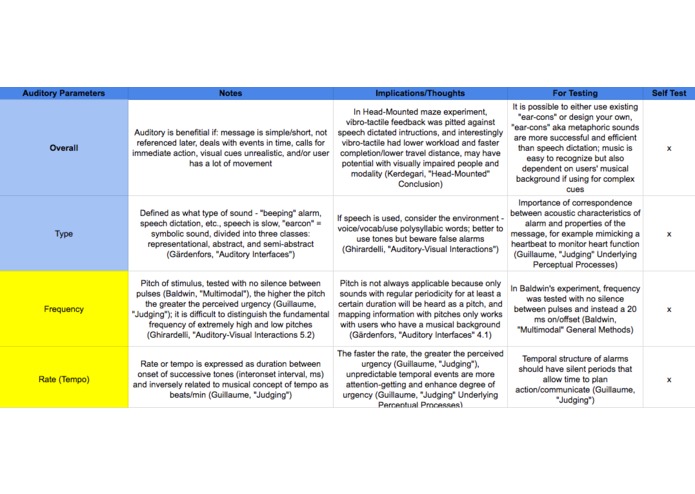

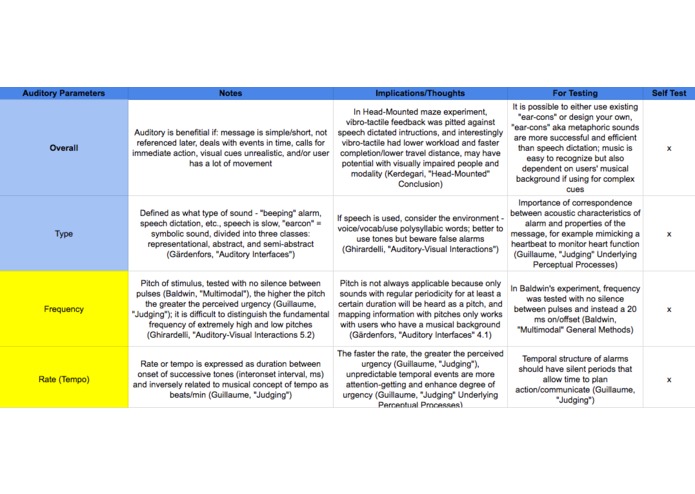

Part of the literature research

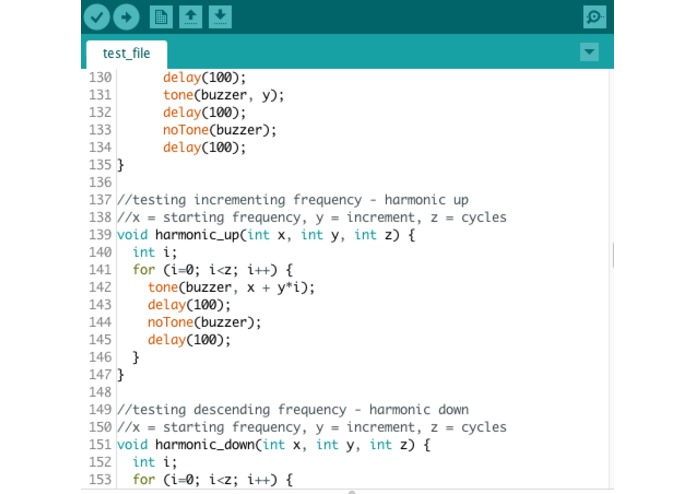

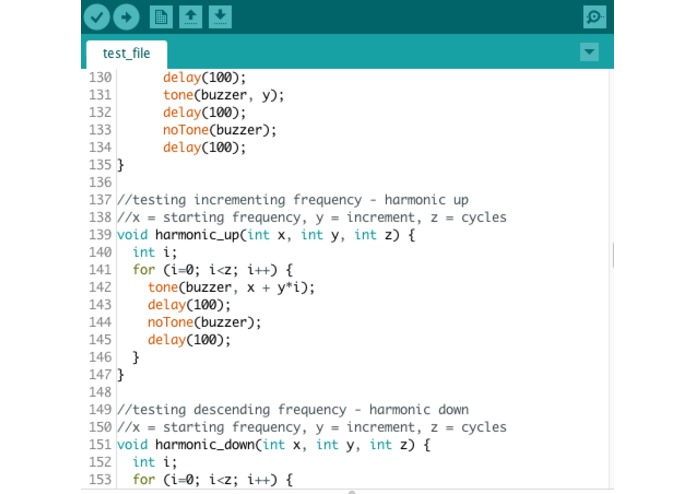

Code snippet from the Arduino test file for the parameters

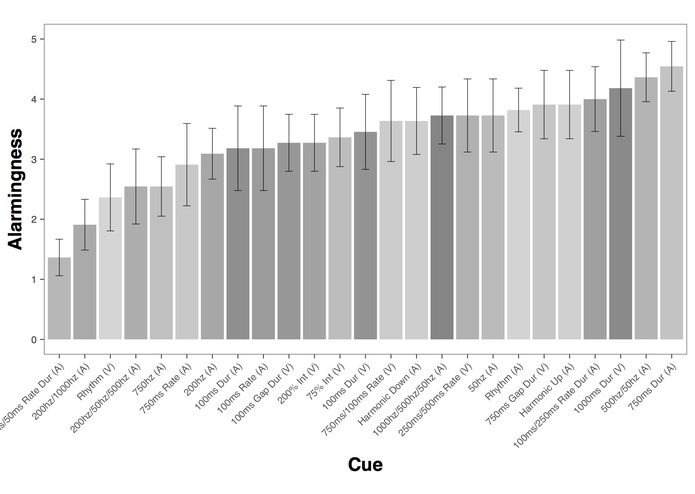

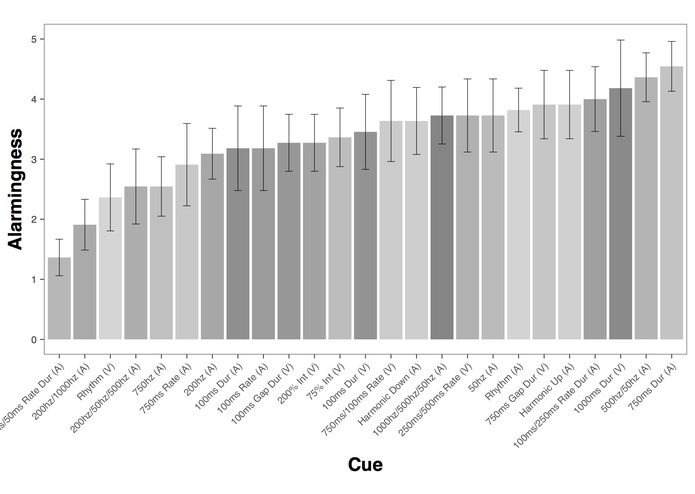

Data analysis run in RStudio: this plot shows users' perception of alarm for all of the cues

Demo for Dr. Andrew Kun (University of New Hampshire), Dr. Orit Shaer (Wellesley College) and Dr. Susanne Boll (University of Oldenburg)

Final Prototype

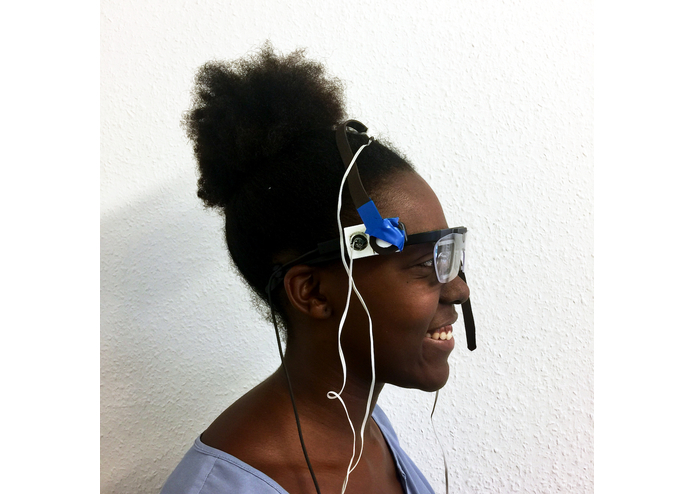

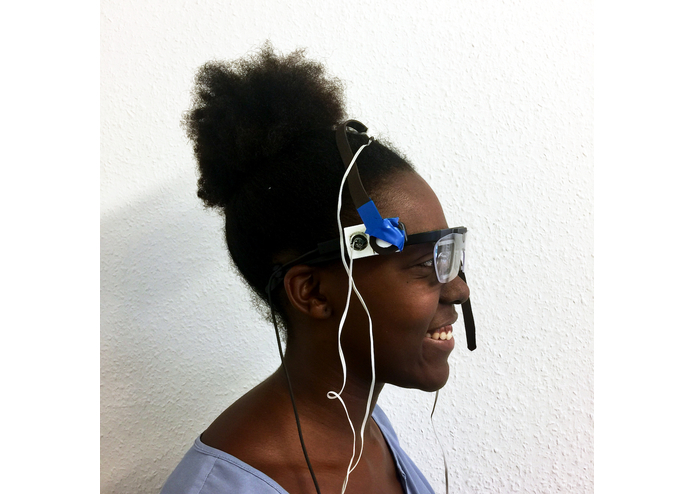

User wearing prototype

Inspiration

Alarm desensitization, or alarm fatigue, is a problem for staff in safety-critical, noisy environments such as hospitals and cargo ships. It stems from the high volume of noise and false alarms. This is not only detrimental to staff health but also decreases awareness and efficiency in critical situations. For instance, for a hospital caregiver monitoring multiple patients' heart rates, a few seconds can be the difference between life and death.

What it does

My research project contributes to ongoing research on multimodal attention arousal, carried out in collaboration with the University of Oldenburg and the health division of OFFIS - Institute for Information Technology. The goal is to gain a better understanding of which types of head-mounted feedback best alert staff while minimizing discomfort and desensitization.

The project plan, which I completed, was to research vibro-tactile and audio parameters in head-mounted displays and to design and implement a working prototype for testing selected parameters. I wrote functions that let me manipulate various parameters (e.g., frequency, duration, rhythm), then drew up candidate parameter settings, which I narrowed down to 25. I built a prototype that combined vibration motors and speakers with a Tobii eye tracker, then designed an experiment and ran user studies with the narrowed-down parameters and questionnaires gauging perceived urgency. I concluded by analyzing the results.
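To illustrate what manipulating frequency, duration, and rhythm can look like, here is a minimal Python sketch of a parameterized cue. It is not the Arduino code from the project: the `Cue` class, its field names, and the helper functions are all hypothetical, and the rhythm is assumed to be a list of pauses between equal-length pulses.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Cue:
    """Hypothetical description of one vibro-tactile or audio cue."""
    frequency_hz: float      # drive frequency of the motor or speaker
    pulse_ms: int            # how long each pulse stays on
    rhythm: Tuple[int, ...]  # pause (ms) after each pulse; one entry per pulse


def schedule(cue: Cue) -> List[Tuple[int, int]]:
    """Return (start_ms, end_ms) on-intervals for every pulse in the cue."""
    intervals = []
    t = 0
    for pause_ms in cue.rhythm:
        intervals.append((t, t + cue.pulse_ms))
        t += cue.pulse_ms + pause_ms
    return intervals


def total_duration_ms(cue: Cue) -> int:
    """Time from the first pulse onset to the last pulse offset."""
    intervals = schedule(cue)
    return intervals[-1][1] if intervals else 0


def cycles_per_pulse(cue: Cue) -> float:
    """Number of oscillation cycles that fit into one pulse."""
    return cue.frequency_hz * cue.pulse_ms / 1000.0
```

For example, `Cue(frequency_hz=200.0, pulse_ms=100, rhythm=(50, 50, 0))` describes three 100 ms pulses separated by 50 ms pauses. These values are illustrative only and are not taken from the 25 parameters used in the study.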

How I built it

Arduino, 3D rendering (Autodesk), 3D printing, Tobii Eye Tracker, RStudio

Challenges I ran into

The time constraint of my program (NSF-funded "US-German Research on Human-Computer Interaction in Ubiquitous Computing") was a challenge, and I had to plan my schedule carefully to fit in prototyping, experiment design, user studies, and data analysis.

Accomplishments that I'm proud of

I'm proud of achieving the goals I set and of collaborating successfully with other researchers at OFFIS and the University of Oldenburg.

What I learned

I learned how to work with new software and hardware, such as eye trackers and R.

What's next for Multimodal Attention Arousal in Head-Mounted Displays

The next steps are further analysis of the results and integrating them with existing and ongoing research on this topic.
