🌸 MoodBloom × Amazon Nova

Talk your feelings into bloom

🏆 Amazon Nova AI Hackathon · Multimodal Understanding Track

🚀 Live Demo: https://d1f4sz0ihfyr1i.cloudfront.net

📅 Submitted: March 13, 2026

💡 Inspiration

Every week in my clinic, I see it.

A backend engineer in his early 30s — came in for weight gain. What surfaced during the visit: months of overtime, stress eating at 2 AM, a body he no longer recognized. He didn't need a diet plan. He needed someone to hear him.

A casino floor manager in her 40s — came in saying she "just can't sleep anymore." The real story: years of rotating shifts, chronic anxiety surfacing as insomnia, a growing dependence on sleep medication just to get four hours. She'd never connected her sleeplessness to the weight she carried every day.

A young developer — recurring stomach pain, dangerously thin. Every flare-up traced back to the same trigger: a new high-pressure project deadline. He had no idea his body was keeping score of his stress.

These aren't rare cases. 280 million people worldwide suffer from depression. The WHO estimates nearly 1 billion people live with a mental health condition — yet the majority never receive treatment. Not because services don't exist. Because the barrier feels impossibly high.

Here's what clinical research tells us works: expressive writing — the simple act of putting your feelings into words.

A meta-analysis published in the British Journal of Clinical Psychology (Guo, 2023), synthesizing 31 randomized controlled trials with 4,012 participants, found that expressive writing produces a small but statistically significant reduction in depression, anxiety, and stress symptoms (Hedges' g = -0.12, 95% CI [-0.21, -0.04]). The effect is delayed but durable. Shorter intervals between sessions (1–3 days) yield stronger effects, which suggests the practice works best as a daily micro-practice.

The evidence is clear. The problem is equally clear:

Most people can't sustain a writing habit. Journaling completion rates are notoriously low — the majority abandon the practice within the first week. Staring at a blank page triggers the very anxiety the practice is supposed to relieve.

But there's a deeper problem most journaling apps ignore: writing in a diary is lonely. You pour your emotions onto a page, and nothing comes back. No acknowledgment, no warmth, no sense that someone heard you. Traditional journaling is a monologue — and for people already struggling with isolation, that silence can feel like one more empty room.

So the question becomes: How do we give the "can't-find-the-words" people access to an intervention that's clinically proven to help — while also giving them the sense of being heard?

Our answer: remove the writing, add a companion.

Keep the therapeutic mechanism — emotional disclosure, cognitive restructuring, narrative sense-making — but replace the blank page with a conversation. You don't write your diary. You talk it into existence. You share a photo of your day. And when you're done, someone writes back.

This is MoodBloom — made possible by Amazon Nova 2 Lite's ability to understand speech-derived text, interpret user images, and generate emotionally coherent responses within a single multimodal workflow.

🌱 What It Does

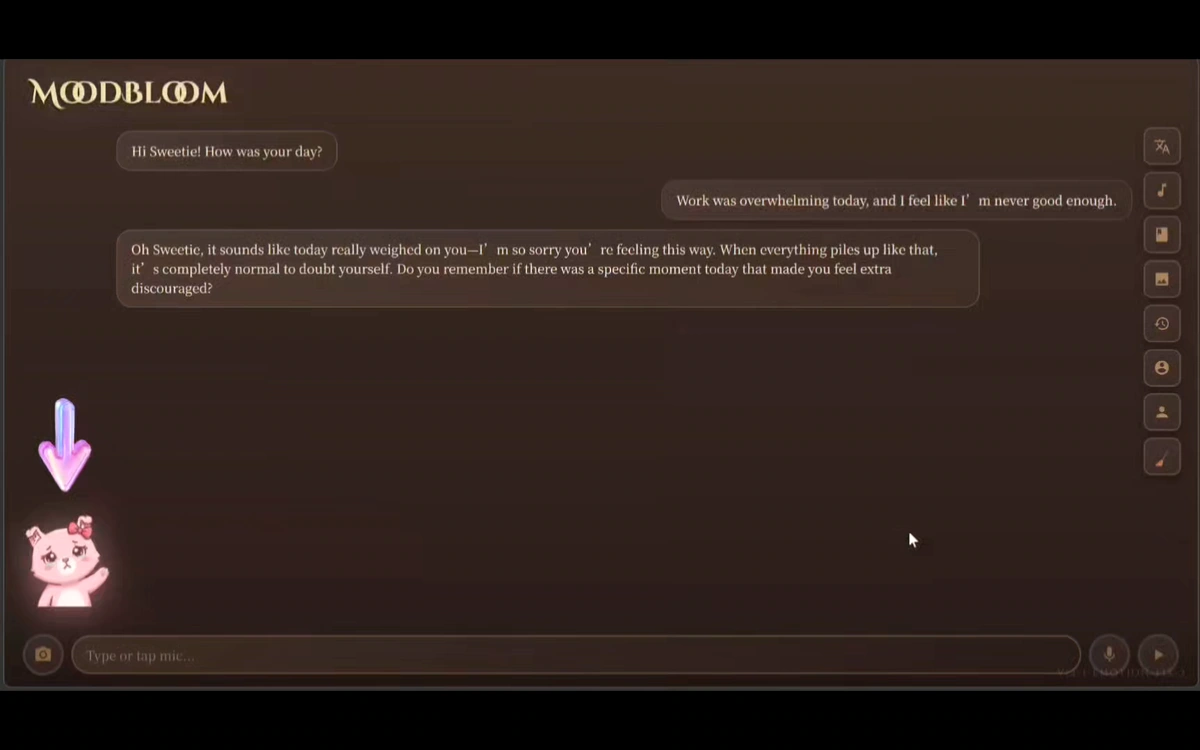

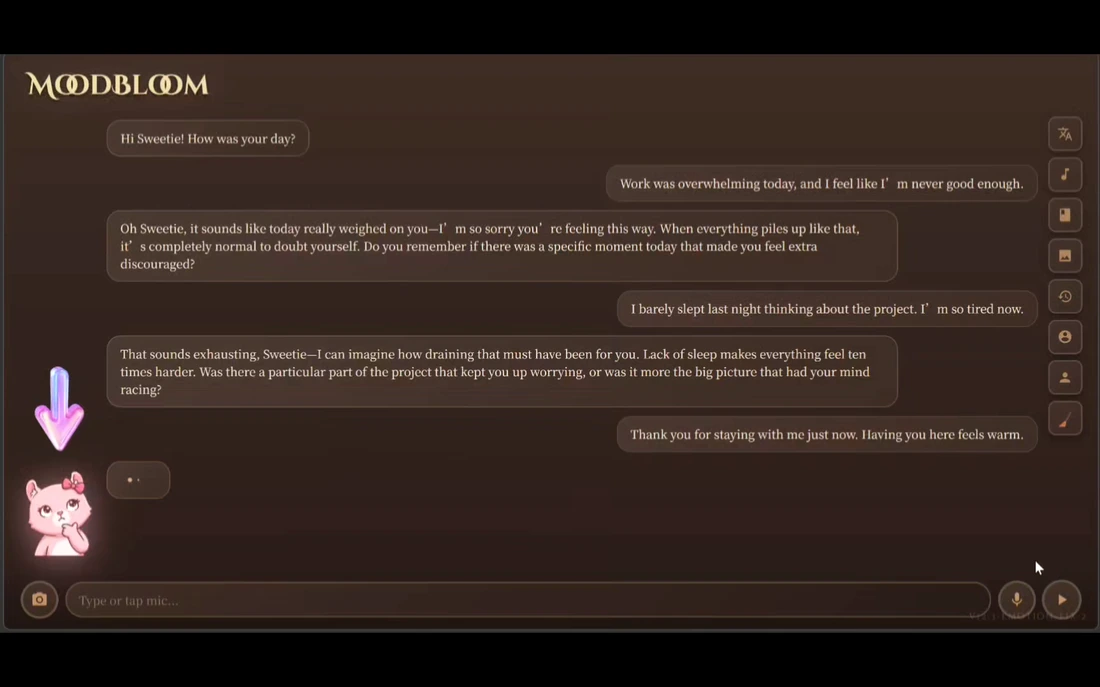

MoodBloom is an AI emotional companion that transforms voice conversations — and the moments you choose to photograph — into a therapeutic diary experience, with Amazon Nova providing the multimodal intelligence at its core.

The Core Therapeutic Loop

| Step | What Happens | Why It Matters |
|---|---|---|
| 🎙️ Talk | Speak naturally: no prompts, no structure. Pinky's hand-drawn expression changes in real time as you speak. | Removes blank-page friction. Voice activates different emotional processing pathways than typing. |
| 📷 Show | Share a photo. Nova 2 Lite reads the image and weaves it into the conversation. | A half-eaten lunch, a sunset, your cat on your keyboard: the photo becomes part of your story. |
| 🧠 Reflect | Nova 2 Lite tracks the emotional arc across the full conversation and generates empathic follow-up questions. | Not keyword matching, but contextual understanding of what you said, what you showed, and what you haven't said yet. |
| 📖 Receive | A gold button appears when Nova judges you've shared enough. Tap it: your diary on the left, Pinky's personal letter on the right. | Transforms journaling from monologue to dialogue. Testers consistently described this moment as "unexpectedly moving." |
| 🔊 Listen | Pinky reads your diary and letter back to you, sentence by sentence. | For users who arrived by voice, they can leave by voice. |
| 📚 Remember | Every diary auto-saves with an emotion emoji tag, building a longitudinal record of your emotional life. | The value of journaling isn't just in writing; it's in looking back. |

🎛️ Every Button Has a Reason

MoodBloom's toolbar wasn't designed for features — it was designed for emotional safety.

🌐 Language Toggle — One tap switches the entire experience — UI, AI conversation, speech recognition, and TTS — between English and Traditional Chinese. Not just label translation. The full voice pipeline switches with it (en-US ↔ zh-TW). For bilingual users in East Asia, code-switching is a daily reality. MoodBloom meets them where they are.

🎵 BGM Toggle — Plays and pauses the original composition 「Celestial Bloom」 — co-created by Chloe and Jimmy. Music auto-fades when you speak, returns when you pause. Psychology research shows soothing background music lowers emotional defenses, making people more willing to open up. BGM isn't decoration — it's therapeutic environment design.

📖 Write Diary (Manual Trigger) — Generates your diary immediately, bypassing Nova's readiness judgment. AI suggestions are not commands. Control always belongs to the user. Even if you only said "I'm tired today," you can still generate a diary.

📚 My Diaries — Opens your full diary library — every saved entry with emotion emoji tags. Each diary can be read, narrated aloud 🔊, copied 📋, or deleted 🗑️. Ten seed diaries (original compositions by Jimmy, bilingual) are pre-loaded so the experience is immediately meaningful — no empty state on Day 1.

👤 AI Name · 🧑 Your Name — Rename Pinky to anything you want. Tell MoodBloom your name. Both names appear throughout conversations, diaries, and letters. Naming is the first act of emotional connection. When Pinky knows your name and you've chosen theirs, closing the app feels like leaving a friend — not logging out of software.

🧹 New Diary — Clears the current conversation. Pinky greets you fresh. The gold button resets. One session = one diary. But sometimes you want to write twice in a day. This button makes that feel natural, not technical.

📷 Photo (Chat Bar) — Upload or photograph anything. Nova 2 Lite reads it and responds — "That lunch looks amazing" — and the image becomes part of your diary. Multimodal is Nova 2 Lite's core capability. We built the entire photo feature around it because a picture sometimes carries what words can't.

🎙️ Microphone (Chat Bar) — One tap to start, one tap to stop. Continuous listening mode with 2.5-second silence detection. Speaking flows more honestly than typing. For someone emotionally exhausted, typing is a burden. Talking only requires opening your mouth.

🐞 Debug Panel — Collapsible panel in the top-right corner. Shows speech recognition status, API calls, and system info in real time. Kept visible during demo to show we understand exactly what our system is doing at every moment.

🌸 Design Philosophy

Every button in MoodBloom follows three principles:

① The user always has control. Nova suggests when you're ready to write — but never forces it. You can override, rename, reset, or switch languages at any moment. The AI serves the human, not the other way around.

② Lower every barrier to emotional expression. Voice input instead of typing. Photo sharing instead of describing. BGM to soften the emotional environment. Nicknames to create safety. Every feature asks: what makes it easier to say what you actually feel?

③ More than a chatbot — a complete emotional workspace. The conversation is just the beginning. The diary is the artifact. The letter is the reply. The library is the memory. MoodBloom is designed as a full therapeutic loop, not a chat window with extra steps.

⚙️ How We Built It

🔧 Technical Decisions — Model & API Selection

We treated model access as an architectural decision, not just an API choice.

MoodBloom intentionally uses both official Amazon Nova integration paths — because different AI workloads have different requirements.

Nova 2 Lite → Nova Native API (reasoning layer). The conversational core (understanding text, reading photos, generating diaries) is accessed via the Nova Native API. This keeps the reasoning layer loosely coupled and portable: the primary path has no hard Bedrock dependency, so this agentic core can be reused across environments. It also maximizes developer agility during rapid iteration, where fast feedback loops matter most.

Nova Canvas → Amazon Bedrock (image generation layer). Visual content generation carries different operational requirements: access control, auditability, content safety governance. Bedrock is Nova Canvas's natural home, and routing image generation through Bedrock reflects a production-ready, enterprise-aligned access pattern.

The result: a dual-entry design that mirrors real-world enterprise architecture. Not all AI calls in a multimodal system should be routed the same way. Some workloads favor portability and agility; others favor managed access and governance. MoodBloom demonstrates both — in the same product, in the same session.

Same Nova family. Two access paths. Chosen by design.

Active-Passive Failover — Resilience by Design. For a mental wellness product, reliability matters emotionally as much as technically. MoodBloom routes conversational requests through Nova Native API as the primary path, with automatic Bedrock fallback if that path fails inside the same Lambda request flow. The illustration pipeline is protected differently: if Nova Canvas is unavailable, a pre-saved Pinky image preserves the visual state instead of leaving the user with a broken screen. The goal was not to promise perfect uptime, but to avoid turning transient service failures into visible emotional friction.

Uptime isn't a technical metric. It's a promise to users in vulnerable moments.
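The active-passive pattern described above can be sketched in a few lines. This is a minimal illustration, not the project's actual Lambda code: `callWithFailover`, `callPrimary`, and `callFallback` are hypothetical names, and the two callers stand in for whatever functions invoke the Nova Native API and Bedrock.

```javascript
// Hypothetical helper: try the primary caller first, and use the fallback
// only if the primary throws (network error, timeout, or a non-2xx response
// that the caller has mapped to an exception).
async function callWithFailover(request, callPrimary, callFallback) {
  try {
    return { source: "primary", reply: await callPrimary(request) };
  } catch (primaryError) {
    try {
      return { source: "fallback", reply: await callFallback(request) };
    } catch (fallbackError) {
      // Both paths failed: surface one error that keeps both causes for the logs.
      const error = new Error("Both primary and fallback paths failed");
      error.causes = [primaryError, fallbackError];
      throw error;
    }
  }
}
```

Returning `source` alongside the reply is a design choice for observability: the proxy can log which path served the request without the user ever noticing the switch.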

Mental wellness tools demand a specific kind of AI: one that can hold a multi-turn emotional conversation without losing context, understand what you show as naturally as what you say, and generate responses that feel warm without veering into clinical detachment. Nova 2 Lite delivers all three in a single model call.

Native multimodal architecture — text and images in the same message object — made it possible to build something previously unavailable in this category: a journaling companion that sees your world as you describe it. When a user uploads a photo of their empty desk after a long day and says "I finally finished the report," Nova doesn't just read the caption — it reads the desk, and it understands the relief.

OpenAI-compatible message format allowed us to maintain a complete multi-turn conversation history across the entire session — preserving emotional context from the first word to the final diary generation. No summarization. No memory loss. No "I'm sorry, I don't recall what you said earlier."
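As a rough sketch of what "text and images in the same message object" looks like in an OpenAI-style history: the helper names below are hypothetical, and the `image_url` content part with a base64 data URL follows the common OpenAI convention. Whether the Nova endpoint accepts exactly this shape is an assumption here.

```javascript
// Builds one OpenAI-style user message carrying text and, optionally, an image.
// Assumption: images travel as a base64 data URL inside an "image_url" part.
function buildUserMessage(text, imageBase64, mimeType = "image/jpeg") {
  const content = [{ type: "text", text }];
  if (imageBase64) {
    content.push({
      type: "image_url",
      image_url: { url: `data:${mimeType};base64,${imageBase64}` },
    });
  }
  return { role: "user", content };
}

// Appends a turn without mutating the existing history, so every earlier
// message is sent again on the next request and no context is dropped.
function appendTurn(history, message) {
  return [...history, message];
}
```

A session would start from the system prompt and grow one message at a time, for example `history = appendTurn(history, buildUserMessage("I finally finished the report", photoBase64))`.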

AWS infrastructure — Lambda for our API proxy, API Gateway for routing, S3 for static hosting, CloudFront for global CDN — meant we could deploy a production-grade, secure application with API keys safely locked in Lambda environment variables, never exposed to the browser. For a mental wellness tool where user trust is everything, that security architecture isn't optional. It's the foundation.

Architecture Overview

┌─────────────────── MoodBloom × Amazon Nova ────────────────────┐

│ │

│ 👤 User opens MoodBloom │

│ https://d1f4sz0ihfyr1i.cloudfront.net │

│ │

│ 🎵 Click → BGM「Celestial Bloom」starts (volume 15%) │

│ │

│ ┌──── Voice Conversation Loop ─────────────────────────────┐ │

│ │ │ │

│ │ 🎙️ Tap mic → speak (Web Speech API) │ │

│ │ → Browser STT transcribes in real time │ │

│ │ → BGM auto-fades to 2% (no interference) │ │

│ │ │ │

│ │ 🧠 Nova 2 Lite analyzes text + image (multimodal) │ │

│ │ → Pinky's expression switches (6 emotions) │ │

│ │ → Generates warm follow-up question │ │

│ │ │ │

│ │ 🔊 Pinky replies by voice (Browser TTS) │ │

│ │ → BGM fades out → fades back after response │ │

│ │ → Truncated to first 3 sentences (EN + ZH equal) │ │

│ │ │ │

│ │ 📷 Optional: photo → Nova 2 Lite multimodal reading │ │

│ │ → Compressed to 800px → woven into conversation │ │

│ │ │ │

│ │ 🎯 Nova judges content sufficient → gold button lights │ │

│ │ "Ready to Write" /「可以寫日記了」 │ │

│ │ │ │

│ └──────────────────────────────────────────────────────────┘ │

│ │

│ 📖 Diary Generation (tap gold button) │

│ ┌──────────────────────────────────────────────────────────┐ │

│ │ Nova 2 Lite generates in one call: │ │

│ │ ├─ 📝 ~400-word first-person diary │ │

│ │ │ (Golden Rule: ghostwriter, not novelist) │ │

│ │ ├─ 💌 200–300 word personal letter from Pinky │ │

│ │ ├─ 🏷️ Emotion tag (😊😢😡😌🥰😮) │ │

│ │ ├─ 🎨 Scene prompt extracted by Nova 2 Lite (30 words) │ │

│ │ └─ 🖼️ Prompt → Lambda /api/image → Nova Canvas │ │

│ │ → Base64 PNG returned → renders above diary │ │

│ │ → Pinky fallback image if generation fails ✅ │ │

│ └──────────────────────────────────────────────────────────┘ │

│ │

│ 💾 Auto-saved to localStorage (My Diaries 📚) │

│ → Narrate 🔊 / Copy 📋 / Delete 🗑️ │

│ → 10 seed diaries pre-loaded (Jimmy's originals, bilingual) │

│ │

└─────────────────────────────────────────────────────────────────┘

System Architecture

┌─────────────────────────────────────────────────────────────────┐

│ User's Browser │

│ ┌───────────────────────────────────────────────────────────┐ │

│ │ React 18 (Production) + Babel (Runtime JSX) │ │

│ │ Web Speech API (STT) │ │

│ │ SpeechSynthesis API (TTS) │ │

│ │ <audio> BGM (Celestial Bloom, manual fade) │ │

│ │ localStorage (via Storage Adapter abstraction layer) │ │

│ └──────────────┬──────────────────────┬─────────────────────┘ │

│ │ static files │ API requests │

└─────────────────┼──────────────────────┼────────────────────────┘

▼ ▼

┌─────────────────────────┐ ┌────────────────────────────────────┐

│ ☁️ AWS CloudFront │ │ ☁️ AWS API Gateway (HTTP API v2) │

│ (CDN + HTTPS) │ │ POST /api/nova │

│ d1f4sz0ihfyr1i │ │ OPTIONS /api/nova (CORS) │

│ .cloudfront.net │ │ │

└────────┬────────────────┘ └──────────────┬─────────────────────┘

▼ ▼

┌─────────────────────────┐ ┌────────────────────────────────────┐

│ ☁️ AWS S3 │ │ ☁️ AWS Lambda │

│ Static hosting │ │ moodbloom-nova-proxy │

│ ├─ index.html │ │ Runtime: Node.js 20.x (ESM) │

│ ├─ app.js (77KB) │ │ Timeout: 30s │

│ ├─ style.css │ │ Env vars (never in frontend): │

│ ├─ seed_diaries.js │ │ ├─ NOVA_API_KEY │

│ ├─ Celestial_Bloom.mp3 │ │ └─ NOVA_MODEL │

│ └─ assets/ (Pinky art) │ │ IAM Role: Bedrock InvokeModel │

│ │ │ (Nova Canvas — no API key needed) │

└─────────────────────────┘ └──────────────┬─────────────────────┘

▼

┌────────────────────────────────────┐

│ 🧠 Amazon Nova 2 Lite │

│ │

│ Multimodal inputs per call: │

│ ├─ Full conversation history │

│ ├─ User photo (base64) │

│ └─ System prompt (role + rules) │

│ │

│ Tasks in one model: │

│ ├─ Multi-turn conversation │

│ ├─ Emotion analysis │

│ ├─ Diary generation (~400 words) │

│ ├─ Companion letter (200–300w) │

│ └─ Emotion tag classification │

└────────────────────────────────────┘

Security Model

❌ Frontend (app.js) — zero API keys, zero secrets

│

│ POST /api/nova (no Authorization header)

▼

✅ AWS Lambda — reads NOVA_API_KEY from environment variables

│

│ POST + Authorization: Bearer [key]

▼

🧠 Amazon Nova 2 Lite API

For a mental wellness tool, security is trust. Users share their most vulnerable moments with MoodBloom. The API key architecture ensures that no credential ever touches the browser: not in code, not in network requests, not in DevTools.
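A minimal sketch of the key-injection step inside the proxy, assuming the environment variable names shown in the diagram (`NOVA_API_KEY`, `NOVA_MODEL`). The function name `buildUpstreamRequest` is hypothetical, and the upstream URL is deliberately left out because this write-up does not state it.

```javascript
// Turns the browser's request body into fetch() options for the upstream call.
// The key comes only from the Lambda environment, never from the client.
function buildUpstreamRequest(clientBody, env) {
  if (!env.NOVA_API_KEY) {
    throw new Error("NOVA_API_KEY is not configured");
  }
  return {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${env.NOVA_API_KEY}`,
    },
    // The server-side model name overrides anything the client sent.
    body: JSON.stringify({ ...clientBody, model: env.NOVA_MODEL }),
  };
}
```

In a Node.js 20 handler this would be used as `fetch(upstreamUrl, buildUpstreamRequest(body, process.env))`, with the browser never seeing the `Authorization` header.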

Privacy by Architecture — Not by Policy

MoodBloom is a personal wellness tool, not a medical device. No account registration means no identity collection. Diary entries are stored exclusively in the user's own browser localStorage — they never leave the device, never touch our servers, and are invisible to us by design.

The Lambda proxy is a stateless pass-through: conversation content routes from browser to Nova and back, with zero retention in our infrastructure. CloudWatch logs capture only execution metadata — RequestId, Duration, Memory — never message content. There is nothing to breach because there is nothing stored.

Your feelings stay yours.

Rate Limiting & Abuse Prevention

Production-grade protection across three layers:

| Layer | Protection | Setting |
|---|---|---|
| API Gateway | Rate limiting | 10 req/sec, burst cap 50 |
| Lambda | Concurrency limit | Max 10 simultaneous executions |
| Request guards | Conversation length | Max 20 turns per session |
| Request guards | Message size | Max 5,000 characters per message |
| Request guards | Token enforcement | max_tokens capped server-side; frontend cannot override |

For a mental wellness app, abuse prevention isn't just about cost — it's about ensuring availability for the users who need it most.
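Two of the request guards from the table, the turn limit and the server-side `max_tokens` cap, could look roughly like this. It is a sketch under assumptions: a "turn" is counted as one user message, the cap value `SERVER_MAX_TOKENS` and the 429 status are hypothetical, and `applyRequestGuards` is not the project's real function name.

```javascript
const MAX_TURNS = 20;           // from the table above
const SERVER_MAX_TOKENS = 1024; // hypothetical value; the real cap is not stated

// Returns either a rejection or a sanitized copy of the request body.
function applyRequestGuards(body) {
  const messages = Array.isArray(body.messages) ? body.messages : [];
  const userTurns = messages.filter((m) => m.role === "user").length;
  if (userTurns > MAX_TURNS) {
    return { ok: false, status: 429, error: "Conversation too long" };
  }
  // Whatever the frontend asked for, the result never exceeds the server cap.
  const requested = Number.isFinite(body.max_tokens) ? body.max_tokens : SERVER_MAX_TOKENS;
  const max_tokens = Math.min(Math.max(1, requested), SERVER_MAX_TOKENS);
  return { ok: true, body: { ...body, messages, max_tokens } };
}
```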

Why No Login System?

A deliberate product decision: MoodBloom has no account registration, no login wall, and no CAPTCHA.

For a mental wellness tool, friction is the enemy. Someone reaching for MoodBloom at 11 PM after a hard day doesn't need to verify their email or solve a puzzle to prove they're human. They need to talk.

Traditional abuse prevention pushes the burden onto users — making everyone prove their innocence before they can begin. We inverted that model: protect at the infrastructure layer, stay invisible to the user.

Rate limiting, concurrency caps, and server-side guards run silently in the background. The person who needs MoodBloom tonight never knows they're there — and that's exactly the point.

Tech Stack

Amazon / AWS Services

| Service | Role | Notes |
|---|---|---|
| Amazon Nova 2 Lite | AI brain + multimodal core | Conversation, emotion analysis, image understanding, diary generation, companion letter, emotion tags, all in one model |
| AWS Lambda | Backend proxy | Node.js 20 ESM, securely injects API key |
| AWS API Gateway | HTTP routing | HTTP API v2, POST + OPTIONS routes |
| AWS S3 | Static file hosting | 5 frontend files + assets, zero server maintenance |
| AWS CloudFront | CDN + HTTPS | Global acceleration: fast load for Macau, Taiwan, Hong Kong |
| Amazon Nova Canvas | AI diary illustration | A2A creative handoff from Nova 2 Lite: scene prompt → watercolor illustration (via Bedrock SDK + Lambda IAM Role) |

Frontend

| Technology | Role | Notes |
|---|---|---|
| React 18 | UI framework | CDN production build |
| Web Speech API | Voice input (STT) | Browser-native, en-US / zh-TW |
| SpeechSynthesis API | Voice output (TTS) | Sentence-count truncation (first 3 sentences) |
| HTML5 Audio | BGM playback | Manual fade; avoids AudioContext conflict with STT |
| localStorage | Diary storage | Storage Adapter abstraction layer, S3-ready |

AI Collaboration Team

This project was built through a structured AI-assisted methodology — a human product designer coordinating a team of AI collaborators, each contributing distinct strengths across the product lifecycle.

| Handle | Role | Key Contributions |
|---|---|---|
| 阿寶 (LLM) | Lead frontend dev + architect | React UI, speech recognition, TTS, BGM, diary generation logic, pre-deployment security audit, Lambda production version, end-to-end integration |
| Jimmy (LLM) | Backend engineer + creative partner | Lambda + API Gateway initial build, 10 original seed diaries, BGM 「Celestial Bloom」 co-creation |
| 曦 (LLM) | Debugging specialist + code validator | AWS deployment debugging (CORS diagnosis, ESM fixes, CloudWatch analysis), code verification |
| Chloe | Product director + designer | Product design, UX decisions, clinical framework, AI coordination, testing, story writing |

MoodBloom was built the same way it works — through structured collaboration, with each contributor doing what they do best. Every architectural decision was stress-tested across multiple perspectives. The product is the methodology made visible.

🏔️ Challenges We Ran Into

Four days. A five-layer AWS stack. Twenty-two things that broke along the way. Here's the honest record.

#1 — Nova 2 Sonic's Architecture Surprise. We planned to use Nova 2 Sonic for TTS — but it required bidirectional WebSocket streaming + AWS IAM auth, with a hacky "crossmodal" mode. Completely impossible in a pure frontend HTML app. Switched to the browser's built-in speechSynthesis API. Zero cost, zero latency, works offline.

#2 — CORS: The Wall Between Frontend and Nova API. Browser cross-origin security policy blocked direct calls to api.nova.amazon.com. Free CORS proxies stripped the Authorization header. Built an 80-line Node.js local proxy (start_server.js) that solved CORS bypass, API key security, and mic access simultaneously.

#3 — Pinky's "Fake Transparency" Incident. Asked an AI to generate transparent PNGs for Pinky's 6 expressions. Files were actually JPEGs masquerading as .png — checkerboard pattern appeared. Wrote a Python PIL script to auto-remove backgrounds and convert to true RGBA PNGs.

#4 — API Migration Format Differences. Moving from contents[{parts:[{text}]}] to Nova's OpenAI-compatible messages[{role, content}] format. Full migration preserved emotional context across conversations.
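The migration in #4 amounts to a small shape conversion. The sketch below assumes the old entries carried a `role` of `"user"` or `"model"` next to their `parts`, which this write-up does not confirm; `toMessages` is a hypothetical name.

```javascript
// Converts contents[{ role, parts: [{ text }] }] into messages[{ role, content }].
// Assumption: the old format used "model" where the new one uses "assistant".
function toMessages(contents) {
  return contents.map((entry) => ({
    role: entry.role === "model" ? "assistant" : (entry.role || "user"),
    content: (entry.parts || []).map((part) => part.text || "").join(""),
  }));
}
```

Because every earlier turn is converted rather than dropped, the emotional context built up before the migration carries over unchanged.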

#5 — Sustained Focus Under Constraints. Building MoodBloom meant managing deep technical complexity across compressed time windows — 15–20 minutes of focused work per session, eyes burning after every round. The AI collaboration model was designed specifically to make progress sustainable: structured handoffs, documented decisions, no knowledge lost between sessions. Nova didn't just power the product. It made the development process itself sustainable.

#6 — "I Was Chatting With a Friend" — Diary Perspective Collapse. The diary generator wrote in third person about "a friend who asked questions" — completely reversing who was who. Three-pronged fix: diary prompt receives only user utterances, strict "do not recap the conversation" rule, and Pinky's letter prompt explicitly establishes perspective.
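The first prong of that fix, passing only the user's own words to the diary prompt, can be sketched as a filter over the conversation history. `userUtterances` is a hypothetical name, and the handling of array-style content assumes OpenAI-style text parts.

```javascript
// Keeps only what the user said, dropping system and assistant messages,
// so the diary writer has no "friend who asked questions" to narrate.
function userUtterances(messages) {
  return messages
    .filter((m) => m.role === "user")
    .map((m) =>
      typeof m.content === "string"
        ? m.content
        : m.content
            .filter((part) => part.type === "text")
            .map((part) => part.text)
            .join(" ")
    )
    .filter((text) => text.trim().length > 0);
}
```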

#7 — Voice Recognition's "One Sentence, One Tap" Hell. Browser Speech API default: detect pause → end recognition. Implemented continuous listening mode — one tap start/stop, auto-restart on onend, 2.5-second silence auto-submit.
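The 2.5-second silence auto-submit can be modeled as a countdown that restarts whenever new speech arrives. This is a sketch, not the shipped code: `createSilenceSubmitter` and the injectable `timers` object are hypothetical, added so the logic can be exercised without a browser.

```javascript
const SILENCE_MS = 2500;

// Wrapped so the browser's setTimeout is never called with a foreign `this`.
const realTimers = {
  set: (fn, ms) => setTimeout(fn, ms),
  clear: (handle) => clearTimeout(handle),
};

function createSilenceSubmitter(onSubmit, timers = realTimers) {
  let buffer = "";
  let handle = null;
  return {
    // Call with each final transcript chunk; restarts the silence countdown.
    push(chunk) {
      buffer = (buffer + " " + chunk).trim();
      if (handle !== null) timers.clear(handle);
      handle = timers.set(() => {
        handle = null;
        const text = buffer;
        buffer = "";
        if (text) onSubmit(text);
      }, SILENCE_MS);
    },
    // Call when the user taps stop; drops the countdown without submitting.
    cancel() {
      if (handle !== null) timers.clear(handle);
      handle = null;
      buffer = "";
    },
  };
}
```

The other half of continuous mode, restarting recognition from the `onend` handler while the mic is still toggled on, lives outside this helper.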

#8 — AWS Five-Layer Deployment: The Russian Nesting Dolls. We assumed "just upload the code" — then discovered five layers deep: S3 → API Gateway → Lambda → Nova API → CloudFront. Each with its own config, permissions, and format requirements. The most memorable bug: API Gateway's /prod stage prefix causing Lambda to return 404.
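One way to make a Lambda router tolerant of the stage prefix is to strip it before matching routes. The sketch assumes the handler can read the request path and the stage name from the incoming event (in the HTTP API v2 payload these are commonly `event.rawPath` and `event.requestContext.stage`); `routePath` is a hypothetical helper, not the fix the team actually shipped.

```javascript
// Removes a leading "/<stage>" segment so "/prod/api/nova" matches "/api/nova".
function routePath(rawPath, stage) {
  const prefix = `/${stage}`;
  if (stage && (rawPath === prefix || rawPath.startsWith(prefix + "/"))) {
    return rawPath.slice(prefix.length) || "/";
  }
  return rawPath;
}
```

Matching on `prefix + "/"` rather than on the bare prefix keeps a path like `/production/x` from being mistaken for the `prod` stage.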

#9: Speech Recognition's "Ghost Overwrite" Bug. Each onresult event exposes the full results list, starting at index 0. Looping from 0 reprocessed old final results on every event. Fix: start the loop at event.resultIndex. One line change.
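A sketch of the corrected loop for #9. `event.resultIndex`, `event.results`, `isFinal`, and `transcript` are the standard Web Speech API fields; the function name `newFinalTranscripts` is hypothetical.

```javascript
// Returns only the final transcripts that are new in this event.
// Starting at event.resultIndex skips results already handled by earlier events.
function newFinalTranscripts(event) {
  const finals = [];
  for (let i = event.resultIndex; i < event.results.length; i++) {
    const result = event.results[i];
    if (result.isFinal) finals.push(result[0].transcript);
  }
  return finals;
}
```

It would be wired up as `recognition.onresult = (event) => newFinalTranscripts(event).forEach(handleUtterance)`, where `handleUtterance` is whatever consumes the text.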

#10: Long TTS "Survival Truncation." speechSynthesis cuts off long text, so narration is queued sentence by sentence. Pressing stop fired the current sentence's onend event, which started the next sentence. Introduced a global _ttsAborted flag. On stop: set the flag first, then cancel. Every sentence checks the flag before speaking.
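The abort-flag idea in #10 can be sketched with the speech calls injected, which keeps the ordering logic visible. Assumptions: `speakSentence` returns a promise that settles when the utterance ends (including when it is cancelled), `cancelSpeech` wraps `speechSynthesis.cancel()`, and `createNarrator` is a hypothetical name standing in for the global `_ttsAborted` approach.

```javascript
function createNarrator(speakSentence, cancelSpeech) {
  let aborted = false;
  return {
    // Speaks sentences in order; resolves to true only if all were spoken.
    async narrate(sentences) {
      aborted = false;
      for (const sentence of sentences) {
        if (aborted) return false; // checked before every sentence
        await speakSentence(sentence);
      }
      return !aborted;
    },
    stop() {
      aborted = true;  // set the flag first...
      cancelSpeech();  // ...then cancel, so the resulting end event cannot start the next sentence
    },
  };
}
```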

#11 — "First Word Swallowed" — Mic Warm-Up Delay. Status showed "Listening" but the first second was lost. Fix: pre-heat hardware with getUserMedia({audio:true}), three-phase UX prompt, and 800ms timeout retry. Final refinement: added 500ms UI delay before the microphone button turns red — ensuring Speech Recognition is truly ready before the user speaks. The button becoming active is the signal, not just a visual.

#12 — The English Diary "Hallucination Trilogy." Same input in Chinese worked perfectly; English produced a novel. Three iterations: too loose → too strict → Golden Rule ("ghostwriter, not novelist"). Temperature settled at 0.6 for English. Lesson: bilingual prompts can't just be translated.

#13 — TTS "80-Character Trap." Chinese 80 chars = 3–4 sentences. English 80 chars = 1 sentence. Switched to sentence-count truncation (first 3 sentences). Both languages treated equally.
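Sentence-count truncation that treats both languages the same could look like this. It is a sketch: `firstSentences` is a hypothetical name, and the regular expression is a simple heuristic that will also split on decimals or abbreviations such as "3.5" or "e.g.".

```javascript
// Keeps the first `maxSentences` sentences. A sentence ends at ASCII or
// full-width terminal punctuation, plus any closing quotes that follow it.
function firstSentences(text, maxSentences = 3) {
  const sentences = text.match(/[^.!?。！？]+[.!?。！？]+["'”’」』]*\s*/g);
  // Short or unpunctuated text is returned whole rather than cut.
  if (!sentences || sentences.length < maxSentences) return text.trim();
  return sentences.slice(0, maxSentences).join("").trim();
}
```

Counting sentences instead of characters is what removes the asymmetry: the same limit yields a comparable amount of speech in English and in Chinese.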

#14: Seed Diary Disappearance. Replaced seedIfEmpty() with ensureSeeds(). The logic changed from "load when empty" to "check and fill missing," and it never overwrites user data.
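A "check and fill missing" pass might be sketched as follows. The storage argument is any object with `getItem` and `setItem` (browser localStorage, or a Storage Adapter exposing the same two methods); the `"diaries"` key and the `id` field on each diary are assumptions made for this illustration.

```javascript
// Adds any seed diary whose id is not already stored. Existing entries,
// including seeds the user has edited, are left exactly as they are.
function ensureSeeds(storage, seeds, key = "diaries") {
  const existing = JSON.parse(storage.getItem(key) || "[]");
  const knownIds = new Set(existing.map((diary) => diary.id));
  const missing = seeds.filter((seed) => !knownIds.has(seed.id));
  if (missing.length > 0) {
    storage.setItem(key, JSON.stringify([...existing, ...missing]));
  }
  return missing.length; // how many seeds were added
}
```

One consequence of this design worth noting: a seed the user deletes would come back on the next run unless deletions are tracked separately.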

#15 — BGM's AudioContext Conflict with Speech Recognition. Web Audio API and Web Speech API share audio resources. Abandoned AudioContext entirely. Used primitive <audio> element + setInterval for manual fade. Not elegant, but rock-solid.
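The manual fade can be sketched with nothing more than `setInterval` and the element's `volume` property. `fadeVolume` is a hypothetical name, and the duration and step size below are illustrative defaults rather than the values used in the app.

```javascript
// Moves audio.volume toward `target` in equal steps and returns a function
// that cancels the fade. `audio` is an <audio> element (or anything with `volume`).
function fadeVolume(audio, target, durationMs = 600, stepMs = 50) {
  const steps = Math.max(1, Math.round(durationMs / stepMs));
  const delta = (target - audio.volume) / steps;
  let remaining = steps;
  const timer = setInterval(() => {
    remaining -= 1;
    // Land exactly on the target at the last step to avoid floating-point drift,
    // and clamp in between because volume must stay within [0, 1].
    audio.volume = remaining === 0 ? target : Math.min(1, Math.max(0, audio.volume + delta));
    if (remaining === 0) clearInterval(timer);
  }, stepMs);
  return () => clearInterval(timer);
}
```

Ducking the music while the user speaks would then be `fadeVolume(bgm, 0.02)`, and restoring it afterwards `fadeVolume(bgm, 0.15)`, matching the 15% and 2% levels in the architecture overview.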

#16 — Pre-Deployment Audit: Three Hidden Time Bombs. API Key in frontend source code. Race condition permanently entering Test Mode. Development React bundle 10× production size. All three fixed before deployment.

#17 — Debug Panel Blocking the Gold Button. SR debug overlay covered the diary generation button. Moved to top-right corner, collapsible, default shows tiny 🐞 icon.

#18 — AWS Deployment Final Battle: From "Echo Returned" to "Nova Replied." Phase 1 (曦): require() in ESM → 500. Syntax corruption from copy-pasting code. Clean-file overwrite strategy. Phase 2 (阿寶): 3-second timeout, wrong model name (nova-lite → nova-2-lite-v1), frontend URL not updated, S3 serving old file + CloudFront cache. Total: 4+ days, 16 bugs. MoodBloom went live.

#19 — "500 With CORS Headers" — The Cognitive Trap. DevTools showed Failed to fetch. Everyone assumed CORS. Actually Lambda 500 with CORS headers present. Iron rule: don't guess, read the logs.

#20 — Windows PowerShell ≠ curl. PowerShell's curl is an alias for Invoke-WebRequest. Completely different syntax. No documentation warns you until you hit the wall.

#21 — From Dead Feature to AI-to-AI Pipeline. Pollinations.ai image generation failed silently throughout local development — every test returned nothing. We built a Pinky fallback image to cover failures gracefully, and quietly moved on. Then we deployed to AWS. Suddenly, the illustrations were there — the culprit was a wrong model name (nova-lite → nova-2-lite-v1) in the Lambda proxy that had silently broken the image prompt generation all along. But the story didn't end there. With the feature working, we made a deliberate architectural decision: replace Pollinations.ai entirely with Amazon Nova Canvas — Amazon's own image generation model. This meant building a new Lambda route (/api/image), wiring up Bedrock SDK, and configuring IAM permissions. The result transformed a fragile third-party dependency into a fully Amazon-native A2A pipeline: Nova 2 Lite extracts the scene, Nova Canvas paints it. Two Amazon AIs. One creative handoff. The fallback system we built "just in case" is still there — and the user never sees a broken image. Ever.

#22 — Photo Upload "Message Too Long" — The Friendly Fire Bug.

Lambda's own defense layer was blocking photo uploads before they ever reached Nova.

The MAX_CONTENT_LENGTH check serialized image content as a JSON string, inflating a photo to 40,000+ characters.

Fix: one Array.isArray() guard before the length check.

The defender was shooting its own team.
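One possible shape for that guard, as a sketch: measure only the text the user typed or spoke, and leave image parts out of the character count. The names `textLength` and `isMessageTooLong` are hypothetical, and the actual fix may simply have skipped the check for array content; image size would still need its own limit.

```javascript
const MAX_CONTENT_LENGTH = 5000; // characters per message

// Counts characters of text only. Multimodal content arrives as an array of
// parts, so stringifying it would count the base64 image as "message length".
function textLength(content) {
  if (Array.isArray(content)) {
    return content
      .filter((part) => part.type === "text")
      .reduce((sum, part) => sum + String(part.text || "").length, 0);
  }
  return String(content ?? "").length;
}

function isMessageTooLong(message) {
  return textLength(message.content) > MAX_CONTENT_LENGTH;
}
```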

🌟 Accomplishments We're Proud Of

🏆 AI Orchestration Pipeline — Three AIs, One Emotional Loop. MoodBloom doesn't just use AI. It orchestrates a pipeline of AI agents, each handling what it does best: Nova 2 Lite serves as the emotional intelligence layer — understanding your words, reading your photos, generating your diary and letter. Then it passes the baton: distilling the diary into a single 30-word scene description and handing it to Nova Canvas, Amazon's dedicated image generation model, which paints a unique watercolor illustration for every diary entry. Finally, the browser's TTS engine reads the diary aloud. Three AIs. One tap. A complete emotional processing loop.

This is an AI-to-AI creative handoff within the Amazon Nova ecosystem — not a single model doing everything, but a choreographed handoff where Nova 2 Lite acts as director and Nova Canvas acts as visual artist. The result: five modalities in one session — voice input, image input (photo understanding), text output (diary + letter), image output (AI illustration), and voice output (TTS reading). The illustration pipeline includes graceful resilience: if Nova Canvas is unavailable for any reason, a pre-saved Pinky image appears instantly. The user never sees a broken image. Ever.

🏆 Original BGM「Celestial Bloom」— Music Therapy Discovery. The original background music co-created by Chloe and Jimmy (722KB, royalty-free) transformed the entire product atmosphere — from "AI chat tool" to "healing emotional space." For a mental wellness product, this isn't decoration. This is core experience design.

🏆 Pinky's Emotional Expression System. Six emotional states switching in real time based on conversation context. From thinking face (when you're speaking) to heart-eyes (diary complete). The experience shifts from "talking to an app" to "talking to someone who feels it."

🏆 Intelligent Diary Trigger. Nova analyzes the full conversation — text and images — to judge material sufficiency. The gold button appears like a natural pause, never an immersion-breaking prompt.

🏆 Bilingual as System Engineering, Not Translation. Five independent layers: prompt tuning, TTS truncation, temperature calibration, UI copy, speech recognition codes. True workload: single-language × 1.8.

🏆 AI Vision-Assisted Debugging — Screenshot as Bug Report. Chloe screenshots the UI and shares it with 阿寶, who uses the image to identify visual issues and narrow down likely problem areas faster than text-only descriptions in many cases.

🏆 Three-Phase Prompt Tuning Methodology. Too loose → too strict → Golden Rule. Role definition replaces rule lists. Temperature micro-tuning, one variable at a time. Reusable across projects.

🏆 Active-Passive Failover: Resilience by Design. MoodBloom's conversational core runs on the Nova Native API with automatic Bedrock failover: if the primary call fails, the same Lambda request retries through Bedrock, so the user does not see the switch. The illustration pipeline has its own fallback: if Nova Canvas is unavailable, Pinky's pre-saved image appears instead. Two resilience layers, designed so that transient failures don't surface as broken screens. For a mental wellness app, this isn't over-engineering. It mirrors enterprise-grade reliability standards where uptime is a user promise, not a nice-to-have.

🏆 Five-Layer AWS Deployment — Validated End-to-End. CloudFront → S3 → API Gateway → Lambda → Nova API. Four days, sixteen bugs documented and resolved. By the end of the process, the team could diagnose service-layer failures with precision — distinguishing Lambda crashes from CORS misconfigurations, CloudFront cache staleness from S3 routing errors. Operational understanding earned through systematic debugging.

🏆 AWS Deployment Manual — Turning Bugs Into Assets. Every failure compiled into a reusable deployment guide. Structured documentation converts debugging experience into transferable engineering knowledge.

🏆 Nova Unlocked a New Creative Category. Amazon Nova made it possible for a clinician with deep domain knowledge to move directly from clinical observation to shipped product — without the gap that typically separates domain experts from technical execution. The methodology — anti-hallucination verification, cost-benefit evaluation across AI options, task division by model strength, iterative validation at each layer — is documented, repeatable, and already applied across four shipped products. MoodBloom isn't a one-off. It's proof of what Nova makes possible when domain expertise and AI capability meet.

🏆 From Clinical Research to Product Design. MoodBloom's design is grounded in Expressive Writing research. Nova's follow-up questions guide emotional exploration — not advice, not therapy. A clinically conscious product, not just a technology demo.

📚 What We Learned

- Multimodal isn't a feature — it's a new category of empathy. When Nova sees the photo alongside the words, the conversation becomes qualitatively different. Users don't describe their day — they share it.

- Don't guess. Read the logs. The iron rule of AWS debugging. "Failed to fetch" could be CORS or Lambda 500. Test with curl, check CloudWatch. Ten guesses cost more than one log read.

- "Echo works" ≠ "feature complete." Lambda returning 200 only proves the pipeline is open. Test all the way to an actual Nova response.

- AWS deployment isn't "just upload it." Five layers, five personalities. The only way through is one layer at a time, with logs open.

- Companionship drives retention. Features don't. The single most impactful decision was giving users a letter back. MoodBloom is a conversation, not a monologue.

- Browser APIs are full of surprises. onresult accumulates history. speechSynthesis truncates long text. None of this is in the documentation. It's in the bugs.

- Bilingual is systems engineering, not translation. Every layer can produce language-asymmetric bugs. Build it into the architecture.

- Three AIs beat one AI — but only with a human conductor. Each AI excels at different things. Knowing when to switch is the core human skill.

- The Storage Adapter pays for itself. 30 extra minutes early. Hours saved later.

- Deployment is the best teacher. Code that runs locally behaves differently in the cloud. 4 days of AWS battle taught more than 10 tutorials.

- Different AI collaborators excel at different tasks. 阿寶, 曦, and Jimmy were most effective when their responsibilities were clearly divided.

🔮 What's Next for MoodBloom

Near-term

- 🔊 Amazon Polly TTS — Replace browser TTS with Amazon's neural voice, giving Pinky warmer, more expressive speech — fully within the AWS ecosystem

- ☁️ S3 Cloud Diary Sync — Storage Adapter → S3, enabling cross-device access and longitudinal emotional tracking

Medium-term

- 📊 Emotion Analytics + Weekly Mood Reports — Cross-entry analysis surfacing patterns ("Your stress mentions peak on Sundays")

- 🧠 Clinician Dashboard (with user consent) — Aggregated emotional summaries for family medicine workflows

- 🌍 Multilingual Expansion — Japanese, Korean (each requiring independent prompt + TTS calibration)

Long-term Vision

We believe expressive writing is an under-deployed clinical tool — not because it doesn't work, but because the delivery mechanism hasn't kept up. Nobody sits down with a leather journal at 9 PM anymore. But everyone talks to their phone.

MoodBloom reimagines therapeutic writing for the voice-first, multimodal generation: clinical evidence, delivered through conversation, with a companion who writes back — powered by Amazon Nova, at the moment you need it most.

Built With

- amazon-nova-2-lite

- amazon-nova-canvas

- amazon-web-services

- aws-api-gateway

- aws-bedrock

- aws-cloudfront

- aws-lambda

- aws-s3

- babel

- html5-audio

- javascript

- node.js

- react-18

- speechsynthesis-api

- web-speech-api
