Inspiration

I've been a monday.com user for a few years now and use it quite a bit for roadmap planning, task management, team comms, and so much more! I wanted to bridge the gap of what I've been working on in conversational AI and accessibility over the last few years with the same productivity tools I use to work on that technology. This led me to a few key points:

- Build something for everyone

- Build something to use anywhere

- Build something actually useful

- Build something production ready

- Teach people along the way

With all of that together, I set out to build the Monday Manager - a voice and conversational assistant that lets you interact with your Monday boards, items, and more!

What it does

Monday Manager lets you interact with your monday.com account in an entirely new way - with your voice! It's currently available as an Alexa Skill and a Google Action, but with more platforms to come. To get started simply:

- Enable the Skill/Action

  - On Alexa, once the skill is in the skill store, say "Alexa, enable Monday Manager" or find it in the skill store from the Alexa mobile app. Note: at the time of this project submission, the Alexa skill is still in review and not publicly available.

  - On Google, say "Hey Google, talk to Monday Manager", or use this link to the actions directory

- Link your Amazon/Google account to your monday.com account

  - On Alexa, go to the Alexa app, then to the Monday Manager skill, and select "settings" to link your account. Then sign in with your Monday account and give the Monday Manager permission to access your boards.

  - On Google, just say "Talk to Monday Manager" and the sign-in process will start right away.

  - Once you've linked your account, you're good to go and no longer need to sign in.

  - Note: you need at least "editor" permissions within your Monday team to use the app

- Start talking to the Monday Manager and interacting with your boards

You can ask all sorts of questions including major features like:

- Iterating through your boards

- Iterating through each item

- Getting board details

- Adding new items directly into groups

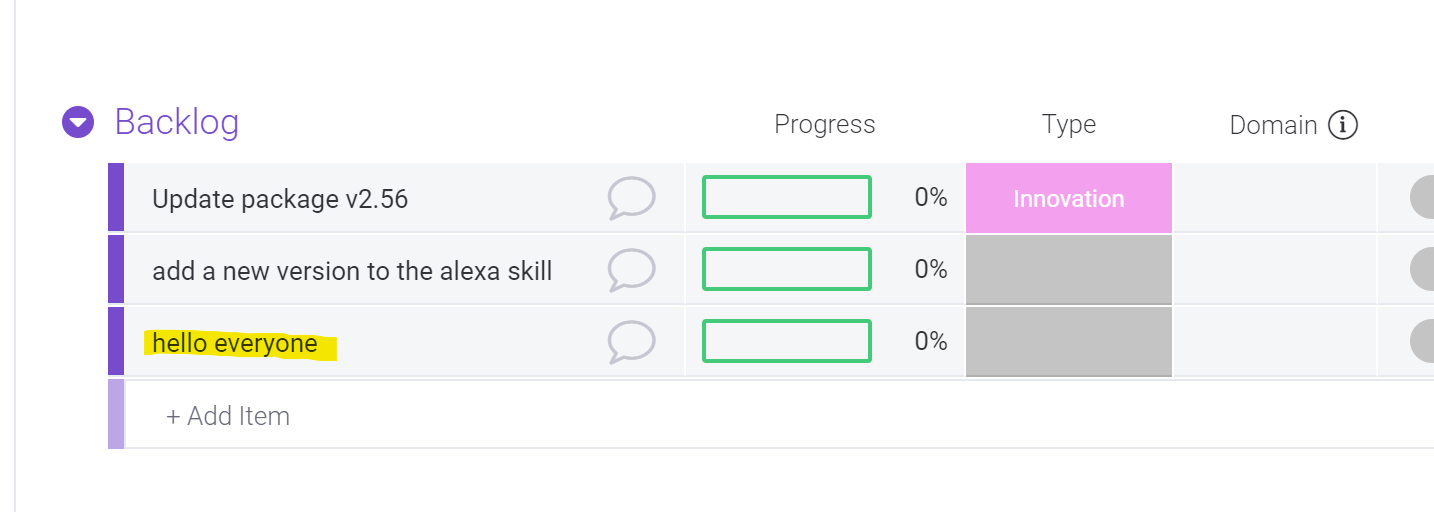

Then you can immediately see the result in your monday boards:

There are also a number of experimental features shown in the video which only work for certain users such as:

- Pushing task dates in bulk

- Getting aggregated information about the items you are assigned to

As the system gets smarter, these features will start to be enabled for all users. You can read more about it in the "Challenges I ran into" section.

How I built it

First off, most of the app has been built live on my stream as a means to help teach developers how to implement similar functionality along the way.

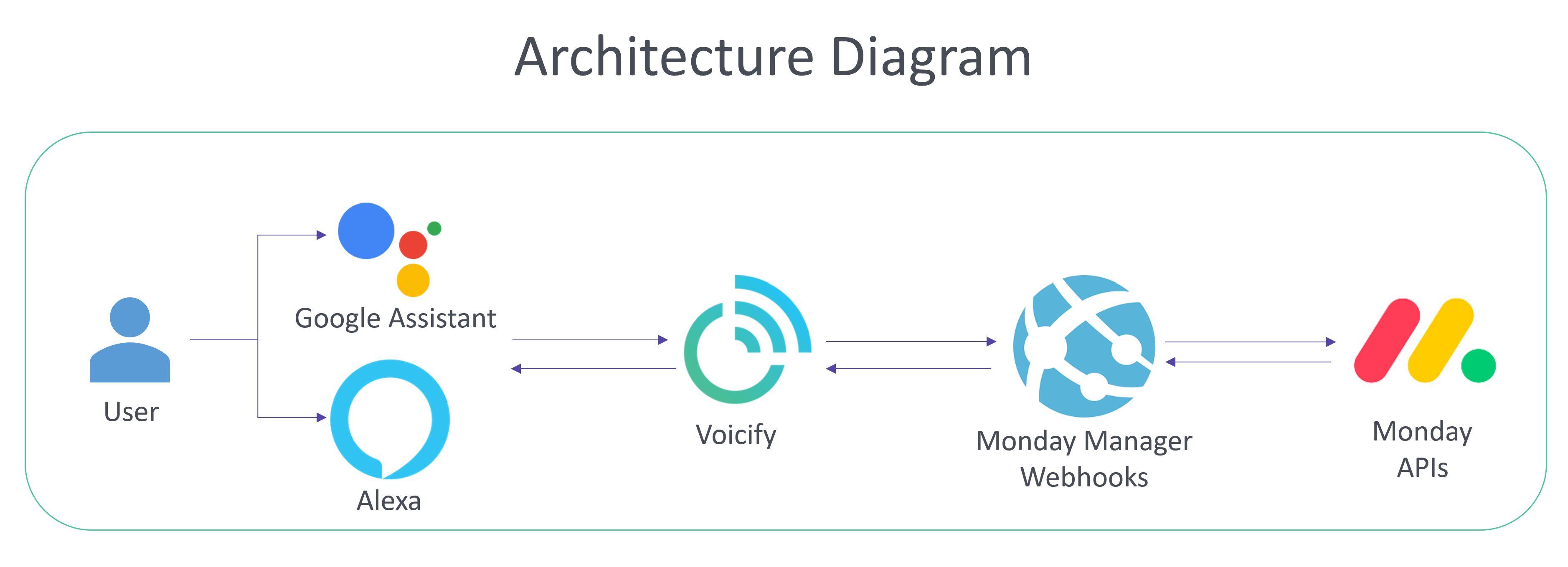

In terms of high-level structure, it basically looks like this:

The underlying flow is basically:

- User speaks to their device

- Device sends request to assistant service

- Assistant service sends request to the underlying Voicify app

- Voicify sends webhook requests to the Monday Manager API (when applicable)

- Monday Manager API talks directly to the Monday GraphQL API

- Monday Manager API handles business logic for how to turn data into responses

- Voicify responds to assistant service with the output after mapping it to the proper output

- Device speaks and displays the result
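As a rough illustration of the step where the Monday Manager API talks to the Monday GraphQL API, the sketch below builds (but does not send) an HTTP request carrying a GraphQL query. The class and method names are hypothetical and not taken from the project; the endpoint URL and the bare-token `Authorization` header are assumptions based on monday.com's public API conventions and should be checked against the official docs.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Text.Json;

public static class MondayRequestSketch
{
    // Assumed endpoint for monday.com's GraphQL API; confirm against the official docs.
    private const string MondayApiUrl = "https://api.monday.com/v2";

    // Builds (but does not send) a POST request carrying a GraphQL query.
    public static HttpRequestMessage BuildBoardsRequest(string accessToken)
    {
        // GraphQL over HTTP wraps the query text in a JSON object under "query".
        var body = JsonSerializer.Serialize(new
        {
            query = "query { boards (limit: 10) { id name } }"
        });

        var request = new HttpRequestMessage(HttpMethod.Post, MondayApiUrl)
        {
            Content = new StringContent(body, Encoding.UTF8, "application/json")
        };

        // The token obtained through account linking identifies the user.
        request.Headers.TryAddWithoutValidation("Authorization", accessToken);
        return request;
    }

    public static void Main()
    {
        var request = BuildBoardsRequest("example-token");
        Console.WriteLine(request.RequestUri);
        Console.WriteLine(request.Content.ReadAsStringAsync().Result);
        // Sending it would be: await new HttpClient().SendAsync(request);
    }
}
```

Keeping request construction separate from sending makes this piece easy to test without touching the network.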

In terms of the roles:

The assistant platforms (the actual skill/action manifest) handle:

- Initial NLU

- Store listings

- Managing endpoint to Voicify

Voicify handles:

- Secondary NLU

- Conversation state

- Conversation flow

- Integrations

- Response structures

- Configurations

- Cross-platform deployments

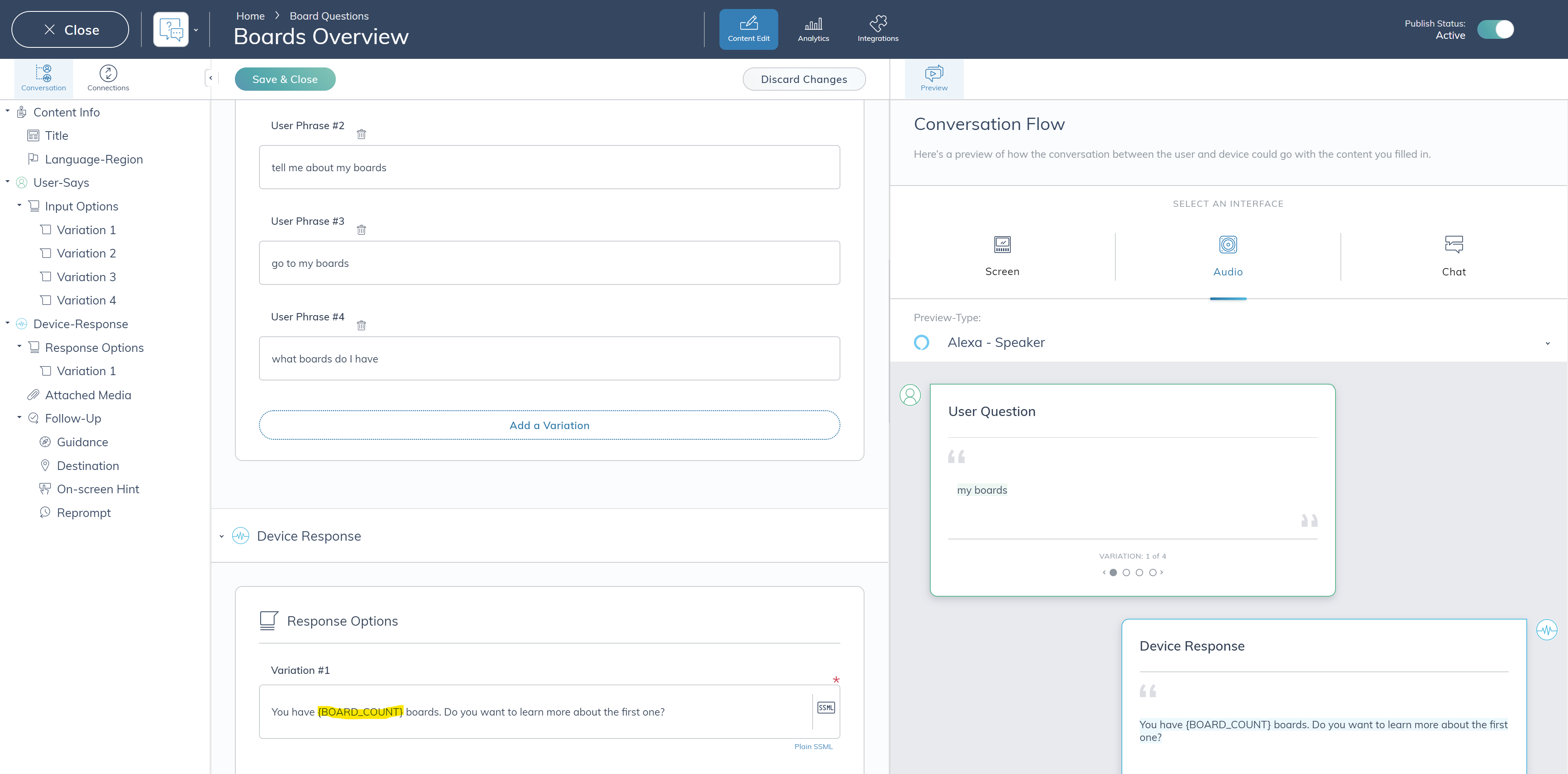

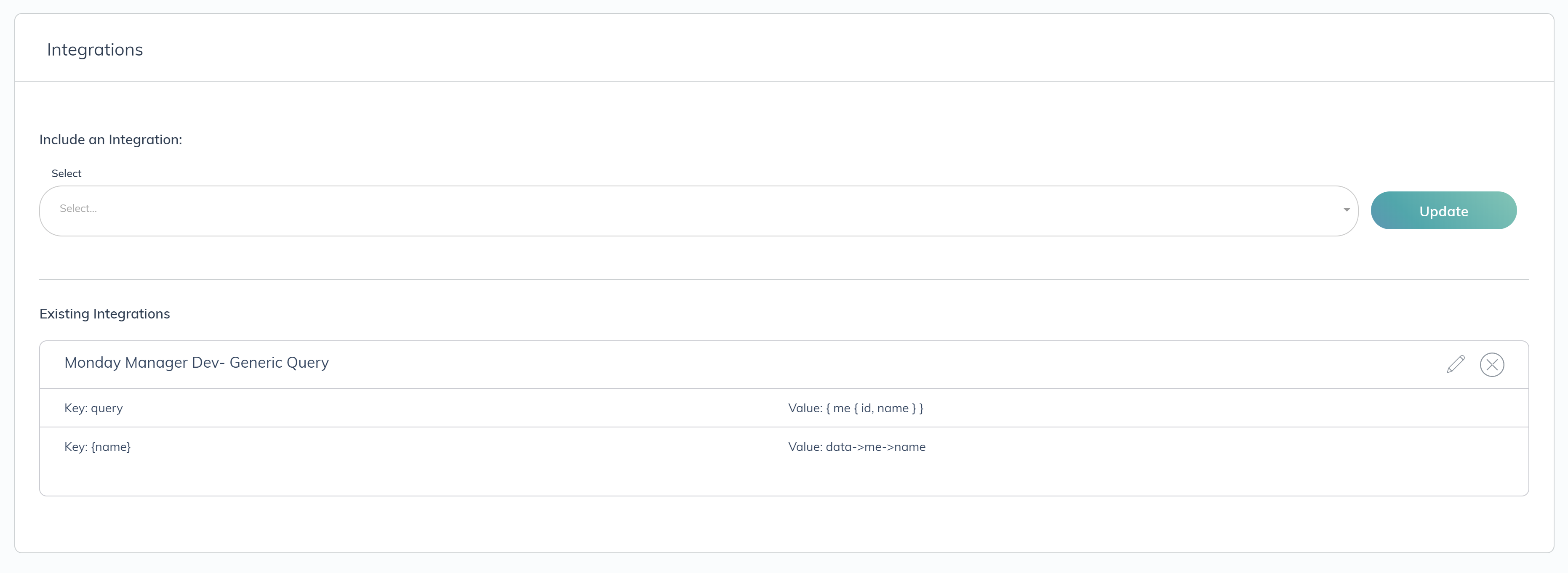

Basically, we create conversation items to handle each turn and set up variables that the webhook can fill in, such as:

The Monday Manager API handles:

- Mapping input and conversation state from the request

- Communication to the Monday API

- Mapping Monday data to the response structure Voicify expects

The Monday Manager API was built using C# 8 and ASP.NET Core, hosted in a Linux App Service in Azure. Within the project, there's a basic onion design pattern implementation that separates HTTP logic, business logic, and data access logic. This keeps the business logic slim and easy to build and update. For example, the core of letting Voicify get access to the user's current board in context looks like this:

    public async Task<GeneralFulfillmentResponse> GetCurrentBoard(GeneralWebhookFulfillmentRequest request)
    {
        try
        {
            // Account linking supplies the token; without it we cannot call Monday.
            if (string.IsNullOrEmpty(request.OriginalRequest.AccessToken))
                return Unauthorized();

            var currentBoard = await GetCurrentBoardFromRequest(request);
            if (currentBoard == null)
                return Error();

            return new GeneralFulfillmentResponse
            {
                Data = new ContentFulfillmentWebhookData
                {
                    Content = BuildBoardResponse(request.Response.Content, currentBoard),
                    // Keep the board in session so later turns can reuse it.
                    AdditionalSessionAttributes = new Dictionary<string, object>
                    {
                        { SessionAttributes.CurrentBoardSessionAttribute, currentBoard }
                    }
                }
            };
        }
        catch (Exception ex)
        {
            Console.WriteLine(ex);
            return Error();
        }
    }

The GetCurrentBoardFromRequest method checks whether the board is already in the session context; if not, it fetches the boards from the monday API and uses the first one:

    private async Task<Board[]> GetBoardsFromRequest(GeneralWebhookFulfillmentRequest request)
    {
        (request.OriginalRequest.SessionAttributes ?? new Dictionary<string, object>()).TryGetValue(SessionAttributes.BoardsSessionAttribute, out var boardsObj);

        // Session values arrive loosely typed, so round-trip through JSON to get a typed Board[].
        if (boardsObj != null)
            return JsonConvert.DeserializeObject<Board[]>(JsonConvert.SerializeObject(boardsObj));

        // Nothing cached in the session: fetch the boards from the Monday API.
        var boardsResult = await _mondayDataProvider.GetAllBoards(request.OriginalRequest.AccessToken);
        return boardsResult?.Data;
    }

    private async Task<Board> GetCurrentBoardFromRequest(GeneralWebhookFulfillmentRequest request)
    {
        (request.OriginalRequest.SessionAttributes ?? new Dictionary<string, object>()).TryGetValue(SessionAttributes.CurrentBoardSessionAttribute, out var boardObj);

        if (boardObj != null)
            return JsonConvert.DeserializeObject<Board>(JsonConvert.SerializeObject(boardObj));

        // No current board in the session yet: default to the user's first board.
        var boards = await GetBoardsFromRequest(request);
        return boards?.FirstOrDefault();
    }

This layering of abstractions and separation of concerns lets us create brand new features without having to even write code at times, but still allows us to easily implement entirely new sets of logic and functionality if required.
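To make the layering a bit more concrete, here is a minimal sketch of the onion idea: the business logic depends only on a data access interface, so the data source can be swapped without touching it. All names here (`IBoardDataProvider`, `BoardService`, `InMemoryBoardDataProvider`) are hypothetical, and the provider returns a plain array rather than the wrapped result used in the snippets above.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

// Core model shared by every layer.
public class Board
{
    public string Id { get; set; }
    public string Name { get; set; }
}

// Data access boundary: the business layer depends on this interface only.
public interface IBoardDataProvider
{
    Task<Board[]> GetAllBoards(string accessToken);
}

// Business logic layer: no HTTP or GraphQL details leak in here.
public class BoardService
{
    private readonly IBoardDataProvider _provider;

    public BoardService(IBoardDataProvider provider) => _provider = provider;

    public async Task<string> DescribeFirstBoard(string accessToken)
    {
        var boards = await _provider.GetAllBoards(accessToken);
        var first = boards?.FirstOrDefault();
        return first == null
            ? "I couldn't find any boards."
            : $"Your first board is {first.Name}.";
    }
}

// Stand-in data layer for the sketch; a real one would call the Monday API.
public class InMemoryBoardDataProvider : IBoardDataProvider
{
    public Task<Board[]> GetAllBoards(string accessToken) =>
        Task.FromResult(new[] { new Board { Id = "1", Name = "Roadmap" } });
}

public static class OnionSketch
{
    public static async Task Main()
    {
        var service = new BoardService(new InMemoryBoardDataProvider());
        Console.WriteLine(await service.DescribeFirstBoard("example-token"));
    }
}
```

An HTTP controller would sit one layer further out and simply hand the incoming request data to `BoardService`.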

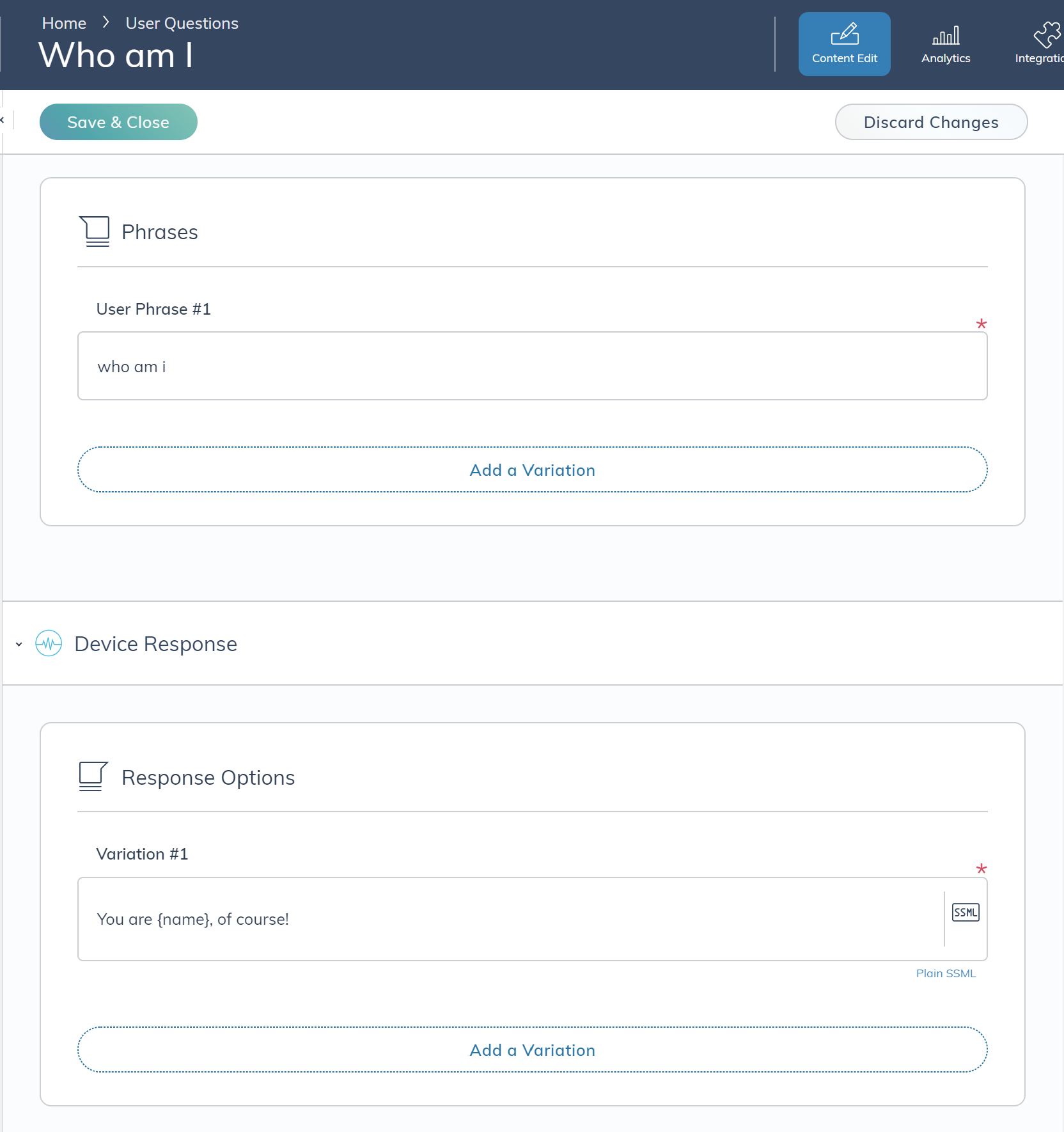

For example, if we wanted to add the ability for the user to say "who am I", and get a response back about their Monday username, it's as simple as:

- Creating a conversation item like this:

- Attaching a webhook to it:

And then it just works!
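On the webhook side, a minimal sketch of the "who am I" logic might look like the following. It assumes the Monday GraphQL API returns the current user under a `me` field, parses a sample response, and fills a variable in the response text. The `{UserName}` placeholder syntax, the fallback wording, and the class and method names are made up for illustration and are not Voicify's or the project's actual conventions.

```csharp
using System;
using System.Text.Json;

public static class WhoAmISketch
{
    // Query assumed to return the current user's name from the Monday GraphQL API.
    public const string Query = "query { me { name } }";

    // Pulls data.me.name out of a GraphQL JSON response; returns null if absent.
    public static string ExtractUserName(string responseJson)
    {
        using (var doc = JsonDocument.Parse(responseJson))
        {
            var root = doc.RootElement;
            if (root.ValueKind == JsonValueKind.Object &&
                root.TryGetProperty("data", out var data) &&
                data.ValueKind == JsonValueKind.Object &&
                data.TryGetProperty("me", out var me) &&
                me.ValueKind == JsonValueKind.Object &&
                me.TryGetProperty("name", out var name) &&
                name.ValueKind == JsonValueKind.String)
            {
                return name.GetString();
            }
            return null;
        }
    }

    // Fills a hypothetical "{UserName}" placeholder in the conversation item's text.
    public static string FillTemplate(string template, string userName) =>
        template.Replace("{UserName}", userName ?? "someone I don't recognize");

    public static void Main()
    {
        // Sample response shaped like a GraphQL result for the query above.
        var sample = "{\"data\":{\"me\":{\"name\":\"Alex\"}}}";
        var spoken = FillTemplate("You are signed in as {UserName}.", ExtractUserName(sample));
        Console.WriteLine(spoken);
    }
}
```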

One last note is that the entire thing works by having the user link their account to Amazon and Google. This is done through a standard practice called "Account Linking", which just requires some OAuth 2.0 authorization code grant flow configuration. The user's access token is then sent with each request to Voicify, which forwards it to the webhook, which in turn uses it in the Monday requests. Alexa's documentation describes the general account linking flow.
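For readers unfamiliar with the authorization code grant, the sketch below shows the two pieces of the flow as defined in RFC 6749: the authorization request URL and the form fields used to exchange the returned code for an access token. In account linking, the assistant platform performs these steps once they are configured, so this is purely illustrative. The endpoint, client ID, and redirect URI are placeholders, not the project's real values.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class AccountLinkingSketch
{
    // Builds the authorization request URL (RFC 6749, section 4.1.1).
    public static string BuildAuthorizeUrl(
        string authorizeEndpoint, string clientId, string redirectUri, string state)
    {
        var parameters = new List<KeyValuePair<string, string>>
        {
            new KeyValuePair<string, string>("response_type", "code"),
            new KeyValuePair<string, string>("client_id", clientId),
            new KeyValuePair<string, string>("redirect_uri", redirectUri),
            new KeyValuePair<string, string>("state", state)
        };
        var query = string.Join("&",
            parameters.Select(p => $"{p.Key}={Uri.EscapeDataString(p.Value)}"));
        return $"{authorizeEndpoint}?{query}";
    }

    // Form fields for exchanging the code for a token (RFC 6749, section 4.1.3).
    // Client credentials are sent in the body here; HTTP Basic auth is the other option.
    public static Dictionary<string, string> BuildTokenRequest(
        string code, string clientId, string clientSecret, string redirectUri) =>
        new Dictionary<string, string>
        {
            ["grant_type"] = "authorization_code",
            ["code"] = code,
            ["redirect_uri"] = redirectUri,
            ["client_id"] = clientId,
            ["client_secret"] = clientSecret
        };

    public static void Main()
    {
        // Placeholder values for illustration only.
        var url = BuildAuthorizeUrl(
            "https://auth.example.com/authorize",
            "my-client",
            "https://voice.example.com/callback",
            "xyz123");
        Console.WriteLine(url);

        var form = BuildTokenRequest("code-from-redirect", "my-client", "my-secret",
            "https://voice.example.com/callback");
        foreach (var field in form)
            Console.WriteLine($"{field.Key}={field.Value}");
    }
}
```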

Challenges I ran into

The biggest technical hurdle (which I'm working through now) is handling the fact that columns are entirely customizable, so adding features that use those values in bulk requires some serious assumptions. Take something like "What items of mine are due tomorrow?". We can most certainly understand the goal of that statement, but determining which column(s) actually dictate the "mine" and "tomorrow" parts is tricky. Currently, the features that rely on this work exclusively with some of my own board structures, where the assumptions are safe, but my goal is to essentially guess which column(s) are best for those decisions and, if we aren't sure, just ask the user and remember the answer for next time.
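One possible shape for that "guess, otherwise ask and remember" idea is sketched below for the due-date case. It is not the project's implementation: the scoring weights, the keyword list, the `"date"` type string, and every class and method name are assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public class Column
{
    public string Id { get; set; }
    public string Title { get; set; }
    public string Type { get; set; } // e.g. "date"; type names are assumed
}

public static class ColumnGuesser
{
    // Answers confirmed by the user, keyed by board (in-memory for the sketch).
    private static readonly Dictionary<string, string> Remembered =
        new Dictionary<string, string>();

    // Scores how likely a column is to hold the due date.
    private static int ScoreDueDate(Column column)
    {
        var score = 0;
        if (column.Type == "date") score += 2;
        var title = (column.Title ?? "").ToLowerInvariant();
        if (title.Contains("due") || title.Contains("deadline")) score += 1;
        return score;
    }

    // Returns a column id when there is one clear winner; null means "ask the user".
    public static string GuessDueDateColumn(string boardId, IEnumerable<Column> columns)
    {
        if (Remembered.TryGetValue(boardId, out var remembered))
            return remembered;

        var ranked = columns
            .Select(c => new { c.Id, Score = ScoreDueDate(c) })
            .Where(x => x.Score > 0)
            .OrderByDescending(x => x.Score)
            .ToList();

        if (ranked.Count == 0) return null;                 // no candidate at all
        if (ranked.Count > 1 && ranked[0].Score == ranked[1].Score)
            return null;                                    // tie: too ambiguous to guess
        return ranked[0].Id;
    }

    // Stores the user's answer so the question is not asked again for this board.
    public static void Remember(string boardId, string columnId) =>
        Remembered[boardId] = columnId;

    public static void Main()
    {
        var columns = new[]
        {
            new Column { Id = "date4", Title = "Due date", Type = "date" },
            new Column { Id = "date5", Title = "Started", Type = "date" },
            new Column { Id = "person", Title = "Owner", Type = "people" }
        };
        Console.WriteLine(GuessDueDateColumn("board-1", columns) ?? "ask the user");
    }
}
```

The same pattern could be repeated for the "mine" part with a scorer that favors person-type columns.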

The other challenge I ran into was filming my fun sample scenarios while my dog was following me around and panting 😂 Hopefully the editing helped there a bit.

Accomplishments that I'm proud of

There are a few key things that I am super proud of:

- Successfully building something that functions end to end

- Getting the Action approved by Google on the first try!

- Being able to actually teach people along the way while we were building it

All in all, it was unbelievably satisfying to actually use the thing for my real day job. Adding items to groups with a single command, especially, immediately showed its usefulness.

What's next for Monday Manager

Tons of stuff! This was not just a hackathon for me, but the building of a real product. That's why I submitted it to Alexa and Google for public certification and continue to use it myself every day! The biggest next items are:

- More contextual features for users

- Accessing more parts of the user's data like activity, updates, dashboards, etc.

- Learning and managing user preferences and automating that process based on how they interact

- Adding more channels like Bixby, chat bots in MS Teams, Slack, etc.

- Gathering real end-user feedback and iterating on the functionality as we go

Conclusion

I hope you like the Monday Manager Voice Assistant - it meant a lot to me to be able to find something to build that is meaningful, useful, educational, and accessible to more people while also helping with my own productivity. I'm excited to see the future of the product and continue to build it out myself.
