Title screen – introducing Mofumofu Animals, an MR petting experience.

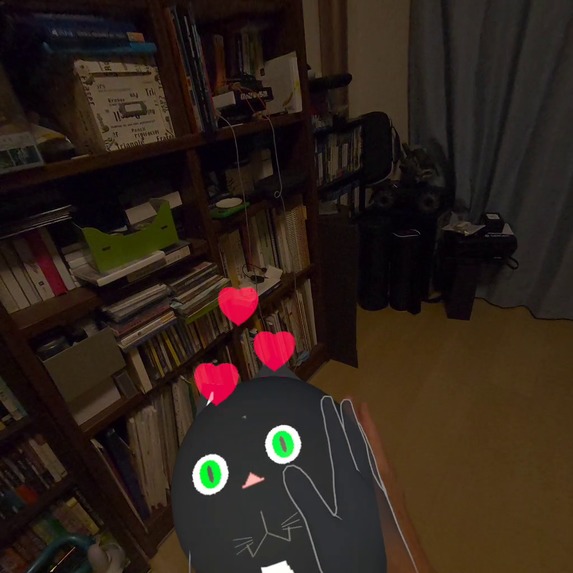

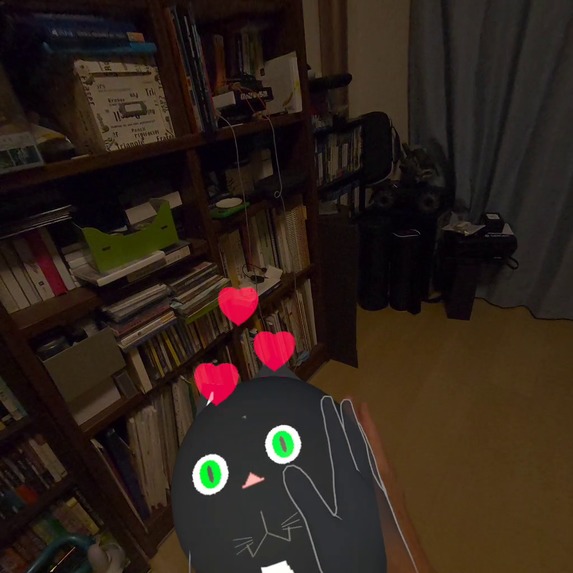

Core interaction – petting animals with hand tracking.

Unique mechanic – holding out your hand lets a bird perch on it.

Multiple animals appear as the waves progress.

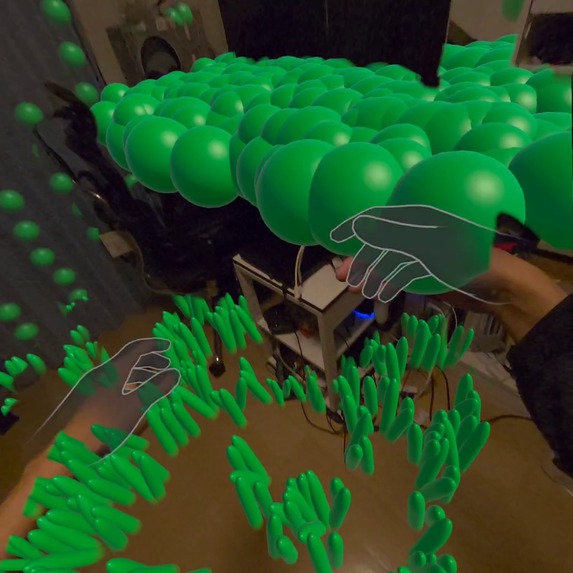

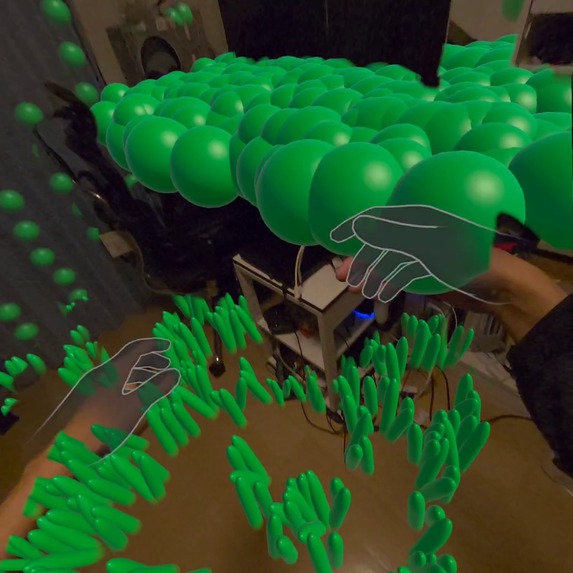

Room Overgrowth – your room becomes greener as you pet animals.

Result screen – total score after completing 3 waves.

Mofumofu Animals – Project Story

Inspiration

At first, I planned to create a tower defense game that takes place in the player’s real room.

But as I explored the concept further, I realized that many games already rely on defeating enemies, and I wanted to create something emotionally different — an experience rooted in positivity rather than conflict.

This made me rethink the basic structure of the game.

Instead of a system built on rejecting or pushing away intruders, I wondered if the core experience could be reframed around accepting and welcoming them.

I have always loved cats, and the simple act of petting an animal brings me comfort.

That personal connection naturally led me toward an MR experience where the goal is not to fight, but to welcome animals into your room and gently pet them.

When I prototyped the early petting mechanic, I was surprised by how soothing and joyful it felt.

That emotional reaction became the foundation of Mofumofu Animals.

Since I am not a professional programmer, another key part of this project was using AI agents to support implementation.

By relying on AI for code generation and technical guidance, I was able to build the project solo and focus more deeply on interaction design and user experience.

My goal was to create an app that even first-time Meta Quest users could enjoy instantly — no controllers, no complex UI, just you and the animals sharing the same room.

What it does

Mofumofu Animals is an MR petting game for Meta Quest 3 that uses Passthrough, Scene Understanding, and Hand Tracking.

The experience consists of three 30-second waves in which cats, dogs, and birds appear in the player’s real room.

Players pet the animals using natural hand motions to earn points.

Each animal responds with joyful movements and heart effects.

For birds, the interaction is intuitive: when the player extends their hand forward and keeps it still, the bird gently lands on it.
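One way an "extended and still" pose could be detected is a dwell timer. The sketch below is engine-agnostic Python, not the project's Unity C# code. The class name `PerchDetector` and all three thresholds are assumptions made for illustration, and head-to-palm distance is used as a simple stand-in for "extended forward".

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PerchDetector:
    # All three thresholds are hypothetical values chosen for illustration.
    min_reach_m: float = 0.35    # palm must be at least this far from the head
    max_speed_mps: float = 0.05  # palm speed that still counts as "still"
    dwell_s: float = 1.0         # how long the pose must be held
    _held_s: float = 0.0
    _last_palm: Optional[Vec3] = None

    def update(self, head: Vec3, palm: Vec3, dt: float) -> bool:
        """Feed one tracking frame; True once the pose has been held for dwell_s."""
        if self._last_palm is None or dt <= 0:
            speed = 0.0
        else:
            speed = math.dist(palm, self._last_palm) / dt
        self._last_palm = palm
        # Head-to-palm distance stands in for "hand extended forward".
        extended = math.dist(head, palm) >= self.min_reach_m
        if extended and speed <= self.max_speed_mps:
            self._held_s += max(dt, 0.0)
        else:
            self._held_s = 0.0  # moving or retracting the hand restarts the timer
        return self._held_s >= self.dwell_s
```

Calling `update` once per frame with the head position, palm position, and frame time returns `True` on the frame where a bird could start its landing. Resetting the timer on any movement keeps a passing hand from triggering a landing by accident.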

A full session lasts about 2–3 minutes, making it ideal for casual, relaxing play.

In addition to petting the animals, the player’s room gradually transforms through a system called Room Overgrowth.

Every time the player earns points by petting an animal, small plants begin to grow across the room: on the floor, walls, ceiling, and even furniture surfaces detected through MR Utility Kit (MRUK).

As the score increases, the room becomes progressively covered in greenery, creating a sense of reward and a feeling that the player's actions are bringing life into their environment.

How we built it

I used Unity 6, URP, Passthrough, MRUK (Scene Understanding), and Meta Hand Tracking.

Key systems

- Custom pet-detection logic based on hand movement patterns

- Contact colliders on each animal for touch verification

- NavMesh-based movement for cats and dogs

- Floating behavior and landing detection for birds

- Heart and burst particle effects for emotional feedback

- AI-generated code to support development tasks

Combining hand-movement detection with contact colliders lets players pet animals naturally, without needing precise or complex hand gestures.
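The pairing of a contact check with a movement check can be illustrated with a small sketch. It is written in engine-agnostic Python rather than the project's Unity C#, and it is not the project's actual logic. A sphere stands in for the animal's contact collider, and the name `PetDetector`, the radius, and the stroke length are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PetDetector:
    contact_radius_m: float = 0.15  # hypothetical sphere standing in for the contact collider
    stroke_length_m: float = 0.20   # hypothetical hand travel that counts as one pet
    _travel_m: float = 0.0
    _last_hand: Optional[Vec3] = None

    def update(self, animal: Vec3, hand: Vec3) -> bool:
        """Feed one frame; True on the frame a petting stroke completes."""
        touching = math.dist(animal, hand) <= self.contact_radius_m
        if not touching:
            # Leaving the collider discards any partial stroke.
            self._travel_m = 0.0
            self._last_hand = None
            return False
        if self._last_hand is not None:
            self._travel_m += math.dist(hand, self._last_hand)
        self._last_hand = hand
        if self._travel_m >= self.stroke_length_m:
            self._travel_m = 0.0  # start counting the next stroke
            return True
        return False
```

Because only accumulated travel inside the collider matters, any back-and-forth or circular motion counts as petting, while a hand resting motionless on the animal does not score.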

For the environmental feedback system, I implemented a feature called Room Overgrowth.

Using MRUK surface detection, the application identifies planes such as floors, walls, ceilings, and furniture.

Whenever the player scores points, a plant object is procedurally spawned on one of these detected surfaces.

Different plant types (grass, flowers, leaves) are randomly selected to create natural variation.

This system reinforces the idea that the player’s gentle actions have a positive impact on the environment, turning the entire room into a growing garden as they continue to play.
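The spawn step described above can be sketched roughly as follows, in engine-agnostic Python rather than the project's Unity C#. In the real app the surfaces come from MRUK. Here a hypothetical `Surface` data class stands in for a detected plane, and `pick_spawn` is an illustrative name, not a function from the project or from MRUK.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
PLANT_TYPES = ("grass", "flower", "leaf")  # the three plant types named in the text


@dataclass(frozen=True)
class Surface:
    """Hypothetical stand-in for a detected plane (floor, wall, ceiling, furniture)."""
    label: str
    origin: Vec3                    # centre of the plane
    u: Vec3                         # unit vector along the plane's width
    v: Vec3                         # unit vector along the plane's height
    half_size: Tuple[float, float]  # half extents along u and v, in metres


def pick_spawn(surfaces: List[Surface], rng: random.Random) -> Tuple[str, str, Vec3]:
    """Choose a surface, a plant type, and a random point on that surface."""
    surface = rng.choice(surfaces)
    plant = rng.choice(PLANT_TYPES)
    a = rng.uniform(-surface.half_size[0], surface.half_size[0])
    b = rng.uniform(-surface.half_size[1], surface.half_size[1])
    point = tuple(
        o + a * su + b * sv
        for o, su, sv in zip(surface.origin, surface.u, surface.v)
    )
    return surface.label, plant, point
```

This version picks every surface with equal probability, so a small table gets as many plants as the whole floor. Weighting the choice by surface area would be one way to even out the coverage.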

Challenges we ran into

Refining natural-feeling petting interactions was challenging due to hand-tracking noise, lighting differences, and varied user motions.

I implemented smoothing, stability checks, and extensive tuning to create a soft, reliable interaction feel.
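The text does not say which smoothing filter was used. An exponential moving average is one common choice for jittery tracking data, and the engine-agnostic Python sketch below shows the idea. The class name `SmoothedPosition` and the default `alpha` are assumptions, not values from the project.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


class SmoothedPosition:
    """Exponential moving average over successive 3D positions."""

    def __init__(self, alpha: float = 0.3):
        # alpha near 1 follows the raw data closely; near 0 smooths heavily but lags.
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.value: Optional[Vec3] = None

    def update(self, raw: Vec3) -> Vec3:
        """Blend the new raw sample into the running estimate and return it."""
        if self.value is None:
            self.value = raw  # first sample: nothing to blend with yet
        else:
            self.value = tuple(
                prev + self.alpha * (new - prev)
                for prev, new in zip(self.value, raw)
            )
        return self.value
```

Choosing `alpha` is the tuning trade-off mentioned above: heavier smoothing hides noise but makes the hand feel slower to respond.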

Another major challenge was working with AI agents.

As a non-programmer building this solo, I relied heavily on AI-generated code.

I learned that effective AI use requires clear instructions, task decomposition, and iterative refinement — not passive reliance.

Mastering this communication became essential and significantly sped up development.

Accomplishments that we're proud of

- Completed the entire MR experience as a solo creator

- Combined design expertise with AI-assisted programming to build beyond my technical limits

- Achieved natural, intuitive petting interactions despite the complexity of hand tracking

- Created expressive animal behaviors using simple, abstract animations

- Delivered a controller-free MR experience accessible even to first-time users

What we learned

I learned that emotional expression in MR does not require realism; it requires clear, intentional motion.

Small, abstract movements — a head tilt, a gentle sway, a soft heart particle — can strongly convey affection or joy.

Working with AI agents taught me the importance of structured collaboration.

Precise prompts and iterative guidance allowed me to build systems I could not have implemented alone.

This project became a hands-on study in both emotional interaction design and human–AI collaboration.

What's next for Mofumofu Animals – Pet Them in Your Room

- Further refine emotional expression for each animal

- Add an endless mode for unlimited relaxation and interaction

- Implement a shared MR multiplayer mode, allowing multiple people in the same room to see and pet the animals together

Built With

- AI agent (Google Antigravity)

- C# (AI-assisted code generation)

- Meta Hand Tracking

- Meta Passthrough

- Meta XR SDK

- MR Utility Kit (MRUK)

- NavMesh AI

- Unity 6 (URP)
