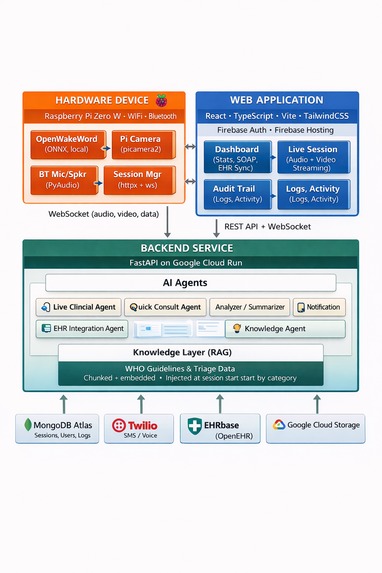

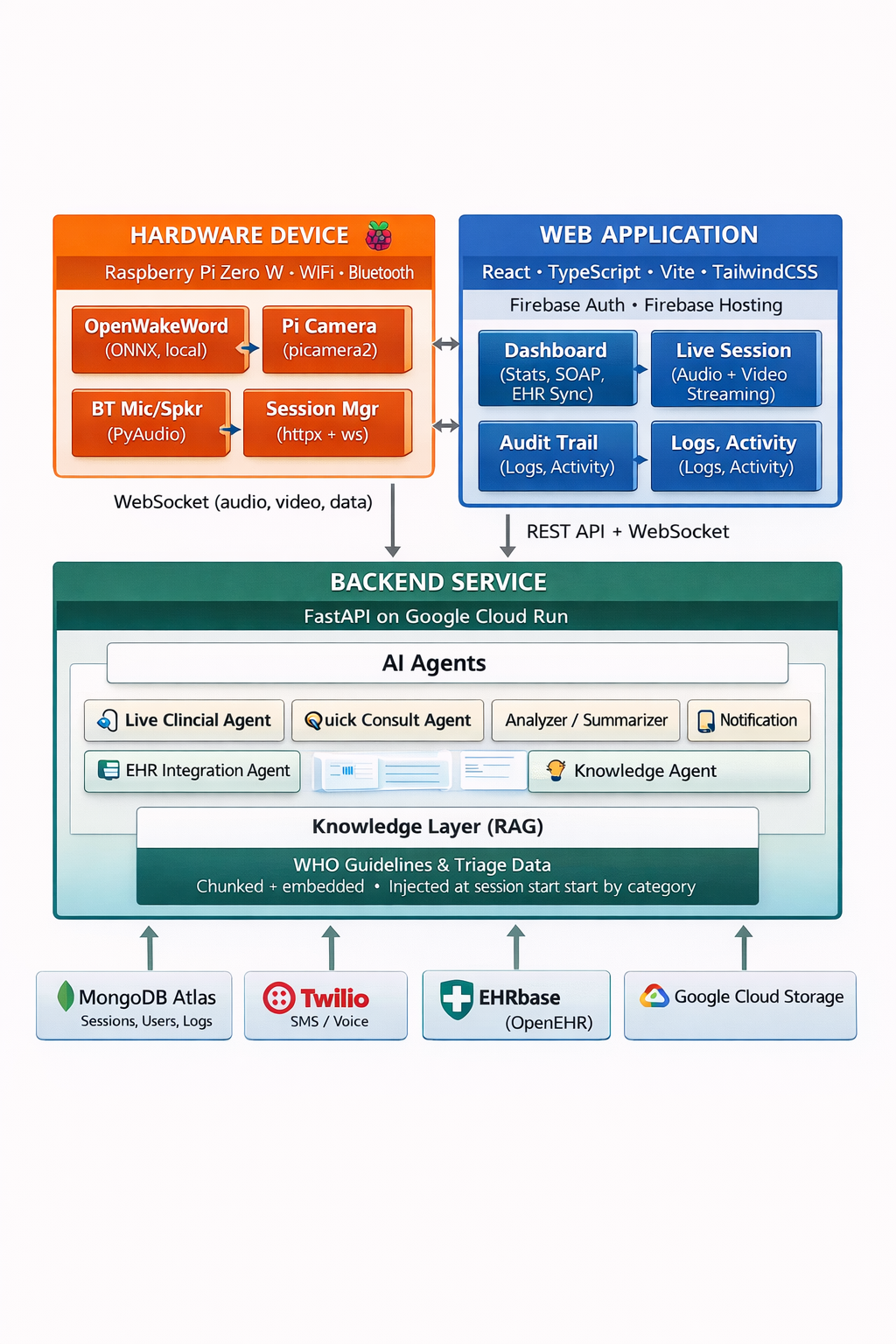

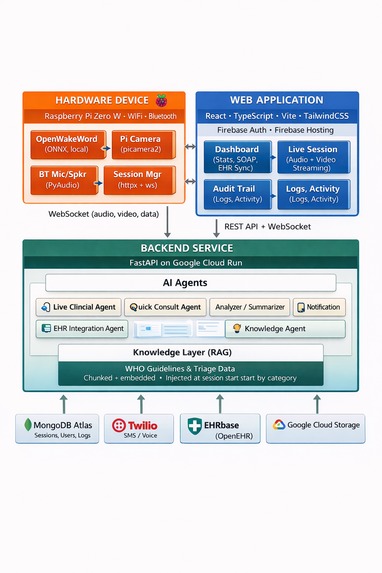

Minerva - High level Architecture

-

Minerva - Overview

-

Healthcare - Time and Reach problems

-

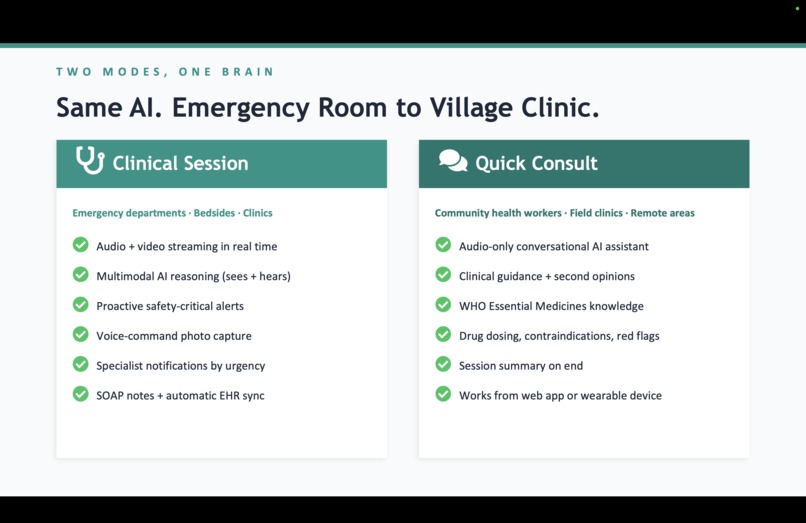

One AI Brain - Two Worlds

-

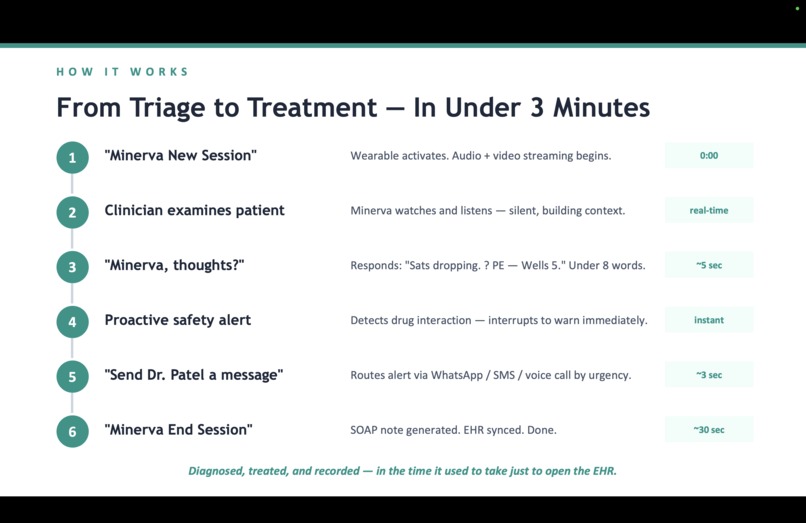

From Triage to Treatment

-

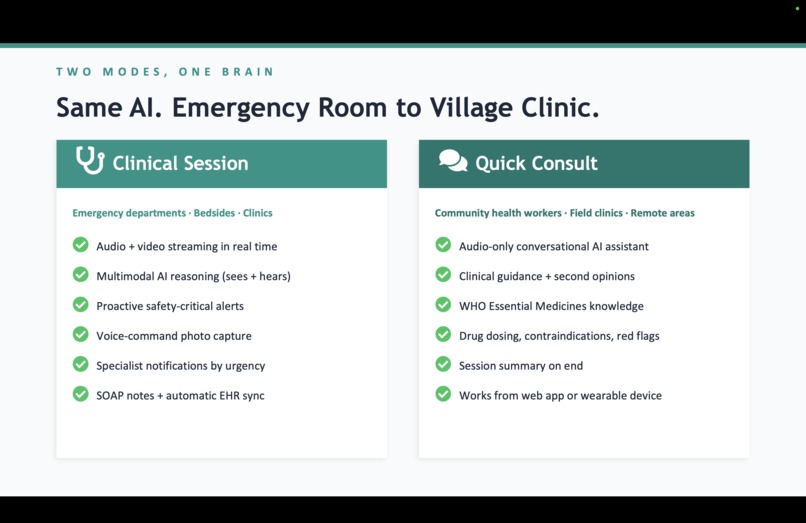

Same AI in both worlds

-

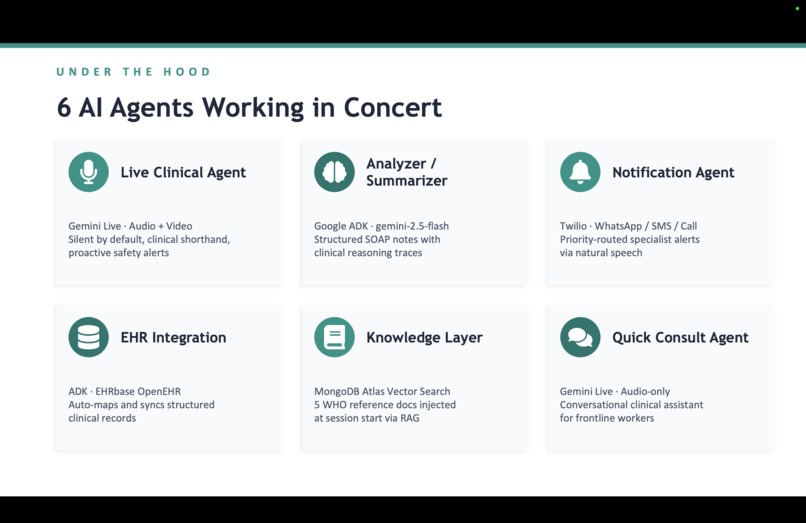

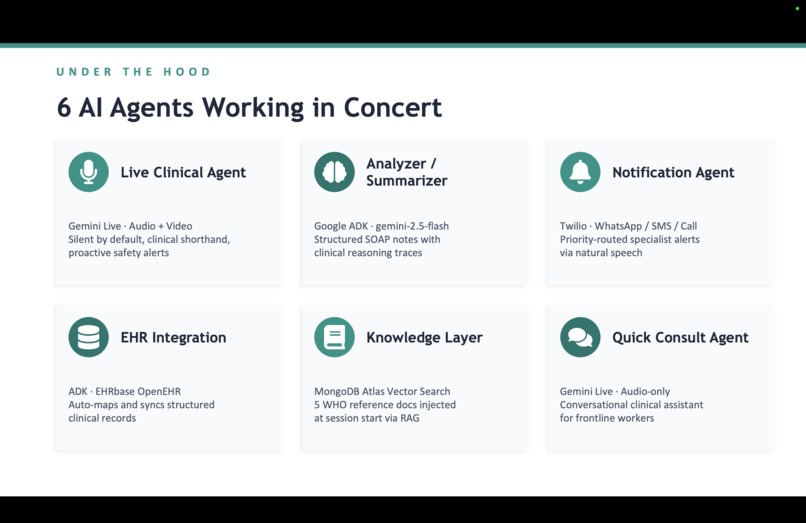

Six AI agents

-

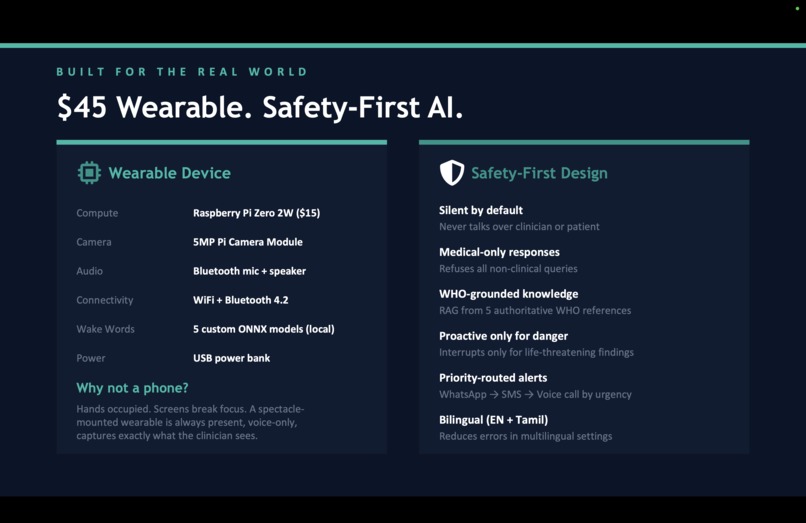

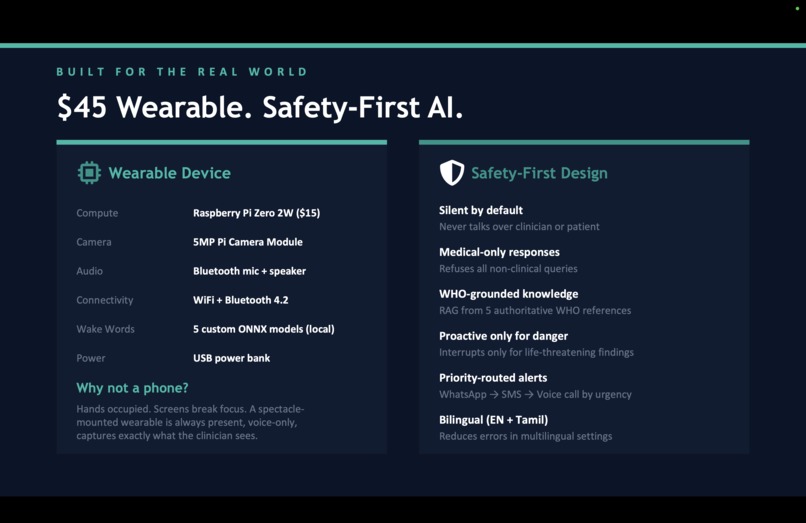

Wearable

-

Why this matters

-

Raspberry Pi Zero W

-

Raspberry Pi Zero W with Camera module

-

Raspberry Pi Zero W & Camera enclosed

-

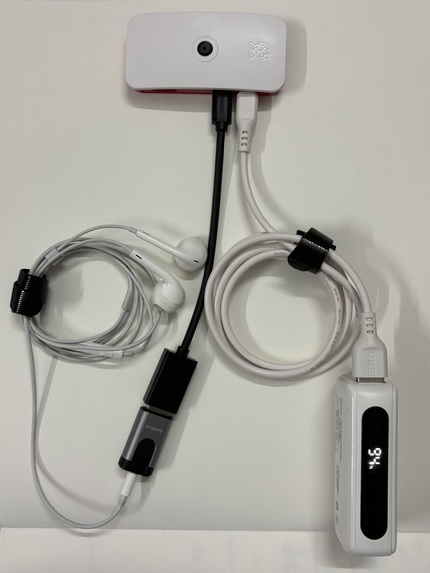

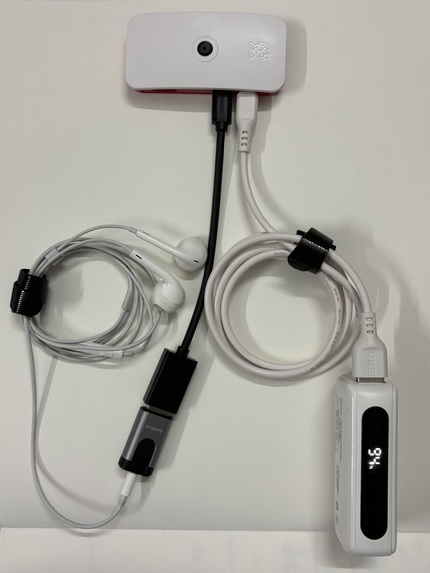

Wearable: Raspberry Pi Zero W + Camera + Earphones + Power Supply

-

Future State of Wearable

-

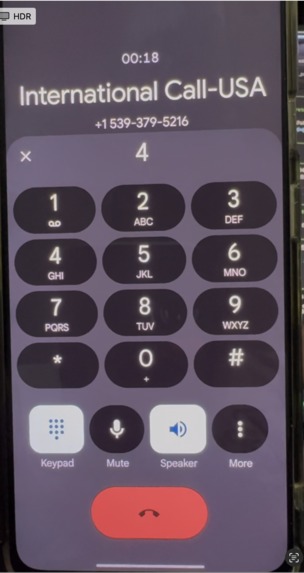

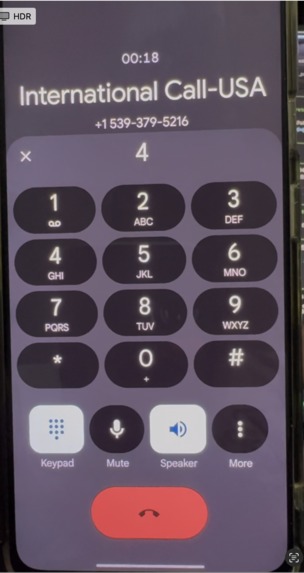

Agent Call Notification - High priority

-

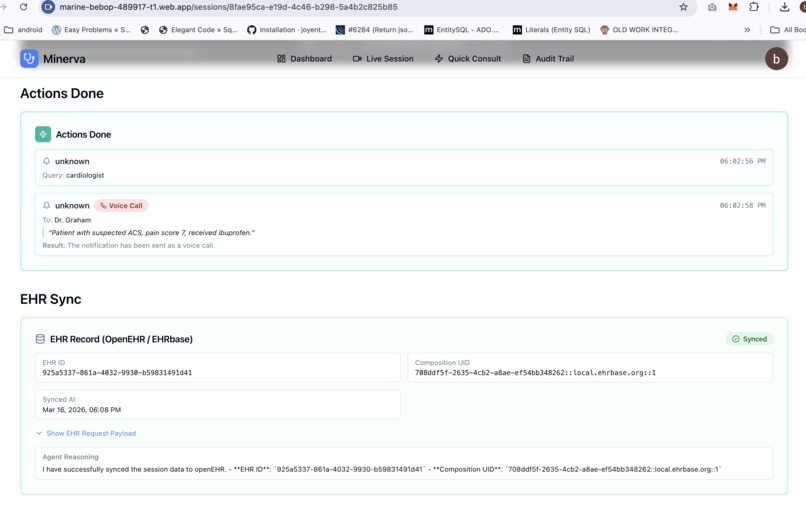

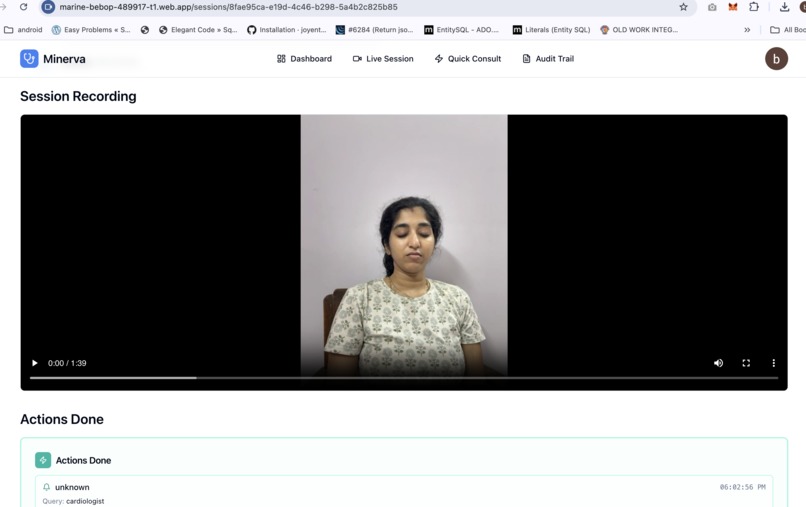

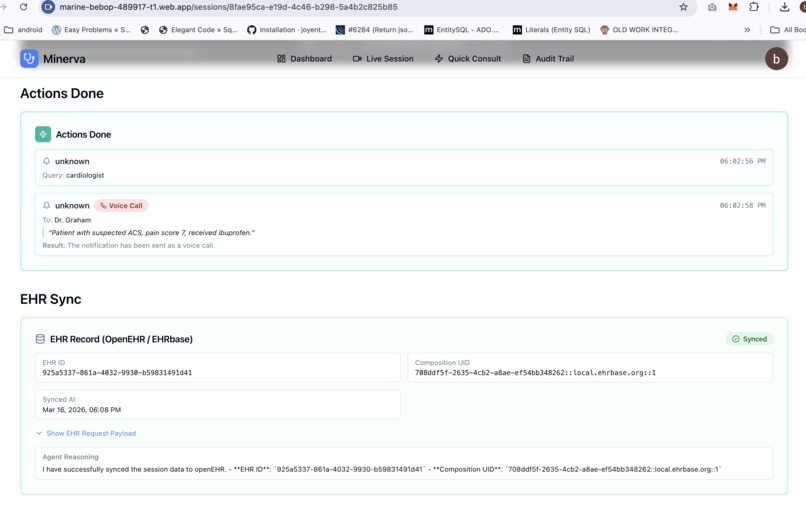

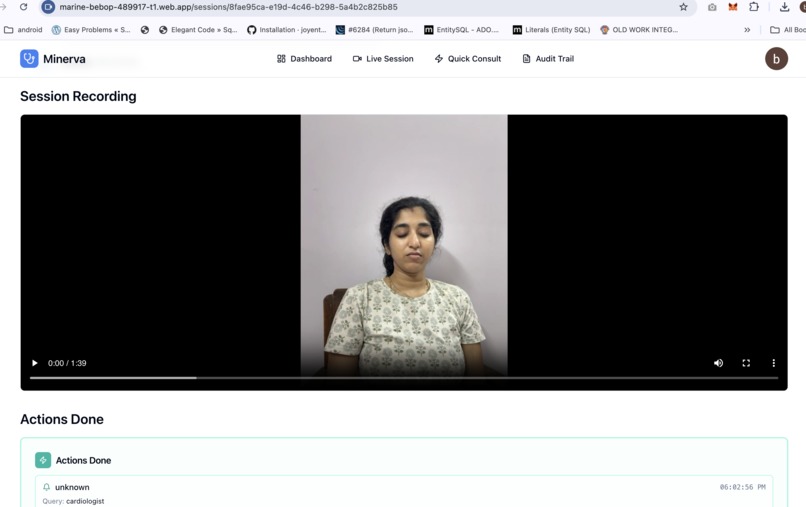

Actions taken and EHR Integration

-

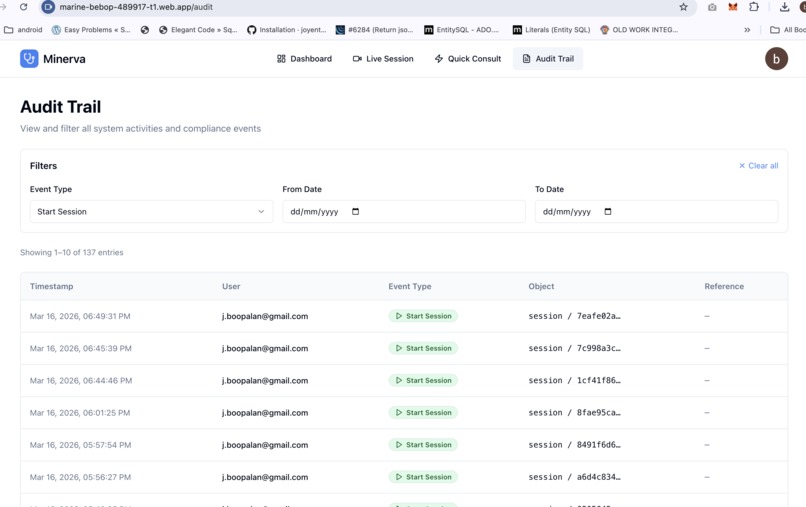

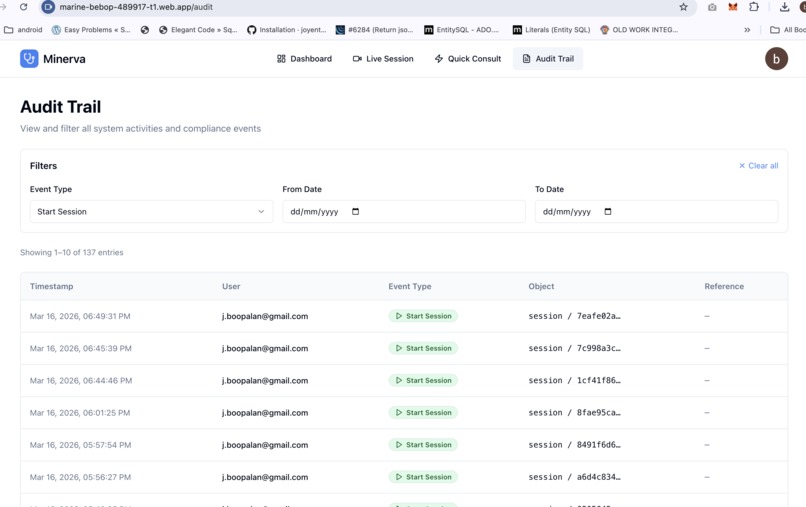

Audit page

-

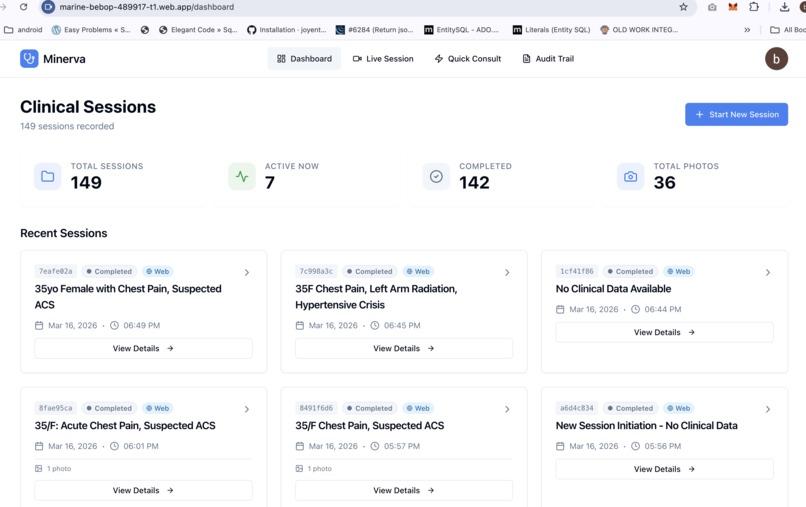

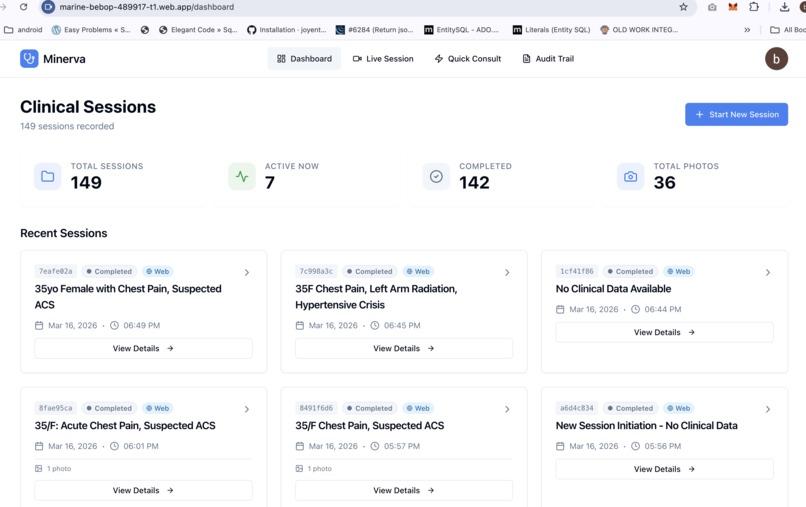

Dashboard

-

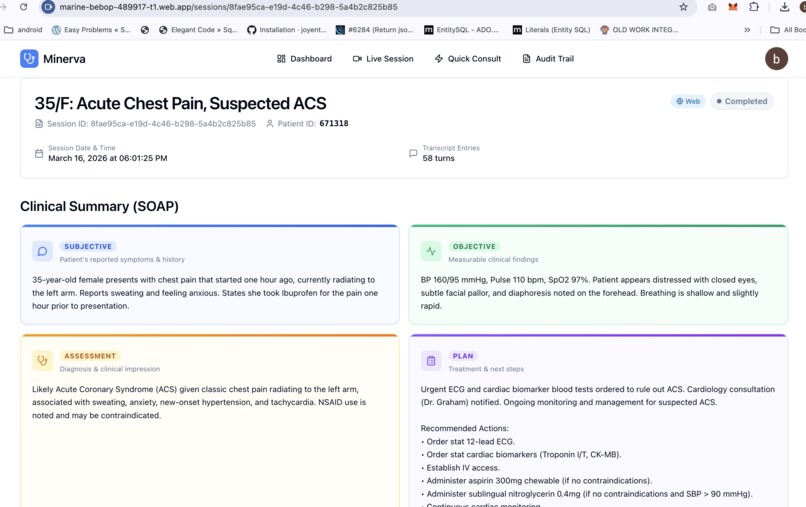

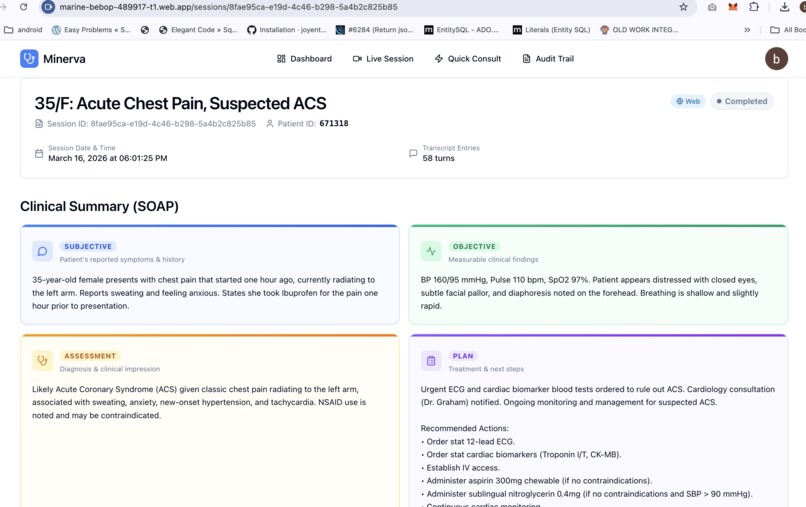

SOAP Session notes - Web View

-

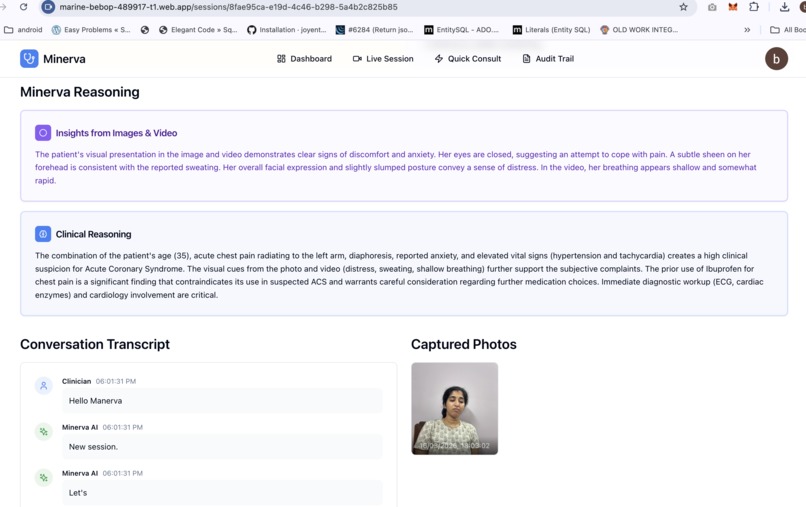

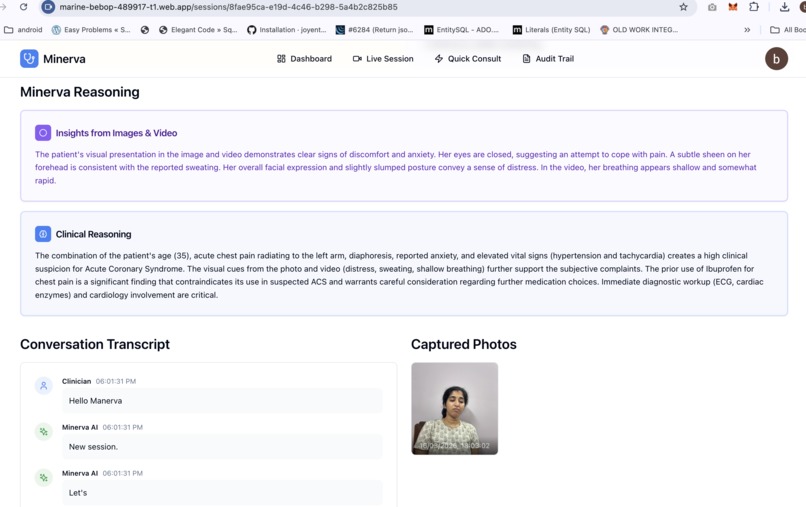

Minerva Insights / synthesis from images, data and videos

-

Session Recording

-

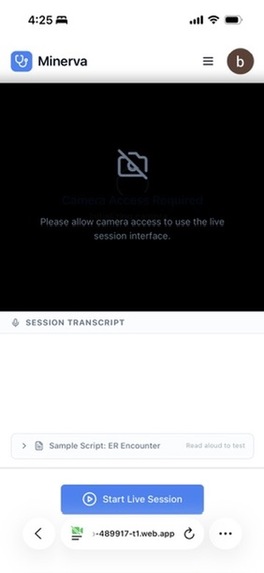

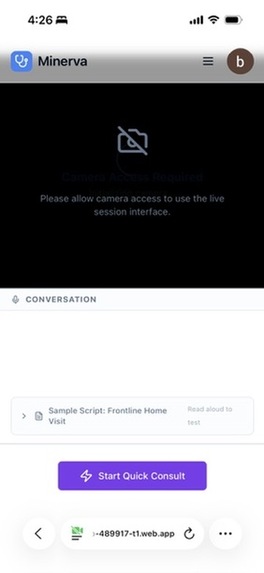

Live Session Screen (Mobile Web)

-

Quick Consult Screen (Mobile Web)

Minerva — The Healthcare Copilot

Inspiration

This project started with a personal experience on a hospital emergency floor.

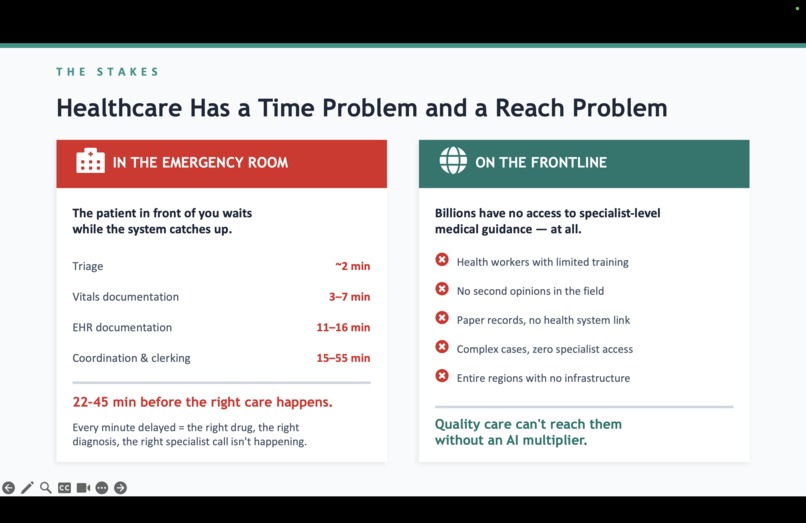

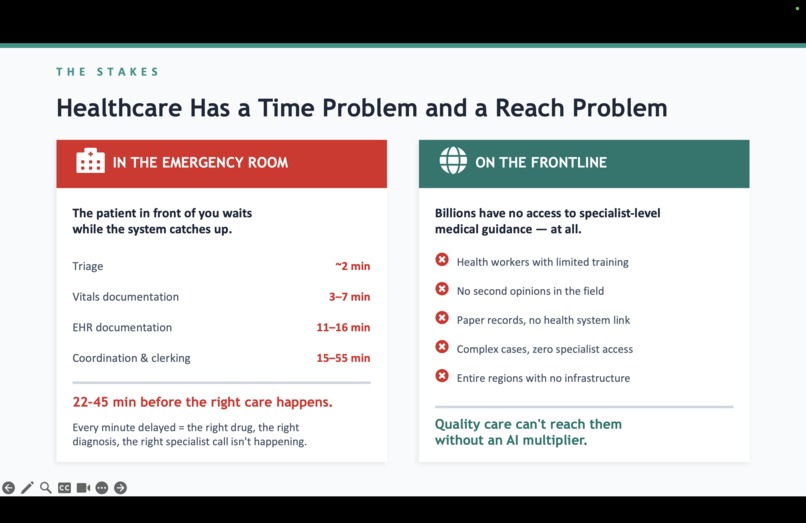

A close family member was rushed to the ER after a road accident. While we waited, helpless and anxious, we watched how patients were being processed. Triage nurses assessed patients in under two minutes: sharp, focused, clearly skilled. Then they would turn to a computer and spend the next fifteen minutes typing that assessment into the system, field by field, while other patients waited. A doctor would finish stitching a wound, walk to a workstation or pick up an iPad, and try to reconstruct from memory what had happened ten minutes earlier. Specialist alerts went out later still. More context was lost at shift change, when critical details were relayed verbally in a hallway with nothing written down.

The journey from a clinician observing a patient to a completed medical record takes 22 to 45 minutes on average. Not because anyone is slow — because the systems around them can't keep up with the speed of real clinical work. During the golden hours, these minutes matter a lot.

That experience stayed with us.

Then we looked at the other end of the spectrum — frontline health workers in rural and underserved regions. Workers with weeks of training, no specialist backup, no digital tools, serving populations of thousands. The same pattern in a different form: a child with treatable pneumonia, a mother with postpartum complications, a fever that was actually meningitis — the health worker was standing right there, but the knowledge wasn't.

Every year, 5 million people die from conditions that frontline workers could have caught with the right support. In trauma emergencies, 37% of deaths happen within the first three hours — when chaos is highest and information is hardest to hold together.

We asked ourselves: what if AI worked the way healthcare actually works? Hands on the patient. Eyes on the wound. No time to type. No screen to look at. Voice as the only free channel. That question became Minerva.

What it does

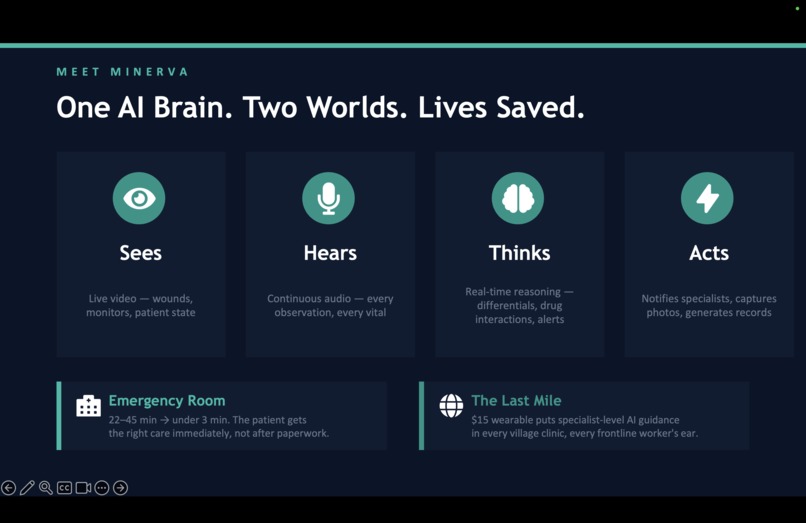

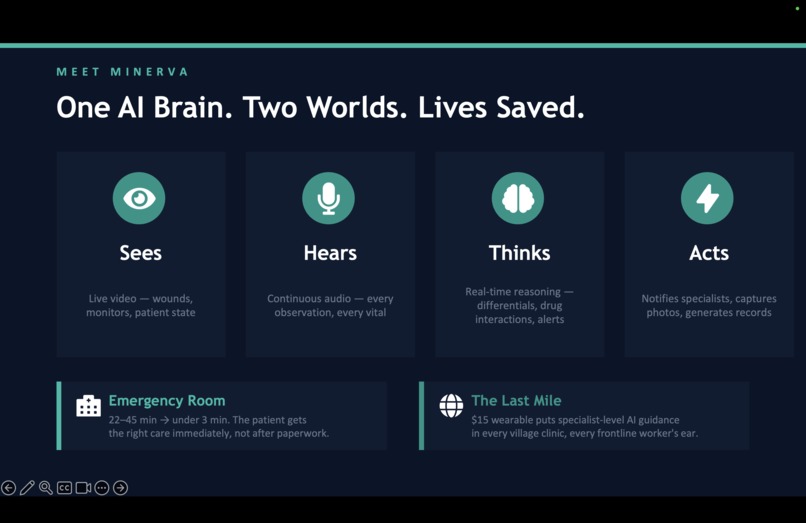

Minerva is a healthcare copilot — a system that sees, hears, speaks, and acts alongside clinicians in real time.

At the bedside, Minerva is a \$45 wearable device mounted on the clinician's spectacles. Camera, microphone, earpiece. The clinician puts it on and forgets it's there. She talks to her patient, examines wounds, checks vitals — and Minerva listens, watches, and builds the clinical picture silently in the background.

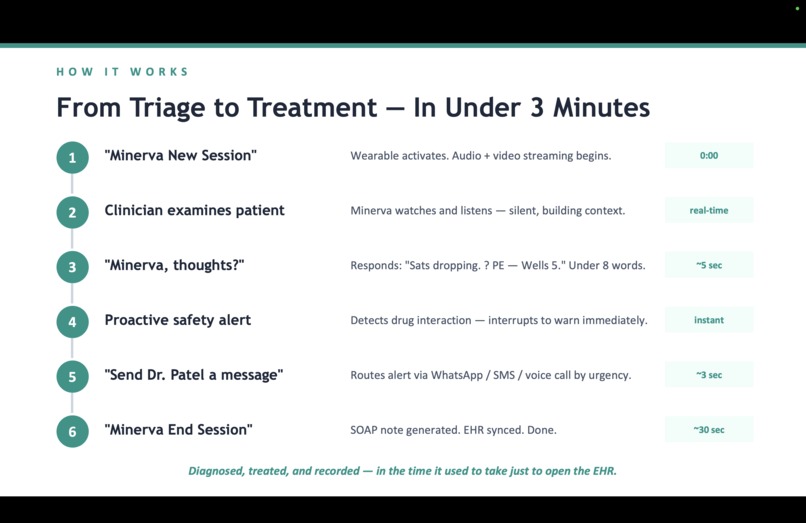

When she needs help, she speaks naturally: "Minerva, any thoughts?" and gets a concise clinical assessment through her earpiece. When she sees something to document — "Minerva, capture photo" — the camera fires without her hands leaving the patient. When a critical flag arises — a dangerous drug interaction, deteriorating vitals, a pattern suggesting meningitis — Minerva alerts proactively through the earpiece.

At the end of the encounter, a complete structured SOAP note is generated automatically. Specialists are notified via WhatsApp, SMS, or voice call based on urgency. Structured records sync to OpenEHR. No typing. No forms. No data entry.

But Minerva is not just the device. It is a holistic system with four pillars:

Voice AI Agent — Powered by Gemini Live API with native audio, the Live Clinical Agent handles real-time multimodal interaction. It stays silent by default — only responds when directly addressed or when something safety-critical is detected. It uses Proactive Audio to distinguish clinician-to-patient speech from clinician-to-Minerva speech, so it never talks over a doctor-patient conversation.

Specialized ADK AI Agents — Six agents, each handling a distinct piece of the clinical workflow. The Analyzer generates SOAP notes from transcripts and images. The Notification Agent routes alerts to specialists with priority-based channel selection (WhatsApp → SMS → voice call with retries). The EHR Agent maps encounters to OpenEHR compositions. The Knowledge Agent provides vector search over WHO clinical documents.

Web Dashboard — A React application where clinicians review AI-generated insights and analysis, view annotated images, track audit trails, and manage patient records. The device handles the high-pressure moments. The web handles everything else.

Clinical Knowledge Layer — Grounded in WHO Essential Medicines (adult and pediatric), Emergency Triage Assessment and Treatment (ETAT), Integrated Management of Childhood Illness (IMCI), and emergency triage protocols for medical officers. These are chunked, embedded as 768-dimensional vectors, and injected into the agent's context at session start — so every recommendation is protocol-grounded, not generic.
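As a rough illustration of what retrieval over embedded chunks involves, here is a minimal sketch of top-k cosine-similarity search in plain Python. It is not Minerva's implementation: the write-up says the chunks live in MongoDB Atlas with vector search, which would do this ranking server-side. The function names and the `embedding`/`text` field names are assumptions made for the example, and the tiny 3-dimensional vectors stand in for the 768-dimensional embeddings.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector is treated as matching nothing
    return dot / (norm_a * norm_b)


def top_k_chunks(query_vec: Sequence[float], chunks: List[Dict], k: int = 3) -> List[str]:
    """Return the text of the k chunks most similar to the query vector.

    Each chunk is a dict with hypothetical keys "embedding" and "text".
    """
    scored = [(cosine_similarity(query_vec, c["embedding"]), c["text"]) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Toy data: real embeddings would have 768 dimensions.
demo_chunks = [
    {"text": "ETAT emergency signs", "embedding": [1.0, 0.0, 0.0]},
    {"text": "IMCI feeding advice", "embedding": [0.0, 1.0, 0.0]},
    {"text": "ETAT priority signs", "embedding": [0.9, 0.1, 0.0]},
]
print(top_k_chunks([1.0, 0.0, 0.0], demo_chunks, k=2))
```

The brute-force scan is only reasonable for small corpora; a managed vector index replaces it once the document set grows.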

Two session modes serve different clinical realities: Clinical Sessions with full audio, video streaming, photo capture, and SOAP generation for emergency and bedside encounters, and Quick Consult with audio-only conversational guidance for frontline workers needing fast answers in the field.

The result: what used to take 22–45 minutes of documentation and coordination now happens in under 3 minutes. A 20–25x improvement — while the clinician's hands never leave the patient.

An entirely new clinical experience

Today, clinical work and documentation are two separate activities. You treat the patient, then you stop and document. You observe something concerning, then you go find the right protocol. You need a specialist, then you call, wait, explain from scratch. Every step is sequential, manual, and disconnected. The clinician is the glue holding it all together through memory and effort.

Minerva collapses all of that into a single moment. The clinician does her job — talks, examines, decides — and documentation, clinical reasoning, specialist alerts, and structured records happen simultaneously as a byproduct. She never switches contexts. She never touches a screen or types a word.

No clinician has ever had that before — an AI that sees what they see, hears what they say, reasons alongside them in real time, and handles everything they used to do after the patient encounter, during the encounter itself.

For frontline health workers, the shift is even more fundamental. There was no "status quo" to improve — there was nothing. No digital records, no decision support, no specialist access. Minerva doesn't make an existing system faster. It creates a system where none existed, accessible through the most universal interface: the human voice, in any language.

Why a Hardware layer? Why not a mobile app?

A phone requires the clinician to stop clinical work in order to use it: pick it up, unlock it, open an app, hold it steady for a photo or video, read the screen for results. Each step takes the clinician's hands off the patient and attention away from the examination. In emergency and frontline settings, this interruption is not a minor inconvenience; it is a barrier to adoption. Minerva removes the device from the clinician's conscious experience entirely. The clinician speaks, and the system hears. The clinician looks, and the system sees. There is nothing to hold, nothing to unlock, nothing to learn. The AI operates through the channels the clinician is already using, voice and vision, allowing clinical work to continue uninterrupted. The technology serves the clinician, rather than the other way around.

That said, we also built a full web interface as a fallback, accessible from any phone or desktop browser, for situations where the wearable is unavailable and for reviewing sessions, consulting remotely, and training. The wearable handles the high-pressure moments. The web handles everything else.

How we built it

We started with the interaction model, not the technology. The question was: what is the simplest possible interface for someone whose hands are full, eyes are on a patient, and every second counts? The answer was voice and vision — nothing else.

Hardware. A Raspberry Pi Zero W streams audio and video over WebSocket to a FastAPI backend on Google Cloud Run. Five custom wake word models — trained using OpenWakeWord with synthetic speech from Piper TTS — let the clinician control everything by voice: "Minerva New Session," "Minerva Capture Photo," "Minerva End Session." The camera captures what the clinician sees through their spectacles. Everything runs headless on Pi OS Lite. Total hardware cost: \$45.
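To make the voice-control idea concrete, here is a minimal sketch of how per-frame wake word scores could be turned into a device action. It assumes the detector yields a mapping from model name to a confidence score for each audio frame; the model names, action names, and the 0.5 threshold are all hypothetical, and only three of the five commands are named in the text above.

```python
from typing import Mapping, Optional

# Hypothetical model-name -> action mapping for the three commands named above.
COMMANDS = {
    "minerva_new_session": "start_session",
    "minerva_capture_photo": "capture_photo",
    "minerva_end_session": "end_session",
}


def pick_command(scores: Mapping[str, float], threshold: float = 0.5) -> Optional[str]:
    """Return the action for the highest-scoring known wake word.

    Returns None when no known wake word reaches the threshold, so
    ordinary speech is ignored.
    """
    best_name: Optional[str] = None
    best_score = threshold
    for name, score in scores.items():
        if name in COMMANDS and score >= best_score:
            best_name, best_score = name, score
    return COMMANDS[best_name] if best_name is not None else None


# One frame where "capture photo" clearly fires.
print(pick_command({"minerva_new_session": 0.10, "minerva_capture_photo": 0.92}))
```

Keeping this decision on the device means a command can be recognized without a network round trip, which matches the stated goal of no cloud dependency for wake word detection.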

AI Backbone. We chose gemini-live-2.5-flash-native-audio on Vertex AI specifically because it handles speech-to-speech in a single model pass. No separate STT → LLM → TTS pipeline means dramatically lower latency — critical when a nurse asks a question during a coding situation and three seconds feels like an eternity. The Proactive Audio capability was essential for making the agent clinically appropriate — it needed to know when it was being spoken to versus when it should stay silent.

Agent Orchestration. Post-session processing is handled by Google ADK agents. The Analyzer receives full transcripts and captured images, then generates structured SOAP notes. The Notification Agent uses Twilio to route messages by urgency — WhatsApp for routine updates, SMS for time-sensitive alerts, voice call with retries for life-threatening emergencies. The EHR Agent maps encounter data to OpenEHR flat JSON compositions and syncs to an EHRbase sandbox. Each agent stores its reasoning trace for transparency.
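The urgency-to-channel routing described above can be sketched as follows. This is an illustration under stated assumptions, not the Notification Agent itself: `send` is a hypothetical callable standing in for whatever wraps the Twilio client, the three-attempt limit for voice calls is invented for the example, and the urgency labels are our own names for the three tiers in the text.

```python
from enum import Enum
from typing import Callable, Tuple


class Urgency(Enum):
    ROUTINE = "routine"
    TIME_SENSITIVE = "time_sensitive"
    CRITICAL = "critical"


# Channel per urgency tier, as described in the text.
CHANNEL_FOR = {
    Urgency.ROUTINE: "whatsapp",
    Urgency.TIME_SENSITIVE: "sms",
    Urgency.CRITICAL: "voice",
}


def notify(
    urgency: Urgency,
    message: str,
    send: Callable[[str, str], bool],
    max_voice_attempts: int = 3,  # assumed retry limit, not from the source
) -> Tuple[str, int, bool]:
    """Send one notification; only voice calls are retried.

    `send(channel, message)` is a hypothetical delivery function that
    returns True on success. Returns (channel, attempts_made, delivered).
    """
    channel = CHANNEL_FOR[urgency]
    attempts_allowed = max_voice_attempts if channel == "voice" else 1
    for attempt in range(1, attempts_allowed + 1):
        if send(channel, message):
            return channel, attempt, True
    return channel, attempts_allowed, False


# Usage with a stub sender that always succeeds.
print(notify(Urgency.ROUTINE, "Follow-up requested", lambda channel, msg: True))
```

Passing the sender in as a parameter keeps the routing logic testable without placing real calls.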

Knowledge Layer. WHO reference PDFs are extracted, chunked at ~500 tokens with overlap, and embedded using Google's text-embedding-004 model. Stored in MongoDB Atlas with vector search, these chunks are injected into the Gemini Live Agent's context window at session start — clinical sessions receive triage protocols, quick consults receive medicines references. The agent cites dosages, contraindications, and red flags from these references without reading raw text aloud.
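The chunking step can be sketched as a sliding window over a token list. The write-up gives the chunk size (~500 tokens) but not the overlap, so the 50-token overlap below is an assumption, and the whitespace split is a stand-in for a real tokenizer.

```python
from typing import List


def chunk_tokens(tokens: List[str], chunk_size: int = 500, overlap: int = 50) -> List[List[str]]:
    """Split tokens into windows of chunk_size that share `overlap` tokens.

    The final window may be shorter than chunk_size.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks: List[List[str]] = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks


# Small numbers so the overlap is visible.
words = "a b c d e f g h i j".split()
for chunk in chunk_tokens(words, chunk_size=4, overlap=1):
    print(chunk)
```

The overlap keeps a dosage or contraindication that straddles a boundary intact in at least one chunk, at the cost of storing some tokens twice.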

Frontend. React with TypeScript, Firebase Authentication for Google OAuth, deployed on Firebase Hosting. The dashboard shows live session status, completed SOAP notes, captured images, EHR sync status, and a full audit trail.

Deployment. The backend runs as a containerized service on Google Cloud Run with autoscaling. GitHub Actions handles CI/CD — Docker images push to Google Artifact Registry, the frontend deploys to Firebase Hosting. Everything triggers on push to main.

Architecture

Challenges we ran into

Getting the AI to stay silent was harder than getting it to talk. Our first prototype had Minerva responding to everything in detail — including the clinician talking to the patient. We spent significant effort tuning the Proactive Audio behavior and the system prompt so Minerva distinguishes who is being addressed and stays quiet by default.

Wake word training on a Pi Zero was a journey. We chose OpenWakeWord over commercial alternatives to avoid vendor lock-in. Training five custom models meant ~45 minutes of Piper TTS synthesis per model.

Bluetooth audio on the Pi Zero is fragile. Pairing, maintaining connection, and handling audio streaming over Bluetooth while simultaneously running wake word detection and camera capture pushed the Pi Zero's 512MB of RAM to its limits. We spent more time debugging bluetoothctl pairing failures and audio routing than we would like to admit.

Latency is unforgiving in emergencies. A three-second delay when a nurse asks about a drug dosage during a critical moment is unacceptable. Using Gemini's native audio model instead of a chained pipeline was a latency win. But we also had to optimize WebSocket frame sizes, camera capture resolution, and audio buffer management on the Pi to keep the full round-trip responsive.
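One piece of the buffer management mentioned above is cutting the microphone stream into fixed-size frames before sending them over the WebSocket. The sketch below shows that arithmetic only. The audio format (16 kHz, 16-bit mono) and the 80 ms frame length are assumptions for illustration; the write-up does not state the values the team settled on.

```python
from typing import List, Tuple


def frame_pcm(
    buffer: bytes,
    sample_rate: int = 16000,   # assumed sample rate
    frame_ms: int = 80,         # assumed frame duration
    bytes_per_sample: int = 2,  # 16-bit mono PCM
) -> Tuple[List[bytes], bytes]:
    """Split raw PCM into full frames plus a leftover tail.

    The tail is returned so the caller can prepend it to the next read
    instead of sending a short frame.
    """
    frame_bytes = sample_rate * frame_ms // 1000 * bytes_per_sample
    n_full = len(buffer) // frame_bytes
    frames = [buffer[i * frame_bytes:(i + 1) * frame_bytes] for i in range(n_full)]
    remainder = buffer[n_full * frame_bytes:]
    return frames, remainder


frames, tail = frame_pcm(bytes(6000))
print(len(frames), len(frames[0]), len(tail))
```

Smaller frames lower the delay before audio leaves the device but add per-message overhead, which is the trade-off being tuned.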

Clinical grounding cannot be an afterthought. A generic LLM gives generic medical advice — "you might want to consider antibiotics." We needed: "Per ETAT protocol, this child classifies as emergency — administer first dose of ceftriaxone 50mg/kg IM before transport." Building the RAG pipeline with WHO documents, tuning chunk sizes, and routing the right knowledge to the right session type was essential for clinical credibility.

Bilingual clinical speech is messy. Clinicians in India routinely mix English medical terminology with Tamil or Hindi conversational speech mid-sentence. Configuring the Gemini Live API for bilingual support (English and Tamil) and ensuring it handled code-switching without garbling transcription or losing clinical meaning was a non-trivial challenge.

Accomplishments that we're proud of

The 22-minute-to-3-minute compression is real. We measured the full clinical documentation workflow, from observation through alerting and documentation to EHR sync, and Minerva genuinely reduces it by 20-25x. A complete, structured, protocol-grounded medical record is generated from a natural voice conversation without the clinician doing anything beyond their normal clinical work.

Five custom wake words running locally on a \$45 device. No cloud dependency for wake word detection. No vendor lock-in. The Pi Zero 2W runs OpenWakeWord inference on custom ONNX models entirely on-device, responding reliably to five distinct voice commands in real clinical noise environments.

Priority-based specialist notifications from natural speech. A clinician says "Send Dr. Patel a message about this patient" mid-encounter, and the Notification Agent reasons about urgency and routes via the appropriate channel — WhatsApp for routine, SMS for time-sensitive, voice call with retries for critical. This happens during the live session without breaking the clinical flow.

End-to-end structured EHR sync. The encounter goes from spoken words → AI-generated SOAP note → OpenEHR flat JSON composition → EHRbase — entirely automatically. The EHR Agent reasons about field mapping and stores its reasoning trace, so every record is auditable.
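A "flat" composition is a single-level JSON object whose keys are paths into a template. The sketch below shows the general shape of mapping a SOAP note into such an object. Every path and the template ID are hypothetical placeholders: the real keys depend on the openEHR template deployed in EHRbase, which the write-up does not describe.

```python
import json
from typing import Dict

SOAP_SECTIONS = ("subjective", "objective", "assessment", "plan")


def soap_to_flat(soap: Dict[str, str], template_id: str = "minerva_encounter") -> Dict[str, str]:
    """Map a SOAP note to a flat path -> value dict.

    `template_id` and the "soap_note/..." paths are illustrative only;
    a real mapping must use the paths defined by the actual template.
    """
    missing = [name for name in SOAP_SECTIONS if not soap.get(name)]
    if missing:
        raise ValueError("missing SOAP sections: " + ", ".join(missing))
    return {f"{template_id}/soap_note/{name}": soap[name] for name in SOAP_SECTIONS}


note = {
    "subjective": "Fever for three days.",
    "objective": "Temp 39.2 C, RR 48.",
    "assessment": "Classified per protocol as emergency.",
    "plan": "Refer urgently.",
}
print(json.dumps(soap_to_flat(note), indent=2))
```

Rejecting incomplete notes before mapping keeps partially filled records out of the EHR and gives the audit trail a clear failure reason.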

It works in Tamil. For community health workers in rural Tamil Nadu, India, Minerva responds in their own language, grounded in WHO protocols they were trained on. A health worker who has never used a computer can now access specialist-level clinical reasoning through her voice alone.

What we learned

The most important lesson was not technical. It was this: the best clinical AI is the one the clinician forgets is there.

If the device demands attention, it fails. If the AI interrupts at the wrong moment, it fails. If documentation requires a separate step, it fails. The only way it works is if doing clinical work is the input, and everything else — documentation, decision support, alerts, records — happens as a byproduct. Invisible utility is the design principle that guided every decision.

We learned that clinical discipline matters more than model capability. Gemini is extraordinarily capable, but raw intelligence without protocol grounding is dangerous in healthcare. The agent must not suggest diagnoses, and it must not hallucinate. Framing every recommendation as "Per ETAT protocol..." rather than "I think..." is therefore not just better UX; it is the difference between a tool clinicians trust and one they rightfully reject.

We learned that hardware constraints force good design. The Pi Zero's limitations — 512MB RAM, single-core effective performance for most tasks, unreliable Bluetooth — forced us to push all heavy computation to the cloud and keep the device as a pure sensor and audio endpoint.

We learned that a phone is not a suitable clinical interface. This was our strongest conviction going in, and building the prototype confirmed it. Pulling out a phone, unlocking it, opening an app, holding it steady for a photo — every one of those steps is a moment the clinician's hands leave the patient and eyes leave the wound. A wearable that captures what you see and hears what you say, with zero manual interaction, is not an incremental improvement. It is a categorically different experience.

What's next for Minerva — The Healthcare Copilot that saves lives

Deeper clinical knowledge. We want to expand beyond the current WHO documents to include national treatment guidelines for specific countries, drug interaction databases, and locally relevant disease epidemiology. The RAG pipeline is built for this — more documents mean better grounding. On the AI side, we want to fine-tune on real clinical conversations — how doctors actually talk, the shorthand, the mid-sentence language switching.

Better hardware and fine-tuning. The Pi Zero prototype works, but it is not completely reliable. We want to build something with a better mic for noisy ERs, bone conduction audio so the clinician still hears the patient, and a battery that lasts.

Pilot with real clinicians. The most important next step. We want to deploy Minerva with a small group of community health workers in one district and emergency staff in one hospital — to learn what works, what breaks, and what we never anticipated. The technology is ready. The real test is whether a health worker puts this on in the morning and refuses to take it off.

Built With

- docker

- ehrbase (openehr sandbox)

- fastapi

- firebase-authentication

- firebase-hosting

- gemini-2.5-flash

- gemini-live-2.5-flash-native-audio

- gemini-live-api

- github-actions

- google-agent-development-kit (adk)

- google-artifact-registry

- google-cloud

- google-cloud-build

- google-cloud-logging

- google-cloud-run

- mongodb-atlas

- mongodb-atlas-vector-search (768-dim embeddings)

- openwakeword (custom onnx models)

- pi-camera-module-5mp

- picamera2

- piper-tts

- pyaudio

- pydantic

- python

- raspberry-pi-zero-w

- raspberry-pi-zero-2w

- react

- tailwindcss

- text-embedding-004

- twilio (whatsapp, sms, voice call)

- typescript

- vertex-ai

- vite

- websockets
