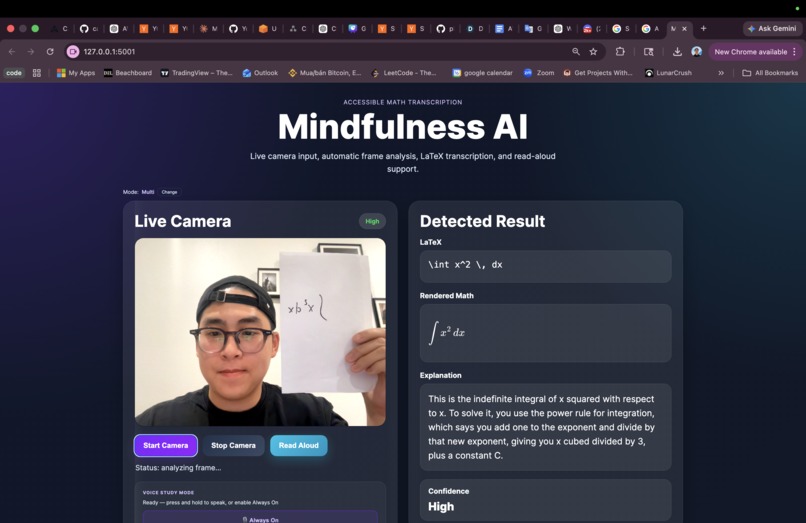

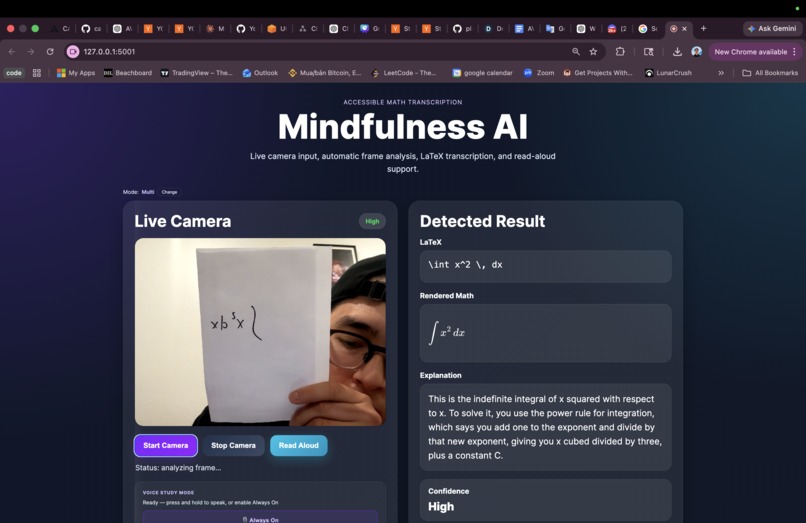

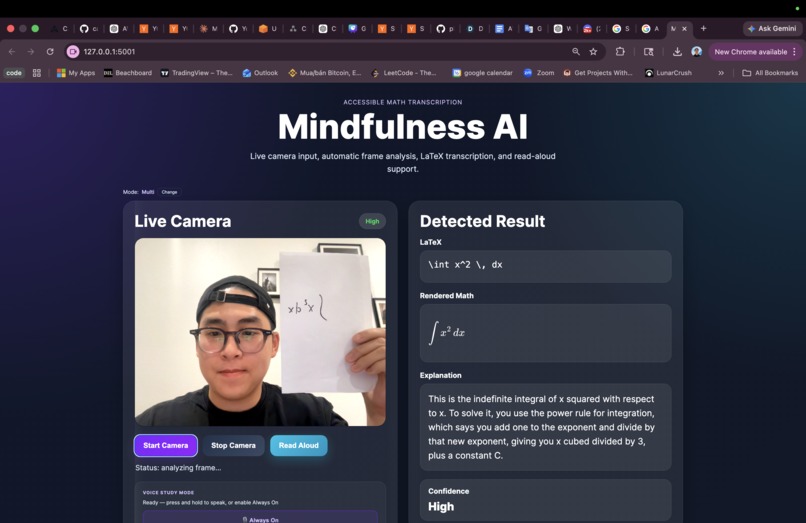

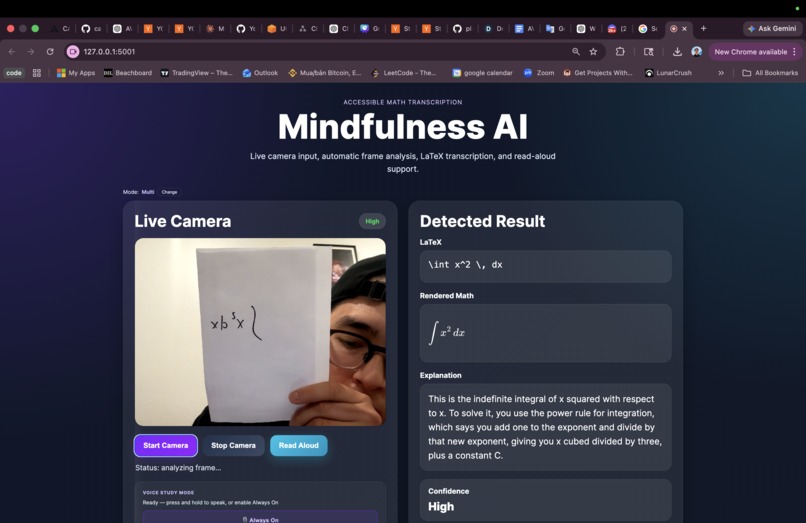

Mindfulness AI platform with multiple modes for any disabled student

-

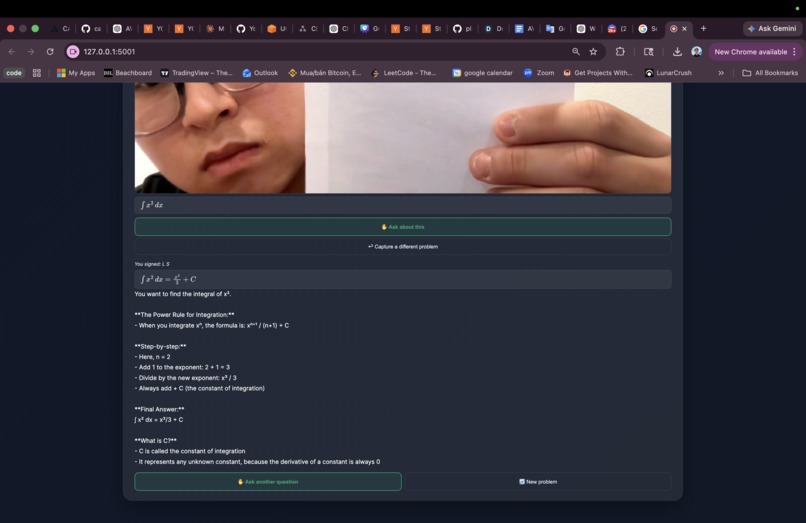

Taking a picture of any equation or problem in real time

-

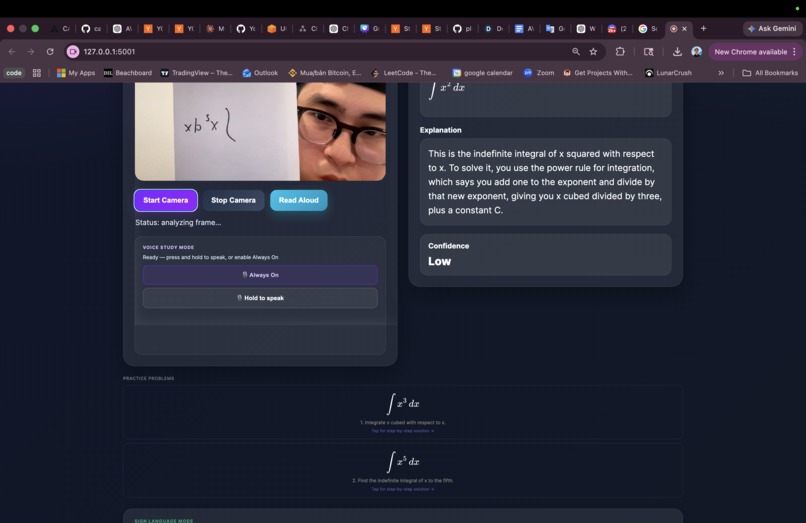

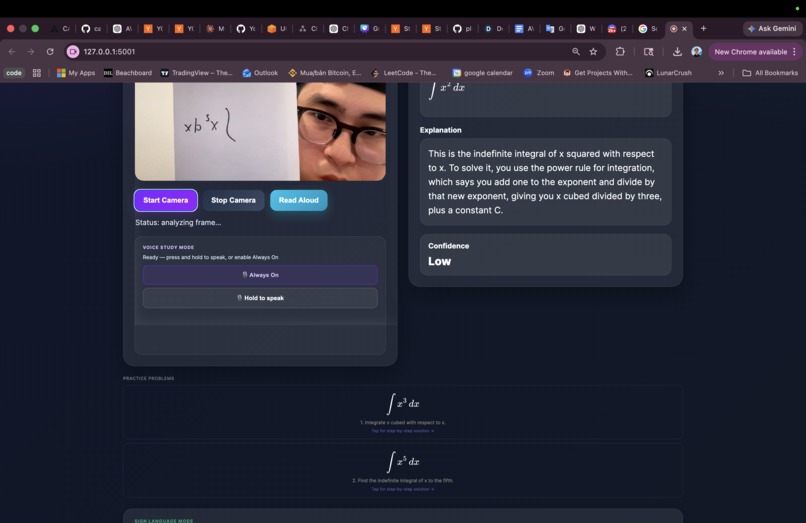

Real-time voice support and recommendations

-

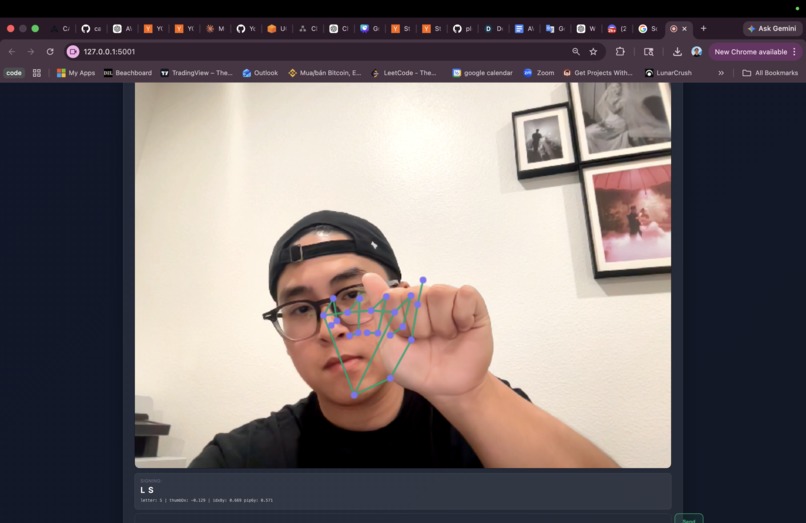

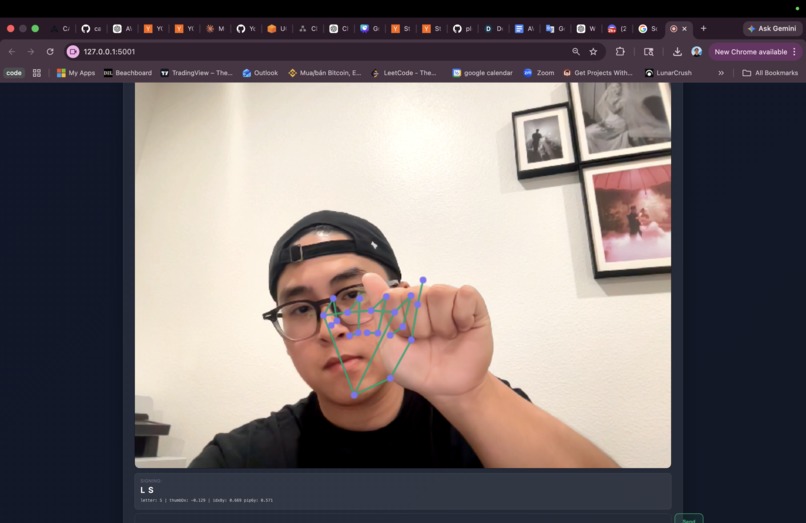

American Sign Language support is also built in, so users can sign any question they need to ask

-

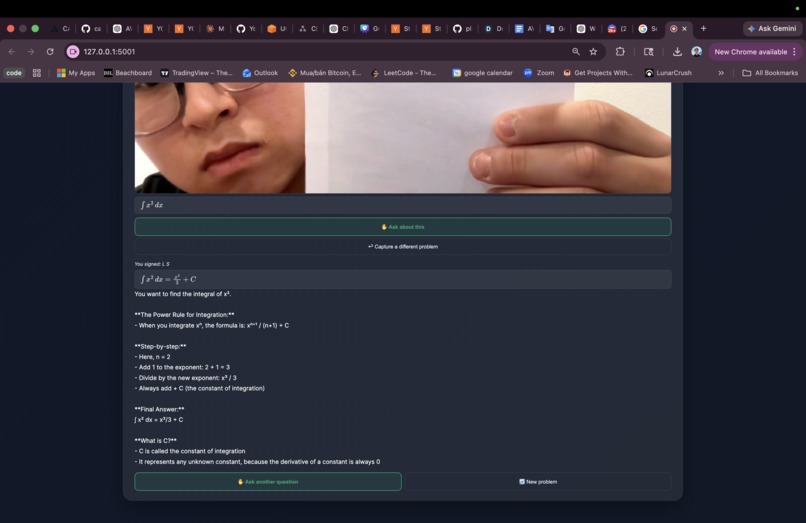

Giving disabled students a response and step-by-step guidance on how to solve any problem

Inspiration

STEM education is already hard — but for students with visual impairments, motor disabilities, hearing loss, or cognitive differences, it can feel completely out of reach. A student who cannot easily read a whiteboard, speak aloud, or hear a lecture shouldn't have to choose between accessibility tools and learning math. We wanted to build something that meets every student exactly where they are, adapting in real time to their needs. Mindfulness AI was born from that question: what if your study assistant actually understood you?

What it does

Mindfulness AI is an accessibility-first STEM study companion that turns any handwritten or printed math problem into a fully accessible learning experience.

- Point your camera at any equation — the app instantly transcribes it into LaTeX, renders it visually, and generates a plain-English explanation

- Ask follow-up questions by voice — a conversational AI tutor answers in natural speech via Amazon Polly

- Sign your question using ASL fingerspelling — deaf and hard-of-hearing students can communicate with the app entirely in sign language, no voice required

- Practice problems are auto-generated based on what you just studied, with step-by-step solutions on demand

- Every interaction adapts to a disability profile (visual, motor, cognitive, hearing, or multi) chosen at onboarding — font sizes, output modes, and interaction styles all shift accordingly

How we built it

The frontend is pure HTML/CSS/JavaScript — no framework overhead — served by a Flask backend deployed on AWS Lambda behind Amazon API Gateway.

Math recognition uses Amazon Bedrock (Claude Sonnet) with multimodal vision: a camera frame is sent as a base64 image alongside a structured prompt, and the model returns:

$$\text{image} \xrightarrow{\text{Bedrock}} \{\, \texttt{latex},\ \texttt{explanation},\ \texttt{confidence} \,\}$$
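
As a rough illustration of that round trip, the sketch below builds a vision request body in the Anthropic Messages format that Bedrock accepts for Claude models, then validates the three fields in the reply. The prompt wording, the function names `build_request` and `parse_reply`, and the confidence clamping are assumptions made for this example, not the project's actual code.

```python
import base64
import json


def build_request(image_bytes: bytes, media_type: str = "image/jpeg") -> dict:
    """Build a Messages-format body carrying one base64 image and a prompt."""
    # Hypothetical prompt; the project's structured prompt is not shown in this write-up.
    prompt = (
        "Transcribe the math in this image. Reply with only a JSON object "
        'with keys "latex", "explanation", and "confidence" (0 to 1).'
    )
    return {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": 1024,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image", "source": {
                    "type": "base64",
                    "media_type": media_type,
                    "data": base64.b64encode(image_bytes).decode("ascii"),
                }},
                {"type": "text", "text": prompt},
            ],
        }],
    }


def parse_reply(model_text: str) -> dict:
    """Pull the JSON object out of the model's text and check its keys."""
    start, end = model_text.find("{"), model_text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object in model reply")
    data = json.loads(model_text[start:end + 1])
    missing = {"latex", "explanation", "confidence"} - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    # Clamp so downstream UI code can treat confidence as a 0..1 value.
    data["confidence"] = min(1.0, max(0.0, float(data["confidence"])))
    return data
```

In a backend like the one described, `json.dumps(build_request(frame))` would be passed as the `body` of a `bedrock-runtime` `invoke_model` call, and the text of the first content block in the response would go to `parse_reply`.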

Voice mode streams answers sentence-by-sentence using Server-Sent Events — Bedrock generates the full answer first, then each sentence is synthesized independently by Amazon Polly (Neural engine) and streamed as base64 audio chunks so the first word plays within ~400ms.
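
The sentence-by-sentence flow can be sketched as a generator that emits one Server-Sent Event per sentence. This is a minimal illustration under stated assumptions: the `synthesize` callable stands in for a Polly call, the regex splitter is deliberately naive, and the payload field names and the closing `done` event are invented for the example.

```python
import base64
import json
import re
from typing import Callable, Iterator

# Response headers commonly used for SSE; "X-Accel-Buffering: no" asks
# buffering proxies such as nginx to pass chunks through immediately.
SSE_HEADERS = {
    "Content-Type": "text/event-stream",
    "Cache-Control": "no-cache",
    "X-Accel-Buffering": "no",
}


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' when whitespace follows."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def sse_audio_events(answer: str, synthesize: Callable[[str], bytes]) -> Iterator[str]:
    """Yield one SSE frame per sentence, each carrying base64-encoded audio."""
    for index, sentence in enumerate(split_sentences(answer)):
        payload = {
            "index": index,
            "text": sentence,
            # synthesize() is a stand-in for the text-to-speech call.
            "audio": base64.b64encode(synthesize(sentence)).decode("ascii"),
        }
        yield f"data: {json.dumps(payload)}\n\n"
    # Hypothetical terminator so the client knows the stream is complete.
    yield "event: done\ndata: {}\n\n"
```

In Flask, a generator like this can be returned as `Response(sse_audio_events(...), headers=SSE_HEADERS)`. Because each sentence is synthesized only when its frame is requested, the client can begin playing the first chunk before later sentences have been converted.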

Sign language mode runs entirely in the browser — TensorFlow.js with the MediaPipe Hands model detects 21 hand landmarks per frame, and a custom ASL fingerspelling classifier maps joint angles to letters A–Z with no server call needed. The assembled text is then sent to Bedrock for a caption-only response.
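
To show what "joint angles" means here, the sketch below computes the angle at one finger joint from three landmarks. The project runs this step in the browser in JavaScript; Python is used only to illustrate the geometry. The landmark indices follow the MediaPipe Hands numbering for the index finger, while the 160-degree threshold and the function names are assumptions, not the classifier's real values.

```python
import math

# MediaPipe Hands landmark indices for the index finger.
INDEX_MCP, INDEX_PIP, INDEX_DIP, INDEX_TIP = 5, 6, 7, 8


def joint_angle(a, b, c) -> float:
    """Angle in degrees at point b, between segments b->a and b->c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        return 0.0  # degenerate case: two landmarks coincide
    cos = max(-1.0, min(1.0, dot / norm))  # guard against rounding drift
    return math.degrees(math.acos(cos))


def index_is_extended(landmarks, threshold_deg: float = 160.0) -> bool:
    """Treat the index finger as extended when its PIP joint is nearly straight."""
    angle = joint_angle(
        landmarks[INDEX_MCP], landmarks[INDEX_PIP], landmarks[INDEX_DIP]
    )
    return angle >= threshold_deg
```

A fingerspelling classifier along these lines would compute one such angle per joint and compare the resulting vector against per-letter thresholds.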

Challenges we ran into

- SSE + Lambda: API Gateway doesn't natively pass SSE streams through, so we had to enable Lambda response streaming and carefully tune `X-Accel-Buffering` headers to prevent proxies from collapsing the stream

- ASL accuracy: Fingerspelling classification from raw landmarks is noisy. Getting reliable per-letter accuracy required carefully tuning angle thresholds for visually similar letters like M/N, R/U, and G/H

- Latency on voice stream: Getting the first audio chunk to arrive fast enough to feel responsive required splitting Bedrock's response into sentences before synthesis, so Polly starts on sentence 1 while sentences 2–3 are still being processed

- Disability profiles: Designing a system prompt that meaningfully changes Claude's output style (verbosity, jargon level, formatting) for five different disability profiles took many iterations
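
One way to picture the profile-driven prompt from the last item is a small table of style settings kept apart from the code that assembles the system prompt. Everything in this sketch is hypothetical: the `ProfileStyle` fields, the per-profile values, the fallback to `multi`, and `build_system_prompt` are illustrative and do not reflect the prompts the team settled on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProfileStyle:
    verbosity: str
    jargon: str
    formatting: str


# Hypothetical style settings for the five profiles named in this write-up.
PROFILES = {
    "visual": ProfileStyle("detailed", "standard", "spell out every symbol in words"),
    "motor": ProfileStyle("concise", "standard", "short replies that need little scrolling"),
    "cognitive": ProfileStyle("brief", "plain", "one idea per numbered step"),
    "hearing": ProfileStyle("moderate", "standard", "text captions only, no audio cues"),
    "multi": ProfileStyle("brief", "plain", "speech-friendly wording plus captions"),
}


def build_system_prompt(profile: str) -> str:
    """Turn a profile name into style instructions for the tutor model."""
    # Unknown profiles fall back to "multi" (an assumption for this sketch).
    style = PROFILES.get(profile, PROFILES["multi"])
    return (
        "You are a STEM tutor. "
        f"Verbosity: {style.verbosity}. "
        f"Jargon level: {style.jargon}. "
        f"Formatting: {style.formatting}."
    )
```

With this layout, adding a profile means adding one entry to `PROFILES` without touching the code that calls the model.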

Accomplishments that we're proud of

- A single app that genuinely serves five distinct accessibility needs with zero mode-switching friction

- Sub-500ms first-audio latency on the voice stream in real-world testing

- ASL fingerspelling working reliably in-browser with no external API — fully offline after model load

- Clean separation between profile logic and AI logic, making it easy to add new disability profiles

What we learned

- Amazon Bedrock's multimodal API is remarkably capable at math OCR — even messy handwriting on a phone camera

- Browser-side ML (TF.js) has gotten good enough to replace server-side inference for real-time landmark detection

- Accessibility is not a feature you bolt on at the end — designing for it from day one shaped every architectural decision we made

What's next for Mindfulness AI

- DynamoDB integration to persist user profiles and study history across sessions

- Expand ASL support beyond fingerspelling to word-level sign recognition

- Support for more STEM subjects beyond math — chemistry equations, circuit diagrams, biology diagrams

- Mobile-native app (React Native) for students who primarily study on their phones

- Teacher dashboard to track which concepts students are struggling with most

Built With

- amazon-web-services

- bedrock

- lambda

- polly

- speechrecognition
