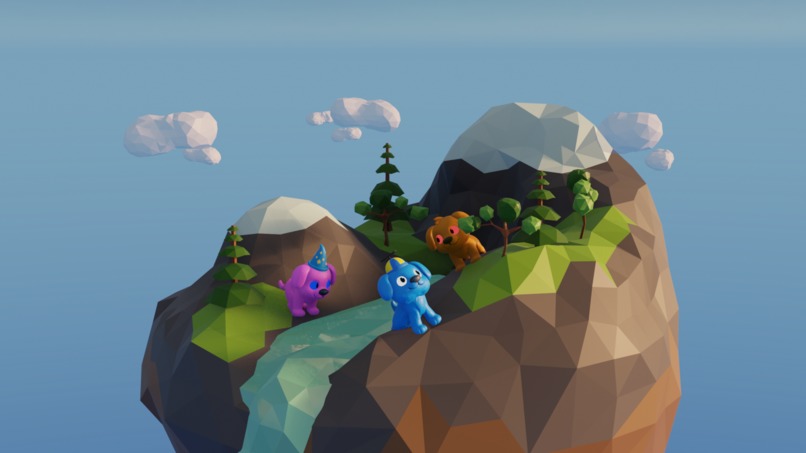

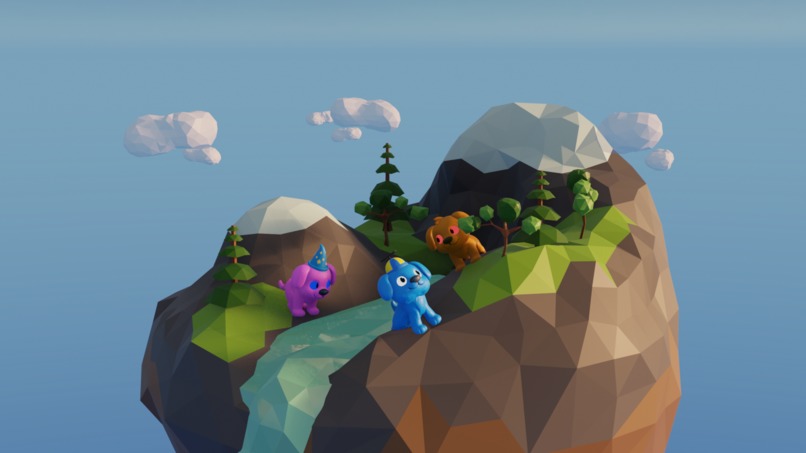

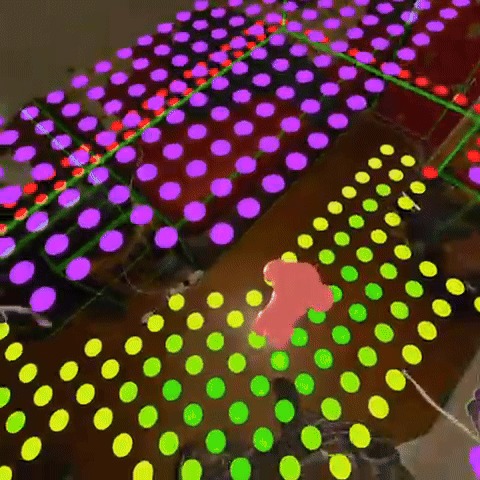

One of the first milestones: realising the space. The next challenge is having the Petz interact with the environment.

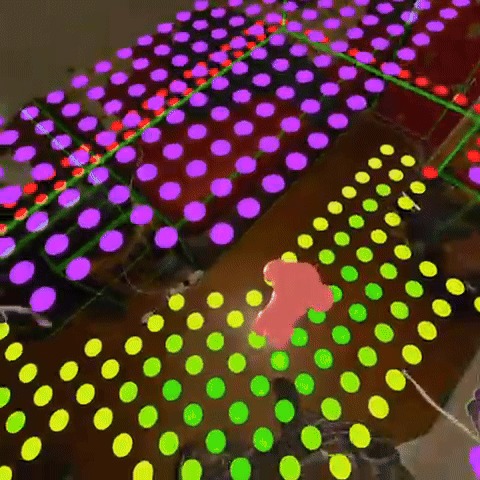

Laying the groundwork for A* pathfinding so that the Petz can intelligently navigate the environment!

Banner for MetaStore

Had a friend try to break the game, which also shows off the new furniture occlusion shaders.

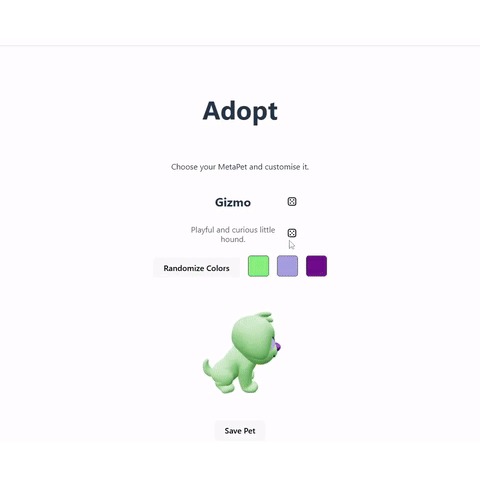

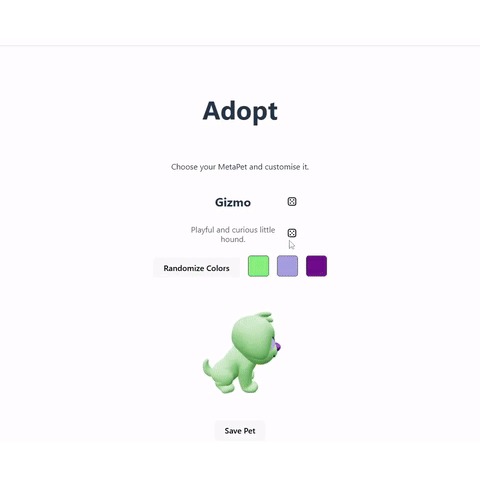

Unlock and equip accessories as your pet levels up. Customize colors and sizes.

Real-time 3D navigation mesh built from room scanning, enabling intelligent pet movement, with furniture islands and culling of unreachable areas.

A* pathfinding in 3D! The pet prefers to idle on the ground but sometimes jumps up, and will always prefer smaller jumps first.
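
One way to get this "prefer the ground, prefer smaller jumps" behaviour is to fold the height change into the A* step cost. The sketch below is only an illustration in Python, not the project's Kotlin code. It assumes a grid where each cell stores its surface height (or `None` if blocked), and the names `find_path`, `jump_penalty` and `max_jump` are hypothetical.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def find_path(heights: List[List[Optional[float]]], start: Cell, goal: Cell,
              jump_penalty: float = 5.0, max_jump: float = 1.0) -> Optional[List[Cell]]:
    """A* over a height grid; height changes cost extra, so flat routes win."""
    rows, cols = len(heights), len(heights[0])
    if heights[start[0]][start[1]] is None or heights[goal[0]][goal[1]] is None:
        return None

    def heuristic(cell: Cell) -> float:
        # Manhattan distance never overestimates: every step costs at least 1.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0.0, start)]
    best_cost: Dict[Cell, float] = {start: 0.0}
    came_from: Dict[Cell, Cell] = {}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            next_height = heights[nr][nc]
            if next_height is None:
                continue  # blocked cell
            jump = abs(next_height - heights[r][c])
            if jump > max_jump:
                continue  # too high to jump in one go
            # Flat steps cost 1; jumps cost more in proportion to their height.
            new_cost = cost + 1.0 + jump_penalty * jump
            if new_cost < best_cost.get((nr, nc), float("inf")):
                best_cost[(nr, nc)] = new_cost
                came_from[(nr, nc)] = current
                heapq.heappush(open_heap, (new_cost + heuristic((nr, nc)), new_cost, (nr, nc)))
    return None
```

With a high `jump_penalty` the pet walks around a low obstacle; with a penalty of zero it hops straight over. Because the penalty scales with height, two small jumps are never more expensive than one large jump over the same total height.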

Inspiration

We wanted to bring back the joy of virtual pet ownership, but reimagined for the spatial computing era where your pet actually lives in your room, not just on a screen.

What it does

MetaPetz lets you adopt a 3D pet that roams your real space. Throw a bone toy that bounces off your actual walls, watch your pet wander around your furniture, and interact using hand tracking.

How we built it

We used the Meta Spatial SDK for the core mixed reality framework, MRUK for room scanning, custom shaders for room visualization, physics for toy interactions, and Jetpack Compose for UI panels. Firebase and Vite power the website and handle reading and writing pet data.

Challenges we ran into

The hardest part was extracting the top-down topology of the furniture and aligning it to a 2D grid, so that the A* pathfinding algorithm lines up with the 3D world in any orientation. Getting MRUK room meshes to align properly with physical space was also tricky: we discovered that mixing reference space modes breaks spatial anchor alignment. I also hit a bug trying to get Meta developer studio to run on my PC because of an older CPU. Finally, I wanted to extract the joint and finger locations within the Kotlin layer, but this was inaccessible even in C++ because that data is not exposed by the SDK; you would need to hook into the OpenXR process.
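
The grid alignment problem can be sketched roughly as below. This is an illustration in Python rather than the project's Kotlin. It assumes a Y-up world, a grid origin and yaw angle derived from the scanned room, and a fixed cell size; `GridFrame`, `world_to_cell` and `cell_to_world` are hypothetical names, not part of the Meta Spatial SDK or MRUK.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class GridFrame:
    """Hypothetical room-aligned frame for a top-down navigation grid."""
    origin_x: float   # world X of the grid corner, in metres
    origin_z: float   # world Z of the grid corner, in metres
    yaw: float        # rotation of the room about the up axis, in radians
    cell_size: float  # edge length of one grid cell, in metres

    def world_to_cell(self, x: float, z: float) -> Tuple[int, int]:
        """Drop the height, undo the room rotation, and return (col, row)."""
        dx, dz = x - self.origin_x, z - self.origin_z
        # Rotate by -yaw so the room's axes line up with the grid axes.
        c, s = math.cos(-self.yaw), math.sin(-self.yaw)
        local_x = c * dx - s * dz
        local_z = s * dx + c * dz
        return (math.floor(local_x / self.cell_size),
                math.floor(local_z / self.cell_size))

    def cell_to_world(self, col: int, row: int) -> Tuple[float, float]:
        """Return the world-space (x, z) centre of a cell."""
        local_x = (col + 0.5) * self.cell_size
        local_z = (row + 0.5) * self.cell_size
        # Rotate by +yaw to go from grid space back to world space.
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        return (self.origin_x + c * local_x - s * local_z,
                self.origin_z + s * local_x + c * local_z)
```

Keeping both directions in one place means the pathfinder can work purely in cell indices, while the pet's world position is only computed when a path is turned back into movement.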

Accomplishments that we're proud of

Making our own 3D models and having them be highly customisable. A working grab-and-throw mechanic with realistic physics (despite this being unsupported within the meta-spatial-sdk), and seamless room boundary visualization that feels natural in mixed reality. I am most proud of the 2D navigation grid that functions in a 3D space. It uses an AABB for the largest rectangle in a structure of compound rectangles and cuts off the walkable space at the walls. It then culls the smaller spaces and keeps the largest walkable area. In theory it can work for any room dimensions, even irregular ones.
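
The "keep the largest walkable area" step can be illustrated with a flood fill over the grid. This is a minimal sketch in Python, not the project's actual code. It assumes the grid is already a 2D array of booleans (walkable or not) and that cells connect in four directions; `largest_walkable_region` is a hypothetical name.

```python
from collections import deque
from typing import List, Set, Tuple

Cell = Tuple[int, int]


def largest_walkable_region(walkable: List[List[bool]]) -> Set[Cell]:
    """Return the cells of the largest 4-connected walkable region."""
    rows = len(walkable)
    cols = len(walkable[0]) if rows else 0
    seen: Set[Cell] = set()
    best: Set[Cell] = set()
    for r in range(rows):
        for c in range(cols):
            if not walkable[r][c] or (r, c) in seen:
                continue
            # Breadth-first flood fill from this unvisited walkable cell.
            region = {(r, c)}
            seen.add((r, c))
            queue = deque([(r, c)])
            while queue:
                cr, cc = queue.popleft()
                for nr, nc in ((cr + 1, cc), (cr - 1, cc), (cr, cc + 1), (cr, cc - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and walkable[nr][nc] and (nr, nc) not in seen):
                        seen.add((nr, nc))
                        region.add((nr, nc))
                        queue.append((nr, nc))
            # Keep only the biggest region; smaller pockets are culled.
            if len(region) > len(best):
                best = region
    return best
```

Any cell outside the returned set is treated as unreachable, so small pockets cut off by walls or furniture never become pathfinding targets.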

What we learned

Spatial computing requires thinking in world space, not screen space. Small coordinate system mismatches become immediately obvious when virtual objects don't line up with reality.

What's next for MetaPetz

Multiplayer pet playdates, a full care system with stats and leveling, and more interactive toys and pet behaviors as we push toward a concept that could one day sit comfortably alongside Meta’s own mixed reality experiences.
