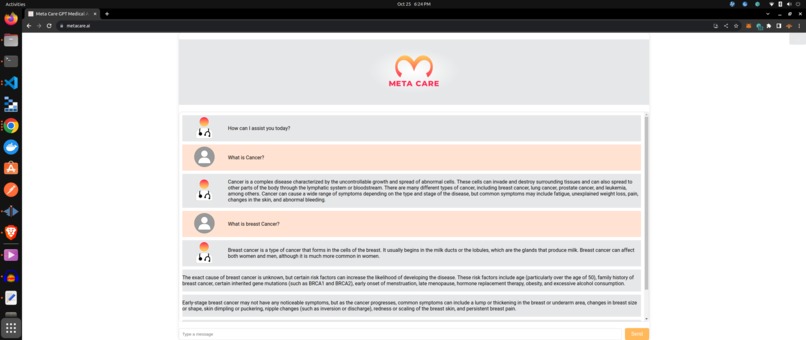

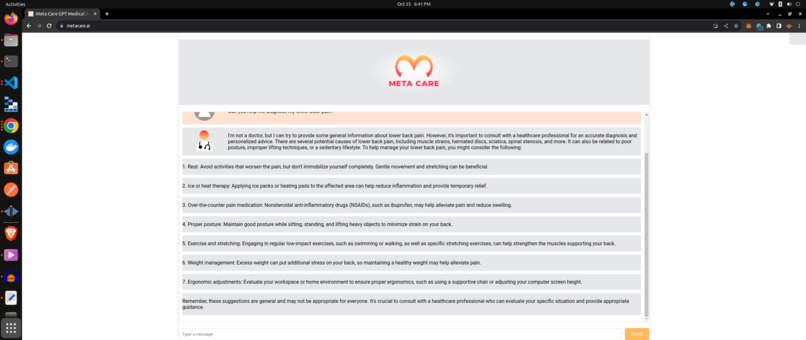

I am Metacare AI - Your Helpful Medical Assistant

Ask me about a medical-related topic.

I don't answer chats that are not medical questions. I don't like PII/PHI.

I am helpful, but not a substitute for a doctor or healthcare professional.

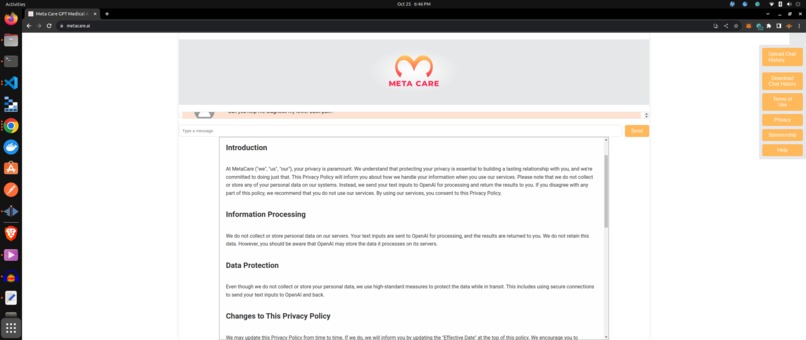

I respect your privacy and the confidentiality of the topic, by not requiring a login or storing any chat information.

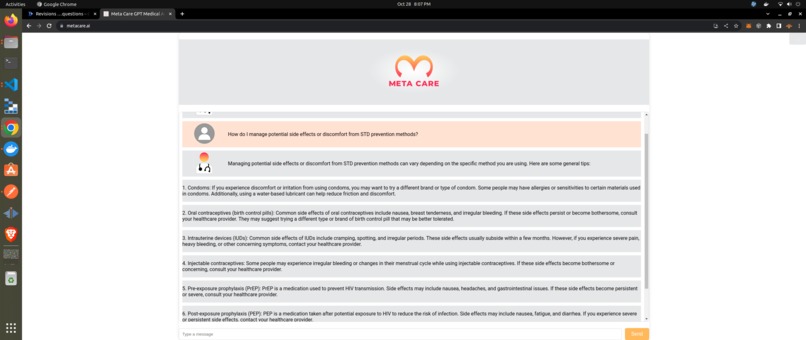

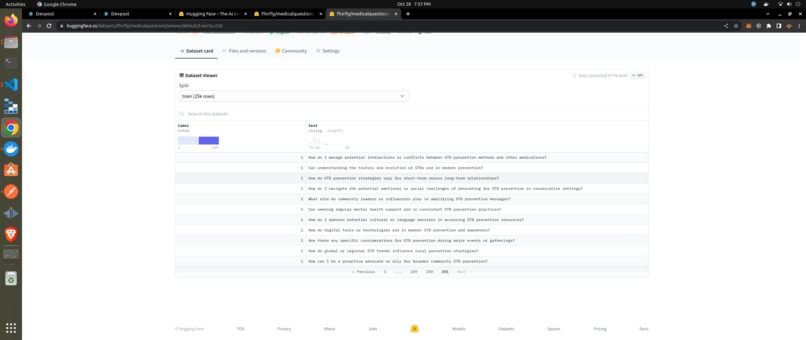

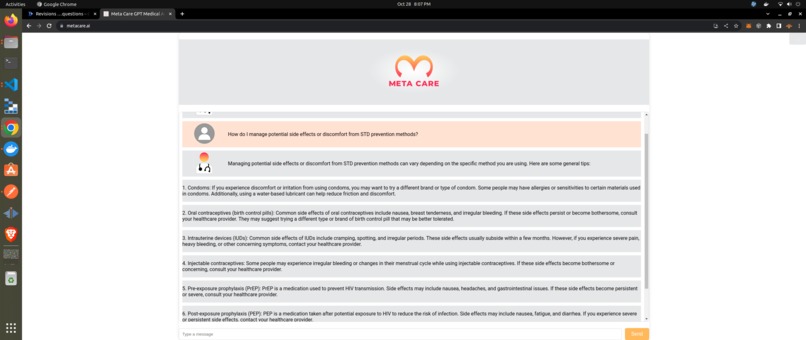

STI/STD Questions were added to my filter's data model to allow me to answer a broad range of questions about them.

Now I can answer questions about STIs in a private & secure manner.

Inspiration

To provide humans with a private means to chat with Large Language Models about medical topics.

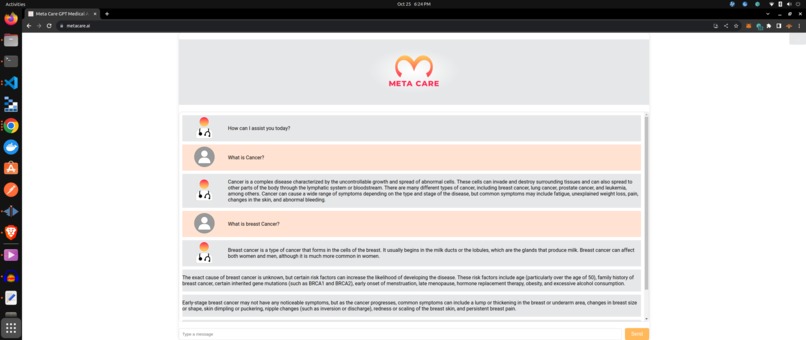

What it does

Lets people chat about sensitive medical education topics (for example, STDs) with ChatGPT without prying eyes. This enables ChatGPT to chat with the patient about STDs without the patient having any privacy concerns.

How we built it

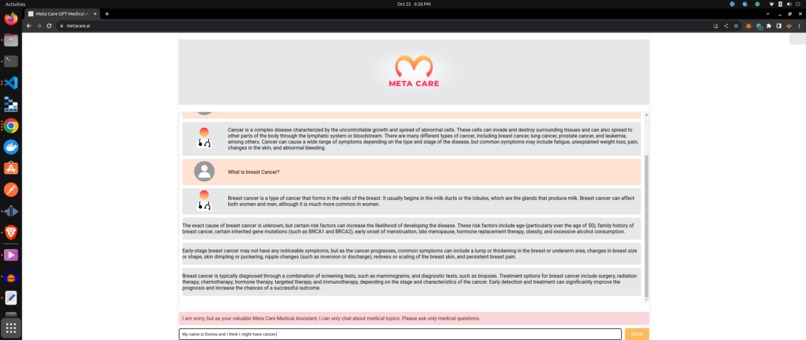

Topic Filter

Metacare.AI leverages ChatGPT for generative LLM functions. Metacare.AI has its own service API layer backed by a fine-tuned BioBERT model that is trained to act as a firewall against spam or off-topic questions. Instead of forwarding a bad chat message to ChatGPT, it returns a customizable message telling the user that it is not designed to chat about anything other than medical questions.
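A minimal sketch of how such a gate could sit in front of the LLM call. It is not the project's actual code: the function names, the `"medical"` label, the threshold, and the toy keyword classifier are all assumptions made for illustration. In a real deployment, `classify` would wrap the fine-tuned BioBERT model and `forward` would call ChatGPT.

```python
import re
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical label and reply text; the real model's labels may differ.
MEDICAL_LABEL = "medical"
OFF_TOPIC_REPLY = "I am only designed to chat about medical questions."


@dataclass
class GateResult:
    forwarded: bool  # True if the message was passed on to the LLM
    reply: str       # Text returned to the user


def gate_message(
    message: str,
    classify: Callable[[str], Tuple[str, float]],
    forward: Callable[[str], str],
    threshold: float = 0.5,
    off_topic_reply: str = OFF_TOPIC_REPLY,
) -> GateResult:
    """Forward `message` only if the classifier labels it as medical."""
    label, score = classify(message)
    if label == MEDICAL_LABEL and score >= threshold:
        return GateResult(forwarded=True, reply=forward(message))
    # Off-topic or low-confidence messages never reach the LLM.
    return GateResult(forwarded=False, reply=off_topic_reply)


# Toy stand-ins so the sketch runs without a model or network access.
def toy_classify(text: str) -> Tuple[str, float]:
    keywords = {"symptom", "symptoms", "sti", "std", "treatment", "infection"}
    words = set(re.findall(r"[a-z]+", text.lower()))
    return (MEDICAL_LABEL, 0.9) if words & keywords else ("other", 0.9)


def toy_forward(text: str) -> str:
    return f"[LLM answer to: {text}]"
```

Passing the classifier and the forwarder in as callables keeps the gate itself testable and lets the off-topic reply stay customizable, as described above.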

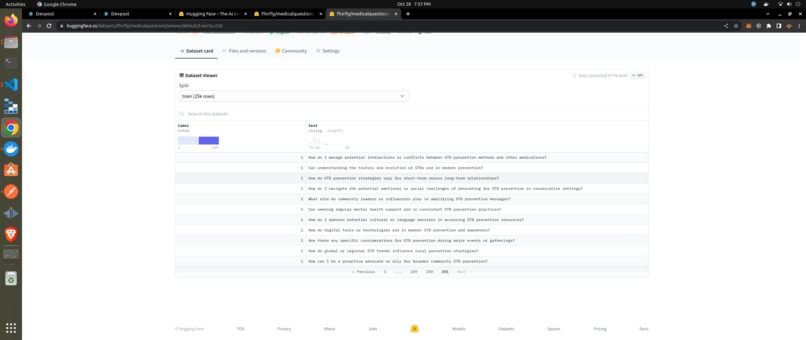

Open licensed on Hugging Face

We created datasets and trained models. The trained medical questions model is MIT-licensed and available as a transformer at: HuggingFace Model. The dataset used in training the model is available at: HuggingFace FHIRFLY Medical Questions Dataset. If you scroll to the end of the dataset, you will see the STI/STD questions that were added to the model during the hack.

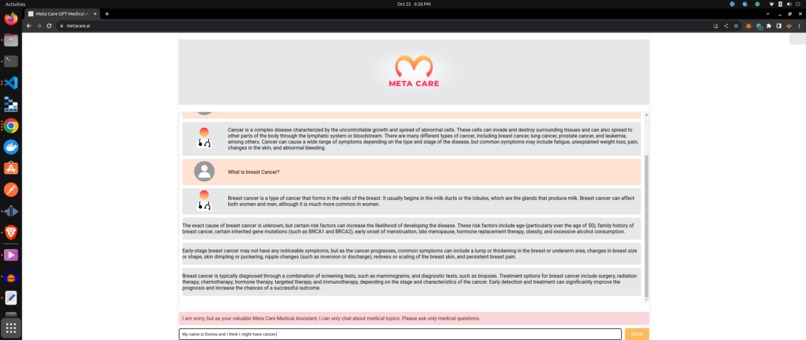

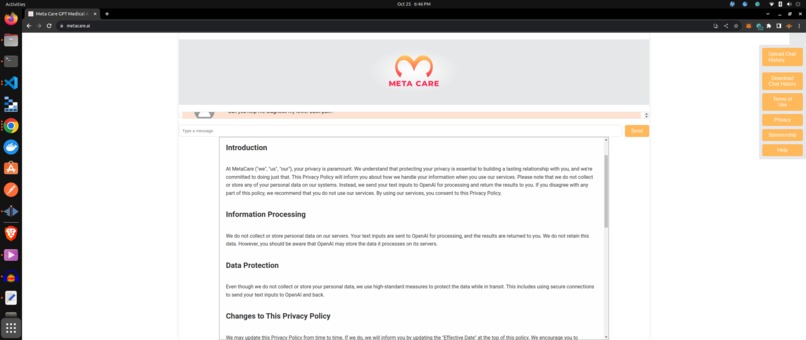

Privacy/PII/PHI

Metacare.AI solves the PHI privacy problem of using ChatGPT in three ways:

- It is not designed to record data. Metacare.AI creates no logs, and it is contemplated to be open-sourced for public inspection or even certified to HITRUST/SOC 2.

- The Topic Filter mentioned above is currently biased against PII such as names, meaning it normally won't pass PHI to ChatGPT and will instead return an off-topic chat message.

- Metacare.AI deploys a third layer of protection against PII by using the open source Microsoft Presidio GitHub repository. Presidio performs an AI-based analysis of the chat and anonymizes any and all PII. This final layer ensures that no PHI ever goes to ChatGPT, regardless of where it came from.
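The analyze-and-anonymize step in the last bullet can be illustrated with a deliberately simplified stand-in. This sketch does not use Presidio. It uses only standard-library regular expressions, and the entity names and patterns are assumptions chosen for the example. A real analyzer such as Presidio covers many more entity types (including names) and relies on NLP rather than patterns alone.

```python
import re
from typing import List, Tuple

# Simplified stand-in patterns (assumptions). They catch only a few
# well-structured identifiers and are not a replacement for a real analyzer.
PATTERNS: List[Tuple[str, "re.Pattern[str]"]] = [
    ("EMAIL_ADDRESS", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("US_SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE_NUMBER", re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")),
]


def anonymize(text: str) -> str:
    """Replace each detected span with a typed placeholder such as <US_SSN>."""
    for entity_type, pattern in PATTERNS:
        text = pattern.sub(f"<{entity_type}>", text)
    return text


if __name__ == "__main__":
    print(anonymize("Call me at 555-123-4567 about my test results."))
```

Typed placeholders keep the sentence readable for the LLM while removing the identifying value itself, so the medical part of the question still reaches the model.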

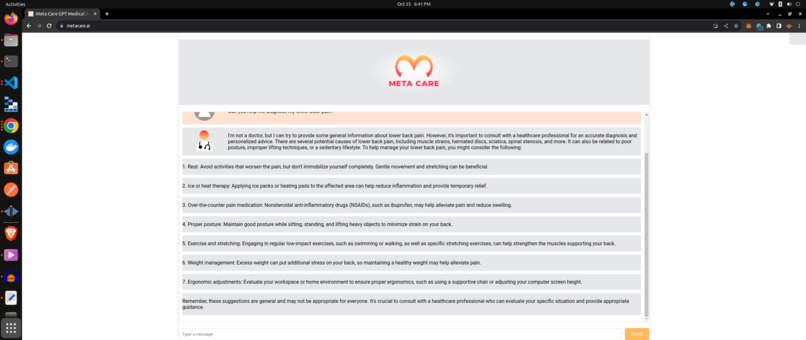

RAG

The analyze and anonymize layer enables Retrieval Augmented Generation (RAG) of patient data (like FHIR) to occur in chat, so a user can actually chat with ChatGPT about their medical record without being concerned about privacy. This medical record can be retrieved on behalf of the user from a FHIR API, analyzed and anonymized, and safely submitted to ChatGPT's API.
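As a rough sketch of that flow, the snippet below takes FHIR resources that have already been fetched (as plain dictionaries), drops directly identifying top-level elements, and builds a prompt from what remains. The field names are real elements of the FHIR Patient resource, but the choice of which to drop, the helper names, and the prompt wording are assumptions. Free text and nested references would still need the analyzer pass described above.

```python
import json
from typing import Any, Dict, List

# Top-level elements that directly identify a person. The names come from
# the FHIR Patient resource; the selection itself is an assumption.
IDENTIFYING_FIELDS = {
    "identifier", "name", "telecom", "address",
    "birthDate", "photo", "contact",
}


def strip_identifiers(resource: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a FHIR resource without identifying top-level fields."""
    return {k: v for k, v in resource.items() if k not in IDENTIFYING_FIELDS}


def build_prompt(question: str, resources: List[Dict[str, Any]]) -> str:
    """Combine the user's question with de-identified record context."""
    context = [strip_identifiers(r) for r in resources]
    return (
        "Answer using only this de-identified record context:\n"
        + json.dumps(context, indent=2, sort_keys=True)
        + "\n\nQuestion: "
        + question
    )


if __name__ == "__main__":
    patient = {"resourceType": "Patient", "name": [{"family": "Doe"}], "gender": "female"}
    condition = {"resourceType": "Condition", "code": {"text": "Chlamydia infection"}}
    print(build_prompt("What does my diagnosis mean?", [patient, condition]))
```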

Fine-tuning and low-temperature ChatGPT

We have fine-tuned our instance of ChatGPT using low-temperature API calls, fine-tuning, and feature engineering techniques that reduce hallucinations.
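To make the low-temperature part concrete, here is a small sketch that only builds the request body for a chat completion, without sending it. The `model`, `messages`, and `temperature` fields are standard Chat Completions parameters. The specific model name, the temperature of `0.0`, and the system prompt are assumptions for illustration, since the project's actual settings are not stated.

```python
from typing import Any, Dict

# Hypothetical system prompt; the project's real prompt is not published here.
SYSTEM_PROMPT = (
    "You are a medical education assistant. "
    "You are not a substitute for a doctor or healthcare professional."
)


def build_chat_request(
    user_message: str,
    model: str = "gpt-3.5-turbo",  # assumed model name
    temperature: float = 0.0,      # assumed value; lower means less random output
) -> Dict[str, Any]:
    """Build a Chat Completions request body with a low sampling temperature."""
    if not 0.0 <= temperature <= 2.0:
        raise ValueError("temperature must be between 0 and 2")
    return {
        "model": model,
        "temperature": temperature,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_message},
        ],
    }
```

A low temperature makes the model's output more deterministic, which is one common way to reduce, though not eliminate, made-up answers.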

Deployment to GCloud from Docker

Everything is built in Docker images that are deployed to GCloud.

Challenges we ran into

Training AI models to act as guardrails is not without its own difficulties. Obtaining datasets, continuous training and testing of trained models are all challenging.

Accomplishments that we're proud of

The guardrails we created around ChatGPT are very effective and open up many possibilities for this technology without sacrificing privacy.

What we learned

How to implement topic and privacy guardrails using AI.

What's next for Metacare.AI

Open-source the source code and model training data for public inspection, and pursue relevant certification. Promote its use with the public. Continue to integrate more user-driven features and experiences into the chat, like FHIR record retrieval.
