Mentora: Revolutionizing Education with Multi-Agent LLMs and Dynamic React Visualizations

🎯 Inspiration

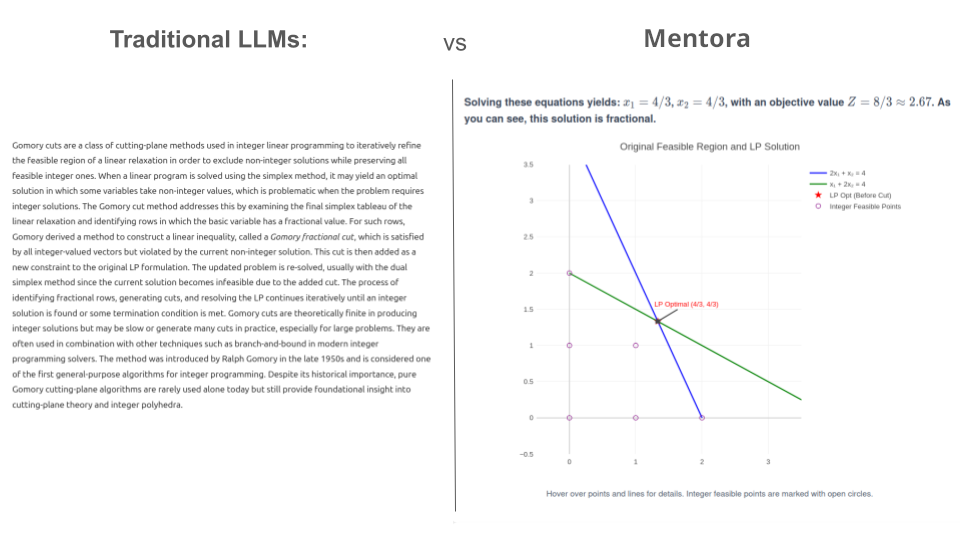

Traditional AI-generated educational content is static, text-heavy, and uninspiring. We’ve all seen LLMs produce walls of text that explain concepts without really showing them.

With Mentora, our vision was clear: build an AI-powered learning platform that generates rich, interactive educational experiences, with dynamic visualizations, charts, and interactive components instead of text-only lessons.

The Code with Kiro Hackathon challenged us to rethink how AI can support education.

Why should AI stop at text when it can create full interactive experiences?

🚀 What it does

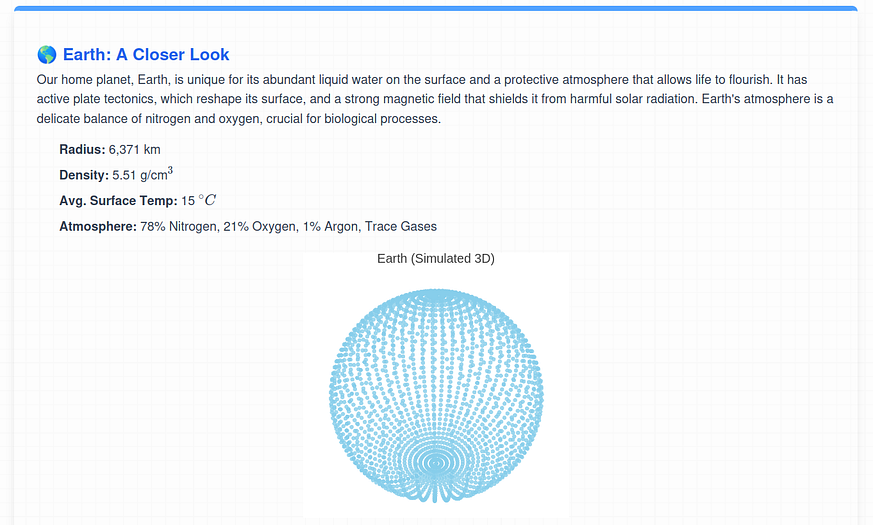

Mentora transforms simple queries like “I want to learn about the solar system” into fully interactive courses that include:

- Dynamic 3D Visualizations - Watch planets orbit in real time, explore Earth’s layers, or step through algorithm animations

- Interactive Charts & Graphs - Data comes alive with Plotly-powered scientific visualizations and Recharts components

- Adaptive Learning Paths - AI-generated course structures that adapt to your learning style

- React-Powered Interactivity - Every explanation includes interactive components you can manipulate

- Smart Assessment - Quizzes that also use React components for engaging, visual problem-solving

Live Demo: mentora-kiro.de

🏗️ How we built it

Revolutionary Multi-Agent Architecture

Mentora coordinates several specialized AI agents powered by Gemini:

- Course Planner Agent - Generates overall course structure and learning objectives

- Content Creator Agent - Produces chapter content with educational explanations

- Explainer Agent - Creates interactive React components with visualizations

- Quiz Generator Agent - Develops adaptive assessments using React components

- Interactive Chat Agent - Provides contextual support throughout learning

- Image Agent - Sources relevant visual content via external APIs

[Figure: Agent Diagram - Agent Structure]
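The coordination between the content-producing agents can be pictured as a simple pipeline over shared course state. The following is a minimal Python sketch, not Mentora's actual code: the `llm` callable stands in for a Gemini request, and `CourseContext`, `plan_course`, `build_chapter`, and `generate_course` are hypothetical names. The chat and image agents are left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical LLM interface: takes a prompt, returns text. In Mentora this
# would be a Gemini request; injecting it lets the sketch run offline.
LLM = Callable[[str], str]


@dataclass
class CourseContext:
    """Shared state every agent reads, so chapters stay coherent."""
    topic: str
    outline: list[str] = field(default_factory=list)
    chapters: dict[str, str] = field(default_factory=dict)
    explainers: dict[str, str] = field(default_factory=dict)
    quizzes: dict[str, str] = field(default_factory=dict)


def plan_course(llm: LLM, ctx: CourseContext) -> None:
    # Course Planner Agent: ask for one chapter title per line.
    raw = llm(f"List chapter titles for a course on {ctx.topic}, one per line.")
    ctx.outline = [line.strip() for line in raw.splitlines() if line.strip()]


def build_chapter(llm: LLM, ctx: CourseContext, title: str) -> None:
    # Every prompt carries the full outline, so each agent knows where
    # this chapter sits in the overall course.
    outline = "; ".join(ctx.outline)
    # Content Creator Agent: the chapter text itself.
    ctx.chapters[title] = llm(f"Course outline: {outline}\nWrite the chapter '{title}'.")
    # Explainer Agent: an interactive React component for the chapter.
    ctx.explainers[title] = llm(
        f"Write a React component that visualizes: {ctx.chapters[title]}"
    )
    # Quiz Generator Agent: a React quiz based on the same chapter.
    ctx.quizzes[title] = llm(f"Write a React quiz component for: {ctx.chapters[title]}")


def generate_course(llm: LLM, topic: str) -> CourseContext:
    ctx = CourseContext(topic=topic)
    plan_course(llm, ctx)
    for title in ctx.outline:
        build_chapter(llm, ctx, title)
    return ctx
```

Passing the whole outline into every prompt is one simple way to keep agents aware of the overall course while each one works on its own task.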

The Breakthrough: React Code as LLM Output

Instead of generating static text or markdown, we made a bold decision: let the LLM output raw React code directly. Our agents generate React components using:

- Plotly for complex scientific and mathematical visualizations

- Recharts for data visualization and charts

- React-Flow for flowcharts and algorithm visualizations

- LaTeX rendering for mathematical equations

- Syntax highlighting for code examples
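Because the model's reply is treated as code, the backend first has to pull the component out of the response and check that it only imports libraries the renderer actually provides. A minimal Python sketch, assuming the reply wraps the component in a fenced code block; the npm package names in `ALLOWED_PACKAGES` are our assumptions for how the libraries above might be imported, and all function names are hypothetical.

```python
import re

# Hypothetical allowlist based on the libraries listed above (Plotly via
# react-plotly.js, Recharts, React Flow, KaTeX for LaTeX, a syntax highlighter).
ALLOWED_PACKAGES = {
    "react",
    "react-plotly.js",
    "recharts",
    "reactflow",
    "@xyflow/react",
    "react-katex",
    "katex",
    "react-syntax-highlighter",
}

FENCE = "`" * 3  # built at runtime so this snippet never contains a literal fence
# First fenced block: skip the info string (e.g. "jsx"), capture the body.
FENCE_RE = re.compile(FENCE + r"[^\n]*\n(.*?)" + FENCE, re.DOTALL)
# Static imports: `import X from "pkg"`, `import { a } from 'pkg'`, `import "pkg"`.
IMPORT_RE = re.compile(
    r"""^\s*import\s+(?:[^'"]*?\s+from\s+)?['"]([^'"]+)['"]""", re.MULTILINE
)


def extract_component(response: str) -> str:
    """Return the first fenced code block, or the whole reply if none exists."""
    match = FENCE_RE.search(response)
    return (match.group(1) if match else response).strip()


def package_root(spec: str) -> str:
    """Map an import path like 'pkg/sub/path' or '@scope/pkg/x' to its package."""
    parts = spec.split("/")
    return "/".join(parts[:2]) if spec.startswith("@") else parts[0]


def disallowed_imports(code: str) -> list[str]:
    """List every static import whose package is not on the allowlist."""
    return [
        spec for spec in IMPORT_RE.findall(code)
        if package_root(spec) not in ALLOWED_PACKAGES
    ]
```

An import allowlist only covers static `import` statements; dynamic `import()` and `require()` calls need a separate check before the code is rendered.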

Tech Stack

Backend:

- Python 3.12 with FastAPI

- MySQL + ChromaDB for data storage

- Docker containerization

Frontend:

- React with Vite

- Mantine UI components

- Tailwind CSS

- Dynamic React component rendering

Validation Pipeline:

- ESLint analysis for code quality

- Security validation to prevent code injection

- Multi-iteration feedback loops for code improvement
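The validation pipeline can be sketched as a loop that regenerates a component until every check passes, feeding the concrete problems back into the next prompt. This is a hypothetical Python sketch rather than Mentora's implementation: `BANNED_PATTERNS` is an illustrative deny-list, `generate` stands in for the agent's LLM call, and `eslint_check` assumes Node.js and an ESLint configuration that can parse JSX are installed. Regex checks are not a sandbox; they only reject obviously unsafe code before it reaches one.

```python
import json
import re
import subprocess
from typing import Callable

# Illustrative deny-list of patterns that should never appear in a component.
BANNED_PATTERNS = {
    r"\beval\s*\(": "eval() is not allowed",
    r"\bnew\s+Function\s*\(": "Function constructor is not allowed",
    r"dangerouslySetInnerHTML": "raw HTML injection is not allowed",
    r"\b(?:fetch|XMLHttpRequest)\b": "network access is not allowed",
    r"\b(?:localStorage|sessionStorage)\b|document\.cookie": "storage access is not allowed",
    r"\bimport\s*\(|\brequire\s*\(": "dynamic imports are not allowed",
}

Validator = Callable[[str], list[str]]


def security_check(code: str) -> list[str]:
    """Return one message per banned pattern found in the code."""
    return [msg for pattern, msg in BANNED_PATTERNS.items() if re.search(pattern, code)]


def eslint_check(code: str) -> list[str]:
    """Run ESLint on the component source and return its error messages."""
    result = subprocess.run(
        ["npx", "eslint", "--stdin", "--stdin-filename", "Component.jsx", "--format", "json"],
        input=code,
        capture_output=True,
        text=True,
    )
    if result.returncode == 2:  # configuration problem or internal ESLint error
        raise RuntimeError(result.stderr)
    return [
        f"line {msg.get('line')}: {msg['message']}"
        for file_report in json.loads(result.stdout)
        for msg in file_report["messages"]
        if msg.get("severity") == 2  # 2 = error, 1 = warning
    ]


def generate_validated(
    generate: Callable[[str], str],
    prompt: str,
    validators: list[Validator],
    max_iterations: int = 3,
) -> str:
    """Regenerate until every validator passes, feeding errors back to the model."""
    current_prompt = prompt
    problems: list[str] = []
    for _ in range(max_iterations):
        code = generate(current_prompt)
        problems = [p for check in validators for p in check(code)]
        if not problems:
            return code
        # Tell the model exactly what was wrong so the next attempt can fix it.
        current_prompt = (
            f"{prompt}\n\nYour previous component had these problems:\n"
            + "\n".join(f"- {p}" for p in problems)
            + "\nReturn a corrected component."
        )
    raise ValueError(f"no valid component after {max_iterations} attempts: {problems}")
```

In use, the checks are simply listed, e.g. `generate_validated(call_model, prompt, [security_check, eslint_check])`, where `call_model` is a hypothetical LLM wrapper. Capping the iterations keeps a model that never converges from looping forever.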

⚡ How we used Kiro

The entire application was built inside Kiro, and we don’t believe we could have delivered such a large project in just one month without it.

At the very beginning, we used Spec mode to brainstorm requirements, design the project foundation, and scaffold the initial codebase.

Once the base was set, we mostly worked in Vibe mode for testing ideas, debugging, and making incremental frontend updates. For more complex tasks, we switched back to Spec mode.

One of the biggest boosts to our workflow came from a custom Kiro hook we developed. Early on, small changes in our SQL schema (like adding a new field) would cause large cascading updates across endpoints and CRUD functions. To solve this, we created a hook that automatically updated all related endpoints and CRUD code whenever the schema files changed. This eliminated a huge amount of repetitive work and was a major reason we could finish the project on time.
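The cascading-update problem is easiest to see when CRUD code is derived from the schema. The sketch below is not the Kiro hook, which relies on Kiro's agent to edit the affected files; it is a hypothetical Python illustration in which every statement is generated from one `Table` definition, so adding a column updates all four statements at once. The `%s` placeholders follow the parameter style of common MySQL drivers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Table:
    """Hypothetical single source of truth for a table's columns."""
    name: str
    columns: tuple[str, ...]  # the primary key "id" is implied


def crud_statements(table: Table) -> dict[str, str]:
    """Derive parameterized CRUD SQL from the table definition."""
    cols = ", ".join(table.columns)
    placeholders = ", ".join(["%s"] * len(table.columns))
    assignments = ", ".join(f"{c} = %s" for c in table.columns)
    return {
        "create": f"INSERT INTO {table.name} ({cols}) VALUES ({placeholders})",
        "read": f"SELECT id, {cols} FROM {table.name} WHERE id = %s",
        "update": f"UPDATE {table.name} SET {assignments} WHERE id = %s",
        "delete": f"DELETE FROM {table.name} WHERE id = %s",
    }
```

Adding a field then means changing one tuple, for example `Table("courses", ("title", "description", "language"))`, instead of editing every endpoint by hand.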

🔥 Challenges we ran into

- Code Generation Reliability - Getting LLMs to consistently generate valid, executable React code was initially problematic. We solved this with a validation pipeline (ESLint analysis and security checks) and iterative feedback loops that return errors to the model for correction.

- Agent Coordination - Maintaining coherence across multiple agents while handling state was complex.

- Security Concerns - Executing AI-generated code posed security risks. We implemented comprehensive validation and sandboxing.

- Performance Optimization - Rendering complex visualizations while maintaining smooth user experience required significant optimization.

- Context Management - Agents had to keep the overall course in view while focusing on their specific tasks.

📚 What we learned

- LLMs are more capable than expected - Modern language models can generate sophisticated, functional code when properly guided and validated

- Multi-agent coordination is powerful - Splitting work across specialized agents works extremely well for structured applications like course generation

- User experience is everything - Technical innovation means nothing without intuitive, engaging user interfaces

- Validation is critical - Robust testing and validation pipelines are essential when dealing with AI-generated code

- Visual learning works - In our experience, interactive visualizations make concepts far easier to grasp than static text

🔮 What's next for Mentora

- Collaborative Learning - Multi-user courses and peer interaction features

- Advanced Analytics - Learning pattern analysis and personalized recommendations

- Mobile Applications - Native iOS and Android apps for learning on-the-go

- Global Accessibility - Multi-language support and accessibility improvements

- Flash Cards - Utilise active recall for optimal memorisation

👥 Built with ❤️ by Team Mentora

- Markus Huber - Software Architect

- Luca Bozzetti - AI Engineer

- Sebastian Rogg - Frontend Specialist & Data Analyst

👉 Try it today: mentora-kiro.de

The future of AI isn't just about generating better text - it's about generating better experiences. And with tools like Kiro making prototyping extremely fast, we're just scratching the surface of what's possible.
