Entire screenshot of website in mobile

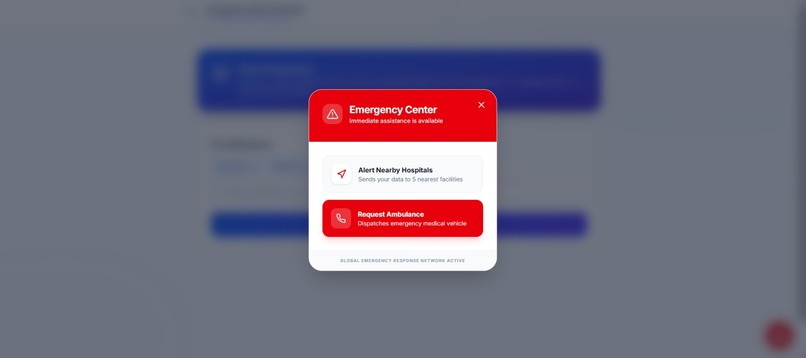

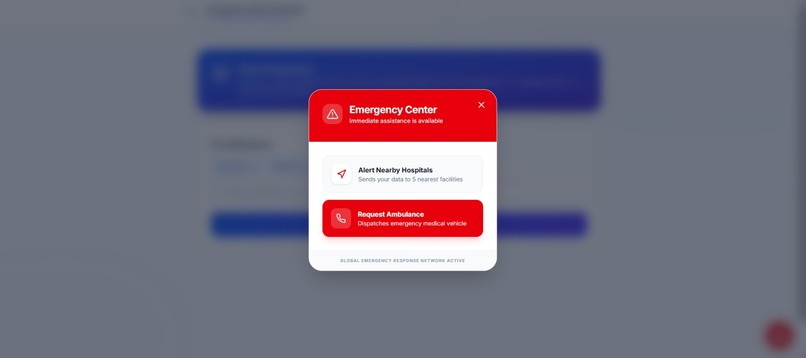

SOS feature

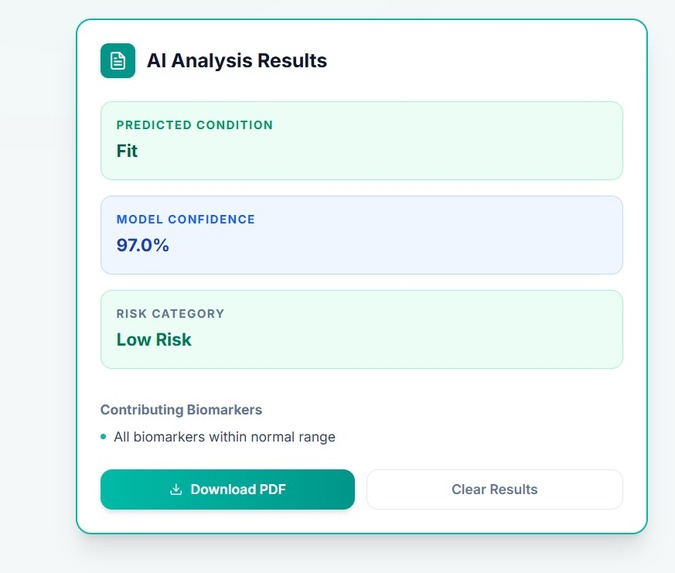

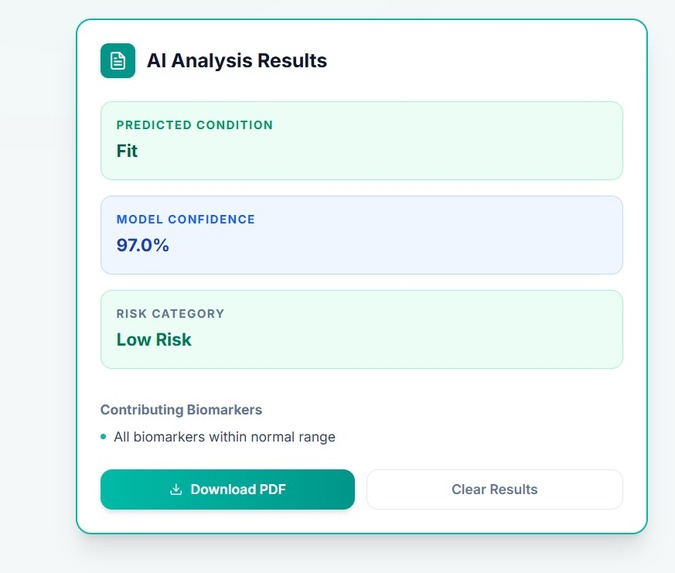

AI analysis of report uploaded by patient (In laptop or desktop)

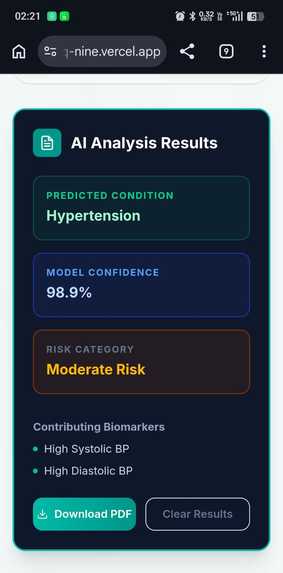

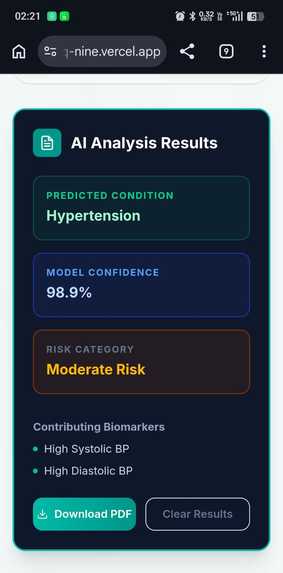

AI analysis of report uploaded by patient (In mobile)

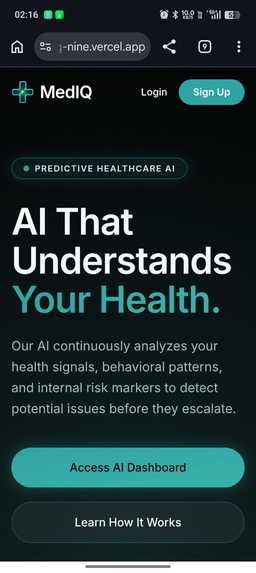

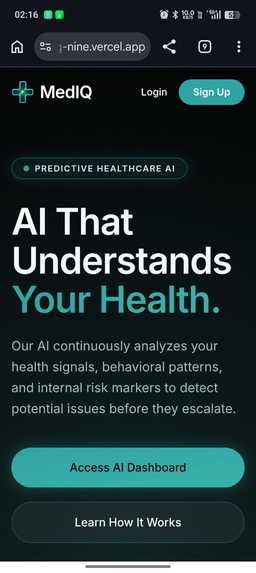

Homepage in mobile

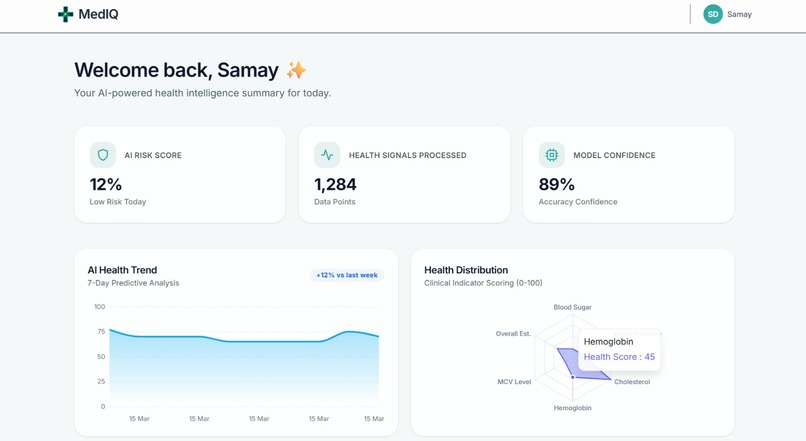

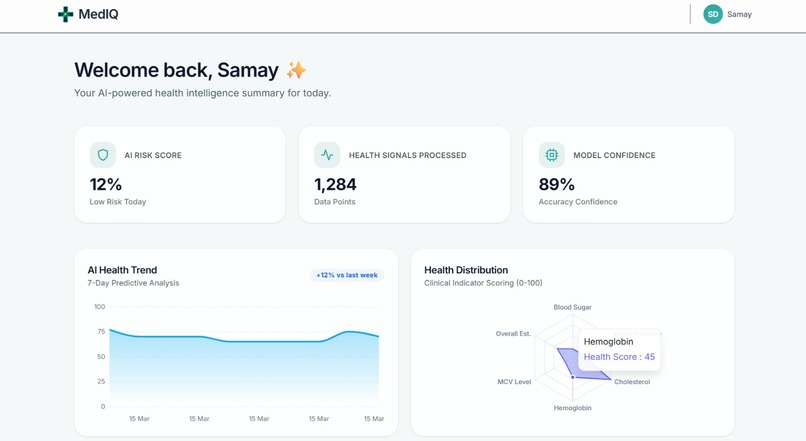

Homepage after login 2

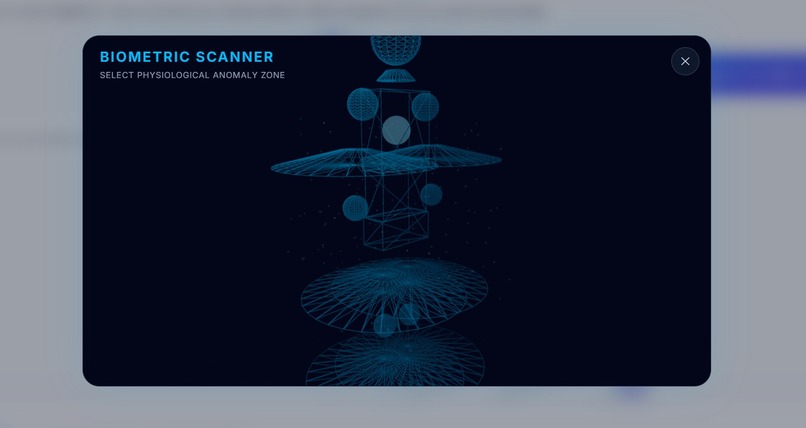

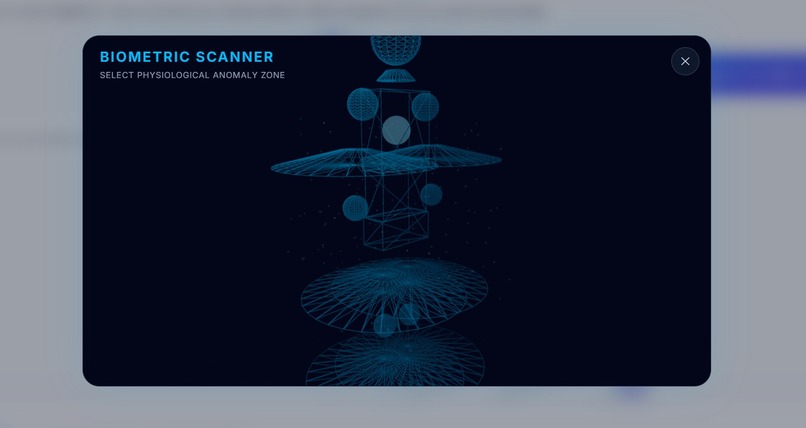

Biometric scanner that writes the prompt itself, based on the body part the user selects on the 3D model

Homepage after login 1

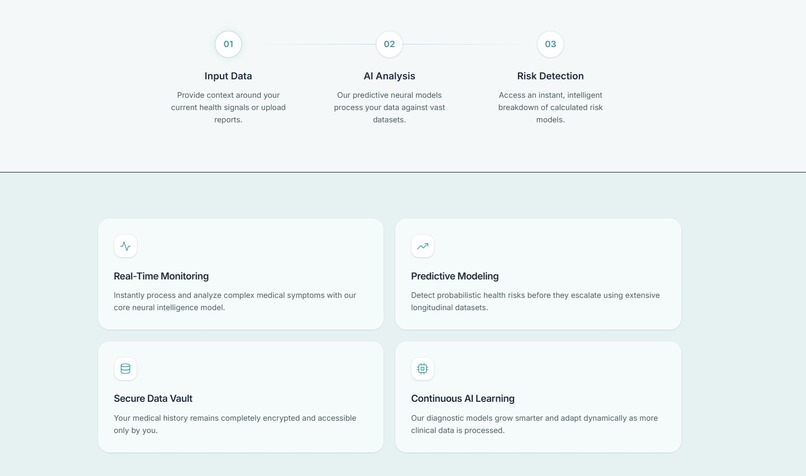

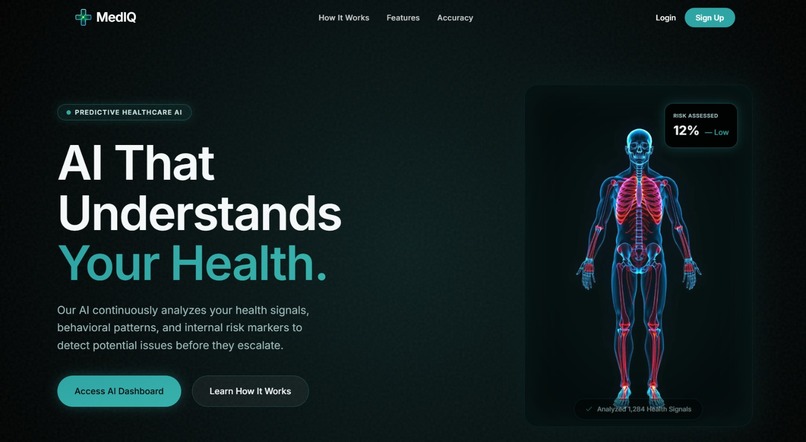

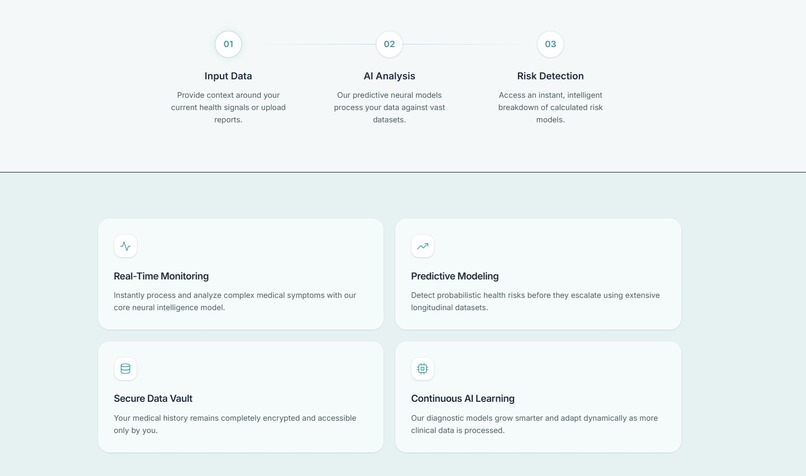

Homepage in laptop or desktop

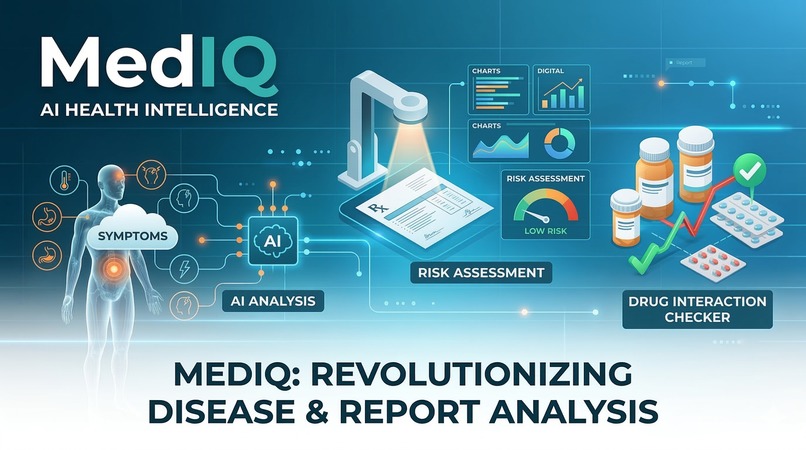

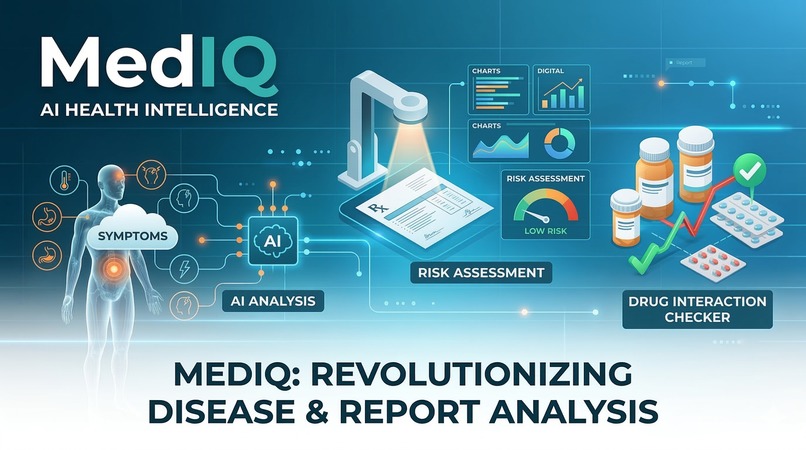

MedIQ poster

Inspiration

We realized that most people don’t really understand their health until things get serious. Whether it’s symptoms, medical terms, or knowing when something is urgent, there’s a gap between information and actual understanding. At the same time, healthcare systems are often overloaded, and people don’t always know whether their situation is serious enough to seek immediate care. So we wanted to build something that helps at exactly that point: before a decision is made.

What it does

MedIQ is an AI-powered health assistant that helps users understand their condition and decide what to do next. It includes the following features:

- Symptom analysis that uses natural language input.
- Follow-up questioning to refine understanding step by step.
- Emergency detection to identify critical cases early.
- Clinical-style reports with risk factors and medication insights.
- A full frontend + backend system that simulates a real health workflow.

The idea is to act as a first layer of triage and understanding, not as a diagnosis tool.

How we built it

We built MedIQ as a full-stack application, which includes:

- Frontend: Interactive UI for real-time symptom input and responses
- Backend (Python + FastAPI): Handles AI logic, APIs, and processing
- AI Layer: Combines probabilistic modeling, Bayesian updates, and rule-based logic
- Symptom Mapping: Uses pattern matching + fuzzy logic for real-world input
- Risk Modeling: Includes factors like age, smoking, and BMI
- System Design: Modular backend + frontend architecture with API communication

Instead of relying purely on ML, we designed a hybrid system to balance flexibility and safety.
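To make the Bayesian update step more concrete, here is a minimal sketch of how a belief over conditions could be revised after each follow-up answer. It is not MedIQ's actual code: the function name `bayes_update`, the condition and symptom names, the fallback likelihood of 0.05, and all probabilities are assumptions chosen purely for illustration and have no clinical meaning.

```python
def bayes_update(prior, likelihoods, symptom, present=True):
    """Return the posterior over conditions after one symptom answer.

    prior:       dict mapping condition -> P(condition)
    likelihoods: dict mapping condition -> {symptom: P(symptom | condition)}
    """
    unnormalized = {}
    for condition, p_condition in prior.items():
        # Unknown (condition, symptom) pairs fall back to an assumed small value.
        p_symptom = likelihoods.get(condition, {}).get(symptom, 0.05)
        evidence = p_symptom if present else 1.0 - p_symptom
        unnormalized[condition] = p_condition * evidence

    total = sum(unnormalized.values())
    if total == 0:
        # The answer contradicts every condition; keep the previous belief.
        return dict(prior)
    return {c: v / total for c, v in unnormalized.items()}


# Illustrative numbers only, not clinical data.
prior = {"common_cold": 0.6, "influenza": 0.3, "pneumonia": 0.1}
likelihoods = {
    "common_cold": {"fever": 0.2, "cough": 0.7},
    "influenza": {"fever": 0.9, "cough": 0.8},
    "pneumonia": {"fever": 0.8, "cough": 0.9},
}

# The user reports a fever, then answers "no" to a follow-up about cough.
posterior = bayes_update(prior, likelihoods, "fever", present=True)
posterior = bayes_update(posterior, likelihoods, "cough", present=False)
```

Each answer reweights the previous posterior, so the ranking can shift step by step as follow-up questions are answered. In a hybrid design like the one described above, rule-based safety checks would run alongside this update rather than being replaced by it.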

Challenges we ran into

One of the biggest challenges was handling real user input: people often don’t describe symptoms clearly, and mapping those descriptions reliably took a lot of iteration. We also had to deal with:

- Overconfident predictions
- Clinically unrealistic outputs
- Irrelevant follow-up questions
- Balancing ML predictions with rule-based safety

Another challenge was making everything work smoothly as a full system, not just as individual components, especially establishing the connection between the frontend and the backend.
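As an illustration of the input-mapping problem, the sketch below normalizes a free-text phrase and maps it to a canonical symptom using Python's standard `difflib` module. The alias table, the `map_symptom` name, and the 0.75 cutoff are hypothetical; MedIQ's real pattern matching and fuzzy logic may work quite differently.

```python
import difflib

# Hypothetical alias table: free-text phrase -> canonical symptom name.
SYMPTOM_ALIASES = {
    "headache": "headache",
    "head hurts": "headache",
    "fever": "fever",
    "high temperature": "fever",
    "shortness of breath": "dyspnea",
    "cant breathe": "dyspnea",
}


def map_symptom(phrase, cutoff=0.75):
    """Map a user phrase to a canonical symptom, or None if nothing is close."""
    # Lowercase and collapse repeated whitespace before matching.
    cleaned = " ".join(phrase.lower().split())
    if cleaned in SYMPTOM_ALIASES:
        return SYMPTOM_ALIASES[cleaned]

    # Fall back to the single closest alias above the similarity cutoff.
    matches = difflib.get_close_matches(
        cleaned, list(SYMPTOM_ALIASES), n=1, cutoff=cutoff
    )
    return SYMPTOM_ALIASES[matches[0]] if matches else None


typo_result = map_symptom("Headach")
spaced_result = map_symptom("  High   Temperature ")
unknown_result = map_symptom("xyzzy")
```

Returning `None` for unrecognized input, instead of forcing the nearest match, gives the system a natural point to ask a clarifying follow-up question rather than guess.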

Accomplishments that we're proud of

We’re proud that MedIQ is more than just a prototype model: it is a full-stack health application. Most importantly, it behaves responsibly, not just intelligently (the AI model understands its limits).

What we learned

We learned how to:

- Build a full-stack AI health system
- Implement real-time Bayesian updates during interaction
- Design emergency-first logic
- Integrate risk factors like BMI and lifestyle into predictions
- Create a system that feels interactive and usable

Most importantly, we learned that such a system has to behave responsibly, not just intelligently.
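One way to read "emergency-first logic" is that rule-based red-flag checks run before any probabilistic ranking, so a critical combination short-circuits the rest of the pipeline. The sketch below assumes that reading. The `RED_FLAGS` list, the `triage` function, and the shape of the returned dictionary are hypothetical, and the symptom combinations are illustrative only, not a clinical rule set.

```python
# Hypothetical red-flag combinations, checked in order. Illustrative only.
RED_FLAGS = [
    frozenset({"chest pain", "shortness of breath"}),
    frozenset({"loss of consciousness"}),
    frozenset({"facial droop", "slurred speech"}),
]


def triage(symptoms, rank_conditions):
    """Check emergency rules first; rank conditions only if none match.

    symptoms:        iterable of canonical symptom names
    rank_conditions: callable taking a set of symptoms and returning a ranking
    """
    reported = set(symptoms)
    for combo in RED_FLAGS:
        if combo <= reported:  # every symptom in the combination was reported
            return {
                "level": "emergency",
                "matched": sorted(combo),
                "conditions": [],
            }
    return {
        "level": "routine",
        "matched": [],
        "conditions": rank_conditions(reported),
    }


def example_ranker(reported):
    # Stand-in for the probabilistic layer; returns a fixed placeholder ranking.
    return ["common_cold"]


urgent = triage(["chest pain", "shortness of breath", "cough"], example_ranker)
routine = triage(["cough", "fever"], example_ranker)
```

Because the rules are evaluated first and the ranker is never called on an emergency match, an overconfident or miscalibrated model cannot downgrade a critical case.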

What's next for MedIQ

We want to expand MedIQ into a more complete healthcare platform.

- Improve NLP for better symptom understanding
- Use better datasets for stronger calibration
- Enhance follow-up logic to feel more natural
- Integrate real healthcare workflows (like consultation or queue systems)
- Expand features beyond symptom analysis

The goal is to make MedIQ a reliable first step in healthcare decision-making.
