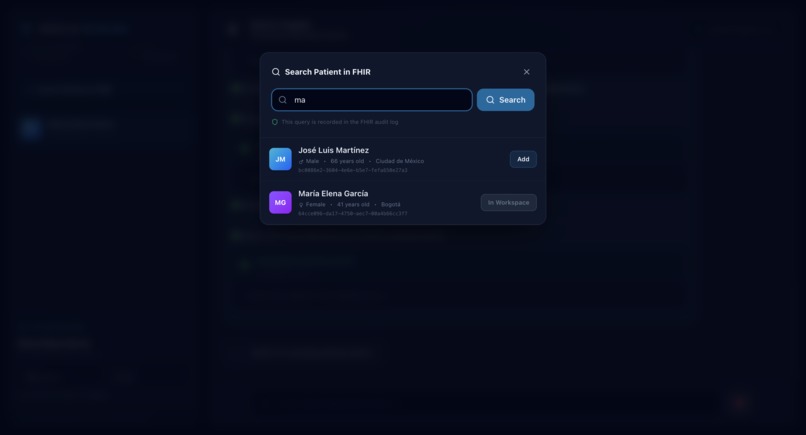

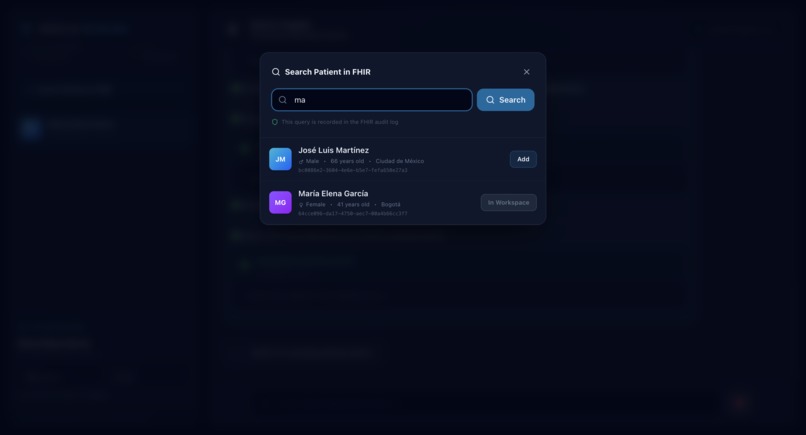

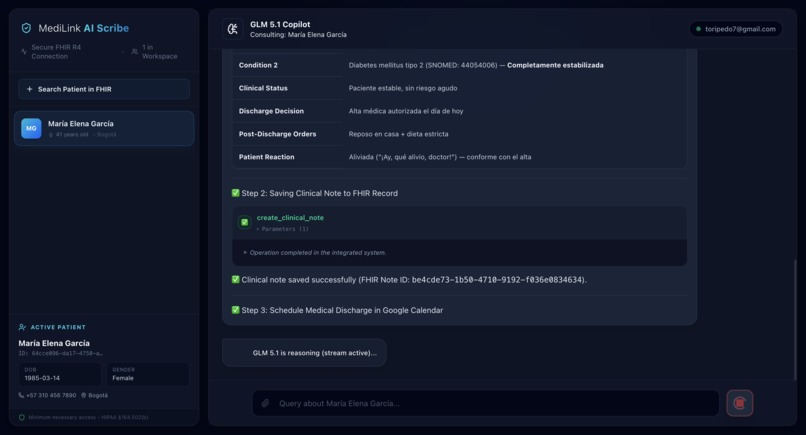

Real-time GCP FHIR integration: Securely querying clinical records using strict "Minimum Necessary" access principles.

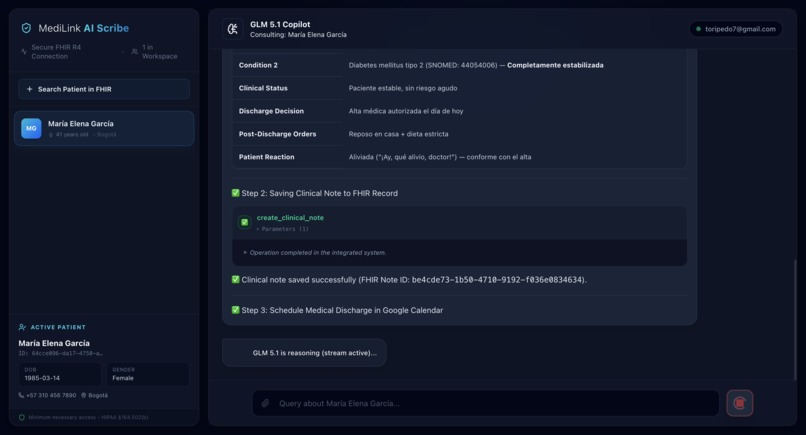

GLM 5.1 acting as a clinical co-pilot, generating comprehensive medical analysis and drug interaction warnings in real-time.

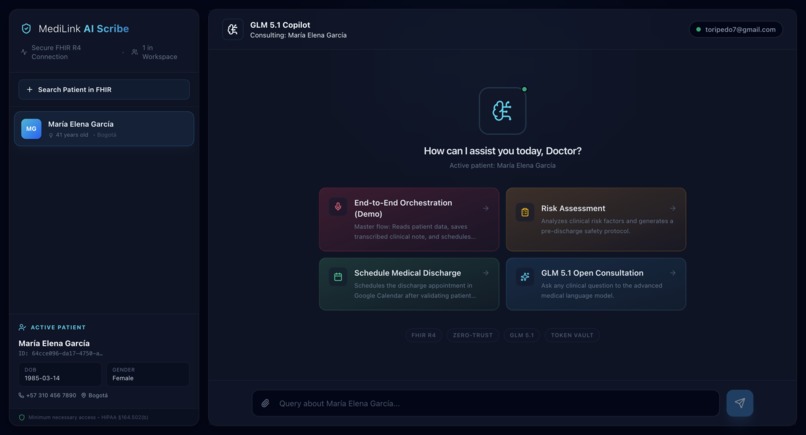

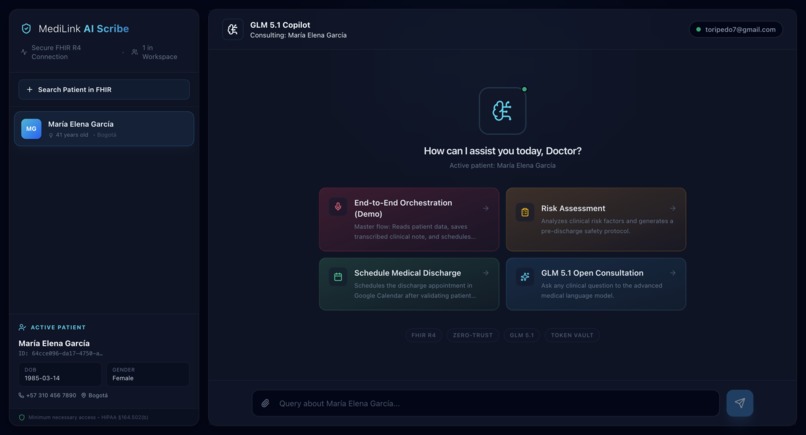

The Zero-Trust Dashboard: Physicians select one-click agentic workflows (like scheduling discharges) for the active patient.

🩺 Inspiration

Clinical burnout is real. Physicians often spend more time documenting than treating patients. AI "scribes" exist, but most are passive note-takers. We wanted to build an agent that acts, one that can update records and schedule appointments. As an engineering student at the National University of Colombia (UNAL), though, I know that in healthcare, autonomy without security is dangerous. MediLink AI Scribe was born to prove that an AI agent can be autonomous while staying "cryptographically chained" to human consent.

🚀 What it does

MediLink AI Scribe is a clinical orchestrator that covers the full path from recording to follow-up. It runs a four-step workflow from a single audio input:

- Intelligent Transcription: Uses Z.AI’s GLM-ASR to transcribe clinical sessions.

- Clinical Reasoning: GLM 5.1 extracts findings and generates professional SOAP notes.

- FHIR Interoperability: Automatically updates "Encounter" resources in a GCP FHIR R4 server.

- Secure Action: Schedules medical discharges in Google Calendar, but only after a Zero-Trust security interrupt via Auth0.
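To make the FHIR step above more concrete, here is a minimal sketch of how an Encounter update could be assembled. It is not the project's actual code: the function names, the `AMB` (ambulatory) class, and the sample identifiers are assumptions for illustration. The URL shape follows the Google Cloud Healthcare API convention for FHIR stores, and the body would be sent with an HTTP `PUT` and an `application/fhir+json` content type.

```python
import json

# Base URL of the Google Cloud Healthcare API (v1).
FHIR_BASE = "https://healthcare.googleapis.com/v1"


def fhir_encounter_url(project, location, dataset, store, encounter_id):
    """Build the REST path of one Encounter in a GCP FHIR store."""
    return (
        f"{FHIR_BASE}/projects/{project}/locations/{location}"
        f"/datasets/{dataset}/fhirStores/{store}/fhir/Encounter/{encounter_id}"
    )


def build_encounter(encounter_id, patient_id, reason_text, start, end):
    """Return a minimal FHIR R4 Encounter resource as a dict.

    `status` and `class` are the two required elements in R4; the
    remaining fields are illustrative choices (assumptions).
    """
    return {
        "resourceType": "Encounter",
        "id": encounter_id,
        "status": "finished",
        "class": {
            "system": "http://terminology.hl7.org/CodeSystem/v3-ActCode",
            "code": "AMB",
            "display": "ambulatory",
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "period": {"start": start, "end": end},
        "reasonCode": [{"text": reason_text}],
    }


def encounter_body(encounter):
    """Serialize the resource for the request body."""
    return json.dumps(encounter)
```

Keeping the payload builder separate from the HTTP call makes it easy to validate the resource before anything is written to the clinical record.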

🛠️ How we built it

The "brain" is GLM 5.1, orchestrated by LangGraph to manage complex state transitions. We used the Auth0 Next.js SDK (v4) for identity and the new Token Vault for delegated authorization. Unlike traditional apps that store static API keys, MediLink uses a federated token exchange to access Google Workspace APIs only when the doctor is present and authorizes the specific action.

🛑 Challenges we overcame

The biggest hurdle was implementing the "Security Interrupt". We had to design the LangGraph logic so that when the agent attempts a high-stakes tool call (like scheduling), the graph execution halts. It emits a signal to the Next.js frontend to trigger the Auth0 consent flow. Synchronizing this asynchronous "human-in-the-loop" gate with an autonomous AI reasoning chain was a massive technical challenge that Token Vault helped us solve elegantly.
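The halt-and-resume behavior described above can be illustrated with a small, library-free sketch. It does not use LangGraph itself: `ConsentRequired` is a hypothetical stand-in for the interrupt signal, and the step names, the `calendar.events` scope label, and the `ask_physician` callback are all assumptions. The idea it shows is that the run stops before the sensitive step, remembers where it stopped, and continues from that exact step once consent arrives.

```python
from dataclasses import dataclass, field


class ConsentRequired(Exception):
    """Hypothetical stand-in for the graph interrupt signal."""

    def __init__(self, node, scope):
        super().__init__(f"consent required for {scope} at node {node}")
        self.node = node
        self.scope = scope


@dataclass
class RunState:
    granted_scopes: set = field(default_factory=set)
    completed: list = field(default_factory=list)
    next_index: int = 0  # position to resume from after a pause


# (step name, scope that must be granted first, or None if unrestricted)
STEPS = [
    ("transcribe", None),
    ("soap_note", None),
    ("update_fhir_encounter", None),
    ("schedule_discharge", "calendar.events"),
]


def run(state):
    """Execute steps in order, halting before any step that lacks consent."""
    while state.next_index < len(STEPS):
        name, scope = STEPS[state.next_index]
        if scope is not None and scope not in state.granted_scopes:
            raise ConsentRequired(name, scope)
        state.completed.append(name)  # a real node would do its work here
        state.next_index += 1
    return state


def run_with_consent(state, ask_physician):
    """Drive the workflow, pausing for consent whenever a step demands it."""
    while True:
        try:
            return run(state)
        except ConsentRequired as pause:
            if not ask_physician(pause.scope):
                return state  # stay paused; the sensitive step never ran
            state.granted_scopes.add(pause.scope)
```

Because the position lives in `RunState` rather than in the call stack, the pause can span a full browser round trip: the state can be persisted while the physician completes the consent flow, then passed back to `run`.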

🎓 What we learned

We learned that the future of AI isn't just about better models, but about better identity layers. Implementing FHIR standards taught us the importance of data interoperability, and working with GLM 5.1 showed us the power of long-horizon reasoning in specialized domains like medicine.

🏁 What's next for MediLink AI Scribe

We plan to expand the toolset to include real-time drug interaction checks (via FDA APIs) and a "Self-Hosted" mode for hospitals with strict data residency requirements, maintaining the Auth0 security layer as the primary trust provider.

🔐 Bonus Blog Post: Chaining the AI with Token Vault

Building an autonomous clinical agent with GLM 5.1 is exciting, but handing it the keys to a doctor's calendar is terrifying. In healthcare, convenience can never eclipse security. This was the core mission of MediLink AI Scribe: How do we let an AI orchestrate complex workflows while maintaining absolute human-in-the-loop control?

The answer was Auth0 Token Vault. Initially, our agent tried to execute every tool back to back, with no pause for approval. We had to redesign the architecture so the AI's autonomy was "cryptographically chained." In the resulting system, the Next.js backend extracts the Auth0 refresh token from the session and attempts a federated token exchange, presenting that refresh token as the subject token (type urn:ietf:params:oauth:token-type:refresh_token), to obtain an access token for Google Workspace.
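As a rough illustration of that exchange, the sketch below assembles the form body for a request to the tenant's `/oauth/token` endpoint. It is written in Python for brevity, although the project performs this step in its Next.js backend. The grant type and requested token type strings reflect our reading of the Auth0 Token Vault documentation and should be verified against the current docs; the function name, the `google-oauth2` connection name, and all sample values are assumptions.

```python
from urllib.parse import urlencode

# Values as we understand them from the Auth0 Token Vault docs (verify before use).
GRANT_TYPE = (
    "urn:auth0:params:oauth:grant-type:token-exchange:"
    "federated-connection-access-token"
)
SUBJECT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:refresh_token"
REQUESTED_TOKEN_TYPE = (
    "http://auth0.com/oauth/token-type/federated-connection-access-token"
)


def build_token_exchange(domain, client_id, client_secret, refresh_token,
                         connection="google-oauth2"):
    """Return (url, form_body) for a federated token exchange request.

    Nothing is sent here; the caller would POST `form_body` with
    Content-Type: application/x-www-form-urlencoded.
    """
    url = f"https://{domain}/oauth/token"
    form = {
        "grant_type": GRANT_TYPE,
        "client_id": client_id,
        "client_secret": client_secret,
        "subject_token": refresh_token,          # the user's Auth0 refresh token
        "subject_token_type": SUBJECT_TOKEN_TYPE,
        "requested_token_type": REQUESTED_TOKEN_TYPE,
        "connection": connection,                # which linked provider to use
    }
    return url, urlencode(form)
```

The point of this design is that no long-lived Google credential is stored by the application: the access token is requested on demand, and the request can only succeed once the physician has connected and authorized the Google account.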

If the physician hasn't granted consent, the tool doesn't just fail; it throws a controlled 'GraphInterrupt'. This pauses graph execution at that node, before the action runs, and the frontend renders a secure Auth0 consent popup. Once the doctor grants permission, the system automatically resumes the graph at the exact node where it paused.

Token Vault allowed us to build a true 'Zero-Trust' AI agent that doesn't just "chat," but performs secure, authorized actions in the real world.

Built With

- auth0-token-vault

- gcp-healthcare-api-(fhir-r4)

- glm-5.1

- glm-asr-2512

- langgraph

- next.js-15

- python

- tailwind-css

- typescript
