-

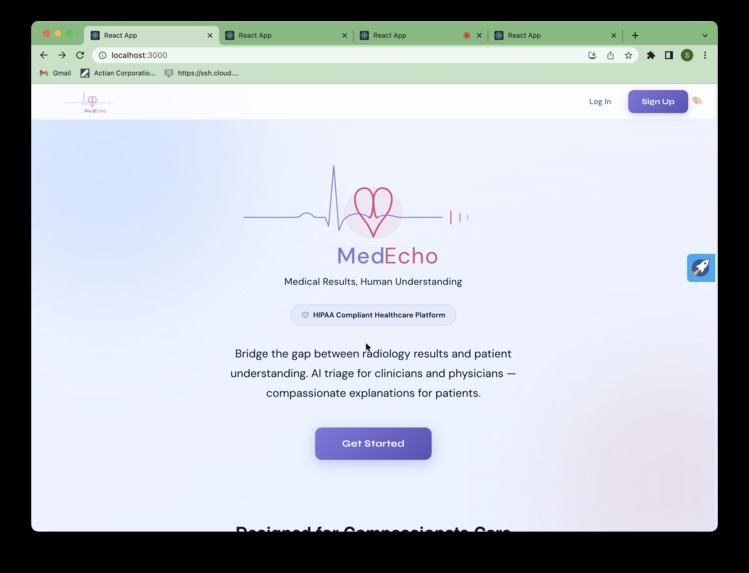

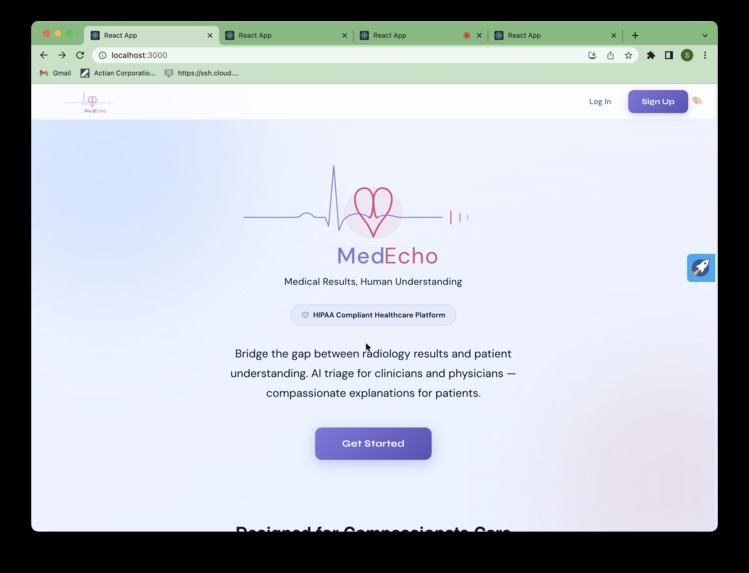

Landing page 1

-

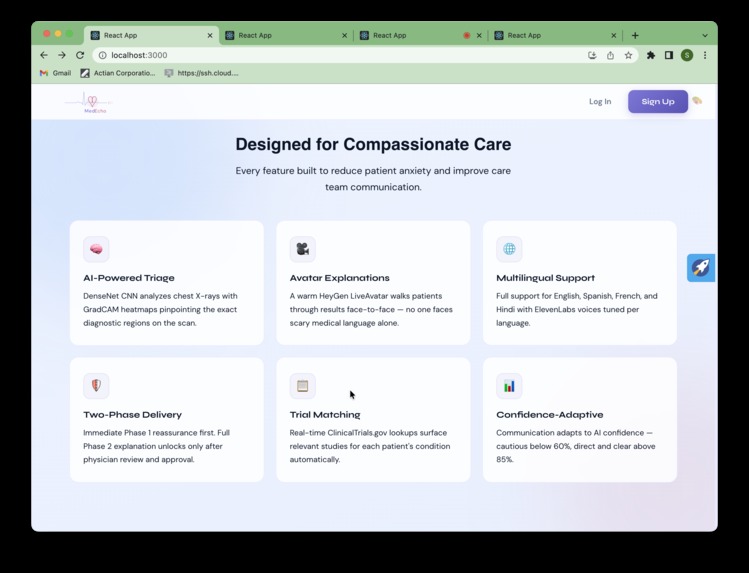

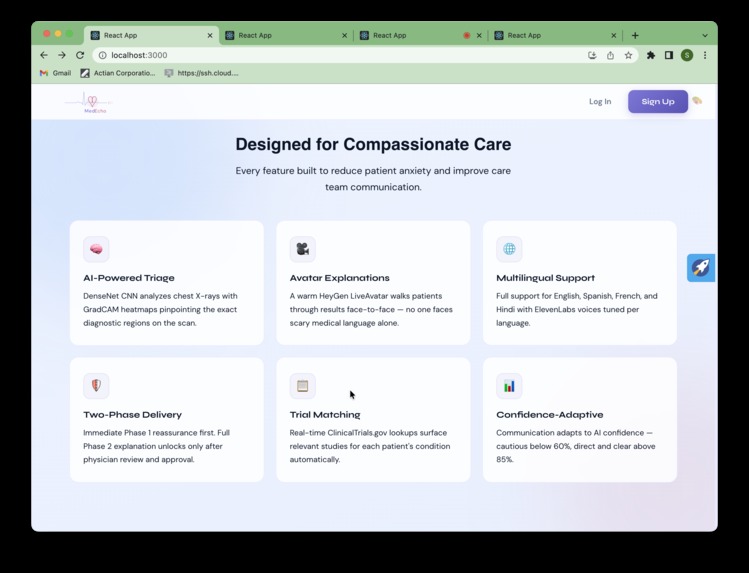

Landing page 2 - What it does

-

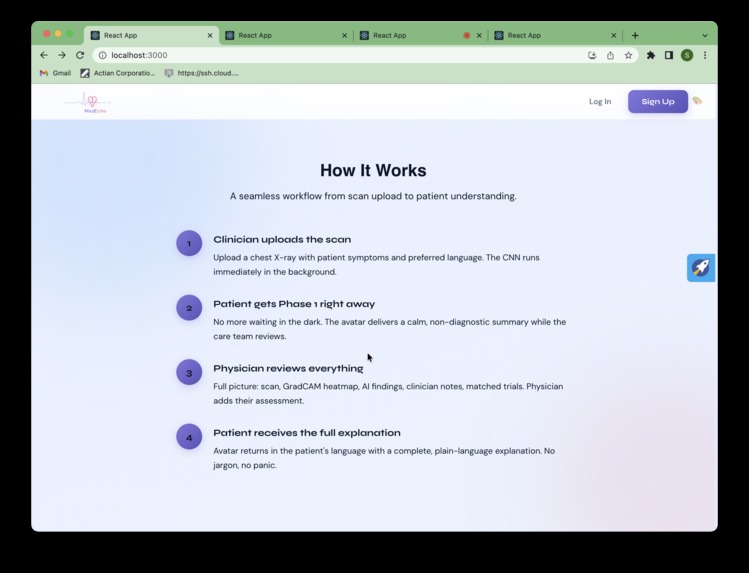

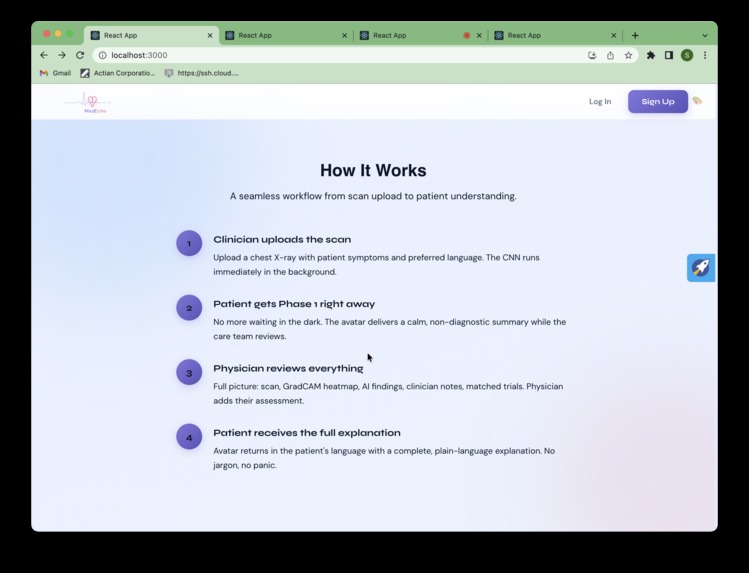

Landing page 3 - How it works

-

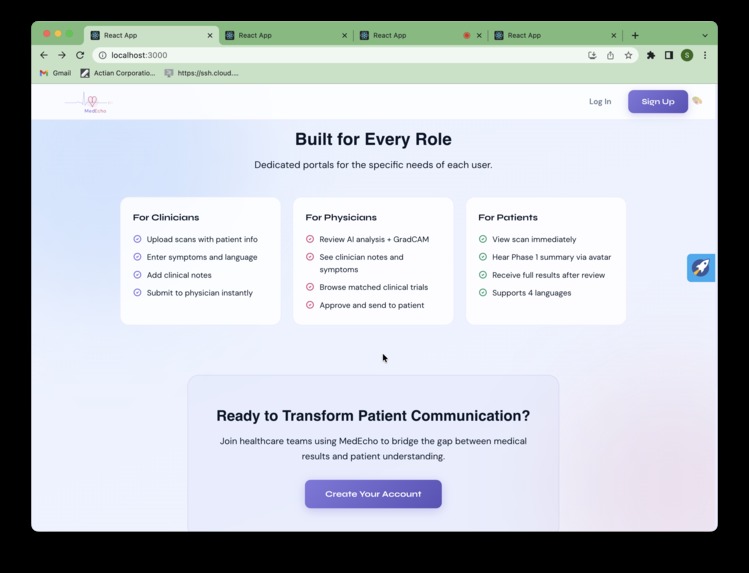

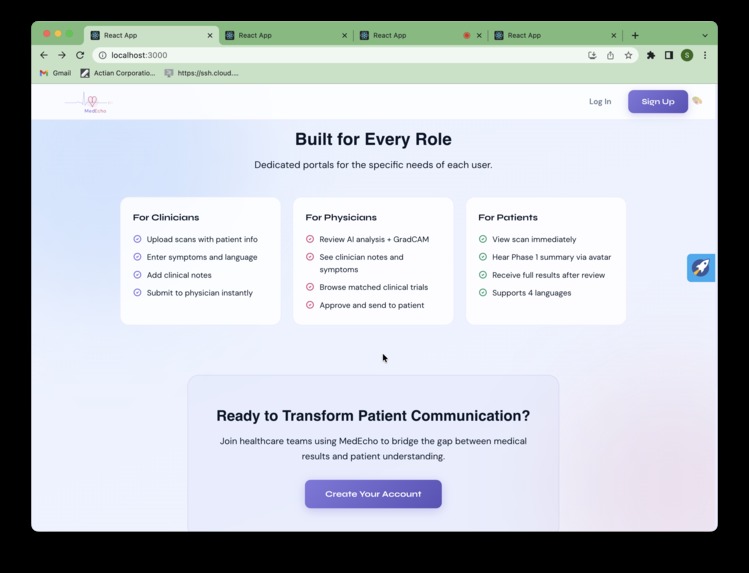

Landing page 4 - 3 Portals

-

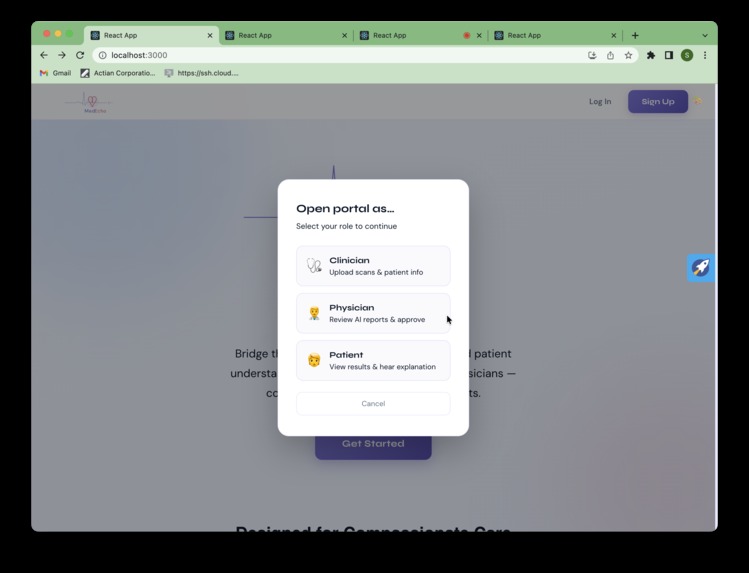

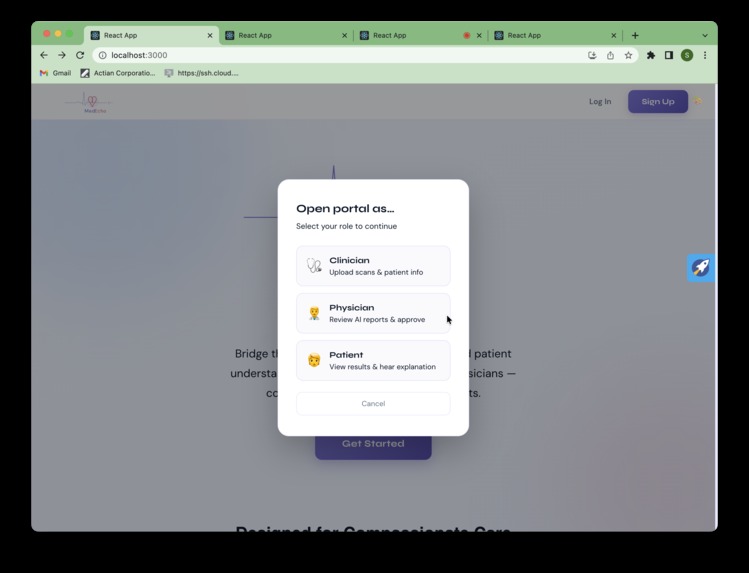

Getting started

-

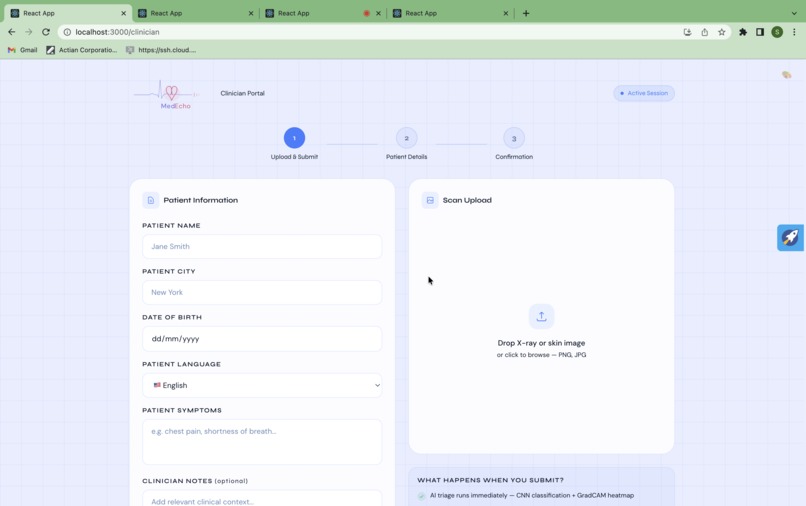

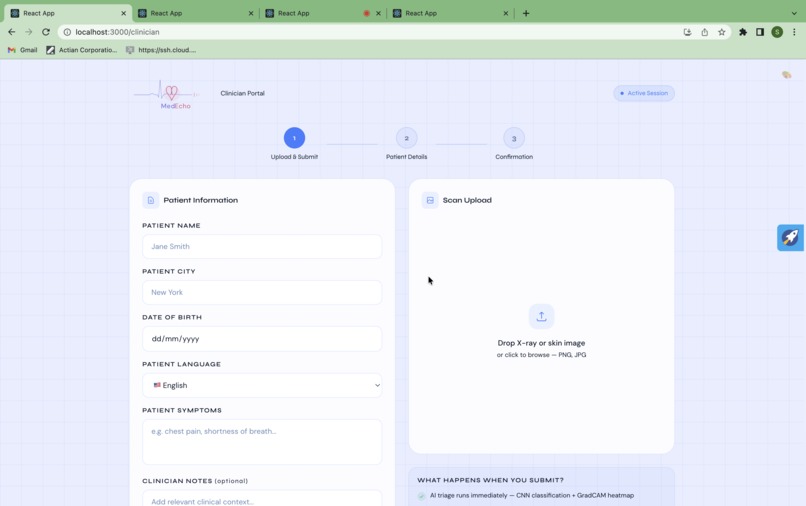

Clinician portal initial form

-

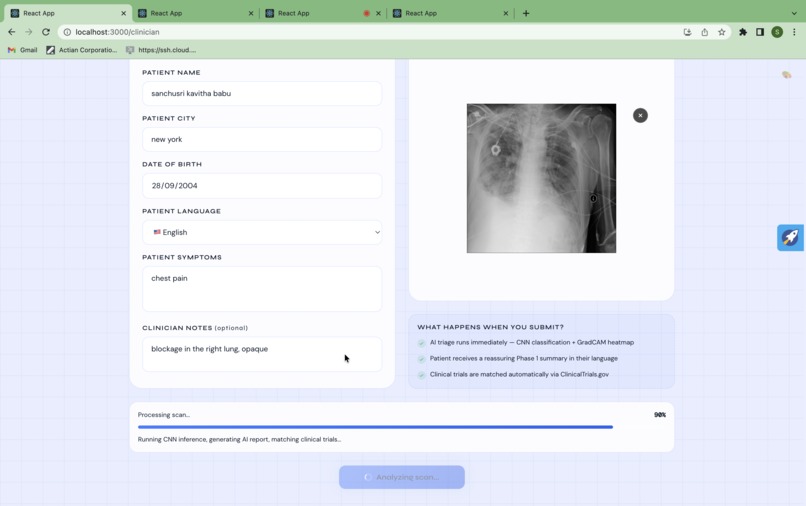

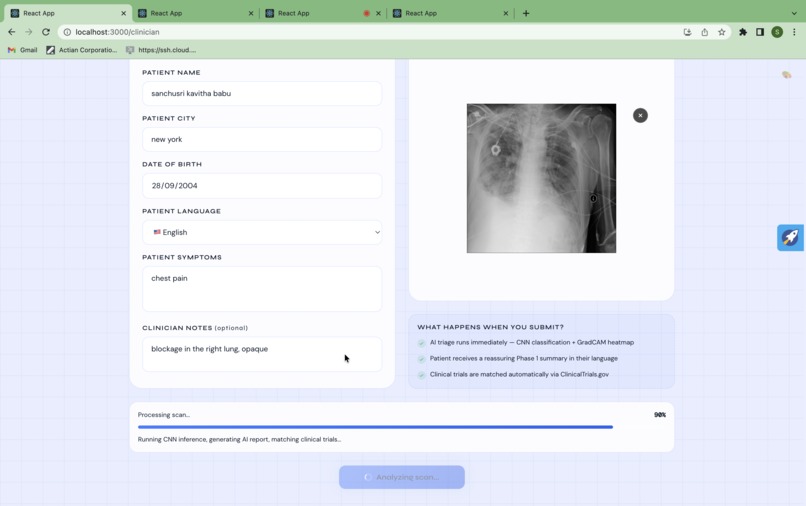

Clinician portal patient scan sending

-

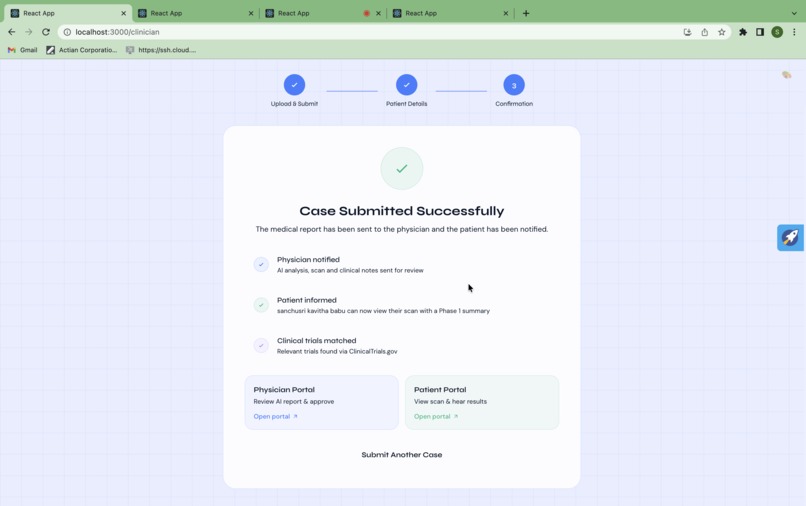

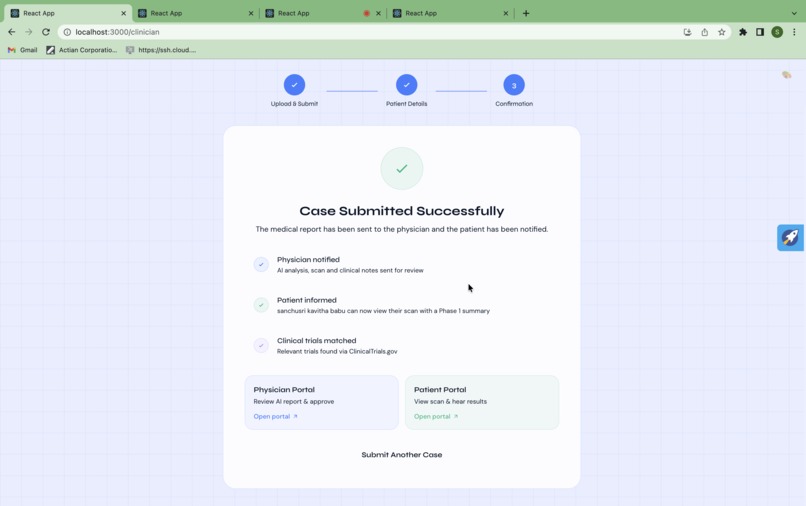

Clinician portal patient details submission

-

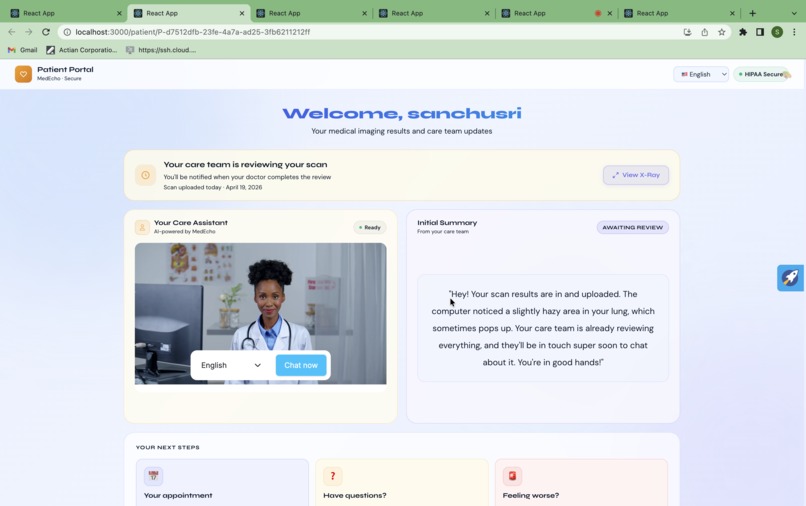

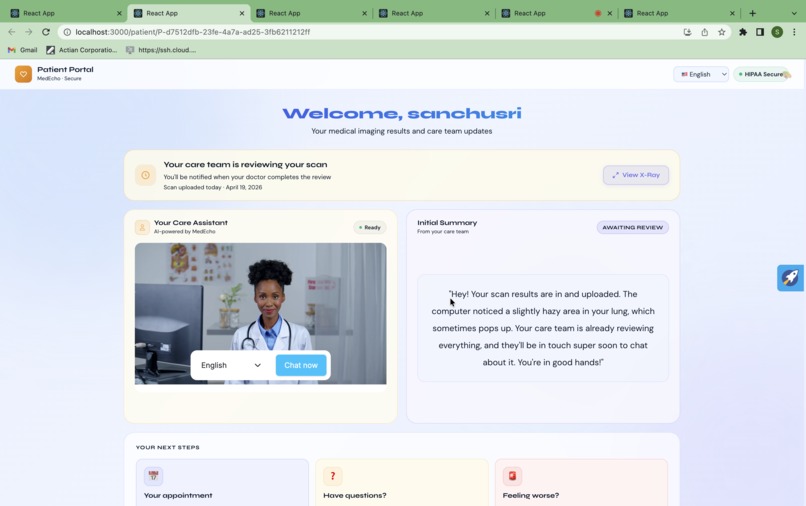

Patient portal initial AI assessment

-

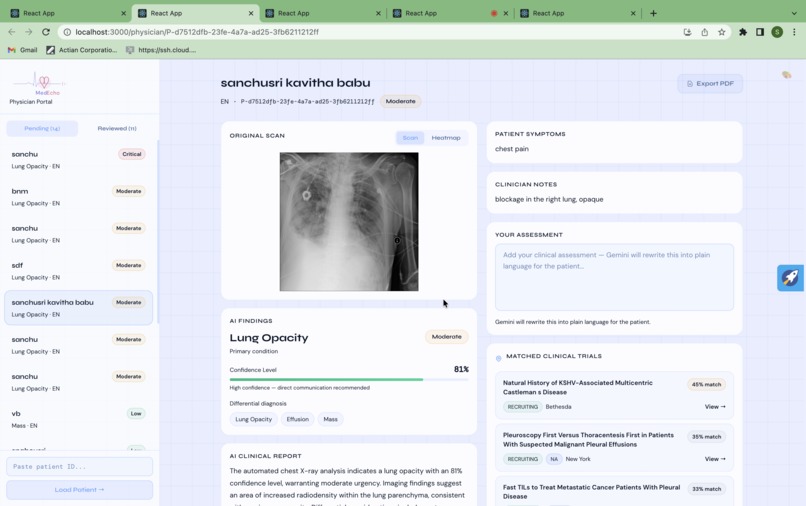

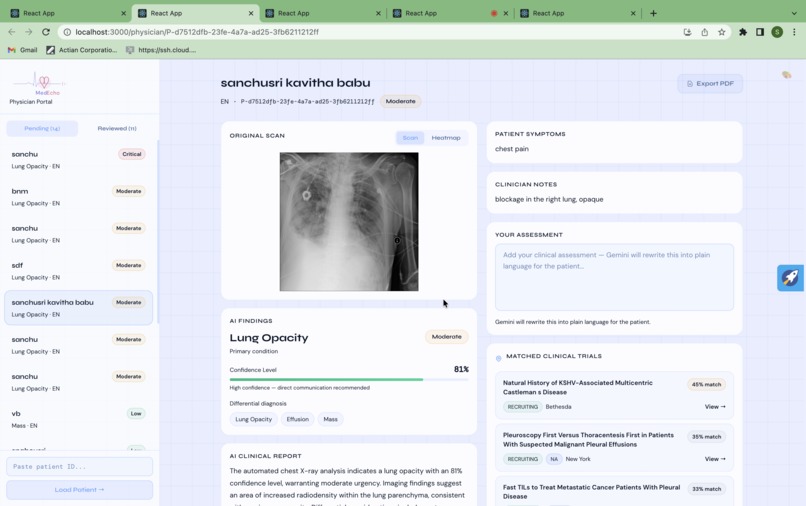

Physician portal initial review

-

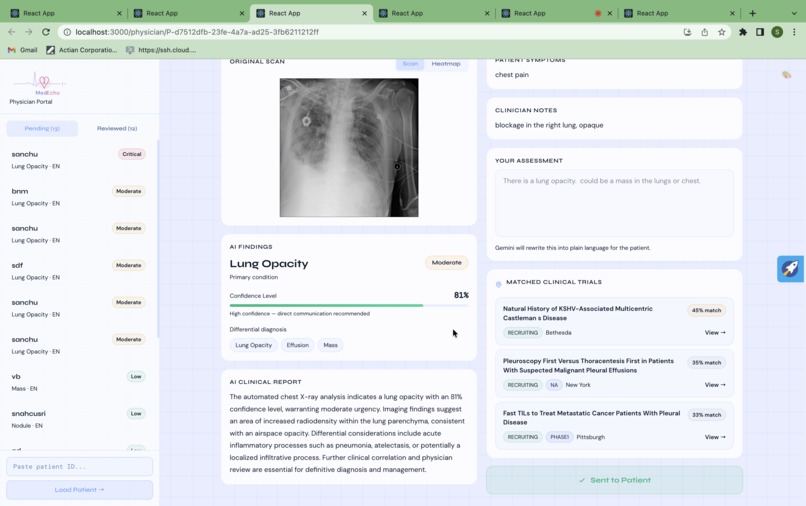

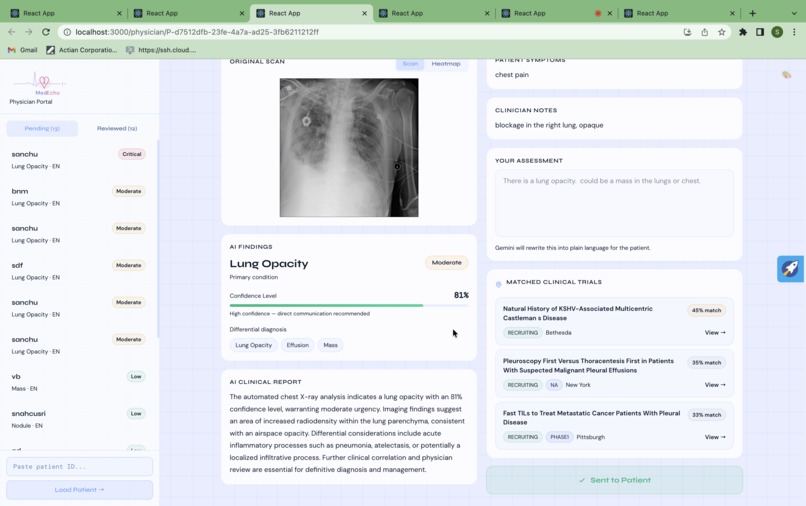

Physician portal review sent

-

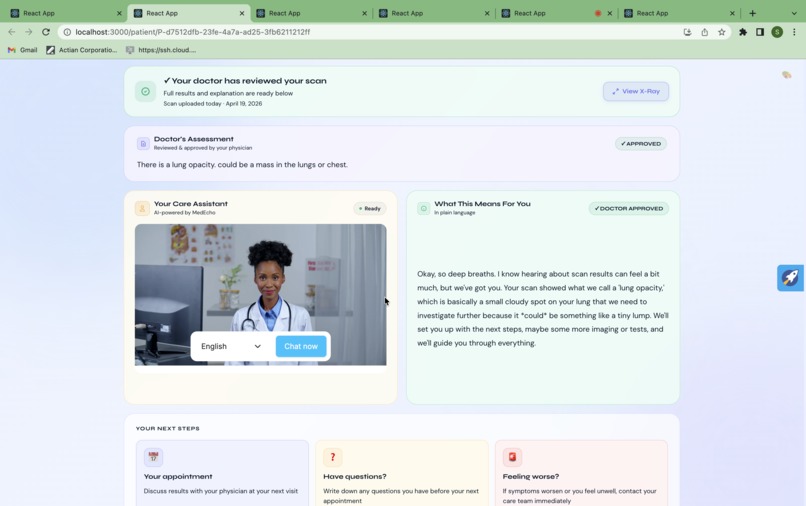

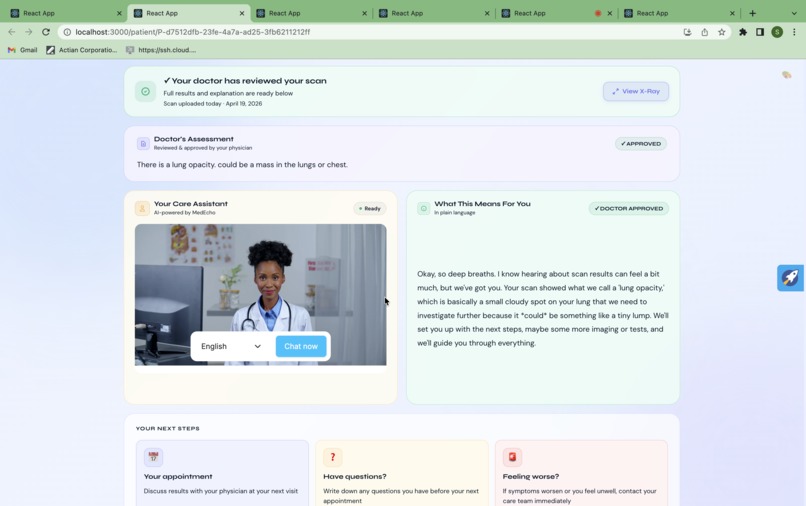

Patient portal scan reviewed

Inspiration

It was 11pm. A family member had a chest X-ray earlier that day, went home, opened MyChart, and read:

"pulmonary opacity suggesting possible malignancy."

No doctor on the line. No explanation. Just those words sitting on a phone screen in the dark.

Turns out this isn't a rare edge case; it's policy. Under the information-blocking rules of the 21st Century Cures Act (signed in 2016, with the rules taking effect in April 2021), patients now receive their test results at the same time as their doctor, often before the physician has even opened the file.

The law was written to give patients more access. What it accidentally created was thousands of people every night reading words like opacity, malignancy, and effusion completely alone, with no one to explain what any of it means.

For non-English speakers, it's total incomprehension. For everyone else, it's panic. For both, the fear is almost always worse than the actual diagnosis.

We kept coming back to one question: what should actually happen in that moment?

Not a PDF. Not a patient portal with a wall of clinical text. A person. Something that feels like a person, that looks at you, speaks your language, and says: you're in good hands, and here's what we know so far.

That's MedEcho.

What It Does

MedEcho is a three-portal system that takes a chest X-ray from upload to plain-language explanation in the patient's own language, delivered face-to-face by an AI avatar.

Clinician Portal

The clinician uploads a scan and enters the patient's symptoms and preferred language. Immediately:

- A DenseNet CNN classifies the scan and assigns condition, confidence, and urgency

- GradCAM generates a heatmap showing exactly which region drove the prediction

- ClinicalTrials.gov v2 API matches live recruiting trials against condition, differential diagnosis, symptoms, location, and trial phase

- Gemini 2.5 Flash generates a formal clinical report for the physician and two patient-facing scripts simultaneously

- ElevenLabs converts the Phase 1 script to natural speech in the patient's language immediately

Physician Portal

The physician sees the full picture: scan, GradCAM heatmap, AI findings, differential diagnosis, confidence score, clinician notes, and matched trials with confidence scores and direct links to ClinicalTrials.gov. They add their own assessment and hit Send to Patient.

Patient Portal

The patient is never left alone.

- The moment the scan is uploaded, a HeyGen LiveAvatar delivers a warm Phase 1 message in their language — no diagnosis, no percentages, just presence. ElevenLabs audio autoplays immediately.

- After physician approval, the full plain-language explanation is delivered face-to-face in English, Spanish, French, or Hindi

- The portal polls every 5 seconds and transitions automatically when the physician approves — no refresh needed
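The two-phase gate and the 5-second polling described above can be sketched in a few lines. This is a minimal illustration, not MedEcho's actual code: the real polling runs in the React frontend, and the record field names (`physician_approved`, `phase1_script`, `phase2_script`, and the audio URL keys) are hypothetical.

```python
import time
from typing import Callable, Optional

POLL_INTERVAL_SECONDS = 5.0  # polling interval stated in the text


def patient_view(record: dict) -> dict:
    """Return only what the patient portal may show for this record."""
    if record.get("physician_approved"):
        return {
            "phase": 2,
            "script": record["phase2_script"],
            "audio_url": record.get("phase2_audio_url"),
        }
    # Not yet approved: expose the non-diagnostic Phase 1 message only.
    return {
        "phase": 1,
        "script": record["phase1_script"],
        "audio_url": record.get("phase1_audio_url"),
    }


def wait_for_phase2(
    fetch_record: Callable[[], dict],
    sleep: Callable[[float], None] = time.sleep,
    max_polls: Optional[int] = None,
) -> Optional[dict]:
    """Poll until the physician approves; return the Phase 2 view, or None
    if max_polls unapproved polls pass first."""
    polls = 0
    while max_polls is None or polls < max_polls:
        view = patient_view(fetch_record())
        if view["phase"] == 2:
            return view
        polls += 1
        sleep(POLL_INTERVAL_SECONDS)
    return None
```

Filtering on the server side, rather than hiding Phase 2 content in the browser, means the diagnostic script never leaves the backend before sign-off.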

Regeneron Pipeline Relevance

MedEcho's trial matching directly surfaces patients relevant to Regeneron's investigational pipeline at the exact moment of diagnosis:

- Respiratory/Pulmonary — Lung opacity, pneumonia, effusion findings

- Cardiovascular — Cardiomegaly → heart failure pipeline

- Oncology — Mass/nodule findings → early detection studies

Every physician interaction passively builds an investigator profile: condition specialty, patient volume, diagnostic patterns. Cross-referenced with ClinicalTrials.gov, that's a real-time site-finder built from actual clinical behavior — not spreadsheets, not KOL recommendations.

MedEcho's trial matching engine scores each study across six weighted factors:

- Condition keyword match in the trial title and description (30%)

- Differential diagnosis alignment (15%)

- Patient-reported symptom overlap (15%)

- Geographic proximity via Nominatim geocoding (20%)

- Language-country match to reduce access barriers (10%)

- Trial phase weighting (10%): Phase 3 trials score highest as they represent the most clinically relevant interventional studies

The system searches not just the primary condition but the top two differential diagnoses simultaneously, deduplicates across all searches, and returns the top three trials sorted by score. Every result is clickable, linking directly to the ClinicalTrials.gov study page. For Regeneron specifically, this means a patient presenting with cardiomegaly in Princeton could surface an active Phase 3 heart failure trial with a 74% match score automatically, at the moment of diagnosis, before they leave the system.
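The weighted scoring and deduplication described above can be sketched roughly as follows. The weights come from the text. Everything else is an assumption made for illustration: each factor is reduced to a sub-score between 0 and 1, a duplicate trial keeps its highest score, and the names `match_score` and `top_trials` are hypothetical.

```python
from dataclasses import dataclass

# Weights as stated in the text; they sum to 1.0.
WEIGHTS = {
    "condition": 0.30,
    "differential": 0.15,
    "symptoms": 0.15,
    "location": 0.20,
    "language": 0.10,
    "phase": 0.10,
}


@dataclass(frozen=True)
class ScoredTrial:
    nct_id: str
    score: float


def match_score(factors: dict) -> float:
    """Weighted sum of per-factor sub-scores, each assumed to lie in [0, 1]."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        # Missing factors count as 0; out-of-range values are clamped.
        sub = min(max(factors.get(name, 0.0), 0.0), 1.0)
        total += weight * sub
    return total


def top_trials(searches: list, limit: int = 3) -> list:
    """Merge several searches, dedupe by trial ID, return the best `limit`.

    `searches` is a list of result lists; each result is a
    (trial_id, factors) pair. A trial found by more than one search
    keeps its highest score (an assumption, not stated in the text).
    """
    best = {}
    for results in searches:
        for nct_id, factors in results:
            score = match_score(factors)
            if score > best.get(nct_id, -1.0):
                best[nct_id] = score
    ranked = sorted(best.items(), key=lambda item: item[1], reverse=True)
    return [ScoredTrial(nct_id, score) for nct_id, score in ranked[:limit]]
```

Under these assumptions, a trial that matches perfectly on every factor scores 1.0, so a reported 74% match corresponds to a weighted sum of 0.74.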

Traditional trial referral relies on physician memory and network — systematically excluding patients who don't speak the doctor's language, don't fit the typical profile, or simply aren't asked. MedEcho's matching is condition-driven, not physician-driven — every patient with a relevant finding gets surfaced, regardless of who they are or where they're from.

How We Built It

Backend (Flask + Python 3.11)

- DenseNet CNN via torchxrayvision for scan classification — condition, confidence, urgency, differential diagnosis

- GradCAM via pytorch-grad-cam for explainability heatmaps, resized to original scan resolution via scipy.ndimage.zoom

- Gemini 2.5 Flash generates three distinct outputs from one prompt: formal clinical report, warm Phase 1 patient script, and plain-language Phase 2 explanation — same finding, three completely different registers, all adapted to the patient's generation and language

- ElevenLabs eleven_multilingual_v2 converts patient scripts to natural speech in the patient's language. Phase 1 audio generates at scan upload. Phase 2 audio generates at physician approval. Autoplays immediately on the patient portal.

- HeyGen LiveAvatar API for face-to-face avatar delivery with context-aware greeting and patient knowledge

- ClinicalTrials.gov v2 API with multi-factor match scoring across condition, differential, symptoms, location, language, and trial phase

- Patient data stored as flat JSON per patient, served via Flask static routes
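As a rough illustration of the flat-JSON-per-patient storage mentioned in the last bullet, the sketch below writes one file per patient using only the standard library. The directory layout, the function names, and the atomic-rename step are assumptions made here, not details from the project.

```python
import json
from pathlib import Path


def record_path(data_dir: Path, patient_id: str) -> Path:
    """Map a patient ID to its JSON file, rejecting unsafe IDs."""
    # Only plain alphanumeric IDs, so an ID like "../x" cannot escape data_dir.
    if not patient_id.isalnum():
        raise ValueError(f"invalid patient id: {patient_id!r}")
    return data_dir / f"{patient_id}.json"


def save_record(data_dir: Path, patient_id: str, record: dict) -> Path:
    """Write the record as UTF-8 JSON, replacing any previous version."""
    data_dir.mkdir(parents=True, exist_ok=True)
    path = record_path(data_dir, patient_id)
    tmp = path.with_name(path.name + ".tmp")
    # ensure_ascii=False keeps non-Latin scripts (e.g. Hindi) readable on disk.
    tmp.write_text(json.dumps(record, ensure_ascii=False, indent=2), encoding="utf-8")
    # Rename over the old file so a polling reader does not see a partial write.
    tmp.replace(path)
    return path


def load_record(data_dir: Path, patient_id: str) -> dict:
    """Read a patient's record back from disk."""
    return json.loads(record_path(data_dir, patient_id).read_text(encoding="utf-8"))
```

Writing to a temporary file and renaming it matters here because the patient portal reads the same record repeatedly while the backend may still be updating it.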

Frontend (React)

- Three fully separate portals: /clinician, /physician, /patient

- Real-time polling on the patient portal switches from Phase 1 to Phase 2 the moment the physician approves

- Physician sidebar loads live cases with urgency badges, condition tags, and trial match confidence scores sorted by severity

- Light/dark theme toggle across all portals

- PDF export of full clinical reports

Challenges We Ran Into

1. HeyGen medical content guardrails

The avatar's underlying LLM would soften or redirect anything that sounded clinical. We worked around it by front-loading all patient-specific information directly into the delivery script rather than relying on the avatar to retrieve it dynamically.

2. GradCAM resolution mismatch

The CNN processes images at 224×224 internally. The heatmap came back at that size, mismatched against the original scan resolution. Fixed with a scipy.ndimage.zoom call to stretch it back to original dimensions without losing heat distribution.

3. NumPy/PyTorch version conflict

torch, torchxrayvision, and pytorch-grad-cam all pin different NumPy versions. Resolved by pinning NumPy to 1.26.4, the highest version compatible across all three libraries.

4. Multilingual avatar delivery

ElevenLabs eleven_multilingual_v2 handles the audio naturally, but ensuring the Gemini-generated scripts were culturally appropriate across English, Spanish, French, and Hindi required careful prompt engineering, particularly for Hindi, where medical terminology needed to be simplified differently than in European languages.
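For the GradCAM resolution mismatch in challenge 2, the resize step might look something like the sketch below. It assumes the heatmap is a 2-D NumPy array and that bilinear interpolation (`order=1`) is acceptable. The function name `resize_heatmap` is hypothetical, and the project's actual call may use different arguments.

```python
import numpy as np
from scipy.ndimage import zoom


def resize_heatmap(heatmap: np.ndarray, target_shape: tuple) -> np.ndarray:
    """Stretch a 2-D heatmap to the (height, width) of the original scan."""
    if heatmap.ndim != 2:
        raise ValueError("expected a 2-D heatmap")
    # Per-axis scale factors, e.g. 1024 / 224 for the height.
    factors = (
        target_shape[0] / heatmap.shape[0],
        target_shape[1] / heatmap.shape[1],
    )
    # order=1 is bilinear interpolation: output values stay within the
    # input's min/max, so the relative heat distribution is preserved.
    return zoom(heatmap, factors, order=1)
```

The resized array can then be overlaid on the original scan at its native resolution instead of the model's 224×224 input size.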

Accomplishments We're Proud Of

- A working end-to-end pipeline: scan upload → CNN → GradCAM → Gemini → ElevenLabs → HeyGen avatar → patient explanation, all in the patient's language

- Patient safety by design: two-phase gated delivery means no patient ever receives a diagnosis without physician sign-off. Phase 1 is deliberately non-diagnostic — presence and reassurance without clinical claims

- Deep trial matching scoring across six factors, surfacing results at the moment of diagnosis — directly addressing Regeneron's patient identification bottleneck

- Generation-adaptive communication: the same diagnosis is explained differently to a Baby Boomer and a Gen Z patient — tone, vocabulary, and framing all adapt automatically

- Physician portal as an investigator dossier: capturing condition specialty, patient volume, and diagnostic patterns in real time

- Hindi translation confirmed working — the same pipeline that serves English patients serves Hindi patients without any code changes

What We Learned

The 21st Century Cures Act created a real, unsolved problem — and the solution isn't more medical jargon, it's less.

Medical communication has to be personalized, not just translated. Language, health literacy, and generation all change how a patient receives news. The same diagnosis sounds completely different to a 68-year-old and a 22-year-old.

We also learned that the gap between a working demo and a working product in healthcare is trust:

- The physician has to trust the AI enough to send it

- The patient has to trust the explanation enough to act

Every design decision — the heatmap, the avatar, the phased delivery, the confidence scores — was about building that trust.

What's Next

- ASL integration — American Sign Language delivery for accessibility

- EMR integration — Replace URL-based patient IDs with real hospital EMR lookup

- HIPAA compliance review — Prepare for production deployment

- Multi-modal scanning — Expand to MRI, CT, and ultrasound

- Longitudinal patient tracking — Avatar builds context across visits over time

Closing

The 11pm MyChart moment happens to thousands of patients every night.

MedEcho is what should happen instead.
