-

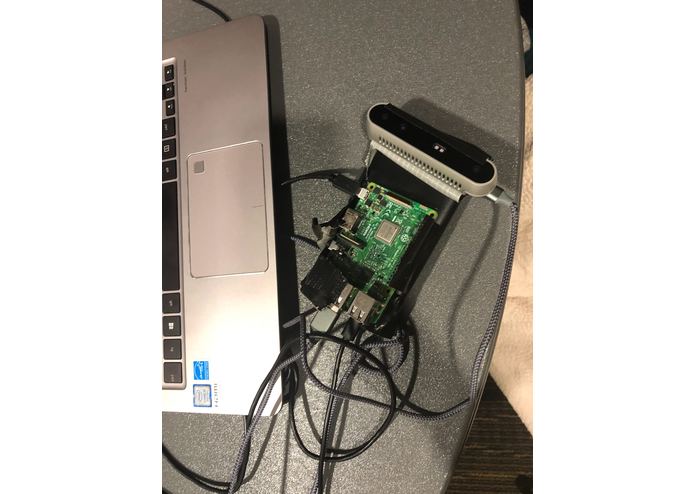

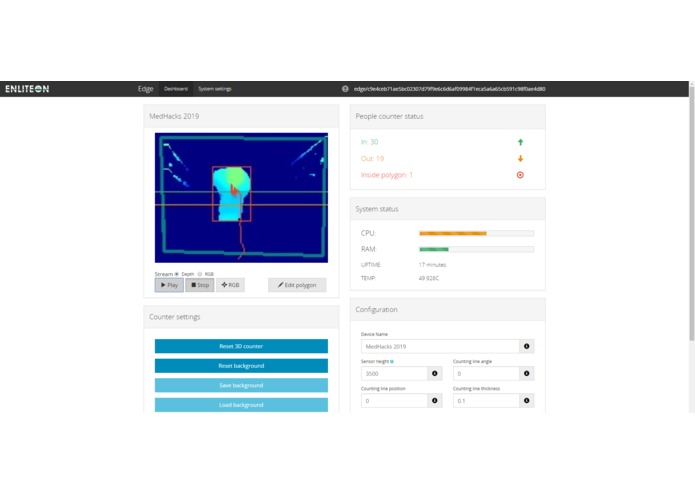

The room camera we built, which can be used to assess doctor-patient interactions through quantified measures (e.g. the doctor's distance from the patient).

-

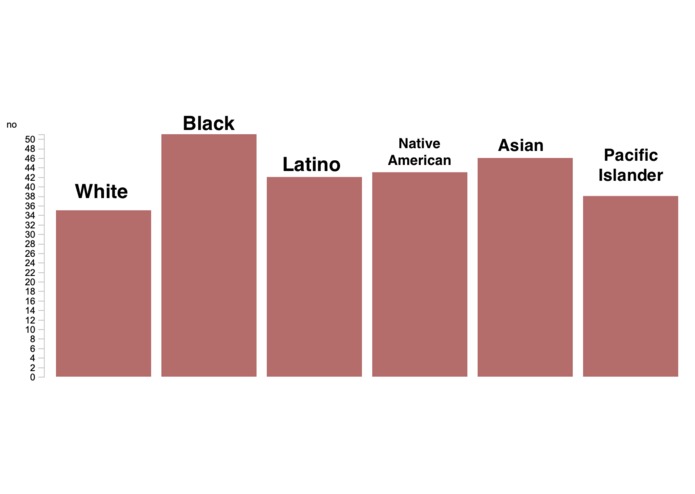

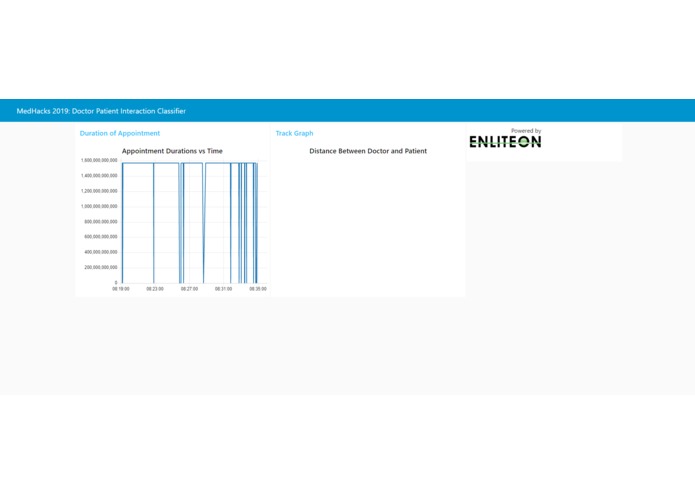

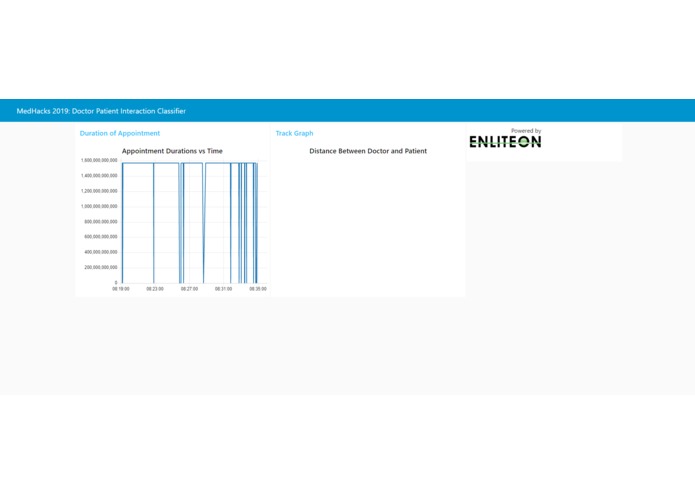

An example of the kind of graphs the tool can produce.

-

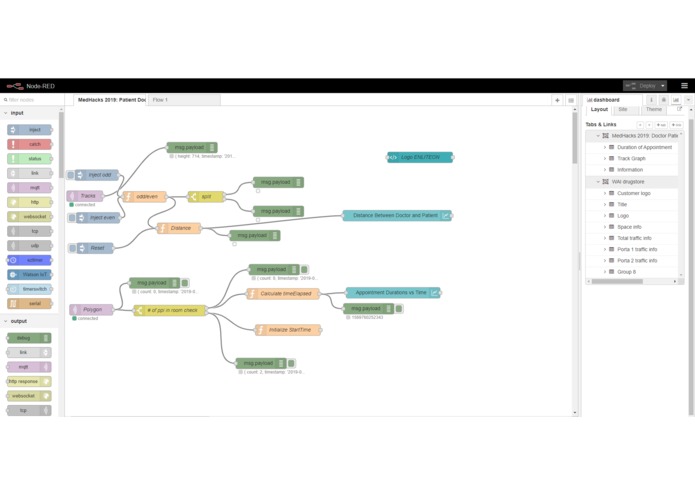

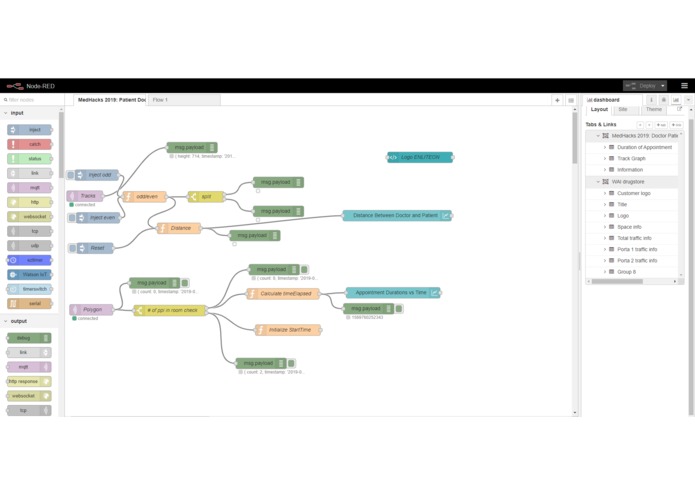

The flow system used to produce the doctor-proximity data and the doctor-patient appointment datasets.

-

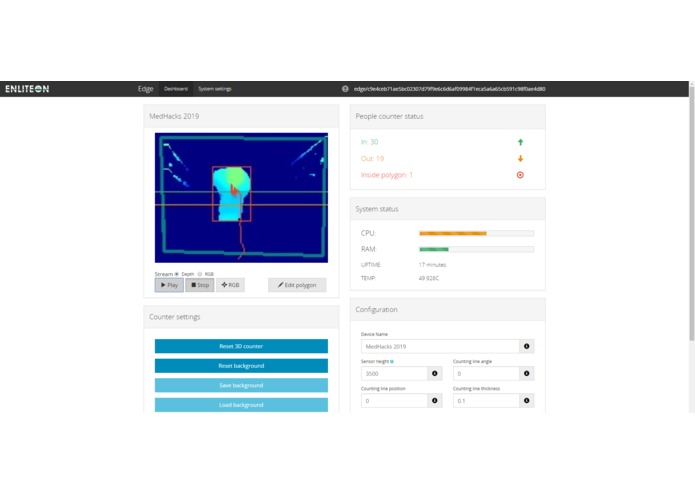

Smart camera depth-field system used to separate the doctor and patient objects from the background.

-

Partially complete graphs of metrics, with an option for the user to download the dataset and use it with our statistical package.

Inspiration

We wanted to address the major issue of racial and gender bias in healthcare. This is a massive, systemic issue that has not been adequately addressed. One reason for its pervasiveness is how difficult it has traditionally been to quantify. Despite our natural human tendencies, an overwhelming majority of doctors would not want to accept that their medical recommendations for patients have had any racial or gender-based bias behind them. MedAware is the result of our combined effort to give doctors a tool that lets them recognize their subconscious racial and gender biases and address the issue with quantified metrics at hand.

What it does

We shift the paradigm on how we approach healthcare by designing a model for doctors and hospitals to assess bias in the decisions that doctors make. This is available as a statistics package download from our open-source website. For the intellectually curious doctor who wants to improve their patient outcomes, and for the hospital administrator who wants to demand higher standards from their employees, this software compliance package provides a way to detect implicit bias in the medical community.

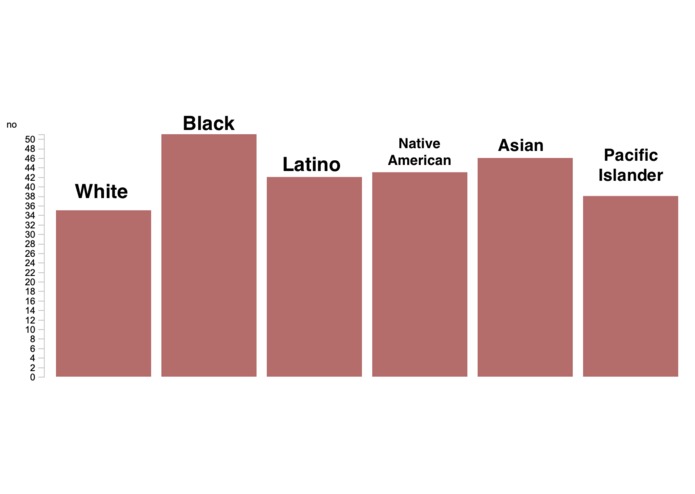

The user first picks a simple yes-or-no decision, whether that's the decision to recommend amputation for a diabetes patient or whether a patient was diagnosed with a condition like ADHD. Three sets of data are then needed to find a potential bias in that medical decision: anonymous demographic information about the patients who have been seen, as many sets of quantitative measurements that act as an objective factor in that decision as desired, and the actual yes/no decision made by the doctor who saw the patient. Our statistical analysis tool then takes all this data and produces graphs that depict any unexplained yet significant discrepancies across the various demographics.
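
To make the three inputs concrete, here is a minimal Python sketch (not our actual R code) of one record per visit and a comparison of "yes" rates between groups with similar measurements. The `Visit` fields, the `decision_rates` helper, and the sample values are all hypothetical and only illustrate the shape of the data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Visit:
    group: str          # anonymized demographic label (hypothetical field name)
    measurement: float  # objective factor, e.g. a lab value or fidget score
    decision: bool      # the doctor's yes/no decision


def decision_rates(visits, bin_width):
    """Return {(bin_index, group): fraction of 'yes' decisions}."""
    counts = defaultdict(lambda: [0, 0])  # key -> [yes count, total count]
    for v in visits:
        # Bin the measurement so only patients with similar values are compared.
        key = (int(v.measurement // bin_width), v.group)
        counts[key][0] += int(v.decision)
        counts[key][1] += 1
    return {key: yes / total for key, (yes, total) in counts.items()}


# Made-up sample data: two groups with measurements in the same bin.
visits = [
    Visit("A", 7.2, True), Visit("A", 7.8, True),
    Visit("B", 7.1, False), Visit("B", 7.9, True),
]
rates = decision_rates(visits, bin_width=1.0)
```

A gap between two groups in the same measurement bin is the kind of discrepancy the graphs are meant to surface; whether it is statistically significant is a separate question for the statistical test.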

To demonstrate the flexibility and modularity of the tool, we worked on two specific use cases.

The diagnosis of ADHD is an area where diagnostic bias based on race is clearly present. According to many studies, children of color are far less likely to be diagnosed and medicated. Using a body language quantification tool developed by a team of doctors and researchers from MIT and Harvard, we present a facial behavior analysis tool that uses video AI to quantify a person's fidgetiness, an important (yet usually qualitative and subjective) metric used in the diagnosis of conditions such as ADHD and autism. Psychiatrists can use the tool to collect quantitative measurements of how fidgety their patients are. This data can then be run through our statistical analysis program to depict any significant discrepancies between the diagnosis rates of various demographic groups despite similar measures of fidgetiness.

The second product is a smart depth-sensing camera system that quantifies and analyzes doctor-patient interactions. It was built using the Elinteon API with a downward-facing smart camera hung from the ceiling.

How we built it

We built the statistical analysis tool in RStudio using the Shiny web framework. The statistical test at the heart of our tool is an ANOVA that asks whether the doctor's diagnosis is associated with patient demographic data.
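
As an illustration of the test, the sketch below computes a one-way ANOVA F statistic in plain Python. It is not the R code behind the Shiny app, and it assumes the simplest possible setup: decisions coded as 1 (yes) and 0 (no), one list per demographic group, with made-up values. The `one_way_anova_f` name is hypothetical.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    k, n = len(groups), len(all_values)
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of each observation around its own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Decisions coded 1 = yes, 0 = no, one list per demographic group (made-up data).
group_a = [1, 1, 1, 0]
group_b = [0, 0, 1, 0]
f_stat, df_b, df_w = one_way_anova_f([group_a, group_b])
```

A large F relative to the F distribution with `df_b` and `df_w` degrees of freedom suggests the decision rate differs between groups. This sketch stops at the statistic and does not compute a p-value, and it fails with a division by zero if every group has zero internal variance.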

A big part of our project was generating datasets for the user, rather than having the whole platform rely on results from the past. We created two data-gathering methods to give doctors more data for characterizing their implicit bias.

For the first dataset, we sought to characterize physical ways the doctor may act during the appointment as a result of their implicit bias. We used a smart-camera system running Enliteon-Edge, a machine learning body motion API, together with Node-RED, a JavaScript flow service, to create datasets of patient-to-doctor physical proximity over the course of the appointment and to record the length of the appointment as well.
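
The proximity metric can be sketched as follows. This is a Python illustration, not the Node-RED flow we ran, and it assumes a hypothetical input format: time-ordered frames, each holding a timestamp in seconds and floor-plane coordinates in meters for the doctor and the patient.

```python
import math


def proximity_summary(frames):
    """frames: list of (timestamp_s, doctor_xy, patient_xy) tuples, time-ordered."""
    # Euclidean distance between doctor and patient in each frame.
    distances = [math.dist(doc, pat) for _, doc, pat in frames]
    # Appointment length is the span between the first and last frame.
    duration = frames[-1][0] - frames[0][0]
    return {
        "mean_distance_m": sum(distances) / len(distances),
        "min_distance_m": min(distances),
        "appointment_length_s": duration,
    }


# Made-up positions: the doctor walks toward a seated patient over two minutes.
frames = [
    (0.0, (0.0, 0.0), (3.0, 4.0)),
    (60.0, (1.5, 2.0), (3.0, 4.0)),
    (120.0, (3.0, 3.0), (3.0, 4.0)),
]
summary = proximity_summary(frames)
```

One summary row per appointment, paired with the patient's demographic data, is the kind of record the statistical tool can then compare across groups.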

The face analyzer runs on a scalable, secure, and serverless AWS backend. It uses Amazon Rekognition for the AI video analysis, along with various Lambda functions to process that data. The doctor can enter patient demographics, which are paired with the video analysis data to produce a CSV file that is ready for use with our statistical analysis tool. The web app itself currently runs out of an S3 bucket.
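
The pairing step might look like the Python sketch below. The column names, the `to_csv` helper, and the sample values are hypothetical, and the sketch does not call Rekognition or Lambda; it only shows how demographic input, a per-patient score, and the decision could be joined into CSV rows.

```python
import csv
import io

# Hypothetical column layout for the exported file.
FIELDS = ["patient_id", "race", "gender", "fidget_score", "diagnosed"]


def to_csv(demographics, scores, decisions):
    """Join per-patient dicts keyed by patient_id into CSV text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for pid in sorted(demographics):
        if pid not in scores or pid not in decisions:
            continue  # skip patients with incomplete data
        writer.writerow({
            "patient_id": pid,
            **demographics[pid],
            "fidget_score": scores[pid],
            "diagnosed": int(decisions[pid]),  # 1 = yes, 0 = no
        })
    return buf.getvalue()


# Made-up records for two patients.
csv_text = to_csv(
    {"p1": {"race": "X", "gender": "F"}, "p2": {"race": "Y", "gender": "M"}},
    {"p1": 0.42, "p2": 0.40},
    {"p1": True, "p2": False},
)
```

Keeping one row per patient with the decision as a 0/1 column keeps the file easy to load into a statistics tool.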

Challenges we ran into

Our biggest challenges involved determining the scale and approach we wanted to take in addressing the issue. Having read multiple studies on how doctor body language correlates with patient health outcomes (as well as being an indicator of bias), we originally wanted to use OpenPose with the Intel RealSense depth camera, gathering body language cues from the doctor to assess their level of engagement with various patients. After a lot of deliberating, we came up with the version of MedAware we are most proud of.

Accomplishments that we're proud of

We're proud of how flexible and modular the model is. Its simplicity allows it to be used with any set of quantified data to detect demographic bias across virtually any binary medical decision.

What's next for MedAware

We plan to develop other specific use cases and further ways of collecting quantified data on doctor-patient interaction and decision making.

Built With

- amazon-web-services

- javascript

- node-red

- python

- r

- rekognition

- rstudio

- shiny

- smart-camera
