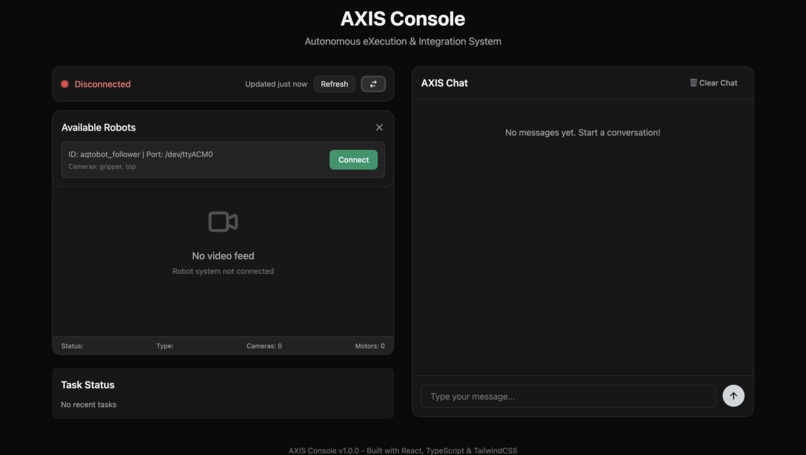

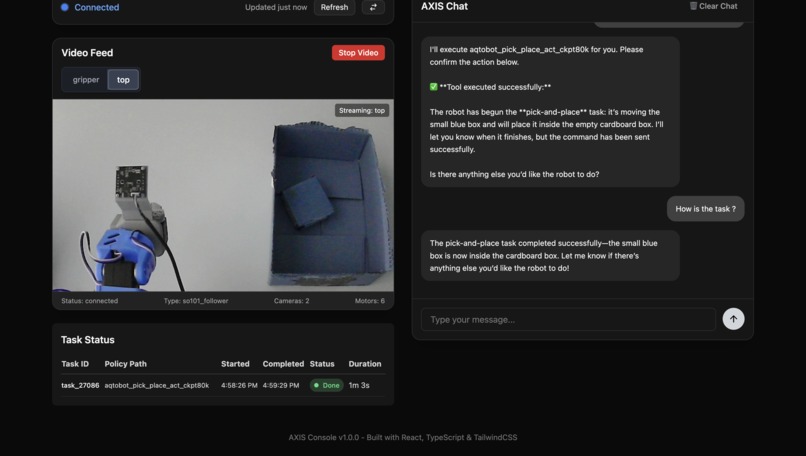

What the user sees when the robot is not connected to an agent

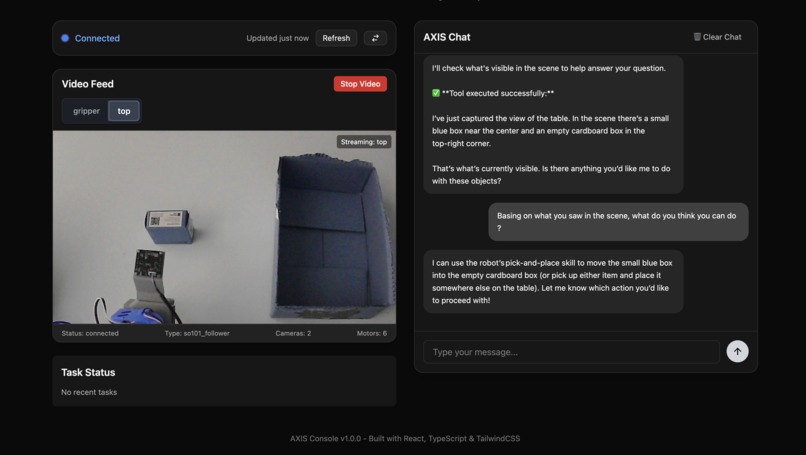

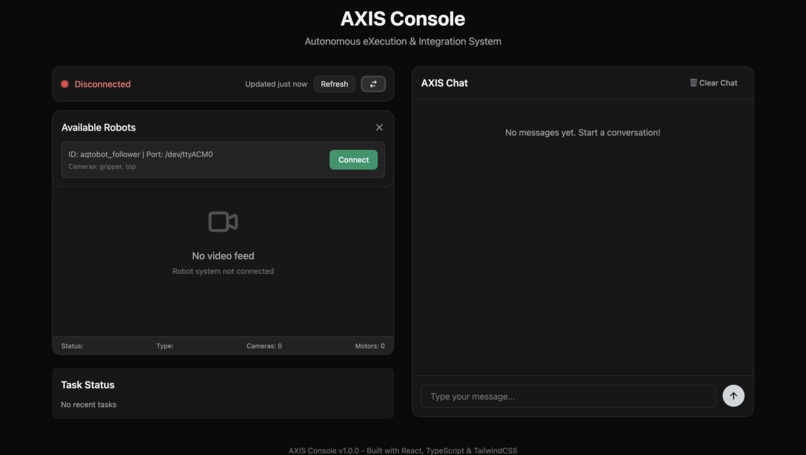

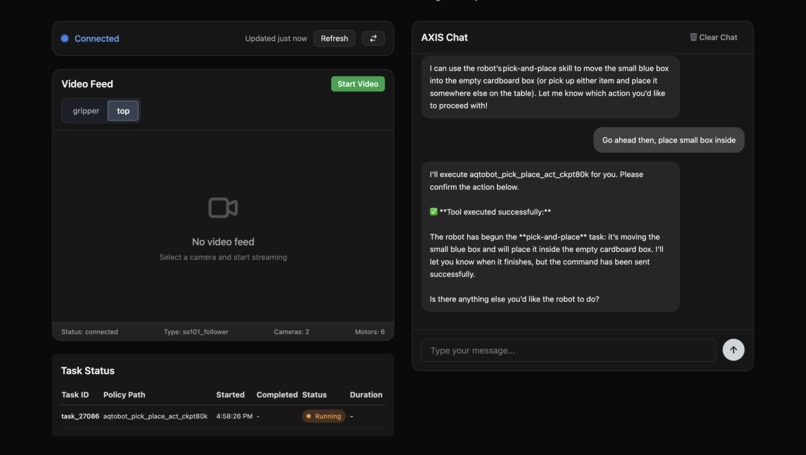

The AI agent interprets the scene and proposes actions, such as picking up the box and placing it in the container

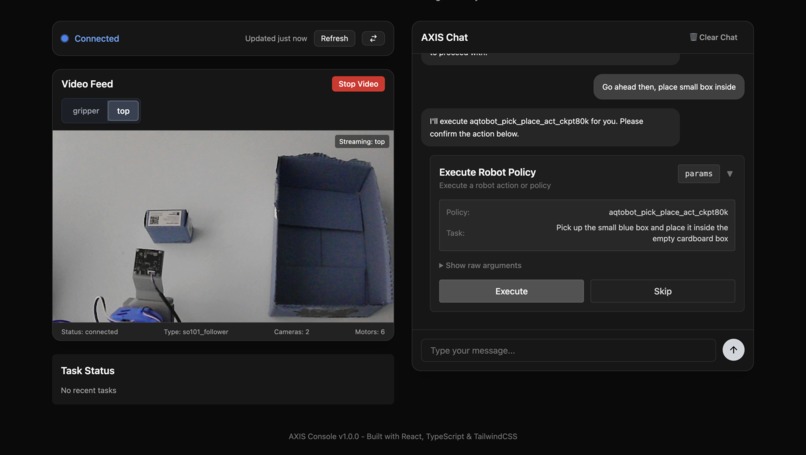

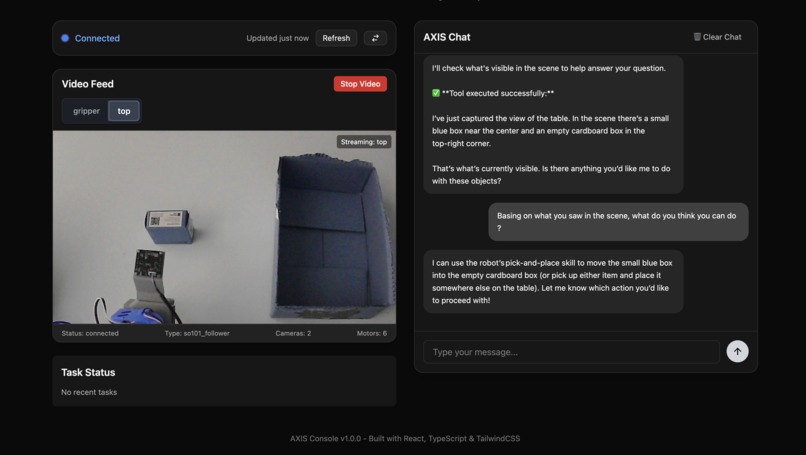

The user can confirm a tool call before execution

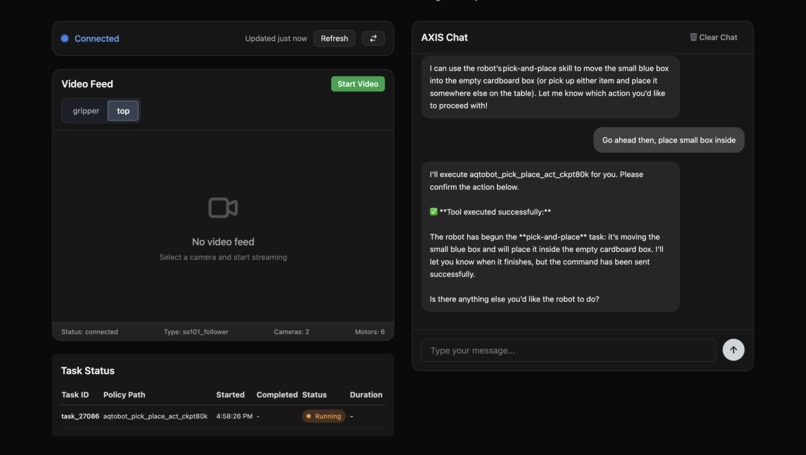

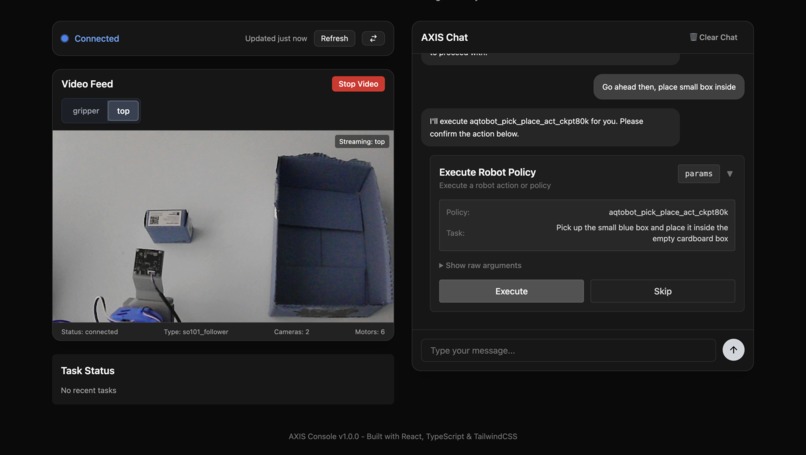

Execution started. A new task is added at the bottom left.

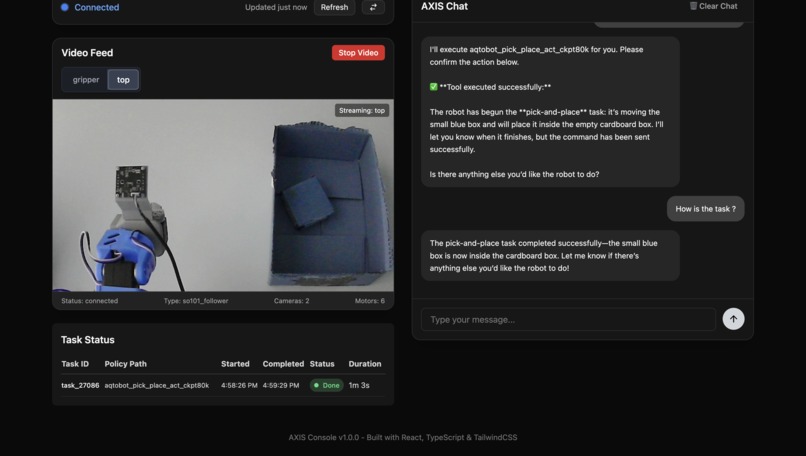

Finally, when the task is completed, the tasks table is updated and the AXIS agent is notified

Robot arm picking up a box (image 1)

Robot arm picking up a box (image 2)

AXIS (Autonomous eXecution & Integration System)

Inspiration

AXIS was born from a simple question: what if one person could command an entire fleet of robots as easily as giving an instruction? I wanted to see how language models could step off the screen and into the physical world — not just answering questions, but directing machines. By giving an AI agent the power to orchestrate robots, AXIS reimagines how humans and machines work together, pushing the boundaries of robotics and offering a bold look at the future of autonomous work.

What it does

AXIS acts as the central brain, planning and coordinating robot work. It knows what each robot can do, whether it’s available, and what’s happening around it through live camera feeds. When a task is given, AXIS maps it to the right robot and skills, then sends commands. Robots report back on their progress, so AXIS always has an up-to-date picture of execution.

With this information, AXIS makes smarter decisions — checking conditions before starting, adapting if something changes, or switching robots if one fails. And throughout, the operator stays in control with a live video feed for oversight.
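To make the dispatch idea concrete, here is a minimal sketch of matching a task to a robot by skill and availability, then falling back to another robot if the first one fails. It is an illustration only, not the actual AXIS code: the `Robot` class, the `dispatch` function, and the `execute` callback are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Robot:
    """Hypothetical record of what the orchestrator knows about one robot."""
    name: str
    skills: set[str]
    available: bool = True


def candidates(robots: list[Robot], skill: str) -> list[Robot]:
    """Robots that are free right now and have the requested skill."""
    return [r for r in robots if r.available and skill in r.skills]


def dispatch(
    robots: list[Robot],
    skill: str,
    execute: Callable[[Robot, str], bool],
) -> Optional[str]:
    """Try each capable robot in turn; return the name of the one that succeeds."""
    for robot in candidates(robots, skill):
        robot.available = False  # mark busy while the skill runs
        try:
            if execute(robot, skill):
                return robot.name
        finally:
            robot.available = True  # release the robot on success or failure
    return None  # no robot could complete the task
```

For example, if `arm_a` fails a `pick_box` run, the same call moves on to `arm_b` without the operator having to re-issue the task.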

How we built it

We built AXIS by combining pretrained robot skills with powerful AI planning. The robots use skills developed with the Action Chunking Transformer (ACT) and LeRobot scripts. On top of that, the AXIS AI agent, powered by openai/gpt-oss-120b, plans tasks, connects to orchestration tools, and issues commands. All the necessary context — robot skills, state, and environment — is injected into its reasoning so the agent stays aware and reliable.
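One way to picture the context injection described above is a function that rebuilds the system message from the latest robot state before every model call. The sketch below assumes a chat-style message list; the function names, prompt wording, and field names are hypothetical and not taken from the AXIS source.

```python
import json


def build_system_prompt(robot_status: dict, skills: list[str], scene: str) -> str:
    """Assemble a system prompt from the current robot state (illustrative wording)."""
    return "\n".join([
        "You are AXIS, an agent that plans and dispatches robot tasks.",
        "Robot status: " + json.dumps(robot_status, sort_keys=True),
        "Available skills: " + ", ".join(skills),
        "Scene description: " + scene,
        "Only call skills from the list above.",
    ])


def build_messages(user_request: str, robot_status: dict,
                   skills: list[str], scene: str) -> list[dict]:
    """Return a chat-style message list with fresh context injected every turn."""
    return [
        {"role": "system", "content": build_system_prompt(robot_status, skills, scene)},
        {"role": "user", "content": user_request},
    ]
```

Rebuilding the system message on each turn, rather than once at startup, keeps the agent from planning against a stale picture of the robot.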

Under the hood, AXIS runs on the open-source LeRobot platform, hosted locally on a Jetson Orin Nano. This hardware–software stack provides direct robot control and serves as the bridge between the AI agent and machines in the real world.

Challenges we ran into

We faced four major hurdles while building AXIS. First, compute: the gpt-oss-120b model demanded far more compute than the Jetson could provide. Second, video decoding: without TorchCodec support, the Jetson hit 99% CPU usage just handling the video streams. Third, context engineering: it was difficult to get the agent to understand tasks and scene data consistently. Fourth, a vision gap: because gpt-oss-120b is text-only, it couldn’t process images directly.

Accomplishments we’re proud of

Despite those challenges, we’re proud of the solutions we built. To overcome the compute limits, we integrated Hugging Face inference providers so LLM calls ran smoothly without overloading the local hardware. For video, we designed a custom GStreamer pipeline with CUDA decoding, cutting CPU usage from ~99% to ~30%. We strengthened context engineering by dynamically injecting robot status, skills, and scene descriptions, making the agent more reliable. And to close the vision gap, we added an image-to-text tool that converts camera snapshots into scene descriptions, giving the model sight through language.
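The actual pipeline is not shown here, but a hardware-decoded GStreamer pipeline on a Jetson typically looks something like the sketch below. It assumes a USB camera that outputs MJPEG and uses NVIDIA's `nvv4l2decoder` and `nvvidconv` elements from the Jetson Accelerated GStreamer plugins; the device path, resolution, and function name are placeholders, and the real AXIS pipeline may differ.

```python
def build_decode_pipeline(device: str = "/dev/video0", width: int = 640,
                          height: int = 480, fps: int = 30) -> str:
    """Build a GStreamer pipeline string that offloads MJPEG decoding to the GPU.

    Assumed setup: a V4L2 camera producing MJPEG on a Jetson board.
    """
    return (
        f"v4l2src device={device} ! "
        f"image/jpeg,width={width},height={height},framerate={fps}/1 ! "
        "nvv4l2decoder mjpeg=1 ! "                   # NVIDIA hardware decoder
        "nvvidconv ! video/x-raw,format=BGRx ! "     # copy frames out of NVMM memory
        "videoconvert ! video/x-raw,format=BGR ! "   # final conversion for OpenCV
        "appsink drop=true max-buffers=1"            # keep only the newest frame
    )
```

If OpenCV is built with GStreamer support, a string like this can be passed to `cv2.VideoCapture(pipeline, cv2.CAP_GSTREAMER)`. Dropping stale frames at the `appsink` is a design choice that favors low latency over completeness, which suits live oversight.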

What we learned

Building AXIS taught me more than just tools and code — it showed me how to design an AI agent–powered orchestration system from the ground up. I learned how context injection and tool execution really work by implementing them without relying on heavy frameworks. On the robotics side, I got hands-on with dataset recording, training, and optimization, and I saw firsthand the tradeoffs between Action Chunking Transformers (ACT) and Vision-Language-Action (VLA) models. Exploring both reinforcement and imitation learning gave me a deeper understanding of how AI can connect perception with control in real machines.
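Tool execution without a framework can be as small as a dictionary of functions plus a confirmation gate, which is also how the operator can approve a tool call before it runs. The following is a sketch under assumptions: the `tool` decorator, the `start_skill` tool, and the shape of the `call` dictionary (a name plus JSON-encoded arguments) are hypothetical, not the AXIS implementation.

```python
import json
from typing import Callable

# Registry of callable tools, keyed by the name the model is told about.
TOOLS: dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def start_skill(robot: str, skill: str) -> str:
    """Placeholder tool; a real one would send a command to the robot."""
    return f"started {skill} on {robot}"


def execute_tool_call(call: dict, confirm: Callable[[dict], bool]) -> str:
    """Run one model-proposed tool call, but only after the operator approves it."""
    name = call["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name}"
    args = json.loads(call["arguments"])  # arguments arrive as a JSON string
    if not confirm(call):
        return "cancelled by operator"
    return TOOLS[name](**args)
```

The returned string would be appended to the conversation as the tool result, so the model sees either the outcome or the cancellation and can plan its next step.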

What’s next for AXIS

The next frontier is multi-robot support. With a registry, robot-specific endpoints, and isolated camera and recording streams, AXIS will be able to coordinate multiple robots in parallel — each running its own tasks, while operators maintain real-time video oversight.
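As a rough sketch of what that could look like, the example below keeps a registry of per-robot endpoints and camera streams and runs one task per robot concurrently with `asyncio`. Everything in it is hypothetical: the registry entries, URLs, and function names are placeholders, and the network call is stubbed out.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class RobotEntry:
    """Hypothetical registry entry: one endpoint and one camera stream per robot."""
    robot_id: str
    endpoint: str
    camera_stream: str


REGISTRY = {
    "arm_a": RobotEntry("arm_a", "http://localhost:8001", "/dev/video0"),
    "arm_b": RobotEntry("arm_b", "http://localhost:8002", "/dev/video2"),
}


async def run_task(robot_id: str, skill: str) -> str:
    """Stand-in for sending a skill request to the robot's own endpoint."""
    entry = REGISTRY[robot_id]
    await asyncio.sleep(0)  # placeholder for the real network call
    return f"{robot_id}: {skill} done via {entry.endpoint}"


async def run_parallel(assignments: dict[str, str]) -> list[str]:
    """Run one skill per robot concurrently; results keep the input order."""
    return await asyncio.gather(
        *(run_task(robot_id, skill) for robot_id, skill in assignments.items())
    )
```

A call such as `asyncio.run(run_parallel({"arm_a": "pick_box", "arm_b": "place_box"}))` would start both tasks together while each robot keeps its own endpoint and camera stream.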

Although the demo focused on a few ACT-trained skills, AXIS is designed to be flexible. It can grow to include VLA pipelines, reinforcement learning, and trajectory optimization for adaptive control. Expanding the skill library will make AXIS increasingly versatile — moving closer to a future where one person can direct an entire workforce of robots with ease.

AXIS turns one operator into an entire factory.
