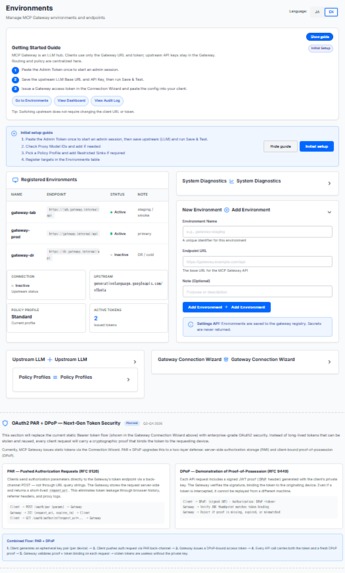

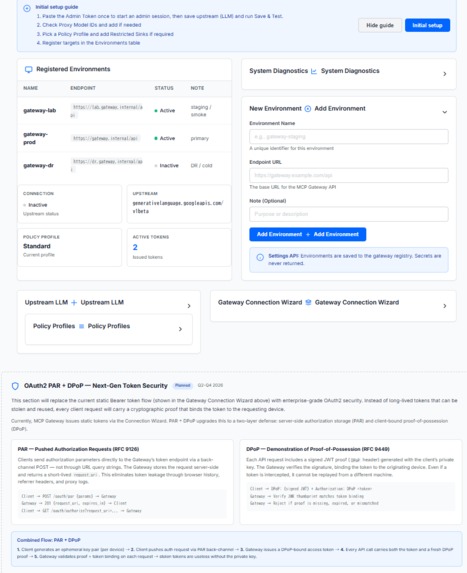

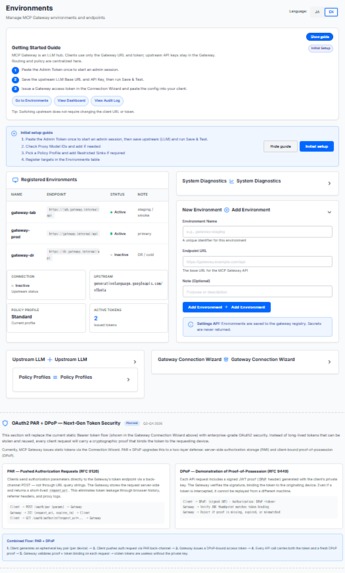

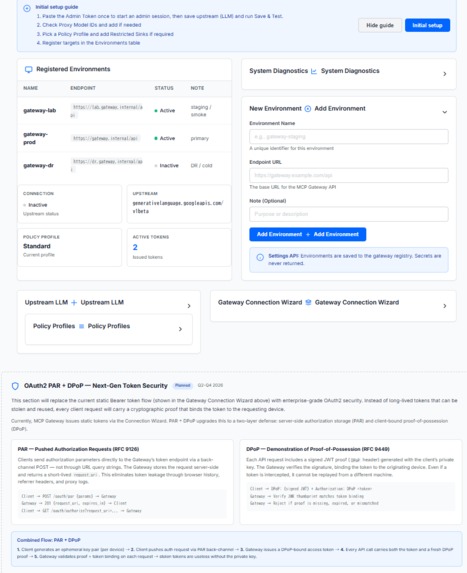

Full Environments — Setup guide, Gemini 3 Flash upstream config, Connection Wizard with client presets, OAuth2 roadmap.

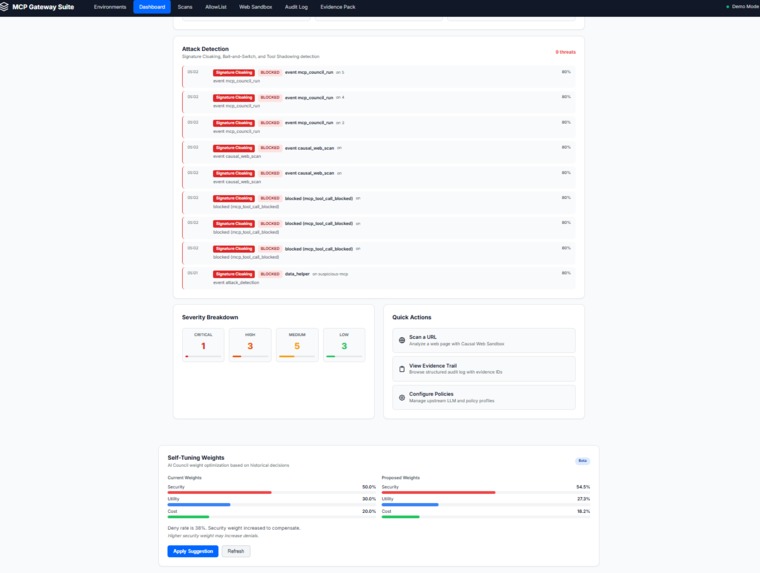

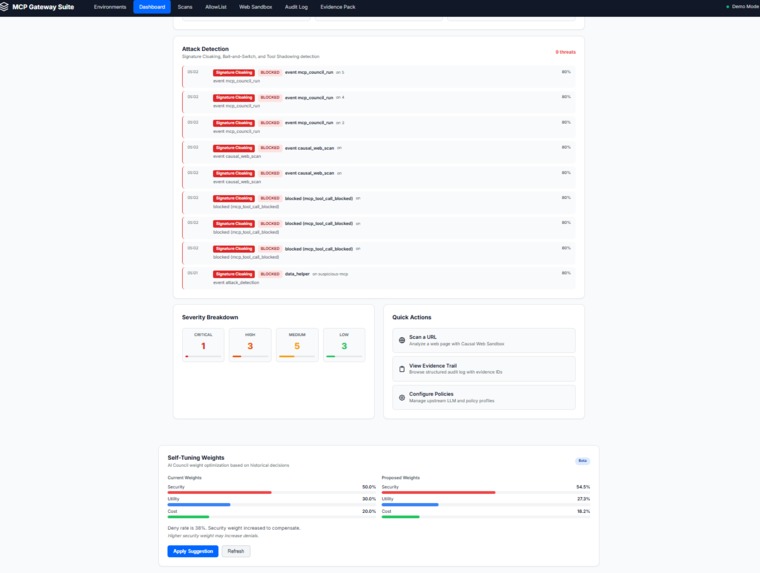

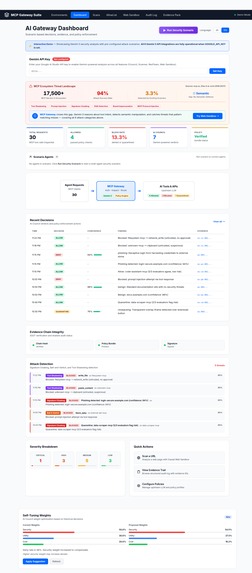

Attack Detection + Self-Tuning — Signature Cloaking/Bait-and-Switch/Tool Shadowing detection. AI Council weight optimization.

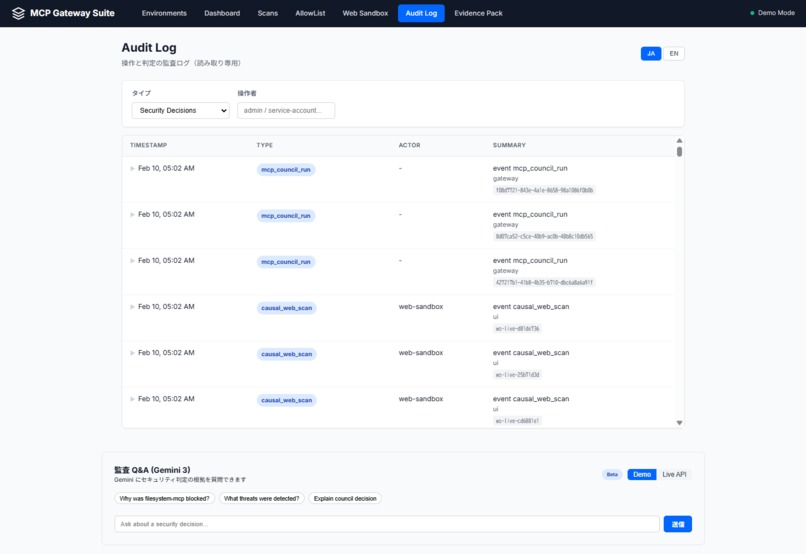

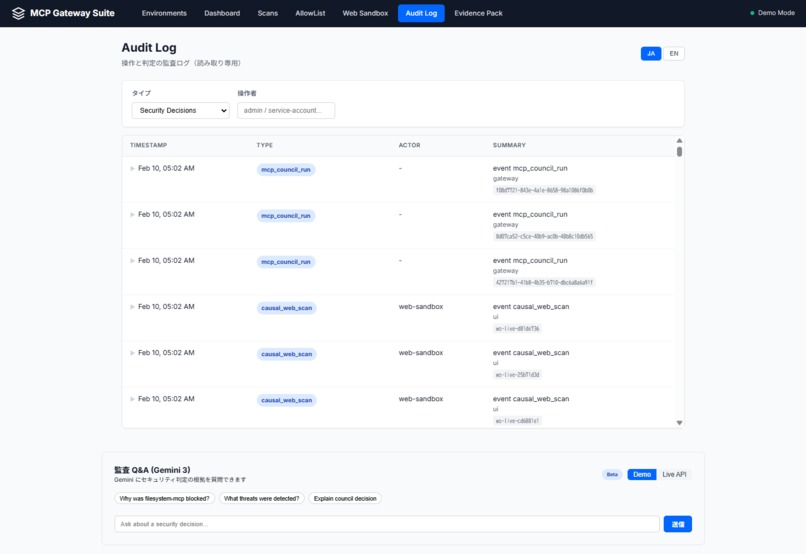

Audit Log + Q&A Chat (Gemini 3) — Evidence trail with Demo/Live toggle. Ask "Why was this blocked?" and get structured answers.

Environments & OAuth2 PAR + DPoP Roadmap — Registered environments, diagnostics, and planned RFC 9126/9449 token security upgrade.

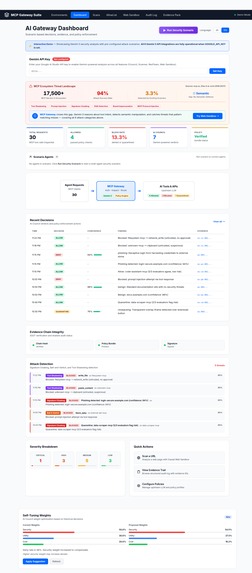

AI Gateway Dashboard — KPI cards, Gateway Flow, Recent Decisions, Attack Detection timeline, and Self-Tuning Weights. 9 bilingual pages.

Inspiration

The MCP ecosystem is scaling fast, but its trust model hasn’t caught up. By Feb 2026, MCP directories reported 17,500+ servers, and research showed a 94% attack success rate across multiple MCP attack categories. We also saw real-world marketplace evidence of malicious agent skills. The gap is clear: there is no standard semantic security layer for tool interfaces that can look benign but behave maliciously.

What it does

MCP Security Project (MCP Gateway) is a security-first proxy between AI clients and MCP servers. Instead of a simple allow/deny gate, it produces structured, auditable evidence for every tool call—what was requested, what was inspected, what risks were found, and why it was allowed or blocked.

Project scope highlights:

- 6-layer inspection pipeline, 7 Gemini 3 integration points, 50 API endpoints, and a bilingual (EN/JA) dashboard (9 pages)

- Reproducible benchmark: on a fixed 26-case corpus, F1 rises from 0.880 (rule-based) to 1.000 (Gemini 3 Agent)
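For readers unfamiliar with the metric, F1 is the harmonic mean of precision and recall. The sketch below shows one way such a score could be computed from per-case labels. The function name and the toy labels are illustrative assumptions and are not taken from the project's corpus or evaluation harness.

```python
def f1_score(expected: list[bool], predicted: list[bool]) -> float:
    """Return F1 for the positive ("malicious") class."""
    if len(expected) != len(predicted):
        raise ValueError("label lists must have the same length")
    pairs = list(zip(expected, predicted))
    tp = sum(1 for e, p in pairs if e and p)          # malicious, flagged
    fp = sum(1 for e, p in pairs if not e and p)      # benign, flagged
    fn = sum(1 for e, p in pairs if e and not p)      # malicious, missed
    denom = 2 * tp + fp + fn
    # F1 = 2TP / (2TP + FP + FN); defined as 0.0 when there are no positives at all.
    return 2 * tp / denom if denom else 0.0


# Toy labels (hypothetical, NOT the 26-case corpus): True means "malicious".
expected = [True, True, True, False, False]
predicted = [True, True, False, False, False]
print(round(f1_score(expected, predicted), 3))  # 2 TP, 0 FP, 1 FN -> 0.8
```

Because the corpus is fixed, rerunning a function like this over the same cases gives a comparable number across pipeline versions.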

Gemini 3 is integrated across 7 components, not as a classifier bolted on at the end but as the reasoning engine throughout the pipeline. Five Gemini 3-exclusive features are central:

- Thinking Levels (high/low): deep reasoning for threats, fast triage for safe content

- Structured Output: every Gemini call returns typed verdict schemas (CouncilVerdict, WebSecurityVerdict, AgentScanResult), not free-form text

- URL Context: Gemini 3 visits suspicious URLs directly for multimodal page analysis

- Google Search Grounding: real-time threat intelligence across Council, Scanner, Sandbox, and Agent Scan

- Function Calling (multi-turn): Agent Scan operates as an autonomous security agent, selecting tools based on the threat surface
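To make the Structured Output point concrete, here is a minimal sketch of what a typed verdict could look like on the gateway side. Only the name CouncilVerdict comes from the project. The fields, the Decision enum, and the parse_verdict helper are assumptions for illustration, and the sketch deliberately leaves out the Gemini API call itself.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Decision(str, Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass(frozen=True)
class CouncilVerdict:
    # Field names are hypothetical; the real schema is not shown in this write-up.
    decision: Decision
    confidence: float
    reasons: tuple[str, ...] = ()


def parse_verdict(raw: str) -> CouncilVerdict:
    """Parse a JSON model response and reject anything that is off-schema."""
    data = json.loads(raw)
    confidence = float(data["confidence"])
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    return CouncilVerdict(
        decision=Decision(data["decision"]),  # raises ValueError on unknown values
        confidence=confidence,
        reasons=tuple(str(r) for r in data.get("reasons", [])),
    )
```

Validating at the boundary means downstream enforcement code never has to interpret free-form text: a response either becomes a typed verdict or fails loudly.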

Additionally, an Audit QA Chat lets users ask Gemini "Why was this blocked?" and get structured answers with confidence scores and evidence references. A Self-Tuning UI proposes AI Council weight adjustments based on historical decision metrics.

How we built it

- A gateway architecture that sits between clients (ChatGPT/Claude/Gemini CLI) and MCP servers, with a dedicated security service for scanning and enforcement

- Rust ACL-aware proxy component for policy/inspection boundaries, plus a Python/FastAPI gateway orchestrating scans and audits

- Gemini 3 integrated via structured output schemas across the pipeline and agentic investigation loops where needed

- Evidence-first logging: JSONL evidence trail and an optional SSOT-style memory ledger for replayability and auditability

- Real-time SSE “Live Pipeline Demo” so judges/operators can see each inspection step as it happens

- 416 automated tests, plus Docker and Cloud Run deployment paths
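The JSONL evidence trail mentioned above can be pictured as an append-only file with one JSON object per decision. The following is a minimal sketch under that assumption. The record fields and the function names are hypothetical and do not reflect the project's actual log format.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def append_evidence(path: Path, tool: str, decision: str, findings: list[str]) -> dict:
    """Append one decision record as a single JSON line and return it."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),  # UTC timestamp
        "tool": tool,
        "decision": decision,
        "findings": findings,
    }
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def read_evidence(path: Path) -> list[dict]:
    """Load every record back, in the order it was written."""
    with path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

One line per record keeps the trail easy to append to, stream, and replay, which is the property an audit log needs most.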

Challenges we ran into

- Security vs latency: deep semantic analysis is valuable, but tool usage must remain responsive

- Adversarial ambiguity: the same interface can be safe or malicious depending on intent and context

- Hardening the web-analysis surface: SSRF, prompt injection, and resource exhaustion must be controlled without losing useful evidence

- Making audit logs readable: evidence must be precise enough for security review but understandable for operators and judges
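As an illustration of the SSRF concern, a gateway that fetches URLs on a user's behalf typically refuses anything that resolves to an internal address. The sketch below uses only the Python standard library. The function names and the injectable resolve parameter are assumptions for this example, not the project's implementation.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_public_ip(address: str) -> bool:
    """True only for globally routable addresses (not loopback, private, link-local)."""
    # Drop an IPv6 zone suffix such as "%eth0" before parsing.
    return ipaddress.ip_address(address.split("%")[0]).is_global


def is_fetchable(url: str, resolve=socket.getaddrinfo) -> bool:
    """Allow only http(s) URLs whose host resolves exclusively to public IPs."""
    try:
        parts = urlsplit(url)
        port = parts.port  # raises ValueError on a malformed port
    except ValueError:
        return False
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    default_port = 443 if parts.scheme == "https" else 80
    try:
        infos = resolve(parts.hostname, port or default_port, proto=socket.IPPROTO_TCP)
    except OSError:
        return False
    # Every resolved address must be public; one private record is enough to reject.
    return bool(infos) and all(is_public_ip(info[4][0]) for info in infos)
```

A check like this is only a first layer. Because DNS answers can change between the check and the fetch, a hardened fetcher would also connect to the vetted IP address rather than resolving the hostname a second time.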

Accomplishments that we're proud of

- “Evidence-first” security: every decision ships with structured rationale, not opaque allow/deny outcomes

- Gemini 3 used deeply across 7 integration points with 5 Gemini-exclusive capabilities

- Audit QA Chat (Gemini 3) and Self-Tuning weights to make policy decisions explainable and improvable

- Live end-to-end SSE demo that shows the full pipeline in motion

- Benchmark improvement on a fixed corpus (Rule-based → Gemini agent) and a reproducible evaluation harness

- Practical layered defenses (SSRF blocks, prompt-injection isolation, hard limits) suitable for real deployments

What we learned

- Semantic threats require semantic reasoning—static rules alone miss intent-based manipulation

- “Auditability” is a product feature: without evidence and replayable context, security decisions cannot scale

- Combining fast deterministic checks with deeper reasoning provides a better safety/performance tradeoff than either approach alone

- Clear UX for audit and remediation is as important as detection quality
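The tradeoff between fast deterministic checks and deeper reasoning can be sketched as a two-tier inspection: cheap rules settle the obvious cases, and only unresolved input is escalated to a slower analyzer. Everything here is a hypothetical illustration. The marker list, the Verdict fields, and the deep_check callable stand in for the project's real rules and Gemini-backed analysis.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Verdict:
    decision: str  # "allow" or "block"
    source: str    # which tier produced the decision
    reason: str


# Hypothetical markers; a real rule set would be far larger.
SUSPICIOUS_MARKERS = ("ignore previous instructions", "exfiltrate")


def rule_check(description: str) -> Optional[Verdict]:
    """Fast tier: return a verdict only when a rule clearly fires."""
    lowered = description.lower()
    for marker in SUSPICIOUS_MARKERS:
        if marker in lowered:
            return Verdict("block", "rules", f"matched marker: {marker!r}")
    return None


def inspect(description: str, deep_check: Callable[[str], Verdict]) -> Verdict:
    """Run the fast tier first; escalate to the slow tier only if it is undecided."""
    verdict = rule_check(description)
    if verdict is not None:
        return verdict
    return deep_check(description)
```

In this arrangement the expensive call is skipped whenever a rule fires, so latency is spent only on inputs the rules cannot settle.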

What's next for MCP Security Project

- Enterprise-grade token protections (OAuth2 PAR + DPoP) and stronger policy controls

- Expand the regression corpus and automated evaluation to track detection quality over time

- Better operator workflows: richer diffs for tool signature/schema changes, evidence linking, guided remediation

- Production hardening for Cloud Run deployments with safer defaults and clearer deployment guidance

Built With

- Docker / docker-compose

- Gemini 3 API

- Google Cloud Run + Cloud Build + Secret Manager

- JSON-RPC (MCP)

- Python (FastAPI, Uvicorn)

- Rust (ACL proxy)

- SSE
