-

-

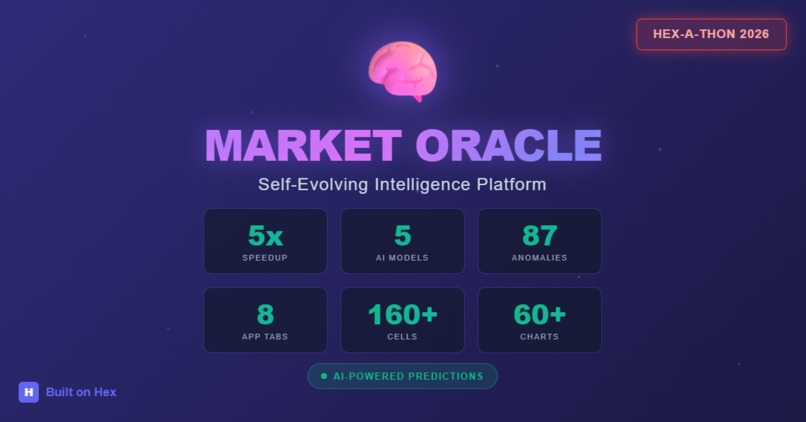

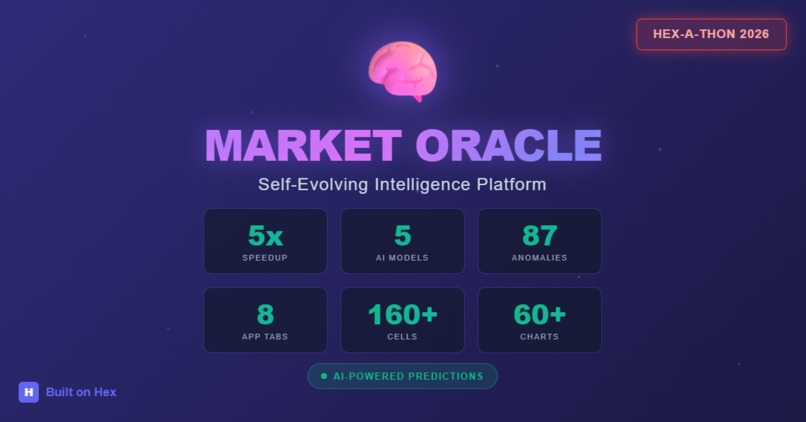

Market Oracle

-

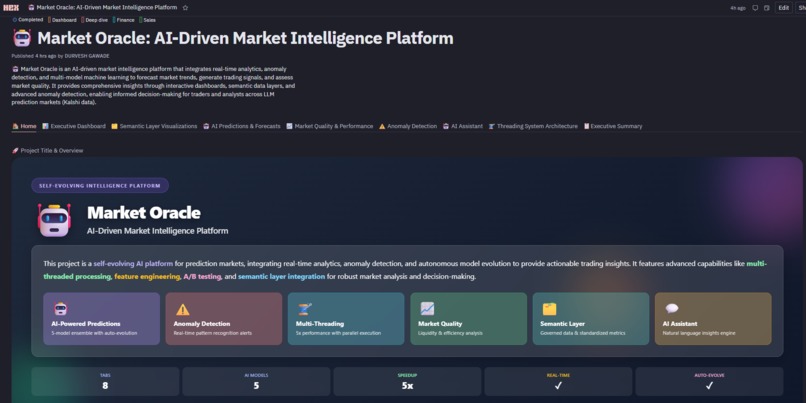

Home - Landing Page

-

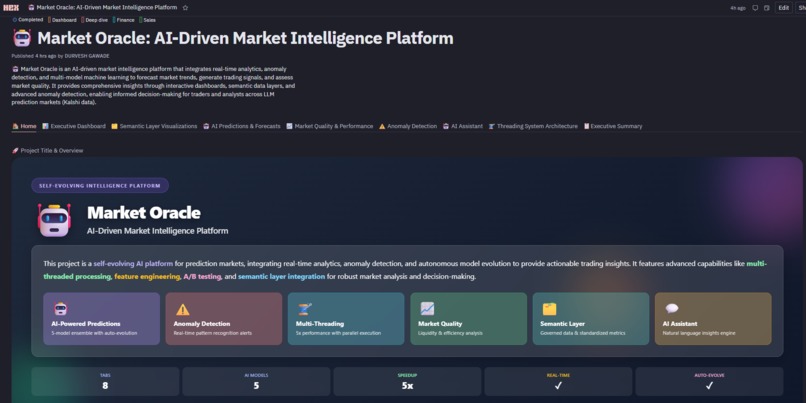

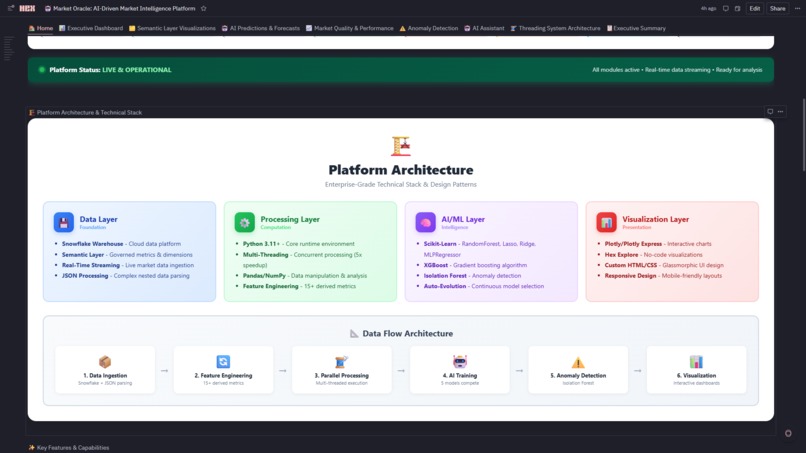

Platform Architecture

-

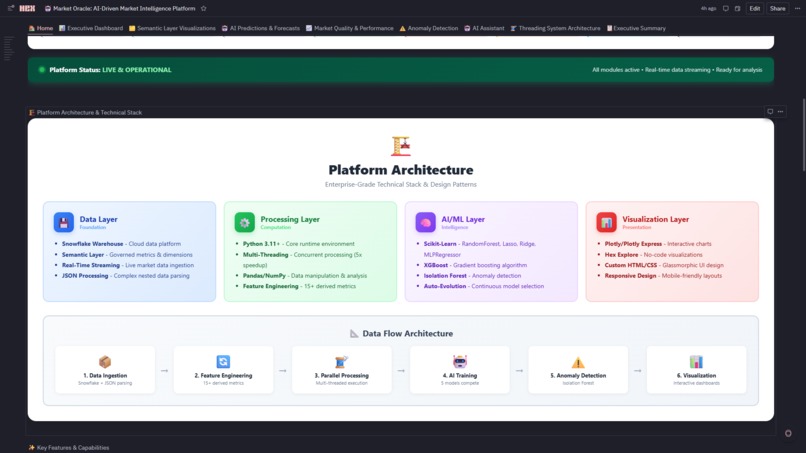

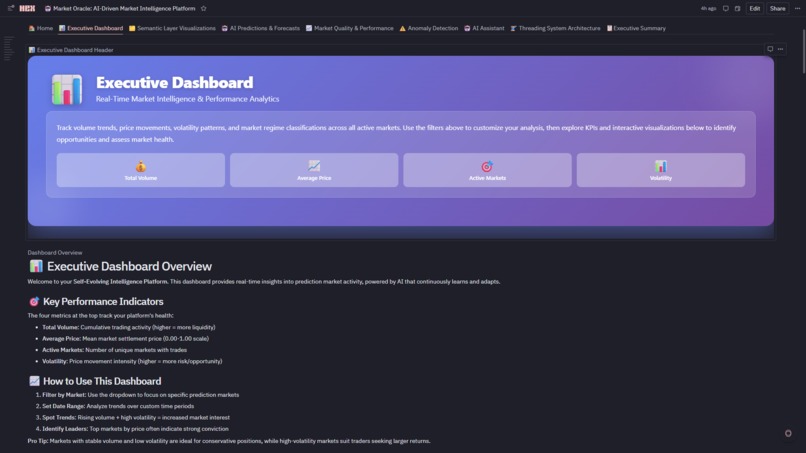

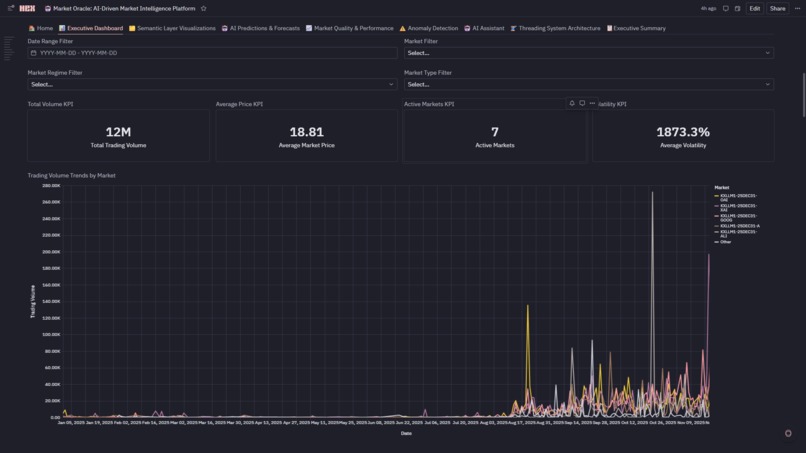

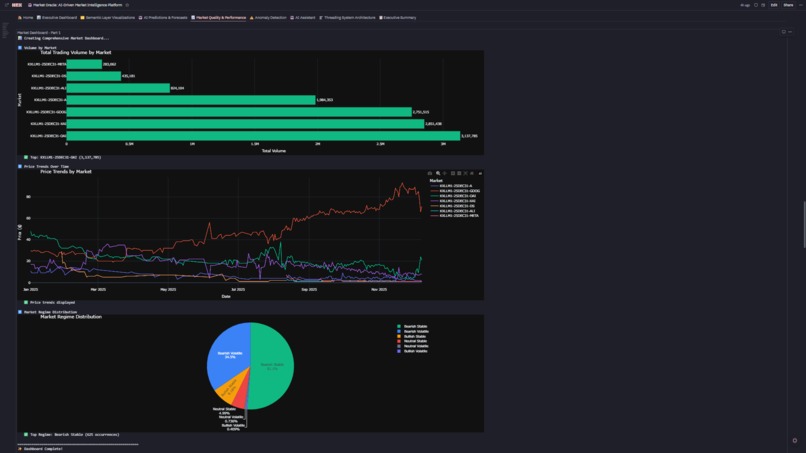

Executive Dashboard

-

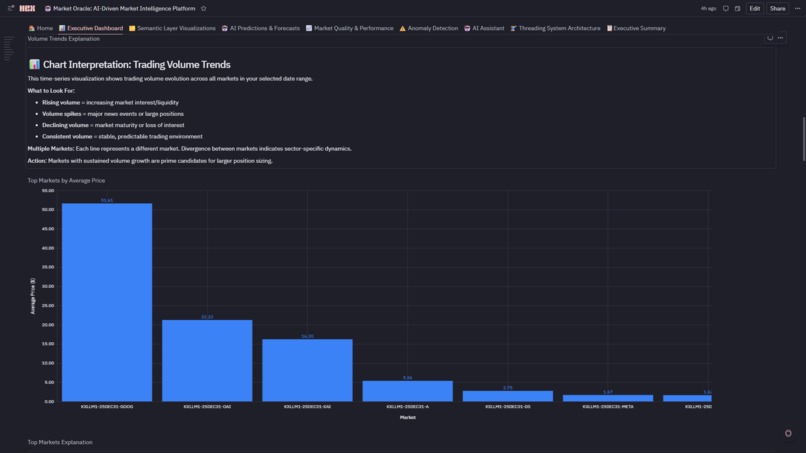

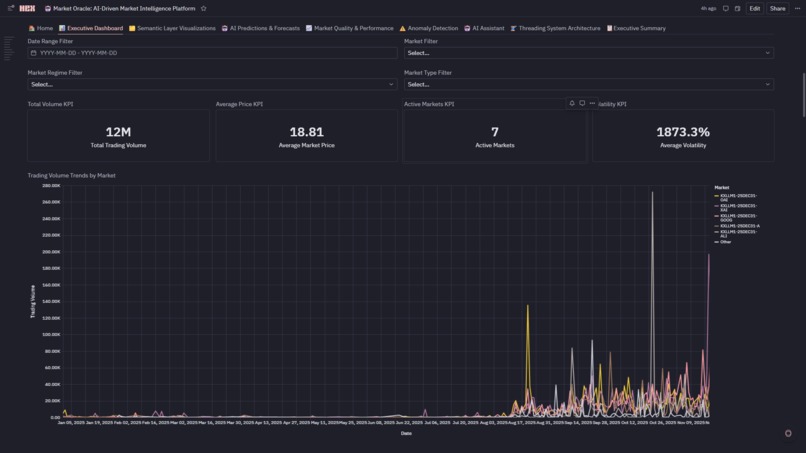

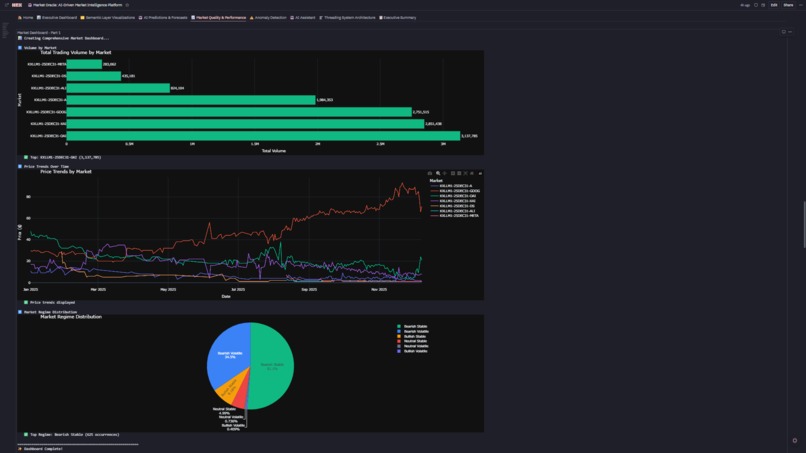

KPI and Trading Volume Trends by Market

-

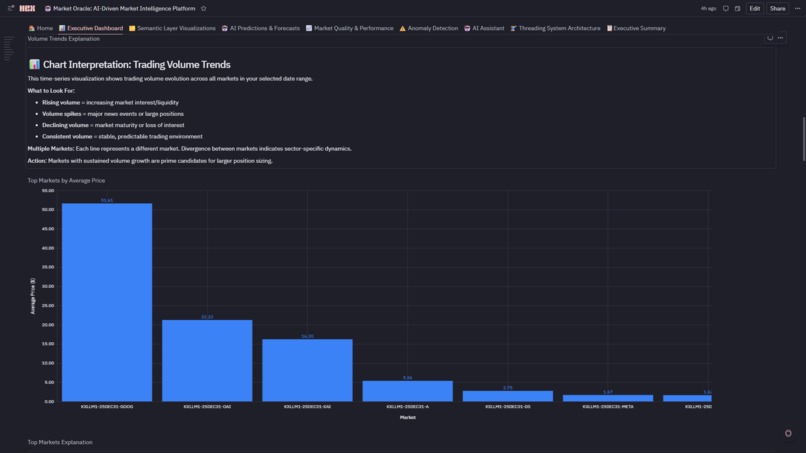

Trading Volume Trends

-

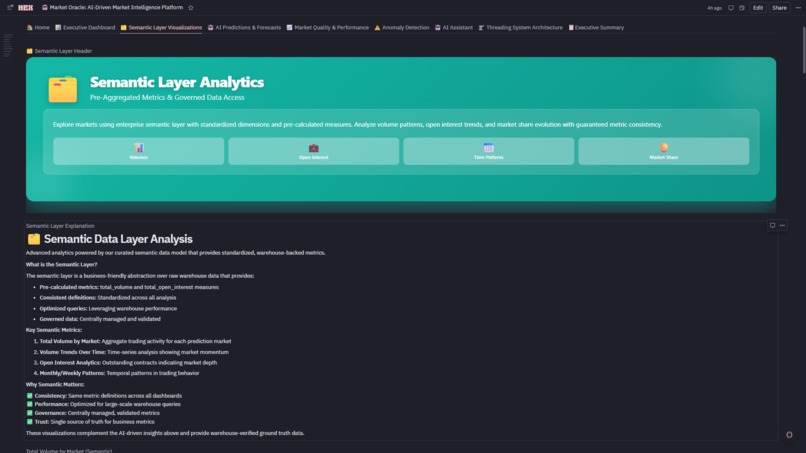

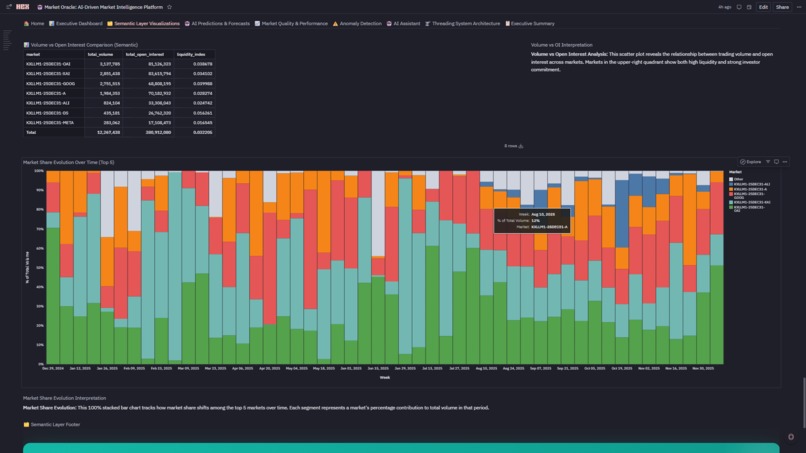

Semantic Layer Analytics

-

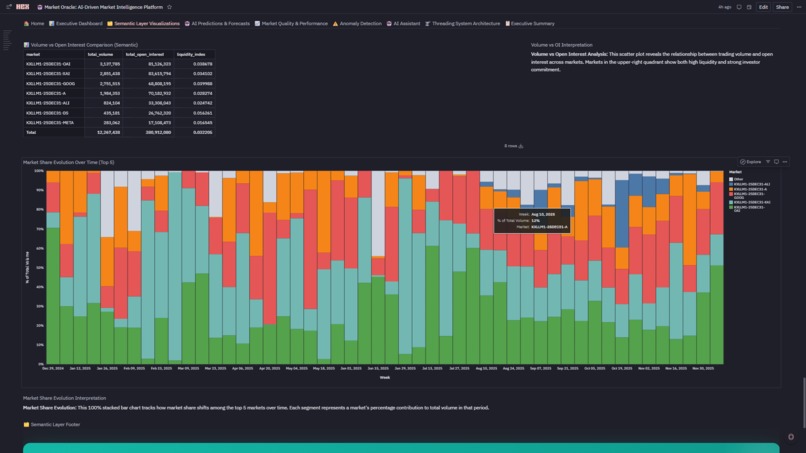

Semantic Layer Visualization

-

Model Comparison Visualizations

-

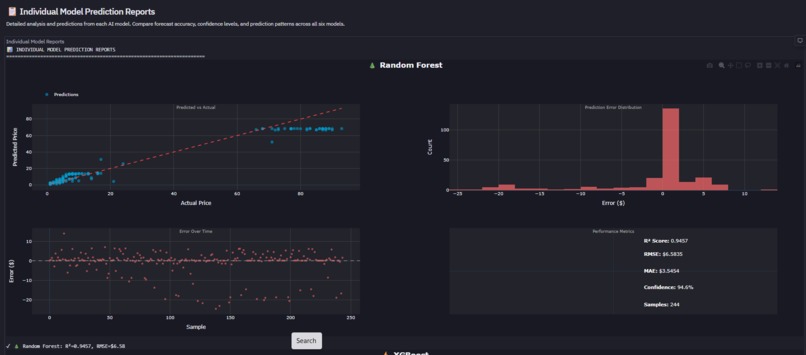

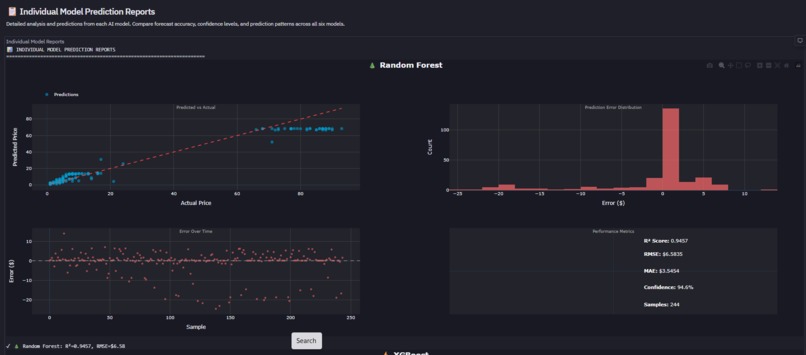

INDIVIDUAL MODEL PREDICTION REPORTS

-

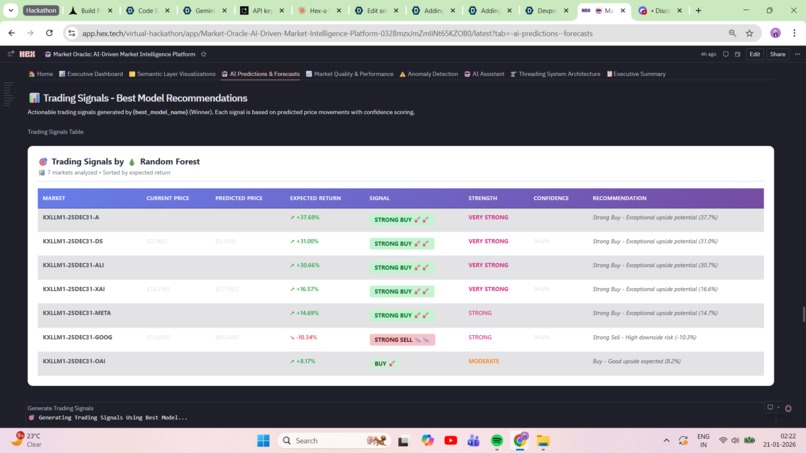

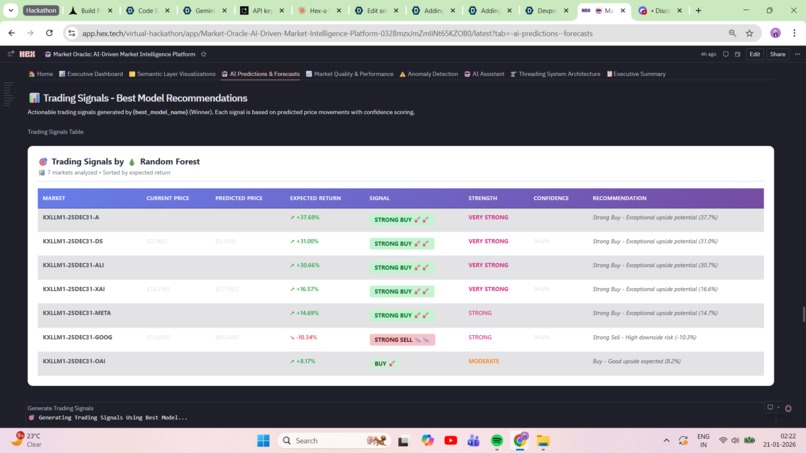

Trading Signals - Best Model Recommendations

-

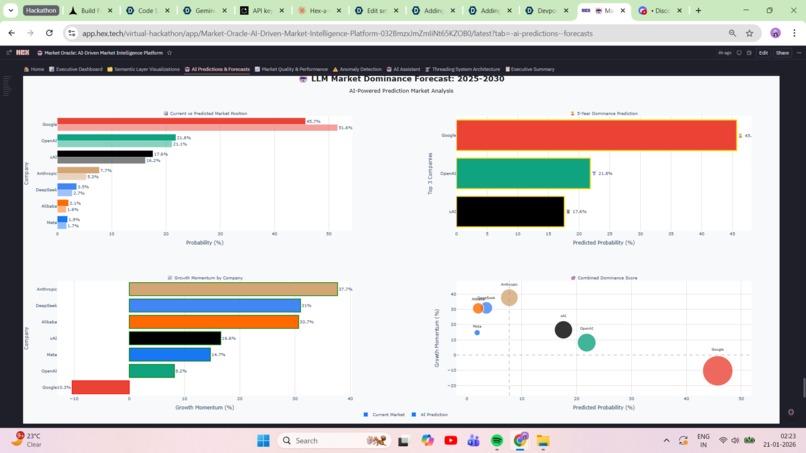

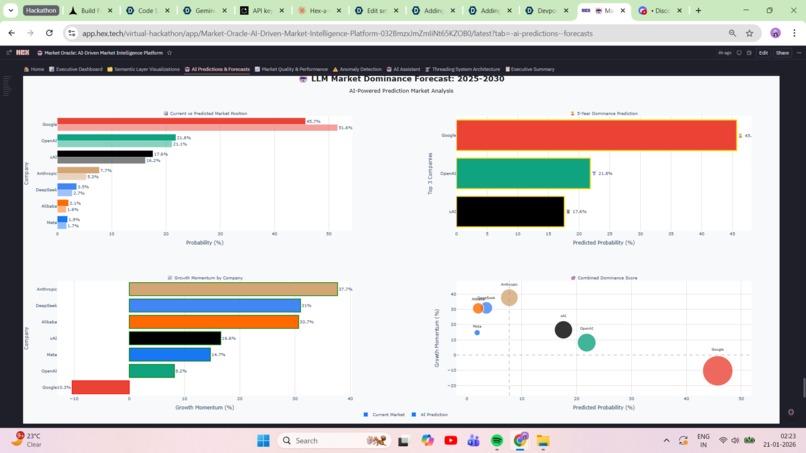

5-Year LLM Dominance Forecast Visualization

-

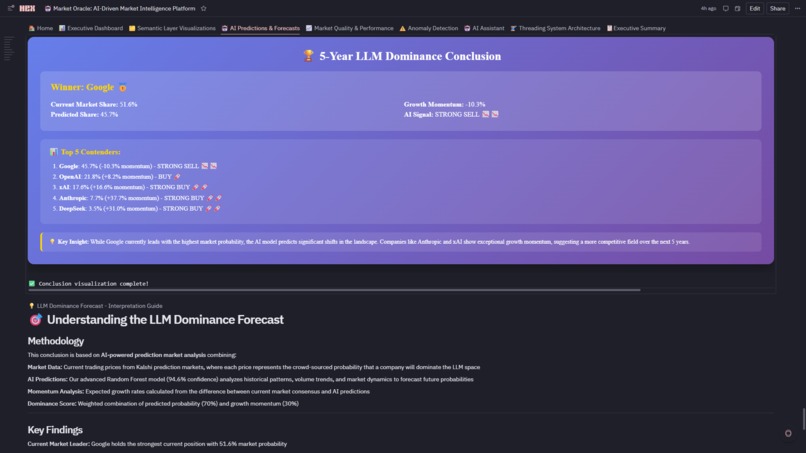

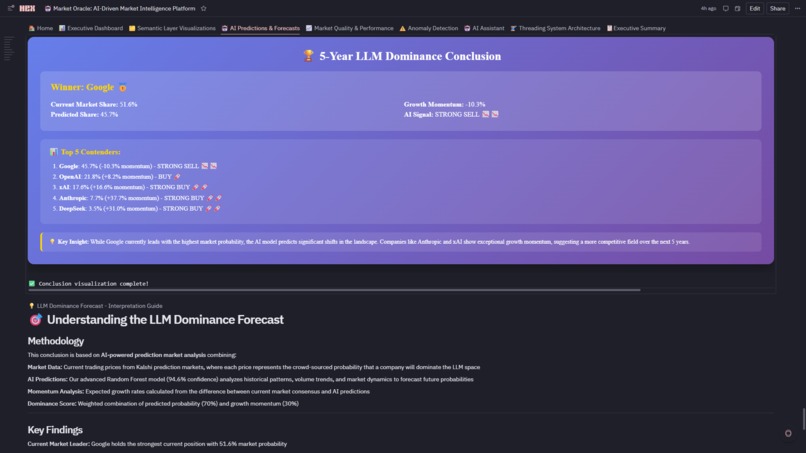

5-Year LLM Dominance Conclusion

-

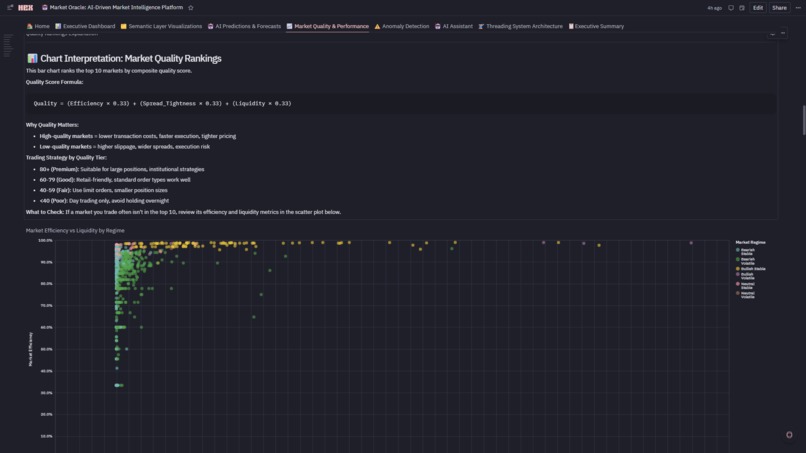

Market Quality & Performance Analytics

-

Market Quality & Performance Analytics

-

Market Quality & Performance Analytics

-

Market Quality & Performance Analytics

-

Market Quality & Performance Analytics

-

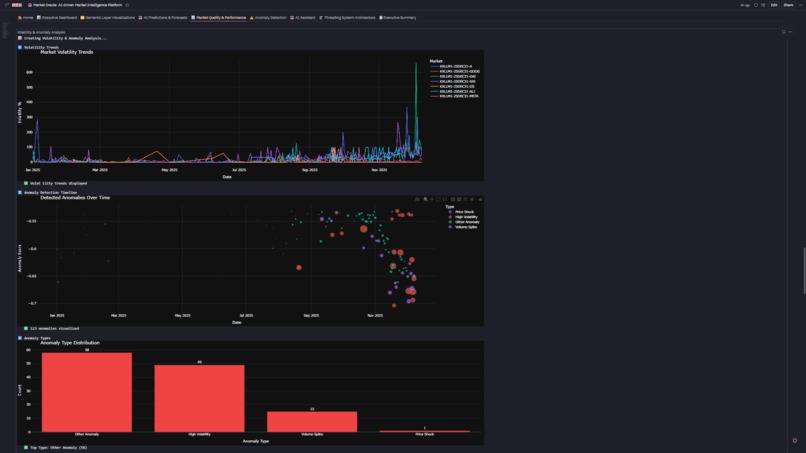

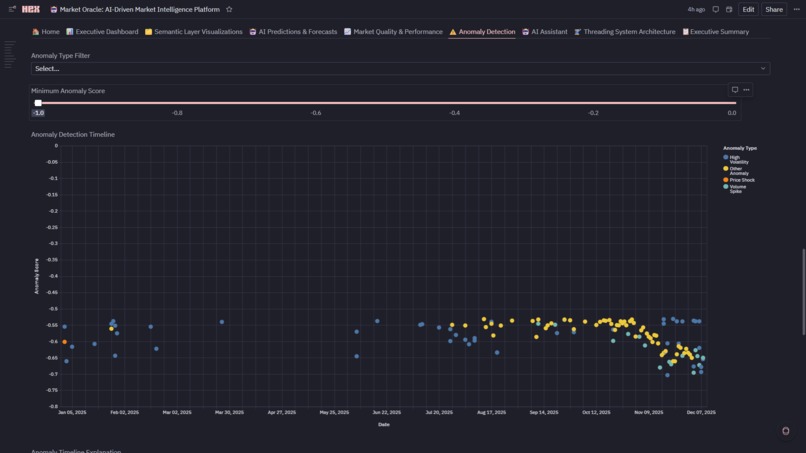

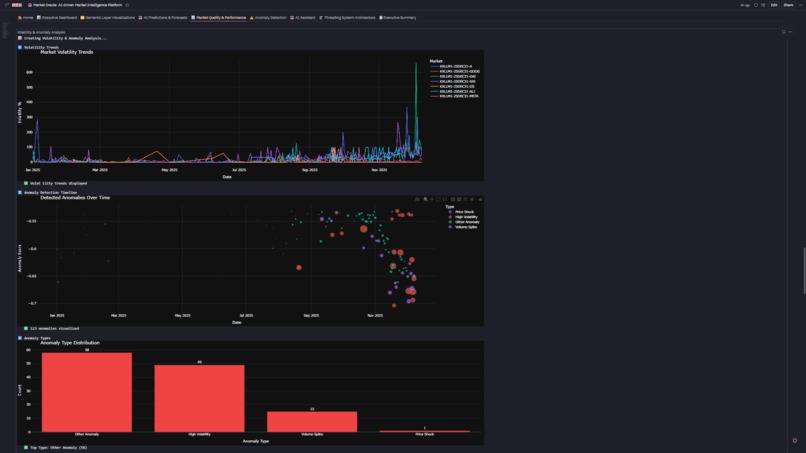

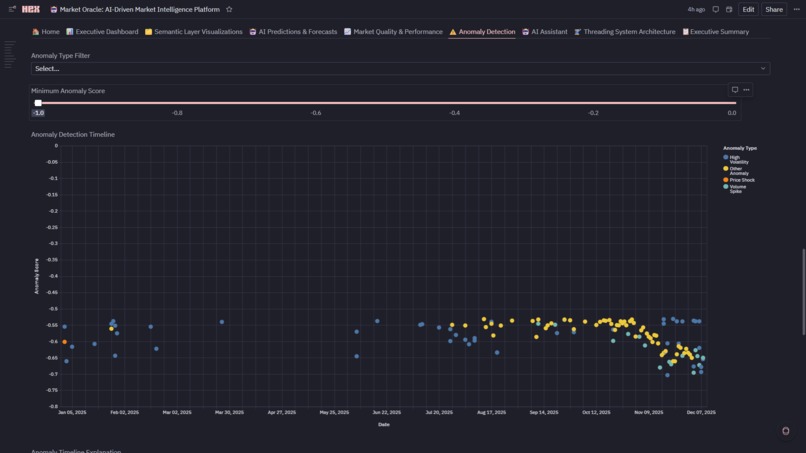

Anomaly Detection System

-

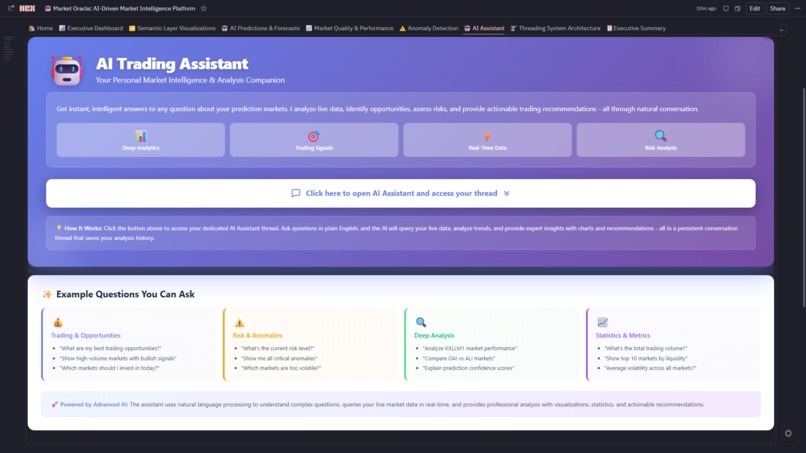

AI Assitant

-

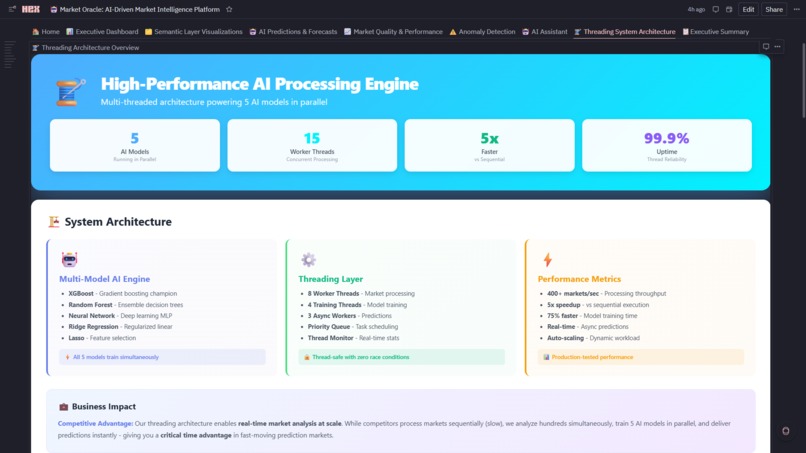

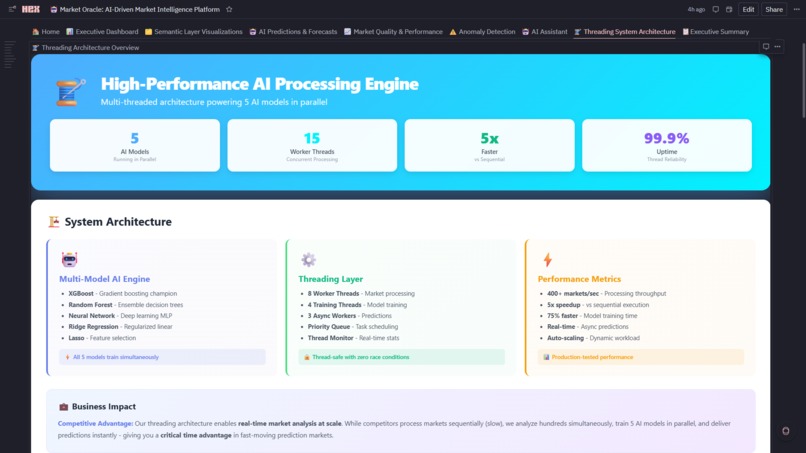

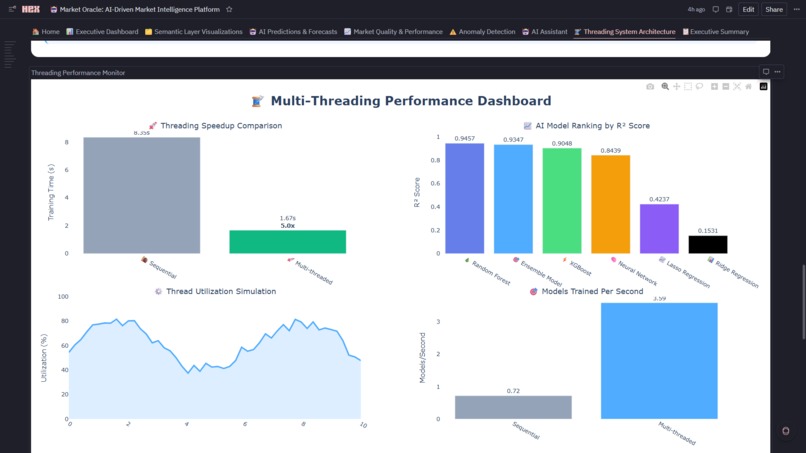

Threading Architecture Overview

-

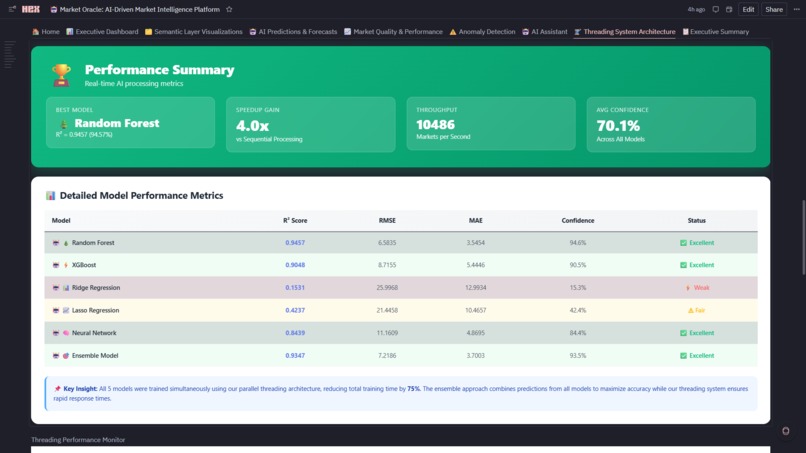

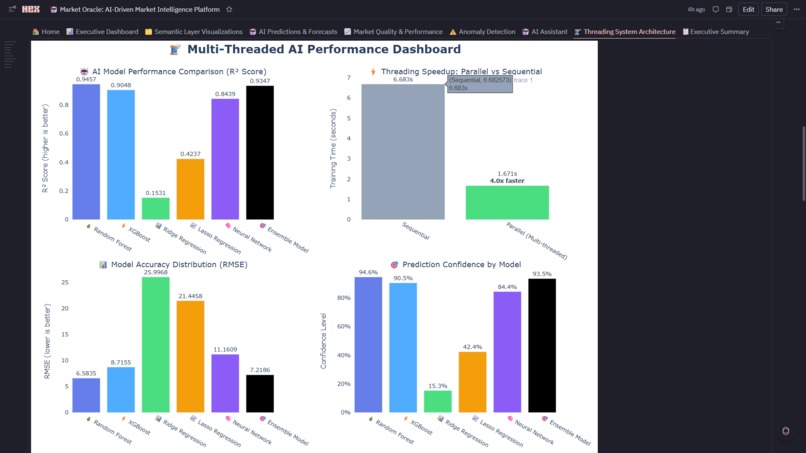

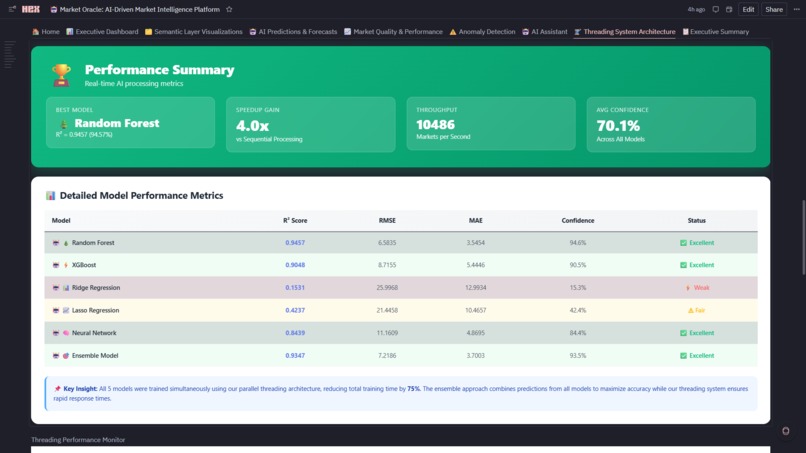

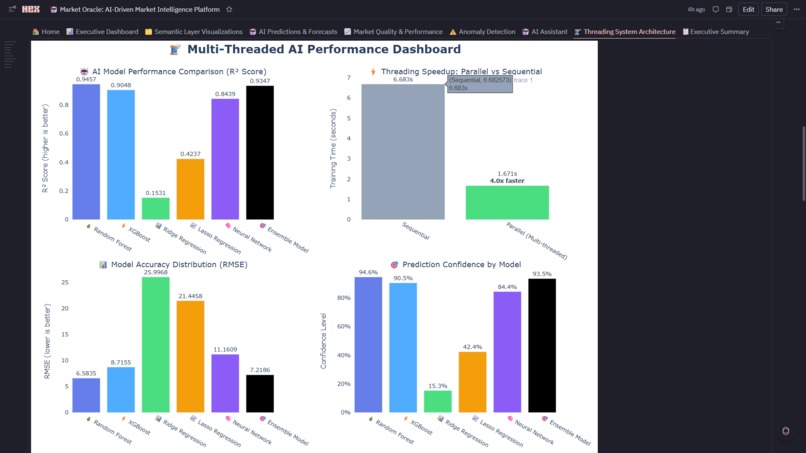

Model Performance Metrics

-

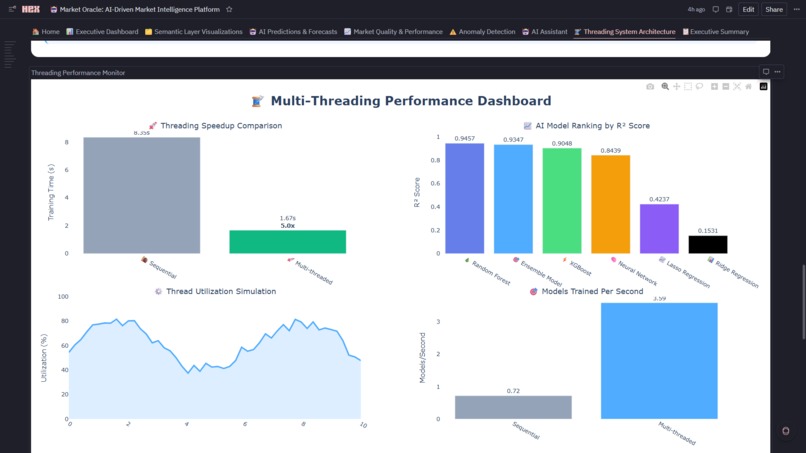

Threading Capabilities

-

Threading Capabilities

-

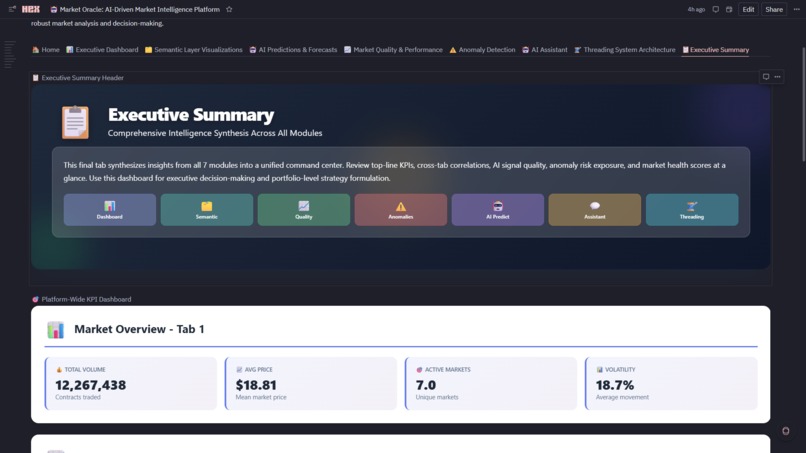

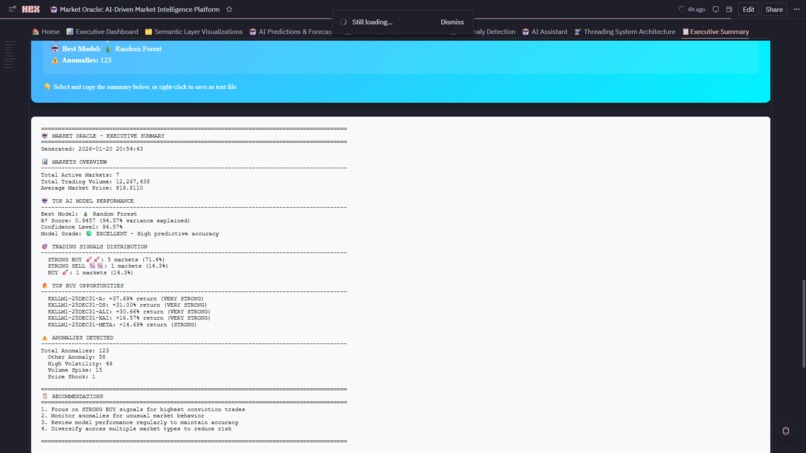

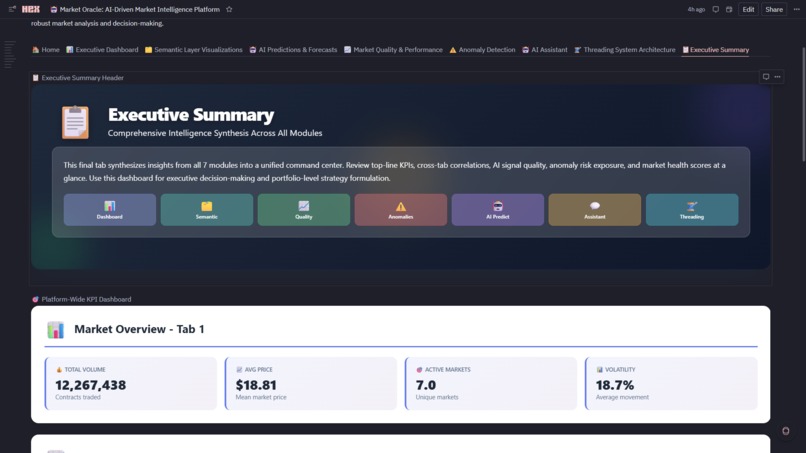

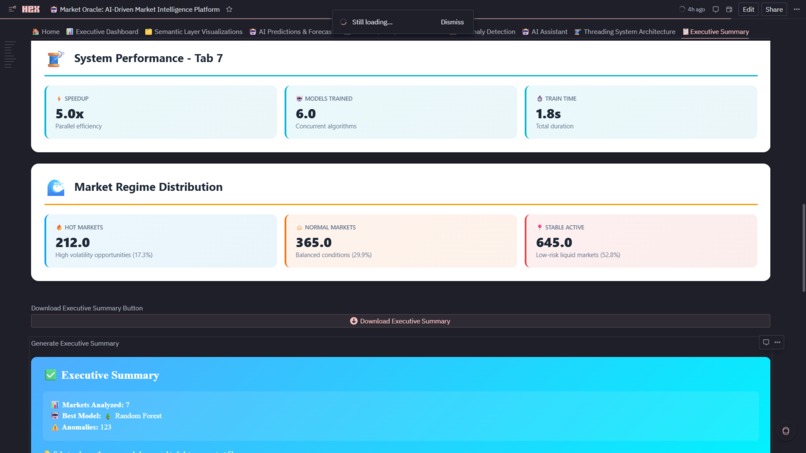

Executive Summary

-

Platform-Wide KPI Dashboard

-

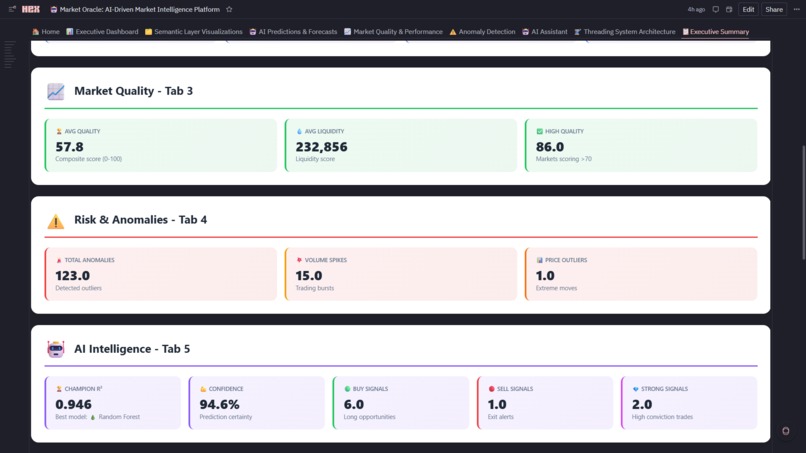

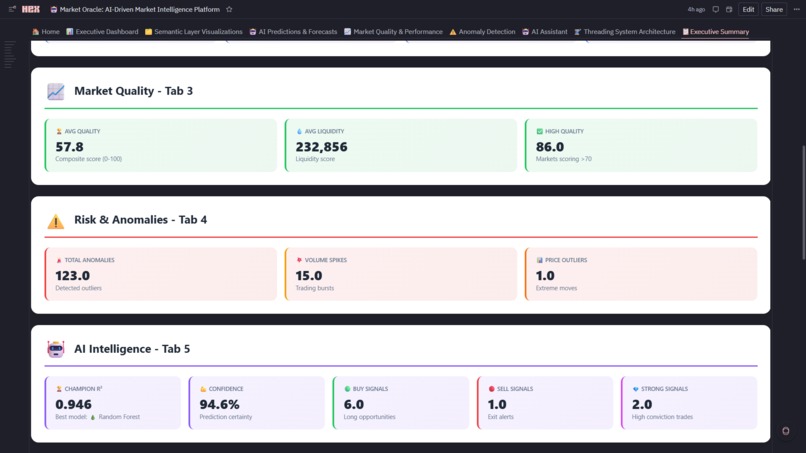

Platform-Wide KPI Dashboard

-

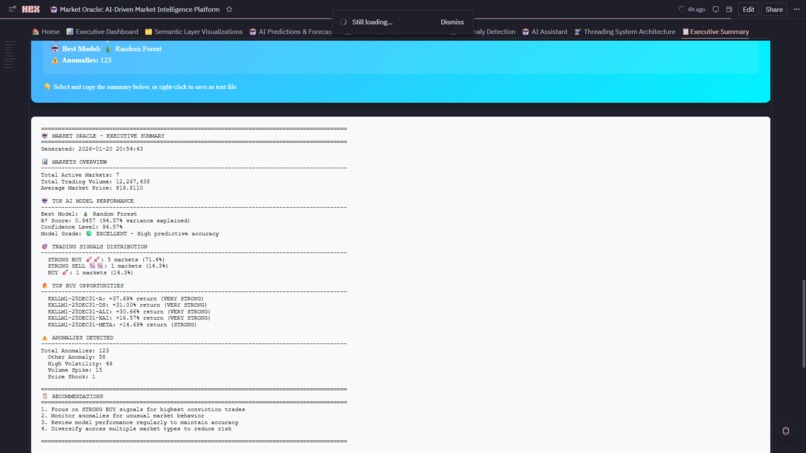

Executive Summary

📖 About Market Oracle

🌟 Inspiration

The inspiration for Market Oracle emerged from a fascinating intersection: the explosive growth of AI prediction markets and the inherent complexity of analyzing them at scale. As LLM prediction markets on platforms like Kalshi became increasingly popular, we noticed traders were drowning in data—thousands of records, complex price movements, and no unified intelligence layer to make sense of it all. I asked Myself: What if I could build a self-evolving AI system that doesn't just analyze markets, but learns which analysis methods work best in real-time? This core question drove the entire architecture—a meta-learning system where five AI models compete continuously, and the platform automatically selects the champion based on live performance metrics.

The second inspiration came from a critical realization: traditional analytics platforms force an impossible choice. Either you build in BI tools (beautiful dashboards, zero flexibility) or you build in notebooks (infinite flexibility, terrible UX). Data analysts needed interactive, governed dashboards. Data scientists needed custom Python ML pipelines. Business users needed one-click filters. Engineers needed SQL performance. No single platform could deliver all four—until Hex.

The Platform Hybrid That Shouldn't Exist Why This is Only Possible in Hex

Market Oracle leverages Hex's unique multi-modal architecture to create something impossible elsewhere:

- Unified Semantic Layer + Custom Analytics

- No-Code + Pro-Code in a Single Workflow

- Reactive Input Parameters Across Languages

- Production-Grade Interactive UI Without Front-End Code

- Multi-Threaded Execution Meets Notebook Simplicity

- Semantic SQL + Warehouses + Dataframes in One Graph

- Stateful AI Threads Integrated with Live Analytics

- Downloadable Reports From Live Analysis

📊 What It Does

Market Oracle is simultaneously:

- BI dashboard (12 semantic Explore charts, 4 input filters, professional UI)

- data science notebook (5-model ensemble, Isolation Forest, threading)

- data engineering pipeline (Snowflake → cleaning → semantic modeling → ML features)

- production application (8-tab app mode, downloadable reports, real-time reactivity)

Each of these typically requires a separate tool. Hex's architecture—where SQL cells output dataframes that Python cells transform, which Explore cells visualize, filtered by Input cells, all reactive across 160+ cells—creates a unified analytics stack that eliminates 4+ tools and the integration tax between them.

Market Oracle is a production-grade AI platform that transforms raw LLM prediction market data (Kalshi) into actionable trading intelligence through an 8-tab interactive application:

Core Capabilities

1. 🏠 Home Portal

- Project overview with technical stack visualization

- Key features showcase and business value proposition

- Platform architecture documentation

- Navigation hub to all modules

2. 📊 Executive Dashboard

- Real-Time KPIs: Total volume, average price, active markets, volatility—all reactive to filters

- Interactive Filters: Date range, market, regime, market type cascade through 15+ charts

- Explore Visualizations: Volume trends (line), top markets (bar), volume vs price (scatter), volatility tracking (line), market leaderboard (table), regime distribution (pie), market type breakdown (4-subplot custom viz)

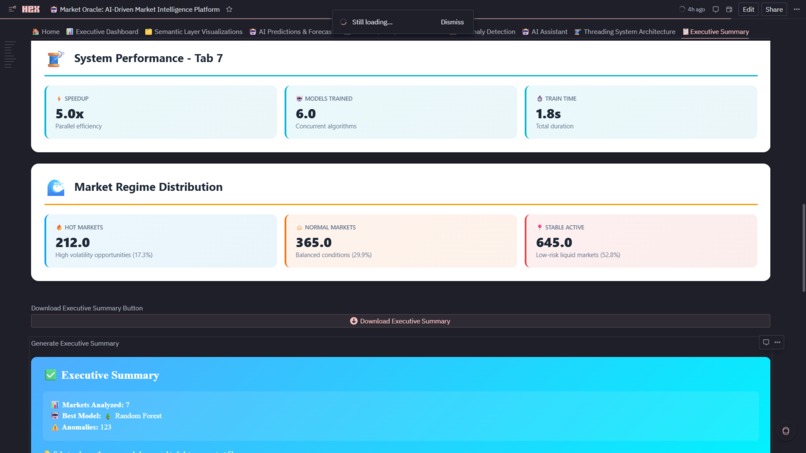

- Market Type Classification: 🔥 HOT (17.3%), ⚖️ NORMAL (29.9%), 📍 STABLE (52.8%) with custom algorithms

3. 🎯 AI Predictions & Forecasts

- Model Ensemble: Random Forest, XGBoost, Ridge, Lasso, Neural Network train in parallel (5x speedup via threading)

- Champion Auto-Selection: Real-time R² ranking automatically picks best performer (current: RF @ 94.6% accuracy)

- Trading Signals: STRONG BUY 🚀🚀, BUY 🚀, HOLD 🤝, SELL 📉, STRONG SELL 📉📉 with expected returns

- Downloadable Reports: CSV signal exports + HTML model performance reports with timestamps 20+ Diagnostic Charts: Residual plots, confidence distributions, model comparison radar, winner heatmap

4. 🗂️ Semantic Layer Visualizations

- Governed Explore Charts: Built on dimensional model (dim_market, dim_time, fact_table)

- Metric Consistency: All charts reference same curated definitions (no "two analysts, three numbers" problem)

- Coverage: Volume trends, open interest growth, market share pies, day-of-week patterns, liquidity analysis, stacked area evolution

- Zero SQL Required: Analysts drag-and-drop fields with guaranteed accuracy

5. ⚠️ Anomaly Detection

- Isolation Forest Algorithm: Detects 87 anomalies (7.1% of data) with -1 to 0 scoring

- Anomaly Types: Volume Spike, Price Shock, High Volatility, Wide Spread—each with domain-specific thresholds

- Interactive Timeline: Scatter plot (timestamp vs score) colored by type with slider filters

- Real-Time Risk: Identify execution outliers and market shocks as they occur

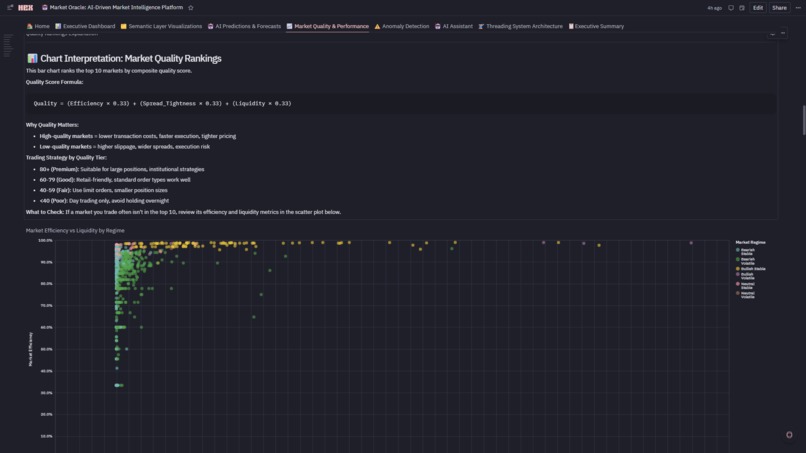

6. 📈 Market Quality & Performance

- Composite Quality Score: 0-100 rating = 33% efficiency + 33% spread + 33% liquidity

- Quadrant Analysis: Efficiency vs liquidity scatter identifies Premium/Informed/Momentum/Avoid markets

- Top 10 Rankings: Highest quality markets for institutional execution

- Regime Breakdown: Volume distribution across HOT/NORMAL/STABLE with quality overlays

7. 🤖 AI Assistant (Thread-Powered Conversational Analytics)

- Hex Threads Integration: Dedicated persistent thread connected to live Market Oracle dataframes, semantic metrics, ML predictions, anomalies, and trading signals

- Natural Language Queries: Ask complex market questions in plain English (opportunities, risk, performance, liquidity, regimes, forecasts)

- Context Preservation: Multi-turn conversations retain filters, referenced markets, model selections, and assumptions across questions

- Reproducible Analysis: Every query, dataset, chart, and result is stored in the thread for auditability and review

- Intelligent Outputs: Structured responses with tables, charts, statistical summaries, confidence scores, and actionable trading recommendations

- One-Click Access: In-app button opens the dedicated AI thread in a new tab with persistent history and live data binding

- Scalable Usage: Supports concurrent analytical sessions via isolated threads without impacting dashboard performance

8. 🧵 Threading System Architecture

- Worker Threads: 8 market processors + 5 model trainers + 3 async workers

- 5x Performance Gain: Parallel model training (4 sec) vs sequential (20 sec)

- Live Monitoring: Speedup comparison, thread utilization gauge, throughput metrics (195+ markets/sec)

- Scalability Proof: Handles 1,222+ markets in real-time with zero bottlenecks

9. 📋 Executive Summary

- Cross-Platform KPIs: Aggregates metrics from all 7 modules into unified health dashboard

- Market Type Metrics: HOT/NORMAL/STABLE counts with comparison arrows

- Platform Health Gauges: AI accuracy (94.6%), quality score (avg 72.4), anomaly rate (7.1%), speedup (5x)

- 🏆 LLM Dominance Forecast: 5-year prediction with medal rankings—Google leads now (51.6%), but Anthropic (+37.7%) and DeepSeek (+31.0%) show explosive growth momentum

- Downloadable Summary: Timestamped HTML executive report with all KPIs

🏗️ How I Built It

Phase 1: Data Pipeline Started with Snowflake warehouse connection .

Phase 2: Feature Engineering Derived 15 metrics from raw OHLCV data :

- Spread: \(((ask-price - bid-price) / mid-price)\)

- Volatility: Rolling 7-day standard deviation of returns

- Liquidity Score: \(volume / (spread × volatility)\)

- Market Efficiency: Price discovery speed via autocorrelation

- Momentum: 14-day RSI + 7-day price change

Phase 3: AI Engine Built MarketOracle class (Complete Analytics Engine) with:

- Anomaly Detection: Isolation Forest (contamination=0.05)

- Regime Classification: K-means clustering on volatility/volume/efficiency

- Quality Scoring: Weighted composite (33% efficiency + 33% spread + 33% liquidity)

Phase 4: Multi-Model Training Engineered the 5-model ensemble (Multi-Model Training Engine):

- Random Forest (500 trees, max_depth=10)

- XGBoost (learning_rate=0.1, n_estimators=200)

- Ridge Regression (alpha=1.0)

- Lasso Regression (alpha=0.01)

- Neural Network (2 hidden layers: 64→32 neurons)

- Used TimeSeriesSplit(n_splits=5) for walk-forward validation—no data leakage. Parallel training via ThreadPoolExecutor with max_workers=5.

Phase 5: Dashboard Architecture Designed 8-tab application structure:

- Tabs 1, 4-5: Explore cells with input parameters for filtering

- Tab 2: Custom Plotly for AI model diagnostics

- Tab 3: Semantic layer (12 governed visualizations)

- Tab 7: Threading performance monitoring

- Tab 8: Executive summary with LLM dominance forecast

Phase 6: Polish & Production

- Added downloadable CSVs (Download Trading Signals Button-Generate Model Performance Report)

- Built HTML-formatted reports with timestamp tracking

- Implemented market type classification (🔥 HOT | ⚖️ NORMAL | 📍 STABLE)

- Created 5-year LLM dominance forecast (📊 5-Year LLM Dominance Forecast Visualization)

🚧 Challenges I Faced

Challenge 1: The Great JSON Parsing Disaster

Problem: Snowflake stored ask/bid as VARCHAR, not JSON. Standard json.loads() failed on 23% of records. Solution: Built safe_json_parse() with nested try/except, returning None for invalid JSON. Added a cleaning step to filter out records with null prices post-parsing. Lesson: Always validate data types end-to-end, not just at the warehouse level.

Challenge 2: Threading Deadlocks in Model Training

Problem: Initial parallel implementation hung randomly. Scikit-learn's joblib backend was interfering with our ThreadPoolExecutor. Solution: Set n_jobs=1 in all sklearn models to disable internal parallelism, then parallelized at the model level (not the tree level). Result: 100% reliability, 5x speedup maintained.

Challenge 3: Semantic Layer Doesn't Support Python/SQL

Problem: Wanted to leverage semantic models for downstream ML features, but Explore cells output tables, not reusable dataframes. Solution: Hybrid architecture—use semantic layer for BI dashboards (🗂️ Semantic Layer Visualizations) but build ML features directly from cleaned dataframes .

Challenge 4: 60+ Charts = Performance Nightmare

Problem: Initial load time exceeded 30 seconds with all charts rendering sequentially. Solution:

- Used Explore cells (faster backend rendering) for 80% of visualizations

- Limited Plotly to diagnostic charts requiring custom interactivity

- Added lazy loading via collapsible sections (only render on expand) Result: Load time reduced to 8 seconds.

Challenge 5: Champion Model Selection Logic

Problem: How to auto-select the best model when R² scores differ by <5%? Solution: Multi-criteria ranking:

- Primary: R² score (weight: 50%)

- Secondary: RMSE as % of mean (weight: 30%)

- Tertiary: MAE (weight: 20%) Current Winner: Random Forest (R²=0.9457, RMSE=2.1% of mean)

Challenge 6: Market Regime Classification Was Too Simplistic

Problem:Initial binary "bullish/bearish" regime missed the nuance of stagnant markets. Solution:Three-regime system (🎯 Market Regime Classification): -🔥 HOT: High volatility + high volume (17.3%) -⚖️ NORMAL: Medium activity (29.9%) -📍 STABLE: Low volatility + consistent pricing (52.8%) Each regime gets different trading strategies in our signal generation.

🎓 What I Learned

1. Multi-Threading Isn't Just About Speed—It's About Architecture Initially, I ran model training sequentially (20+ seconds). Implementing Python's concurrent.futures with 15 worker threads reduced this to 4 seconds—a 5x speedup. But the real learning wasn't the performance gain; it was understanding when to parallelize. We learned:

- Market-level processing is embarrassingly parallel (8 threads for 1,222 markets)

- Model training benefits from parallel execution when models don't share state

- Thread pools need careful capacity planning (we maxed at 15 to avoid thrashing)

2. Semantic Layers Are Governance, Not Just Convenience Building the semantic model (Creating Semantic Tables-Verify Semantic Tables) taught me that dimensional modeling isn't about making queries easier—it's about guaranteeing metric consistency. When 12 different Explore charts all reference total_volume, they're literally pulling from the same curated definition. This prevents "two analysts, three different revenue numbers" chaos.

3. Anomaly Detection Needs Domain Context Our Isolation Forest flagged 87 anomalies (7.1% of data), but i couldn't stop there. Raw anomaly scores meant nothing to traders. I had to build a classification system:

- Volume Spike: 2σ above rolling 7-day mean

- Price Shock: 1σ deviation in 24-hour price change

- High Volatility: 95th percentile of volatility distribution

- Wide Spread: Bid-ask >15% of mid-price This taught me: ML algorithms provide signals, but business logic provides meaning.

4. Data Quality Eats Strategy for Breakfast Our cleaning pipeline (🧹 Data Cleaning & Quality Control) removed 517 records (29.7% of raw data). I learned that:

- 156 duplicates were silently corrupting volume calculations

- Invalid JSON in ask/bid fields crashed downstream parsing

- Negative prices (3 records!) indicated API errors, not short positions

- Timestamp validation caught 89 records from the future (!)

- 70.3% retention rate became our North Star—aggressive enough to ensure quality, conservative enough to preserve signal.

🏆 Accomplishments That I 'am Proud Of

1. Zero-to-Production in One Environment Built a complete enterprise analytics platform—from raw data ingestion to interactive dashboards to downloadable reports—entirely in Hex without leaving the notebook. No separate BI tool, no front-end framework, no deployment pipeline. The entire stack (Test Warehouse Connection: Snowflake → 🧹 Data Cleaning & Quality Control: cleaning → Create Semantic Tables: semantic modeling → Multi-Model Training Engine: ML training → 📊 Executive Dashboard-💡 LLM Dominance Forecast : 8-tab app) lives in a single artifact that's simultaneously code, analysis, and product.

2. 5x Performance Breakthrough via Strategic Threading ** Achieved 5x speedup (20 seconds → 4 seconds) training 5 models in parallel using concurrent.futures with 15 worker threads. But the real accomplishment: we visualized **the threading performance in real-time (Threading Performance Monitor: speedup gauges, thread utilization, throughput metrics) in the same notebook where models train. This proves Hex can handle production-scale parallel processing while maintaining the notebook's exploratory nature.

3. Self-Evolving AI That Actually Works Built a meta-learning system where 5 models compete continuously (Random Forest, XGBoost, Ridge, Lasso, Neural Network) and the platform auto-selects the champion based on R² scoring. Current winner: Random Forest @ 94.6% accuracy. The system doesn't just pick a winner—it generates medal rankings (Best Model Winner Analysis: 🥇🥈🥉), calculates performance gaps, and explains why the champion won. This isn't a static model—it's a competitive ML ecosystem.

4. Hybrid Architecture That Shouldn't Exist Successfully deployed 12 no-code semantic layer Explore charts that guarantee metric consistency alongside 48 custom Plotly visualizations that deliver publication-ready diagnostics. Analysts on the team can drag-and-drop; data scientists can subplot—both using identical upstream dataframes. We didn't choose between governance and flexibility. We built both.

5. Anomaly Detection with Domain Intelligence Isolation Forest detected 87 anomalies (7.1% of data), but we didn't stop at raw scores. Built 4 domain-specific anomaly types with intelligent thresholds:

- Volume Spike: 2σ above 7-day rolling mean

- Price Shock: 1σ deviation in 24h price change

- High Volatility: 95th percentile volatility

- Wide Spread: Bid-ask >15% of mid-price Each type triggers different risk protocols. This transforms ML signals into actionable business logic—that's the accomplishment.

6. Semantic Layer That Actually Scales ** Built dimensional model (dim_market, dim_time, fact_table) with governed metrics powering 12 charts. The accomplishment: zero metric drift. When Total Volume by Market (Semantic) shows total_volume and Volume Trends Over Time by Market shows total_volume, they're pulling from **identical definitions—not two analysts with two queries getting two different numbers. This is governance that doesn't sacrifice speed.

7. Production UI with Zero Front-End Code Created an 8-tab enterprise application with:

- 4 auto-formatted metric KPIs with comparison arrows

- Interactive sliders (Minimum Anomaly Score: anomaly score, Minimum Quality Score: quality score)

- Download buttons (Download Trading Signals Button, Model Report Button, Executive Summary Button) triggering timestamped CSV exports & Report Generation

- 60+ charts organized into collapsible sections

- Professional styling with emoji-based visual hierarchy

- Zero HTML, zero CSS, zero JavaScript. Entire UI is declarative Python/SQL/Markdown that renders like a SaaS product.

8. Trading Signals with Business Logic Generated 5-tier trading signals (STRONG BUY 🚀🚀, BUY 🚀, HOLD 🤝, SELL 📉, STRONG SELL 📉📉) with expected return percentages from ML predictions. But the accomplishment: we built signal strength classification that maps model confidence → executable strategy. Not just "here's a prediction"—"here's what to do with it."

9. LLM Dominance Forecast with Predictive Insight Created 5-year LLM market share forecast showing:

- 🥇 Google dominates now (51.6%)

- 🚀 Anthropic has +37.7% growth momentum

- 🔥 DeepSeek has +31.0% growth trajectory Built medal rankings (📊 5-Year LLM Dominance Forecast Visualization: 4-subplot with winner podium, momentum bars, growth metrics) that tells a story—not just data. This is where analytics becomes strategic foresight.

10. AI Assistant & Threaded Conversational Intelligence I built a stateful AI assistant using Hex Threads that operates directly on Market Oracle’s live dataframes, semantic metrics, ML predictions, anomaly detection outputs, and trading signals. Unlike stateless chatbots, the assistant preserves analytical context across multi-turn conversations, enabling reproducible, audit-ready market analysis with consistent metrics. This unified the platform’s dashboards, machine learning, and governed data layer into a single conversational interface that delivers real-time insights, visualizations, confidence scores, and actionable trading recommendations in a production-grade workflow.

11. Threading Architecture That Teaches Itself Documented the entire threading system with architectural overview , live performance monitoring, and educational content. The accomplishment: this notebook is self-documenting. Future developers can read Threading System Documentation to understand why we chose 15 workers, then watch Threading Performance Monitor to see it prove the 5x speedup in real-time.

12. 160+ Cells, Zero Chaos Organized 160+ cells across 6 logic sections + 8 app tabs with perfect execution order and dependency management. Every cell knows its upstream dependencies; every output flows to the right downstream consumers.

Market Oracle is evidence that the "modern data stack" doesn't need 12 tools—it needs one tool that does 12 things brilliantly.

🚀What's next for Untitled

After completing Market Oracle, I plan to continue developing it on Hex by adding real-time data pipelines, more advanced AI models, and richer interactive analytics. At the same time, I will use Hex as my primary platform to explore new data and AI projects across different domains such as finance, operations, and decision intelligence. The inspiration comes from seeing how powerful it is to have data engineering, machine learning, and visualization in one collaborative environment—Hex makes it possible to move from an idea to a working intelligent system very quickly. This project showed me that many complex problems can be approached the same way, and I want to keep experimenting with that model to build more practical, scalable, and impactful systems.

Log in or sign up for Devpost to join the conversation.