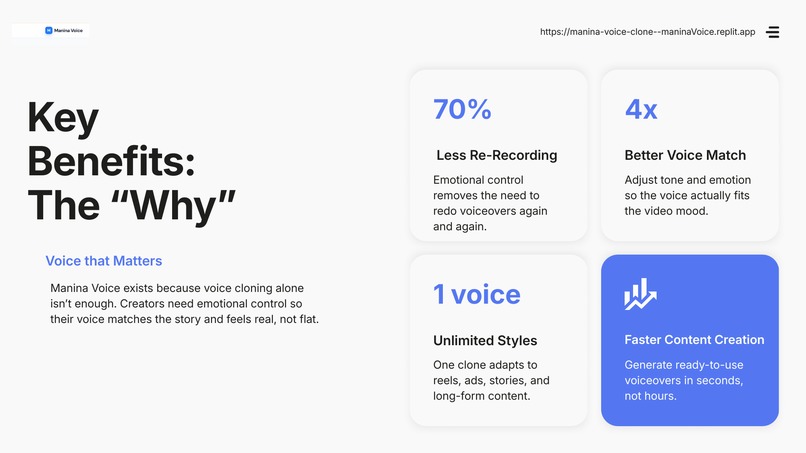

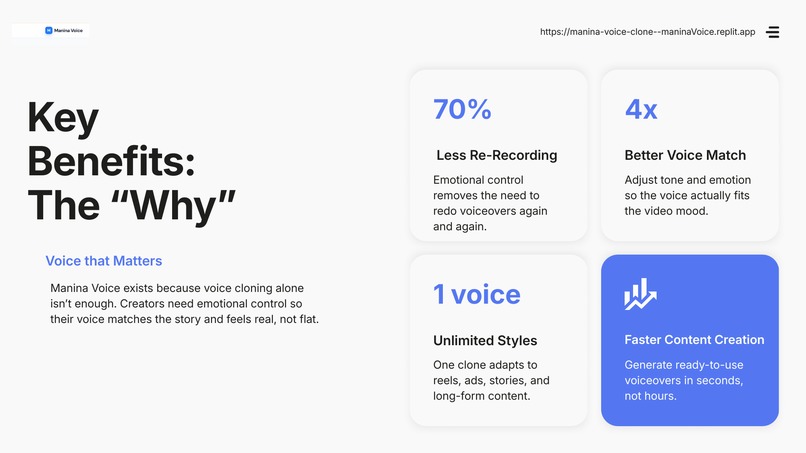

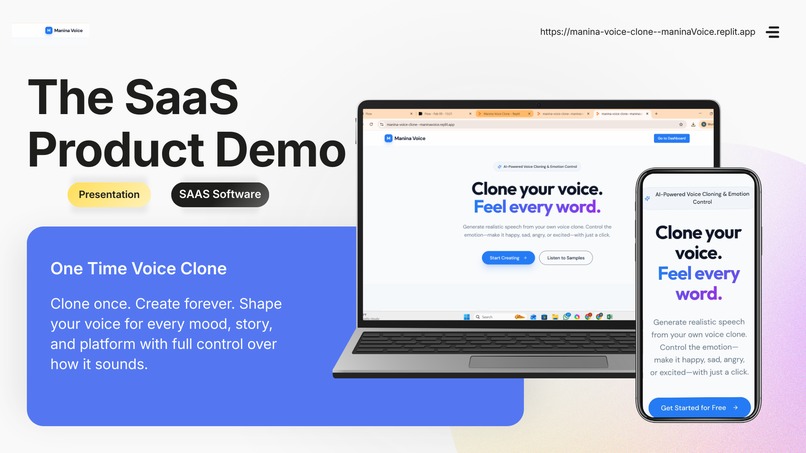

Because real stories need real emotion. Save time, avoid re-recording, and create voiceovers that actually feel human.

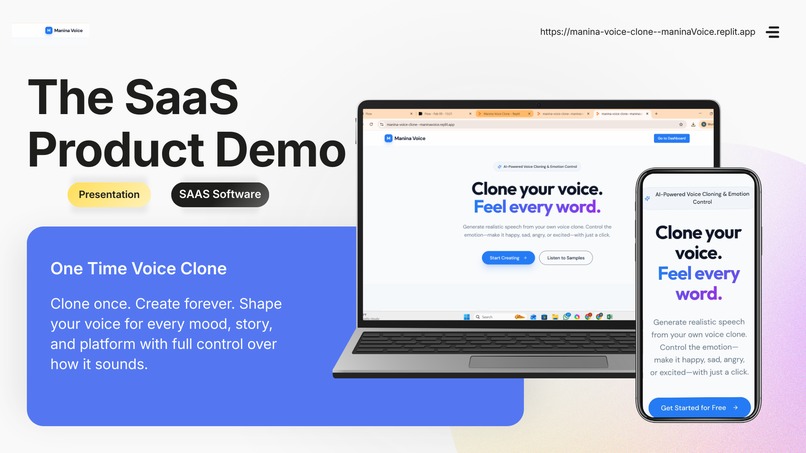

Clone your voice once and use it everywhere. Simple, fast, and built for creators who want control without complexity.

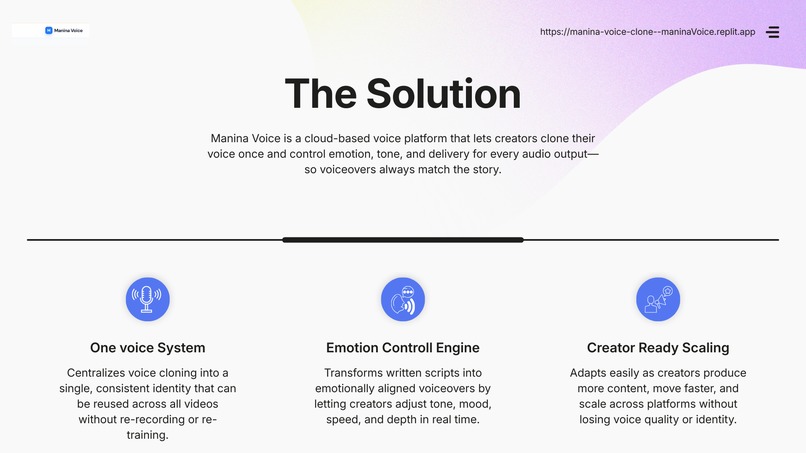

One voice, full emotional control. Manina Voice helps creators match tone, mood, and story at scale.

Inspiration

I am an AI video editor, and this idea came directly from client work. A client once gave me a sample of her voice and asked for an AI-generated video. I cloned her voice, finished the video, and delivered it. Technically, the project was done well. Her feedback changed everything. She said the video looked good, but the voice did not feel right. It had no emotion. It was not vibing with the visuals. I tried fixing this with some of the top-rated voice cloning platforms. The results were clean, but still lifeless. No real emotional control. No flexibility. That’s when I realized there was a clear market gap. Voice cloning existed, but emotional control did not. This is what inspired me to create Manina Voice.

What it does

Manina Voice allows creators to clone their voice once and customize it for every single audio generation. Instead of being locked into one emotional output, users can control tone, vibe, emotion, feeling, speed, and depth. Each voice output can be tuned to match the video, whether it’s a reel, ad, documentary, or story. One voice. Many expressions. No re-cloning.

How I built it

Manina Voice was built with a creator-first mindset. The core idea was to treat voice like a performance, not a static asset. Every generation is a fresh interpretation of the same voice, shaped by user-selected parameters. The system was designed to stay simple on the surface while offering deep control underneath, so creators can focus on storytelling instead of technical setup.
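To make the "one voice, fresh interpretation each time" idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the actual Manina Voice code: the names `ExpressionSettings` and `build_generation_request`, the `voice_123` ID, and the numeric ranges for speed and depth are all hypothetical. Only the six control names come from the list under "What it does".

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ExpressionSettings:
    """Hypothetical per-generation controls, named after the six listed controls."""
    tone: str = "neutral"
    vibe: str = "calm"
    emotion: str = "neutral"
    feeling: str = "steady"
    speed: float = 1.0  # assumed scale: 1.0 is normal pace
    depth: float = 0.5  # assumed scale: 0.0 (light) to 1.0 (deep)

    def __post_init__(self):
        # Reject values outside the assumed ranges before any audio is generated.
        if not 0.5 <= self.speed <= 2.0:
            raise ValueError("speed must be between 0.5 and 2.0")
        if not 0.0 <= self.depth <= 1.0:
            raise ValueError("depth must be between 0.0 and 1.0")


def build_generation_request(voice_id: str, script: str,
                             settings: ExpressionSettings) -> dict:
    """Pair one cloned voice with the settings chosen for a single generation."""
    return {"voice_id": voice_id, "script": script, "settings": asdict(settings)}


# The voice is cloned once (hypothetical ID) and reused for every request.
voice_id = "voice_123"

ad = build_generation_request(
    voice_id, "Big sale this weekend!",
    ExpressionSettings(tone="bright", emotion="excited", speed=1.15),
)
documentary = build_generation_request(
    voice_id, "The river ran dry in 1931.",
    ExpressionSettings(tone="warm", emotion="somber", speed=0.9, depth=0.8),
)
```

Both requests carry the same `voice_id`; only the settings differ, which is the sense in which the voice is treated as a performance and not a static asset.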

Challenges we ran into

The biggest challenge was breaking the traditional voice cloning mindset. Most tools assume a voice should sound the same every time. Designing a system that allows variation without losing identity took time and experimentation. Another challenge was usability. Giving too many controls can overwhelm users, while too few limits creativity. Finding the right balance was critical.
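One common way to balance "too many controls" against "too few" is to start from named presets and let users override individual values. The sketch below assumes that approach purely for illustration; the preset names, their values, and the `resolve_settings` helper are hypothetical and are not taken from the product.

```python
# Hypothetical presets: each one is a complete, ready-to-use set of controls.
PRESETS = {
    "reel": {"tone": "bright", "emotion": "excited", "speed": 1.15, "depth": 0.4},
    "ad": {"tone": "confident", "emotion": "upbeat", "speed": 1.05, "depth": 0.5},
    "documentary": {"tone": "warm", "emotion": "somber", "speed": 0.9, "depth": 0.8},
}


def resolve_settings(preset: str, **overrides) -> dict:
    """Start from a preset, then apply only the controls the user changed."""
    if preset not in PRESETS:
        raise KeyError(f"unknown preset: {preset}")
    unknown = set(overrides) - set(PRESETS[preset])
    if unknown:
        raise ValueError(f"unknown controls: {sorted(unknown)}")
    # Merge into a new dict so the shared preset is never mutated.
    return {**PRESETS[preset], **overrides}


# Simple on the surface: one choice is enough.
quick = resolve_settings("reel")

# Deep control underneath: adjust a single value without touching the rest.
tuned = resolve_settings("documentary", speed=0.85)
```

A beginner only ever picks a preset name, while an advanced user can adjust any single control, so the surface stays small without capping what is possible.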

Accomplishments that we're proud of

I identified and addressed a real-world problem faced by creators. Instead of building another voice cloning tool, I created a system focused on emotional accuracy and creative control. Manina Voice turns feedback like “the voice doesn’t feel right” into something creators can actually fix.

What I learned

I learned that creators don’t want more voices. They want more control. Emotion matters as much as clarity. A technically perfect voice can still fail if it does not match the mood of the content. I also learned that the best ideas often come directly from client feedback, not theory.

What's next for Manina Voice

My next focus is improving presets, performance quality, and creator workflows, and building my team. I want Manina Voice to become the go-to voice layer for AI video creation, where creators can move from script to emotionally aligned audio in seconds. The long-term goal is to help creators tell better stories using their own voice, without compromise.

Built With

- ai

- java/python

- replit

- saas
