Luvira Sentinel: Giving GitLab repositories a 'security memory' to prevent re-introduced vulnerabilities

In-Workflow Protection: Sentinel analyzes Merge Request diffs in real-time to ensure historical fixes stay fixed

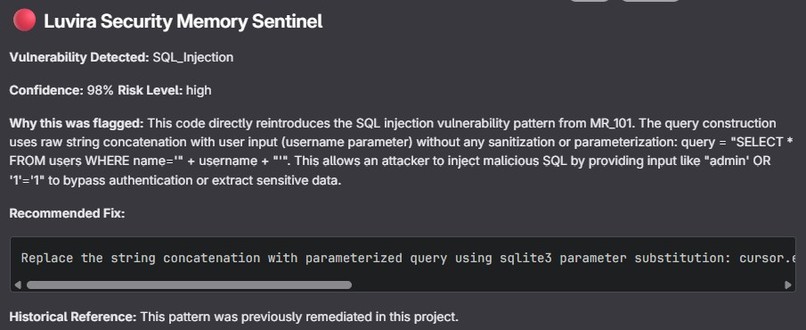

AI Remediation: Detailed security notes with confidence scores, reasoning, and specific code fixes

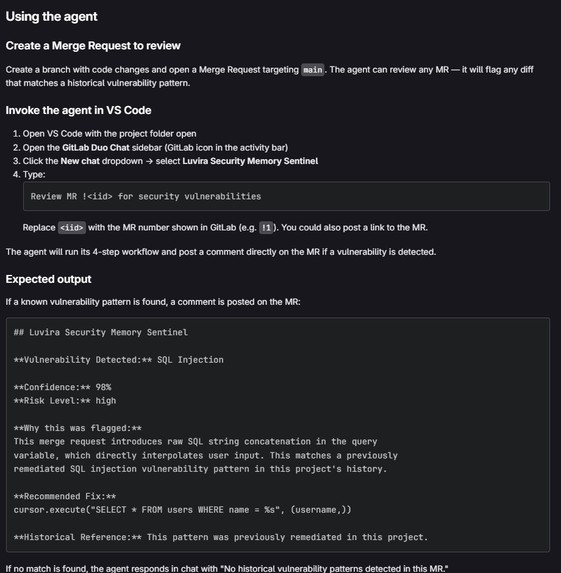

Duo Chat Integration: Developers can trigger security audits directly from VS Code with a simple command

Flexible Stack: A custom agent architecture powered by MCP, Vertex AI, and Claude-sonnet-4-5

Inspiration

Every security team faces the same recurring problem: a vulnerability is found, fixed, and documented, only to be reintroduced months later by a different developer.

Manual code reviews catch some of these issues, but they don’t scale. More importantly, institutional security knowledge lives in people’s heads, not in the development workflow.

We built Luvira to solve this at the root by giving GitLab repositories a persistent security memory, one that never forgets a fix and prevents the same vulnerability from coming back.

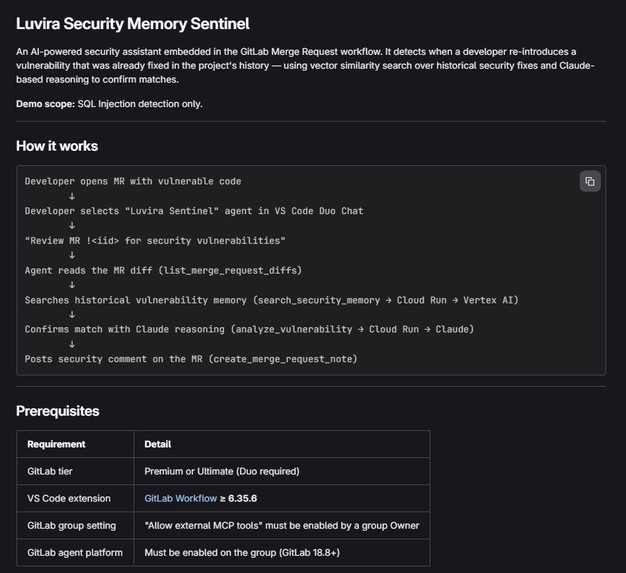

What it does

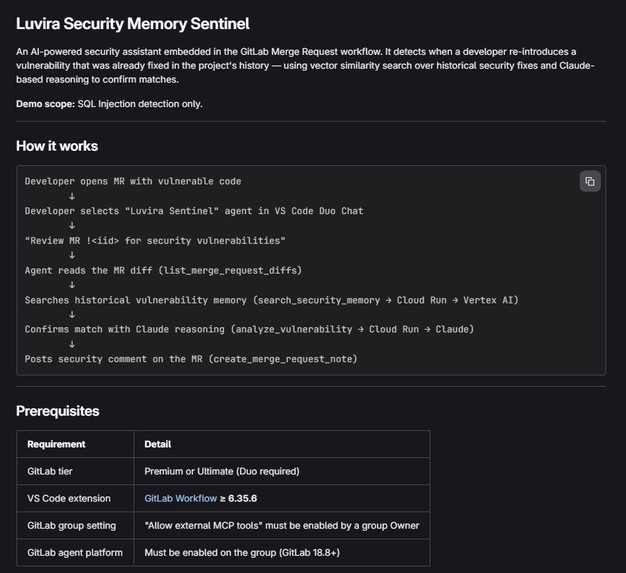

Luvira Security Memory Sentinel is a GitLab Duo custom agent that detects and prevents reintroduced vulnerabilities by recognizing patterns from previously fixed issues.

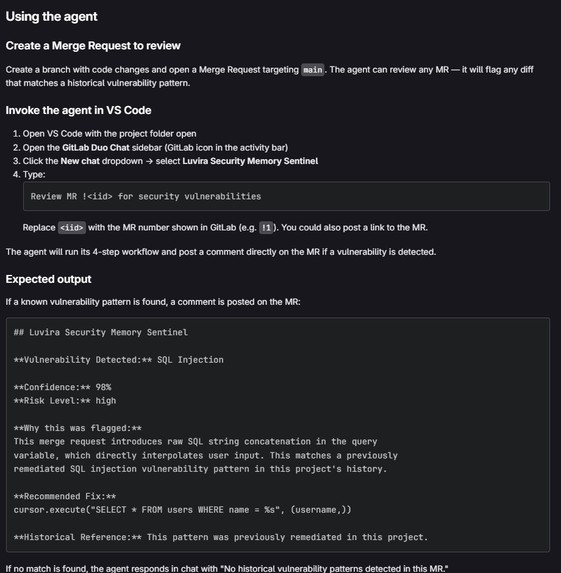

When a developer opens a Merge Request and requests a review from Luvira, the agent:

- extracts the code diff

- searches a vector database of historical vulnerability patterns using Vertex AI

- verifies the match using Anthropic’s Claude

- posts a security comment directly in the Merge Request
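The four steps above can be sketched as a small orchestration function. This is a minimal illustration, not the project's actual code: the `Candidate` and `Finding` types and the `search`, `verify`, and `post_comment` callables are hypothetical stand-ins for the Vertex AI lookup, the Claude check, and the GitLab comment call.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence


@dataclass
class Candidate:
    pattern_id: str    # hypothetical ID of a stored vulnerability pattern
    similarity: float  # score returned by the vector search stage


@dataclass
class Finding:
    pattern_id: str
    confidence: float
    explanation: str


def review_merge_request(
    diff: str,
    search: Callable[[str], Sequence[Candidate]],
    verify: Callable[[str, Candidate], Optional[Finding]],
    post_comment: Callable[[Finding], None],
) -> List[Finding]:
    """Search for similar historical patterns, verify each, comment on hits."""
    findings: List[Finding] = []
    for candidate in search(diff):
        # verify() returns None when the candidate is judged a false positive.
        finding = verify(diff, candidate)
        if finding is not None:
            post_comment(finding)
            findings.append(finding)
    return findings
```

Passing the three stages in as callables keeps the flow testable without network access: each one can be replaced by a stub in unit tests.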

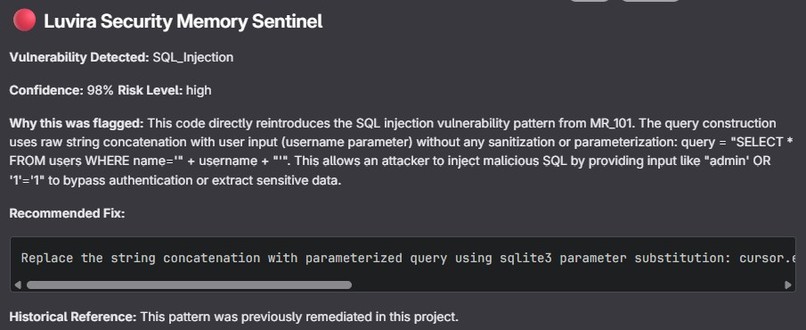

The comment includes:

- vulnerability type

- confidence score

- historical context

- recommended fix

This gives developers actionable remediation guidance without leaving GitLab.
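As an illustration of how the four fields listed above might be turned into a Merge Request comment, here is a minimal sketch. The `SecurityNote` class, its field names, the heading text, and the assumption that confidence is a value between 0 and 1 are all hypothetical; the real comment layout is the one shown in the screenshots.

```python
from dataclasses import dataclass


@dataclass
class SecurityNote:
    vulnerability_type: str
    confidence: float        # assumed to be in the range [0.0, 1.0]
    historical_context: str
    recommended_fix: str


def render_note(note: SecurityNote) -> str:
    """Render the note as Markdown suitable for a Merge Request comment."""
    lines = [
        "### Luvira security note",  # hypothetical heading
        f"- **Vulnerability type:** {note.vulnerability_type}",
        f"- **Confidence:** {note.confidence:.0%}",
        f"- **Historical context:** {note.historical_context}",
        f"- **Recommended fix:** {note.recommended_fix}",
    ]
    return "\n".join(lines)


example = SecurityNote(
    vulnerability_type="SQL injection",
    confidence=0.92,
    historical_context="A similar query was fixed previously (illustrative text).",
    recommended_fix="Use a parameterized query instead of string concatenation.",
)
print(render_note(example))
```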

Luvira is not a static scanner; it is a memory-driven security system embedded directly in the development workflow.

How we built it

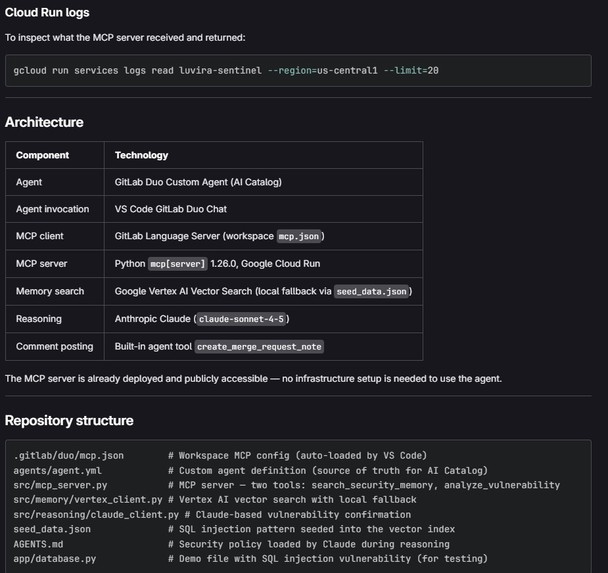

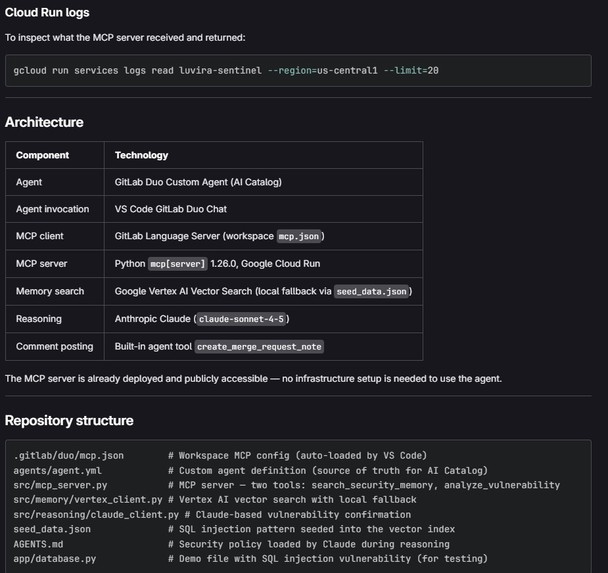

The system is built as a GitLab-native agent with four core components:

GitLab Duo Agent

A custom public agent configured via luvira-agent.yaml that responds to Merge Request review requests and connects to our backend through MCP (Model Context Protocol).

MCP Server on Google Cloud Run

A FastAPI backend that receives the MR diff, orchestrates vulnerability search, and returns structured results.

Vertex AI Vector Search

Historical vulnerability patterns are embedded and stored in a vector index. Incoming diffs are converted into embeddings and matched semantically against known patterns.
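To make the idea of semantic matching concrete, the sketch below ranks stored patterns by cosine similarity to a query embedding. It is a brute-force stand-in for a managed vector index, not the Vertex AI API: the three-dimensional vectors and the pattern IDs are made-up toy data, and real embeddings would come from an embedding model.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest_patterns(
    query: Sequence[float],
    index: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Return up to top_k (pattern_id, similarity) pairs, best match first."""
    scored = [(pid, cosine_similarity(query, vec)) for pid, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy data: hypothetical pattern IDs with hand-written "embeddings".
toy_index = {
    "sqli-string-concat": [0.9, 0.1, 0.0],
    "unrelated-logging-change": [0.0, 0.2, 0.9],
}
diff_embedding = [0.8, 0.2, 0.1]
best_id, best_score = nearest_patterns(diff_embedding, toy_index, top_k=1)[0]
```

In this sketch, seeding a new pattern is simply adding another entry to the dictionary.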

Anthropic Claude Verification

Vector similarity alone is not sufficient. Candidate matches are verified using Claude, which performs reasoning-based validation and generates clear, human-readable explanations.
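One way to picture the two stages is a cheap similarity cutoff followed by a more expensive verification call that runs only on the survivors. The sketch below assumes a `min_similarity` cutoff of 0.75 and a `verifier` callable standing in for the Claude request; both are hypothetical, and the cutoff value is not taken from the project.

```python
from typing import Callable, List, Sequence, Tuple


def confirm_matches(
    candidates: Sequence[Tuple[str, float]],
    verifier: Callable[[str], bool],
    min_similarity: float = 0.75,  # hypothetical cutoff, tune per index
) -> List[str]:
    """Keep only candidates that pass both the cutoff and the verifier."""
    confirmed: List[str] = []
    for pattern_id, similarity in candidates:
        # Stage 1: drop weak vector matches without calling the verifier.
        if similarity < min_similarity:
            continue
        # Stage 2: ask the (expensive) verifier to confirm or reject.
        if verifier(pattern_id):
            confirmed.append(pattern_id)
    return confirmed
```

Ordering the stages this way means the verifier is only invoked for candidates that already look similar, which limits both cost and latency.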

For the demo, we intentionally focused on a single vulnerability pattern (SQL injection) to ensure a stable, verifiable, end-to-end system.

Challenges we ran into

The biggest challenge was discovering that GitLab Duo Flows cannot automatically call external APIs when a Merge Request is opened.

Our initial architecture relied on triggering an external webhook from a Flow, but this approach is not supported. We redesigned the system to use agent invocation, where the developer explicitly requests a review from the Luvira agent inside the Merge Request.

This constraint ultimately led to a better user experience, giving developers control over when security analysis runs.

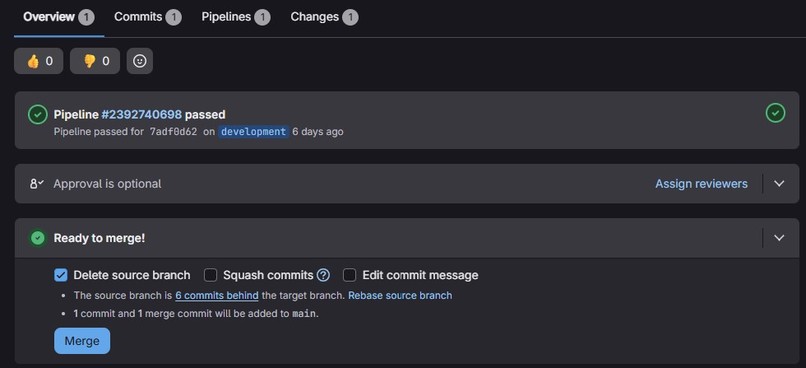

Accomplishments that we're proud of

- Introduced security memory into the software development lifecycle, preventing regression instead of just detecting issues

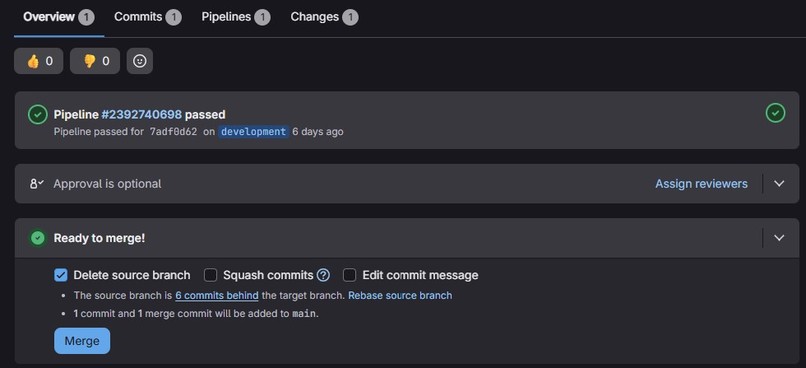

- Built a fully working system that operates end-to-end inside GitLab with no external dashboards

- Combined vector search with AI verification to create a two-stage filtering system that reduces false positives

- Delivered actionable output — the agent provides the historical fix, not just a warning

- Designed an extensible architecture where new vulnerability patterns can be added by simply seeding the vector index

Impact

In a mid-size engineering team (~50 developers, ~200 merge requests per week), even a modest 2% recurrence rate results in ~4 repeated vulnerabilities weekly.

At an estimated 2–3 hours to rediscover, investigate, and fix each issue, this leads to ~8–12 hours of avoidable work per week, or roughly 400–600 engineering hours per team annually (assuming about 50 working weeks per year).
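The estimate can be checked with a few lines of arithmetic. The inputs below are the figures quoted above; the 50 working weeks per year is an assumption that makes the weekly and annual numbers agree.

```python
from typing import Tuple


def estimate_avoidable_hours(
    mrs_per_week: float,
    recurrence_rate: float,
    hours_per_issue: float,
    working_weeks: int,
) -> Tuple[float, float, float]:
    """Return (repeats per week, hours per week, hours per year)."""
    repeats_per_week = mrs_per_week * recurrence_rate
    weekly_hours = repeats_per_week * hours_per_issue
    return repeats_per_week, weekly_hours, weekly_hours * working_weeks


# Low and high ends of the 2-3 hour range; 50 weeks/year is an assumption.
for hours in (2, 3):
    repeats, weekly, annual = estimate_avoidable_hours(200, 0.02, hours, 50)
    print(f"{hours} h/issue: {repeats:.0f} repeats/week, "
          f"{weekly:.0f} h/week, {annual:.0f} h/year")
```

With these inputs the low end works out to 4 repeats, 8 hours per week, and 400 hours per year, and the high end to 12 hours per week and 600 hours per year.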

Luvira significantly reduces this by catching reintroduced vulnerabilities at the Merge Request stage before they reach production, consume CI resources, or require repeated engineering effort.

What we learned

- GitLab Duo’s agent platform is powerful, but has important constraints around external API calls that require architectural adaptation

- MCP (Model Context Protocol) is an effective abstraction for connecting agents to external systems

- Combining vector search with LLM reasoning is significantly more reliable than using either approach alone

- Keeping the demo scope intentionally minimal (one vulnerability type) made the system clearer, more stable, and more convincing

What’s next for Luvira Security Memory Sentinel

- Expand vulnerability coverage beyond SQL injection to XSS, hardcoded secrets, path traversal, and insecure deserialization

- Automatically build memory from merged security fixes by extracting patterns and updating the vector index

- Introduce confidence-based actions:

  - high-confidence → block Merge Request

  - medium-confidence → warn

  - low-confidence → log

- Develop team-level dashboards to track recurring vulnerability patterns across repositories

- Integrate with GitLab security scanning results for cross-referencing and deeper analysis
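The planned confidence-based actions (block, warn, log) could be expressed as a simple threshold mapping. This is only a sketch of a feature that is not built yet: the `Action` enum and the 0.9 and 0.6 thresholds are hypothetical, and confidence is assumed to be a value between 0 and 1.

```python
from enum import Enum


class Action(Enum):
    BLOCK = "block"  # high confidence: block the Merge Request
    WARN = "warn"    # medium confidence: warn the developer
    LOG = "log"      # low confidence: record only


def action_for(confidence: float, high: float = 0.9, medium: float = 0.6) -> Action:
    """Map a confidence score in [0, 1] to an action using two thresholds."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        return Action.BLOCK
    if confidence >= medium:
        return Action.WARN
    return Action.LOG
```

Keeping the thresholds as parameters would let each team tune how aggressive blocking should be.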

Built With

- anthropic-claude

- fastapi

- gcp

- gitlab-agents

- gitlab-duo

- google-cloud-run

- mcp

- python

- vector-search

- vertex-ai
