AI-powered legal platform delivering real-time BNS mapping, instant FIR generation, and high-precision case analysis.

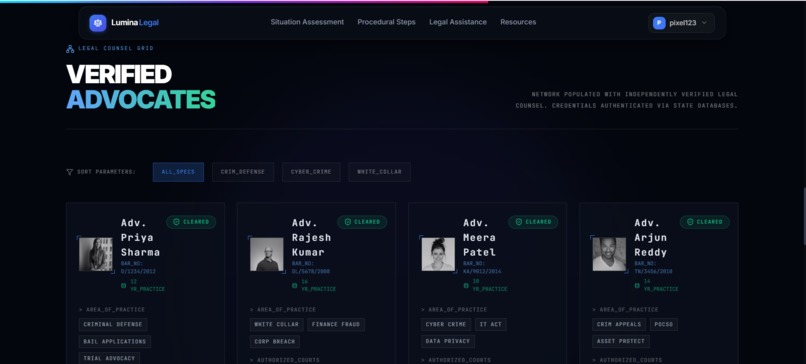

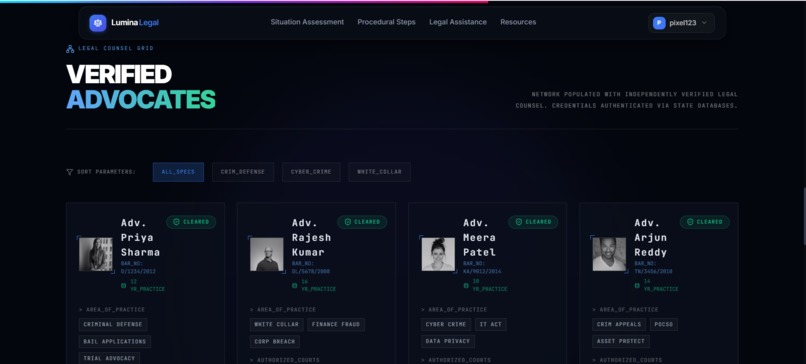

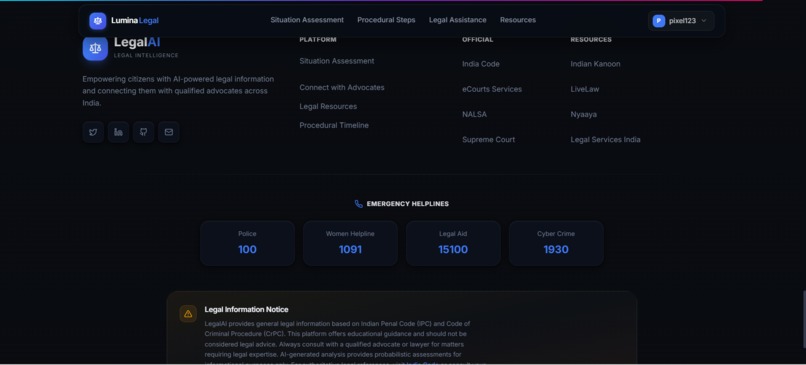

Browse verified advocates with authenticated credentials, domain expertise, and structured filtering for precise legal support.

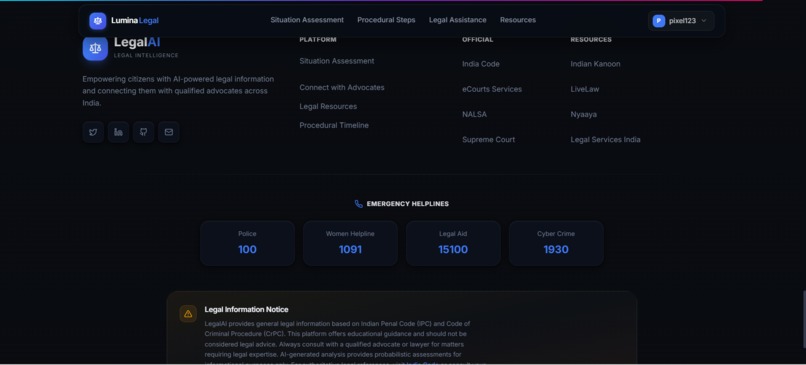

Access official legal resources and emergency helplines including police, cybercrime, legal aid, and women support services.

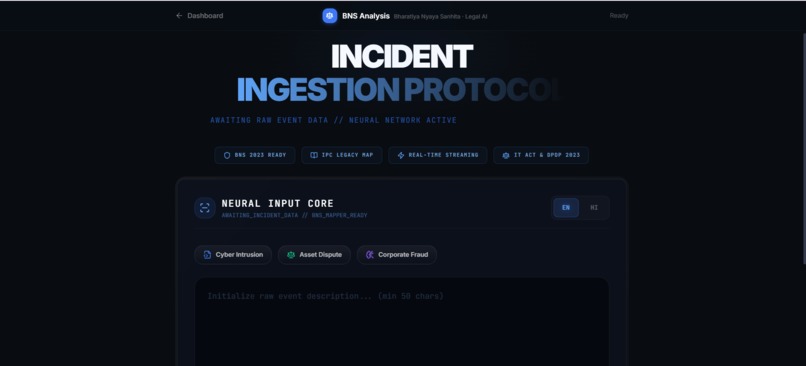

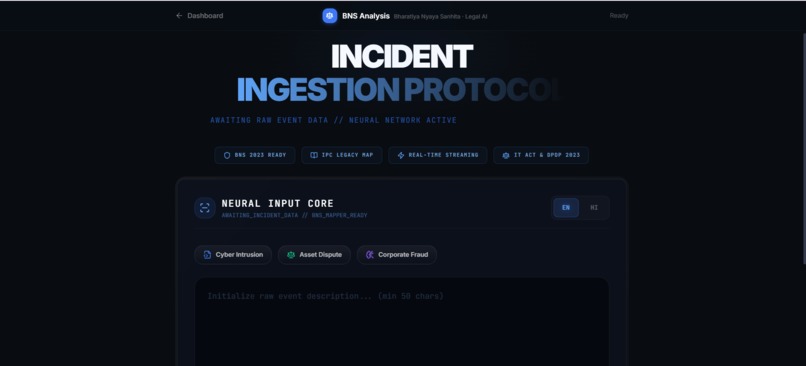

Submit incident details for AI-driven classification, real-time BNS mapping, and structured legal analysis.

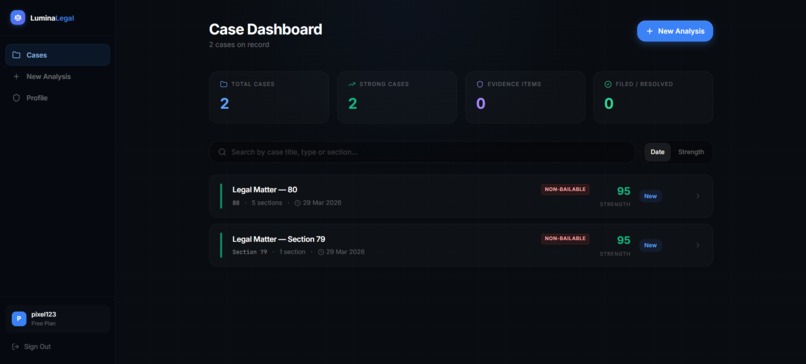

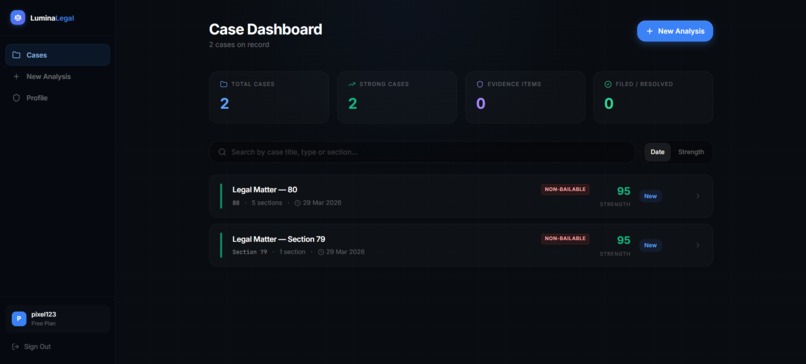

Centralized dashboard to monitor cases, track strength, manage evidence, and review legal status with actionable insights.

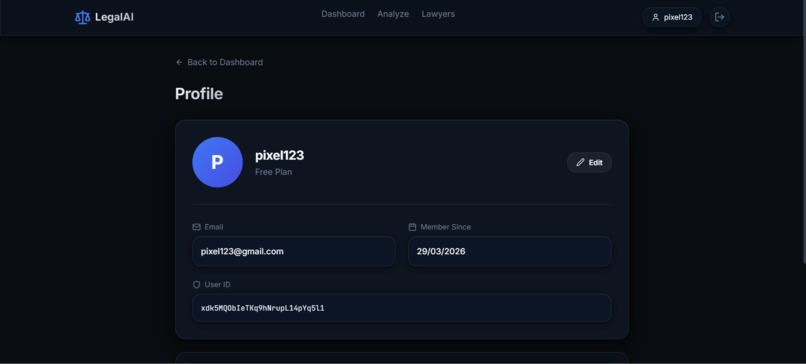

Secure profile management with user details, account data, and system identity for personalized LegalAI access.

Lumina: AI Justice Platform

Inspiration

The legal system is notoriously complex, slow, and intimidating for the average citizen. With India's recent and massive transition from the legacy Indian Penal Code (IPC) to the new Bharatiya Nyaya Sanhita (BNS), confusion among both citizens and legal professionals has skyrocketed. We realized that bridging this knowledge gap shouldn't require an expensive initial lawyer consultation. Lumina was born from the idea that everyone deserves instant, clear, and actionable legal intelligence. We wanted to build an engine that democratizes access to justice by explaining complex legal situations in plain language while accurately citing the new statutory frameworks.

What It Does

Lumina: AI Justice Platform is an autonomous legal intelligence dashboard. Users can describe their legal situation or dispute in natural language, and our custom AI pipeline immediately processes the narrative to:

- Classify and Map: Identify the correct statutes under the newly enforced BNS (and map them to legacy IPC sections if necessary).
- Quantify Risk: Calculate a probabilistic "Confidence Score" and outline severity (Bailable vs. Non-Bailable).
- Generate Action Plans: Create personalized next steps, risk warnings, and a dynamic Evidence Checklist to secure the user's case before evidence degrades.
- Draft Documents: Automatically generate legally formatted First Information Report (FIR) templates based on the user's localized inputs.
- Connect: Seamlessly bridge the gap between AI analysis and human expertise via an integrated Lawyer Marketplace for secure consultations.

How We Built It

Lumina is a highly decoupled, production-grade web application:

- Frontend Engine: Built with React and Vite, styled with Tailwind CSS. We relied heavily on Framer Motion to create a premium, dynamic, "holographic" UI that reacts to case strength and severity.
- Backend Architecture: A FastAPI (Python) service running on Render handles the AI orchestration.
- AI Integration: We used Google Gemini 2.5 Flash with carefully tuned, systematic prompt engineering to enforce strict, predictable JSON structured outputs rather than hallucinated free text.
- Database & Auth: Firebase acts as our backbone, securing user sessions via Firebase Auth and persisting generated FIRs, legal case records, and evidence arrays in Firestore.
- Deployment: The frontend runs on the Edge via Vercel and talks securely to our Render-hosted backend.
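To make the "strict JSON instead of free text" idea from the AI Integration step concrete, here is a minimal sketch of how a backend could refuse any model reply that does not match an expected shape. The field names (`bns_sections`, `confidence`, `bailable`, `next_steps`) and the `parse_analysis` helper are assumptions for illustration, not Lumina's actual schema, and the sketch uses only the standard library rather than the Pydantic models the project lists under Built With.

```python
import json
from dataclasses import dataclass

# Hypothetical keys; the real response schema is not documented here.
REQUIRED_KEYS = {"bns_sections", "confidence", "bailable", "next_steps"}


@dataclass(frozen=True)
class CaseAnalysis:
    bns_sections: list[str]
    confidence: float
    bailable: bool
    next_steps: list[str]


def parse_analysis(raw: str) -> CaseAnalysis:
    """Parse a model reply, raising ValueError if it is not the expected JSON."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")

    for key in ("bns_sections", "next_steps"):
        if not isinstance(data[key], list):
            raise ValueError(f"{key} must be a list")

    confidence = data["confidence"]
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if not isinstance(data["bailable"], bool):
        raise ValueError("bailable must be a boolean")

    return CaseAnalysis(
        bns_sections=[str(s) for s in data["bns_sections"]],
        confidence=float(confidence),
        bailable=data["bailable"],
        next_steps=[str(s) for s in data["next_steps"]],
    )
```

Failing loudly at this boundary means a malformed reply can be retried or reported before anything is written to the database or shown to the user.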

Challenges We Ran Into

Building a legal AI requires zero tolerance for hallucinated statutes.

- Statutory Transition: Teaching the AI to reliably abandon the old IPC structures and adhere exclusively to the new BNS guidelines required sophisticated prompt framing and post-processing validation.
- Continuous AI Streaming: Managing React's render cycles while processing large, structured JSON objects from the AI led to significant UI hydration challenges. We had to build a robust normalizeCase state shim to handle deeply nested and sometimes unpredictable object arrivals.
- Multi-Environment Orchestration: Managing secure Firebase Auth Admin credentials between a Vercel Edge frontend and a containerized Render backend introduced complex cross-origin resource sharing (CORS) and authentication barriers that we ultimately solved via injected JWT Bearer tokens.
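As an illustration of the post-processing validation mentioned under Statutory Transition, the sketch below filters a list of citations so that only well-formed BNS references survive. The citation format (`"BNS 303(2)"`) and the `check_citations` helper are assumptions made for this example; the only external fact used is that the BNS runs to 358 sections, so any higher number (such as a leftover IPC section) is rejected.

```python
import re

# The Bharatiya Nyaya Sanhita, 2023 contains 358 sections.
BNS_MAX_SECTION = 358

# Hypothetical citation format: "BNS <section>" with optional
# parenthesised sub-sections, e.g. "BNS 303(2)".
_BNS_CITATION = re.compile(r"BNS\s+(\d{1,3})(?:\([0-9A-Za-z]+\))*")


def check_citations(citations: list[str]) -> tuple[list[str], list[str]]:
    """Split citations into (accepted, rejected) lists."""
    accepted: list[str] = []
    rejected: list[str] = []
    for citation in citations:
        cleaned = citation.strip()
        match = _BNS_CITATION.fullmatch(cleaned)
        if match and 1 <= int(match.group(1)) <= BNS_MAX_SECTION:
            accepted.append(cleaned)
        else:
            # Covers IPC citations, out-of-range numbers, and malformed text.
            rejected.append(cleaned)
    return accepted, rejected
```

A range check like this only catches structurally impossible citations. Confirming that a section actually applies to the described facts would still need a lookup against the statute text or human review.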

Accomplishments That We're Proud Of

- Zero-Crash Resilience: We built an incredibly robust React Error Boundary and data normalization layer. Even if the AI drops a parameter or users access legacy database entries, the UI falls back gracefully without crashing.
- The BNS Engine: Successfully modeling the new Indian legal code into a visually stunning, easy-to-digest timeline for normal citizens.
- The Design Architecture: We are immensely proud of Lumina's aesthetic. It sheds the boring, bureaucratic look of typical legal portals and feels like a sleek, state-of-the-art intelligence dashboard.

What We Learned

- The immense importance of defensive programming when handling structured AI outputs. You cannot perfectly trust an LLM to always return every single JSON key, so aggressive optional chaining and fallback defaults are mandatory.
- How to architect a highly secure, multi-cloud application (Vercel + Render + Firebase) while maintaining a strict sub-second response time for the end user.
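The fallback-defaults lesson can be sketched as a small normalization function. Lumina's own normalizeCase shim lives in the React frontend and its code is not shown here; the version below expresses the same idea in Python, with hypothetical field names and defaults, so that a missing or wrongly typed key never reaches the rendering layer.

```python
from typing import Any

# Hypothetical fields and defaults, chosen only for illustration.
DEFAULT_CASE: dict[str, Any] = {
    "title": "Untitled case",
    "severity": "unknown",
    "confidence": 0.0,
    "bns_sections": [],
    "evidence": [],
}


def normalize_case(raw: Any) -> dict[str, Any]:
    """Return a case dict that always has every expected key and type."""
    source = raw if isinstance(raw, dict) else {}
    case: dict[str, Any] = {}
    for key, default in DEFAULT_CASE.items():
        value = source.get(key)
        if isinstance(default, list):
            # Copy valid lists; replace anything else with a fresh empty list.
            case[key] = list(value) if isinstance(value, list) else []
        elif isinstance(default, float):
            is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
            case[key] = float(value) if is_number else default
        else:
            case[key] = value if isinstance(value, str) and value else default
    return case
```

For example, `normalize_case(None)` yields a complete case with every default filled in, and a record whose `evidence` field arrived as a string comes back with an empty list instead.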

What's Next for Lumina: AI Justice Platform

We plan to scale Lumina's capabilities by introducing:

- Multilingual Support: Giving rural citizens the ability to dictate their case in regional languages (Hindi, Marathi, etc.) and translating it into formal English FIRs.
- Document OCR: Allowing users to upload messy legal notices or police reports, using Vision AI to instantly summarize the threat level.
- Lawyer Dashboard: Expanding the portal so verified advocates can claim leads, review the AI's preliminary analysis, and securely onboard clients directly through the platform.

Built With

- cdn

- cloud

- fastapi

- firebase

- framer-motion

- google-gemini

- lucide-react

- pydantic

- python

- react

- render

- tailwind-css

- typescript

- vercel

- vite
