-

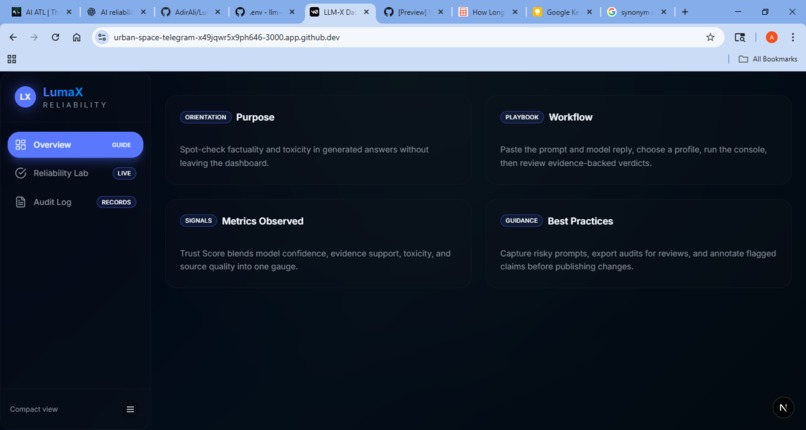

Front page with a description of the app

-

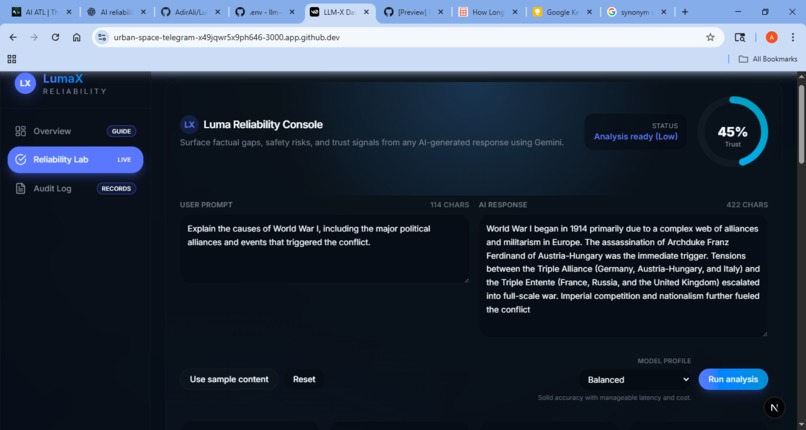

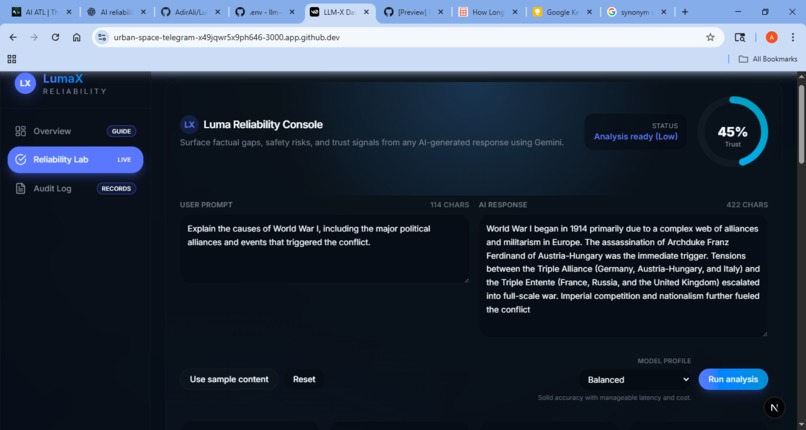

Example of a prompt and response being analyzed

-

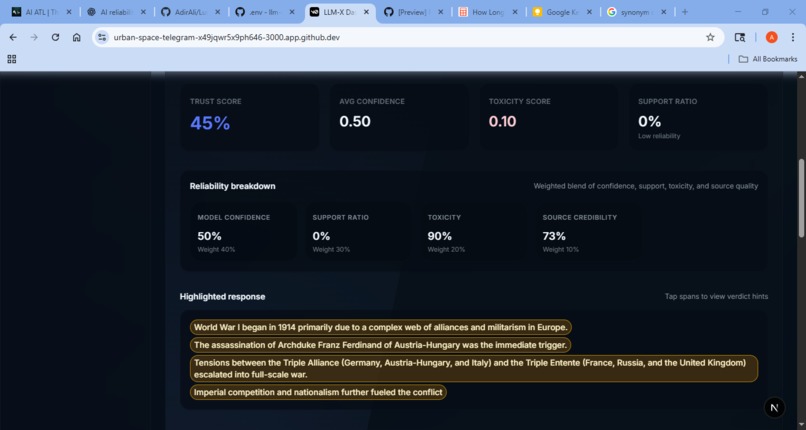

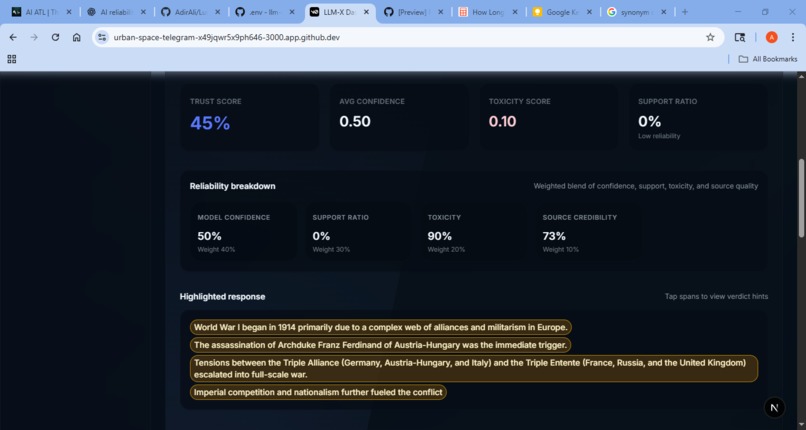

Factuality metric based on the model's analysis

-

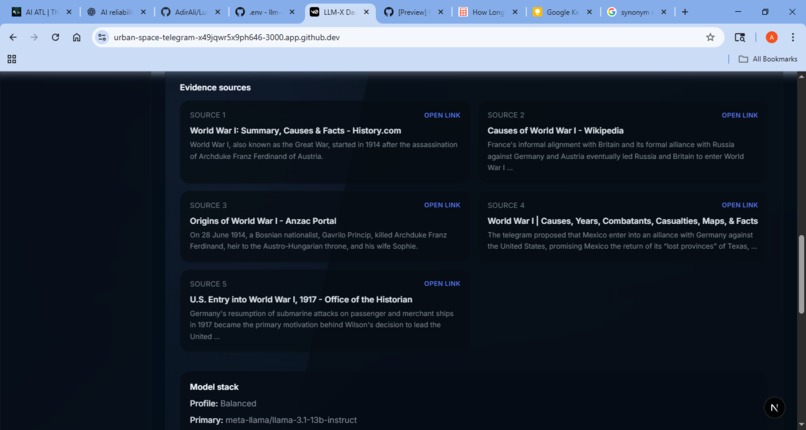

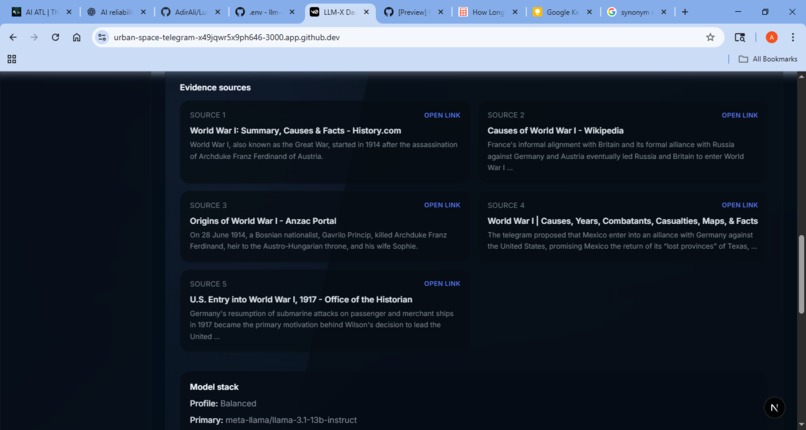

Cited sources used to support or refute the factuality of a claim

-

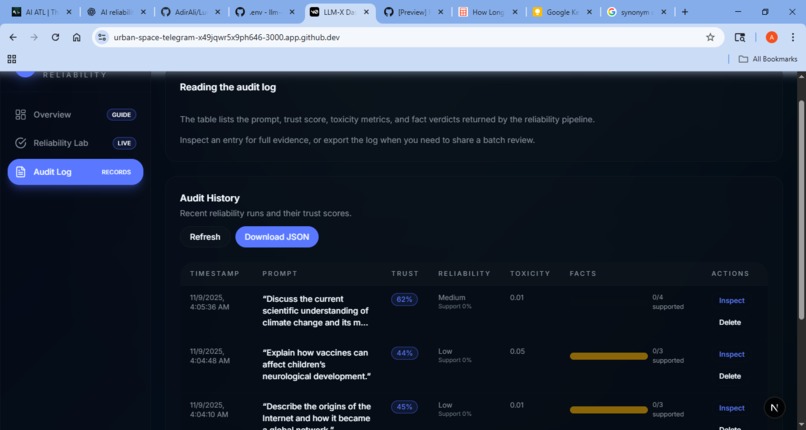

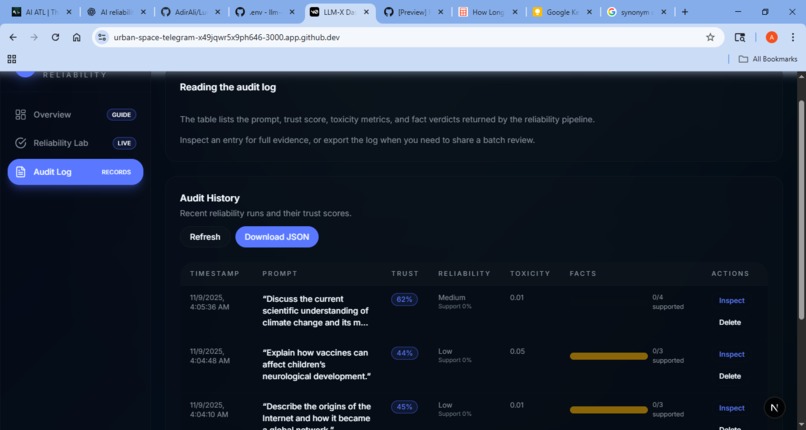

Audit log of previous analyses. Click Inspect for more information.

Inspiration

I built LumaX for the M-Drive Capital Hackathon to solve a common problem: AI models often sound confident even when they’re wrong. In business, these mistakes can lead to bad decisions and wasted money. LumaX helps users quickly check if an AI response is backed by real evidence.

What it does

LumaX reviews any AI-generated response and gives an easy-to-read report. It marks each sentence as Supported, Uncertain, or Hallucinated, and shows short explanations with evidence links. It also gives an overall trust score and keeps an audit log of past checks. Users can choose different model modes that balance speed, cost, and accuracy.
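As a rough illustration of how per-sentence verdicts could roll up into an overall trust score, here is a minimal Python sketch. The weights, the `SentenceVerdict` class, and the `trust_score` function are assumptions made for this example; the write-up does not say how LumaX actually computes its score.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical weights: the real scoring formula is not described in this write-up.
VERDICT_WEIGHTS = {"Supported": 1.0, "Uncertain": 0.5, "Hallucinated": 0.0}


@dataclass
class SentenceVerdict:
    """One analyzed sentence with its label, explanation, and evidence link."""
    sentence: str
    verdict: str  # one of the keys in VERDICT_WEIGHTS
    explanation: str = ""
    evidence_url: str = ""


def trust_score(verdicts: List[SentenceVerdict]) -> float:
    """Return a 0-100 score: the mean verdict weight across all sentences."""
    if not verdicts:
        return 0.0  # nothing analyzed, so nothing to trust
    total = sum(VERDICT_WEIGHTS[v.verdict] for v in verdicts)
    return round(100 * total / len(verdicts), 1)
```

A plain average treats every sentence equally. A real scorer might instead weight sentences by how central the claim is, which this sketch does not attempt.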

How I built it

The backend exposes an API that does three things: it searches the web for sources, runs a main model to check facts, and uses a second model to verify the results. Three model profiles (premium, balanced, and budget) trade accuracy for cost. If the main pipeline fails, a lightweight fallback powered by Gemini keeps the app running. The frontend is a simple dashboard where users can paste AI text, run a check, and see the verdicts, evidence, and history.
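The search, check, verify, and fallback flow described above could be wired together roughly as follows. This is a sketch under stated assumptions: the `PROFILES` table, its placeholder model names, and the `run_audit` signature are hypothetical, and the four callables stand in for the real web search, model calls, and Gemini fallback.

```python
from typing import Any, Callable, Dict, List

# Hypothetical profile table. The model names are placeholders and do not
# reflect which models LumaX actually assigns to each profile.
PROFILES: Dict[str, Dict[str, str]] = {
    "premium": {"checker": "large-model", "verifier": "large-model"},
    "balanced": {"checker": "large-model", "verifier": "small-model"},
    "budget": {"checker": "small-model", "verifier": "small-model"},
}


def run_audit(
    text: str,
    profile: str,
    search: Callable[[str], List[str]],
    check: Callable[[str, str, List[str]], Any],
    verify: Callable[[str, Any, List[str]], Any],
    fallback: Callable[[str], Any],
) -> Dict[str, Any]:
    """Run retrieval, fact-checking, and verification; use the fallback on failure."""
    models = PROFILES[profile]  # an unknown profile raises KeyError on purpose
    try:
        sources = search(text)                              # 1. gather web evidence
        draft = check(models["checker"], text, sources)     # 2. main model checks facts
        result = verify(models["verifier"], draft, sources)  # 3. second model verifies
        return {"path": "main", "result": result}
    except Exception as err:
        # Any failure in the main pipeline falls back to the lightweight path.
        return {"path": "fallback", "result": fallback(text), "error": str(err)}
```

Passing the stages in as callables keeps the orchestration testable without network access, and recording which path produced the result makes fallback runs visible in an audit log.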

Challenges I ran into

Some open-source tools needed too much memory and storage, so I switched to cloud models and lighter profiles. Another issue was fake citations: models inventing links or quotes. Logging outputs and requiring real sources helped fix this. The verifier sometimes repeated the same mistakes as the main model, which suggests that adding deterministic checks or model ensembles works better. Finally, getting model output into well-formed JSON required extra parsing and validation to keep the pipeline stable.
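Two of these fixes, tolerant JSON parsing and rejecting citations that were never retrieved, can be sketched together. The field names (`verdict`, `sources`) and the rule that downgrades an unsupported "Supported" verdict are assumptions for illustration, not LumaX's actual schema or policy.

```python
import json
from typing import Any, Dict, Set

ALLOWED_VERDICTS = {"Supported", "Uncertain", "Hallucinated"}


def extract_json(raw: str) -> Dict[str, Any]:
    """Pull the outermost {...} object out of model output that may include extra prose."""
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    return json.loads(raw[start:end + 1])  # JSONDecodeError is a ValueError subclass


def validate_verdict(raw: str, retrieved_urls: Set[str]) -> Dict[str, Any]:
    """Validate the verdict label and keep only citations that were actually retrieved."""
    data = extract_json(raw)
    verdict = data.get("verdict")
    if verdict not in ALLOWED_VERDICTS:
        raise ValueError(f"unexpected verdict: {verdict!r}")
    cited = data.get("sources", [])
    if not isinstance(cited, list):
        raise ValueError("sources must be a list")
    kept = [url for url in cited if url in retrieved_urls]
    dropped = [url for url in cited if url not in retrieved_urls]
    # Assumed policy: a "Supported" claim with no real source left is only "Uncertain".
    if verdict == "Supported" and not kept:
        verdict = "Uncertain"
    return {"verdict": verdict, "sources": kept, "dropped_sources": dropped}
```

Returning the dropped URLs rather than silently discarding them keeps invented citations visible in the logs, which fits the logging approach described above.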

Accomplishments that I'm proud of

I built a working audit dashboard that goes from retrieval to analysis, verification, scoring, and logging. The model profiles let teams test cost versus accuracy. Storing outputs and history also made debugging and improvement much easier.

What I learned

Large models reduce errors but still hallucinate, so grounding answers in web evidence is key. Combining AI models with factual checks—like snippet matching or API lookups—improves reliability. Good engineering practices such as validation, retries, and logging make the system more stable, while saving raw data and using structured profiles speed up testing.
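A deterministic snippet match and a simple retry wrapper, two of the practices mentioned above, might look like the sketch below. The function names, the whitespace and case normalization, and the exponential backoff schedule are illustrative assumptions rather than LumaX's implementation.

```python
import re
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial formatting differences do not matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def snippet_in_source(snippet: str, source_text: str) -> bool:
    """Deterministic check: does the quoted snippet actually appear in the source text?"""
    needle = normalize(snippet)
    return bool(needle) and needle in normalize(source_text)


def with_retries(
    fn: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on any exception with exponentially growing delays."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of retries: surface the last error
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

Exact substring matching is strict: it catches invented quotes but also rejects honest paraphrases, so it complements a model-based verifier instead of replacing it.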

What's next for LumaX - Reliability

Long term: Launch LumaX for real users, collect feedback, and refine the system. Eventually, I plan to build a browser extension so anyone can fact-check AI responses instantly.

Built With

- aistudio

- css

- gemini

- html

- javascript

- meta-llama/llama-3.1-13b-instruct

- meta-llama/llama-3.1-70b-instruct

- mistralai/mistral-large-latest

- python

- typescript

- vercel

- vscode
