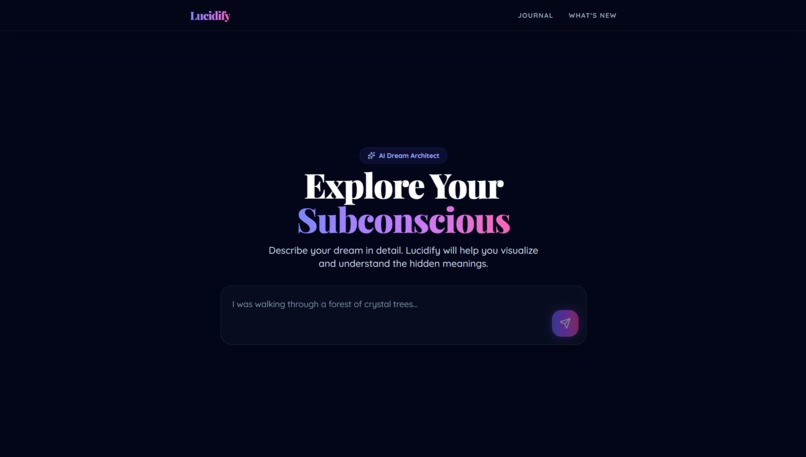

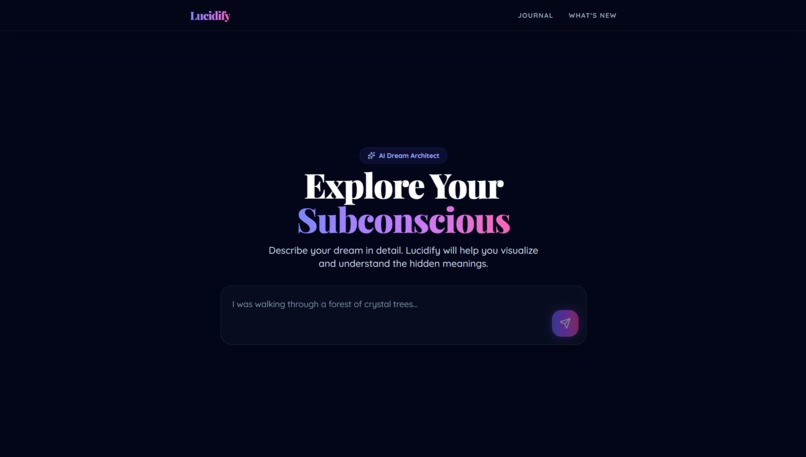

The Dream Engine: Enter your dream fragments to begin the journey.


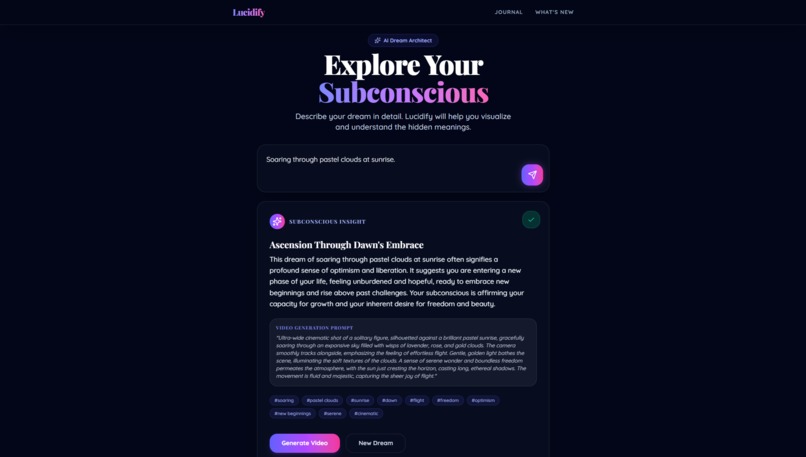

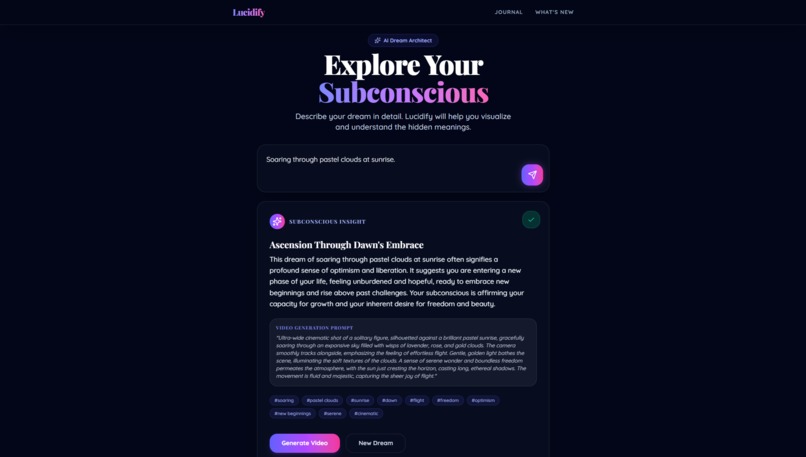

Subconscious Insight: Lucidify visualizes your dream and uncovers hidden meanings.


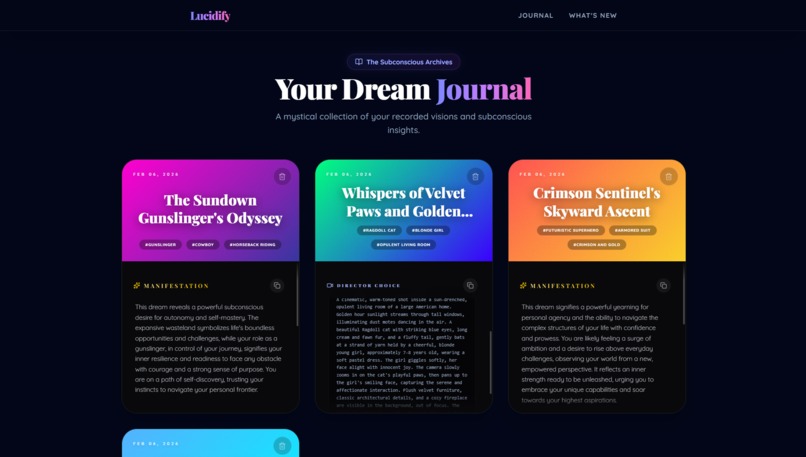

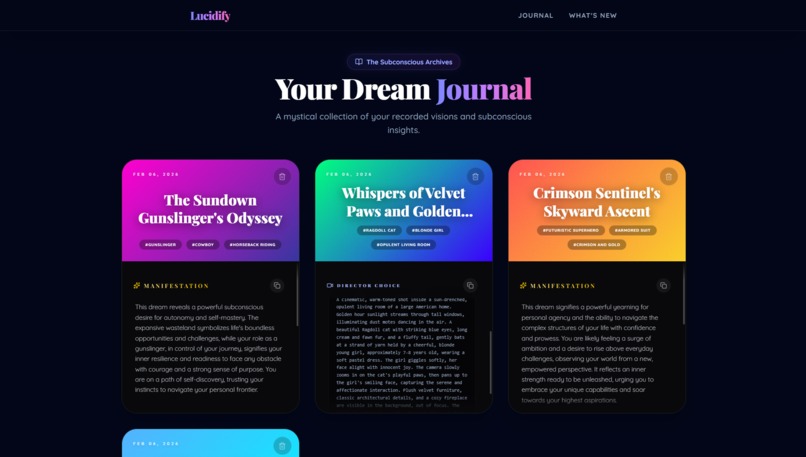

The Dream Journal: A mystical archive featuring the "Chroma Aura" 3D card system.


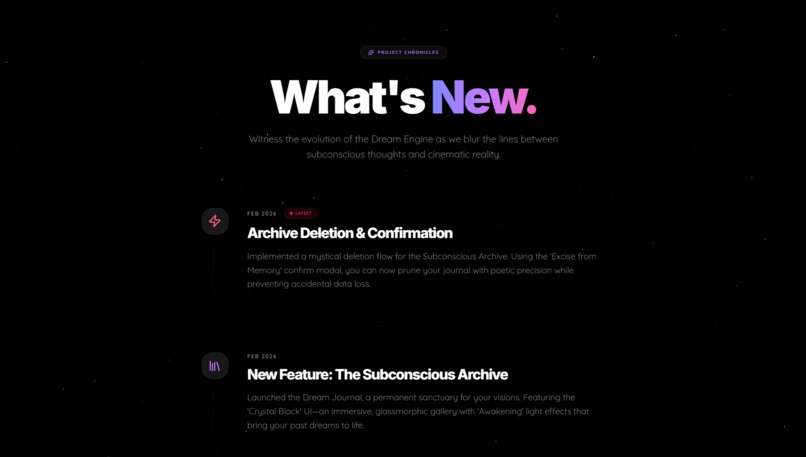

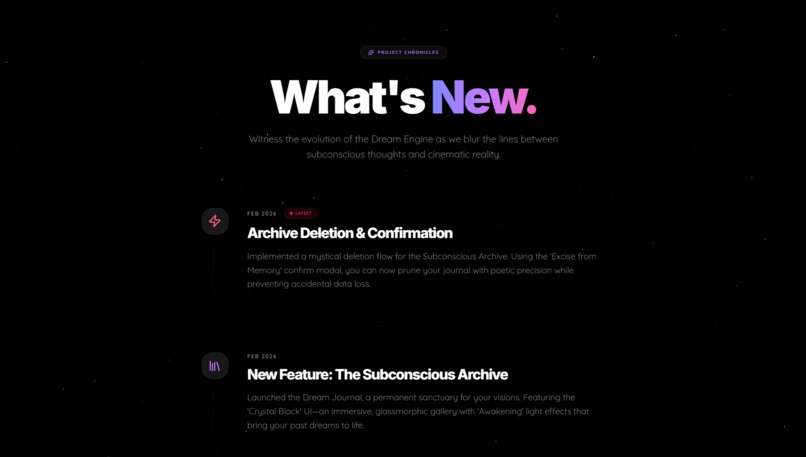

Evolution of Lucidify: A chronicle of our agentic workflow and technical milestones.

Inspiration

"What if you could interpret your dreams, enter them, and control the narrative?"

I am a lucid dreamer. I have experienced moments where I could consciously control my dreams, and I have even had precognitive dreams that blurred the lines between reality and the subconscious.

This personal ability sparked a question: Could technology replicate this experience for everyone? Lucidify was born from my lifelong fascination with the subconscious. It is not just an app; it is a digital bridge designed to help users interpret their dream content and virtually "enter" and "steer" their dreams using the power of Generative AI.

What it does

Lucidify is an AI-powered subconscious curation tool.

- Dream Input: Users enter fragments of their dreams or vague thoughts.

- Hypnotic Scripting: Gemini analyzes the input and generates a personalized "Hypnotic Script."

- Auditory Immersion: Google Cloud TTS (Neural2-F) acts as your digital guide. It delivers the script with a soothing, paced cadence designed to induce a meditative state, syncing perfectly with the visual flow.

- Visual Manifestation: Using Veo, the system generates ethereal videos that visualize the user's subconscious state.

- Lucid Transition: The app guides the user through a "Lucid Transition," turning abstract visuals into clear, actionable "Subconscious Insights."

How we built it

We used an AI-native workflow (Antigravity IDE) to combine the latest releases from Google's AI stack and the modern web ecosystem into a futuristic experience:

- Frontend: Svelte 5, Vite 7, Tailwind CSS 4, Lucide Svelte.

- Backend: SvelteKit (Serverless Functions) on Google Cloud Platform (GCP).

- The AI Engine:

  - Google Gemini 2.5 Flash: For ultra-fast reasoning and script generation.

  - Google Veo 3.1 (via Google AI Studio): For high-fidelity dream video generation.

  - Imagen 4.0 Ultra: For thumbnail imagery.

  - Google TTS (Neural2-F): For the hypnotic voice guide.

Challenges we ran into (Technical Deep Dive)

We encountered significant engineering hurdles while orchestrating cutting-edge AI models on a serverless architecture. Here is how we solved them:

1. Real-time Video Generation vs. Serverless Limits

- The Challenge: Generating high-fidelity video with Google Veo 3.1 often takes longer than the 60-second hard timeout of our serverless platform (Vercel). Requests were killed mid-render, which wasted compute and surfaced only generic errors.

- The Solution: We implemented a Time-Bounded Polling Architecture.

- Implementation: We switched to Vertex AI's `predictLongRunning` endpoint and built a backend polling loop monitored by a safety timer (55 s limit). We use Server-Sent Events (SSE) to send `PROGRESS` heartbeats every 4 seconds, keeping the connection alive while the heavy rendering happens on Google's infrastructure.
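Below is a minimal TypeScript sketch of such a time-bounded loop. It is illustrative, not Lucidify's code:

- `pollWithDeadline`, `PollResult`, and the `pollOnce` callback are hypothetical names.
- The actual Vertex AI request is left to the caller.
- The SSE writer is abstracted as an `onProgress` callback.

Only the 55 s budget and the 4 s interval come from the text above.

```typescript
// Minimal sketch: poll a long-running operation until it finishes or a
// safety budget runs out, emitting a heartbeat between polls.
// `pollOnce` is a hypothetical callback; the real endpoint call is not shown.

interface PollResult<T> {
  done: boolean;
  value?: T;
}

interface PollOptions {
  budgetMs: number; // e.g. 55_000 to stay under a 60 s platform timeout
  intervalMs: number; // e.g. 4_000 between polls / heartbeats
  onProgress: (elapsedMs: number) => void; // e.g. write an SSE PROGRESS event
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

async function pollWithDeadline<T>(
  pollOnce: () => Promise<PollResult<T>>,
  opts: PollOptions,
): Promise<T> {
  const start = Date.now();
  for (;;) {
    const result = await pollOnce();
    if (result.done) {
      if (result.value === undefined) {
        throw new Error("Operation finished without a result");
      }
      return result.value;
    }
    const elapsed = Date.now() - start;
    // Give up if the next sleep would overrun the budget, so the handler can
    // still report a clean error instead of being killed by the platform.
    if (elapsed + opts.intervalMs > opts.budgetMs) {
      throw new Error(`Polling budget of ${opts.budgetMs} ms exhausted`);
    }
    opts.onProgress(elapsed);
    await sleep(opts.intervalMs);
  }
}
```

The gap between the budget and the platform limit (here 55 s vs. 60 s) is deliberate headroom. It covers the last `pollOnce` call and the final error event.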

2. Robust LRO Polling for Async API Models

- The Challenge: Veo 3.1 operations are Long-Running Operations (LROs) with deeply nested, variable response structures. Standard polling often failed with `404` or `400` errors due to schema mismatches between preview and production models.

- The Solution: A Recursive URI Extraction & Polling mechanism.

- Implementation: Our system captures the `operationName` from the kickoff response and uses the `v1beta` endpoint to poll. We implemented a resilient JSON parser that recursively searches for `video.uri` across multiple potential fields (`generatedSamples`, `response.video[0]`), ensuring stability regardless of API schema shifts.
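The recursive search can be sketched as follows. This sketch assumes only that the video URI is a string stored under a `uri` key somewhere beneath a `video` key. The function name `findVideoUri` and the `Json` type are illustrative, and the payload shapes it handles are modeled on the two field paths named above rather than on captured Veo responses.

```typescript
// Any JSON value, as produced by JSON.parse on an API response.
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };

// Depth-first search for the first string `uri` that sits directly under a
// `video` key (either `video.uri` or `video[n].uri`), at any nesting depth.
function findVideoUri(node: Json, underVideo = false): string | undefined {
  if (Array.isArray(node)) {
    // Arrays inherit the parent's context, so `video[0].uri` still matches.
    for (const item of node) {
      const hit = findVideoUri(item, underVideo);
      if (hit !== undefined) return hit;
    }
    return undefined;
  }
  if (node !== null && typeof node === "object") {
    const uri = node["uri"];
    if (underVideo && typeof uri === "string") return uri;
    for (const [key, value] of Object.entries(node)) {
      const hit = findVideoUri(value, key === "video");
      if (hit !== undefined) return hit;
    }
  }
  return undefined; // primitives, or no match in this subtree
}
```

The search is keyed on structure (`video` → `uri`), not on a fixed path. Both `generatedSamples[0].video.uri` and `response.video[0].uri` therefore resolve, while an unrelated `uri` elsewhere (for example on a thumbnail) is ignored.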

3. The "Swan Strategy" (Decoupled UX)

- The Challenge: Complex backend polling (LROs, multiple API calls) risked making the frontend unstable and jittery.

- The Solution: We decoupled the UX using the "Swan Analogy."

- Implementation: Like a swan appearing graceful while paddling frantically underwater, our frontend displays a high-quality static state ("Warping Reality..."), while the backend handles the heavy lifting. We use SSE solely as a "heartbeat" to maintain the stream without triggering visual flickers, allowing us to migrate backend logic without breaking the UI.
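The heartbeat stream can be sketched with the standard Web Streams API. This is a hedged illustration, not the project's endpoint. The SSE wire format used here is standard: an `event:` line, a `data:` line, and a blank line that ends the event. The helper names `formatSseEvent` and `heartbeatStream` and the `DONE`/`ERROR` event names are assumptions; only `PROGRESS` and the 4-second interval come from the text.

```typescript
// Serialize one Server-Sent Event. JSON.stringify without indentation never
// emits raw newlines, so a single `data:` line is enough here.
function formatSseEvent(event: string, data: unknown): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}

// Wrap a slow backend job in an SSE stream: PROGRESS heartbeats while it runs,
// then one DONE or ERROR event, then close. The UI only reacts to the final event.
function heartbeatStream(work: Promise<unknown>, intervalMs = 4_000): ReadableStream<Uint8Array> {
  const encoder = new TextEncoder();
  let timer: ReturnType<typeof setInterval> | undefined;
  let closed = false;

  return new ReadableStream<Uint8Array>({
    start(controller) {
      const send = (event: string, data: unknown) => {
        if (!closed) controller.enqueue(encoder.encode(formatSseEvent(event, data)));
      };
      timer = setInterval(() => send("PROGRESS", { status: "rendering" }), intervalMs);
      work
        .then(
          (result) => send("DONE", result),
          (err: unknown) => send("ERROR", { message: String(err) }),
        )
        .finally(() => {
          clearInterval(timer);
          if (!closed) {
            closed = true;
            controller.close();
          }
        });
    },
    cancel() {
      // Client disconnected: stop the heartbeat; the job itself keeps running.
      closed = true;
      clearInterval(timer);
    },
  });
}
```

A SvelteKit `+server.ts` handler could return `new Response(heartbeatStream(job), { headers: { "Content-Type": "text/event-stream" } })`. The frontend then keeps its static "Warping Reality..." state until `DONE` arrives.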

4. High-Fidelity Cloud TTS & Key Scoping

- The Challenge: Standard AI Studio keys don't support Google Cloud TTS, and the experimental `Journey-F` voices crashed with standard SSML parameters (pitch/rate).

- The Solution: A Dedicated Multi-Key Architecture and Safe Parameter Protocol.

- Implementation: We separated the architecture to use a dedicated GCP API key for TTS services. We also migrated to the Neural2-F voice model, tuning it with aggressive SSML pacing (`rate="0.85"`, `pitch="-4.0st"`) to achieve the deep, hypnotic tone the app requires.
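The SSML and request body below are a sketch of how such a call could be assembled. The `input.ssml`, `voice.languageCode`/`voice.name`, and `audioConfig.audioEncoding` fields follow the Cloud Text-to-Speech v1 `text:synthesize` REST request. Several details are assumptions, however:

- The `en-US` locale prefix on the voice name.
- The one-second pause between paragraphs.
- The helper names `buildHypnoticSsml` and `buildSynthesizeRequest`.

Only the `rate` and `pitch` values come from the text.

```typescript
// Escape the five XML special characters so user-derived script text
// cannot break the SSML document.
function escapeXml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Wrap the script in slowed, lowered prosody; paragraphs (blank-line separated)
// get a pause between them (hypothetical pacing rule).
function buildHypnoticSsml(script: string, rate = "0.85", pitch = "-4.0st"): string {
  const paragraphs = script
    .split(/\n\s*\n/)
    .map((p) => escapeXml(p.trim()))
    .filter((p) => p.length > 0);
  const body = paragraphs.join('<break time="1s"/>');
  return `<speak><prosody rate="${rate}" pitch="${pitch}">${body}</prosody></speak>`;
}

interface SynthesizeRequest {
  input: { ssml: string };
  voice: { languageCode: string; name: string };
  audioConfig: { audioEncoding: "MP3" | "LINEAR16" | "OGG_OPUS" };
}

function buildSynthesizeRequest(script: string): SynthesizeRequest {
  return {
    input: { ssml: buildHypnoticSsml(script) },
    voice: { languageCode: "en-US", name: "en-US-Neural2-F" }, // locale assumed
    audioConfig: { audioEncoding: "MP3" },
  };
}
```

With the dedicated GCP key, this body would be POSTed as JSON to `https://texttospeech.googleapis.com/v1/text:synthesize?key=...`. The response carries the audio as base64 in `audioContent`.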

5. Aesthetic Cohesion with "The Chroma Aura System"

- The Challenge: Managing dozens of 3D-rotating, interactive cards with unique aesthetics in a performant way.

- The Solution: The Chroma Aura System powered by Svelte 5 Runes.

- Implementation: We created a deterministic gradient palette in which each card's "soul" is derived from its index. Svelte 5's `$state` and `$derived` runes drive the cards' 3D CSS transforms, which the browser composites on the GPU, keeping the experience silky-smooth at 60fps even with heavy visual elements.
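One way to derive a stable "Chroma Aura" from an index is sketched below. This is an assumption, not the project's palette. Hues are spaced by the golden angle (about 137.5°) so that neighboring cards differ clearly. The 40° partner offset, the saturation/lightness values, and the name `auraForIndex` are all illustrative.

```typescript
// Hue step in degrees; the golden angle spreads successive hues evenly
// around the color wheel without ever repeating exactly.
const GOLDEN_ANGLE = 137.508;

interface Aura {
  from: string;
  to: string;
  css: string; // ready to use as a `background` value
}

// Same index in, same gradient out: no randomness, so cards keep their
// colors across reloads and re-sorts by index.
function auraForIndex(index: number): Aura {
  const hue = (index * GOLDEN_ANGLE) % 360;
  const partner = (hue + 40) % 360; // second stop of the dual-color gradient
  const from = `hsl(${hue.toFixed(1)} 80% 60%)`;
  const to = `hsl(${partner.toFixed(1)} 70% 45%)`;
  return { from, to, css: `linear-gradient(135deg, ${from}, ${to})` };
}
```

In a Svelte 5 component, `const aura = $derived(auraForIndex(index))` would recompute only when `index` changes. Animating only `transform` (for example `rotateY(...)` for the flip) keeps the per-frame work on the compositor.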

Accomplishments that we're proud of

🌟 1. Architecting "The Dream Engine"

We built a robust multi-model pipeline that acts as a bridge to the subconscious.

- Parallel Synthesis: We achieved perfect synchronization between audio and visuals. The backend triggers Voice Synthesis (TTS) and Prompt Refinement (Director) in parallel, ensuring the narrator speaks the exact script being visualized.

- Smart Ambient Audio: We use Gemini 2.5 Flash to analyze the dream's emotional profile and intelligently select one of 5 ambient loops (Nature, Space, Horror, etc.), cross-fading them dynamically using a zero-clash sequence.

- Aesthetic Sanitization: Gemini acts as a safety filter, automatically translating violent or sensitive content into artistic metaphors (e.g., "blood" → "rose petals") before manifestation.
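The parallel step can be sketched with `Promise.all`. The stage interface below is hypothetical; the write-up does not show the real functions. It also assumes the script is produced first and then handed to both branches, which is one way to guarantee that the narrator and the visuals describe the same dream.

```typescript
// Hypothetical stage signatures standing in for the real Gemini / TTS calls.
interface DreamPipeline {
  writeScript(fragments: string): Promise<string>; // Gemini: hypnotic script
  synthesizeVoice(script: string): Promise<Uint8Array>; // Cloud TTS audio
  refinePrompt(script: string): Promise<string>; // "Director": visual prompt
}

interface DreamAssets {
  script: string;
  audio: Uint8Array;
  visualPrompt: string;
}

async function buildDream(p: DreamPipeline, fragments: string): Promise<DreamAssets> {
  // One script feeds both branches, so narration and visuals stay in sync.
  const script = await p.writeScript(fragments);
  // Voice synthesis and prompt refinement do not depend on each other,
  // so they run concurrently; total latency is the slower of the two.
  const [audio, visualPrompt] = await Promise.all([
    p.synthesizeVoice(script),
    p.refinePrompt(script),
  ]);
  return { script, audio, visualPrompt };
}
```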

🎥 2. Multi-Model Manifestation & Resilience

We didn't just rely on one model; we built a resilient fallback system.

- Veo 3.1 Fast: The primary engine for cinematic dream shorts.

- Imagen 4.0 Ultra Fallback: If video quotas are hit, the system gracefully downgrades to state-of-the-art static imagery.

- Fixing the "Mist": We solved the "Only Mist" bug in Veo by implementing browser-side key appending and a theatrical transition sequence that clears the introductory fog.
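A hedged sketch of the video-to-image downgrade follows. It assumes quota exhaustion surfaces as HTTP 429, which is how Google APIs conventionally report `RESOURCE_EXHAUSTED`. The `ModelHttpError` class, the `manifest` function, and the injected generator callbacks are hypothetical stand-ins for the real Veo and Imagen calls.

```typescript
// Hypothetical error type carrying the HTTP status returned by a model API.
class ModelHttpError extends Error {
  readonly status: number;
  constructor(status: number, message: string) {
    super(message);
    this.status = status;
    this.name = "ModelHttpError";
  }
}

type Manifestation = { kind: "video"; uri: string } | { kind: "image"; uri: string };

async function manifest(
  prompt: string,
  generateVideo: (prompt: string) => Promise<string>, // e.g. a Veo call
  generateImage: (prompt: string) => Promise<string>, // e.g. an Imagen call
): Promise<Manifestation> {
  try {
    return { kind: "video", uri: await generateVideo(prompt) };
  } catch (err) {
    // Downgrade only on quota exhaustion (429); other failures are real bugs
    // and should surface instead of being masked by a still image.
    if (err instanceof ModelHttpError && err.status === 429) {
      return { kind: "image", uri: await generateImage(prompt) };
    }
    throw err;
  }
}
```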

🔮 3. The "Chroma Aura" Journal (Svelte 5)

We pushed the boundaries of the new Svelte 5 framework.

- Runes Everywhere: The entire UI state is managed by Svelte 5 Runes (`$state`, `$effect`), creating a reactive and bug-free experience.

- 3D Tactile Interaction: The Dream Journal features Tarot-style cards that levitate and flip in 3D space. Each card has a unique "Chroma Aura", a dual-color gradient generated deterministically, giving every dream a unique visual soul.

- Local Persistence: We implemented a robust `localStorage` system with a "Confirm Modal" (inspired by our other SaaS, Cubrain) to prevent accidental deletion of precious dream records.
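A minimal sketch of confirm-gated persistence follows. It targets only the `getItem`/`setItem` subset of the Web Storage API, so `window.localStorage` can be passed in a browser and an in-memory stub elsewhere. The storage key, the `DreamRecord` shape, and the `confirm` callback (standing in for the Confirm Modal) are hypothetical.

```typescript
// The subset of the Web Storage API this sketch needs; localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

interface DreamRecord {
  id: string;
  title: string;
  createdAt: string; // ISO timestamp
}

const JOURNAL_KEY = "lucidify.journal"; // hypothetical storage key

function loadJournal(store: KeyValueStore): DreamRecord[] {
  const raw = store.getItem(JOURNAL_KEY);
  if (!raw) return [];
  try {
    const parsed: unknown = JSON.parse(raw);
    // Shape is trusted, not validated, in this sketch.
    return Array.isArray(parsed) ? (parsed as DreamRecord[]) : [];
  } catch {
    return []; // corrupted entry: start empty rather than crash the journal
  }
}

function saveJournal(store: KeyValueStore, records: DreamRecord[]): void {
  store.setItem(JOURNAL_KEY, JSON.stringify(records));
}

// Deletes only after the confirmation step resolves to true.
async function deleteDream(
  store: KeyValueStore,
  id: string,
  confirm: (message: string) => Promise<boolean>,
): Promise<boolean> {
  const records = loadJournal(store);
  const target = records.find((r) => r.id === id);
  if (!target) return false;
  if (!(await confirm(`Delete "${target.title}" forever?`))) return false;
  saveJournal(store, records.filter((r) => r.id !== id));
  return true;
}
```

Making `confirm` return a promise lets a custom modal (rather than the blocking `window.confirm`) resolve the decision whenever the user clicks.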

🕹️ 4. Lucid Mode & The Easter Egg

- Lucid Mode: We created an interactive "Take Control" flow. A "Lucid Transition" freezes time (`grayscale` + `awakening.mp3`), allowing the user to rewrite the narrative mid-dream.

- Ghost Typing: We added a production-ready Easter Egg (F8 key) that simulates "Ghost Typing," auto-filling context-aware prompts for demo purposes.
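The Ghost Typing effect can be sketched as an F8 `keydown` handler that replays a prompt one character at a time. This is an illustrative assumption, not the project's implementation:

- The sketch uses a fixed prompt instead of a context-aware one.
- The 40 ms delay is arbitrary.
- `ghostFrames` and `createGhostTyper` are hypothetical names.

The handler accepts any object with `key` and `preventDefault()`, which a browser `KeyboardEvent` provides.

```typescript
// Progressive prefixes of the prompt, one code point per frame, so emoji
// and other astral characters are never split in half.
function ghostFrames(text: string): string[] {
  const chars = Array.from(text);
  return chars.map((_, i) => chars.slice(0, i + 1).join(""));
}

interface KeyLike {
  key: string;
  preventDefault(): void;
}

// Returns a keydown handler: on F8, "types" the prompt into whatever
// `onFrame` updates (e.g. a bound textarea value).
function createGhostTyper(prompt: string, onFrame: (text: string) => void, delayMs = 40) {
  let timer: ReturnType<typeof setInterval> | undefined;
  return (event: KeyLike): void => {
    if (event.key !== "F8") return;
    event.preventDefault();
    clearInterval(timer); // restart cleanly if F8 is pressed again mid-typing
    const frames = ghostFrames(prompt);
    if (frames.length === 0) return;
    let i = 0;
    timer = setInterval(() => {
      const frame = frames[i];
      if (frame !== undefined) onFrame(frame);
      i += 1;
      if (i >= frames.length) clearInterval(timer);
    }, delayMs);
  };
}
```

In the browser, this could be wired up as `window.addEventListener("keydown", createGhostTyper(prompt, (t) => (dreamText = t)))`, with `dreamText` being a `$state` variable bound to the input.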

🤖 5. The "Agentic" Development Process

We didn't just code; we orchestrated. Using Antigravity IDE, we leveraged Gemini to architect the backend logic while we focused on the creative vision, proving that a single maker can build enterprise-grade apps with AI assistance.

What we learned

We learned that Multimodal AI is not just for productivity; it's a powerful tool for self-reflection. We also mastered the art of asynchronous AI orchestration—making multiple models (Gemini, Veo, TTS) work together in harmony despite their different latency profiles.

What's next for Lucidify

We have a clear roadmap to evolve Lucidify from a web prototype into a daily habit-forming ecosystem.

1. The "Hypnopompic" Wake-Up Mode (Killer Feature)

We plan to solve the "forgotten dream" problem by integrating an alarm system.

- The Flow: Stop alarm → Mic activates immediately.

- Native Audio AI: Gemini 2.5 Flash captures groggy voice notes and instantly structures them into dream prompts.

2. The Cognitive Ecosystem: "The Subconscious Bridge"

Lucidify is the missing link in our cognitive suite.

- Conscious Learning (Cubrain): Connects with Cubrain (our SaaS for active recall/flashcards).

- Subconscious Insight (Lucidify): Cubrain structures awake knowledge; Lucidify structures asleep insights.

3. Mobile Expansion (TWA Strategy)

- Phase 1 (Now): PWA implementation.

- Phase 2 (Next): Packaging the SvelteKit app into an Android TWA (Trusted Web Activity) for Play Store launch, maintaining a single serverless backend.

4. Empathetic Interpretation & Therapy

Expanding the "Dream Architect" into a mental health tool to visualize anxieties in a non-threatening, metaphorical way.

Built With

- gemini

- google-cloud

- google-tts

- imagen

- svelte

- tailwind-css

- veo

- vite
