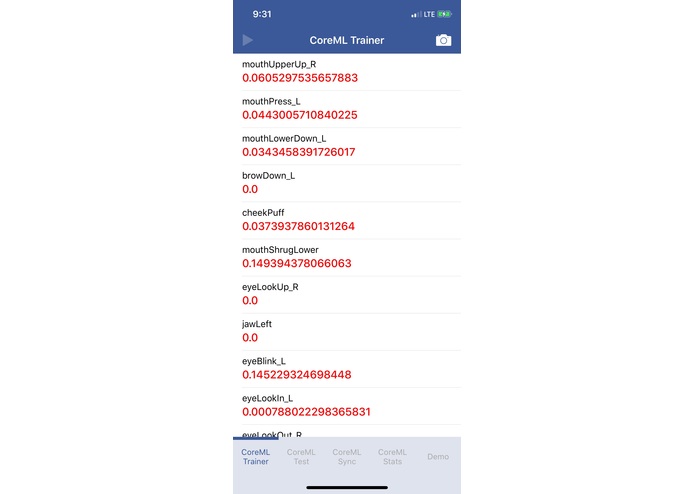

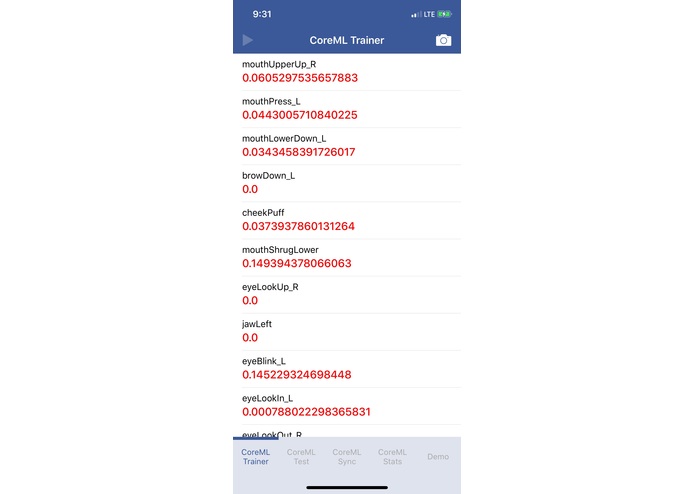

CoreML Trainer - captures our supervised training data


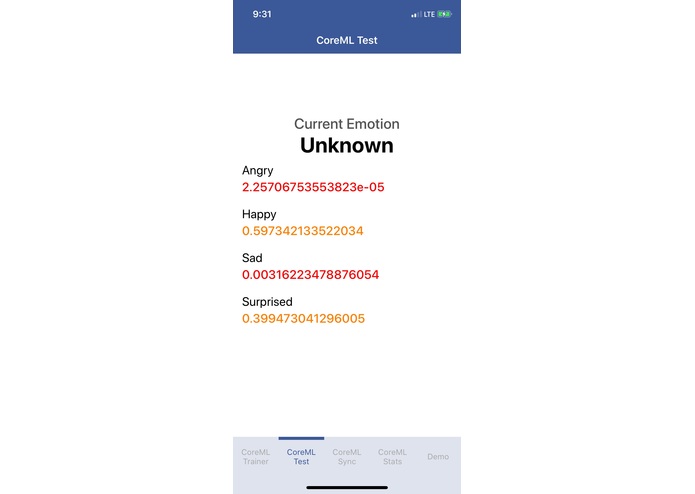

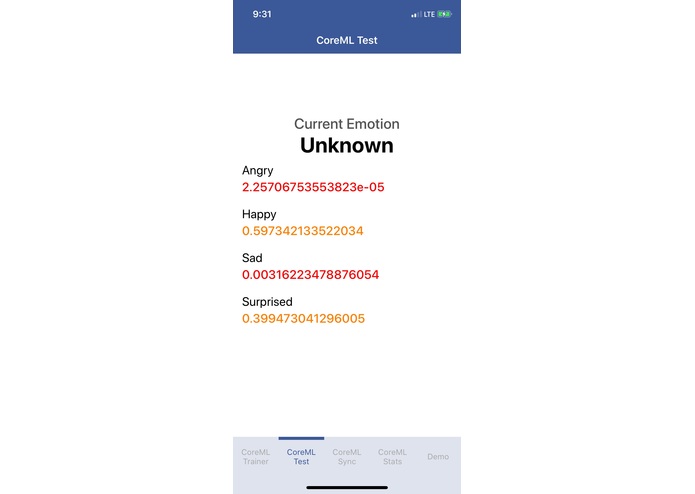

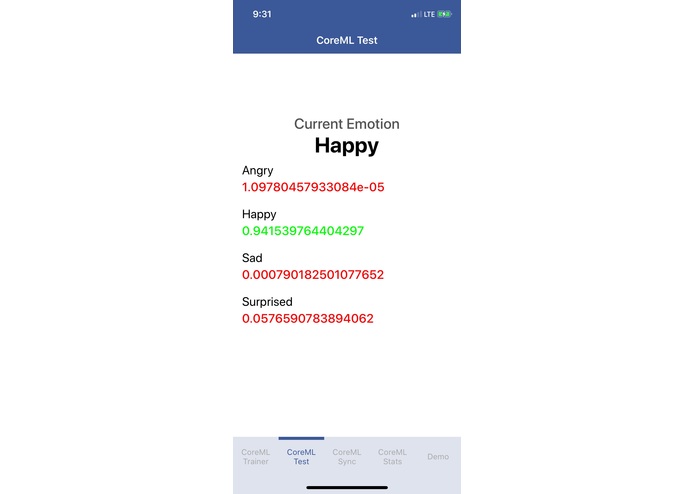

CoreML Test - integrates our ML model into our iOS app capturing real-time emotions

-

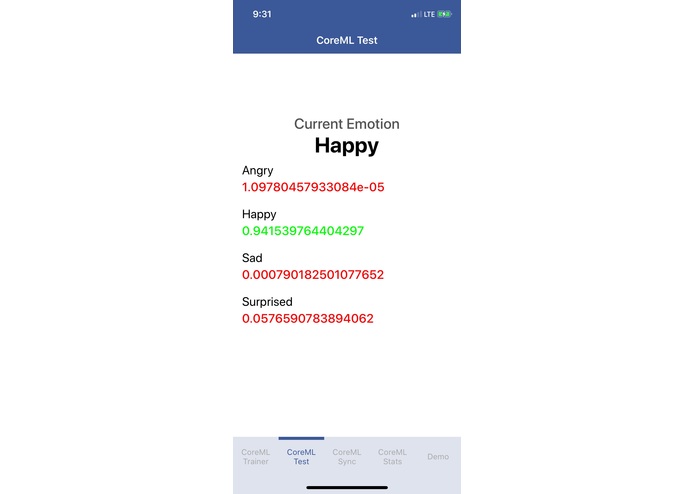

CoreML Test - integrates our ML model into our iOS app capturing real-time emotions


CoreML Sync - fetches the latest model from our backend server


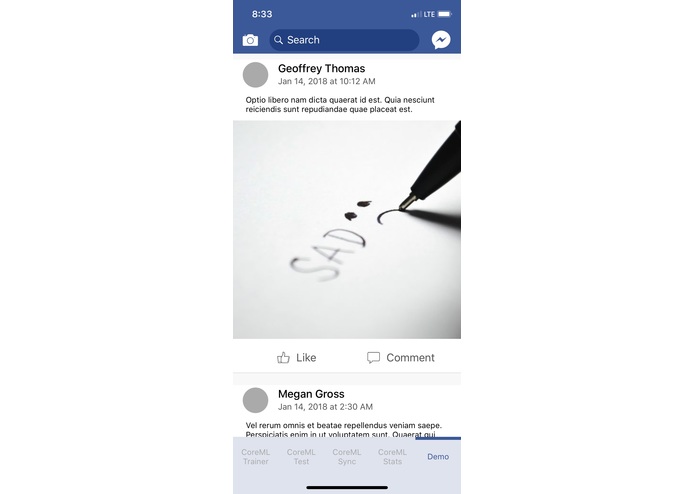

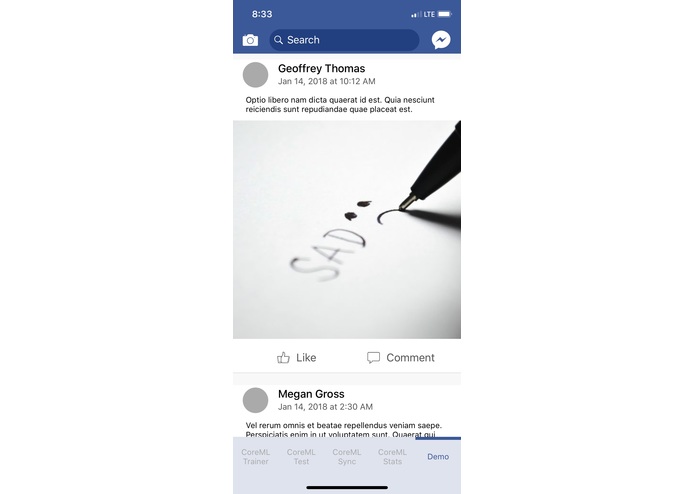

Demo - simulates a social media app. The continuous feed reflects the user’s reactions.

Inspiration

We built Loki to show how plausible it is for social media platforms to track users’ emotions and use them to manipulate the content (and advertisements) those users see.

What it does

Loki presents a news feed much like those of other popular social networking apps. In the background, however, it uses ARKit on iOS to capture the user’s facial data. This data is fed through a neural network we trained to map facial data to emotions, and the currently detected emotion determines what type of content is loaded into the feed next.
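A minimal sketch of how the feed step could work, assuming each post carries a hypothetical `mood` tag naming the emotion it is meant to target, and that the feed simply loads matching posts first. The `Post` fields, the tag values, and `next_batch` are illustrative assumptions, not Loki's actual ranking logic.

```python
from dataclasses import dataclass

# The four emotion classes named in the write-up.
EMOTIONS = ("happiness", "sadness", "anger", "surprise")


@dataclass
class Post:
    post_id: int
    text: str
    mood: str  # hypothetical tag: which detected emotion this post targets


def next_batch(posts, detected_emotion, batch_size=3):
    """Return the next posts to load, preferring those tagged with the detected emotion."""
    if detected_emotion not in EMOTIONS:
        raise ValueError(f"unknown emotion: {detected_emotion}")
    # Matching posts get key False and sort first; sorted() is stable, so the
    # original order is kept within the matching and non-matching groups.
    ranked = sorted(posts, key=lambda p: p.mood != detected_emotion)
    return ranked[:batch_size]


feed = [
    Post(1, "Puppy adopts kitten", "happiness"),
    Post(2, "Local team loses final", "sadness"),
    Post(3, "You won't believe this trick", "surprise"),
    Post(4, "Rescue dog's first birthday", "happiness"),
]
print([p.post_id for p in next_batch(feed, "happiness")])  # [1, 4, 2]
```

Re-ranking rather than filtering keeps the feed from running dry when few posts match the current emotion.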

How we built it

Our project consists of three parts:

- Gather training data to infer emotions from facial expressions:
  - We built a native iOS application view that displays the 51 facial attributes returned by ARKit.
  - From this screen, a snapshot of the current face can be taken and manually annotated with one of four emotions (happiness, sadness, anger, or surprise). The annotated data is then posted to our backend server and stored in a Postgres database.
- Train a neural network on the stored data to map the 51-dimensional facial data to one of the four emotion classes. To do this, we:
  - Preprocess the data from the database into the purely numeric format the neural network expects.
  - Train the network to discriminate between the different emotions.
  - Save the final network state and convert it into a mobile-ready format using CoreMLTools.
- Use the trained model to discreetly detect the emotions of iPhone users in a Facebook-like application:
  - The iOS application uses the neural network to infer the user’s emotion in real time and shows posts that fit that emotional state.
  - With this proof of concept, we showed how easily applications can use the camera to spy on users.
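The data and training steps above can be sketched end to end in plain Python. This is a minimal sketch under several assumptions: each stored row is a dict with a hypothetical `coefficients` field holding the 51 ARKit values (ARKit reports these coefficients from 0.0 to 1.0) and a hypothetical `label` field; a single-layer softmax classifier trained by gradient descent stands in for the authors' neural network; and the training rows are synthetic toy data, not real measurements.

```python
import math
import random

# Emotion classes and feature count taken from the write-up.
EMOTIONS = ["happiness", "sadness", "anger", "surprise"]
NUM_FEATURES = 51


def preprocess(rows):
    """Convert stored rows into numeric feature vectors and integer class labels."""
    xs, ys = [], []
    for row in rows:
        coeffs = row["coefficients"]  # hypothetical field: the 51 ARKit values
        if len(coeffs) != NUM_FEATURES:
            continue  # skip malformed snapshots
        xs.append([float(c) for c in coeffs])
        ys.append(EMOTIONS.index(row["label"]))  # hypothetical field: annotated emotion
    return xs, ys


def scores(w, b, x):
    """Linear score for each class: w[c] . x + b[c]."""
    return [sum(wj * xj for wj, xj in zip(w[c], x)) + b[c] for c in range(len(b))]


def softmax(zs):
    m = max(zs)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


def train(xs, ys, epochs=100, lr=0.5):
    """Fit a softmax classifier with full-batch gradient descent on cross-entropy loss."""
    k, d, n = len(EMOTIONS), NUM_FEATURES, len(xs)
    w = [[0.0] * d for _ in range(k)]
    b = [0.0] * k
    for _ in range(epochs):
        grad_w = [[0.0] * d for _ in range(k)]
        grad_b = [0.0] * k
        for x, y in zip(xs, ys):
            probs = softmax(scores(w, b, x))
            for c in range(k):
                err = probs[c] - (1.0 if c == y else 0.0)  # d(loss)/d(score_c)
                grad_b[c] += err
                for j in range(d):
                    grad_w[c][j] += err * x[j]
        for c in range(k):
            b[c] -= lr * grad_b[c] / n
            for j in range(d):
                w[c][j] -= lr * grad_w[c][j] / n
    return w, b


def predict(w, b, x):
    """Return the emotion whose class score is highest."""
    zs = scores(w, b, x)
    return EMOTIONS[max(range(len(zs)), key=zs.__getitem__)]


# Synthetic toy data: each emotion raises a different made-up block of five
# coefficients, while every other coefficient stays near zero.
random.seed(0)


def fake_row(label):
    start = EMOTIONS.index(label) * 10
    coeffs = [random.uniform(0.0, 0.1) for _ in range(NUM_FEATURES)]
    for j in range(start, start + 5):
        coeffs[j] = random.uniform(0.7, 1.0)
    return {"coefficients": coeffs, "label": label}


rows = [fake_row(e) for e in EMOTIONS for _ in range(10)]
xs, ys = preprocess(rows)
w, b = train(xs, ys)
print(predict(w, b, fake_row("anger")["coefficients"]))  # anger
```

A real network would add hidden layers; the single layer here only keeps the sketch free of dependencies. In the pipeline described above, the trained model was then converted with CoreMLTools so the iOS app could run it on-device; that conversion depends on the training framework used and is not shown.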

Challenges we ran into

One challenge was converting the raw facial data into emotions. Because the API returns 51 distinct data points, manually encoding rules for each emotion would have been difficult. Instead, our machine learning pipeline learned this mapping from the annotated examples.

Accomplishments that we're proud of

We’re proud of building an entire machine learning pipeline that harnesses CoreML, a framework introduced in iOS 11, to perform on-device prediction.

What we learned

We learned that a user’s emotion can be detected with surprising accuracy from relatively few data points, which suggests that large platforms could already be doing this.

What's next for Loki

Loki does not currently save any new data it encounters. One possibility is for the application to record the user’s expression alongside each social media post they view. Another is to expand our current list of emotions (happiness, sadness, anger, and surprise) and to train on more data for more accurate recognition. We could also use the model’s data points to build additional features.
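One way the first idea could look, as a minimal sketch: a hypothetical in-memory log that records which emotion was detected while each post was on screen and reports the most common reaction per post. The `ReactionLog` class and its methods are illustrative only; Loki does not currently store this data, and a real version would persist it (for example, to the existing backend) rather than keep it in memory.

```python
from collections import Counter, defaultdict


class ReactionLog:
    """Hypothetical log mapping each post ID to the emotions detected while it was visible."""

    def __init__(self):
        self._by_post = defaultdict(Counter)

    def record(self, post_id, emotion):
        # Count one detection of `emotion` while `post_id` was on screen.
        self._by_post[post_id][emotion] += 1

    def dominant_reaction(self, post_id):
        """Return the most frequently detected emotion for a post, or None if unseen."""
        counts = self._by_post.get(post_id)  # .get() does not create an empty entry
        if not counts:
            return None
        return counts.most_common(1)[0][0]


log = ReactionLog()
for emotion in ["happiness", "surprise", "happiness"]:
    log.record(42, emotion)
print(log.dominant_reaction(42))  # happiness
print(log.dominant_reaction(7))   # None
```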
