-

Logo

-

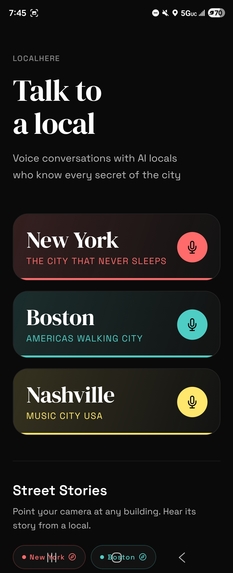

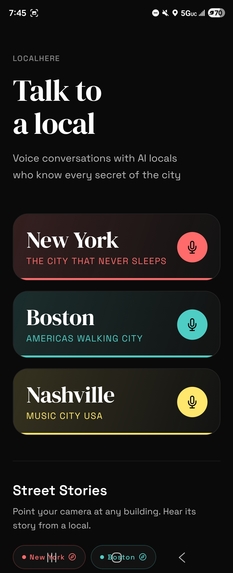

Home Page (Part 1)

-

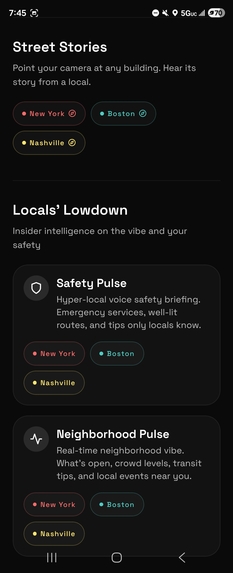

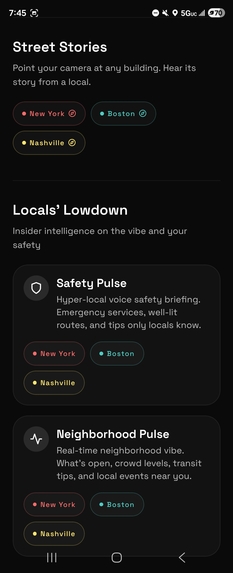

Home Page (Part 2)

-

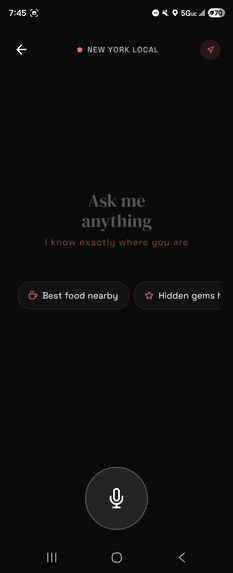

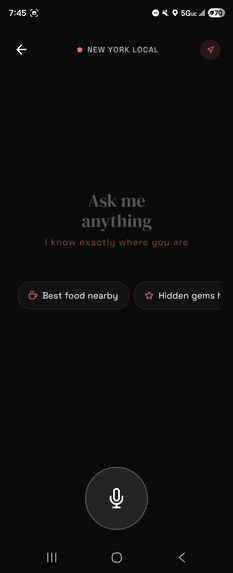

Talk to a local (New York) - testing device has NYC as virtual location

-

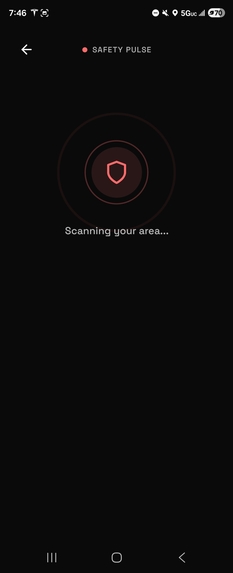

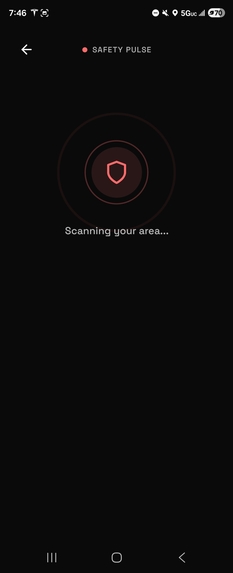

Safety Pulse Scan - testing device has NYC as virtual location

-

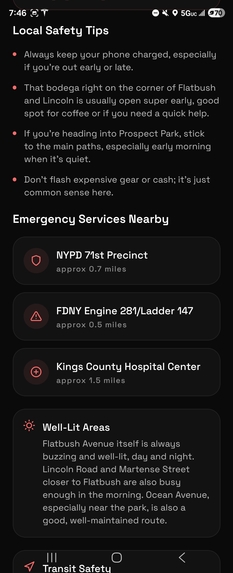

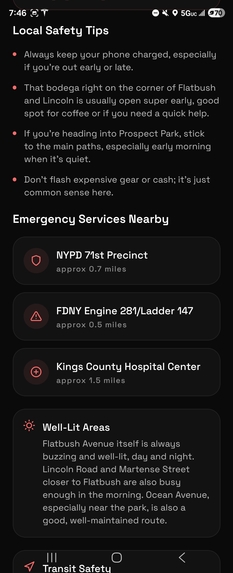

Safety Pulse Scan Result (Part 1) - testing device has NYC as virtual location

-

Safety Pulse Scan Result (Part 2) - testing device has NYC as virtual location

-

Safety Pulse Scan Result (Part 3) - testing device has NYC as virtual location

-

Neighborhood Pulse Scan - testing device has NYC as virtual location

-

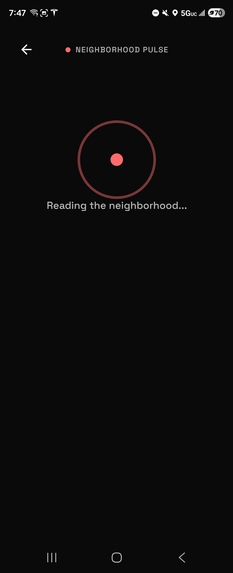

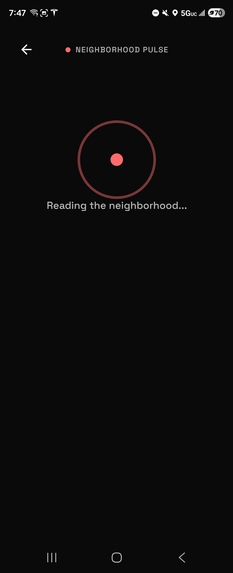

Neighborhood Pulse Result (Part 1) - testing device has NYC as virtual location

-

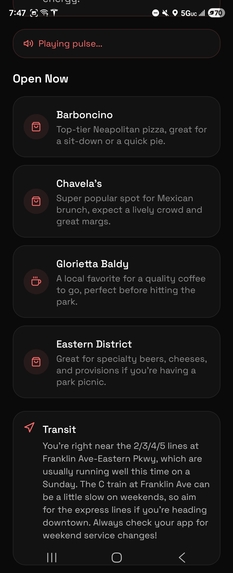

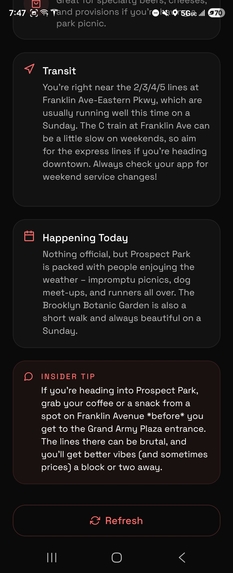

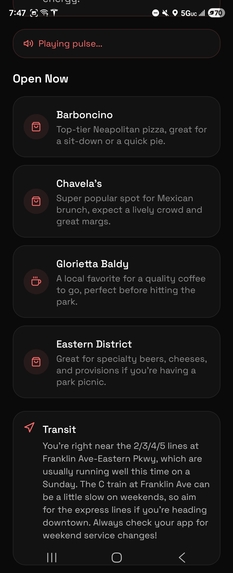

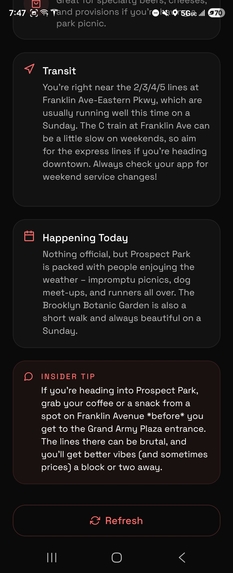

Neighborhood Pulse Result (Part 2) - testing device has NYC as virtual location

-

Neighborhood Pulse Result (Part 3) - testing device has NYC as virtual location

-

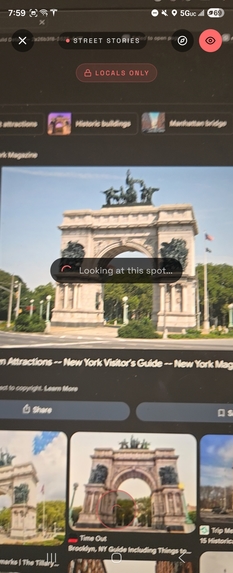

Street Stories Scan (Part 1) - not actually in NYC, but a pic on my laptop should do

-

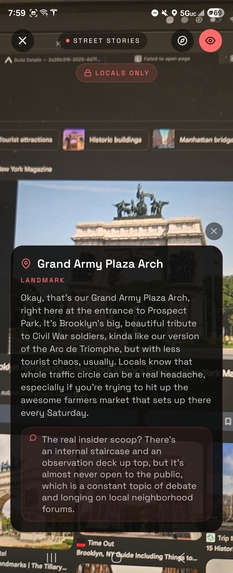

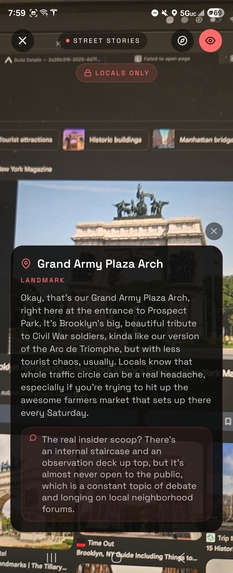

Street Stories Scan (Part 2) - not actually in NYC, but a pic on my laptop should do

-

Street Stories Scan Result - not actually in NYC, but a pic on my laptop should do

LocalHere

The city's soul, in your ear

Inspiration

I am an avid tourist who has visited pretty much all the major cities in the US. But oftentimes I wind up unsure about safety and about where to find the best local experiences rather than tourist traps. I wanted to build something that felt like having a local best friend in your pocket: someone who tells you where the real vibe is, which subway entrance is better lit at 11 PM, and the story behind that weird mural on the corner that isn't in any guidebook. I also wanted the AI locals to speak with the local accent for the most realistic and human-like experience.

What it does

LocalHere is a voice-first AI guide for NYC, Boston, and Nashville, with more cities planned. It provides:

- Voice Conversations: Talk naturally to AI locals with authentic regional accents.

- Street Stories: Point your camera at landmarks to hear their "Only Locals" backstories.

- Locals' Lowdown: Real-time safety pulses and neighborhood vibe checks based on your exact GPS and time of day.

- Accessibility Scout: Multimodal AI analysis of building entrances and transit for accessibility features.
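To make the "exact GPS and time of day" idea concrete, here is a minimal sketch of how a pulse request could be resolved to a supported city and a time-of-day bucket. Everything in it is an assumption for illustration: the type names, the 50 km radius, the day-part boundaries, and the fixed UTC offsets (which ignore daylight saving time) are not taken from the LocalHere code.

```typescript
// Hypothetical types and values; none of these come from the LocalHere codebase.
type CityId = "nyc" | "boston" | "nashville";
type DayPart = "morning" | "afternoon" | "evening" | "late-night";

interface City {
  id: CityId;
  lat: number; // degrees
  lon: number; // degrees
  utcOffsetHours: number; // simplification: standard-time offset, ignores DST
}

const CITIES: City[] = [
  { id: "nyc", lat: 40.7128, lon: -74.006, utcOffsetHours: -5 },
  { id: "boston", lat: 42.3601, lon: -71.0589, utcOffsetHours: -5 },
  { id: "nashville", lat: 36.1627, lon: -86.7816, utcOffsetHours: -6 },
];

// Great-circle distance in kilometres (haversine formula).
function haversineKm(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const toRad = (deg: number): number => (deg * Math.PI) / 180;
  const earthRadiusKm = 6371;
  const dLat = toRad(lat2 - lat1);
  const dLon = toRad(lon2 - lon1);
  const a =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRad(lat1)) * Math.cos(toRad(lat2)) * Math.sin(dLon / 2) ** 2;
  return 2 * earthRadiusKm * Math.asin(Math.sqrt(a));
}

// Returns the closest supported city, or null if none is within maxKm.
function nearestCity(lat: number, lon: number, maxKm: number = 50): City | null {
  let best: City | null = null;
  let bestKm = Infinity;
  for (const city of CITIES) {
    const km = haversineKm(lat, lon, city.lat, city.lon);
    if (km < bestKm) {
      best = city;
      bestKm = km;
    }
  }
  return bestKm <= maxKm ? best : null;
}

// Assumed bucket boundaries; a real app would likely tune these per city.
function dayPart(localHour: number): DayPart {
  if (localHour >= 5 && localHour < 12) return "morning";
  if (localHour >= 12 && localHour < 17) return "afternoon";
  if (localHour >= 17 && localHour < 22) return "evening";
  return "late-night";
}

// Combines location and time into the context a pulse prompt could use.
function pulseContext(
  lat: number,
  lon: number,
  utc: Date
): { city: CityId; dayPart: DayPart } | null {
  const city = nearestCity(lat, lon);
  if (city === null) return null;
  const localHour = (utc.getUTCHours() + city.utcOffsetHours + 24) % 24;
  return { city: city.id, dayPart: dayPart(localHour) };
}
```

A call such as `pulseContext(40.758, -73.9855, new Date())` would resolve a Midtown Manhattan position to the NYC guide, while a position far from every supported city returns `null` so the app can say so instead of guessing.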

How I built it

- Frontend: React Native with Expo SDK 54, utilizing a "Liquid Glass" UI aesthetic.

- AI Brain: OpenAI GPT-4o for reasoning and Whisper for high-accuracy voice transcription.

- Data from Real Locals: This app would not be complete without genuine local data. Data from online forums was scraped via dedicated API services and passed through layers of fact-checking, to make the experience as authentic as possible for the supported cities.

- Vision: Google Gemini 2.5 Flash for multimodal identification of landmarks and accessibility features.

- Voice: ElevenLabs for soulful, city-specific text-to-speech synthesis.

- Backend: Express.js with TypeScript handling the AI services and audio processing.
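The pieces above suggest a three-stage voice turn on the backend: transcribe, reason, synthesize. The sketch below shows one way that flow could be wired, with each external service hidden behind a small interface. The interfaces, the `Persona` shape, and `runVoiceTurn` are hypothetical names for illustration; they are not the real OpenAI or ElevenLabs SDK calls, which would live inside the implementations of these interfaces.

```typescript
// Hypothetical interfaces: each one would wrap one of the external services listed above.
interface Transcriber {
  transcribe(audio: Uint8Array): Promise<string>; // speech-to-text
}
interface Reasoner {
  reply(systemPrompt: string, userText: string): Promise<string>; // language model
}
interface Synthesizer {
  speak(text: string, voiceId: string): Promise<Uint8Array>; // text-to-speech
}

// Assumed per-city configuration: which voice to use and how the guide should behave.
interface Persona {
  city: string;
  voiceId: string;
  systemPrompt: string;
}

interface VoiceServices {
  stt: Transcriber;
  llm: Reasoner;
  tts: Synthesizer;
}

interface TurnResult {
  transcript: string;
  replyText: string;
  replyAudio: Uint8Array;
}

// One conversational turn: user audio in, spoken reply out.
async function runVoiceTurn(
  audio: Uint8Array,
  persona: Persona,
  services: VoiceServices
): Promise<TurnResult> {
  const transcript = (await services.stt.transcribe(audio)).trim();
  if (transcript.length === 0) {
    // Avoid spending a model call and a synthesis call on silence.
    throw new Error("empty transcript");
  }
  const replyText = await services.llm.reply(persona.systemPrompt, transcript);
  const replyAudio = await services.tts.speak(replyText, persona.voiceId);
  return { transcript, replyText, replyAudio };
}
```

Keeping the services behind interfaces means the turn logic can be exercised with in-memory stubs, and a provider can be swapped without touching the Express route that calls `runVoiceTurn`.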

Challenges I ran into

The biggest challenge was low-latency audio processing on mobile. Converting high-quality voice recordings into AI-ready formats while maintaining a conversational "pulse" required fine-tuning buffer management and server-side synthesis. I also had to balance the "Only Locals" personality, ensuring the AI felt opinionated and authentic without being unhelpful.
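As an illustration of the buffer-management side of this, the sketch below re-chunks incoming audio bytes of arbitrary size into fixed-size frames, carrying any remainder over to the next call. It is not the app's actual code: the `FrameChunker` class and the frame size are assumptions. For example, 16 kHz, 16-bit mono PCM at 20 ms per frame works out to 16000 × 2 × 0.02 = 640 bytes.

```typescript
// Hypothetical helper: turns irregular incoming chunks into fixed-size frames.
class FrameChunker {
  private readonly frameBytes: number;
  private pending: Uint8Array = new Uint8Array(0); // bytes not yet part of a full frame

  constructor(frameBytes: number) {
    if (!Number.isInteger(frameBytes) || frameBytes <= 0) {
      throw new RangeError("frameBytes must be a positive integer");
    }
    this.frameBytes = frameBytes;
  }

  // Accepts a chunk of any size and returns every complete frame now available.
  push(chunk: Uint8Array): Uint8Array[] {
    const merged = new Uint8Array(this.pending.length + chunk.length);
    merged.set(this.pending, 0);
    merged.set(chunk, this.pending.length);

    const frames: Uint8Array[] = [];
    let offset = 0;
    while (merged.length - offset >= this.frameBytes) {
      frames.push(merged.slice(offset, offset + this.frameBytes));
      offset += this.frameBytes;
    }
    this.pending = merged.slice(offset); // keep the leftover for the next push
    return frames;
  }

  // Returns the trailing partial frame (possibly empty) at end of recording.
  flush(): Uint8Array {
    const rest = this.pending;
    this.pending = new Uint8Array(0);
    return rest;
  }
}
```

Smaller frames lower the delay before the first bytes can be forwarded but add per-frame overhead, so the frame size is the main knob to tune in a setup like this.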

Accomplishments that I'm proud of

I am incredibly proud of the seamless integration of vision and voice. Seeing the app identify a specific NYC storefront and immediately narrate its history in an authentic NYC accent felt like magic. I'm also proud of how user-friendly the interface is: it makes getting information direct and straightforward.

What I learned

I learned that the best AI interfaces are the ones that disappear. By removing text inputs and focusing entirely on voice and vision, the technology steps back and the experience of the city steps forward.

$$ \text{Authenticity} = \frac{\text{Local Knowledge} + \text{Real-time Context}}{\text{Tourist Traps}} $$

What's next for LocalHere

I plan to expand to more cities like Chicago and New Orleans, integrate real-time transit data APIs for even more precise "Pulse" updates, and add a "Local Meetup" feature where the AI can suggest community events happening in the next hour. I also plan to make the experience more personalized, like a digital passport: the local can give you the most authentic recommendations for a new city based on your previous experiences and preferences in other cities where you used LocalHere.

Built With

- elevenlabs

- expo.io

- express.js

- gemini

- gpt

- react
