Local’s start screen: choose your destination by tap or voice.

-

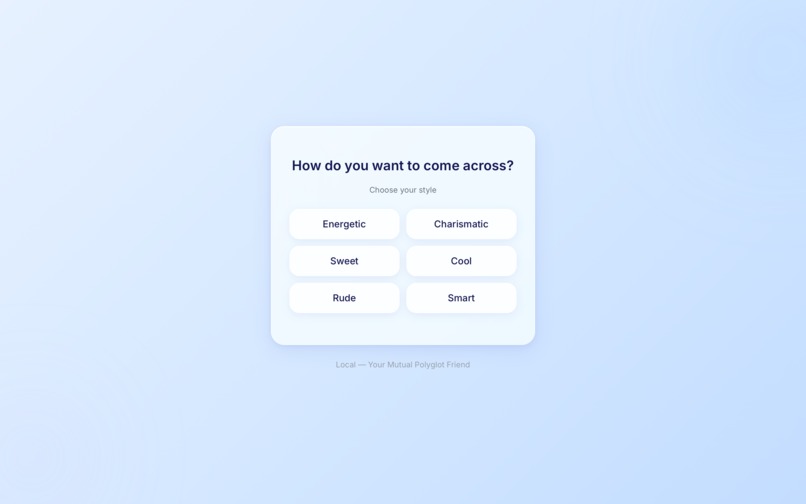

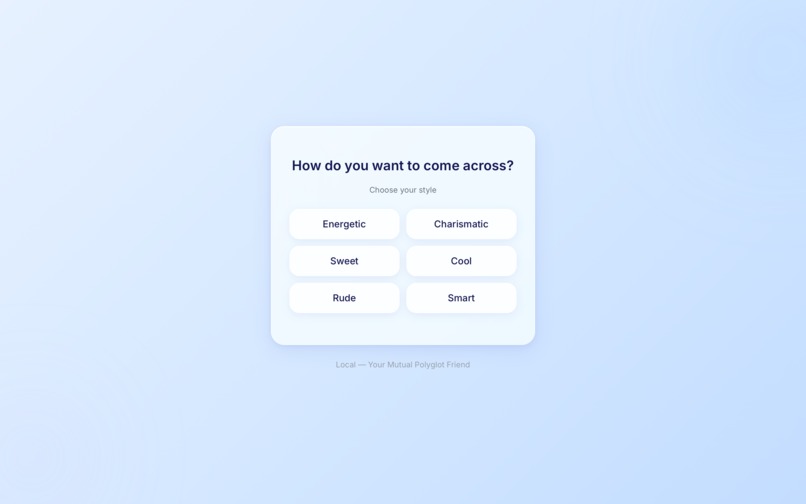

Choose how you want to come across: Energetic, Sweet, Rude, Charismatic, Cool, or Smart.

-

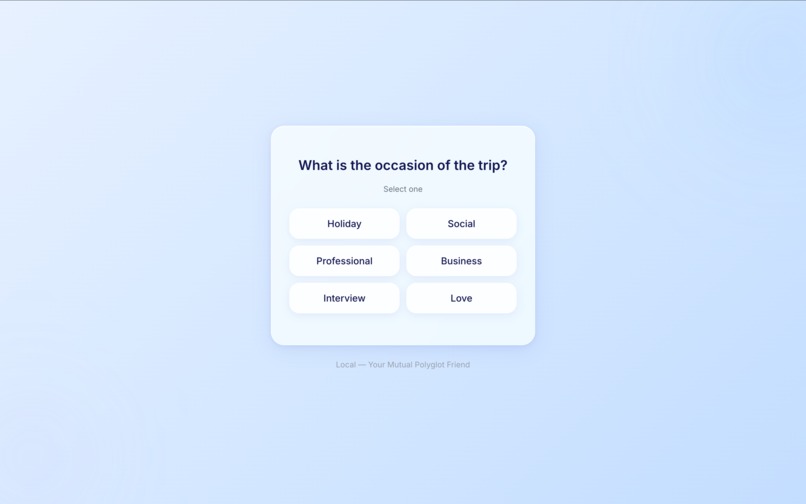

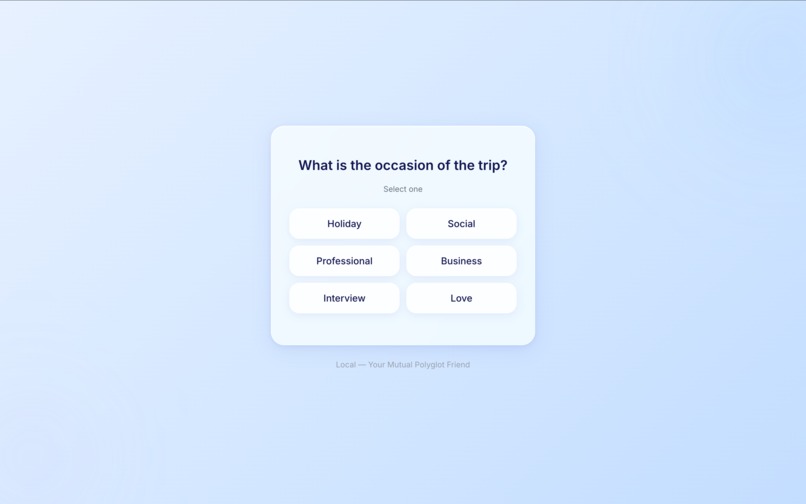

Users pick the trip occasion (e.g. Holiday, Business, Social) so Local can tailor conversation suggestions to the context.

-

Onboarding asks how easy you find pronouncing the target language (Easy / Medium / Hard) so Local can tailor practice to your level.

-

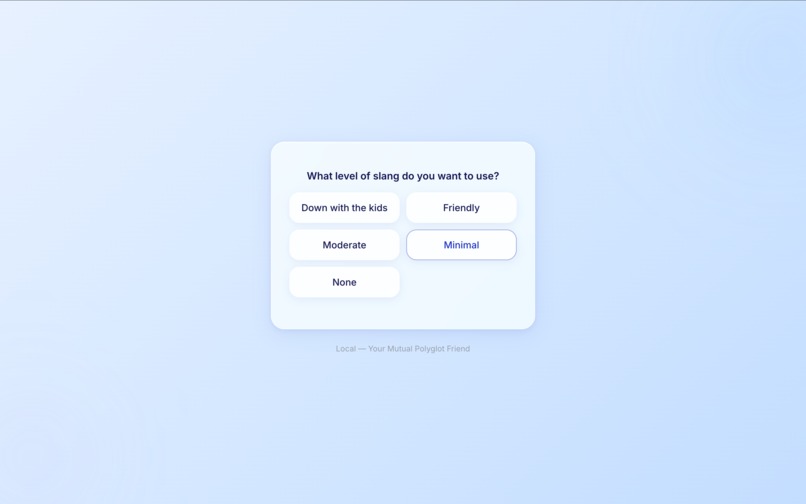

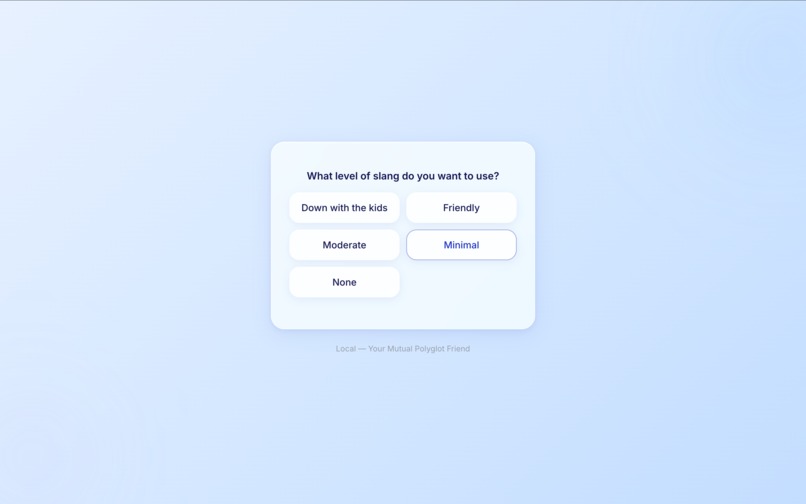

Users choose how much slang they want Local to use.

-

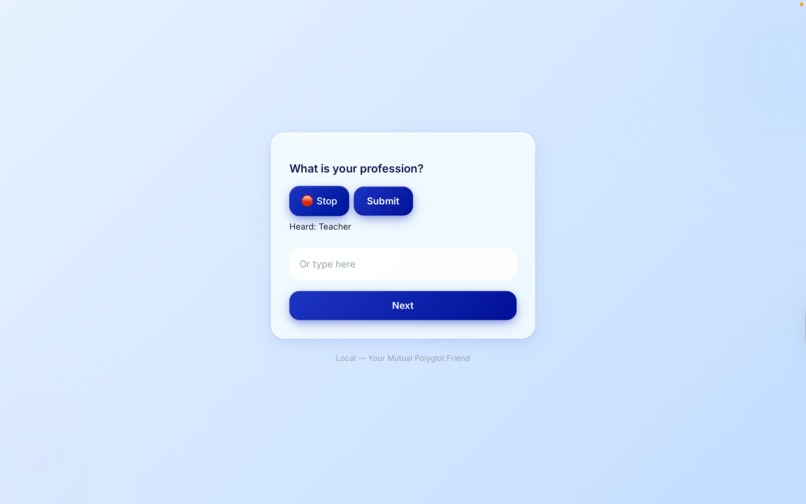

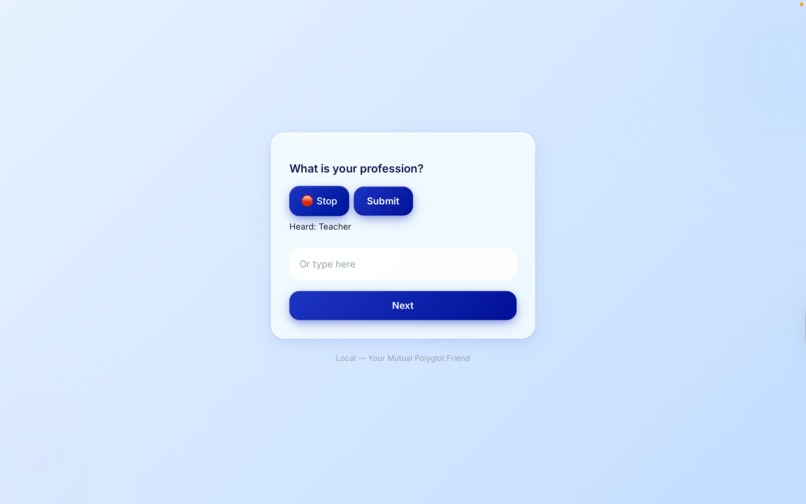

Answer “What is your profession?” by voice or text. Here, “Teacher” was heard via speech recognition; you can also type or tap Next.

-

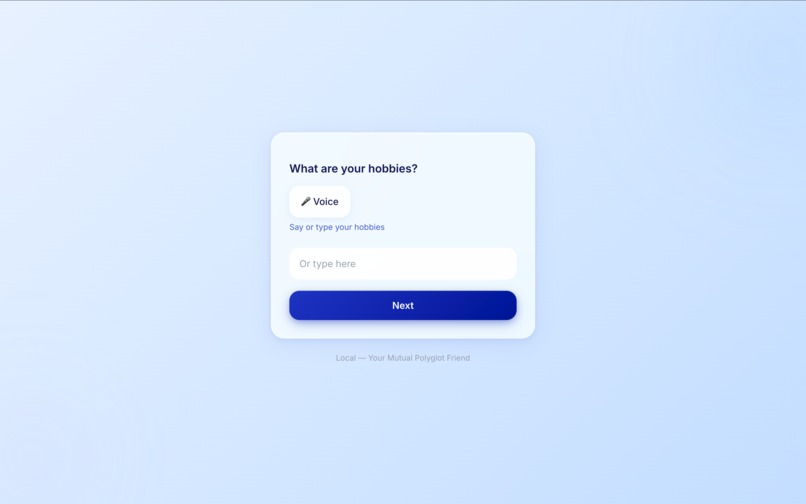

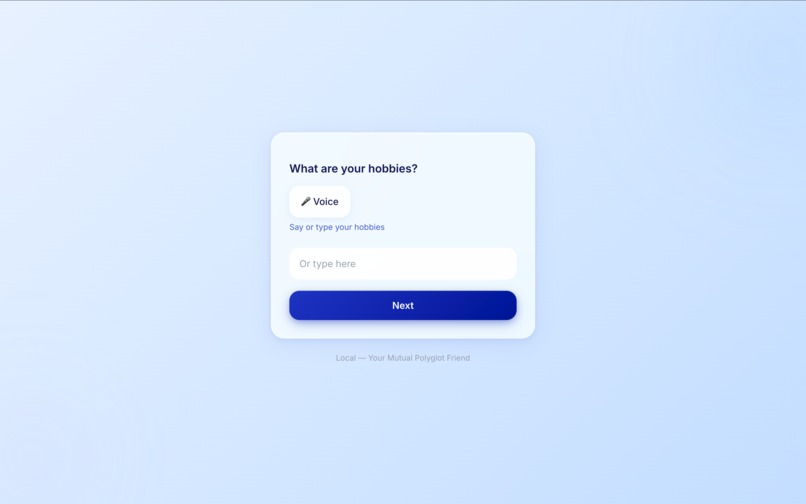

Hobbies step: say or type your interests with the Voice button or text field, then tap Next to continue onboarding.

-

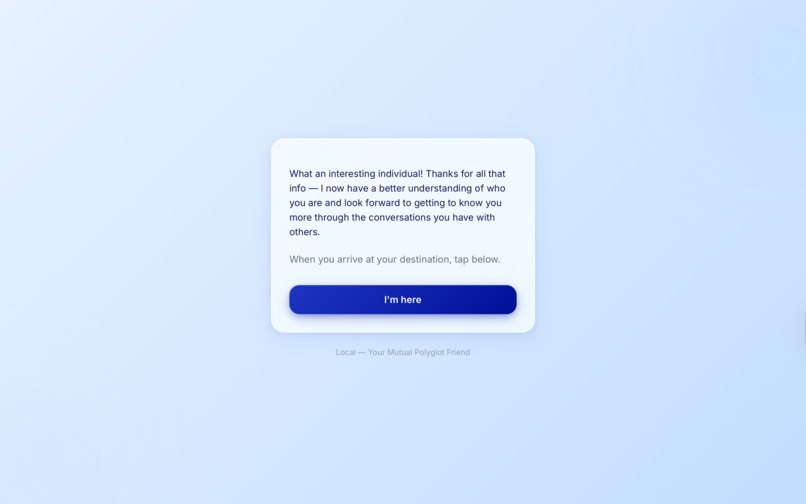

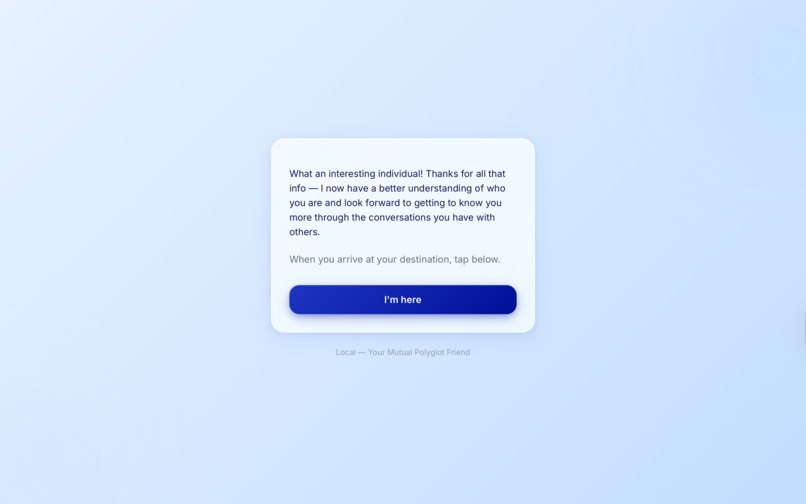

After onboarding, users see a short thank-you and the prompt “When you arrive at your destination, tap below,” then tap “I’m here” to start.

-

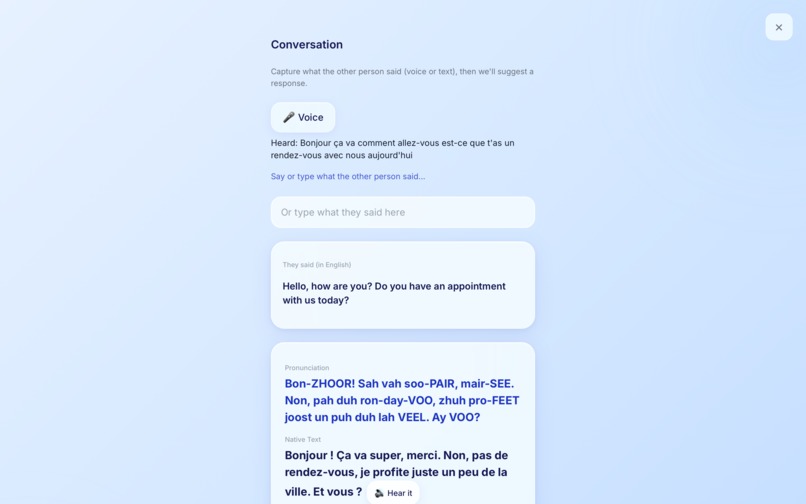

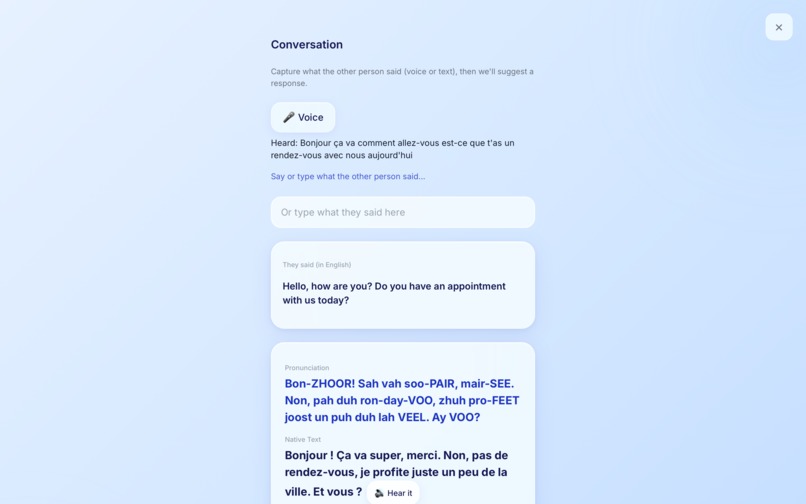

See what the other person said in English, then get a suggested reply with pronunciation, native text, and a “Hear it” option. Repeat the reply by voice or say “next.”

-

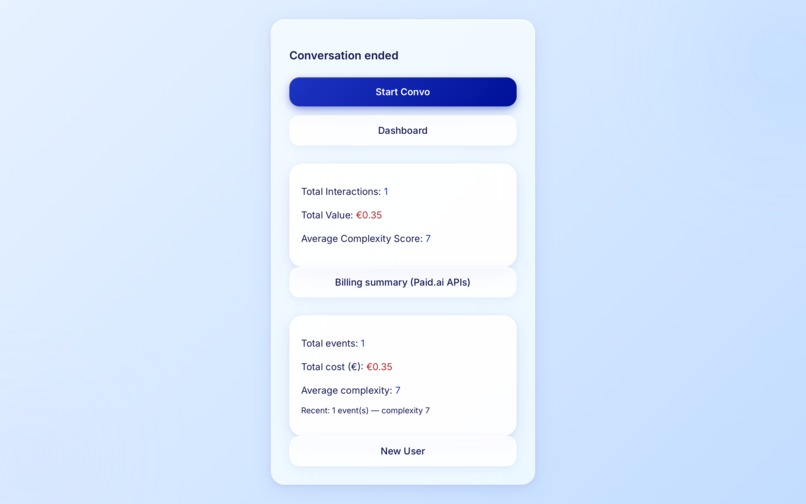

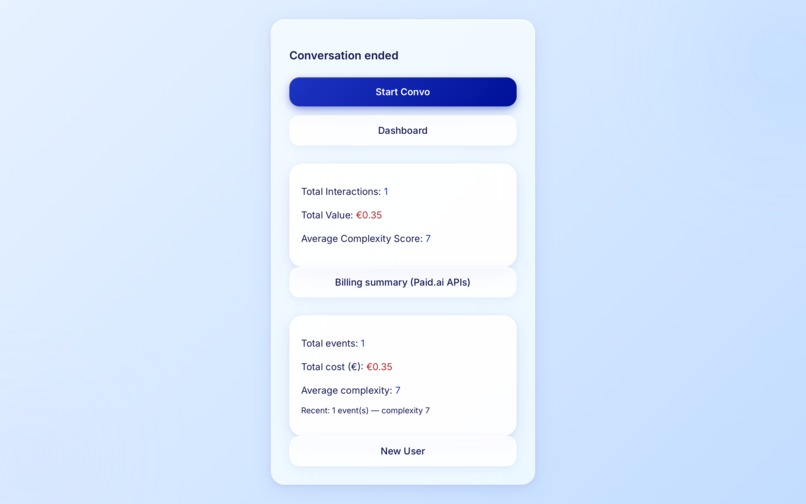

See total interactions and value, Paid.ai billing summary, and options to start a new convo, open the dashboard, or start as a new user.

Inspiration

The journey on the Eurostar from London to Paris highlighted a stark reality: navigating a new country without the language is isolating. Struggling to buy a simple data SIM card sparked the vision for Local: a mutual polyglot friend that prepares you before you travel and translates for you in real time once you arrive.

What it does

Local is an ultra-low-latency, voice-first AI language coach and live translator. Users complete a rapid onboarding flow (specifying destination, occasion, slang preferences, and profession) to dynamically shape the agent's persona. Through our continuous "Step Z" conversation loop, users speak naturally into their earphones. Local instantly replies with native spoken audio alongside a three-line UI display: phonetic spelling (for pronunciation assistance), the native text, and the English translation.
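As a rough illustration of the three-line display described above, the payload for one suggested reply could look like the sketch below. The class name `ReplyCard`, its field names, and the helper `parse_reply_card` are assumptions made for this example, not names taken from the project. The project lists pydantic, but this sketch uses standard-library dataclasses to stay dependency-free.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ReplyCard:
    """One suggested reply shown as three lines in the UI (hypothetical shape)."""

    phonetic: str  # pronunciation aid, e.g. "bon-ZHOOR"
    native: str    # text in the destination language
    english: str   # English translation

    def to_json(self) -> str:
        # ensure_ascii=False keeps accented characters readable on the wire.
        return json.dumps(asdict(self), ensure_ascii=False)


def parse_reply_card(raw: str) -> ReplyCard:
    """Check that an incoming JSON message has all three lines before display."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("reply card must be a JSON object")
    missing = {"phonetic", "native", "english"} - set(data)
    if missing:
        raise ValueError(f"reply card is missing fields: {sorted(missing)}")
    return ReplyCard(
        phonetic=str(data["phonetic"]),
        native=str(data["native"]),
        english=str(data["english"]),
    )
```

Rejecting incomplete messages at this boundary means the UI never has to render a card with a blank line.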

How we built it

We architected a Unified Agentic Workflow to eliminate the latency of traditional multi-model pipelines. The frontend uses React, Vite, and Tailwind CSS (achieving an iOS 26 liquid glass aesthetic), capturing raw audio via the Web Audio API. This audio streams over WebSockets to a Python FastAPI backend. Here, the Gemini 2.5 Live API simultaneously processes the audio and streams back synthesised voice and structured JSON via concurrent tool calls.

For the Paid.ai track, we built a server-side ValueTracker agent. Rather than measuring raw compute, it treats every completed translation loop as a billable signal, calculating a complexity_score (factoring in slang intensity and conversational turns) to dynamically price the value of the interaction.
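The write-up names the inputs to complexity_score (slang intensity and conversational turns) but not the formula, so the following is only a minimal sketch of how such outcome-based pricing might work. The weights, the base price, the 0 to 3 slang scale, and the method names `record_loop` and `total_value` are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical constants: the source does not give weights or prices.
SLANG_WEIGHT = 0.5
TURN_WEIGHT = 0.1
BASE_PRICE = 0.05  # illustrative currency units per completed loop


def complexity_score(slang_intensity: int, turns: int) -> float:
    """Score one completed translation loop (slang_intensity assumed to be 0..3)."""
    if not 0 <= slang_intensity <= 3:
        raise ValueError("slang_intensity must be between 0 and 3")
    if turns < 1:
        raise ValueError("a completed loop has at least one turn")
    # A single plain-language turn scores 1.0; slang and extra turns add to it.
    return 1.0 + SLANG_WEIGHT * slang_intensity + TURN_WEIGHT * (turns - 1)


@dataclass
class ValueTracker:
    """Accumulates billable signals for a session (sketch, not the project's class)."""

    signals: list[float] = field(default_factory=list)

    def record_loop(self, slang_intensity: int, turns: int) -> float:
        # Price the outcome, not the compute: one signal per completed loop.
        price = round(BASE_PRICE * complexity_score(slang_intensity, turns), 4)
        self.signals.append(price)
        return price

    @property
    def total_value(self) -> float:
        return round(sum(self.signals), 4)
```

Keeping the score a pure function of the loop's properties makes the pricing easy to audit and to retune without touching the tracker itself.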

Challenges we ran into

Handling raw PCM audio streams over WebSockets whilst simultaneously catching asynchronous JSON tool calls for the UI required precise concurrency management. Moving away from standard browser Speech-to-Text APIs to a fully native multimodal stream meant strictly managing buffer sizes and audio context sample rates to prevent distortion.
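To make the buffer-size and sample-rate point concrete, here is a small sketch of converting float samples (the format the Web Audio API exposes) to 16-bit PCM and cutting them into fixed-size frames. The 16 kHz rate, the 20 ms frame length, and both function names are assumptions; the source does not state the values the team used, and in practice this step may run in the browser rather than in Python.

```python
import struct
from typing import Iterator, Sequence

SAMPLE_RATE_HZ = 16_000  # assumed capture rate, not stated in the source
CHUNK_MS = 20            # assumed frame length per WebSocket message
BYTES_PER_SAMPLE = 2     # 16-bit PCM


def float_to_pcm16(samples: Sequence[float]) -> bytes:
    """Convert floats in [-1.0, 1.0] to little-endian 16-bit PCM."""
    # Clip first: values outside the range would otherwise overflow int16
    # and be heard as distortion.
    clipped = (max(-1.0, min(1.0, s)) for s in samples)
    ints = [int(round(s * 32767)) for s in clipped]
    return struct.pack(f"<{len(ints)}h", *ints)


def chunk_pcm(
    pcm: bytes,
    sample_rate: int = SAMPLE_RATE_HZ,
    chunk_ms: int = CHUNK_MS,
) -> Iterator[bytes]:
    """Yield equal-length frames, zero-padding the last one with silence."""
    frame_bytes = sample_rate * chunk_ms // 1000 * BYTES_PER_SAMPLE
    for start in range(0, len(pcm), frame_bytes):
        frame = pcm[start:start + frame_bytes]
        yield frame.ljust(frame_bytes, b"\x00")
```

With these assumed values each frame is 640 bytes. If the sender and receiver disagree on the sample rate, the same bytes play back at the wrong speed and pitch, which is one way the distortion mentioned above can arise.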

Accomplishments that we're proud of

- Engineered a truly unified multimodal agent using Gemini Live, achieving near-instantaneous voice-to-voice translation without daisy-chaining LLMs.

- Implemented Paid.ai's agentic billing philosophy, autonomously monetising AI tasks based on the contextual complexity of the outcome.

- Built a sleek, highly responsive frontend that handles complex audio states seamlessly.

What we learned

- Latency is the killer of voice applications; raw data streaming is non-negotiable for a natural user experience.

- Ship the core operational loop first, then refine the background heuristics.

- Form teams early and choose people for both their technical skills and their character.

What's next for Local

We aim to expand the ValueTracker into a fully fledged dashboard for enterprise travel agencies. We also plan to integrate offline fallback models and utilise Gemini's multimodal vision capabilities so Local can read and translate menus or street signs in real time. Finally, we want to support more languages.

Built With

- fastapi

- gemini

- google-genai

- pydantic

- python

- react

- typescript

- uvicorn

- vite

- websockets
