-

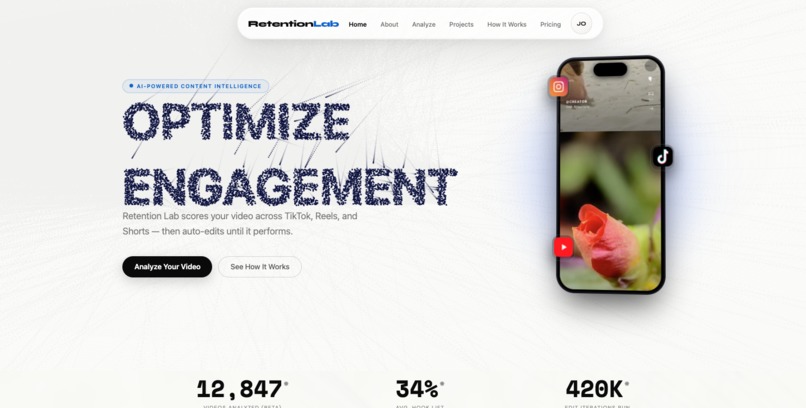

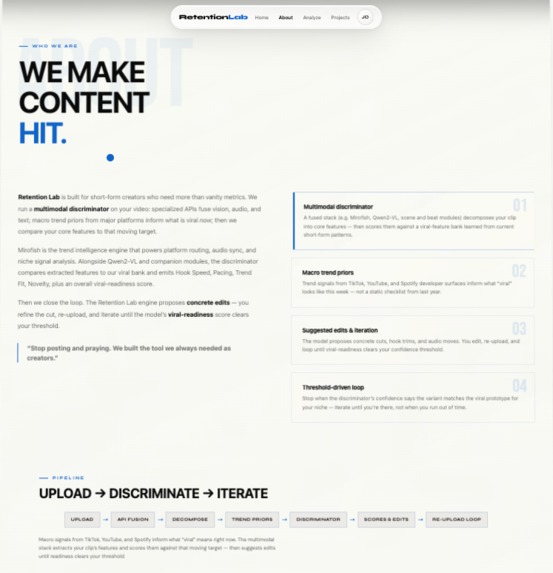

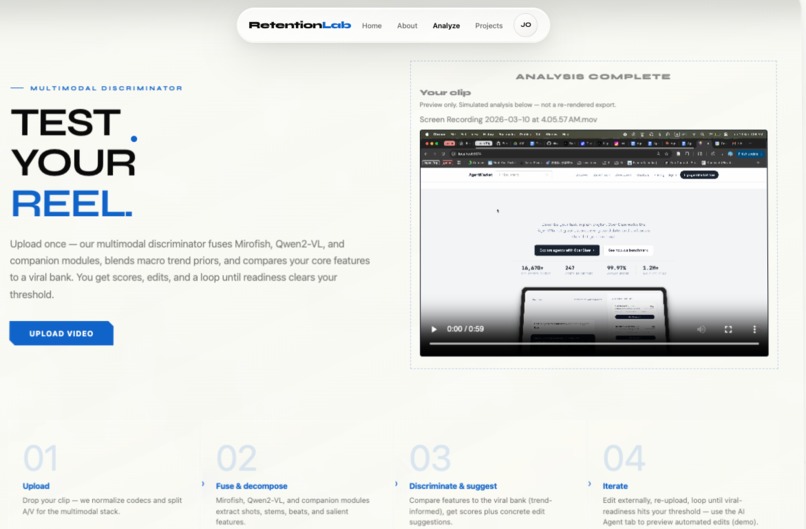

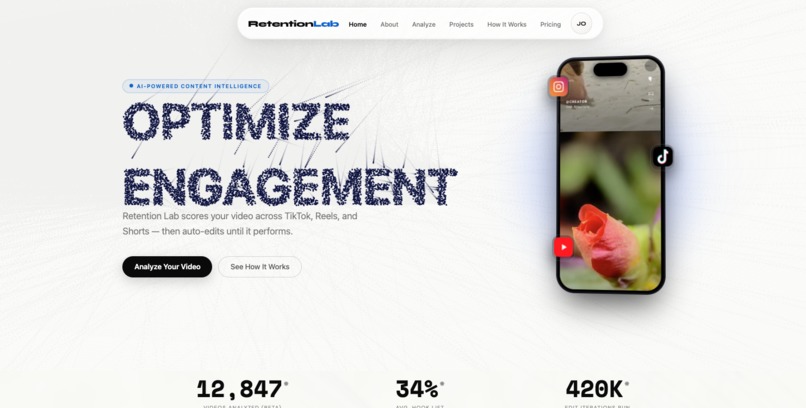

Hero landing page

-

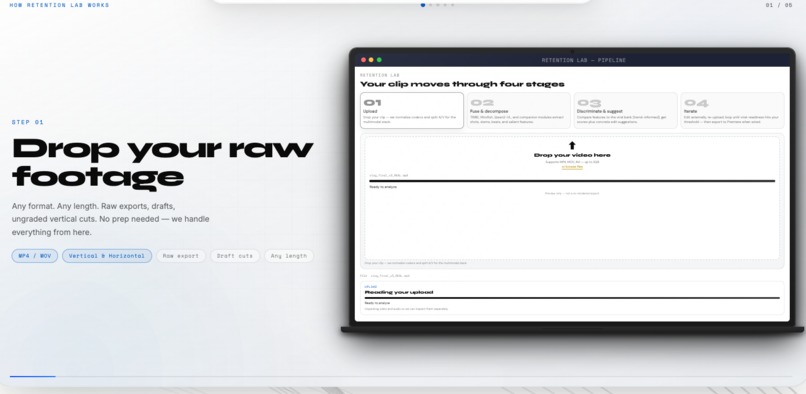

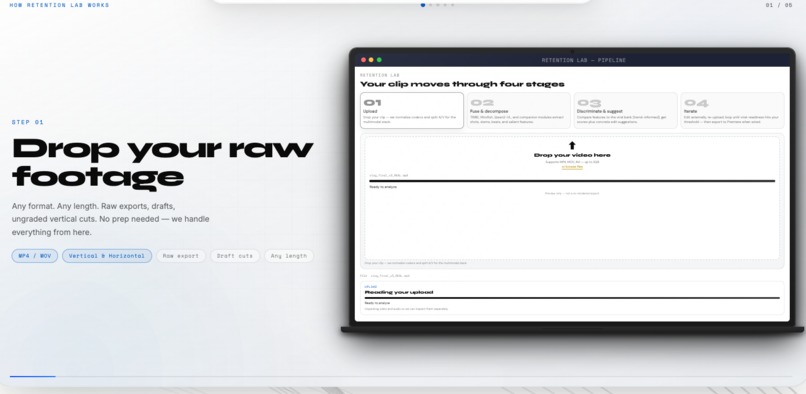

Step 1

-

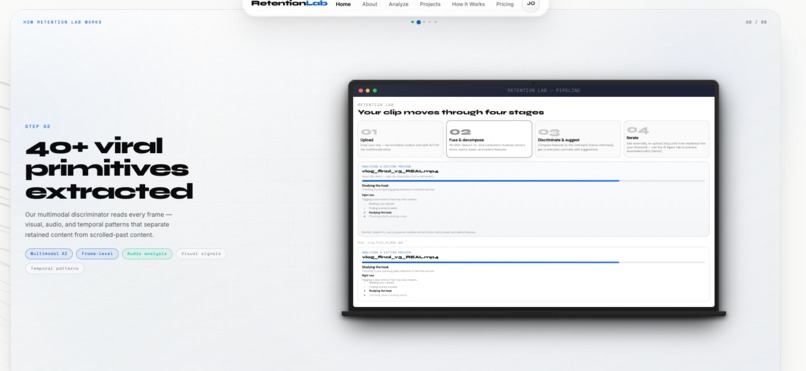

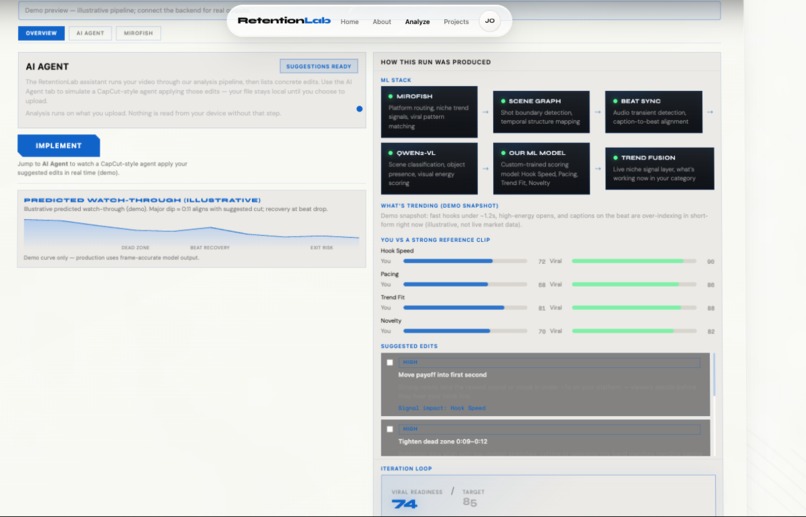

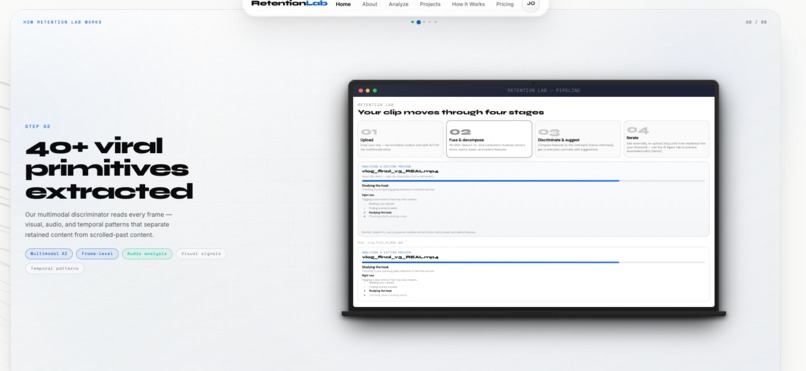

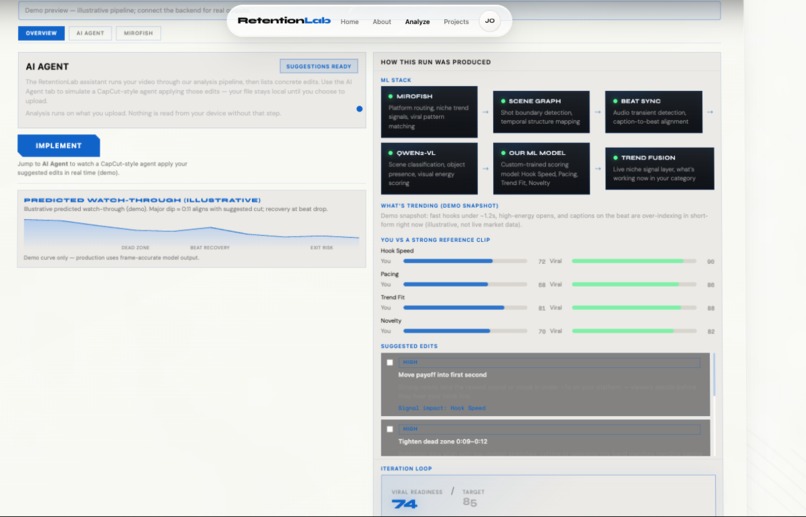

Step 2 (criteria analyzed)

-

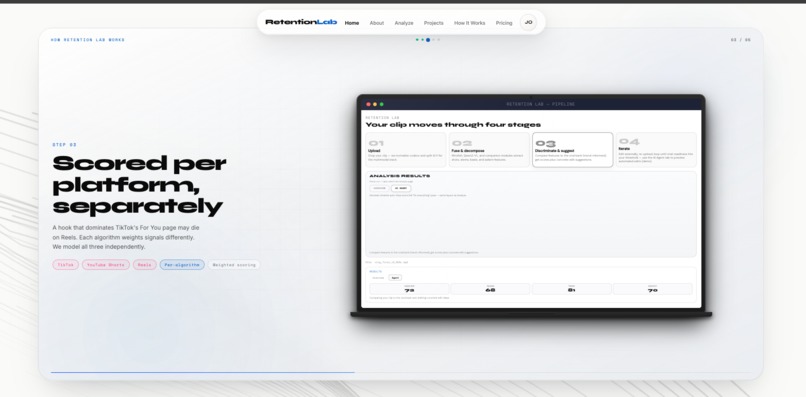

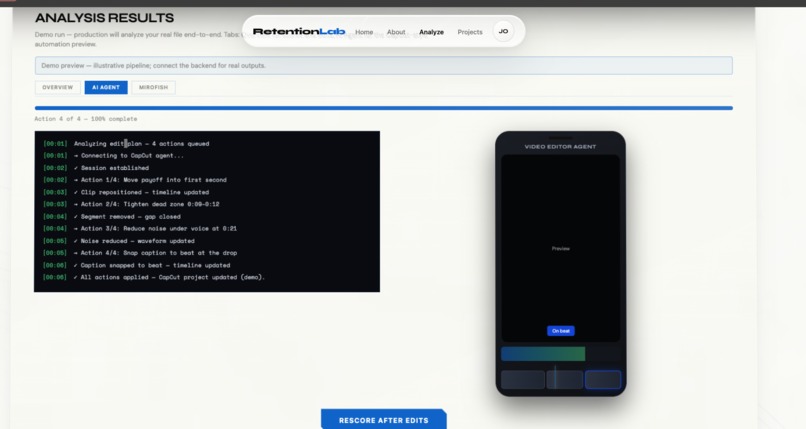

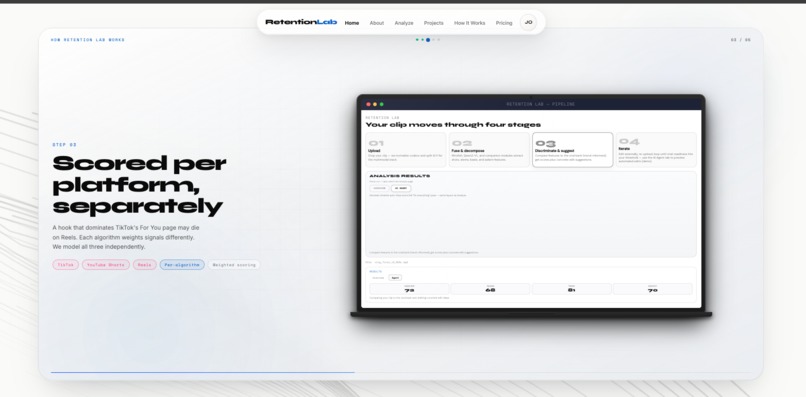

AI agent iteration

-

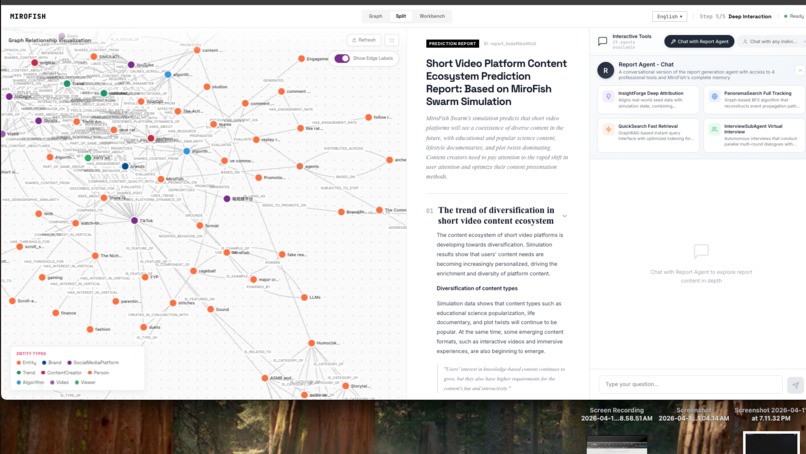

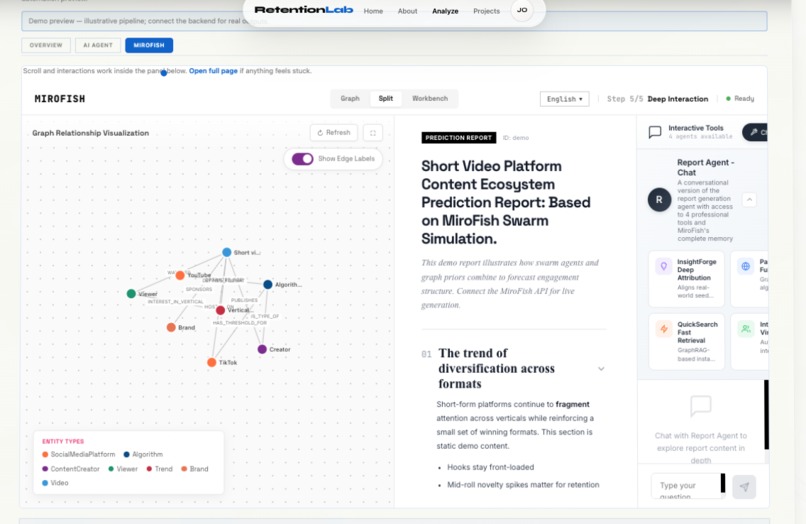

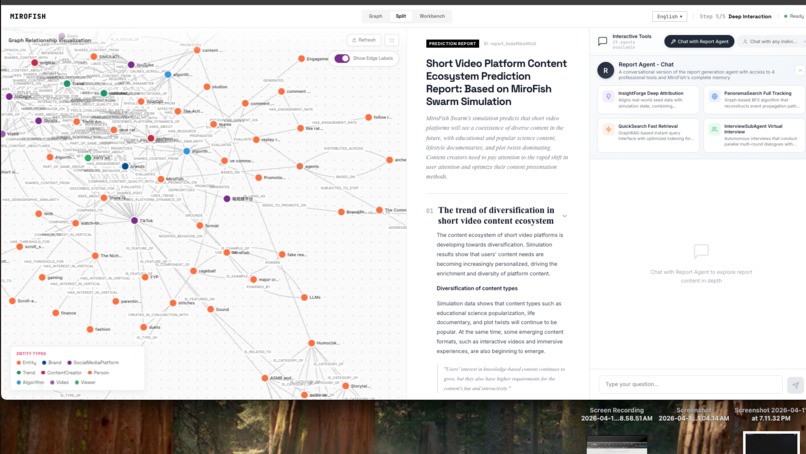

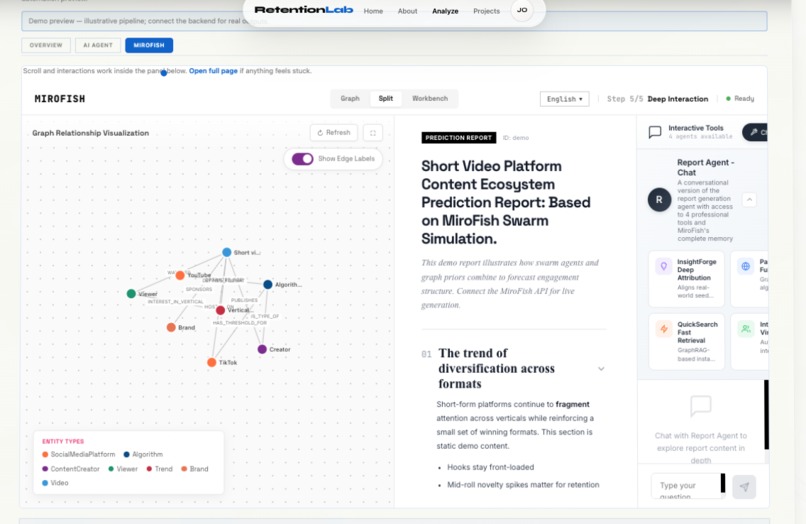

Mirofish analysis report

-

About Us page

-

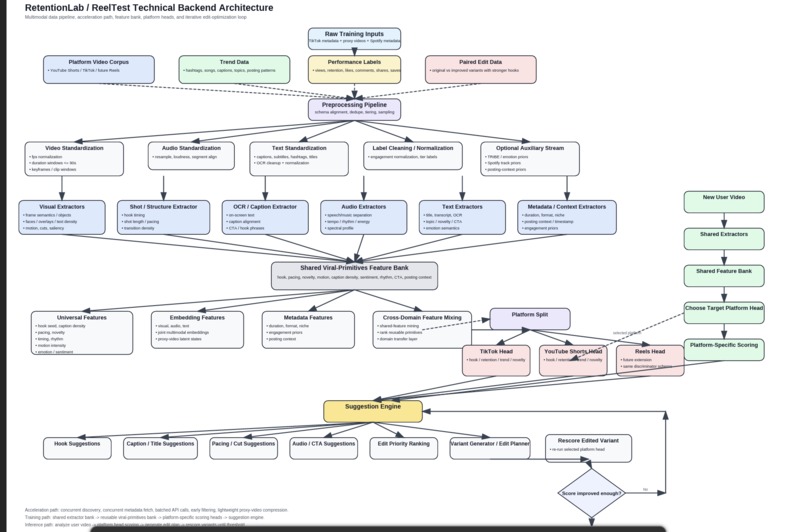

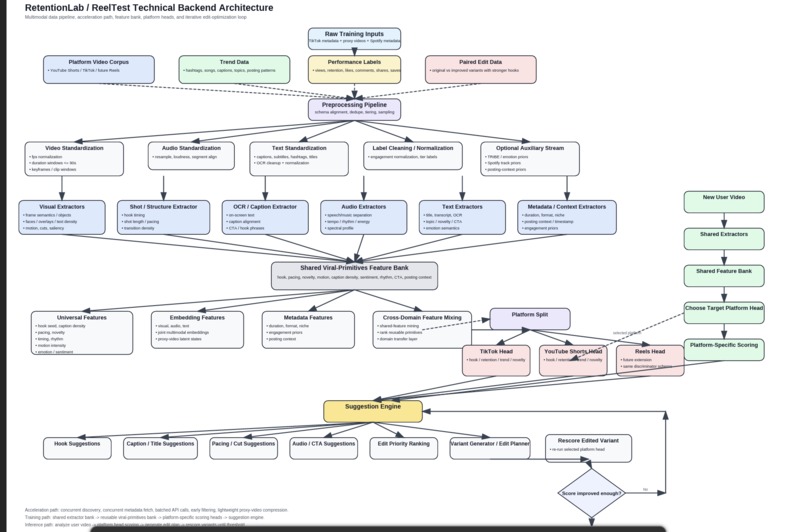

ML and backend technical chart

-

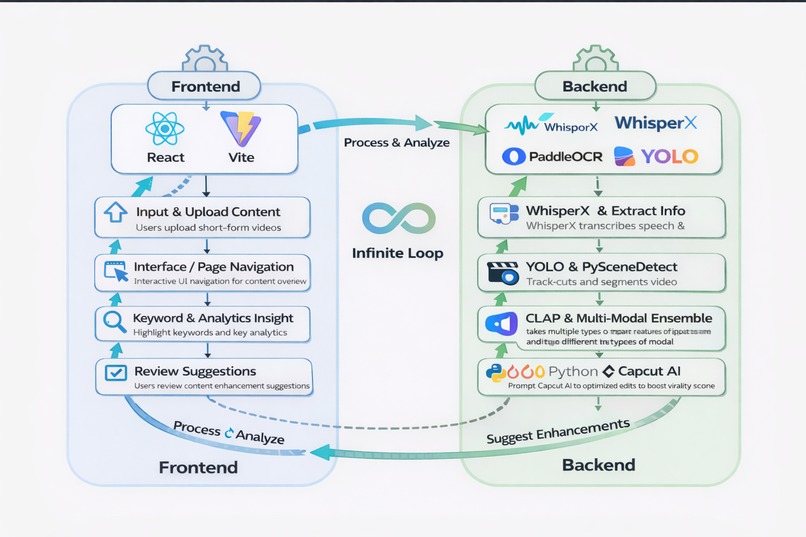

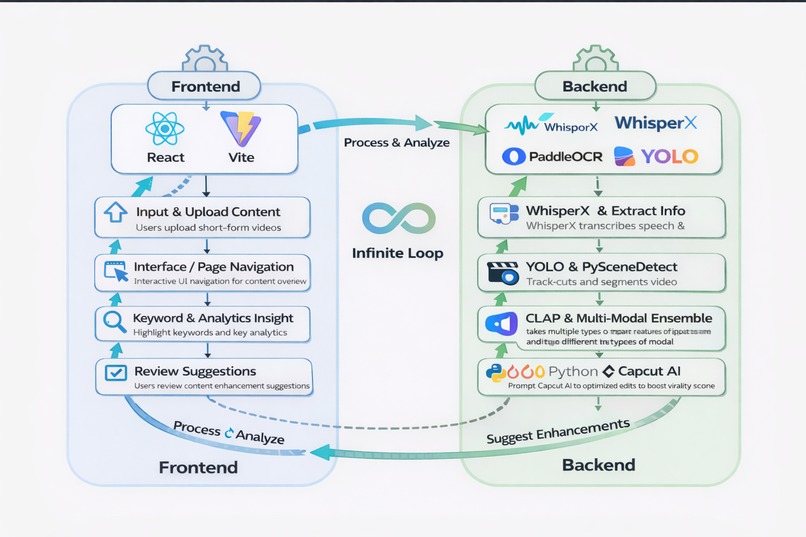

Our backend pipeline/workflow

-

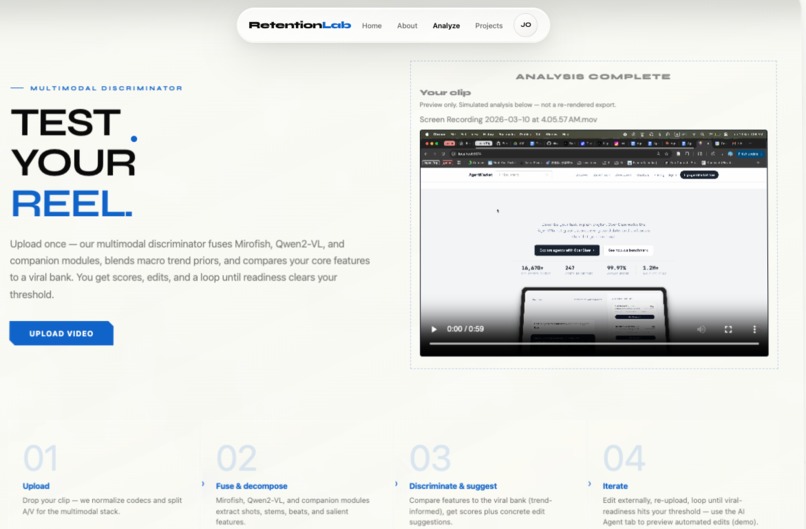

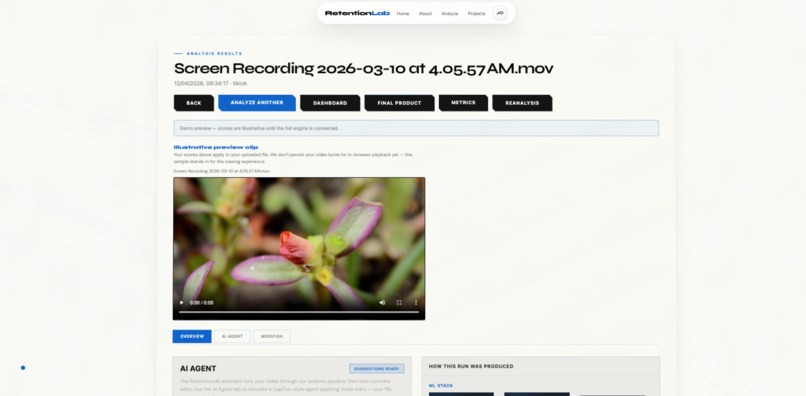

Upload video

-

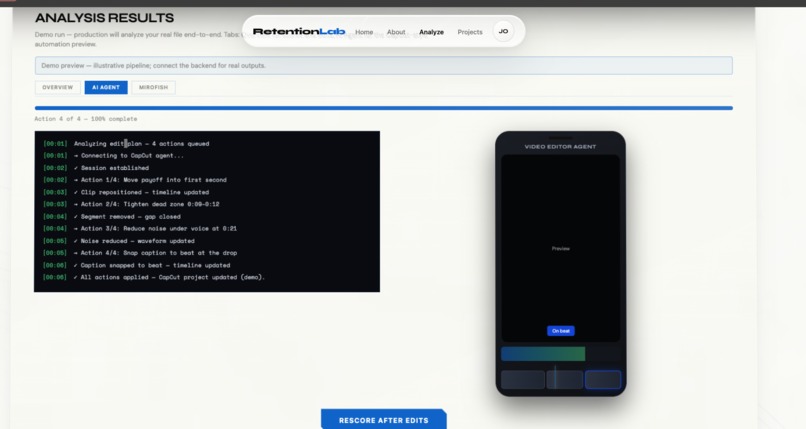

Live edit process for the AI agent editor

-

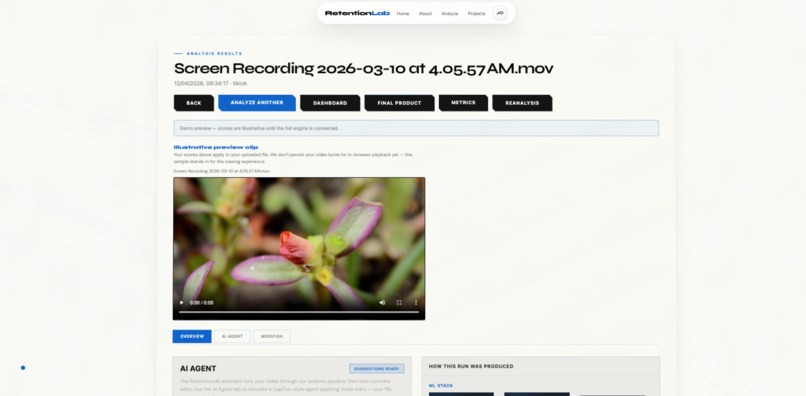

Analysis results

-

Mirofish integration

-

Final video analysis

-

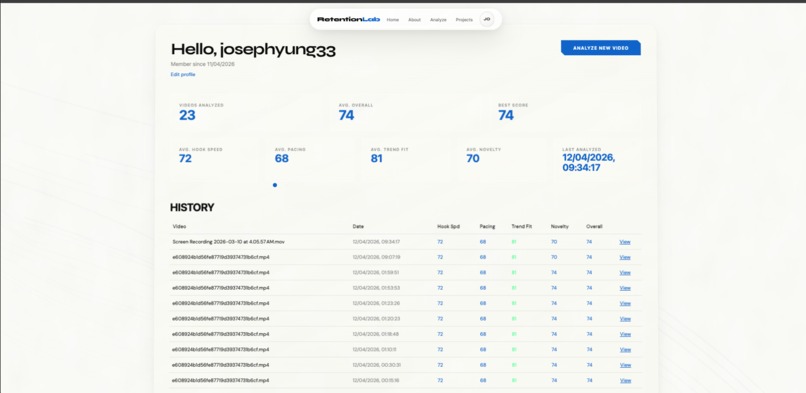

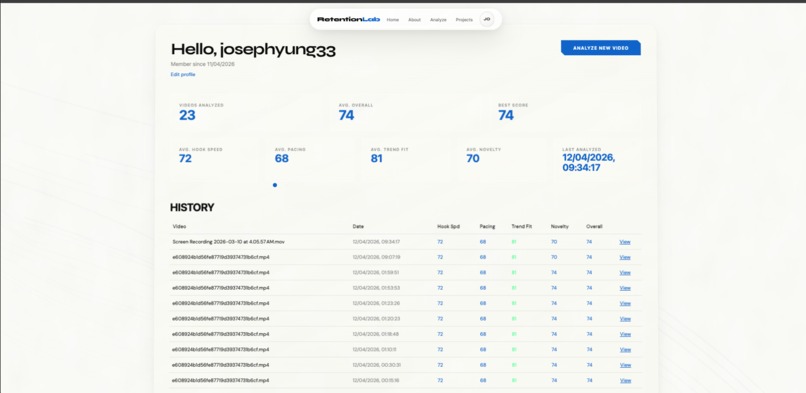

Profile Dashboard

-

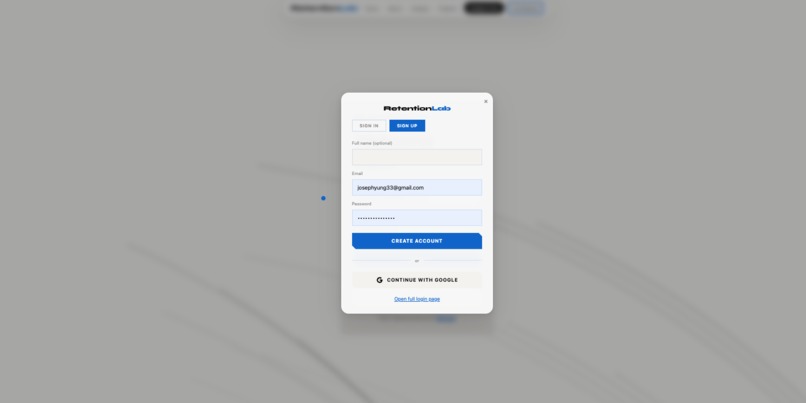

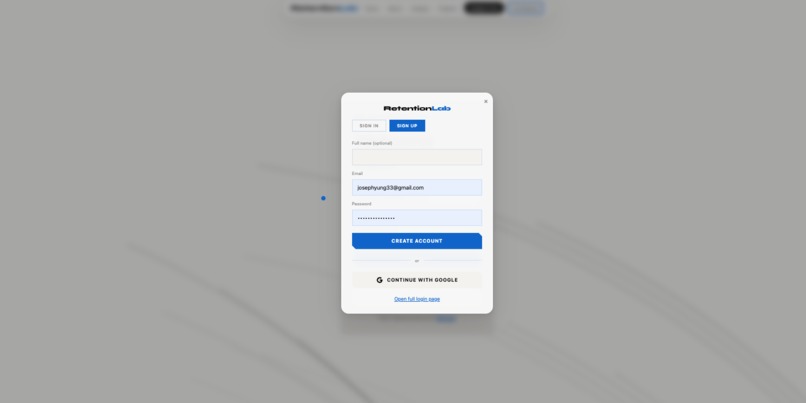

Account creation with Google Auth integration

Inspiration

Every creator has posted a video they thought would blow up — and watched it die at 200 views. The difference between a video that gets 500 views and one that gets 5 million isn't talent. It's measurable. Hook timing, pacing rhythm, trend alignment, visual novelty — these are signals that top creators optimize intuitively, but nobody had built a tool that quantifies them before you post. We built Retention Lab to change that. Upload your raw footage, get a viral readiness score across four key signals, see exactly what's dragging your score down, and let an AI agent fix it directly in your video editor.

What It Does

Retention Lab is a multimodal AI analysis platform for short-form video that handles the technical craft of editing — so creators can focus entirely on content.

The structural work of video editing — hook timing, cut rhythm, beat alignment, caption placement, dead zone removal — is measurable and automatable. It doesn't require creative judgment. It requires pattern recognition against what works. That's what our pipeline does.
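
To make "measurable" concrete, here is a minimal sketch of one such structural check: finding dead zones in a per-frame loudness curve. It assumes the loudness is already sampled in dB at a fixed hop. The function name, the -40 dB threshold, and the 0.7 s minimum duration are hypothetical and do not come from our pipeline. The sketch only looks at audio. A real dead-zone detector would presumably also consider visual motion.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class DeadZone:
    start: float  # seconds
    end: float    # seconds


def _close_run(zones: List[DeadZone], start_idx: int, end_idx: int,
               hop: float, min_duration: float) -> None:
    # Convert a run of quiet frame indices to seconds; keep it only if long enough.
    start, end = start_idx * hop, end_idx * hop
    if end - start >= min_duration:
        zones.append(DeadZone(start, end))


def find_dead_zones(loudness_db: Sequence[float], hop_seconds: float,
                    threshold_db: float = -40.0,
                    min_duration: float = 0.7) -> List[DeadZone]:
    """Return spans where loudness stays below threshold_db for >= min_duration s."""
    zones: List[DeadZone] = []
    run_start = None  # frame index where the current quiet run began
    for i, level in enumerate(loudness_db):
        if level < threshold_db:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            _close_run(zones, run_start, i, hop_seconds, min_duration)
            run_start = None
    if run_start is not None:  # quiet run extends to the end of the clip
        _close_run(zones, run_start, len(loudness_db), hop_seconds, min_duration)
    return zones
```

A call such as `find_dead_zones(curve, hop_seconds=0.1)` returns candidate segments that an editor (or agent) could trim.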

You upload your footage. Within 60 seconds you have four calibrated scores, a ranked edit plan, and a direct comparison against top-performing content in your niche. The AI agent then executes every technical edit autonomously: restructuring your opening, cutting dead zones, snapping cuts to beat transients, and placing captions at drop points. It rescores, iterates, and surfaces the best variants once the composite score crosses the 82/100 threshold.
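
"Snapping cuts to beat transients" can be illustrated with a small sketch. Each cut moves to the nearest beat, but only if that beat is close enough. Beat timestamps are assumed to come from an upstream onset/beat detector and to be sorted. The function name and the 120 ms tolerance are hypothetical choices for illustration.

```python
from bisect import bisect_left
from typing import List, Sequence


def snap_cuts_to_beats(cuts: Sequence[float], beats: Sequence[float],
                       max_shift: float = 0.12) -> List[float]:
    """Move each cut (seconds) to its nearest beat if within max_shift seconds.

    `beats` must be sorted ascending; cuts with no nearby beat are left unchanged.
    """
    snapped = []
    for cut in cuts:
        i = bisect_left(beats, cut)
        # Only the beats immediately before and after the cut can be nearest.
        candidates = [beats[j] for j in (i - 1, i) if 0 <= j < len(beats)]
        nearest = min(candidates, key=lambda b: abs(b - cut), default=None)
        if nearest is not None and abs(nearest - cut) <= max_shift:
            snapped.append(nearest)
        else:
            snapped.append(cut)
    return snapped
```

The tolerance matters here. Without it, a cut placed deliberately between beats would be dragged a long way and could clip speech.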

What that frees up is significant. Editors and creators currently spend the majority of their production time on technical optimization — trimming, pacing, syncing — work that is repetitive, rules-based, and increasingly solvable by AI. Retention Lab compresses that work from hours to minutes. A creator who used to spend 4 hours on a single short now spends 20 minutes on the content itself and lets the agent handle the rest.

The creative decisions — what to say, how to say it, what story to tell — remain entirely human. Everything structural that sits between that creative vision and a video that actually retains viewers is now automated.

That's the shift. From editors spending time on craft mechanics, to creators spending time on ideas.

How We Built It

Frontend: React + Vite. The analysis dashboard shows your four signal scores, a You vs. Viral reference comparison, the Mirofish trend intelligence panel, and the AI Agent view with a live terminal log and a synchronized mobile editor simulation.

Backend pipeline, in five stages:

Stage 1: WhisperX + PaddleOCR + YOLO. On upload, WhisperX transcribes all speech and extracts word-level timestamps. PaddleOCR reads any on-screen text and captions. YOLO runs object detection and tracks subject presence across frames.

Stage 2: YOLO + PySceneDetect. PySceneDetect identifies every cut boundary in the video. Combined with YOLO's frame-level detections, this gives us a scene graph: a temporal map of what is happening when, and how fast the visual content is changing.

Stage 3: CLAP + multimodal ensemble. CLAP (Contrastive Language-Audio Pretraining) analyzes the audio track: energy levels, beat positions, music-speech separation, and audio-visual sync. The multimodal ensemble combines visual features, audio features, transcript data, and scene structure into a unified feature vector.

Stage 4: our custom ML scoring model. RetentionLab uses a multimodal discriminator pipeline to predict short-form video virality. Internal extractors analyze the uploaded video across three groups of features:

- Visual: scene detection, motion energy, face/emotion detection.
- Audio: transcription via Whisper, loudness curves, silence detection.
- Temporal: hook windows at 1s/3s/5s, pacing, dead zones.

These feed into MiroFish, a multi-agent social simulation in which 150 synthetic personas (casual scrollers, creators, critics) react to the content over multiple rounds. All features are merged into a 64-dimensional vector. An ONNX discriminator scores that vector across four weighted signals (hook, retention, trend, and novelty) to produce a composite virality score.
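
The final step of Stage 4 combines four signal scores into one composite. A minimal sketch of that combination step is below, assuming each signal is already on a 0-100 scale. The weights are hypothetical placeholders, not the discriminator's actual learned weighting, and the ONNX model itself is not reproduced.

```python
from typing import Dict

# Hypothetical weights for illustration only; the real weighting is not published here.
SIGNAL_WEIGHTS: Dict[str, float] = {
    "hook": 0.35,
    "retention": 0.30,
    "trend": 0.20,
    "novelty": 0.15,
}


def composite_score(signals: Dict[str, float],
                    weights: Dict[str, float] = SIGNAL_WEIGHTS) -> float:
    """Weighted average of per-signal scores (0-100), rounded to one decimal."""
    missing = set(weights) - set(signals)
    if missing:
        raise ValueError(f"missing signal scores: {sorted(missing)}")
    total_weight = sum(weights.values())
    score = sum(weights[name] * signals[name] for name in weights) / total_weight
    return round(score, 1)
```

For example, `composite_score({"hook": 80, "retention": 70, "trend": 60, "novelty": 90})` gives 74.5 with these placeholder weights. Dividing by the total weight keeps the result on the 0-100 scale even if a trend layer reweights one signal at runtime.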

Stage 5: Mirofish trend layer + CapCut AI agent. Mirofish provides live niche signal data: trending audio types, caption styles, opening formats, and the hook timing of top performers in your category this week. These signals reweight the Trend Fit score in real time, so your results reflect current platform behavior rather than a static training set.

Once scoring is complete, a Python orchestration layer takes the ranked edit plan and opens an autonomous execution loop against the CapCut AI agent. Each edit is translated into a structured prompt (move this clip, cut this segment, snap this cut to the nearest beat transient, insert a caption at this timestamp) and dispatched sequentially. The agent executes and confirms, and the orchestrator advances to the next action.

What makes this technically non-trivial is what happens after execution. The edited video is automatically re-exported and fed back into the full pipeline (Stages 1 through 4) for a fresh scoring pass. The loop runs iteratively: score, edit, re-export, rescore, edit again. Each iteration targets the highest-delta edits remaining. The system tracks the score trajectory across runs, detects diminishing returns, and halts when the marginal gain per edit drops below a threshold. When the composite score crosses 82/100, the loop exits.

Rather than returning a single output, the system surfaces every variant generated across iterations as a branching decision tree. Each branch represents a distinct edit path with its own score profile across all four signals. You review them side by side and select the version that best matches your intent.
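
The control flow of that loop can be sketched as follows. `score_fn` stands in for Stages 1-4, and `edit_fn` stands in for the agent applying the next batch of edits and re-exporting. Both are hypothetical callables, not our actual interfaces. The 82/100 exit comes from the description above. The 0.5-points-per-edit cutoff and the iteration cap are assumptions. For simplicity the sketch keeps a linear history of variants rather than the branching tree described above.

```python
from typing import Callable, List, Tuple

TARGET_SCORE = 82.0       # exit threshold from the writeup
MIN_GAIN_PER_EDIT = 0.5   # hypothetical diminishing-returns cutoff
MAX_ITERATIONS = 10       # hypothetical safety cap


def optimize(video: str,
             score_fn: Callable[[str], float],
             edit_fn: Callable[[str, int], Tuple[str, int]]) -> List[Tuple[str, float]]:
    """Score, edit, re-export, rescore until the target is hit or gains flatten.

    edit_fn(video, iteration) -> (new_video, number_of_edits_applied).
    Returns every (variant, score) pair so all variants can be reviewed.
    """
    variants = [(video, score_fn(video))]
    for iteration in range(MAX_ITERATIONS):
        current, current_score = variants[-1]
        if current_score >= TARGET_SCORE:
            break  # good enough: stop editing
        new_video, n_edits = edit_fn(current, iteration)
        if n_edits == 0:
            break  # agent had nothing left to apply
        new_score = score_fn(new_video)
        variants.append((new_video, new_score))
        if (new_score - current_score) / n_edits < MIN_GAIN_PER_EDIT:
            break  # diminishing returns
    return variants
```

Measuring gain per edit, rather than gain per iteration, stops the loop from rewarding large batches of edits that each contribute very little.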

Challenges We Ran Into

We had the most trouble when training the ML model on videos. High-quality videos are very large files, and we needed a way to process the data quickly within the short time frame we had. Our solution is a two-tier FFmpeg compression strategy. On upload, videos are downscaled to a 480p proxy (shortest side at 480 pixels, capped at 30 fps) and encoded with H.264 (libx264) at CRF 28, with mono AAC audio at 64 kbps. This lightweight proxy feeds the feature extraction pipeline for fast analysis. When the system generates edited variants (applying trims, cuts, or text overlays), it re-encodes at CRF 18 with stereo AAC at 128 kbps, preserving near-original quality for the final output. If no edits are applied, the stream is copied directly with no re-encoding overhead.
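
The two tiers translate into FFmpeg invocations roughly like the sketch below. It only builds argument lists; you would run them with `subprocess.run(cmd, check=True)`. The function names are hypothetical. The source frame rate is assumed to be probed beforehand (for example with ffprobe), so that the proxy is capped at 30 fps without raising lower frame rates. The scale expression keeps the shortest side at 480 px, and `-2` preserves aspect ratio with an even dimension. The trim and overlay filters for edited variants are omitted.

```python
from typing import List

# Shortest side -> 480 px; -2 keeps aspect ratio and an even pixel count.
PROXY_SCALE = "scale='if(gt(iw,ih),-2,480)':'if(gt(iw,ih),480,-2)'"


def proxy_command(src: str, dst: str, src_fps: float) -> List[str]:
    """Tier 1: lightweight analysis proxy (H.264 CRF 28, mono AAC at 64 kbps)."""
    fps = min(src_fps, 30.0)  # cap at 30 fps; never raise a lower source rate
    return [
        "ffmpeg", "-y", "-i", src,
        "-vf", PROXY_SCALE,
        "-r", f"{fps:g}",
        "-c:v", "libx264", "-crf", "28",
        "-c:a", "aac", "-ac", "1", "-b:a", "64k",
        dst,
    ]


def output_command(src: str, dst: str, has_edits: bool) -> List[str]:
    """Tier 2: final output; re-encode at CRF 18 only when edits were applied."""
    if not has_edits:
        # Stream copy: no re-encoding overhead.
        return ["ffmpeg", "-y", "-i", src, "-c", "copy", dst]
    return [
        "ffmpeg", "-y", "-i", src,
        "-c:v", "libx264", "-crf", "18",
        "-c:a", "aac", "-ac", "2", "-b:a", "128k",
        dst,
    ]
```

Passing a list instead of a shell string means the single quotes in the scale expression reach FFmpeg's filtergraph parser intact, which needs them to protect the commas.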

Getting multimodal signals to agree was the hardest part of the modeling work. Audio says the pacing is fast. Vision says the motion is slow. The scene graph says there are 12 cuts. Which signal wins? We had to build a weighting system that resolves conflicts between modalities rather than blindly averaging them.

The CapCut AI agent integration required reverse-engineering a prompt structure that reliably produces timeline edits. Most prompts produce suggestions, not actions. Getting the agent to actually move clips took significant iteration.

Calibrating the scoring model against real-world virality data, where ground truth is messy, niche-dependent, and constantly shifting, meant building the Mirofish trend layer as a live reweighting mechanism rather than a static dataset.
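
One way to resolve modality conflicts instead of averaging blindly is sketched below. If the modalities roughly agree, take a confidence-weighted mean. If they disagree beyond a tolerance, trust the most confident modality and flag the conflict. This is an illustrative technique, not our actual weighting system. The `Estimate` type, the normalized 0-1 pacing values, per-modality confidences, and the 0.25 tolerance are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class Estimate:
    value: float       # normalized pacing estimate: 0 (slow) to 1 (fast)
    confidence: float  # 0 to 1: how reliable this modality is for this clip


def fuse_pacing(estimates: Dict[str, Estimate],
                tolerance: float = 0.25) -> Tuple[float, bool]:
    """Return (fused_value, conflict_flag); `estimates` must be non-empty.

    Agreement within `tolerance` -> confidence-weighted mean.
    Disagreement -> defer to the most confident modality instead of averaging.
    """
    values = [e.value for e in estimates.values()]
    if max(values) - min(values) <= tolerance:
        total = sum(e.confidence for e in estimates.values())
        if total == 0:
            return sum(values) / len(values), False  # no confidence info: plain mean
        fused = sum(e.value * e.confidence for e in estimates.values()) / total
        return fused, False
    best = max(estimates.values(), key=lambda e: e.confidence)
    return best.value, True
```

The conflict flag is useful on its own: a clip where audio and vision disagree sharply is a natural candidate for a closer look in the edit plan.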

Accomplishments We're Proud Of

- A fully working end-to-end pipeline: raw video in, scored edit plan out, agent executes
- Four signals that are genuinely predictive and explainable, not a black-box "viral score"
- The AI Agent view, where you watch the agent edit your video in real time
- Mirofish integration that makes the scoring context-aware rather than just pattern-matching against a static dataset

What We Learned

Virality is more mechanical than most creators believe. The signals that separate retained content from scrolled-past content are measurable at the frame level. Hook timing within the first second, cut alignment to audio transients, caption placement at beat drops — these are not aesthetic judgments. They are engineering problems.

What's Next

- Native Premiere Pro panel (in development): apply edits without leaving your NLE
- Platform-specific scoring heads: separate scoring models for TikTok, YouTube Shorts, and Reels
- Batch analysis for agencies managing multiple creators
- Watch-through prediction curve: frame-accurate model output showing exactly where viewers drop off

Tech Stack

React, Vite, Python, WhisperX, PaddleOCR, YOLO, PySceneDetect, CLAP, CapCut AI Agent, Mirofish API, custom ML scoring model
