Inspiration

It is often hard to keep track of who owes what to whom. You split a meal with a bunch of friends. You go on a hiking trip with your roommates, everyone pays for different things, and the final cost has to be sorted out afterwards. We wanted to build an app that keeps track of all the daily transactions that need to be split.

What it does

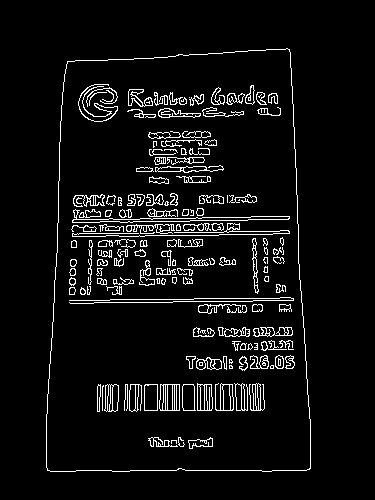

Ledger lets users log in with their Facebook account. Adding a transaction is as easy as writing down a reminder, and splitting the bill takes a few taps. Payment is also simple: Ledger can pay bills through either PayPal or Venmo. The app also supports OCR (optical character recognition): you can take a picture of any receipt and the app recognizes and parses the amounts. Tap the scanned receipt to add the amounts displayed on it.

How we built it

We built the app with state-of-the-art technology to ensure a beautiful, easy and secure user experience. The stack includes Node.js running on Microsoft Azure, the restify framework, Swift and multiple APIs. OCR is done with Tesseract OCR and OpenCV.

Challenges we ran into

This was the first time we had to use OCR. Although the Tesseract OCR library is already very mature and is used commercially by companies such as HP, the image preprocessing still posed a challenge for us. We needed to transform the image from the phone's camera into a clean, high-contrast image from which Tesseract can easily extract text.

Deploying our backend also took more effort than we expected. The backend is built with Node.js and needs to be integrated with the OCR code (written in Python), among other things. Deployment and unit testing were also very time-consuming.

Accomplishments that we're proud of - OCR (Optical Character Recognition)

Using computer vision and image processing techniques, we were able to recognize and parse items in a receipt so that users don't have to manually input the numbers.

We used the OpenCV library to generate a scanned version of the picture of the receipt.

- Step 1: Take in the image and convert to grayscale

- Step 2: Use Canny edge detection to detect edges

- Step 3: Find the largest contour

- Step 4: Apply a perspective transform, then a threshold function to sharpen the contrast between black and white pixels.
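The four steps above could be sketched roughly as follows in Python with OpenCV. This is a minimal illustration, not our exact code: the function names `order_corners` and `scan_receipt`, the Gaussian blur, the Canny thresholds (50/150), the polygon approximation tolerance and the choice of Otsu thresholding are all assumptions made for the example.

```python
import math


def order_corners(points):
    """Order four (x, y) points as top-left, top-right, bottom-right, bottom-left."""
    pts = [tuple(map(float, p)) for p in points]
    top_left = min(pts, key=lambda p: p[0] + p[1])
    bottom_right = max(pts, key=lambda p: p[0] + p[1])
    top_right = max(pts, key=lambda p: p[0] - p[1])
    bottom_left = min(pts, key=lambda p: p[0] - p[1])
    return [top_left, top_right, bottom_right, bottom_left]


def scan_receipt(path):
    """Return a flattened, black-and-white version of the receipt photo at `path`."""
    # Imported here so order_corners stays usable without OpenCV installed.
    import cv2
    import numpy as np

    image = cv2.imread(path)
    if image is None:
        raise FileNotFoundError(path)

    # Step 1: grayscale (the light blur is an assumed extra to reduce noise).
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    blurred = cv2.GaussianBlur(gray, (5, 5), 0)

    # Step 2: Canny edge detection; the thresholds are guesses.
    edges = cv2.Canny(blurred, 50, 150)

    # Step 3: largest contour, approximated to a quadrilateral.
    # [-2] picks the contour list on both OpenCV 3.x and 4.x.
    contours = cv2.findContours(edges, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]
    if not contours:
        raise ValueError("no contours found")
    largest = max(contours, key=cv2.contourArea)
    approx = cv2.approxPolyDP(largest, 0.02 * cv2.arcLength(largest, True), True)
    if len(approx) != 4:
        raise ValueError("could not find a four-cornered receipt outline")

    # Step 4: perspective transform to a flat rectangle, then threshold.
    tl, tr, br, bl = order_corners(approx.reshape(4, 2))
    width = int(max(math.dist(tl, tr), math.dist(bl, br)))
    height = int(max(math.dist(tl, bl), math.dist(tr, br)))
    src = np.array([tl, tr, br, bl], dtype=np.float32)
    dst = np.array(
        [[0, 0], [width - 1, 0], [width - 1, height - 1], [0, height - 1]],
        dtype=np.float32,
    )
    matrix = cv2.getPerspectiveTransform(src, dst)
    warped = cv2.warpPerspective(gray, matrix, (width, height))
    _, binary = cv2.threshold(warped, 0, 255, cv2.THRESH_BINARY + cv2.THRESH_OTSU)
    return binary
```

The corner ordering matters because the perspective transform maps source corners to destination corners pairwise; if the order is wrong, the output comes out rotated or mirrored.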

Then we use Tesseract OCR to recognize the text in the image. It outputs an hOCR-formatted XML file with bounding boxes for all the recognized text, which is parsed to JSON and then sent to the client. The bounding boxes can be used to create clickable UI elements that retrieve the recognized text.
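As a rough sketch of the hOCR-to-JSON step, the snippet below pulls each recognized word and its bounding box out of hOCR markup using only the Python standard library. It assumes the usual hOCR convention of `ocrx_word` spans whose `title` attribute starts with `bbox x0 y0 x1 y1`; the names `HocrWords` and `hocr_to_json` and the JSON shape are made up for this example and are not necessarily what our backend sends.

```python
import json
import re
from html.parser import HTMLParser

# hOCR stores the box in the title attribute, e.g. "bbox 10 20 80 40; x_wconf 91".
BBOX = re.compile(r"bbox (\d+) (\d+) (\d+) (\d+)")


class HocrWords(HTMLParser):
    """Collect the text and bounding box of every ocrx_word span."""

    def __init__(self):
        super().__init__()
        self.words = []
        self._bbox = None  # set while we are inside a word span
        self._text = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "span" and "ocrx_word" in (attrs.get("class") or "").split():
            match = BBOX.search(attrs.get("title") or "")
            if match:
                self._bbox = [int(v) for v in match.groups()]
                self._text = []

    def handle_data(self, data):
        if self._bbox is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "span" and self._bbox is not None:
            text = "".join(self._text).strip()
            if text:
                self.words.append({"text": text, "bbox": self._bbox})
            self._bbox = None


def hocr_to_json(hocr):
    """Return a JSON array of {"text": ..., "bbox": [x0, y0, x1, y1]} objects."""
    parser = HocrWords()
    parser.feed(hocr)
    parser.close()
    return json.dumps(parser.words)
```

A client can then draw one tappable region per `bbox` on top of the scanned image and read the matching `text` when the user taps it.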

The recognition result for the image above:

----------------------------------------------

RAINBOW GARDEN 202 E. UNIVERSITY AVE. URBANA, IL 61801 (217)344-1888 www.rainbow-garden.com

Friday 2/19/2016

CHK#: 5734.2 SVR: Kevin

Table #: 61 Guest #: O M

Order Time: 02/19/2016 09:07:05 PM W

0.33 BRAISED BEEF BRISKST $ 5.33 0.33 Ant Climb Tree $ 3.65 0.33 Boild_Sole Filet Szcch Sau $ 4.98 0.33 Stir Fried Bokchoy $ 4.32 0.33 Rainbow Spare Ribs $ 4.32 0.67 PEPSI $ 1.24

02/19/2016 09:37 PM

Sub Total: $23.83 Tax: $2.22

Total: $26.05

Thank you!

----------------------------------------------
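To illustrate how amounts could be parsed out of text like the result above, here is a small hypothetical helper. The `parse_items` name and the regular expression are assumptions that fit this particular receipt layout (share, item name, `$`, price), not a general receipt parser and not necessarily how the app does it.

```python
import re
from decimal import Decimal

# One item looks like "<share> <name> $ <price>", e.g. "0.67 PEPSI $ 1.24".
ITEM = re.compile(r"(\d+\.\d{2})\s+(.+?)\s+\$\s*(\d+\.\d{2})")


def parse_items(text):
    """Return (name, share, price) tuples found in recognized receipt text."""
    return [
        (name, Decimal(share), Decimal(price))
        for share, name, price in ITEM.findall(text)
    ]
```

Prices are kept as `Decimal` rather than `float` so that later sums and splits do not pick up binary rounding errors.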

What we learned

Getting enough sleep is essential to maintaining work efficiency.

What's next for Ledger

Features we would like to add to Ledger:

- import and search friends through contacts

- multi-platform support for the app
