Inspiration

Growing up, I watched education fail the students who needed it most, not because they lacked the will to learn, but because every platform assumed they could see the screen, use a keyboard, sit still for 45 minutes, and parse a wall of text.

The numbers are not abstractions. 1 in 6 people on earth lives with a disability that makes a standard interface difficult or impossible to use. 7.5 million students in the US alone have a recognized learning disability. One in three of them has already been held back a grade. They drop out at nearly three times the rate of their peers. Not because they cannot learn but because the classroom, and every digital extension of it, was never designed for them.

I kept asking the same question: why does every accessibility solution bolt features onto an existing interface, rather than questioning whether the interface itself is the problem? LearnHub started from a different premise entirely. What if the interface was never the requirement?

What it does

LearnHub is an AI-powered educational ecosystem that removes the UI as a barrier to learning. It does not add accessibility features on top of a standard platform. It replaces the standard platform entirely with a voice-first, multimodal agent that adapts to the student, not the other way around.

1) Hands-Free Navigation: The LearnHub agent has a set of registered commands that let it navigate and control the entire platform using only a student's voice. Navigating to pages, clicking buttons, asking for help, and searching for features can all be done by voice, which makes the platform far easier to use for students with motor disabilities.

2) The Pulse (Gemini Live Voice Agent): The centerpiece of LearnHub is a real-time bidirectional voice agent powered by the Gemini Live API. Students say "Hey agent, let's learn" and the platform transitions into Pulse Mode, a high-contrast, audio-first environment where the entire UI dissolves and learning begins through natural conversation. The agent greets students by name, recalls their last lesson, generates a live lesson roadmap, narrates microsections, answers questions mid-lesson, and responds to interruptions gracefully. Students with motor impairments can navigate, learn, and complete entire chapters without once touching a keyboard or mouse.

3) Technical Braille and Nemeth Code: To our knowledge, LearnHub is the only platform that automatically converts STEM content and LaTeX equations into Grade 2 Braille and Nemeth Code (the technical Braille standard for mathematics). The conversion is instant, client-side, and compatible with refreshable Braille displays. A blind student can photograph a printed textbook equation, send it to LearnHub, and receive Nemeth Code output in under 10 seconds. No other mainstream educational platform we know of provides this. LearnHub also exports Braille output in .brf format, which can be sent to a Braille embosser to produce a physical Braille print of the lessons.

4) Multimodal Content Delivery (Four Channels, One Concept): Every lesson is delivered across four distinct formats: article, visual canvas, quiz, and story mode. The Visual Canvas generates live Mermaid SVG diagrams and Imagen 4 illustrations on demand: the agent decides when a visual aid is needed and renders it in under 2 seconds. Story Mode reframes the same academic concept as a narrative with generated imagery, specifically designed for students with ADHD or dyslexia who struggle to engage with textbook-format content. Same concept. Four ways in. Because one format was never enough.

5) Admin Automation For Teachers: LearnHub's admin panel gives teachers three tools that collectively eliminate the expertise barrier between a teacher and an accessible classroom.

a) Roadmap Automation. A teacher describes a student profile by voice, for example "an ADHD student who loves visuals", and the agent builds a complete personalized learning roadmap and exports it as an interactive PDF in under two seconds. No accessibility training required.

b) Textbook Pipeline. Teachers upload any PDF, textbook, or raw content, and Gemini 2.0 Flash automatically scrapes, parses, and restructures it into sequenced micro-lessons with LaTeX preserved, key concepts extracted, and all four content formats generated. Hours of lesson planning, done in seconds.

c) Lesson Editor. A full rich-text editor lets teachers write new lessons from scratch, edit AI-generated content, adjust pacing, and publish directly to specific students or classes. Every edit is voice-compatible: the agent transcribes and formats dictated changes in real time.

Together, these three tools mean a teacher with no background in accessibility, no lesson planning time, and no specialist training can build a fully personalised, multimodal, accessible curriculum for any student in a single session.

6) Proactive Telegram Agent: LearnHub does not wait to be opened, and students do not need the web app to keep learning. Once a student connects Telegram to their account, a background service monitors their progress and sends personalized Telegram messages that reference exactly what they were working on and invite them back with a single magic link. No login screen. No navigation. Direct to the right lesson. The classroom in a notification.

How we built it

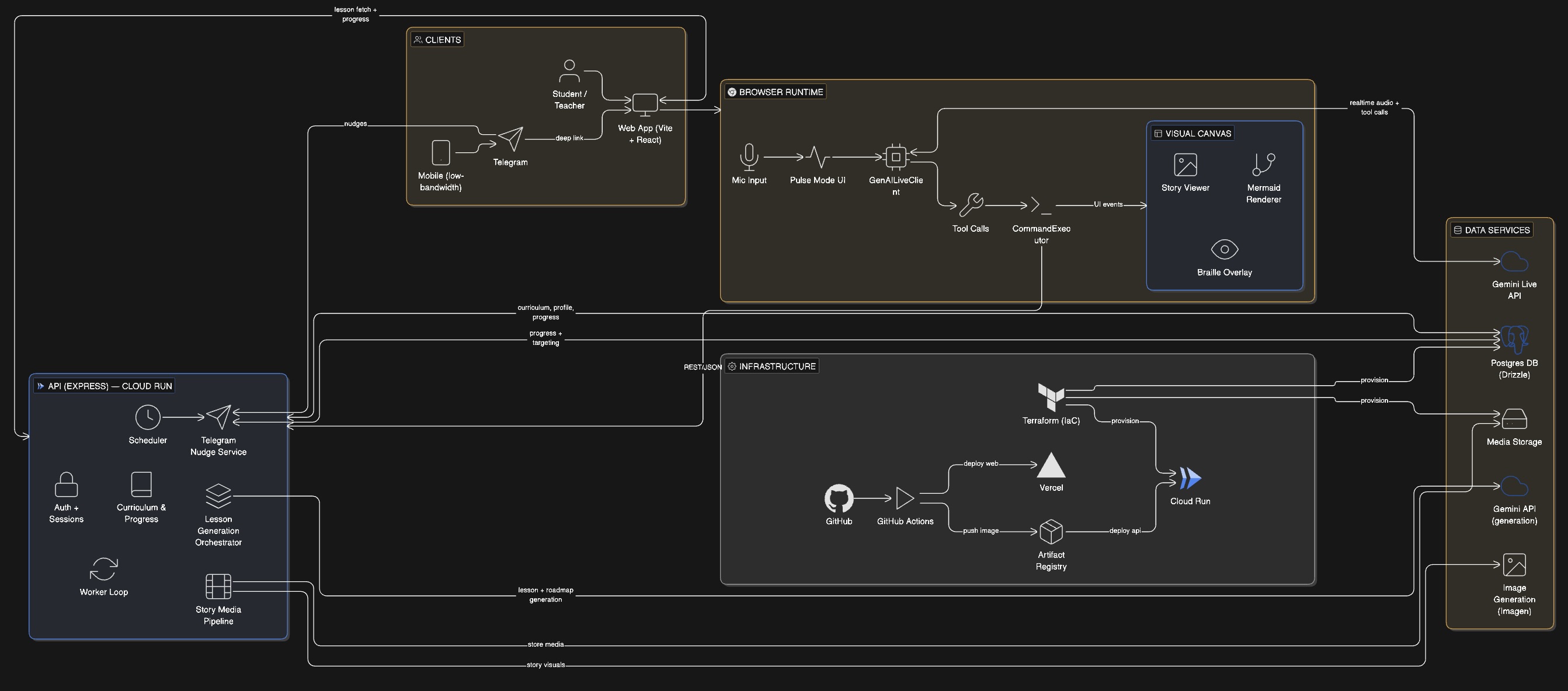

Frontend: React 18 with TypeScript, built around ARIA standards and keyboard-less navigation from the ground up. The voice interface uses a custom VoiceAgentProvider that manages bidirectional WebSocket state, handles interruptions, and maps spoken intents directly to frontend routes via Tool Calling.

The Voice Agent: Gemini Live API handles real-time bidirectional audio streaming. Tool Calling maps voice commands to frontend actions, including planLesson, generateVisualCanvas, executeAction, and toggleFocusMode, with sub-100ms tool response latency. The agent uses the Sulafat voice for warm, expressive narration. All session state is preserved across interruptions.
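A minimal sketch of how tool calls could be routed to frontend actions. The four tool names come from the description above; the argument shapes, the handler bodies, and the `dispatchToolCall` helper are hypothetical, and the sketch does not use the Gemini SDK itself.

```typescript
// Hypothetical shape of a tool call as it might arrive from the voice session.
interface ToolCall {
  id: string;
  name: string;
  args: Record<string, unknown>;
}

interface ToolResult {
  id: string;
  ok: boolean;
  output: string;
}

type ToolName = "planLesson" | "generateVisualCanvas" | "executeAction" | "toggleFocusMode";
type ToolHandler = (args: Record<string, unknown>) => string;

// Illustrative handlers: each one only describes what it would do.
const toolHandlers: Record<ToolName, ToolHandler> = {
  planLesson: (args) => `roadmap for ${String(args.topic ?? "unknown topic")}`,
  generateVisualCanvas: (args) => `diagram: ${String(args.description ?? "")}`,
  executeAction: (args) => `navigate to ${String(args.route ?? "/")}`,
  toggleFocusMode: (args) => `focus mode ${args.enabled ? "on" : "off"}`,
};

function isToolName(name: string): name is ToolName {
  return Object.prototype.hasOwnProperty.call(toolHandlers, name);
}

// Look up the handler by name and always return a result, so the agent is
// never left waiting on an unknown or failing tool.
function dispatchToolCall(call: ToolCall): ToolResult {
  if (!isToolName(call.name)) {
    return { id: call.id, ok: false, output: `unknown tool: ${call.name}` };
  }
  try {
    return { id: call.id, ok: true, output: toolHandlers[call.name](call.args) };
  } catch (err) {
    return { id: call.id, ok: false, output: String(err) };
  }
}
```

Returning an explicit failure for unknown tool names, rather than throwing, keeps the conversation moving even when the model asks for an action that is not registered.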

Content Processing: Gemini 2.0 Flash acts as the intelligent content parser — extracting structured lesson data from PDFs and raw text while preserving LaTeX strings for downstream Braille processing.

Braille and Nemeth Code Engine: The open-source LibLouis library handles Grade 2 Braille and Nemeth Code translation. A custom validation layer checks LaTeX syntax before it reaches the LibLouis engine, so malformed equations are rejected rather than translated into misleading Braille. The entire pipeline runs client-side, with no network round trip.
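A minimal sketch of the kind of syntax check a validation layer could run before handing LaTeX to a Braille translator. The `validateLatex` function and its two rules (balanced braces, matching `\left`/`\right` counts) are assumptions for illustration; the actual validation rules are not described here, and the sketch does not call LibLouis.

```typescript
interface LatexValidation {
  ok: boolean;
  errors: string[];
}

function validateLatex(src: string): LatexValidation {
  const errors: string[] = [];

  // Rule 1: curly braces must balance. Escaped characters such as \{ are skipped.
  let depth = 0;
  for (let i = 0; i < src.length; i++) {
    const ch = src[i];
    if (ch === "\\") {
      i += 1; // skip the character that follows the backslash
      continue;
    }
    if (ch === "{") depth += 1;
    if (ch === "}") {
      depth -= 1;
      if (depth < 0) {
        errors.push(`unexpected "}" at index ${i}`);
        depth = 0;
      }
    }
  }
  if (depth > 0) errors.push(`${depth} unclosed "{"`);

  // Rule 2: every \left needs a \right. The lookahead keeps commands such as
  // \leftarrow and \rightarrow from being counted.
  const lefts = (src.match(/\\left(?![a-zA-Z])/g) ?? []).length;
  const rights = (src.match(/\\right(?![a-zA-Z])/g) ?? []).length;
  if (lefts !== rights) {
    errors.push(`\\left/\\right mismatch: ${lefts} vs ${rights}`);
  }

  return { ok: errors.length === 0, errors };
}
```

A check like this only catches structural mistakes. It cannot tell whether a well-formed equation is the equation the lesson intended, so it complements rather than replaces review of the source content.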

Visual Canvas: Mermaid.js renders live SVG diagrams. Imagen 4 generates high-fidelity educational illustrations from agent-constructed prompts. Both are triggered autonomously by the agent when it determines a visual aid will improve comprehension.

Backend and Infrastructure: Node.js/Express server manages data flow and session orchestration. PostgreSQL with Drizzle ORM handles user progress, lesson metadata, and spaced repetition scheduling. The entire backend is deployed on Google Cloud Run. Proactive Telegram nudges are scheduled via Google Cloud Scheduler, calling a Cloud Run endpoint that uses Gemini to generate personalised re-engagement messages.

Telegram Integration: Python Telegram Bot API handles the proactive nudge service. Magic links use session tokens to deep-link students directly to their active lesson with zero authentication friction.

Challenges we ran into

Latency and Interruption Handling: The most significant technical challenge was building a voice interface that felt genuinely natural rather than like a turn-based chatbot disguised as a voice agent. Orchestrating the Gemini Live API WebSocket while keeping the React UI in sync, handling mid-sentence interruptions without losing lesson context, and ensuring tool calls completed before the agent continued narrating required careful state machine design. We built a custom LessonState manager with six discrete states (IDLE, INTRODUCING, TEACHING, CHECKING, REVIEWING, and CELEBRATING) to ensure every transition felt intentional rather than jarring.
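A minimal sketch of a six-state lesson machine along these lines. The state names come from the paragraph above; the transition table, the `LessonStateMachine` class, and the rule that blocks transitions while a tool call is in flight are assumptions made for illustration.

```typescript
type LessonState =
  | "IDLE"
  | "INTRODUCING"
  | "TEACHING"
  | "CHECKING"
  | "REVIEWING"
  | "CELEBRATING";

// Assumed transition table: which states may follow each state.
const allowedTransitions: Record<LessonState, readonly LessonState[]> = {
  IDLE: ["INTRODUCING"],
  INTRODUCING: ["TEACHING"],
  TEACHING: ["CHECKING"],
  CHECKING: ["TEACHING", "REVIEWING", "CELEBRATING"],
  REVIEWING: ["TEACHING", "CHECKING"],
  CELEBRATING: ["IDLE"],
};

class LessonStateMachine {
  private state: LessonState = "IDLE";
  private pendingToolCalls = 0;

  get current(): LessonState {
    return this.state;
  }

  toolCallStarted(): void {
    this.pendingToolCalls += 1;
  }

  toolCallFinished(): void {
    this.pendingToolCalls = Math.max(0, this.pendingToolCalls - 1);
  }

  // Returns true if the transition happened. Illegal transitions are refused,
  // and nothing advances while a tool call is still in flight.
  transition(next: LessonState): boolean {
    if (this.pendingToolCalls > 0) return false;
    if (allowedTransitions[this.state].indexOf(next) === -1) return false;
    this.state = next;
    return true;
  }
}
```

Because an interruption never changes `state` on its own, the lesson can resume from the same point once the student's question has been answered.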

Gemini API Rate Limits on a Student Budget: Building on the free tier of the Gemini API meant working within strict rate limits. Every architecture decision had to account for this constraint. We batched tool calls where possible, implemented client-side caching for lesson content to avoid redundant API calls, and designed the LessonState machine partly to prevent the agent from making unnecessary requests mid-session. What started as a financial constraint became a discipline that made the system more efficient. The platform works within free tier limits not because we had no choice but because we designed it to.
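A minimal sketch of client-side caching for lesson content, assuming a simple time-to-live policy. The `LessonCache` class, the TTL value, and the injected clock are hypothetical; the eviction and invalidation rules the platform actually uses are not specified here.

```typescript
interface CacheEntry<T> {
  value: T;
  expiresAt: number;
}

class LessonCache<T> {
  private entries = new Map<string, CacheEntry<T>>();
  private ttlMs: number;
  private now: () => number;

  // The clock is injectable so expiry can be tested without waiting.
  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Return the cached value while it is fresh; otherwise call `load`
  // (standing in for an API request) and remember the result.
  getOrLoad(key: string, load: () => T): T {
    const t = this.now();
    const hit = this.entries.get(key);
    if (hit !== undefined && hit.expiresAt > t) {
      return hit.value;
    }
    const value = load();
    this.entries.set(key, { value, expiresAt: t + this.ttlMs });
    return value;
  }
}
```

With a cache like this in front of lesson requests, reopening the same chapter within the TTL costs no additional API calls.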

Mathematical Accuracy in Braille: Nemeth Code for mathematics is unforgiving. A single misplaced character changes the meaning of an equation for a visually impaired student in ways that are invisible to everyone else. We developed rigorous prompt schemas to ensure Gemini 2.0 Flash consistently outputs correctly formatted LaTeX, and built a validation layer that checks syntax before the LibLouis engine ever runs. Every Braille output is verified before it reaches the student.

Designing for Zero Interface: The hardest design challenge was not building the voice agent. It was resisting the instinct to add visual fallbacks everywhere. Every time we hit an edge case, the default solution was to add a button or a tooltip. We had to consistently ask: can this be solved with voice instead? The answer was almost always yes, and the product is better for the discipline.

Accomplishments that we're proud of

Zero-UI Navigation is Real: Every part of LearnHub, including lesson navigation, content consumption, quiz completion, Braille output, and diagram generation, can be operated without a single mouse click or keyboard input. For a student with motor impairments, this is not a workaround. It is the designed experience.

The Only Platform With Automated Nemeth Code: We are not aware of any mainstream educational platform that automatically converts STEM content to Nemeth Braille Code in real time. This feature alone represents a genuine gap in the market that has existed since digital education began.

Four Gemini Capabilities in One Cohesive Experience: Gemini Live API for voice navigation, Gemini 2.0 Flash for content parsing, Gemini TTS for lesson narration, and Imagen 4 for visual generation — all orchestrated through a single agent that students experience as one natural interaction. The seams are invisible.

The Teacher Tool Changes the Equation: The Admin Roadmap Automation means that accessibility is no longer dependent on a teacher's personal expertise or training. Any teacher, anywhere, can build a personalised accessible curriculum in under two seconds. That scales.

What we learned

Accessibility is the ultimate stress test for product design. When you design for a student who cannot see the screen, cannot use their hands, cannot sit still for forty-five minutes, and cannot parse dense text, you are forced to question every assumption your interface makes. The resulting product is better for everyone, not just the students it was specifically designed for.

We also learned that the Gemini Live API is genuinely capable of the kind of natural, interruptible, context-aware conversation that voice interfaces have promised for a decade and never delivered. The sub-100ms tool response latency is what makes the difference between a demo and a product.

And we learned that building for the students who have been failed longest is not a constraint. It is a direction.

What's next for LearnHub

Sign Language Generation: Real-time sign language avatar generation for deaf and hard-of-hearing students, using Gemini's video generation capabilities to translate lesson narration into visual signing in real time.

Institutional Dashboard: A dedicated school and district dashboard allowing teachers to assign personalised roadmaps, track accessibility metrics across classrooms, and use Gemini's reasoning to surface students who need additional support before they fall behind.

WhatsApp and Voice Call Channels: Extending the Telegram agent to WhatsApp and Twilio-powered voice calls so students in low-bandwidth regions whose only device is a phone can access a full tutoring session through a phone call, no data plan required.

Offline Edge Mode: A lightweight, edge-compatible version of the Braille translator and core voice navigation for students in low-connectivity or remote areas where cloud latency makes real-time AI impractical.

Spaced Repetition Engine: A fully automated review system that schedules concept re-testing at expanding intervals (for example 1, 3, 7, 14, and 30 days) and delivers reviews proactively through Telegram without any student action required.
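A minimal sketch of how the next review date could be computed from those intervals. It assumes the listed values are gaps between successive reviews and that the last interval repeats once the list is exhausted; both are assumptions, and `nextReviewDate` is a hypothetical helper.

```typescript
const REVIEW_INTERVALS_DAYS: readonly number[] = [1, 3, 7, 14, 30];
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// `completedReviews` is how many reviews of this concept the student has
// already finished; 0 means the concept was just learned.
function nextReviewDate(lastReviewed: Date, completedReviews: number): Date {
  const lastIndex = REVIEW_INTERVALS_DAYS.length - 1;
  const index = Math.min(Math.max(completedReviews, 0), lastIndex);
  return new Date(lastReviewed.getTime() + REVIEW_INTERVALS_DAYS[index] * MS_PER_DAY);
}
```

A scheduled job could call a function like this after each completed review and store the result alongside the student's progress record.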

Built With

- 2.0

- ai

- api

- drizzle

- express.js

- flash

- gcp

- gemini

- genai

- imagen

- liblouis

- live

- mermaid.js

- node.js

- orm

- postgresql

- python

- react

- scheduler

- sdk

- ssml

- telegram

- typescript

- vertex

- webrtc

- websockets
