
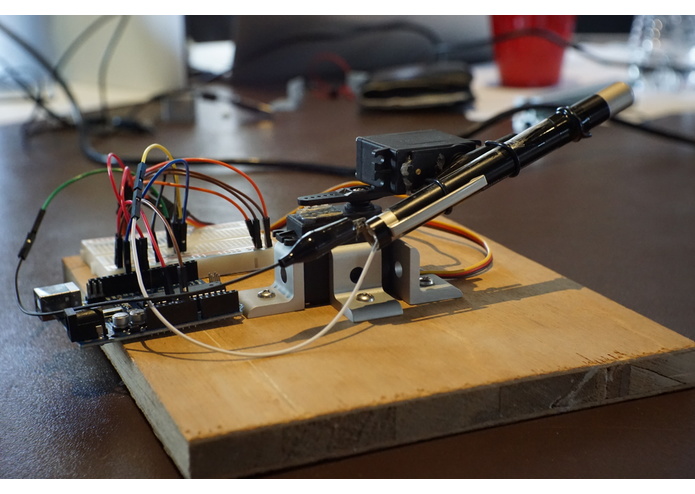

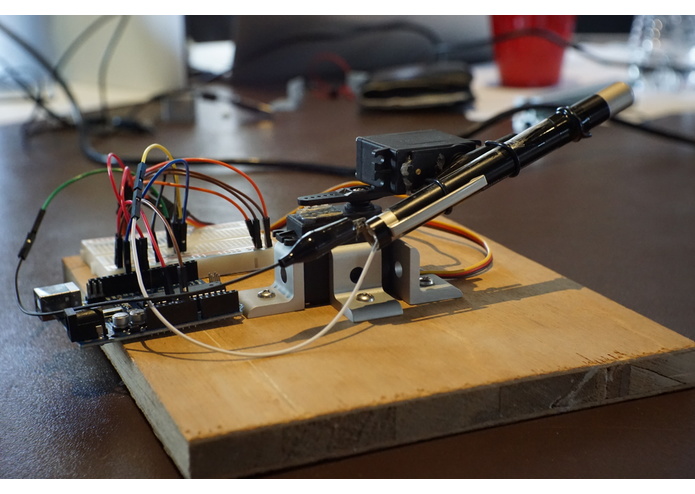

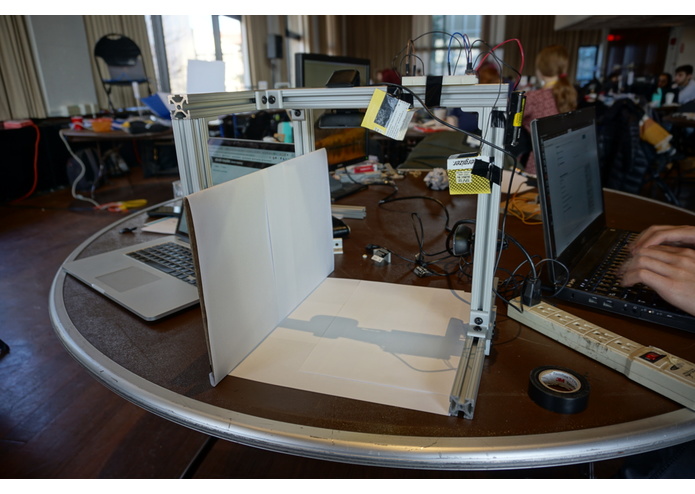

The laser module. It receives instructions over the internet and aims the laser to match the orientation of your finger.



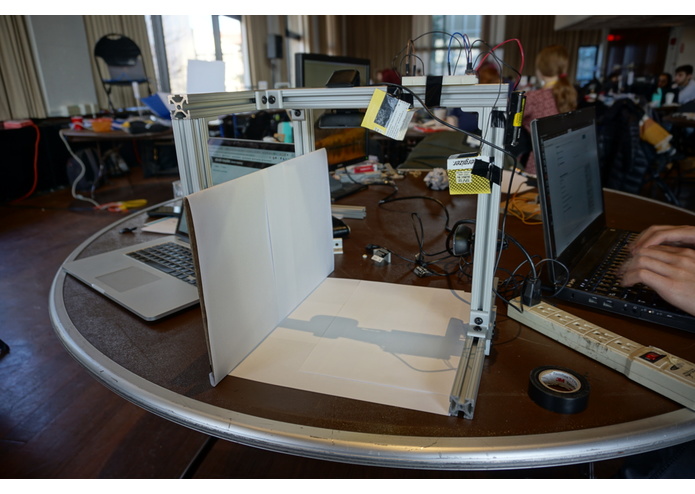

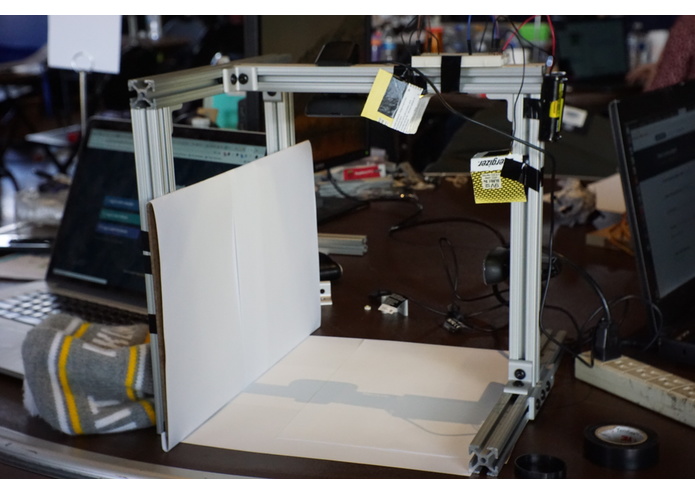

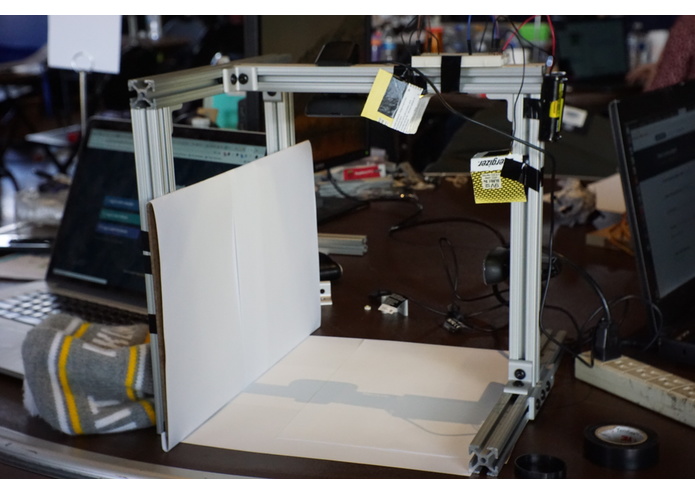

The camera module. Two cameras read the location of your fingertip, which lets the system map a finger moving in three dimensions onto a 2D surface.


Inspiration

We wanted to experiment with gesture recognition technology and needed a project to demonstrate it. We came up with the idea of using gestures to aim a laser pointer during a presentation.

What it does

LaserGeorge is a lightweight motion capture system. Two cameras capture the motion of your finger, and the system transmits that motion over the internet to a laser pointer, which is calibrated to project your gesture onto a flat screen.

How we built it

For the motion capture system, we used a few 80/20 aluminum pieces and two webcams, with OpenCV handling fingertip detection. On the other end is a laser pointer module made up of servos and an Arduino, which can be connected to a Raspberry Pi or a laptop. The module receives the detected fingertip position over Wi-Fi and moves accordingly to project the gesture onto the screen.
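The write-up does not say how the fingertip was located in each frame, so the following is only a minimal sketch of one common heuristic, not the project's actual method. It assumes the hand has already been segmented into a binary mask (for example by color thresholding in OpenCV) and takes the topmost foreground pixel as the fingertip. The names `find_fingertip` and `normalize` are hypothetical.

```python
def find_fingertip(mask):
    """Return (row, col) of the topmost foreground pixel, or None.

    `mask` is a list of rows of 0/1 values (assumed to come from a
    thresholded camera frame). If the top row of the hand region is
    several pixels wide, the middle pixel of that row is returned.
    """
    for r, row in enumerate(mask):
        cols = [c for c, value in enumerate(row) if value]
        if cols:
            return r, cols[len(cols) // 2]
    return None


def normalize(point, height, width):
    """Convert a (row, col) pixel to (u, v) in [0, 1], origin top-left."""
    r, c = point
    return c / (width - 1), r / (height - 1)


# A tiny 5x5 "frame" with a finger pointing up in the middle column.
mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
]
tip = find_fingertip(mask)
print(tip, normalize(tip, height=5, width=5))
```

Sending a normalized position rather than raw pixels keeps the receiving side independent of the webcam resolution.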

Challenges we ran into

For the laser module, we spent quite some time working out the math for calibration and projection. Awkward offsets in the angles made it hard to support screens of varying sizes and distances.
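To give a feel for the kind of trigonometry involved, here is a simplified sketch, not the team's actual calibration. It assumes a pan-tilt mount where the tilt servo rides on the pan servo, a flat screen perpendicular to the laser's rest direction at a known `distance`, and a known `offset` between the screen center and the point the laser hits at rest. All function and parameter names are hypothetical.

```python
import math


def screen_point(u, v, width, height):
    """Map a normalized position (u, v in [0, 1], origin top-left) to
    screen coordinates with the origin at the screen center and y up."""
    return (u - 0.5) * width, (0.5 - v) * height


def pan_tilt(x, y, distance, offset=(0.0, 0.0)):
    """Return (pan, tilt) in degrees that aim the laser at (x, y).

    `offset` is where the laser points at rest, in screen coordinates
    (an assumed calibration value).
    """
    dx = x - offset[0]
    dy = y - offset[1]
    pan = math.atan2(dx, distance)
    # Tilt is measured from the already-panned direction, so its
    # adjacent side is the slant distance, not the raw distance.
    tilt = math.atan2(dy, math.hypot(dx, distance))
    return math.degrees(pan), math.degrees(tilt)


def hit_point(pan_deg, tilt_deg, distance, offset=(0.0, 0.0)):
    """Inverse check: where a given (pan, tilt) lands on the screen."""
    pan, tilt = math.radians(pan_deg), math.radians(tilt_deg)
    x = distance * math.tan(pan)
    y = distance * math.tan(tilt) / math.cos(pan)
    return x + offset[0], y + offset[1]


x, y = screen_point(0.75, 0.25, width=1.6, height=0.9)
print(pan_tilt(x, y, distance=2.0, offset=(0.1, -0.2)))
```

The tilt angle depends on the pan angle because the two servos are stacked, which is one source of the offsets that make this harder than two independent `atan` calls.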

Accomplishments that we're proud of

We solved most of the challenges we ran into.

What we learned

We learned how to connect two devices through a client/server model using sockets in Python. We also learned how to use OpenCV to detect objects in a live video feed. Lastly, we learned a lot of math, projective geometry and trigonometry in particular.
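As an illustration of the client/server pattern, here is a minimal sketch using Python's standard `socket` module. It is not the project's code: the newline-delimited JSON message format, the loopback address, and every function name are assumptions. In the sketch, the laser side acts as the server and the camera side as the client.

```python
import json
import socket
import threading


def encode_point(u, v):
    """Serialize one fingertip position as a newline-terminated JSON line."""
    return (json.dumps({"u": u, "v": v}) + "\n").encode("utf-8")


def decode_points(buffer):
    """Split a byte buffer into complete messages plus leftover bytes."""
    *lines, rest = buffer.split(b"\n")
    return [json.loads(line) for line in lines if line], rest


def serve_once(server_sock, received):
    """Accept one client and collect every point it sends."""
    conn, _ = server_sock.accept()
    with conn:
        buffer = b""
        while True:
            chunk = conn.recv(1024)
            if not chunk:  # client closed the connection
                break
            buffer += chunk
            points, buffer = decode_points(buffer)
            received.extend(points)


def demo():
    """Run the server and client in one process over loopback."""
    received = []
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    worker = threading.Thread(target=serve_once, args=(server, received))
    worker.start()
    with socket.create_connection(("127.0.0.1", port)) as client:
        for u, v in [(0.25, 0.5), (0.3, 0.55)]:
            client.sendall(encode_point(u, v))
    worker.join()
    server.close()
    return received


if __name__ == "__main__":
    print(demo())
```

TCP is a byte stream, so a single `recv` call may return half a message or several at once. Buffering until a newline arrives is one simple way to recover message boundaries.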

What's next for LaserGeorge

LaserGeorge is more than just a laser pointer. We were able to read our hand motion accurately and reproduce it on a remote system over the internet. The laser could be replaced with a variety of other devices, such as security cameras, robotic arms, or (with further fine-tuning of our precision) even a surgical laser!
