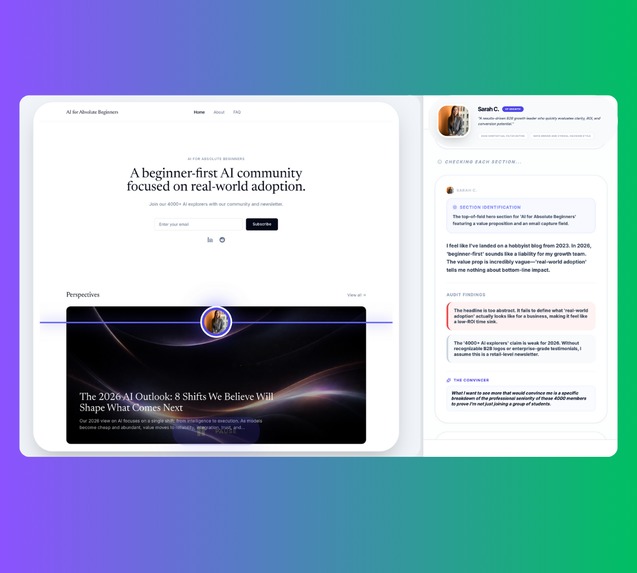

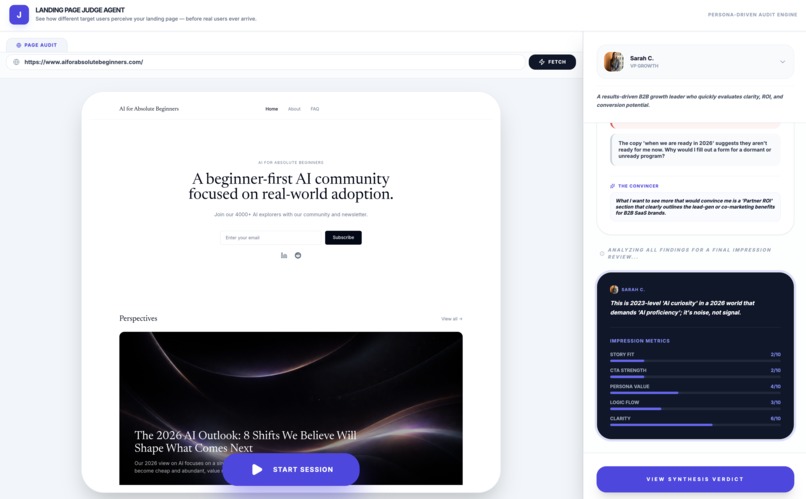

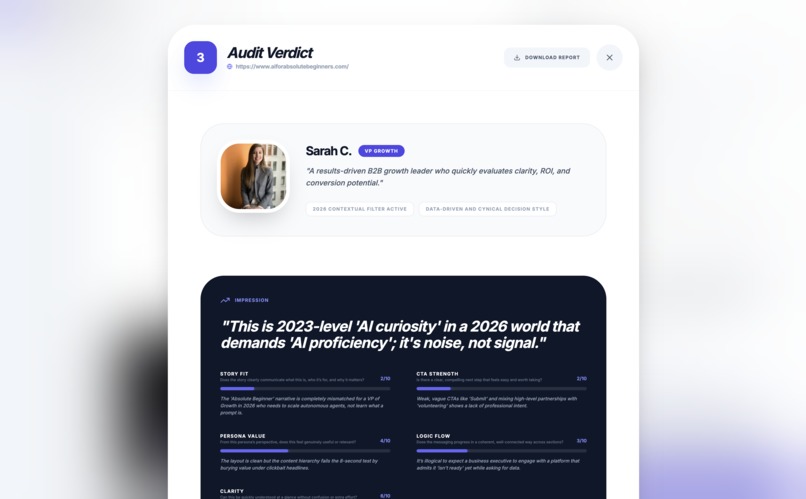

Meet Landing Page Judge


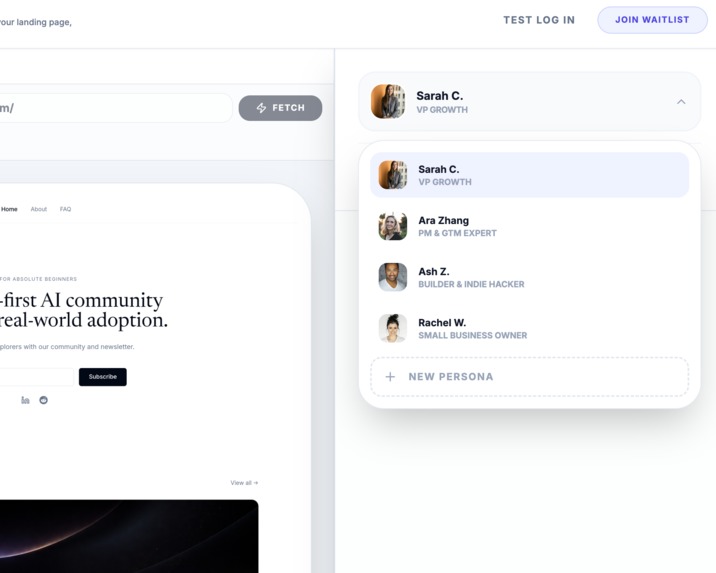

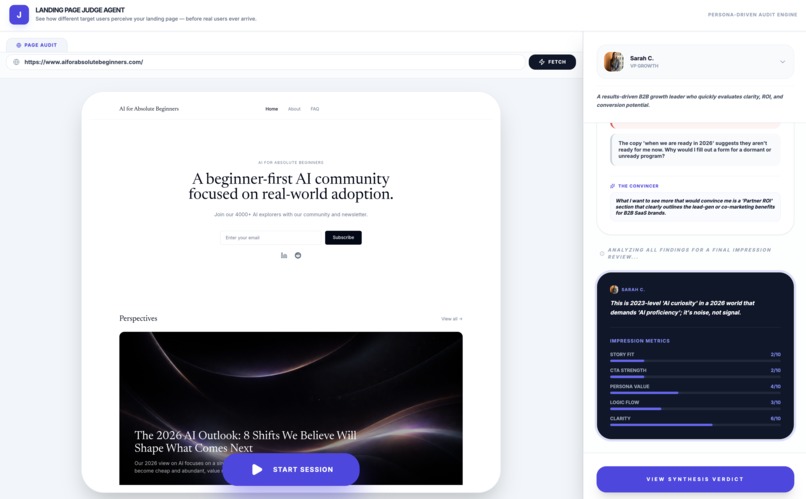

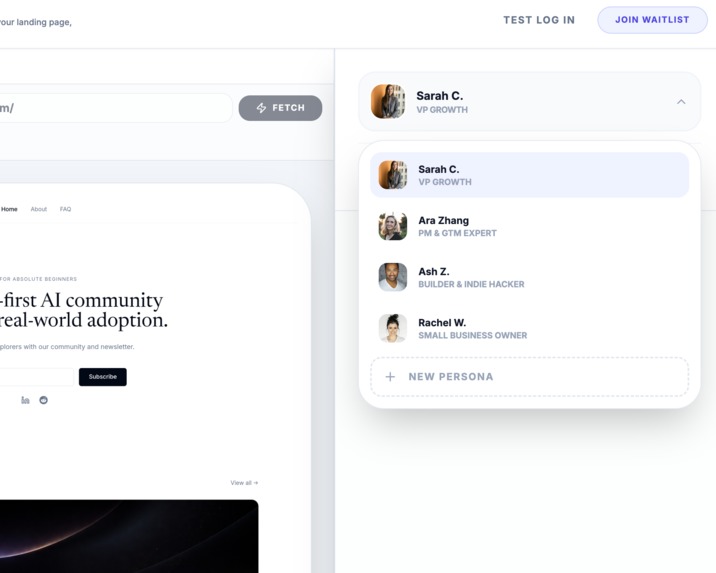

Invite real-world personas to check your landing page


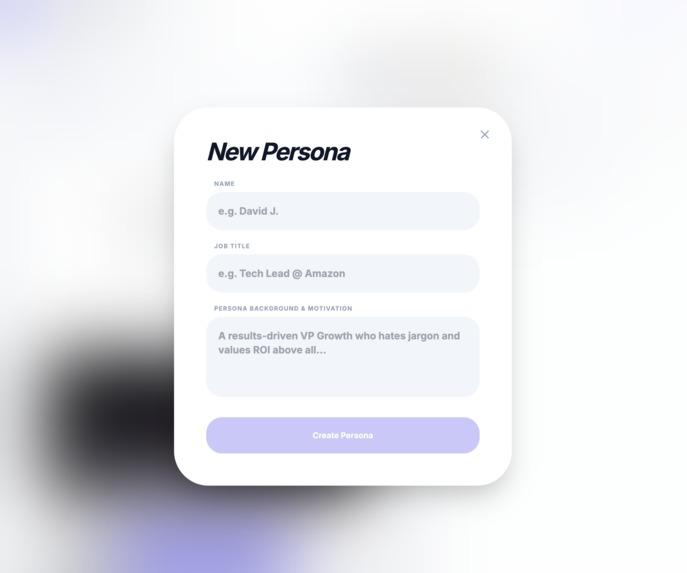

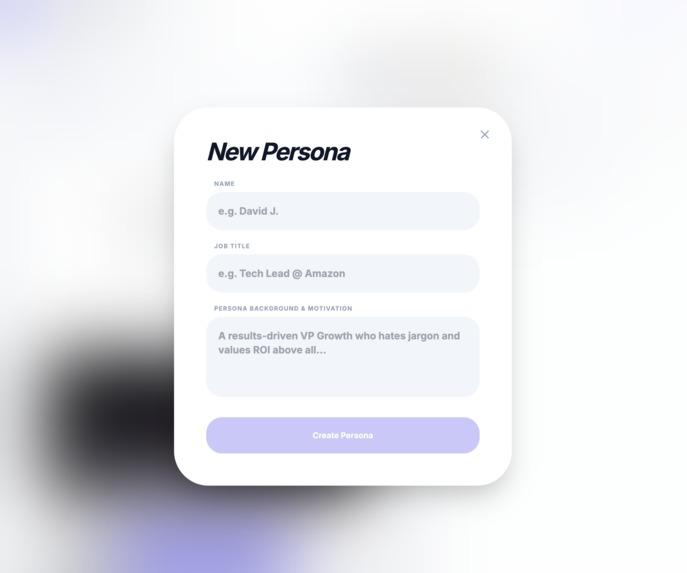

Or create any persona just like your target user


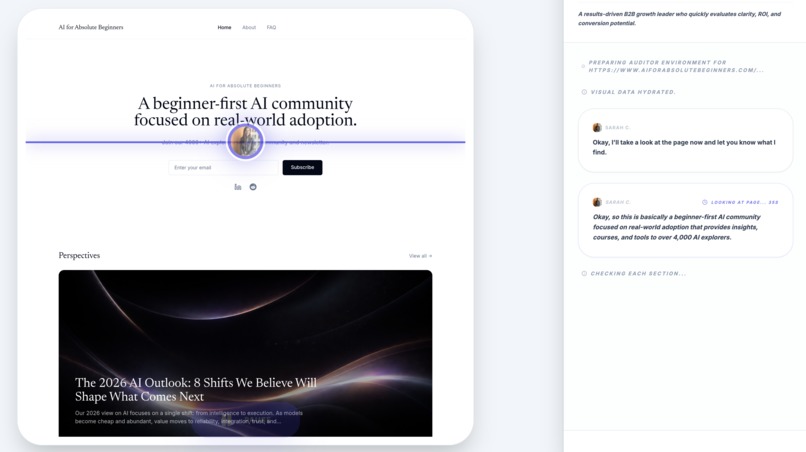

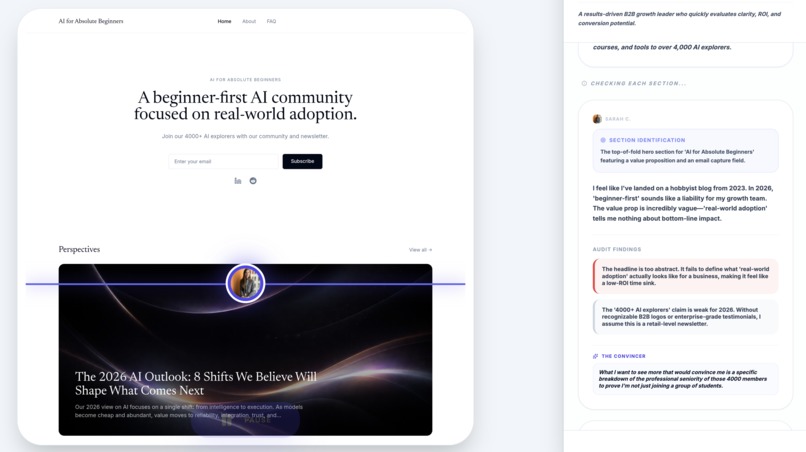

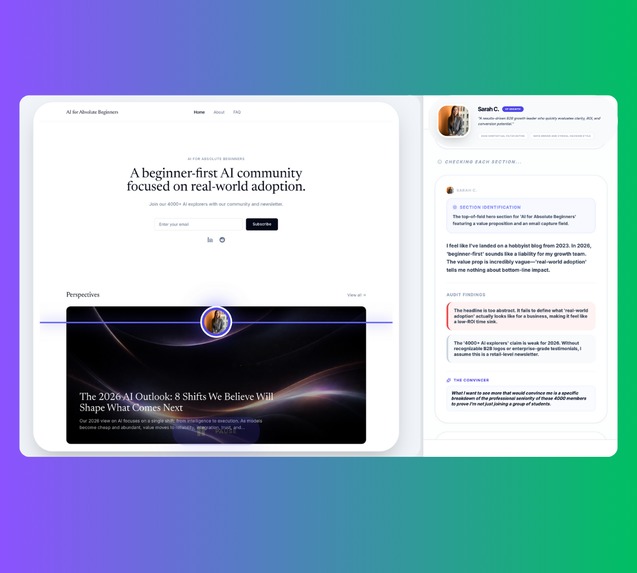

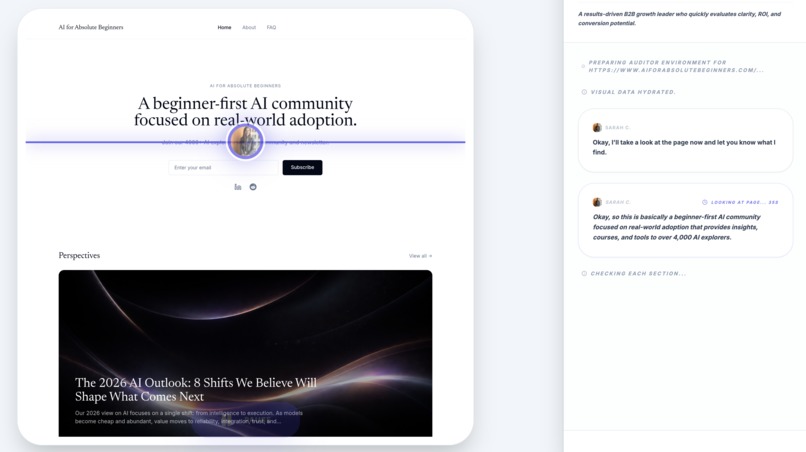

Real-time analysis that scrolls down the page like a real user


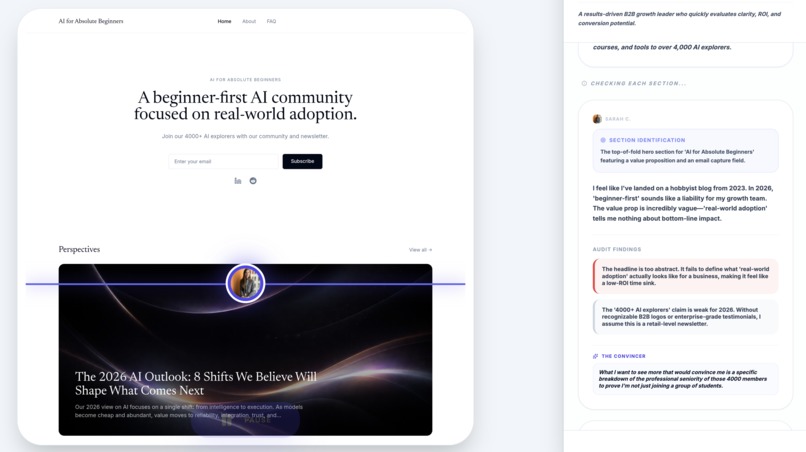

Detailed section-by-section comments with critics and convincers


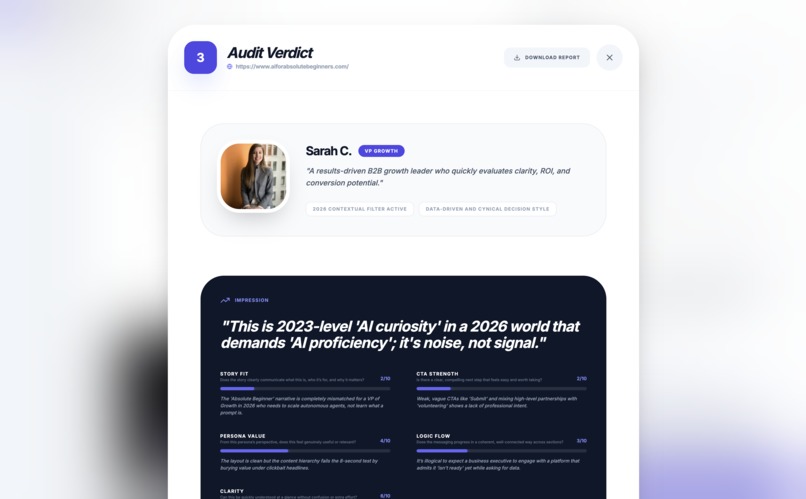

Final impression evaluated on standardized landing page metrics


Download Full Report and save for later

Inspiration

I’ve worked as a product and GTM strategist for both large companies and early-stage startups for years. I’ve built landing pages myself and also advised many founders on their first landing pages. One pain point keeps repeating. Founders often spend weeks polishing their landing pages. But when the page is finally handed off to me, I still have to ask one fundamental question: Who do you think this landing page is actually talking to?

Most of the time, the page is talking to the wrong person, talking to too many people, or simply delivering a monologue. The page reflects what the founder wants to say, not how a first-time user actually perceives it. Even with experience, I still need time to step back and get “fresh eyes” on the page.

That gap between intention and perception is what inspired this project.

What it does

Landing Page Judge Agent allows founders to input a landing page link and have it evaluated by customizable AI personas that simulate real first-time users.

Each persona reviews the landing page from their own role, perspective, and industry, and provides feedback on:

- What they think the page is about

- Whether it feels relevant to them

- What feels confusing, missing, or off

- What they expect or want to see next

- Whether they feel a connection strong enough to keep engaging

The goal is to understand how the page is perceived at first glance by a profile grounded in the real world: a college student living in Texas, a VP of Growth at a FAANG company, or a small business owner looking for vendors.

How we built it

- We used Google AI Studio and Gemini 3 APIs

- The system performs both text and visual analysis of landing pages

- Personas are defined with different roles and perspectives

- Each persona independently evaluates the page and generates structured feedback
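The persona and structured-feedback steps above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the `Persona` fields, the `FEEDBACK_KEYS` names, and the `call_model` parameter are all assumptions made for this example. `call_model` stands in for whatever function sends a prompt to the model and returns its text reply, so no specific Gemini API call is shown.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    # Hypothetical fields: the write-up describes personas by role,
    # perspective, and industry, but does not give an exact schema.
    name: str
    role: str
    industry: str
    perspective: str


# Hypothetical keys mirroring the five feedback points under "What it does".
FEEDBACK_KEYS = (
    "page_summary",
    "relevance",
    "confusing_or_missing",
    "expected_next",
    "would_keep_engaging",
)


def build_prompt(persona: Persona, page_text: str) -> str:
    """Compose a first-impression review prompt for one persona."""
    keys = ", ".join(FEEDBACK_KEYS)
    return (
        f"You are {persona.name}, a {persona.role} in {persona.industry}. "
        f"Perspective: {persona.perspective}\n"
        "You are seeing this landing page for the first time.\n"
        f"Reply with a JSON object with exactly these keys: {keys}.\n\n"
        f"PAGE CONTENT:\n{page_text}"
    )


def parse_feedback(raw: str) -> dict:
    """Parse a model reply and check that every expected key is present."""
    data = json.loads(raw)
    missing = [k for k in FEEDBACK_KEYS if k not in data]
    if missing:
        raise ValueError(f"feedback is missing keys: {missing}")
    return {k: data[k] for k in FEEDBACK_KEYS}


def review(personas, page_text, call_model):
    """Run each persona independently; call_model is any str -> str function."""
    return {
        p.name: parse_feedback(call_model(build_prompt(p, page_text)))
        for p in personas
    }
```

Because each persona gets its own prompt and its own model call, one persona's reaction cannot leak into another's, which is one simple way to keep the evaluations independent.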

Challenges we ran into

Fetching the right landing page content

Many landing pages cannot be reliably evaluated through simple text scraping. I had to combine both text and image analysis to capture what users actually see.

Simulating human scrolling behavior

To make persona comments feel realistic, the audit progress bar needs to stay in sync with the agent’s observations. This required careful UI refinement.

Defining useful judging criteria

AI can say anything; what makes the difference is the perspective it speaks from and the criteria it applies.

I had to translate my real GTM experience into structured, repeatable judging frameworks that an agent could apply consistently. This directly determines output quality.
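One way to make a judging framework repeatable is to express it as a weighted rubric that every persona scores against. The sketch below is only an illustration of that idea: the criteria, questions, weights, and the 1-5 scale are all hypothetical, since the actual framework is not spelled out here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    key: str
    question: str
    weight: float


# Hypothetical rubric: the real criteria and weights are not given in this write-up.
RUBRIC = (
    Criterion("clarity", "Can I tell what this product does within a few seconds?", 0.4),
    Criterion("relevance", "Does this page speak to someone in my role?", 0.3),
    Criterion("trust", "Do I see proof that makes the claims believable?", 0.2),
    Criterion("next_step", "Is it obvious what to do next?", 0.1),
)


def overall_score(scores: dict, rubric=RUBRIC) -> float:
    """Weighted average of per-criterion scores on an assumed 1-5 scale."""
    total_weight = sum(c.weight for c in rubric)
    weighted = 0.0
    for c in rubric:
        value = scores[c.key]
        if not 1 <= value <= 5:
            raise ValueError(f"{c.key} must be between 1 and 5, got {value}")
        weighted += c.weight * value
    return weighted / total_weight
```

Keeping the questions and weights in data rather than buried in a prompt means the same rubric can be reused across personas, and adjusting a weight does not require rewriting any instructions.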

Accomplishments that we're proud of

I am super proud that I was able to turn real GTM experience into adaptable judging criteria that work across different personas.

When reviewing the output - even on my own current landing pages - the feedback felt accurate and genuinely provided fresh perspectives. That validation was especially meaningful.

What we learned

Having fresh eyes is far more valuable than having more data, especially early on. Most landing page issues are not about design polish or word choice; they are perception gaps that a comprehensive sanity check can surface.

What's next for Landing Page Judge Agent

The future focus is on two key areas:

- Persona quality and output depth

Personas need to be solid, controllable, and adaptive. We plan to build more structured persona profiles and incorporate domain-specific expertise to better reflect how real users think and react.

- UI and feedback integration

Currently, the landing page and the agent feedback are separated. We plan to add richer overlay comments directly on the page to make insights more intuitive and actionable.

Even further future

The current structure works best for long, waterfall-style landing pages.

Looking ahead, the system could expand to:

- E-commerce product pages

- UI/UX flows

- Tutorials and onboarding experiences

Built With

- gemini

- gemini3

- google-ai-studio
