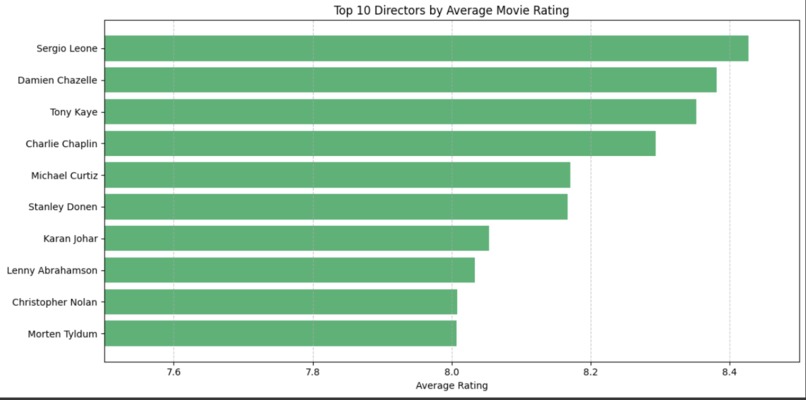

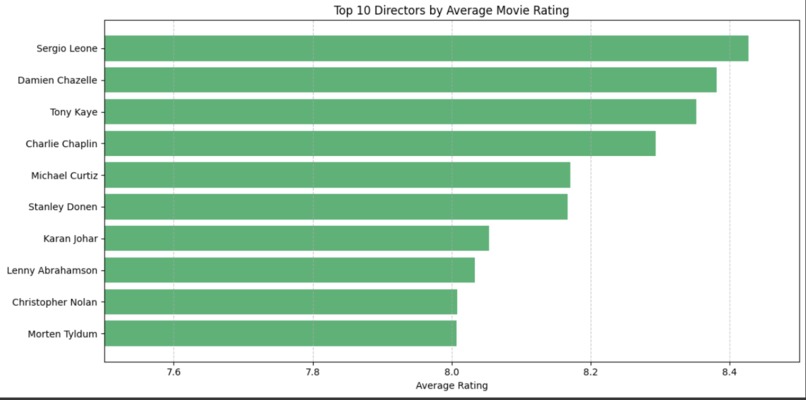

Director's Effect on Average Rating - Good Director

Director's Effect on Average Rating - Bad Director

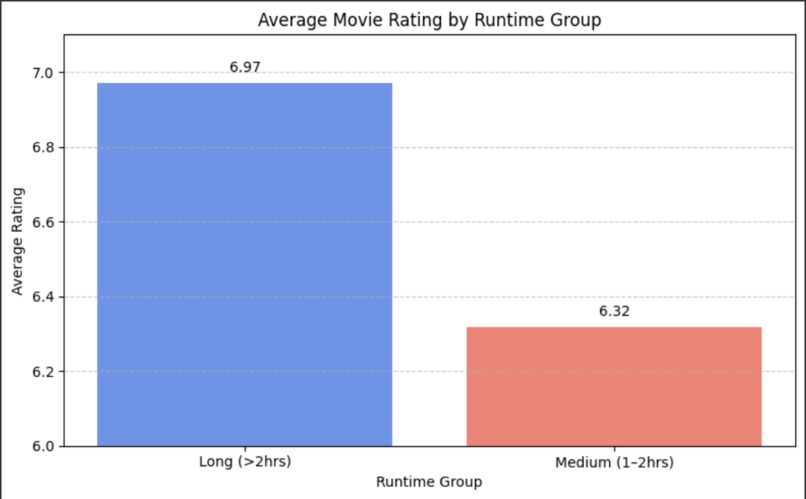

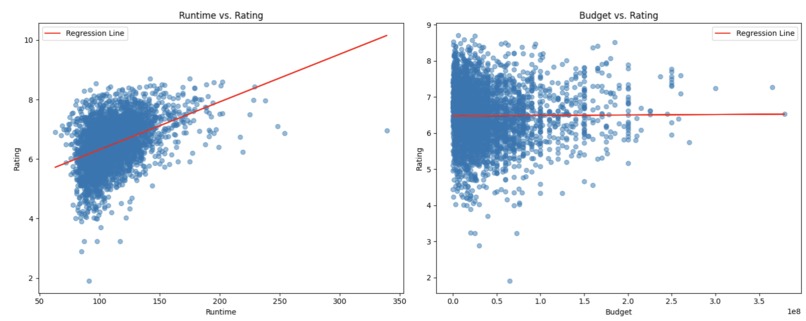

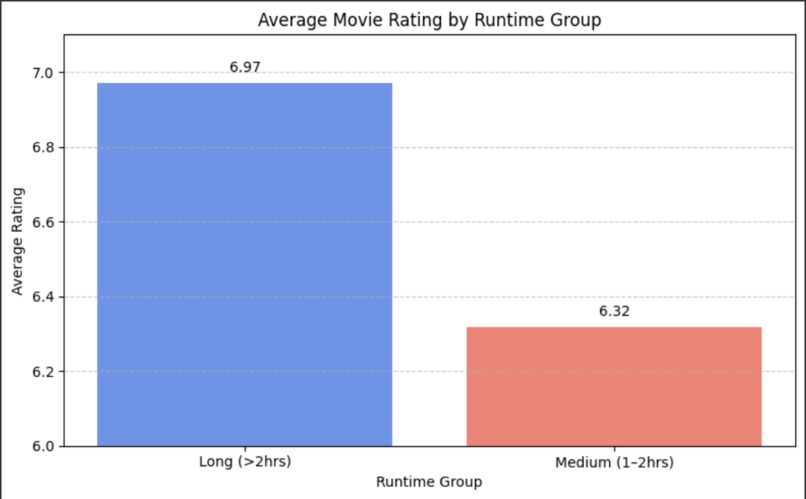

Effect of a Movie's Runtime on Average Rating

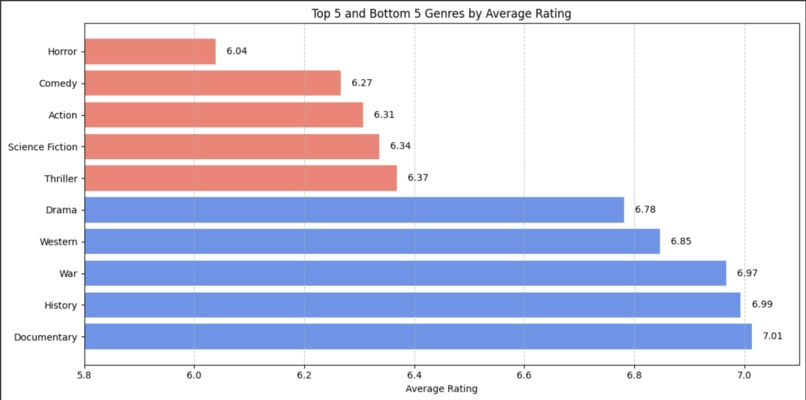

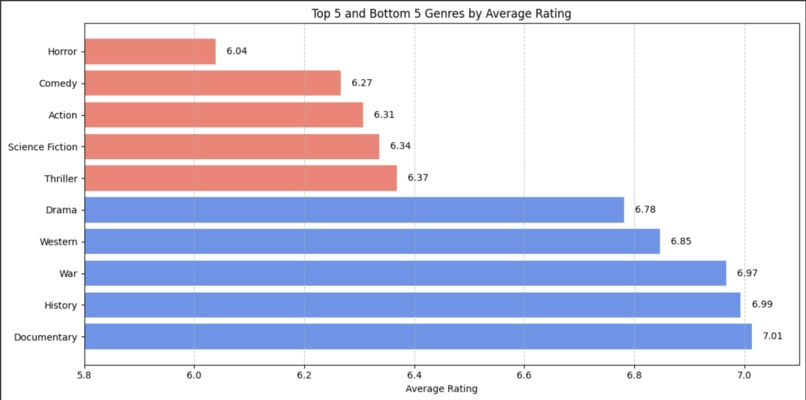

Genre's Effect on Average Rating

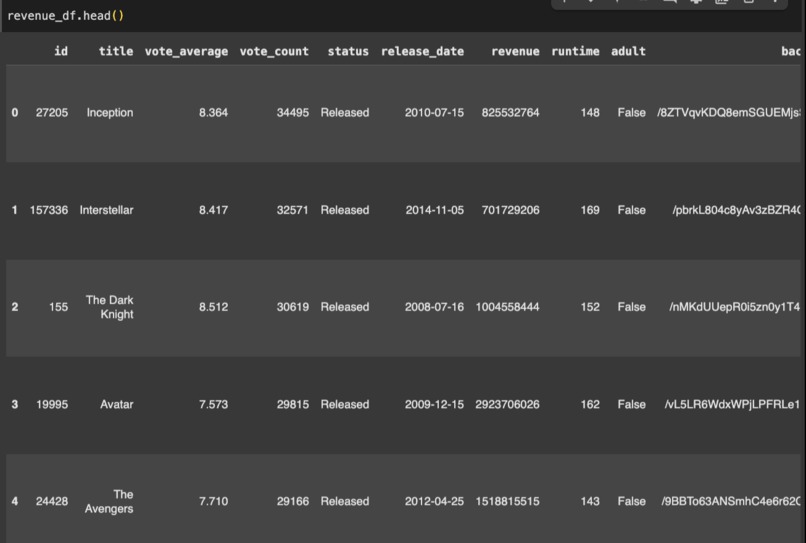

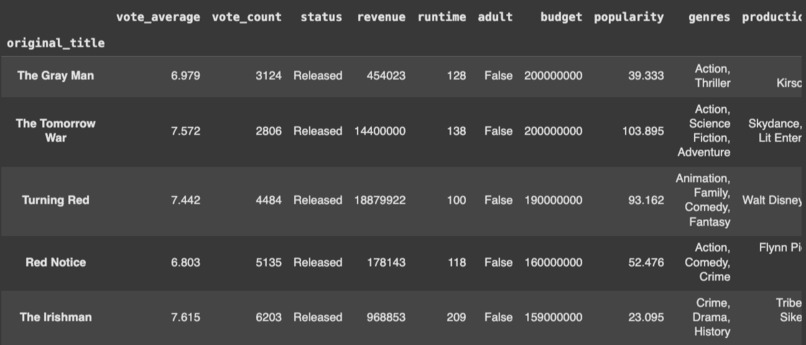

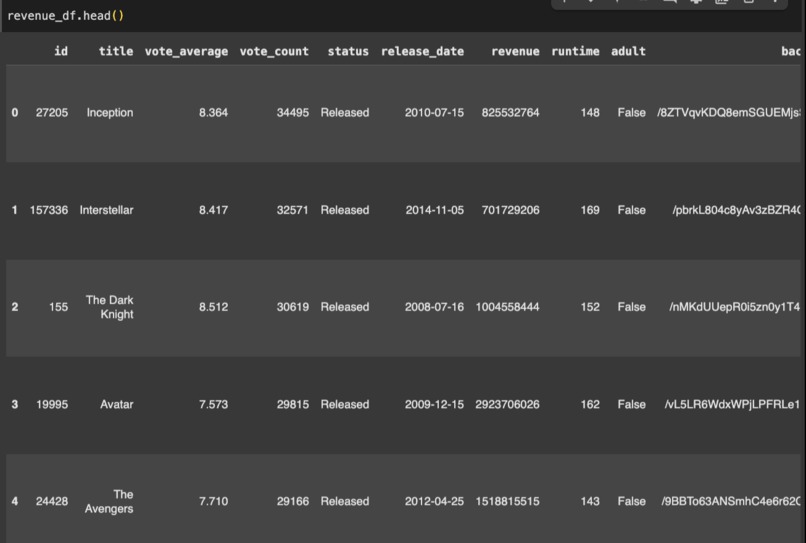

Original dataset

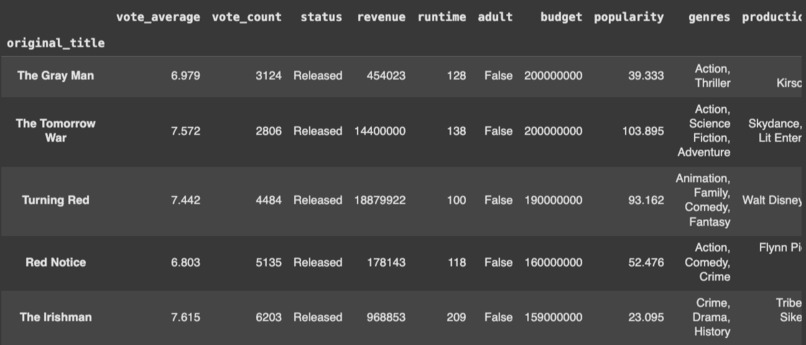

Dataset after cleaning up dataframe

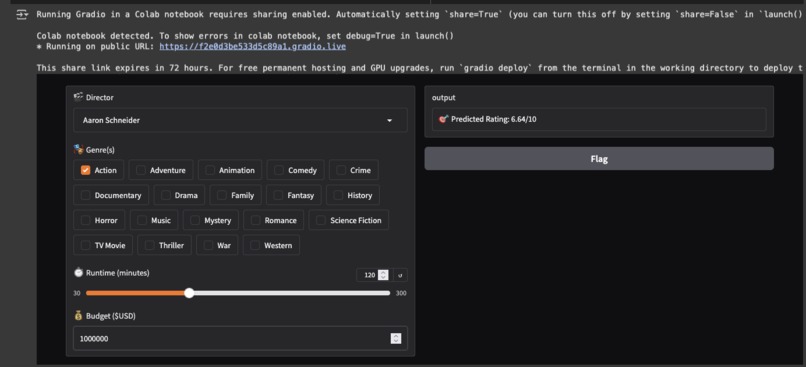

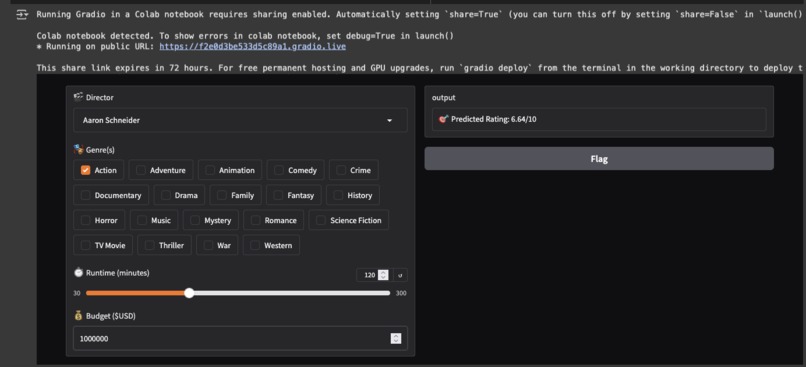

User interactive movie rating prediction tool

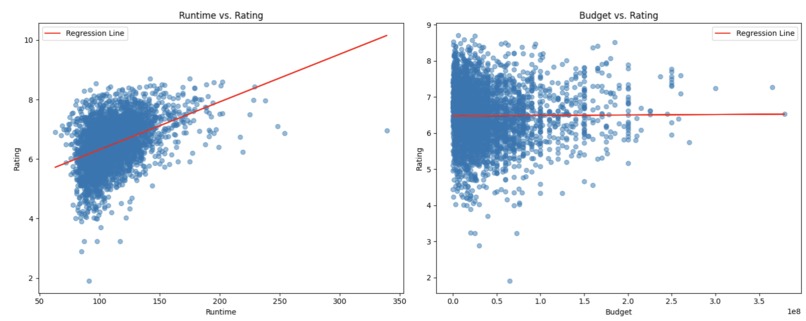

Scatter plot and regression on ratings

Inspiration

This project was inspired by the DSC 10 Spotify project, in which we were tasked with creating a tool that matches songs based on shared characteristics.

What it does

This project predicts a movie’s rating based on user-inputted features like director, genre, and runtime. It analyzes trends across thousands of films to show how these factors influence ratings, then uses a machine learning model to generate a predicted score on a 1–10 scale. Users can explore visual insights about directors, genres, and runtimes — and test their own movie ideas to see how they'd likely be received by audiences.

How we built it

This project was built in Google Colab using Python, powered by libraries like pandas, numpy, matplotlib, and scikit-learn. We began by merging two large Kaggle datasets containing over 900,000 movies. The data was cleaned to remove duplicates, missing values, and movies with unreliable or extreme financial performance.
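The merge-and-clean step can be sketched roughly as follows. This is a minimal illustration on toy data, not our exact notebook code: the column names (`title`, `vote_average`, `runtime`, `director`), the join key, and the helper `merge_and_clean` are assumptions made for the example, and the filter on extreme financial performance is left out.

```python
import pandas as pd

# Toy stand-ins for the two Kaggle datasets; all column names are assumptions.
movies = pd.DataFrame({
    "title": ["A", "B", "B", "C", "D"],
    "vote_average": [7.1, 6.0, 6.0, None, 5.5],
    "runtime": [100, 95, 95, 120, 88],
})
credits = pd.DataFrame({
    "title": ["A", "B", "C", "D"],
    "director": ["Dir X", "Dir Y", "Dir Z", None],
})


def merge_and_clean(movies, credits):
    """Hypothetical helper: join the two tables, then drop duplicates and gaps."""
    # Keep only movies that appear in both datasets.
    merged = movies.merge(credits, on="title", how="inner")
    # Remove rows that are exact copies of an earlier row.
    merged = merged.drop_duplicates()
    # Remove rows missing the rating or the director.
    merged = merged.dropna(subset=["vote_average", "director"])
    return merged.reset_index(drop=True)


cleaned = merge_and_clean(movies, credits)
print(cleaned)
```

On the toy data, the duplicate "B" row, the unrated movie "C", and the director-less movie "D" are all removed.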

We engineered features like profit, grouped runtime into categories (short, medium, long), and exploded multi-genre movies into individual genre rows for more precise analysis. We then visualized trends across directors, genres, and runtime groups to understand what drives high or low movie ratings.
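The three feature-engineering steps above might look something like this in pandas. The column names, the comma-separated genre format, and the 90- and 120-minute cutoffs for the runtime groups are assumptions chosen for illustration; the actual thresholds may differ.

```python
import pandas as pd

# Toy data; column names and the genre string format are assumptions.
df = pd.DataFrame({
    "title": ["A", "B", "C"],
    "budget": [10, 50, 20],
    "revenue": [30, 40, 100],
    "runtime": [80, 105, 150],
    "genres": ["Action, Comedy", "Drama", "Action, Drama, Thriller"],
})

# Profit as revenue minus budget.
df["profit"] = df["revenue"] - df["budget"]

# Bucket runtime into short / medium / long.
# The 90 and 120 minute cutoffs are assumed, not taken from the project.
df["runtime_group"] = pd.cut(
    df["runtime"],
    bins=[0, 90, 120, float("inf")],
    labels=["short", "medium", "long"],
)

# Split the genre string into a list, then give each genre its own row.
df["genres"] = df["genres"].str.split(", ")
exploded = df.explode("genres").reset_index(drop=True)

print(exploded[["title", "genres", "runtime_group", "profit"]])
```

After exploding, a movie with three genres contributes three rows, so per-genre averages count it once under each genre.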

To make predictions, we trained a linear regression model using one-hot encoded directors and genres, along with runtime as a numeric input. We also implemented clipping to ensure predicted ratings stayed within a 1–10 range.
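As a rough sketch of this modeling step, the snippet below fits a scikit-learn `LinearRegression` on one-hot encoded directors and genres plus numeric runtime, then clips the prediction to the 1-10 range. The training rows, column names, and the helper `predict_rating` are invented for the example; it shows the general technique rather than our exact implementation.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Tiny made-up training set; values and column names are illustrative only.
train = pd.DataFrame({
    "director": ["Dir X", "Dir X", "Dir Y", "Dir Y", "Dir Z", "Dir Z"],
    "genre": ["Action", "Drama", "Action", "Comedy", "Drama", "Comedy"],
    "runtime": [100, 120, 95, 90, 130, 110],
    "vote_average": [7.5, 8.0, 5.5, 5.0, 6.5, 6.0],
})

# One-hot encode the categorical columns and append runtime as a numeric feature.
X = pd.get_dummies(train[["director", "genre"]]).astype(float)
X["runtime"] = train["runtime"]
y = train["vote_average"]

model = LinearRegression().fit(X, y)


def predict_rating(director, genre, runtime):
    """Hypothetical helper: predict a rating and clip it to the 1-10 scale."""
    row = pd.DataFrame({"director": [director], "genre": [genre]})
    # Align with the training columns; categories not seen in training become all zeros.
    encoded = pd.get_dummies(row).reindex(columns=X.columns, fill_value=0).astype(float)
    encoded["runtime"] = float(runtime)
    raw = model.predict(encoded)[0]
    return float(np.clip(raw, 1.0, 10.0))


print(predict_rating("Dir X", "Action", 100))
```

Clipping matters because a linear model is unbounded: an unusual input such as a very long runtime can push the raw prediction outside the valid rating scale.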

The final model allows users to input a director, genre(s), and runtime, and instantly receive a predicted average rating — turning raw movie metadata into smart insights.

Challenges we ran into

We originally planned to measure success by both average rating and profitability, but streaming-only movies don't generate profit directly. We couldn't find a way to filter out streaming-only movies, so we decided to measure success by average rating alone.

Accomplishments that we're proud of

We're extremely proud of how our interactive menu turned out and of its accuracy at predicting the rating of a user-created movie.

What we learned

- Budget has less correlation with rating than expected

- Runtime around 90-120 minutes tends to perform best

- Clean data is half the battle

- How to build a user-friendly ML interface with Gradio

What's next for LA County Coders

We want to find a way to incorporate profit as a second measure of success. We also plan to add more movie characteristics as features to improve the accuracy of our rating predictions.

Built With

- google-colab

- kaggle

- python
